A Comprehensive Guide to Multi-Omics Data Collection and Integration for Precision Medicine

This guide provides researchers, scientists, and drug development professionals with a comprehensive framework for multi-omics data collection and integration.

Abstract

This guide provides researchers, scientists, and drug development professionals with a comprehensive framework for multi-omics data collection and integration. It covers foundational principles, from defining omics layers and their biological significance to explaining data structures such as matched versus unmatched datasets. It then examines the core challenges of data heterogeneity, missing values, and batch effects, and offers practical troubleshooting strategies. A detailed comparison of statistical, multivariate, and machine learning integration methods (including MOFA+, DIABLO, and deep learning approaches) informs method selection. The guide also outlines validation techniques, from clinical association to biological interpretation, that help ensure robust and biologically meaningful results. By synthesizing current methodologies and emerging trends, this resource aims to support the translation of complex multi-omics data into actionable discoveries for biomarker identification, disease subtyping, and therapeutic development.

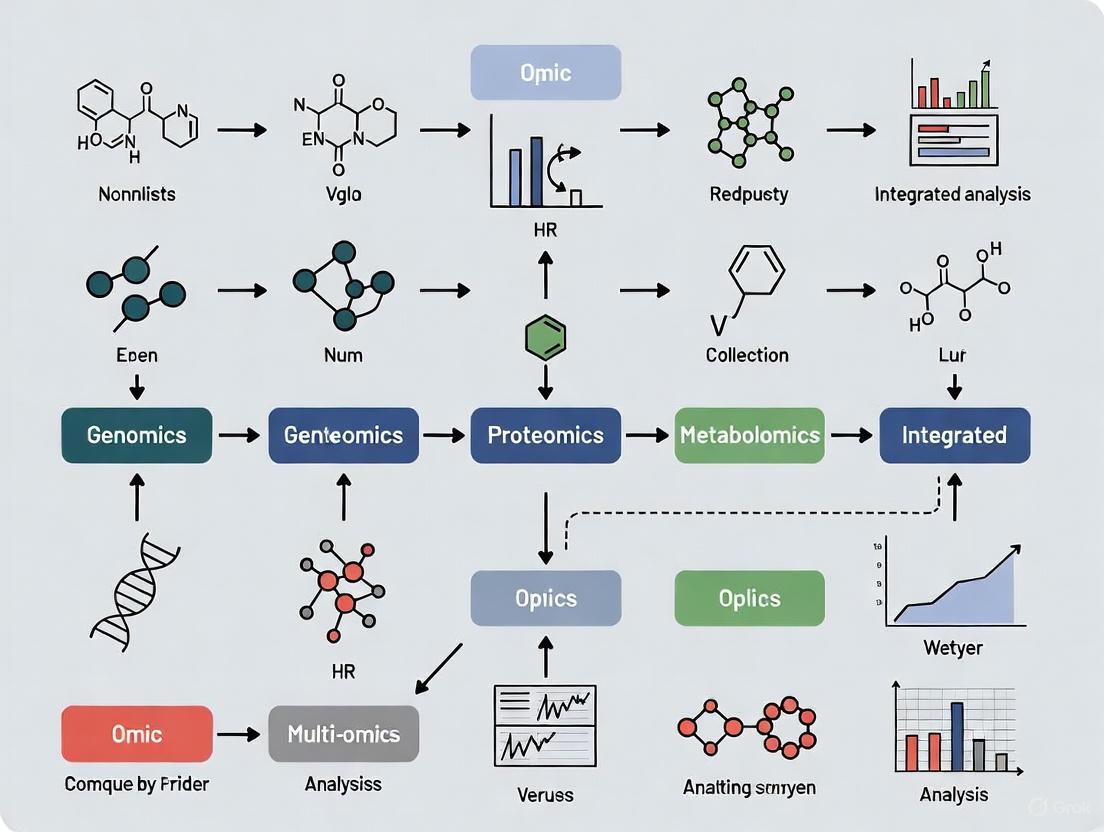

Understanding Multi-Omics: Core Concepts, Data Types, and Biological Significance

The study of biological systems has been revolutionized by the development of high-throughput technologies that allow for the comprehensive analysis of biomolecules on a massive scale. These fields, collectively known as "omics" technologies, enable researchers to move beyond studying individual molecules to understanding entire systems. The core omics fields—genomics, transcriptomics, proteomics, and metabolomics—each focus on a distinct layer of biological information, from genetic blueprint to functional endpoints. Together, they provide complementary insights into the complex molecular networks that underlie health and disease [1].

The integration of these multi-modal datasets represents a paradigm shift in biomedical research, offering holistic views into biological systems that single data types cannot provide [2]. This integrated approach is particularly valuable for precision medicine, where the goal is to tailor treatments based on a patient's unique molecular profile rather than population averages [2] [3]. However, this integration presents significant challenges due to the heterogeneity, scale, and complexity of the data generated by each omics platform [2] [4].

Comparative Analysis of Omics Fields

The four major omics fields each interrogate a specific level of the biological hierarchy, from genetic instruction to metabolic activity. The table below provides a structured comparison of their core characteristics, methodologies, and outputs.

Table 1: Technical Comparison of Core Omics Fields

| Omics Field | Molecule Studied | Key Analytical Technologies | Primary Output | Temporal Dynamics |
|---|---|---|---|---|
| Genomics [1] [4] | DNA | Sanger sequencing, DNA microarrays, Next-Generation Sequencing (NGS) including Whole Genome Sequencing (WGS) & Whole Exome Sequencing (WES) | Catalog of genetic variants (SNVs, CNVs, indels) | Static (with minor exceptions) |
| Transcriptomics [1] [5] | RNA (especially mRNA) | RNA sequencing (RNA-seq), microarrays | Gene expression profiles, quantification of transcript levels | Dynamic (minutes to hours) |
| Proteomics [1] [3] | Proteins and post-translational modifications | Liquid Chromatography with Tandem Mass Spectrometry (LC-MS/MS), Data-Independent Acquisition (DIA), Tandem Mass Tags (TMT) | Protein identification, quantification, and characterization of modifications | Dynamic (hours to days) |
| Metabolomics [1] [3] | Small molecule metabolites | Gas Chromatography-MS (GC-MS), Liquid Chromatography-MS (LC-MS), Nuclear Magnetic Resonance (NMR) | Concentration profiles of metabolites, metabolic pathway activity | Highly dynamic (seconds to minutes) |

Detailed Field Specifications

Genomics

Genomics is the study of an organism's complete set of DNA, including both coding and non-coding regions [4]. While genetics focuses on individual genes, genomics examines the entire genome and the interactions between multiple genes [1]. The human genome consists of approximately 3 billion DNA base pairs encoding about 20,000 genes, with coding regions representing only 1-2% of the entire genome [4]. Genomics captures various types of genetic variants, including single-nucleotide variants (SNVs), insertions/deletions (indels), and structural variations (SVs) such as copy number variants (CNVs) [4]. In medical applications, genomics is used to diagnose difficult-to-identify conditions and is increasingly applied to identify inherited health risks and to guide cancer treatment by identifying targetable mutations [1].

Transcriptomics

Transcriptomics focuses on the complete set of RNA transcripts, known as the transcriptome, produced in a cell or population of cells [1]. The primary transcript of interest is messenger RNA (mRNA), which carries genetic information from DNA to the protein synthesis machinery. A key insight from transcriptomics is that the transcriptome varies significantly between different cell types, despite all cells containing the same genomic DNA, reflecting cell-specific gene expression patterns [1]. While transcriptomics can measure gene expression more directly than genomics, it has an important limitation: mRNA levels do not always correlate perfectly with protein abundance due to various post-transcriptional regulatory mechanisms [5]. In clinical practice, transcriptomic tests exist for conditions like breast cancer, where they help determine the likely benefit of chemotherapy [1].

Proteomics

Proteomics is the large-scale study of proteins, their structures, functions, interactions, and modifications [1] [3]. Unlike the genome, the proteome is highly dynamic and reflects the functional state of a biological system at a given time. Proteomic approaches can be categorized into three main types: expression proteomics (quantifying protein levels), structural proteomics (determining protein structures and locations), and functional proteomics (characterizing protein activities and interactions) [1]. A critical aspect of proteomics is the study of post-translational modifications (PTMs)—chemical changes such as phosphorylation, acetylation, and ubiquitination that dramatically alter protein activity [3]. Proteomics faces technical challenges including the detection of low-abundance proteins, the dynamic range problem where abundant proteins mask rare ones, and a lack of standardization in sample processing [3] [5].

Metabolomics

Metabolomics is the systematic study of small-molecule metabolites, typically under 1,500 Da in molecular weight, that represent the end products of cellular processes [1] [3]. The metabolome provides the most direct reflection of a cell's physiological state and responds rapidly to environmental or pathological changes. Metabolites include diverse classes of compounds such as amino acids, lipids, sugars, and organic acids [3]. Because metabolomics captures the functional outcome of molecular activity, it is often described as providing a molecular "phenotype" that integrates information from genomics, transcriptomics, and proteomics [1]. Metabolomics is particularly valuable for studying conditions like obesity, diabetes, cancer, and neurodegenerative diseases, and for understanding individual variations in response to drugs and environmental factors [1].

Multi-Omics Integration Methodologies

Conceptual Framework for Data Integration

The integration of multi-omics data requires sophisticated computational and statistical approaches to extract meaningful biological insights from these complex, heterogeneous datasets. The integration strategy can be categorized based on when in the analytical process the datasets are combined, each with distinct advantages and challenges [2].

Table 2: Multi-Omics Data Integration Strategies

| Integration Strategy | Timing of Integration | Key Advantages | Principal Challenges |
|---|---|---|---|
| Early Integration (Concatenation-based) [2] [6] | Before analysis | Captures all potential cross-omics interactions; preserves raw information | Extremely high dimensionality; computationally intensive; risk of spurious correlations |
| Intermediate Integration (Transformation-based) [2] [6] | During analysis | Reduces complexity; incorporates biological context through networks | Requires domain knowledge; may lose some raw information during transformation |
| Late Integration (Model-based) [2] [6] | After individual analysis | Handles missing data well; computationally efficient; robust | May miss subtle cross-omics interactions not captured by individual models |
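The difference between early and late integration can be made concrete with a small sketch. The example below uses simulated data and a deliberately simple nearest-centroid style score; the names `rna`, `protein`, `zscore`, and `centroid_scores` are illustrative assumptions, not part of any cited method, and the model is fitted and scored on the same samples purely for brevity.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples = 60

# Hypothetical toy data: two omics blocks measured on the same samples.
labels = rng.integers(0, 2, size=n_samples)
rna = rng.normal(size=(n_samples, 50)) + 0.8 * labels[:, None]
protein = rng.normal(size=(n_samples, 20)) + 0.8 * labels[:, None]

def zscore(x):
    """Scale to zero mean and unit variance along the sample axis."""
    return (x - x.mean(axis=0)) / x.std(axis=0)

def centroid_scores(x, y):
    """Score each sample by its projection on the class-mean difference."""
    direction = x[y == 1].mean(axis=0) - x[y == 0].mean(axis=0)
    return x @ direction

# Early integration: concatenate the scaled features, then fit one model.
early_matrix = np.hstack([zscore(rna), zscore(protein)])
early_scores = centroid_scores(early_matrix, labels)

# Late integration: fit one model per layer, then combine the model outputs.
per_layer = [centroid_scores(zscore(block), labels) for block in (rna, protein)]
late_scores = np.mean([zscore(scores) for scores in per_layer], axis=0)
```

In the early variant the model sees all 70 features at once, which is where the dimensionality problem in the table comes from; in the late variant each layer only ever contributes a single score per sample.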

Computational Approaches and AI Applications

The analysis of integrated multi-omics data relies heavily on advanced computational methods, particularly machine learning and artificial intelligence, which can detect subtle patterns across millions of data points that are invisible to conventional analysis [2]. Several state-of-the-art approaches have proven particularly effective for multi-omics integration:

Deep Learning Methods: Autoencoders (AEs) and Variational Autoencoders (VAEs) are unsupervised neural networks that compress high-dimensional omics data into a dense, lower-dimensional "latent space," making integration computationally feasible while preserving key biological patterns [2]. Graph Convolutional Networks (GCNs) learn from biological network structures, making them effective for integrating multi-omics data onto protein-protein interaction or gene co-expression networks [2].
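As a rough illustration of the compression idea, the sketch below trains a linear autoencoder with plain gradient descent in NumPy. It is a simplification under stated assumptions: real omics autoencoders use non-linear layers and a deep learning framework, and the data, dimensions, and learning rate here are invented for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy data: 100 samples, 20 features driven by 3 hidden factors.
hidden = rng.normal(size=(100, 3))
x = hidden @ rng.normal(size=(3, 20)) + 0.1 * rng.normal(size=(100, 20))
x = (x - x.mean(axis=0)) / x.std(axis=0)

latent_dim = 3
w_enc = 0.1 * rng.normal(size=(20, latent_dim))  # encoder weights
w_dec = 0.1 * rng.normal(size=(latent_dim, 20))  # decoder weights
learning_rate = 0.1
losses = []

for _ in range(500):
    z = x @ w_enc               # encode: compress to the latent space
    residual = z @ w_dec - x    # decode and compare with the input
    losses.append(float(np.mean(residual ** 2)))
    scale = 2.0 / residual.size
    grad_dec = scale * z.T @ residual              # gradient w.r.t. decoder
    grad_enc = scale * x.T @ (residual @ w_dec.T)  # gradient w.r.t. encoder
    w_dec -= learning_rate * grad_dec
    w_enc -= learning_rate * grad_enc

latent = x @ w_enc  # low-dimensional representation, one row per sample
```

The `latent` matrix plays the role of the "latent space" described above: downstream integration or clustering would operate on its 3 columns rather than on the original 20 features.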

Network-Based Integration: Similarity Network Fusion (SNF) creates patient-similarity networks from each omics layer and iteratively fuses them into a single comprehensive network, enabling more accurate disease subtyping [2]. This approach strengthens strong similarities and removes weak ones across data modalities.
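The fusion step can be sketched in a few lines of NumPy. This is a simplified variant written for illustration, not the reference SNF implementation: the kernel bandwidth (median squared distance), the neighbourhood size `k`, and the function names are assumptions made for this example.

```python
import numpy as np

def affinity(x):
    """Gaussian similarity between samples (rows of x)."""
    sq_dist = ((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=2)
    return np.exp(-sq_dist / np.median(sq_dist[sq_dist > 0]))

def row_normalize(w):
    return w / w.sum(axis=1, keepdims=True)

def knn_kernel(w, k):
    """Keep each sample's k strongest similarities, then row-normalize."""
    keep = np.argsort(w, axis=1)[:, -k:]
    sparse = np.zeros_like(w)
    rows = np.arange(w.shape[0])[:, None]
    sparse[rows, keep] = w[rows, keep]
    return row_normalize(sparse)

def fuse(layers, k=5, n_iter=10):
    """Simplified fusion: diffuse each network through the other networks."""
    full = [row_normalize(affinity(x)) for x in layers]
    local = [knn_kernel(affinity(x), k) for x in layers]
    for _ in range(n_iter):
        updated = []
        for v, s in enumerate(local):
            others = [p for u, p in enumerate(full) if u != v]
            updated.append(row_normalize(s @ np.mean(others, axis=0) @ s.T))
        full = updated
    fused = np.mean(full, axis=0)
    return (fused + fused.T) / 2  # symmetrize the final network

# Hypothetical data: two patient groups profiled in two omics layers.
rng = np.random.default_rng(0)
groups = np.repeat([0, 1], 15)
layer_a = rng.normal(size=(30, 10)) + 3 * groups[:, None]
layer_b = rng.normal(size=(30, 8)) + 3 * groups[:, None]
fused = fuse([layer_a, layer_b])
```

The fused matrix can then be passed to a clustering method (spectral clustering is the usual choice) to obtain patient subgroups.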

Multivariate Statistical Methods: Tools such as mixOmics (an R package) provide multivariate methods, including Partial Least Squares (PLS), to uncover correlations across datasets [3]. MOFA2, the R package for Multi-Omics Factor Analysis, captures latent factors driving variation across multiple omics layers [3].
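To show what PLS is looking for, the sketch below computes the first pair of PLS components directly from the singular value decomposition of the cross-covariance matrix. It is a minimal NumPy illustration on simulated data, not the mixOmics API; the variable names and the planted shared signal are assumptions for this example.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 80
shared = rng.normal(size=n)  # hypothetical signal present in both layers

# Toy blocks: the first three features of each block carry the shared signal.
x = rng.normal(size=(n, 30))
y = rng.normal(size=(n, 15))
x[:, :3] += 2 * shared[:, None]
y[:, :3] += 2 * shared[:, None]

xc = x - x.mean(axis=0)
yc = y - y.mean(axis=0)

# First pair of PLS weight vectors: leading singular vectors of X^T Y.
u, s, vt = np.linalg.svd(xc.T @ yc, full_matrices=False)
x_weights, y_weights = u[:, 0], vt[0]

# Latent components (scores) with maximal covariance between the blocks.
x_scores = xc @ x_weights
y_scores = yc @ y_weights
correlation = np.corrcoef(x_scores, y_scores)[0, 1]

# Features with the largest absolute weights drive the shared component.
top_x_features = np.argsort(np.abs(x_weights))[-3:]
```

Inspecting the largest weights is the basic mechanism by which PLS-type methods point to the features that link two omics layers; sparse variants add a penalty so that most weights become exactly zero.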

Experimental Protocols for Multi-Omics Research

Integrated Workflow for Proteomics and Metabolomics

The integration of proteomics and metabolomics is particularly powerful for systems biology as it connects molecular regulators (proteins) with their functional outcomes (metabolites) [3]. Below is a detailed protocol for a typical proteomics-metabolomics integrated study:

Step 1: Sample Preparation

The goal is to obtain high-quality extracts of both proteins and metabolites from the same biological material. Best practices include using joint extraction protocols where possible, keeping samples on ice to minimize degradation, and adding internal standards (e.g., isotope-labeled peptides and metabolites) for accurate quantification [3]. A key challenge is balancing conditions that preserve proteins (which often require denaturants) with those that stabilize metabolites (which may be heat- or solvent-sensitive) [3].

Step 2: Data Acquisition

For proteomics, data acquisition typically involves high-resolution mass spectrometry, with common strategies including Data-Dependent Acquisition (DDA) and Data-Independent Acquisition (DIA) for comprehensive detection, or targeted approaches like Parallel Reaction Monitoring (PRM) for specific proteins [3]. For metabolomics, untargeted profiling uses LC-MS or GC-MS to broadly capture metabolites, while targeted approaches use LC-MS/MS with Multiple Reaction Monitoring (MRM) or NMR for precise quantification of predefined metabolites [3].

Step 3: Data Processing and Integration

Data preprocessing applies normalization techniques (e.g., quantile normalization, log transformation) to harmonize proteomic and metabolomic scales, and uses batch effect correction tools like ComBat to minimize technical variation [2] [3]. Integration employs statistical correlation analysis (e.g., Pearson/Spearman correlation) and network-based methods to identify protein-metabolite relationships [3].
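The preprocessing and correlation steps named in Step 3 can be sketched as follows. The data are simulated, the matrices are laid out as features by samples, and the helper names (`quantile_normalize`, `average_ranks`, `spearman`) are written for this example rather than taken from a particular package; batch correction is not shown here.

```python
import numpy as np

def quantile_normalize(x):
    """Force every sample (column) to share the same value distribution."""
    ranks = np.argsort(np.argsort(x, axis=0), axis=0)
    reference = np.sort(x, axis=0).mean(axis=1)
    return reference[ranks]

def average_ranks(v):
    """Rank values, giving tied values the mean of their ranks."""
    order = np.argsort(v)
    ranks = np.empty(len(v))
    ranks[order] = np.arange(len(v))
    for value in np.unique(v):
        tied = v == value
        ranks[tied] = ranks[tied].mean()
    return ranks

def spearman(a, b):
    """Spearman correlation: Pearson correlation of the ranks."""
    return np.corrcoef(average_ranks(a), average_ranks(b))[0, 1]

rng = np.random.default_rng(2)
n_samples = 12
activity = rng.normal(size=n_samples)  # hypothetical pathway activity

# Rows are features, columns are samples (raw, strictly positive intensities).
proteins = np.exp(rng.normal(loc=10, size=(40, n_samples)))
metabolites = np.exp(rng.normal(loc=6, size=(25, n_samples)))
proteins[0] *= np.exp(2 * activity)     # protein 0 tracks the pathway
metabolites[0] *= np.exp(2 * activity)  # so does metabolite 0

# Log transformation followed by quantile normalization, per layer.
proteins_norm = quantile_normalize(np.log2(proteins))
metabolites_norm = quantile_normalize(np.log2(metabolites))

# Correlate one protein with one metabolite across the matched samples.
rho = spearman(proteins_norm[0], metabolites_norm[0])
```

In a real study this correlation would be computed for every protein-metabolite pair, followed by multiple-testing correction before any pair is interpreted.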

Essential Research Reagents and Materials

Successful multi-omics research requires carefully selected reagents and analytical tools. The table below details key solutions and their applications in integrated omics studies.

Table 3: Essential Research Reagent Solutions for Multi-Omics Studies

| Reagent/Material | Function/Application | Specific Use Cases |
|---|---|---|
| Tandem Mass Tags (TMT) [3] | Multiplexed protein quantification | Enables simultaneous analysis of multiple samples in proteomics, improving throughput and reducing technical variability |
| Stable Isotope-Labeled Standards [3] | Internal standards for quantification | Allows accurate quantification of both peptides and metabolites by correcting for technical variation in MS analysis |
| Liquid Chromatography Columns [3] | Separation of complex mixtures | Reversed-phase columns for peptide/protein separation; HILIC columns for polar metabolite separation in LC-MS |
| Cross-linking Reagents | Protein-protein interaction studies | Captures transient protein interactions for structural proteomics and network analysis |
| Antibody Conjugates [5] | Protein detection and quantification | Metal-tagged antibodies for CyTOF technology enable high-parameter single-cell protein analysis |
| RNAscope Probes [5] | Spatial transcriptomics | Enables precise localization of RNA transcripts in tissue samples when combined with proteomic imaging |

The integration of genomics, transcriptomics, proteomics, and metabolomics represents a fundamental shift in biological research, moving from reductionist approaches to systems-level understanding. Each omics field provides a unique and essential perspective on biological systems, from the static genetic blueprint to the dynamic functional state. The true power of these technologies emerges when they are integrated, enabling researchers to construct comprehensive models of biological systems and disease processes [2] [4] [3].

The future of multi-omics research will be shaped by advances in several key areas. Technologically, improvements in mass spectrometry sensitivity, single-cell omics applications, and spatial omics technologies will provide unprecedented resolution [5]. Computationally, more sophisticated AI and machine learning methods will be essential for extracting biologically meaningful patterns from these complex, high-dimensional datasets [2]. Clinically, the transition of multi-omics from research to routine clinical application will require standardized protocols, robust analytical frameworks, and thoughtful attention to ethical considerations [2] [4]. As these technologies continue to mature and integrate, they hold immense promise for advancing precision medicine and delivering tailored therapeutic interventions based on a comprehensive understanding of individual molecular profiles.

The complexity of human diseases, influenced by multifaceted interactions between genetic, environmental, and molecular factors, has long challenged traditional biological research. Single-omics approaches—which analyze one molecular layer such as genomics or transcriptomics in isolation—often fail to capture the complete biological picture, generating inconsistent biomarkers and providing limited insights into causal disease mechanisms [7]. Multi-omics, the integrated analysis of diverse biological datasets including genomics, transcriptomics, proteomics, epigenomics, and metabolomics, has emerged as a transformative solution. By simultaneously examining multiple molecular layers, multi-omics provides a comprehensive, systems-level view of biological processes, enabling researchers to uncover intricate molecular interactions that drive disease pathogenesis [8] [7].

This integrated approach is revolutionizing biomedical research and therapeutic development. Where single-omics studies might identify a genetic mutation associated with disease, multi-omics can reveal how that mutation affects RNA expression, protein function, and metabolic pathways, ultimately elucidating the complete mechanistic pathway from genetic predisposition to physiological manifestation [8]. The power of multi-omics integration lies in its ability to connect these disparate biological layers, providing unprecedented insights into disease mechanisms and opening new avenues for diagnosis, treatment, and personalized medicine [9] [10].

Multi-Omics Integration Methodologies and Technical Approaches

Integrating multiple omics datasets requires sophisticated computational and statistical strategies that can handle the heterogeneity, high dimensionality, and complex noise profiles inherent in different molecular data types. The integration methodologies can be broadly categorized into three principal approaches: early, intermediate, and late integration [11].

Early integration involves combining raw data from different omics layers at the beginning of the analysis pipeline. While this approach can identify direct correlations between different molecular types, it may introduce significant challenges related to data scaling, normalization, and information loss due to the varying structures and distributions of each datatype [11].

Intermediate integration employs sophisticated algorithms to extract features from each omics dataset separately before combining them for joint analysis. This balanced approach preserves the unique characteristics of each datatype while enabling the identification of cross-omics patterns. Key intermediate integration methods include:

- Similarity Network Fusion (SNF): Constructs sample-similarity networks for each omics dataset and fuses them via non-linear processes to generate an integrated network that captures complementary information from all omics layers [12].

- Multi-Omics Factor Analysis (MOFA): An unsupervised Bayesian framework that infers a set of latent factors that capture principal sources of variation across multiple data types, effectively decomposing each datatype-specific matrix into shared and unique components [12].

- Data Integration Analysis for Biomarker discovery using Latent Components (DIABLO): A supervised integration method that uses known phenotype labels to identify shared latent components across omics datasets that are most relevant to the outcome of interest [12].

Late integration involves analyzing each omics dataset independently and combining the results at the final interpretation stage. This approach preserves dataset-specific analyses but may miss important inter-omics relationships [11].
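The latent-factor idea behind methods such as MOFA can be illustrated with a much simpler stand-in: a truncated SVD of the scaled, concatenated views. This is explicitly not the MOFA model (there is no Bayesian inference, sparsity, or missing-value handling); the simulated views and all names below are assumptions for the sketch.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 50
factor = rng.normal(size=(n, 1))  # hypothetical shared source of variation

# Two toy views measured on the same samples; both load on the shared factor.
view_a = factor @ rng.normal(size=(1, 40)) + 0.5 * rng.normal(size=(n, 40))
view_b = factor @ rng.normal(size=(1, 25)) + 0.5 * rng.normal(size=(n, 25))
views = {"rna": view_a, "protein": view_b}

def standardize(x):
    return (x - x.mean(axis=0)) / x.std(axis=0)

# Divide each view by sqrt(n_features) so that large views do not dominate.
scaled = {name: standardize(x) / np.sqrt(x.shape[1]) for name, x in views.items()}
joint = np.hstack(list(scaled.values()))

# Truncated SVD of the joint matrix gives sample-level latent factors.
u, s, vt = np.linalg.svd(joint, full_matrices=False)
n_factors = 2
factors = u[:, :n_factors] * s[:n_factors]

# Fraction of each view's variance explained by each factor.
explained = {}
start = 0
for name, x in scaled.items():
    loadings = vt[:n_factors, start:start + x.shape[1]]
    start += x.shape[1]
    explained[name] = [
        1 - np.sum((x - np.outer(factors[:, k], loadings[k])) ** 2) / np.sum(x ** 2)
        for k in range(n_factors)
    ]
```

A per-view, per-factor table like `explained` is the kind of summary used to decide whether a factor is shared across layers or specific to one of them.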

Table 4: Comparison of Major Multi-Omics Integration Methods

| Method | Integration Type | Key Characteristics | Best Use Cases |
|---|---|---|---|
| MOFA | Intermediate, Unsupervised | Bayesian factor analysis; identifies latent factors across datasets; no phenotype requirement | Exploratory analysis of shared variation across omics layers |
| DIABLO | Intermediate, Supervised | Uses phenotype labels; multivariate methodology; identifies discriminative features | Biomarker discovery; patient stratification; classification tasks |
| SNF | Intermediate, Unsupervised | Network-based fusion; constructs similarity networks; non-linear integration | Identifying patient subgroups; cancer subtyping |
| MCIA | Intermediate, Unsupervised | Covariance optimization; aligns multiple omics features onto shared dimensional space | Joint analysis of multiple high-dimensional datasets |
| xMWAS | Early/Intermediate | Pairwise association analysis; PLS components; creates integrative networks | Correlation network analysis; identifying inter-omics connections |

Machine Learning and AI in Multi-Omics Integration

Artificial intelligence, particularly deep learning, is becoming increasingly prominent in multi-omics research due to its ability to handle the complexity and high dimensionality of integrated biological data [13]. These methods can be categorized into non-generative approaches (feedforward neural networks, graph convolutional networks, autoencoders) designed for direct feature extraction and classification, and generative methods (variational autoencoders, generative adversarial networks, generative pretrained transformers) that create adaptable representations shared across modalities [13].

AI-driven multi-omics integration has demonstrated particular success in oncology research, where models trained on TCGA (The Cancer Genome Atlas) data have outperformed traditional statistical approaches in predicting patient outcomes, identifying novel biomarkers, and understanding therapeutic resistance mechanisms [13]. However, most AI models remain at the proof-of-concept stage with limited clinical validation, presenting a significant opportunity for future translation into clinical practice [13].

Experimental Design and Workflow for Multi-Omics Studies

Implementing a robust multi-omics study requires careful planning and execution across multiple experimental and computational phases. The sections below describe the key stages of a comprehensive multi-omics investigation.

Sample Preparation and Data Generation

The foundation of any successful multi-omics study lies in proper sample collection and processing. For matched multi-omics analysis—where multiple molecular layers are profiled from the same sample set—careful preservation methods are essential to maintain the integrity of DNA, RNA, proteins, and metabolites [12]. Recent advances in single-cell and spatial technologies have further enhanced multi-omics capabilities, allowing researchers to analyze molecular profiles at cellular resolution within their native tissue context [8] [10].

High-throughput technologies for data generation include:

- Next-generation sequencing for genomics, transcriptomics, and epigenomics

- Mass spectrometry for proteomics and metabolomics

- Spatial transcriptomics and proteomics for mapping molecular distributions within tissues

Data Processing and Computational Integration

The processing of multi-omics data requires specialized computational pipelines to address challenges such as batch effects, varying data distributions, missing values, and data harmonization [12] [14]. Tailored preprocessing pipelines are typically applied to each datatype before integration, including normalization, quality control, and feature selection [14].
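A minimal sketch of three of these steps (filtering features with many missing values, removing batch offsets, and imputing the remainder) is shown below. Per-batch mean-centering is used as a simple stand-in for dedicated batch-correction tools and is not equivalent to them; the 50% missingness threshold, the simulated matrix, and the variable names are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(4)

# Hypothetical samples x features matrix with a batch shift and missing values.
batch = np.repeat([0, 1], 10)
data = rng.normal(size=(20, 6)) + 2.0 * batch[:, None]
data[rng.random(data.shape) < 0.1] = np.nan
data[:, 5] = np.nan   # a feature that was almost never detected
data[0, 5] = 1.0

# 1. Drop features missing in more than half of the samples.
missing_rate = np.isnan(data).mean(axis=0)
kept = data[:, missing_rate <= 0.5]

# 2. Remove batch-specific offsets by centering each feature within each batch.
corrected = kept.copy()
for b in np.unique(batch):
    rows = batch == b
    corrected[rows] -= np.nanmean(kept[rows], axis=0)

# 3. Impute what is still missing with the feature median.
medians = np.nanmedian(corrected, axis=0)
imputed = np.where(np.isnan(corrected), medians, corrected)
```

Centering within batches also removes any biological difference that happens to coincide with batch, so this shortcut is only reasonable when the groups of interest are balanced across batches.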

Table 5: Essential Research Reagents and Platforms for Multi-Omics Studies

| Category | Specific Technologies/Platforms | Primary Function |
|---|---|---|
| Sequencing Platforms | Illumina NovaSeq, PacBio, Oxford Nanopore | Genomics, transcriptomics, epigenomics profiling |
| Proteomics Technologies | Mass spectrometry (LC-MS/MS), Olink, SomaScan | Protein identification and quantification |
| Spatial Omics Platforms | 10x Genomics Visium, Nanostring GeoMx, Akoya CODEX | Spatial mapping of transcripts and proteins |
| Single-Cell Technologies | 10x Genomics Single Cell, Parse Biosciences | Single-cell multi-omics profiling |
| Computational Tools | MOFA+, DIABLO, SNF, Omics Playground | Data integration and analysis |
| Bioinformatics Resources | TCGA, GTEx, Human Cell Atlas, Bioconductor | Reference data and analytical packages |

Application in Disease Mechanism Elucidation: Breast Cancer Case Study

The value of multi-omics integration is well demonstrated in oncology, particularly in breast cancer research. A 2025 study published in Scientific Reports developed an adaptive multi-omics integration framework for breast cancer survival analysis that combined genomics, transcriptomics, and epigenomics data from The Cancer Genome Atlas [11]. The methodology and outcomes provide a compelling template for how multi-omics reveals disease mechanisms.

Experimental Protocol and Implementation

The breast cancer survival study employed a sophisticated multi-stage analytical approach:

Data Acquisition and Preprocessing: Collected genomic (SNVs, CNVs), transcriptomic (RNA-seq), and epigenomic (DNA methylation) data from TCGA breast cancer samples. Each datatype underwent modality-specific preprocessing, normalization, and batch effect correction [11].

Feature Selection: Implemented genetic programming to evolutionarily optimize feature selection from each omics layer, identifying the most informative molecular features associated with survival outcomes [11].

Multi-Omics Integration: Applied intermediate integration using the genetic programming framework to combine selected features from all omics layers into a unified model [11].

Survival Modeling: Developed a Cox proportional hazards model using the integrated multi-omics features to predict patient survival, evaluated using the concordance index (C-index) [11].

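The integrated multi-omics approach achieved a C-index of 78.31 during cross-validation and 67.94 on the test set (values reported on a 0-100 scale), significantly outperforming single-omics models [11]. These results support the predictive value of multi-omics integration for clinical outcome prediction, although the drop of roughly ten points from cross-validation to the test set indicates that the cross-validated estimate is optimistic.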
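The concordance index used to evaluate the survival model can be computed directly from follow-up times, event indicators, and predicted risk scores. The function below is a plain-Python sketch of Harrell's C-index with invented example values; it is not the code used in the cited study and ignores refinements such as tied survival times.

```python
def concordance_index(times, events, risk_scores):
    """Harrell's C-index: share of comparable pairs that are ordered correctly.

    A pair is comparable when the sample with the shorter time had an event.
    Higher risk should go with shorter survival; ties in risk count as 0.5.
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            if events[i] and times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5
    return concordant / comparable

# Hypothetical patients: follow-up time, event flag (1 = death, 0 = censored),
# and the risk score predicted by a survival model.
times = [5, 8, 12, 20, 25]
events = [1, 1, 0, 1, 0]
risk = [0.9, 0.4, 0.6, 0.3, 0.1]

c_index = concordance_index(times, events, risk)  # 0.875 (7 of 8 pairs)
```

A value of 0.5 corresponds to random ordering and 1.0 to perfect ordering; multiplying by 100 gives the 0-100 scale on which such results are sometimes reported.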

Biological Insights Gained

Beyond improved prediction accuracy, the multi-omics approach revealed previously obscured molecular networks driving breast cancer progression. The integrated analysis identified:

- Cross-omics regulatory networks where genetic alterations epigenetically influenced gene expression patterns

- Novel biomarker combinations spanning multiple molecular layers that better defined cancer subtypes

- Mechanistic pathways connecting genetic susceptibility with transcriptional and epigenetic dysregulation

These insights provide a more comprehensive understanding of breast cancer heterogeneity and progression, enabling better patient stratification and personalized treatment approaches [11].

Advanced Applications and Emerging Frontiers

Single-Cell and Spatial Multi-Omics

The integration of single-cell technologies with multi-omics represents one of the most exciting frontiers in biomedical research. Single-cell multi-omics allows researchers to analyze genomic, transcriptomic, and proteomic changes at the individual cell level, revealing cellular heterogeneity and rare cell populations that bulk analyses cannot detect [9] [10]. When combined with spatial technologies, which preserve the architectural context of tissues, researchers can map molecular interactions within their native tissue microenvironment, providing unprecedented insights into cellular communication and tissue organization in health and disease [8] [15].

Clinical Translation and Precision Medicine

Multi-omics is increasingly driving advances in clinical diagnostics and therapeutic development. In rare disease diagnosis, integrated analysis of genomic, transcriptomic, and epigenomic data has significantly improved diagnostic yields compared to single-omics approaches alone [7]. For complex diseases like Alzheimer's, multi-omics studies have identified epigenetic alterations and molecular networks associated with disease progression, revealing potential therapeutic targets [7].

Liquid biopsies exemplify the clinical impact of multi-omics, analyzing biomarkers like cell-free DNA, RNA, proteins, and metabolites non-invasively [9] [10]. Initially focused on oncology, these approaches are expanding into other medical domains, enabling early detection, treatment monitoring, and personalized therapeutic strategies through multi-analyte integration [9].

[Diagram not reproduced: AI-driven multi-omics analysis transforming raw data into clinical insights]

Challenges and Future Directions

Despite its transformative potential, multi-omics integration faces significant challenges that must be addressed to fully realize its capabilities. Key limitations include:

Data Integration and Computational Challenges: The heterogeneous nature of multi-omics data, with varying scales, resolutions, and noise profiles, creates substantial barriers to effective integration [8] [12]. The massive volume of data generated requires advanced computational infrastructure, scalable storage solutions, and specialized analytical expertise [9] [8]. Development of user-friendly analytical platforms like Omics Playground aims to democratize multi-omics analysis for researchers without extensive computational backgrounds [12].

Standardization and Reproducibility: The absence of standardized preprocessing protocols and analytical pipelines threatens the reproducibility of multi-omics studies [12]. Establishing community-wide standards for data quality control, normalization, and integration methodologies is essential for advancing the field [9].

Clinical Implementation and Equity: Translating multi-omics discoveries into clinical practice requires addressing regulatory considerations, demonstrating clinical utility, and ensuring accessibility across diverse populations [9]. Engaging underrepresented populations in multi-omics research is critical to ensure that biomarker discoveries and therapeutic benefits are broadly applicable and do not perpetuate health disparities [9].

Future advancements in multi-omics will be driven by continued technological innovations, particularly in single-cell and spatial profiling, improved AI and machine learning algorithms for data integration, and greater emphasis on longitudinal multi-omics profiling to understand dynamic biological processes [8] [10]. As these technologies mature and challenges are addressed, multi-omics integration will increasingly become the cornerstone approach for unraveling disease mechanisms and enabling precision medicine.

Multi-omics integration represents a paradigm shift in biological research and clinical medicine. By simultaneously analyzing multiple molecular layers, this approach provides unprecedented insights into the complex mechanisms underlying human diseases, overcoming the limitations of single-omics methodologies. While significant challenges remain in data integration, standardization, and clinical translation, ongoing advancements in computational methods, AI technologies, and analytical frameworks are rapidly addressing these barriers. As multi-omics continues to evolve and mature, it promises to revolutionize our understanding of disease pathogenesis, accelerate therapeutic development, and ultimately enable truly personalized precision medicine approaches tailored to the unique molecular profile of each patient.

The advent of high-throughput technologies has enabled the concurrent measurement of multiple molecular layers—such as the genome, epigenome, transcriptome, proteome, and metabolome—within biological systems. This approach, known as multi-omics, provides an unprecedented, holistic view of biological processes and disease mechanisms. The principal value of multi-omics lies in integration: the computational and statistical harmonization of these distinct data types. While each omic layer provides valuable insights alone, their integration can reveal novel cell subtypes, regulatory interactions, and pathways that are not detectable when analyzing layers in isolation [16] [12]. This is because biological components operate within a highly interconnected network; for instance, a genetic variant (genomics) might influence how a gene is regulated (epigenomics), affecting its expression (transcriptomics) and ultimately the abundance of its corresponding protein (proteomics). Multi-omics integration serves to disentangle these complex, causal relationships to properly capture cellular phenotype [16].

However, integrating these diverse datasets presents significant bioinformatics challenges. Each omic data type has a unique scale, statistical distribution, noise profile, and preprocessing requirements, making integration a complex task without a universal "one-size-fits-all" solution [16] [12]. This technical guide outlines the core data structures underpinning multi-omics integration, focusing on the critical distinctions between matched and unmatched, and horizontal and vertical integration strategies. Framing the integration problem through these lenses is a fundamental first step for researchers and drug development professionals designing robust, biologically meaningful multi-omics studies.

Core Data Structures and Integration Typologies

The strategy for integrating multi-omics data is profoundly influenced by the experimental design, specifically whether the same cell or sample was used to generate the different omics measurements. This leads to the primary distinction between matched and unmatched data, which in turn dictates the computational approach, often categorized as horizontal, vertical, or diagonal integration.

Matched vs. Unmatched Data

The concepts of matched and unmatched data define the fundamental structure of the input data for integration tools.

- Matched Data: In this structure, multi-omics profiles are measured from the same cell or the same set of samples. For example, single-cell technologies can simultaneously profile the transcriptome (RNA) and epigenome (ATAC-seq) from within a single cell [16] [12]. This design keeps the biological context consistent and allows for the direct investigation of non-linear relationships between molecular modalities within the same biological unit.

- Unmatched Data: Here, the different omics data types are generated from different, unpaired cells or samples. This could involve integrating transcriptomic data from one set of cells with proteomic data from a different set of cells from the same tissue, or even from different studies altogether [16]. Such data are easier to generate experimentally but present a greater computational challenge for integration.

Horizontal, Vertical, and Diagonal Integration

These terms describe the computational strategies used to merge the data based on its structure.

- Vertical Integration: This strategy is used for matched data. It merges data from different omics layers (e.g., RNA, DNA methylation, chromatin accessibility) within the same set of samples. The sample or cell itself is used as a natural anchor to bring the different omic layers together [16] [12]. This is often considered the most desirable form of integration as it preserves the direct biological context from the same source.

- Horizontal Integration: This involves merging datasets of the same omic type across multiple different studies or batches. For instance, combining RNA-seq data from multiple experiments to increase statistical power. While a form of data integration, it is not considered true multi-omics integration and will not be a focus of this guide [16].

- Diagonal Integration: This is the most technically challenging form of integration and is applied to unmatched data. It involves integrating different omics types from different cells or different studies. Since the cell cannot be used as an anchor, the method must instead project cells into a co-embedded space or use prior biological knowledge to find commonalities between the disparate datasets [16].

The following diagram illustrates the logical relationships and workflows between these core data structures and integration types.

The table below provides a structured comparison of these integration approaches, including their defining characteristics, challenges, and example computational tools.

| Integration Type | Data Structure | Key Characteristic | Primary Challenge | Example Tools |
|---|---|---|---|---|
| Vertical Integration [16] [12] | Matched | The cell/sample is the anchor for integration. | Managing different data scales and noise ratios from the same cell. | MOFA+ [16], Seurat v4 [16], totalVI [16] |
| Diagonal Integration [16] | Unmatched | No common cell anchor; requires creating a shared latent space. | Finding biological commonality between cells from different populations/studies. | GLUE [16], LIGER [16], Pamona [16] |
| Mosaic Integration [16] | Partially Matched | Integrates datasets with various, overlapping omics combinations. | Leveraging sparse, overlapping measurements to create a unified representation. | StabMap [16], Cobolt [16], Bridge Integration [16] |
| Horizontal Integration [16] | Unmatched (Same Omics) | Merges the same omic type from multiple datasets. | Batch effect correction and data normalization. | (Not the focus of this guide) |
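The typology in the table can be expressed as a small decision rule. The sketch below is a simplification for illustration only: it assumes each dataset is a disjoint group of cells or samples described by the set of modalities profiled on it, and the function name `classify_integration` is hypothetical rather than part of any tool cited here.

```python
from typing import List, Set


def classify_integration(batches: List[Set[str]]) -> str:
    """Name the integration type for a list of disjoint cell/sample groups.

    Each element of `batches` is the set of modalities measured on one group.
    This encoding is an assumption made for illustration.
    """
    if not batches or any(len(b) == 0 for b in batches):
        raise ValueError("each batch must list at least one modality")

    all_modalities = set().union(*batches)

    # One omic type across every dataset: merging is a batch-correction task.
    if len(all_modalities) == 1:
        return "horizontal"
    # Every group carries every modality: the cell/sample is the anchor.
    if all(b == all_modalities for b in batches):
        return "vertical"
    # Each group carries a single modality and the modalities differ: no anchor.
    if all(len(b) == 1 for b in batches):
        return "diagonal"
    # Overlapping but incomplete modality combinations.
    return "mosaic"


print(classify_integration([{"rna", "atac"}]))                    # matched cells
print(classify_integration([{"rna"}, {"atac"}]))                  # unpaired cells
print(classify_integration([{"rna", "atac"}, {"rna"}, {"adt"}]))  # partial overlap
```

Real study designs are rarely this clean (for example, samples may be matched at the patient level but not at the cell level), so the rule is best read as a first-pass checklist rather than a classifier.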

Methodologies and Experimental Protocols

Selecting the appropriate computational method is critical for successful multi-omics integration. The choice depends on the data structure (matched or unmatched) and the specific biological question. The following workflow chart outlines a structured decision-making process for selecting and applying an integration method, from data input to biological validation.

Protocols for Key Integration Methods

Below are detailed methodologies for three prominent multi-omics integration tools, each representing a different computational approach.

MOFA+ (Multi-Omics Factor Analysis)

- Methodology Type: Unsupervised, probabilistic factor analysis (Bayesian framework) [12].

- Core Protocol:

  - Input: MOFA+ accepts multiple matched omics data matrices (e.g., mRNA, DNA methylation, chromatin accessibility) from the same set of samples [16] [12].

  - Decomposition: The model decomposes each data matrix into a set of latent factors (shared across all omics) and weight matrices (specific to each omics modality), plus a residual noise term [12].

  - Training: It is trained to infer the set of latent factors and weights that best explain the variance in the observed multi-omics data.

  - Output: The result is a low-dimensional representation where each factor captures an independent source of variation across the datasets. Researchers can then analyze how much variance each factor explains in each omics modality and associate factors with sample phenotypes [12].

- Ideal Use Case: Exploratory analysis of matched multi-omics data to identify major, unlabeled sources of biological and technical variation.
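The decomposition step can be written compactly. The symbols below are notation chosen here for illustration (they are not defined elsewhere in this guide): with $N$ samples, $M$ omics modalities, $D_m$ features in modality $m$, and $K$ latent factors,

```latex
\mathbf{Y}^{(m)} = \mathbf{Z}\,\mathbf{W}^{(m)\top} + \boldsymbol{\varepsilon}^{(m)},
\qquad m = 1, \dots, M,
```

where $\mathbf{Y}^{(m)} \in \mathbb{R}^{N \times D_m}$ is the data matrix for modality $m$, $\mathbf{Z} \in \mathbb{R}^{N \times K}$ holds the latent factors shared across all modalities, $\mathbf{W}^{(m)} \in \mathbb{R}^{D_m \times K}$ holds the modality-specific weights, and $\boldsymbol{\varepsilon}^{(m)}$ is the residual noise term. Because $\mathbf{Z}$ is shared while each $\mathbf{W}^{(m)}$ is not, a factor whose weights are near zero in a given modality explains little variance there.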

GLUE (Graph-Linked Unified Embedding)

- Methodology Type: Unsupervised, graph-based variational autoencoder [16].

- Core Protocol:

  - Input: GLUE is designed for unmatched integration and can handle multiple omics layers (e.g., chromatin accessibility, DNA methylation, mRNA) [16].

  - Prior Knowledge: A key innovation is the use of a prior knowledge graph that defines known biological relationships between features across omics layers (e.g., linking a transcription factor to its target genes) [16].

  - Integration: The model uses a graph variational autoencoder to learn a co-embedded space for cells from different modalities. The prior knowledge graph acts as a guide to "link" the omics and align the cells meaningfully.

  - Output: A unified low-dimensional embedding of all cells from all omics types, enabling joint analysis such as clustering and trajectory inference on unmatched data [16].

- Ideal Use Case: Integrating multiple unpaired omics datasets (diagonal integration) where a reliable prior knowledge base is available.
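To make the idea of a prior knowledge graph concrete, the sketch below links chromatin accessibility peaks to genes by genomic proximity. This is an illustrative assumption about how such a graph might be populated, not GLUE's API: the function name `link_peaks_to_genes`, the 2 kb window, and the toy coordinates are all hypothetical.

```python
from typing import Dict, List, Tuple

Gene = Tuple[str, int]        # (chromosome, transcription start site)
Peak = Tuple[str, int, int]   # (chromosome, start, end)


def link_peaks_to_genes(
    genes: Dict[str, Gene],
    peaks: Dict[str, Peak],
    window: int = 2000,
) -> List[Tuple[str, str]]:
    """Return (gene, peak) edges where the peak lies within `window` bp of the TSS."""
    edges = []
    for gene, (g_chrom, tss) in genes.items():
        for peak, (p_chrom, start, end) in peaks.items():
            # An edge encodes the prior belief that the peak may regulate the gene.
            if g_chrom == p_chrom and start - window <= tss <= end + window:
                edges.append((gene, peak))
    return edges


# Toy coordinates, invented for illustration.
genes = {"GENE_A": ("chr1", 1000), "GENE_B": ("chr2", 5000)}
peaks = {
    "peak1": ("chr1", 900, 1100),
    "peak2": ("chr1", 50000, 50500),
    "peak3": ("chr2", 6000, 6500),
}
print(link_peaks_to_genes(genes, peaks))
```

The resulting edge list is the kind of cross-layer feature graph that a graph-guided model can use to relate an RNA feature space to an ATAC feature space. The nested loop is quadratic and only suitable for toy inputs; interval-based lookups would be needed at genome scale.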

SNF (Similarity Network Fusion)

- Methodology Type: Unsupervised, network-based method [12].

- Core Protocol:

  - Input: SNF operates on multiple omics data matrices.

  - Network Construction: Instead of merging raw data, SNF first constructs a sample-similarity network for each individual omics dataset. In these networks, nodes represent samples, and edges represent the similarity between samples (e.g., based on Euclidean distance) [12].

  - Fusion: These datatype-specific networks are then fused into a single, consolidated network using a non-linear process that iteratively updates each network based on the information from the others.

  - Output: A fused network that captures complementary information from all omics layers. This network can be used for downstream analyses like clustering patients into integrative subtypes [12].

- Ideal Use Case: Integrating data from different omics to discover disease subtypes or patient subgroups based on multiple layers of molecular information.
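The network construction and fusion steps can be sketched in NumPy. This is a simplified illustration of the message-passing idea, not the reference SNF implementation: the neighbourhood size `k`, the kernel parameter `mu`, the iteration count, and all function names are assumptions chosen for the example.

```python
import numpy as np


def affinity(X, k=3, mu=0.5):
    """Sample-by-sample similarity from Euclidean distance with a local scale."""
    d = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    knn_mean = np.sort(d, axis=1)[:, 1:k + 1].mean(axis=1)  # skip self-distance
    eps = (knn_mean[:, None] + knn_mean[None, :] + d) / 3.0
    return np.exp(-d ** 2 / (mu * eps + 1e-12))


def full_kernel(W):
    """Row-normalised similarity with self-similarity fixed at 0.5."""
    W = W.copy()
    np.fill_diagonal(W, 0.0)
    P = W / (2.0 * W.sum(axis=1, keepdims=True))
    np.fill_diagonal(P, 0.5)
    return P


def local_kernel(W, k=3):
    """Keep only each sample's k strongest neighbours, then row-normalise."""
    W = W.copy()
    np.fill_diagonal(W, 0.0)
    idx = np.argsort(-W, axis=1)[:, :k]
    rows = np.arange(W.shape[0])[:, None]
    S = np.zeros_like(W)
    S[rows, idx] = W[rows, idx]
    return S / S.sum(axis=1, keepdims=True)


def snf(views, k=3, mu=0.5, iters=10):
    """Fuse per-omics similarity networks; `views` are samples x features arrays."""
    if len(views) < 2:
        raise ValueError("fusion needs at least two omics views")
    Ws = [affinity(X, k, mu) for X in views]
    Ps = [full_kernel(W) for W in Ws]
    Ss = [local_kernel(W, k) for W in Ws]
    for _ in range(iters):
        updated = []
        for v in range(len(Ps)):
            others = [Ps[u] for u in range(len(Ps)) if u != v]
            avg_others = sum(others) / len(others)
            # Diffuse the other networks' information through this view's local structure.
            updated.append(full_kernel(Ss[v] @ avg_others @ Ss[v].T))
        Ps = updated
    fused = sum(Ps) / len(Ps)
    return (fused + fused.T) / 2.0  # enforce symmetry
```

The fused matrix can then be passed to a clustering method such as spectral clustering to obtain integrative subtypes. All views must list the same samples in the same row order.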

Successful multi-omics research relies on both computational tools and high-quality biological data. The following table details key resources mentioned in this guide.

| Resource / Tool Name | Type | Primary Function in Multi-Omics | Reference |
|---|---|---|---|
| MOFA+ | Computational Tool / R Package | Unsupervised integration of matched multi-omics data using factor analysis to identify latent sources of variation. | [16] [12] |
| Seurat v4/v5 | Computational Tool / R Package | A comprehensive toolkit for single-cell analysis, including weighted nearest-neighbor (WNN) methods for vertical integration and bridge integration for unmatched data. | [16] |
| GLUE (Graph-Linked Unified Embedding) | Computational Tool / Python Package | Unsupervised integration of unmatched multi-omics data using a graph-guided variational autoencoder. | [16] |
| TCGA (The Cancer Genome Atlas) | Public Data Repository | A vast resource of publicly available multi-omic data (RNA-Seq, DNA-Seq, methylation) across many tumor types, used for robust, large-scale analyses. | [12] |
| Omics Playground | Integrated Analysis Platform | A code-free platform that provides multiple state-of-the-art integration methods (like MOFA and SNF) and visualization capabilities for multi-omics data analysis. | [12] |

The strategic integration of multi-omics data is a powerful paradigm for advancing biomedical research and drug development. The initial and most critical step in this process is understanding and defining the underlying data structure—whether it is matched or unmatched—as this directly dictates the applicable integration strategy, be it vertical or diagonal. While vertical integration of matched data is often more straightforward and provides direct correlative power within a single cell, real-world constraints frequently necessitate the use of more complex diagonal and mosaic integration methods for unmatched data.

As the field continues to evolve, the development of more sophisticated computational tools that can leverage prior biological knowledge, handle missing data, and provide interpretable results will be crucial. For researchers, the path forward involves careful experimental planning to maximize data compatibility, coupled with a reasoned selection of integration methods that align with both their data structure and biological objectives. By systematically applying the principles of data structures and integration typologies outlined in this guide, scientists can more effectively unlock the profound insights hidden within coordinated multi-omics datasets.

The landscape of disease research and therapeutic development is undergoing a fundamental transformation, shifting from a traditional, symptom-focused approach to a molecular-driven, systems-level understanding. This paradigm shift is powered by multi-omics—the integrated analysis of diverse biological datasets spanning the genome, epigenome, transcriptome, proteome, and metabolome [17] [8]. Where single-omics approaches could only provide a fragmented view, multi-omics integration delivers a holistic picture of the complex molecular interactions that underlie health and disease. This comprehensive perspective is critical for uncovering robust biomarkers and designing personalized treatment strategies that align with an individual's unique molecular profile [18] [19].

The central thesis of this whitepaper is that the effective collection, integration, and interpretation of multi-omics data serve as the foundation for modern biomedical research, directly linking biomarker discovery to clinically actionable insights. The journey from data to therapy faces significant challenges, including the "tar pit" of biomarker validation, where countless candidates fail to achieve clinical utility [20]. However, by employing a structured framework for multi-omics data integration, researchers can systematically bridge this gap, thereby accelerating the development of precision medicine [18] [17]. This guide will detail the key biological insights, computational strategies, and experimental protocols that are defining the future of biomarker discovery and personalized treatment.

Computational Strategies for Multi-Omics Data Integration

The immense volume and heterogeneity of multi-omics data necessitate sophisticated computational methods for integration and interpretation. These methods can be broadly categorized based on their approach to data synthesis and their intended analytical objectives.

Integration Methodologies and Analytical Objectives

The choice of integration strategy is heavily influenced by the specific scientific question at hand. Studies aiming to identify patient subtypes or discover disease-associated patterns often employ intermediate integration methods that learn a joint representation from multiple omics datasets [18]. These approaches are particularly powerful for finding co-varying features across molecular layers that define distinct disease subgroups with prognostic or therapeutic implications. For objectives such as understanding regulatory mechanisms or predicting drug response, other methods, including network-based integration or knowledge-driven approaches, may be more appropriate [18] [19]. These techniques often leverage prior biological knowledge to connect disparate omics findings into a coherent model of disease pathophysiology.

Table 1: Multi-Omics Data Repositories for Biomarker Discovery

| Repository Name | Primary Focus | Available Omics Data Types | Key Utility |
|---|---|---|---|
| The Cancer Genome Atlas (TCGA) [19] | Pan-Cancer | Genomics, Transcriptomics, Epigenomics, Proteomics | Molecular profiling of 33 cancer types; foundational for cancer biomarker discovery. |
| Clinical Proteomic Tumor Analysis Consortium (CPTAC) [17] [19] | Cancer Proteomics | Proteomics, Post-translational Modifications | Provides proteomic data correlated with TCGA genomic cohorts. |
| International Cancer Genome Consortium (ICGC) [19] | Pan-Cancer Genomics | Whole Genome Sequencing, Somatic/Germline Mutations | Catalogs genomic alterations across cancer types and ethnicities. |
| Cancer Cell Line Encyclopedia (CCLE) [19] | Preclinical Models | Gene Expression, Copy Number, Drug Response | Facilitates in vitro validation of biomarker candidates and drug sensitivity testing. |
| Answer ALS [18] | Neurodegenerative Disease | Genomics, Transcriptomics, Epigenomics, Proteomics | Integrated omics and deep clinical data for Amyotrophic Lateral Sclerosis. |

Workflow for Multi-Omics Data Processing

A standardized workflow is essential for transforming raw multi-omics data into reliable biological insights. The process typically involves sequential stages of data acquisition, preprocessing, integration, and model interpretation [21]. The initial preprocessing and quality control stage is critical, as it addresses the technical variability and noise inherent in high-throughput technologies, ensuring that downstream analyses are based on clean, standardized data [17] [22]. Following this, intra-omics harmonization aligns data from different platforms or studies, while inter-omics integration seeks to find statistical and biological relationships across the different molecular layers [17].

The following diagram illustrates a generalized logical workflow for a multi-omics biomarker discovery project, from data collection to clinical application.

The Biomarker Discovery Pipeline: From Candidates to Clinical Application

The biomarker discovery pipeline is a multi-stage, rigorous process designed to systematically identify and verify measurable indicators of biological processes or therapeutic responses.

Pipeline Stages and Key Methodologies

The pipeline can be conceptualized in three core stages [21]. First, high-quality biological samples are acquired and multi-omics data are generated. Second, the data undergo extensive preprocessing and feature extraction, with AI/ML models used to identify meaningful molecular patterns [17] [21]. The third and most demanding stage is clinical validation, where biomarker candidates are tested for reliability, sensitivity, and specificity across large, diverse patient populations to confirm their clinical utility [20] [21].

A persistent challenge is the high attrition rate, with only about 0.1% of published biomarker candidates progressing to routine clinical use [23]. This bottleneck is most pronounced in the verification stage, where the transition from discovery to validation requires reliable assays to credential candidates before costly large-scale clinical trials [20].

Experimental Protocols for Biomarker Verification

Advancements in analytical technologies are crucial for overcoming the verification bottleneck. While traditional methods like ELISA have been the gold standard, newer platforms offer superior performance.

Table 2: Key Technologies for Biomarker Verification and Validation

| Technology / Reagent | Function | Key Advantage | Considerations |
|---|---|---|---|
| LC-MS/MS (Liquid Chromatography Tandem Mass Spectrometry) [23] | Targeted proteomics; quantification of specific proteins/peptides. | High specificity and sensitivity; ability to detect low-abundance species. | Requires expertise; complex data analysis. |
| MSD (Meso Scale Discovery) U-PLEX [23] | Multiplexed immunoassay for simultaneous analyte measurement. | High dynamic range & sensitivity; cost-effective for multiple analytes. | Dependent on antibody quality. |
| Next-Generation Sequencing (NGS) [17] | Genome/Transcriptome-wide profiling for mutation and expression analysis. | Provides comprehensive view of genetic and transcriptomic alterations. | Data volume and storage challenges. |
| Reverse Phase Protein Array (RPPA) [19] | High-throughput antibody-based protein quantification. | Allows profiling of known proteins and signaling phospho-proteins. | Limited to available antibodies. |

Detailed Protocol: Biomarker Verification using LC-MS/MS and MSD

A fit-for-purpose validation protocol must be established, tailored to the biomarker's intended clinical use [23].

- Sample Preparation: For LC-MS/MS, proteins are extracted from biofluids (e.g., plasma) or tissues and digested into peptides using a protease like trypsin. For MSD assays, samples are typically diluted in a specific buffer provided in the kit.

- Assay Configuration: For LC-MS/MS, stable isotope-labeled synthetic peptides (SIS peptides) are spiked into the sample as internal standards for precise quantification. For MSD, a U-PLEX plate coated with capture antibodies is used.

- Analysis and Quantification: In LC-MS/MS, peptides are separated by liquid chromatography and analyzed by mass spectrometry, monitoring specific ion transitions (MRM or PRM). The ratio of the native peptide to the SIS peptide provides absolute quantification. In MSD, after incubation with detection antibodies, the plate is read using an MSD instrument that measures electrochemiluminescence signal, which is proportional to analyte concentration.

- Validation Parameters: The assay must be characterized for:

  - Specificity: Ability to accurately measure the target analyte in the presence of similar compounds.

  - Sensitivity (LLOQ): The lowest concentration that can be quantified with acceptable precision and accuracy.

  - Precision and Accuracy: Intra- and inter-assay reproducibility and closeness to the true value.

  - Dynamic Range: The range of concentrations over which the assay provides a linear response.
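The quantification and precision/accuracy steps above reduce to a few lines of arithmetic. The sketch below assumes single-point quantification in which the native and SIS peptides respond identically, and it uses made-up peak areas; the function names are hypothetical, and acceptance limits are deliberately left out because they depend on the fit-for-purpose criteria of each study.

```python
import statistics


def concentration_from_ratio(native_area, sis_area, sis_concentration):
    """Analyte concentration = (native / SIS peak-area ratio) x spiked SIS concentration."""
    return native_area / sis_area * sis_concentration


def percent_cv(values):
    """Precision: coefficient of variation across replicate measurements, in percent."""
    return statistics.stdev(values) / statistics.mean(values) * 100.0


def percent_bias(values, nominal):
    """Accuracy: deviation of the replicate mean from the nominal value, in percent."""
    return (statistics.mean(values) - nominal) / nominal * 100.0


# Illustrative numbers only: SIS peptide spiked at 5.0 (arbitrary concentration units).
replicate_areas = [(1960.0, 1000.0), (2000.0, 1000.0), (2040.0, 1000.0)]
replicates = [concentration_from_ratio(n, s, 5.0) for n, s in replicate_areas]

print([round(c, 2) for c in replicates])
print(f"CV = {percent_cv(replicates):.1f}%")
print(f"bias vs nominal 10.0 = {percent_bias(replicates, 10.0):.1f}%")
```

Running the same calculations on replicates within one run and across runs gives the intra- and inter-assay figures, respectively. In practice a multi-point calibration curve is often preferred over the single-point ratio shown here.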

Application in Personalized Oncology: A Case Study in Laryngeal Cancer

The integration of multi-omics data is revolutionizing oncology by enabling molecularly guided patient stratification and treatment. Laryngeal squamous cell carcinoma (LSCC) serves as a compelling case study.

Key Genetic Drivers and Dysregulated Pathways

Comprehensive molecular profiling of LSCC has identified recurrent genetic alterations that drive tumorigenesis and serve as potential biomarkers and therapeutic targets. Key among these are mutations in the tumor suppressor gene TP53 (occurring in up to 70% of cases), which are associated with poor prognosis and therapy resistance [24]. Other frequently altered genes include CDKN2A, a tumor suppressor whose loss promotes uncontrolled cell cycle progression, and PIK3CA, whose mutations lead to hyperactivation of the PI3K/AKT/mTOR pro-survival and proliferation pathway, making it a compelling therapeutic target [24]. Furthermore, alterations in NOTCH1 and epigenetic changes, such as promoter methylation of MGMT, have been identified as key players, with the latter also serving as a predictive biomarker for response to temozolomide in glioblastoma, highlighting a translatable insight [17] [24].

The following diagram summarizes the key signaling pathways and their interactions in the context of LSCC, illustrating potential therapeutic targets.

Integrating Biomarkers for Personalized Treatment Strategies

The ultimate goal of multi-omics profiling is to inform clinical decision-making. In LSCC, biomarker integration enables personalized strategies across several domains:

- Prognostic Stratification: Combining TP53 mutation status with CDKN2A loss and high-risk gene expression signatures can identify patients with aggressive disease who may benefit from more intensive or novel treatment regimens [24].

- Predictive Biomarkers for Therapy Selection: The presence of PD-L1 expression, high Tumor Mutational Burden (TMB), and specific immune cell infiltrates in the tumor microenvironment can predict response to immune checkpoint inhibitors (e.g., anti-PD-1/PD-L1 antibodies) [24]. TMB, for instance, has been validated as a predictive biomarker for pembrolizumab across solid tumors [17].

- Targeted Therapy Guidance: Identifying specific driver alterations, such as PIK3CA mutations, opens the door for targeted therapies, including PI3K or AKT inhibitors, within clinical trials or off-label use [24].

Persistent Challenges in Clinical Translation

Despite its promise, the translation of multi-omics insights into validated biomarkers and routine clinical practice faces significant hurdles. Data heterogeneity from different omics platforms and studies complicates integration and requires sophisticated harmonization [17] [8]. The "small n, large p" problem—where the number of features (genes, proteins) vastly exceeds the number of patient samples—poses a major statistical challenge for robust biomarker discovery [21]. Furthermore, issues of analytical variability and a lack of reproducibility across labs undermine the validation process [21]. Finally, navigating ethical considerations, data privacy, and establishing clear data governance frameworks are essential for fostering the large-scale collaboration needed to validate biomarkers across diverse populations [8] [21].

The Future Multi-Omics Toolkit

Emerging technologies and approaches are poised to address these challenges and deepen our biological insights. Single-cell and spatial multi-omics technologies are revolutionizing our understanding of tumor heterogeneity and the tumor microenvironment by allowing molecular profiling at the individual cell level within its spatial context [17] [8]. The synergy between multi-omics and Artificial Intelligence (AI) and Machine Learning (ML) is powerful; AI models can detect complex, non-linear patterns in high-dimensional datasets that are beyond human discernment, improving target identification and drug response prediction [17] [8]. Finally, the adoption of FAIR (Findable, Accessible, Interoperable, Reusable) data principles and open-source pipelines, such as the Digital Biomarker Discovery Pipeline (DBDP), promotes standardization, transparency, and collaboration, which are critical for accelerating the entire biomarker development pipeline [21].

The integration of multi-omics data represents a fundamental advancement in our approach to understanding and treating complex diseases. By systematically connecting molecular profiles from multiple biological layers to clinical phenotypes, researchers can uncover key biological insights that drive the discovery of robust biomarkers and the design of personalized treatment strategies. While challenges in data integration, validation, and clinical implementation remain, the continued evolution of computational methods, analytical technologies, and collaborative frameworks is steadily bridging the gap between biomarker discovery and patient benefit. As this field matures, multi-omics will undoubtedly become an indispensable component of a future where medicine is not only personalized but also predictive and preventive.

Multi-Omics Integration Strategies: From Statistical Models to AI-Driven Approaches

In the field of multi-omics research, data integration is a critical step for achieving a holistic understanding of complex biological systems. Integration models, primarily categorized into early, intermediate, and late fusion, provide structured methodologies for combining diverse omics data types, such as genomics, transcriptomics, proteomics, and metabolomics [25]. These strategies enable researchers to uncover interactions across different molecular layers that are often invisible when analyzing single omics datasets in isolation [25]. The choice of fusion strategy directly impacts the biological insights gained, influencing everything from cancer subtyping and biomarker discovery to personalized treatment selection [25] [26]. This guide provides a technical overview of these core integration models, their applications, and implementation protocols for a research audience.

Core Fusion Strategies

The three primary fusion strategies—early, intermediate, and late—differ based on the stage at which data from multiple omics sources are integrated. The following table summarizes their key characteristics, advantages, and challenges.

Table 1: Comparison of Multi-Omics Data Fusion Strategies

| Feature | Early Fusion (Data-Level) | Intermediate Fusion (Feature-Level) | Late Fusion (Decision-Level) |
|---|---|---|---|
| Integration Stage | Combines raw or pre-processed data from different omics platforms before model input [25]. | Integrates learned features or patterns from each omics layer for joint analysis [25]. | Combines predictions or decisions from models trained independently on each omics modality [25] [26]. |
| Key Methodology | Principal Component Analysis (PCA), Canonical Correlation Analysis (CCA) [25]. | Network-based methods, multi-omics factor analysis (MOFA), DIABLO [25] [12]. | Weighted voting, weighted averaging, machine learning-based fusion [25] [26]. |
| Advantages | Discovers novel cross-omics patterns; preserves maximum information [25]. | Balances information retention and computational feasibility; allows incorporation of biological knowledge [25]. | Robust against noise in individual omics layers; handles missing data well; modular and interpretable workflow [25] [26]. |
| Disadvantages | High computational demand; requires sophisticated pre-processing to handle data heterogeneity [25] [12]. | May miss subtle raw-level interactions; complex biological interpretation [25]. | Might miss subtle cross-omics interactions present in the raw data [25]. |
| Ideal Use Case | Hypothesis-free discovery of novel, complex patterns across omics layers. | Balanced analysis leveraging feature selection for large-scale studies. | Clinical settings with potential for missing data, or when interpretability of each omics layer is key. |

The workflow for selecting and applying these fusion strategies can be visualized as follows:

Detailed Methodologies and Experimental Protocols

Early Fusion (Data-Level Fusion)

Early fusion involves concatenating or merging raw or pre-processed data from different omics sources into a single, combined dataset before analysis [25]. The key to successful early fusion lies in robust preprocessing to manage the high heterogeneity of multi-omics data.

Experimental Protocol:

- Data Normalization: Apply omics-specific normalization techniques to each dataset individually (e.g., quantile normalization for RNA-Seq, z-score standardization for proteomics) to make values comparable across platforms [25] [12].

- Feature Space Alignment: Use dimensionality reduction techniques like Principal Component Analysis (PCA) or Canonical Correlation Analysis (CCA) on the normalized data to project different omics modalities into a shared feature space [25].

- Data Concatenation: Combine the top principal components or canonical variates from each omics dataset into a unified feature matrix.

- Model Training: Input the combined matrix into a machine learning model (e.g., random forest, support vector machine, or deep neural network) for classification or regression tasks.
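The normalization, projection, and concatenation steps can be sketched with NumPy alone. As a simplifying assumption, every block is z-scored here (the protocol above suggests omics-specific normalization), PCA is computed through an SVD, and the function names are hypothetical.

```python
import numpy as np


def zscore(X):
    """Standardise each feature (column) to zero mean and unit variance."""
    sd = X.std(axis=0)
    sd[sd == 0] = 1.0  # leave constant features at zero instead of dividing by 0
    return (X - X.mean(axis=0)) / sd


def top_pcs(X, n_components):
    """Principal-component scores of X (samples x features) via SVD."""
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    return U[:, :n_components] * s[:n_components]


def early_fusion_matrix(blocks, n_components=2):
    """Concatenate the leading PCs of each omics block into one feature matrix.

    All blocks must list the same samples in the same row order.
    """
    return np.hstack([top_pcs(zscore(X), n_components) for X in blocks])


# Random stand-in data: 8 samples, 50 transcripts and 20 proteins.
rng = np.random.default_rng(1)
rna = rng.normal(size=(8, 50))
protein = rng.normal(size=(8, 20))
fused = early_fusion_matrix([rna, protein], n_components=2)
print(fused.shape)  # (8, 4)
```

The fused matrix is what would be passed to the classifier or regressor in the model training step. To avoid information leakage, the normalization statistics and principal axes should be estimated on training samples only and then applied to held-out samples, which this sketch does not do.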

Intermediate Fusion (Feature-Level Fusion)

Intermediate fusion first transforms each omics dataset into a set of relevant features or latent representations, which are then integrated. This approach effectively reduces dimensionality while preserving cross-omics interactions.

Experimental Protocol using MOFA+:

- Individual Data Processing: Normalize and preprocess each omics dataset (e.g., RNA-Seq, DNA methylation) separately to handle technical noise and batch effects [25] [12].

- Model Application: Input the processed data matrices into the MOFA+ (Multi-Omics Factor Analysis) framework. MOFA+ is an unsupervised Bayesian model that infers a set of latent factors that capture the principal sources of variation across all omics datasets [12].

- Variance Decomposition: Analyze the model output to determine the variance explained by each factor in each omics modality. This identifies factors that are shared across omics layers and those that are dataset-specific.

- Biological Interpretation: Correlate the inferred factors with known sample phenotypes (e.g., disease status, survival) and use functional enrichment analysis on the highly weighted features (genes, proteins) in significant factors to derive biological insights [12].
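The variance decomposition step can be illustrated with a short calculation. The sketch assumes that a factor matrix `Z` (samples x factors) and a weight matrix `W` (features x factors) for one modality have already been obtained from a fitted model; it computes a per-factor coefficient of determination and does not reproduce the MOFA+ package interface.

```python
import numpy as np


def variance_explained(Y, Z, W):
    """Fraction of variance in one omics matrix explained by each factor.

    Y: samples x features, Z: samples x factors, W: features x factors.
    Returns one R^2 value per factor.
    """
    Yc = Y - Y.mean(axis=0)
    total = (Yc ** 2).sum()
    r2 = []
    for k in range(Z.shape[1]):
        reconstruction = np.outer(Z[:, k], W[:, k])
        residual = ((Yc - reconstruction) ** 2).sum()
        r2.append(1.0 - residual / total)
    return np.array(r2)


# Toy example: factor 0 drives the RNA view, factor 1 has zero weights in it.
rng = np.random.default_rng(2)
Z = rng.normal(size=(20, 2))
Z -= Z.mean(axis=0)
W_rna = np.zeros((5, 2))
W_rna[:, 0] = rng.normal(size=5)
Y_rna = Z @ W_rna.T

print(variance_explained(Y_rna, Z, W_rna).round(3))
```

Repeating the calculation for every modality yields a factors-by-modalities table: a factor with high values in several columns is shared across omics layers, whereas a factor with a high value in a single column is specific to that dataset.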

Late Fusion (Decision-Level Fusion)

Late fusion involves training separate models on each omics dataset and then combining their predictions. This method is highly flexible and robust to missing modalities.

Experimental Protocol for NSCLC Subtyping:

This protocol is based on a study that achieved high performance (AUC > 0.99) in classifying Non-Small Cell Lung Cancer (NSCLC) subtypes [26].

- Independent Model Training: Train a specialized machine learning model for each available omics modality (e.g., a CNN for whole-slide images, a Random Forest for RNA-Seq data, an SVM for miRNA-Seq) [26].

- Prediction Generation: Each model outputs a set of probabilities for the sample belonging to each class (e.g., LUAD, LUSC, control).

- Fusion Weight Optimization: Instead of simple averaging, use an optimization algorithm (e.g., gradient descent) to learn the optimal weights for combining the probability outputs from each model. The objective is to minimize the classification error on a validation set [26].

- Final Decision Making: Compute the weighted sum of the probabilities from all models and assign the sample to the class with the highest fused probability score.
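The weight optimization and final decision steps can be sketched as follows. This is an illustrative assumption about one way to learn fusion weights, not the cited study's implementation: the weights are parameterized through a softmax so they stay positive and sum to one, and cross-entropy on a validation set is used as a differentiable stand-in for classification error. The function names and hyperparameters are hypothetical.

```python
import numpy as np


def fuse(probs, weights):
    """Weighted sum of per-model class probabilities.

    probs: (n_models, n_samples, n_classes); weights: (n_models,).
    """
    return np.tensordot(weights, probs, axes=1)


def learn_fusion_weights(probs, y, lr=0.5, steps=500):
    """Gradient descent on validation cross-entropy over softmax-parameterised weights."""
    theta = np.zeros(probs.shape[0])
    idx = np.arange(len(y))
    for _ in range(steps):
        w = np.exp(theta - theta.max())
        w /= w.sum()
        p_true = fuse(probs, w)[idx, y]  # fused probability of the true class
        # Gradient of the mean negative log-likelihood with respect to each weight.
        grad_w = -(probs[:, idx, y] / (p_true + 1e-12)).mean(axis=1)
        # Chain rule through the softmax parameterisation.
        theta -= lr * w * (grad_w - np.dot(w, grad_w))
    w = np.exp(theta - theta.max())
    return w / w.sum()


# Toy validation set: model 0 is informative, model 1 always outputs 50/50.
y_val = np.array([0, 1, 0, 1])
informative = np.where(np.eye(2)[y_val] == 1, 0.9, 0.1)
uninformative = np.full((4, 2), 0.5)
probs_val = np.stack([informative, uninformative])

weights = learn_fusion_weights(probs_val, y_val)
predictions = fuse(probs_val, weights).argmax(axis=1)
print(weights.round(3), predictions)
```

On this toy input the informative model receives the larger weight. With real data the weights should be learned on a validation split that is separate from both the training data of the individual models and the final test set.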

The data flow and model architecture for this late fusion approach are illustrated below:

The Scientist's Toolkit: Key Research Reagents and Computational Solutions

Successful implementation of multi-omics fusion strategies relies on a suite of computational tools and resources. The following table details essential "research reagents" for the field.

Table 2: Essential Computational Tools for Multi-Omics Data Integration

| Tool/Solution Name | Type/Function | Key Utility in Multi-Omics Research |
|---|---|---|
| MOFA+ [12] | Software Package (R/Python) | An unsupervised Bayesian method for factor analysis that identifies latent factors representing shared and specific variations across multiple omics datasets. |
| DIABLO [12] | Software Package (R mixOmics) | A supervised integration method designed for biomarker discovery, identifying features highly correlated across omics datasets and predictive of a phenotype. |
| Similarity Network Fusion (SNF) [12] | Computational Algorithm | Constructs sample-similarity networks for each data type and then fuses them into a single network that captures complementary information. |
| Omics Playground [12] | Integrated Bioinformatics Platform | Provides a code-free interface with multiple state-of-the-art integration methods (including MOFA and SNF) and extensive visualization capabilities. |
| Cloud & Hybrid Computing Infrastructures [27] | Data Infrastructure | Scalable computational platforms (e.g., cloud services) essential for handling the storage and processing demands of large, heterogeneous multi-omics datasets. |
| TensorFlow/PyTorch | Deep Learning Frameworks | Enable the building of custom deep learning models for fusion, including autoencoders for intermediate fusion and neural networks for late fusion [26] [28]. |

Performance Comparison and Application Insights

The performance of fusion strategies is highly context-dependent. The following table synthesizes quantitative results from real-world studies, highlighting the superior performance of integrated approaches over single-omics methods.

Table 3: Performance Comparison of Fusion Strategies in Biomedical Applications

| Application Context | Fusion Strategy | Reported Performance | Key Insight |
|---|---|---|---|
| NSCLC Subtype Classification [26] | Late Fusion (5 modalities: RNA-Seq, miRNA-Seq, WSI, CNV, DNA methylation) | AUC: 0.993, F1-score: 96.81% | Late fusion of multiple modalities significantly outperformed results from any single modality, improving diagnostic precision. |
| Cancer Subtyping (Pan-Cancer) [25] | Multi-Omics Integration (various strategies) | Major improvement in classification accuracy vs. single-omics | Integrated approaches consistently show superior performance for classifying cancer subtypes across multiple cancer types. |
| Alzheimer's Disease Diagnosis [25] | Multi-Omics Signatures | Diagnostic accuracy >95% (in some studies) | Integrated multi-omics signatures significantly outperformed single-biomarker methods. |
| Prostate Cancer Classification [28] | Early Fusion (with CNNs) | Outperformed unimodal approaches | The fusion of clinical, imaging, and molecular data provided a more comprehensive understanding than any single data type. |

Early, intermediate, and late fusion strategies each offer distinct advantages for multi-omics data integration. The choice of strategy should be guided by the specific research question, data characteristics, and computational resources. Early fusion is powerful for uncovering novel patterns but is computationally intensive. Intermediate fusion strikes a balance, effectively reducing dimensionality while capturing biological interactions. Late fusion provides robustness and is particularly suited for clinical translation where model interpretability and handling missing data are crucial.

The future of multi-omics integration lies in the development of more sophisticated, explainable AI models and scalable computational infrastructures that can seamlessly combine these fusion strategies to accelerate the translation of molecular insights into clinical applications [25] [27].

The complexity of biological systems necessitates computational strategies that can integrate multiple layers of molecular information. Multi-omics integration methods have emerged as powerful tools to address this challenge, moving beyond single-omics analyses to provide a holistic view of biological processes and disease mechanisms. These methods enable researchers to disentangle coordinated sources of variation across different molecular layers, including genome, epigenome, transcriptome, proteome, and metabolome [19]. By simultaneously analyzing multiple data modalities, these approaches can reveal interconnected biological networks that would remain hidden when examining individual omics layers in isolation.

The fundamental goal of multi-omics integration is to characterize heterogeneity between samples as manifested across multiple data modalities, particularly when the relevant axes of variation are not known a priori [29]. These methods help bridge the gap from genotype to phenotype by assessing the flow of information from one omics level to another, thereby providing more comprehensive insights into the biological systems under study. Integrated approaches have demonstrated superior ability to improve prognostics and predictive accuracy of disease phenotypes compared to single-omics analyses, ultimately contributing to better treatment and prevention strategies [19].

This technical guide focuses on three prominent statistical and multivariate methods for multi-omics integration: MOFA+ (Multi-Omics Factor Analysis+), DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents), and MCIA (Multiple Co-Inertia Analysis). Each method offers distinct mathematical frameworks and is suited to different biological questions and experimental designs. Understanding their core principles, applications, and implementation requirements is essential for researchers seeking to leverage these powerful tools in their multi-omics research programs.

Core Principles and Mathematical Frameworks

MOFA+ is a statistical framework for the comprehensive and scalable integration of single-cell multi-modal data. It reconstructs a low-dimensional representation of the data using computationally efficient variational inference and supports flexible sparsity constraints, allowing researchers to jointly model variation across multiple sample groups and data modalities [30]. Intuitively, MOFA+ can be viewed as a statistically rigorous generalization of principal component analysis (PCA) to multi-omics data [29]. The model employs Automatic Relevance Determination (ARD), a hierarchical prior structure that facilitates untangling variation shared across multiple modalities from variability present in a single modality. The sparsity assumptions on the weights facilitate the association of molecular features with each factor, enhancing interpretability [30].

DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) is a supervised method that focuses on uncovering disease-associated multi-omic patterns [31]. As a generalization of Partial Least Squares Discriminant Analysis (PLS-DA) to multiple datasets, DIABLO identifies components that maximize covariance between omics datasets while simultaneously achieving optimal separation between predefined sample groups. This makes it particularly valuable for classification problems and biomarker discovery where the outcome variable is known. DIABLO constructs a correlation-based network that integrates multiple omics datasets to identify key variables that drive the separation between classes [31].

MCIA (Multiple Co-Inertia Analysis) is a multivariate method that extends co-inertia analysis to multiple datasets. It identifies successive orthogonal components that maximize the covariance between scores from different omics datasets, thereby revealing common structures across multiple data tables. MCIA operates by finding a consensus space in which the projections of all datasets have maximum variance while being as similar as possible. Unlike DIABLO, MCIA is unsupervised and does not require predefined sample classes, making it suitable for exploratory analysis of multi-omics datasets where class labels are unavailable or uncertain.
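The covariance-maximization idea shared by PLS-type methods and co-inertia analysis can be illustrated for the simplest case of two data tables. The sketch below is an assumption-laden simplification, not the DIABLO or MCIA algorithm: it uses unit row and column weights, handles only two blocks, and extracts only the first pair of components. For centered matrices, the leading singular vectors of the cross-product matrix give the unit-norm loadings whose sample scores have maximal covariance. The function name and the simulated data are hypothetical.

```python
import numpy as np

def first_covariance_components(X, Y):
    """Return scores, loadings and the leading singular value for two tables.

    X: (n_samples, p), Y: (n_samples, q), rows matched by sample.
    """
    Xc = X - X.mean(axis=0)
    Yc = Y - Y.mean(axis=0)
    U, s, Vt = np.linalg.svd(Xc.T @ Yc, full_matrices=False)
    u, v = U[:, 0], Vt[0]        # unit-norm loading vectors
    return Xc @ u, Yc @ v, u, v, s[0]

# Two simulated omics tables driven by one shared latent signal.
rng = np.random.default_rng(1)
n = 80
latent = rng.normal(size=n)
X = np.outer(latent, rng.normal(size=40)) + rng.normal(size=(n, 40))
Y = np.outer(latent, rng.normal(size=15)) + rng.normal(size=(n, 15))

tx, ty, u, v, s0 = first_covariance_components(X, Y)
print("covariance of scores:", np.cov(tx, ty)[0, 1])
print("correlation with latent signal:", abs(np.corrcoef(tx, latent)[0, 1]))
```

Supervised methods such as DIABLO add a class-label block and sparsity penalties on the loadings, and MCIA generalizes the same criterion to more than two tables through a consensus score.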

Comparative Analysis of Methodologies

Table 1: Comparative Analysis of MOFA+, DIABLO, and MCIA

| Feature | MOFA+ | DIABLO | MCIA |
|---|---|---|---|
| Analysis Type | Unsupervised | Supervised | Unsupervised |
| Primary Application | Identifying latent factors driving variation | Biomarker discovery and classification | Exploratory analysis of common structure |
| Data Structure | Multiple groups and views | Single group with multiple views | Multiple tables without group structure |
| Handling Missing Data | Explicitly designed to handle missing values | Requires complete cases or imputation | Requires complete cases or imputation |
| Scalability | High (GPU acceleration available) | Moderate | Moderate |
| Output | Latent factors with sample activities and feature weights | Integrated components and variable loadings | Common components and table projections |
| Interpretation | Variance decomposition by factor and view | Classification performance and variable selection | Variance explained across tables |

Table 2: Suitability for Different Research Objectives

| Research Objective | Recommended Method | Rationale |
|---|---|---|
| Exploratory Analysis | MOFA+ or MCIA | Unsupervised approach ideal for hypothesis generation |
| Biomarker Discovery | DIABLO | Supervised framework optimized for predictive biomarker identification |
| Patient Stratification | MOFA+ | Identifies latent factors that define patient subgroups |
| Temporal/Spatial Data | MOFA+ (MEFISTO extension) | Explicitly models temporal or spatial dependencies |
| Pathway Analysis | DIABLO or MOFA+ | Both provide feature weights for functional interpretation |

MOFA+ in Detail

Core Algorithm and Implementation

MOFA+ builds upon the Bayesian Group Factor Analysis framework, employing stochastic variational inference to enable the analysis of datasets with potentially millions of cells [30]. The model inputs consist of multiple datasets where features have been aggregated into non-overlapping sets of modalities (views) and where cells have been aggregated into non-overlapping sets of groups. During model training, MOFA+ infers K latent factors with associated feature weight matrices that explain the major axes of variation across the datasets [30].

The mathematical foundation of MOFA+ relies on a hierarchical Bayesian framework with group-wise sparsity priors. The model assumes that the observed data for each view can be approximated as a linear combination of the latent factors, with view-specific weights and additive noise. Letting \( X^{(m)} \) denote the data matrix for view m, the model can be represented as:

\[ X^{(m)} = Z W^{(m)T} + \epsilon^{(m)} \]

where Z is the matrix of latent factors, \( W^{(m)} \) is the weight matrix for view m, and \( \epsilon^{(m)} \) is the noise term. MOFA+ employs ARD priors over the weights to automatically determine the number of relevant factors and encourage sparsity, facilitating interpretability [30].
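To make the linear model concrete, the sketch below simulates two views from shared latent factors. It is an illustration of the generative assumption only, not of MOFA+ inference: the view names, dimensions, noise level, and the hand-set `active` masks (which mimic the effect of an ARD prior switching a factor off in a view) are all assumptions introduced here.

```python
import numpy as np

def simulate_views(n_samples, n_features, active, noise_sd=0.1, seed=0):
    """Simulate X^(m) = Z W^(m)T + noise for each view m.

    n_features: dict view -> number of features
    active:     dict view -> 0/1 array marking which factors are active in that view
    """
    rng = np.random.default_rng(seed)
    n_factors = len(next(iter(active.values())))
    Z = rng.normal(size=(n_samples, n_factors))  # latent factors, shared by all views
    W, X = {}, {}
    for view, d in n_features.items():
        # Zeroing a column of W removes that factor from the view entirely.
        W[view] = rng.normal(size=(d, n_factors)) * active[view]
        X[view] = Z @ W[view].T + noise_sd * rng.normal(size=(n_samples, d))
    return Z, W, X

Z, W, X = simulate_views(
    n_samples=100,
    n_features={"rna": 50, "protein": 20},
    active={"rna": np.array([1, 1, 0]), "protein": np.array([1, 0, 1])},
)
for view in X:
    residual = X[view] - Z @ W[view].T
    print(view, X[view].shape, "residual sd:", round(float(residual.std()), 3))
```

Here factor 0 is shared by both views, while factors 1 and 2 are each specific to one view, which is the pattern the sparsity priors are intended to recover from real data.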

The implementation of MOFA+ is available as open-source software in both R (MOFA2) and Python (mofapy2) [32]. The framework includes comprehensive documentation, tutorials, and an interactive web server for exploratory analysis. For large-scale datasets, MOFA+ supports GPU-accelerated training through its stochastic variational inference implementation, achieving up to a 20-fold increase in speed compared to conventional variational inference [30].

Experimental Protocol and Application

A representative application of MOFA+ can be found in a study of chronic kidney disease (CKD) progression, where researchers applied MOFA+ to integrate transcriptomic, proteomic, and metabolomic data [31]. The experimental protocol followed these key steps:

Step 1: Data Preprocessing

- Collected multi-omics data from 37 participants with CKD, including tubulointerstitial transcriptomics (16,840 features), urine proteomics (1,301 features), plasma proteomics (1,301 features), and metabolomics (164 features)

- Reduced the dimensionality of the transcriptomic data by retaining the top 20% most variable genes, yielding 3,368 gene expression features (20% of 16,840)

- Combined all input features into a total of 6,134 features for integration [31]
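The feature counts in Step 1 can be checked with a short variance filter. The sketch below uses random numbers in place of the study's data and a hypothetical helper `top_variable_features`. It shows only how keeping the top 20% most variable columns of a 37 × 16,840 matrix yields 3,368 features, and how the four blocks add up to 6,134.

```python
import numpy as np

def top_variable_features(X, fraction=0.2):
    """Keep the given fraction of columns with the highest variance."""
    n_keep = int(round(fraction * X.shape[1]))
    variances = X.var(axis=0)
    keep = np.sort(np.argsort(variances)[::-1][:n_keep])  # preserve column order
    return X[:, keep], keep

rng = np.random.default_rng(0)
# Placeholder matrix with the dimensions reported for the transcriptomic block.
expression = rng.normal(size=(37, 16840)) * rng.uniform(0.5, 2.0, size=16840)

filtered, kept_columns = top_variable_features(expression, fraction=0.2)

block_sizes = {
    "transcriptomics": filtered.shape[1],
    "urine proteomics": 1301,
    "plasma proteomics": 1301,
    "metabolomics": 164,
}
print(block_sizes, "total:", sum(block_sizes.values()))
```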

Step 2: Model Training

- Selected 7 independent factors based on MOFA guidelines for factor selection

- Trained the model using standard variational inference (deterministic approach)

- Configured the model to handle different data distributions appropriate for each omics type [31]

Step 3: Result Interpretation

- Evaluated the proportion of variance explained by each factor across different omics types

- Identified Factors 2 and 3 as significantly associated with CKD progression using Kaplan-Meier survival analysis

- Examined feature weights to identify biological drivers of each factor [31]

The analysis revealed that MOFA+ Factors 2 and 3 were significantly associated with long-term kidney outcomes, with lower factor levels correlating with disease progression. Factor 2 was primarily explained by variance in urine proteomic profiles, while Factor 3 captured variance across multiple omics types. Key urinary proteins including F9, F10, APOL1, and AGT were identified as important contributors to Factor 2 [31].
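The association between a factor and outcome can be sketched with a hand-written Kaplan-Meier estimator. The example below is illustrative only and rests on assumptions not taken from the study: the factor values and follow-up times are simulated, samples are split at the median factor value, and no significance test is performed. The function names are hypothetical.

```python
import numpy as np

def kaplan_meier(time, event):
    """Kaplan-Meier survival estimate.

    time:  follow-up time per sample
    event: 1 if the outcome was observed, 0 if censored
    Returns the distinct event times and the survival probability after each.
    """
    time = np.asarray(time, dtype=float)
    event = np.asarray(event, dtype=bool)
    event_times = np.unique(time[event])
    survival, s = [], 1.0
    for t in event_times:
        at_risk = np.sum(time >= t)              # still under observation at t
        n_events = np.sum((time == t) & event)
        s *= 1.0 - n_events / at_risk
        survival.append(s)
    return event_times, np.array(survival)

def split_by_median(factor_values):
    """Boolean mask: True for samples above the median factor value."""
    factor_values = np.asarray(factor_values)
    return factor_values > np.median(factor_values)

# Simulated cohort in which low factor values shorten time to progression.
rng = np.random.default_rng(0)
factor = rng.normal(size=37)
time = rng.exponential(scale=np.exp(1.0 + 0.8 * factor))
event = rng.random(37) < 0.7                     # roughly 30% censored

high = split_by_median(factor)
for label, mask in (("high factor", high), ("low factor", ~high)):
    t, s = kaplan_meier(time[mask], event[mask])
    print(label, "final survival estimate:", round(float(s[-1]), 3) if len(s) else "n/a")
```

A formal comparison of the two curves would typically use a log-rank test or a Cox model with the factor as a continuous covariate.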

Figure 1: MOFA+ Experimental Workflow for CKD Study

DIABLO in Detail

Core Algorithm and Implementation

DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) is a supervised multivariate method designed to identify multi-omics biomarker panels that discriminate between predefined sample classes. The method builds on the PLS framework extended to multiple blocks of omics data, seeking components that maximize covariance between omics datasets while achieving optimal separation between classes.