AI and Deep Learning in Multi-Omics Analysis: Transforming Biomedical Research and Precision Oncology

Abstract

This article provides a comprehensive overview of the transformative role of Artificial Intelligence (AI) and deep learning in multi-omics data analysis. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of integrating diverse omics layers—such as genomics, transcriptomics, proteomics, and metabolomics—to gain a holistic understanding of complex biological systems and disease mechanisms. The scope extends from core concepts and methodologies, including generative and non-generative models, to their practical applications in precision oncology, drug repurposing, and clinical trial optimization. It also addresses critical challenges such as data heterogeneity, model interpretability, and analytical validation, offering insights into troubleshooting and optimizing AI workflows. Finally, the article presents a comparative evaluation of statistical versus deep learning approaches, empowering professionals to select the most effective strategies for their research and accelerate the translation of multi-omics insights into clinical practice.

The New Frontier: How AI is Decoding Multi-Omics Complexity for Systems Biology

Traditional biological research has often relied on single-omics approaches, analyzing one molecular layer in isolation, such as genomics or transcriptomics. While valuable, these approaches create significant blind spots by failing to capture the complex interactions and regulatory networks that span multiple biological layers. The inherent complexity of biological systems means that changes at the DNA level do not necessarily correlate directly with protein abundance or metabolic activity, leading to incomplete mechanistic understanding [1]. This limitation is particularly problematic in complex diseases like cancer and cardiovascular diseases, where molecular heterogeneity across patients and even within individual tumors presents major challenges for developing effective therapeutics [2] [3].

Multi-omics integration represents a paradigm shift toward comprehensive biological analysis that simultaneously studies multiple 'omics' datasets, including the genome, proteome, transcriptome, epigenome, metabolome, and microbiome [1]. This approach enables researchers to explore the complex interactions and networks underlying biological processes and diseases. The advent of high-throughput technologies has significantly broadened our ability to analyze biological systems at multiple levels of complexity, opening new opportunities for discovery [1]. In oncology, for instance, single-cell multi-omics technologies have substantially enhanced the ability to dissect tumor heterogeneity at single-cell resolution across multiple molecular layers, illuminating tumor biology, immune escape, treatment resistance, and patient-specific immune responses [2].

Artificial intelligence (AI) and deep learning are key enablers that make multi-omics integration actionable at a practical scale [4]. These computational approaches provide a framework for processing large volumes of complex, high-dimensional multi-omics data and for identifying nonlinear patterns that traditional statistical methods can miss [1] [3]. When deep learning models generalize well, they can make accurate predictions on unseen data, which makes them particularly valuable for clinical translation, where patient-specific insights are essential for precision medicine [1].

Deep Learning Architectures for Multi-Omics Integration

Categorization of Deep Learning Approaches

Deep learning-based multi-omics integration methods can be broadly categorized into non-generative and generative architectures, each with distinct strengths and applications. Non-generative methods include feedforward neural networks (FNNs), graph convolutional neural networks (GCNs), and autoencoders (AEs), while generative methods encompass variational autoencoders (VAEs), generative adversarial networks (GANs), and generative pretrained transformers (GPT) [1]. The choice of architecture depends on the specific research question, data characteristics, and desired output, with each approach offering distinct capabilities for handling the complexity of multi-omics data.

Table 1: Deep Learning Architectures for Multi-Omics Integration

| Architecture Category | Specific Models | Key Strengths | Representative Applications |
|---|---|---|---|
| Non-Generative Models | Feedforward Neural Networks (FNN) | Handles concatenated features effectively; Good for prediction tasks | Drug response prediction (MOLI); Classification (SNN) [1] |
| | Graph Convolutional Networks (GCN) | Incorporates biological network information; Captures topological relationships | Biological network analysis (MOGONET); Classification (MoGCN) [1] |
| | Autoencoders (AE) | Learns compressed representations; Effective for dimensionality reduction | Feature learning (Chaudhary et al.); Data integration [1] |
| Generative Models | Variational Autoencoders (VAE) | Generates latent representations; Handles uncertainty | Imputation of missing modalities; Data generation [1] |
| | Generative Adversarial Networks (GAN) | Generates synthetic data; Enhances training data | Data augmentation; Handling missing data [1] |
| | Generative Pretrained Transformers (GPT) | Models long-range dependencies; Transfer learning capability | Sequence analysis; Predictive modeling [1] |

Integration Strategies and Methodologies

The strategy for integrating multiple omics modalities significantly impacts model performance and interpretability. Three primary integration approaches have emerged, each with distinct methodological considerations and applications:

Early Integration: This approach concatenates the features from each modality and processes them as a single input to the model. While methodologically straightforward, early integration can be challenging with heterogeneous data types and missing modalities [1]. The concatenated feature space can become very high-dimensional, requiring robust regularization techniques to prevent overfitting.

Intermediate Integration: Methods utilizing intermediate integration treat modalities as separate entities while learning inter-modality relationships and generating an integrated model or shared latent space [1]. Autoencoder-based architectures often employ this strategy, learning modality-specific encoders that project different data types into a common latent space where integration occurs. This approach preserves modality-specific characteristics while capturing cross-modal relationships.

Late Integration: This strategy involves training separate models for each modality and then combining the predictions to generate a final aggregated result [1]. Late integration is particularly valuable when dealing with unpaired datasets or when modality-specific models benefit from specialized architectures. Ensemble methods and attention mechanisms can effectively combine these disparate predictions.
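The three strategies can be contrasted in a few lines of NumPy. This is a minimal sketch under stated assumptions: random projection matrices stand in for trained modality encoders, and plain (weighted) averaging stands in for the ensemble or attention step of late integration. Neither choice is taken from a specific published model, and the function names are illustrative.

```python
import numpy as np


def early_integration(modalities):
    """Early integration: concatenate each sample's features from every modality."""
    # Each matrix is (n_samples, n_features_m) and row i must be the same sample in all of them.
    n_samples = modalities[0].shape[0]
    if any(m.shape[0] != n_samples for m in modalities):
        raise ValueError("all modalities must contain the same samples")
    return np.concatenate(modalities, axis=1)


def intermediate_integration(modalities, projections):
    """Intermediate integration: map each modality into a shared k-dim space, then average."""
    # projections[m] is an (n_features_m, k) matrix standing in for a learned modality encoder.
    latents = [x @ w for x, w in zip(modalities, projections)]
    return np.mean(latents, axis=0)


def late_integration(predictions, weights=None):
    """Late integration: combine per-modality class probabilities by weighted averaging."""
    stacked = np.stack(predictions)  # (n_modalities, n_samples, n_classes)
    if weights is None:
        weights = np.ones(len(predictions))
    weights = np.asarray(weights, dtype=float) / np.sum(weights)
    return np.tensordot(weights, stacked, axes=1)  # (n_samples, n_classes)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    rna, meth = rng.normal(size=(5, 100)), rng.normal(size=(5, 40))
    print(early_integration([rna, meth]).shape)  # (5, 140)
    w_rna, w_meth = rng.normal(size=(100, 8)), rng.normal(size=(40, 8))
    print(intermediate_integration([rna, meth], [w_rna, w_meth]).shape)  # (5, 8)
    p_rna = np.full((5, 2), 0.5)
    p_meth = np.tile([0.9, 0.1], (5, 1))
    print(late_integration([p_rna, p_meth])[0])  # [0.7 0.3]
```

In a real model the intermediate step would use learned, usually nonlinear, encoders, and the late-integration weights could themselves be learned, for example by a small attention module.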

Advanced Single-Cell Multi-Omics Technologies and Protocols

Single-Cell Isolation and Sequencing Methodologies

The progression from bulk to single-cell multi-omics represents one of the most significant advancements in biological research, enabling the resolution of cellular heterogeneity that was previously obscured in population-averaged measurements. Several advanced single-cell isolation strategies have been developed to meet the technical demands of high-resolution analysis [2]:

Fluorescence-Activated Cell Sorting (FACS): This high-throughput technique utilizes fluorescent dyes or fluorescent proteins conjugated to antibodies to specifically label target cells. The cell suspension is hydrodynamically focused into a single-cell stream that passes through a laser interrogation zone, with charged droplets containing target cells deflected into collection devices by an external electric field [2]. While FACS enables efficient and precise isolation of desired subpopulations from heterogeneous mixtures, it requires a large number of starting cells and relies on monoclonal antibodies targeting specific surface markers.

Microfluidic Technologies: These platforms precisely control fluid dynamics within microscale channels, leveraging principles such as laminar flow, capillary effects, and microvolume manipulation to achieve highly efficient cell separation [2]. Microfluidic technologies offer significant advantages in terms of high throughput, low technical noise, and minimal cellular stress, though they often involve higher operational costs. Commercially available platforms like 10x Genomics Chromium X and BD Rhapsody HT-Xpress enable profiling of over one million cells per run with improved sensitivity and multimodal compatibility [2].

Laser Capture Microdissection (LCM): This technique isolates target cells manually under microscopic guidance using laser beams to excise specific cells or regions directly from fixed tissue sections [2]. By precisely tuning laser parameters and integrating microscopic control, LCM allows for targeted acquisition of cells from complex tissues while preserving spatial context, making it particularly suitable for studies of tumor heterogeneity that require spatial omics data.

Table 2: Single-Cell Multi-Omics Sequencing Technologies

| Omics Layer | Primary Technology | Key Measurements | Technical Considerations |
|---|---|---|---|
| Transcriptomics | Single-cell RNA sequencing (scRNA-seq) | Gene expression programs; Cell states | Utilizes UMIs and cell barcodes to minimize technical noise [2] |
| Genomics | Single-cell DNA sequencing (scDNA-seq) | Copy number variations; Single nucleotide variants | Multiple displacement amplification preferred over PCR for better coverage [2] |
| Epigenomics | scATAC-seq | Chromatin accessibility; Regulatory elements | Tn5 transposase-mediated insertion labels accessible regions [2] |
| Epigenomics | scCUT&Tag | Histone modifications; Protein-DNA interactions | Antibody-guided capture of specific epigenetic marks [2] |
| DNA Methylation | Bisulfite sequencing | Methylation patterns at CpG islands | Harsh chemical treatment risks DNA degradation; enzyme-based alternatives emerging [2] |
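The UMI and cell-barcode entry in the table can be made concrete with a toy counting routine. The sketch below assumes reads have already been aligned and reduced to (cell barcode, UMI, gene) tuples, and the barcodes and UMIs are made up. It deliberately omits the barcode and UMI error correction that production pipelines such as Cell Ranger also perform.

```python
from collections import Counter


def count_umis(reads):
    """Collapse reads into molecule counts per (cell barcode, gene)."""
    # Each read is a (cell_barcode, umi, gene) tuple extracted after alignment.
    # Reads sharing all three values are PCR copies of one captured molecule, so keep one.
    molecules = set(reads)
    return Counter((barcode, gene) for barcode, _umi, gene in molecules)


if __name__ == "__main__":
    reads = [
        ("AAACCTGA", "TTGCA", "CD3E"),
        ("AAACCTGA", "TTGCA", "CD3E"),  # PCR duplicate of the read above
        ("AAACCTGA", "GGATC", "CD3E"),  # same gene, different molecule
        ("TTTGGTCA", "TTGCA", "MS4A1"),
    ]
    print(count_umis(reads)[("AAACCTGA", "CD3E")])  # 2
```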

Longitudinal Multi-Omics Analysis Framework

Longitudinal study designs that track molecular changes over time provide unique insights into dynamic biological processes, disease progression, and therapeutic responses. PALMO (Platform for Analyzing Longitudinal Multi-Omics data) is a comprehensive analytical framework specifically designed to address the complexities of longitudinal bulk and single-cell omics data [5]. It incorporates five specialized analytical modules:

Variance Decomposition Analysis (VDA): Evaluates contributions of factors of interest (e.g., donor, timepoint, cell type) to the total variance of individual features, helping to distinguish biological signals from technical variations [5].

Coefficient of Variation Profiling (CVP): Assesses intra-participant variation over time in bulk data and identifies consistently stable or variable features among participants, revealing molecular elements with dynamic or stable expression patterns [5].

Stability Pattern Evaluation Across Cell Types (SPECT): Assesses longitudinal stability patterns of features in single-cell omics data and identifies stable or variable features that are unique to individual cell types but consistent among participants [5].

Outlier Detection Analysis (ODA): Examines the possibility of abnormal events occurring during a longitudinal study, such as adverse events in clinical trials or technical artifacts [5].

Time Course Analysis (TCA): Evaluates transcriptomic changes over time based on longitudinal scRNA-seq data of the same participant and identifies genes that exhibit significant temporal changes [5].
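Two of the ideas behind these modules, variance decomposition and coefficient-of-variation profiling, can be illustrated for a single feature. This is a simplified sketch, not PALMO's implementation: PALMO's VDA attributes variance to several factors of interest at once (donor, timepoint, cell type), whereas the hypothetical `donor_variance_fraction` below uses a one-way, donor-only sum-of-squares split. The expression values are invented for illustration.

```python
import numpy as np


def donor_variance_fraction(values, donors):
    """Share of a feature's total variance attributable to donor (one-way eta squared)."""
    values = np.asarray(values, dtype=float)
    donors = np.asarray(donors)
    grand_mean = values.mean()
    total_ss = np.sum((values - grand_mean) ** 2)
    between_ss = sum(
        np.sum(donors == d) * (values[donors == d].mean() - grand_mean) ** 2
        for d in np.unique(donors)
    )
    return float(between_ss / total_ss) if total_ss > 0 else 0.0


def mean_intra_donor_cv(values, donors):
    """Average over donors of the coefficient of variation (SD / mean) across timepoints."""
    # Assumes positive values (e.g. expression) and at least two timepoints per donor.
    values = np.asarray(values, dtype=float)
    donors = np.asarray(donors)
    cvs = [
        values[donors == d].std(ddof=1) / values[donors == d].mean()
        for d in np.unique(donors)
    ]
    return float(np.mean(cvs))


if __name__ == "__main__":
    # One feature measured at two timepoints in each of two donors (hypothetical numbers).
    expr = [9.0, 11.0, 19.0, 21.0]
    donor = ["d1", "d1", "d2", "d2"]
    print(round(donor_variance_fraction(expr, donor), 3))  # 0.962
    print(round(mean_intra_donor_cv(expr, donor), 3))  # 0.106
```

A feature with a high donor fraction and a low intra-donor CV behaves like a stable, donor-specific marker; a high intra-donor CV flags a temporally variable feature.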

Experimental Protocols for Multi-Omics Integration

Protocol 1: Multi-Omics Data Processing and Quality Control

Purpose: To establish a standardized workflow for processing raw multi-omics data from diverse modalities into analysis-ready formats while maintaining data quality and integrity.

Materials and Reagents:

- 10x Genomics Chromium X platform or equivalent single-cell sequencing system

- Illumina NovaSeq or comparable high-throughput sequencer

- FASTQ files from sequencing facilities

- High-performance computing infrastructure with ≥64GB RAM

- Bioinformatics pipelines (Cell Ranger, ArchR, STAR, featureCounts)

Procedure:

Data Preprocessing:

- For scRNA-seq data: Process raw FASTQ files using Cell Ranger pipeline to generate gene expression matrices. Perform quality control to remove low-quality cells (high mitochondrial percentage, low unique gene counts).

- For scATAC-seq data: Process raw FASTQ files with Cell Ranger ATAC to generate fragment files, then use the ArchR package to build peak matrices. Remove doublets and low-quality cells based on transcription start site (TSS) enrichment and the number of unique nuclear fragments.

- For proteomics data: Process mass spectrometry raw files using MaxQuant or equivalent, followed by normalization and imputation of missing values.
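The cell-level quality control in the preprocessing steps above reduces to a few per-cell metrics and thresholds. A minimal NumPy sketch, assuming a raw cells × genes UMI count matrix and human gene symbols; the 20% mitochondrial and 200-gene cutoffs are placeholder assumptions, since suitable thresholds depend on tissue, chemistry, and sequencing depth. Toolkits such as Seurat and Scanpy compute the same metrics with their own functions.

```python
import numpy as np


def qc_filter(counts, gene_names, max_pct_mito=20.0, min_genes=200):
    """Compute per-cell QC metrics and keep cells that pass both thresholds."""
    counts = np.asarray(counts)  # (n_cells, n_genes) raw UMI counts
    # Human mitochondrial genes are conventionally prefixed "MT-" (mouse uses "mt-").
    is_mito = np.array([g.upper().startswith("MT-") for g in gene_names])
    total_counts = counts.sum(axis=1)
    n_genes = (counts > 0).sum(axis=1)
    pct_mito = 100.0 * counts[:, is_mito].sum(axis=1) / np.maximum(total_counts, 1)
    keep = (pct_mito <= max_pct_mito) & (n_genes >= min_genes)
    metrics = {"total_counts": total_counts, "n_genes": n_genes, "pct_mito": pct_mito}
    return counts[keep], keep, metrics


if __name__ == "__main__":
    genes = ["CD3E", "GAPDH", "MT-CO1"]
    counts = np.array([[5, 5, 0], [1, 0, 9], [3, 3, 3]])
    kept, keep, qc = qc_filter(counts, genes, max_pct_mito=20.0, min_genes=2)
    print(keep)  # [ True False False]
```

The returned `metrics` dictionary holds the values that the quality-assessment step visualizes as violin and scatter plots.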

Data Normalization:

- Apply SCTransform for scRNA-seq data normalization and variance stabilization.

- Utilize term frequency-inverse document frequency (TF-IDF) normalization for scATAC-seq data.

- Perform quantile normalization for proteomics and metabolomics data.
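The normalization steps for chromatin accessibility and for proteomics or metabolomics can be sketched directly. The TF-IDF below is one common variant (term frequency times inverse document frequency, scaled and log-transformed); Signac and ArchR each implement their own variants, so treat the exact scaling here as an assumption. The quantile normalization treats rows as samples and breaks ties by position, which is a simplification.

```python
import numpy as np


def tfidf(counts, scale=1e4):
    """One common TF-IDF variant for a (cells x peaks) scATAC-seq count matrix."""
    counts = np.asarray(counts, dtype=float)
    # Term frequency: each cell's counts divided by that cell's total counts.
    tf = counts / np.maximum(counts.sum(axis=1, keepdims=True), 1)
    # Inverse document frequency: peaks open in fewer cells receive larger weights.
    idf = counts.shape[0] / np.maximum((counts > 0).sum(axis=0), 1)
    return np.log1p(tf * idf * scale)


def quantile_normalize(x):
    """Force every sample (row) of a (samples x features) matrix onto the same distribution."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, axis=1)  # per-sample ranking of features (ties broken by position)
    reference = np.sort(x, axis=1).mean(axis=0)  # mean value at each rank across samples
    out = np.empty_like(x)
    rows = np.arange(x.shape[0])[:, None]
    out[rows, order] = reference  # the k-th smallest feature in each sample gets reference[k]
    return out


if __name__ == "__main__":
    print(quantile_normalize([[5, 2, 3], [4, 1, 6]]))
    # [[5.5 1.5 3.5]
    #  [3.5 1.5 5.5]]
```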

Batch Effect Correction:

- Identify potential batch effects using principal component analysis and visualization.

- Apply Harmony, Seurat's integration workflow, or ComBat to remove technical variation while preserving biological signals.

Quality Assessment:

- Generate quality control metrics including number of features per cell, counts per cell, mitochondrial percentage, and complexity measures.

- Visualize data quality using violin plots, scatter plots, and dimensionality reduction techniques.

Troubleshooting Tips:

- High mitochondrial percentage may indicate stressed or dying cells; consider more stringent filtering.

- Low unique molecular identifier (UMI) counts may suggest poor cell viability or library preparation issues.

- Batch effects dominating biological signals may require optimization of integration parameters.

Protocol 2: Deep Learning Model Training for Multi-Omics Integration

Purpose: To implement and train deep learning models for integrating multiple omics modalities and extracting biologically meaningful representations.

Materials and Reagents:

- Processed multi-omics datasets (from Protocol 1)

- Python 3.8+ with TensorFlow 2.8+ or PyTorch 1.12+

- High-performance computing with GPU acceleration (NVIDIA A100 or equivalent recommended)

- Integration models and frameworks (scVI from scvi-tools, MOFA+, custom architectures)

Procedure:

Data Preparation:

- Partition data into training (70%), validation (15%), and test (15%) sets, maintaining patient-wise splits to prevent data leakage.

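- Standardize features per modality using z-score normalization or min-max scaling as appropriate, estimating the scaling parameters on the training set only so that no information leaks from the validation or test sets.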

- Handle missing data using modality-specific imputation or implement models that can handle missingness.
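The patient-wise split and leakage-safe scaling in the preparation steps above could look like the following sketch. The function names are hypothetical, and the 70/15/15 fractions are applied to patients rather than samples, so sample proportions are only approximate when patients contribute different numbers of samples.

```python
import numpy as np


def patient_wise_split(patient_ids, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return sample indices for train/val/test so that each patient lands in exactly one split."""
    patient_ids = np.asarray(patient_ids)
    patients = np.unique(patient_ids)
    np.random.default_rng(seed).shuffle(patients)
    n_train = int(round(fractions[0] * len(patients)))
    n_val = int(round(fractions[1] * len(patients)))
    groups = {
        "train": patients[:n_train],
        "val": patients[n_train:n_train + n_val],
        "test": patients[n_train + n_val:],  # remaining patients
    }
    return {name: np.flatnonzero(np.isin(patient_ids, members)) for name, members in groups.items()}


def standardize(train, *others):
    """z-score every split with the mean and SD estimated on the training split only."""
    mean = train.mean(axis=0)
    std = train.std(axis=0)
    std[std == 0] = 1.0  # leave constant features centred rather than dividing by zero
    return [(x - mean) / std for x in (train, *others)]


if __name__ == "__main__":
    ids = np.repeat([f"patient{i}" for i in range(20)], 2)  # two samples per patient
    splits = patient_wise_split(ids)
    print({name: len(idx) for name, idx in splits.items()})  # {'train': 28, 'val': 6, 'test': 6}
```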

Model Architecture Design:

- Select appropriate architecture based on integration strategy (early, intermediate, or late integration).

- For intermediate integration using autoencoders: Design modality-specific encoders with 2-3 hidden layers, decreasing dimensionality progressively.

- Implement a shared latent space with dimensionality determined by empirical testing (typically 10-50 dimensions).

- Design decoders that reconstruct original inputs from the latent representation.
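The architecture steps above can be sketched as a forward pass in plain NumPy. Everything here is an assumption for illustration: the layer sizes, the omission of bias terms, and the choice to fuse modalities by averaging their embeddings (product-of-experts, or concatenation followed by a projection, are common alternatives). Training would normally be done in an automatic-differentiation framework such as PyTorch or TensorFlow rather than by hand.

```python
import numpy as np


def he_init(rng, n_in, n_out):
    """He (Kaiming) normal initialisation, suited to ReLU layers."""
    return rng.normal(0.0, np.sqrt(2.0 / n_in), size=(n_in, n_out))


def mlp(x, weights):
    """Apply linear layers with ReLU between them and a linear final layer (biases omitted)."""
    for i, w in enumerate(weights):
        x = x @ w
        if i < len(weights) - 1:
            x = np.maximum(x, 0.0)
    return x


class MultiModalAE:
    """Modality-specific encoders -> shared latent space -> modality-specific decoders."""

    def __init__(self, input_dims, hidden_dims=(128, 64), latent_dim=16, seed=0):
        rng = np.random.default_rng(seed)
        self.encoders, self.decoders = [], []
        for d in input_dims:
            sizes = [d, *hidden_dims, latent_dim]  # progressively narrower layers
            self.encoders.append([he_init(rng, a, b) for a, b in zip(sizes[:-1], sizes[1:])])
            back = sizes[::-1]  # mirror-image decoder
            self.decoders.append([he_init(rng, a, b) for a, b in zip(back[:-1], back[1:])])

    def encode(self, xs):
        # Shared latent space: average the per-modality embeddings (one simple fusion choice).
        return np.mean([mlp(x, enc) for x, enc in zip(xs, self.encoders)], axis=0)

    def reconstruct(self, xs):
        z = self.encode(xs)
        return [mlp(z, dec) for dec in self.decoders]

    def loss(self, xs):
        """Mean squared reconstruction error averaged over modalities."""
        return float(np.mean([np.mean((x - xh) ** 2) for x, xh in zip(xs, self.reconstruct(xs))]))


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    xs = [rng.normal(size=(8, 500)), rng.normal(size=(8, 200))]  # e.g. RNA and ATAC features
    model = MultiModalAE([500, 200])
    print(model.encode(xs).shape)  # (8, 16)
    print([x.shape for x in model.reconstruct(xs)])  # [(8, 500), (8, 200)]
```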

Model Training:

- Initialize model weights using He or Xavier initialization.

- Implement early stopping with patience of 20-50 epochs based on validation loss.

- Use the Adam optimizer with a learning rate between 0.0001 and 0.001, adjusted based on validation performance.

- Apply gradient clipping to prevent exploding gradients under unstable training conditions.
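Early stopping and gradient clipping are simple enough to show framework-free. This minimal sketch uses hypothetical class and function names; in practice, built-ins such as Keras's `EarlyStopping` callback and PyTorch's `torch.nn.utils.clip_grad_norm_` provide equivalent behavior. Clipping here rescales all gradients jointly by their global L2 norm, which is one of several clipping schemes.

```python
import numpy as np


class EarlyStopping:
    """Stop training once validation loss has not improved for `patience` consecutive epochs."""

    def __init__(self, patience=20, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = np.inf
        self.best_epoch = -1
        self.wait = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.wait = val_loss, epoch, 0
        else:
            self.wait += 1
        return self.wait >= self.patience


def clip_by_global_norm(grads, max_norm=1.0):
    """Rescale a list of gradient arrays so their joint L2 norm is at most max_norm."""
    total = np.sqrt(sum(np.sum(g ** 2) for g in grads))
    scale = min(1.0, max_norm / (total + 1e-12))
    return [g * scale for g in grads], total


if __name__ == "__main__":
    stopper = EarlyStopping(patience=3)
    for epoch, val_loss in enumerate([1.00, 0.90, 0.95, 0.96, 0.97]):
        if stopper.step(epoch, val_loss):
            print(f"stop at epoch {epoch}; best epoch {stopper.best_epoch}")  # stop at epoch 4; best epoch 1
            break
```

The weights saved at `best_epoch` are the ones to restore for evaluation, not those from the final epoch.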

Model Validation:

- Evaluate reconstruction accuracy using mean squared error for continuous data and binary cross-entropy for binary features.

- Assess integration quality using metrics such as silhouette score, clustering accuracy, or biological concordance.

- Perform ablation studies to determine contribution of individual modalities.
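The silhouette score used to assess integration quality can be computed on the integrated latent embedding, with known biological labels (for example, cell type) as clusters. This minimal sketch suits small data only: it builds the full pairwise distance matrix, so for large datasets a library routine such as `sklearn.metrics.silhouette_score` is the practical choice.

```python
import numpy as np


def silhouette(x, labels):
    """Mean silhouette coefficient with Euclidean distance; needs at least two clusters."""
    x = np.asarray(x, dtype=float)
    labels = np.asarray(labels)
    d = np.sqrt(((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=-1))  # pairwise distances
    scores = []
    for i in range(len(x)):
        same = labels == labels[i]
        same[i] = False
        if not same.any():
            scores.append(0.0)  # convention: a point alone in its cluster scores 0
            continue
        a = d[i, same].mean()  # mean distance to the rest of its own cluster
        # Mean distance to the nearest other cluster.
        b = min(d[i, labels == c].mean() for c in np.unique(labels) if c != labels[i])
        scores.append((b - a) / max(a, b))
    return float(np.mean(scores))


if __name__ == "__main__":
    z = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]])
    print(round(silhouette(z, ["T", "T", "B", "B"]), 3))  # 0.9
```

Values near 1 indicate that cells of the same type sit together in the latent space; values near 0 or below suggest that integration has blurred biological structure.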

Troubleshooting Tips:

- Training instability may require learning rate reduction, gradient clipping, or different weight initialization.

- Overfitting may be addressed through increased regularization, dropout, or early stopping.

- Poor integration quality may benefit from architecture modifications or hyperparameter optimization.

Table 3: Essential Research Reagents and Computational Resources for Multi-Omics Studies

| Category | Item | Specification/Function | Application Notes |
|---|---|---|---|
| Wet Lab Reagents | Single-cell isolation platform | 10x Genomics Chromium X, BD Rhapsody | Enables high-throughput single-cell partitioning and barcoding [2] |
| | Library preparation kits | Single-cell multiome ATAC + Gene Expression | Allows simultaneous profiling of gene expression and chromatin accessibility from the same cell [2] |
| | Antibody panels | TotalSeq antibodies for CITE-seq | Enables protein surface marker quantification alongside transcriptome [2] |
| | Nucleic acid purification kits | SPRIselect beads, QIAGEN kits | High-quality nucleic acid extraction for downstream sequencing [2] |
| Computational Tools | Single-cell analysis suites | Seurat, Scanpy, SingleCellExperiment | Comprehensive frameworks for single-cell data analysis and integration [5] |
| | Multi-omics integration platforms | PALMO, MOFA+ (Multi-Omics Factor Analysis) | Specialized tools for integrating multiple data modalities [5] |
| | Deep learning frameworks | TensorFlow, PyTorch, JAX | Flexible environments for building custom multi-omics models [1] |
| | Visualization tools | ggplot2, Plotly, SCope | Create publication-quality visualizations and interactive explorers [5] |
| Data Resources | Reference datasets | Human Cell Atlas, TCGA, GTEx | Provide essential context and benchmarking capabilities [1] |
| | Pathway databases | KEGG, Reactome, MSigDB | Enable functional interpretation of multi-omics findings [1] |
| | Protein-protein interaction networks | STRING, BioGRID | Facilitate network-based analysis of multi-omics data [1] |

Applications in Drug Discovery and Therapeutic Development

The integration of AI with multi-omics approaches is particularly transformative in pharmaceutical research and development, addressing key challenges in target identification, mechanism elucidation, and patient stratification. In complex diseases such as opioid use disorder (OUD), multi-omics allows researchers to understand the multifactorial nature of the disease, involving complex interactions between genetics, brain circuitry, immune response, and environmental stressors [4]. By combining this data with AI-driven simulations, researchers can identify new molecular targets, stratify patient populations, and discover non-obvious mechanisms of action that are crucial for developing precision therapies in fields where one-size-fits-all approaches have largely failed [4].

AI-powered multi-omics platforms enable a shift from empirical to predictive science in drug development. For instance, the Multiomics Advanced Technology (MAT) platform developed by GATC Health simulates human biology based on multi-omic inputs, allowing researchers to model drug-disease interactions, predict efficacy and toxicity, and optimize compounds in silico before a molecule ever reaches a petri dish or animal model [4]. This approach has the potential to significantly compress development timelines and improve success rates by generating biologically grounded hypotheses and de-risking early-stage development programs.

In cardiovascular disease research, AI methods integrated with multi-omics have shown promising outcomes across the entire continuum of disease prevention, diagnosis, treatment, and prognosis [3]. These approaches facilitate the exploration of complex regulatory mechanisms and enhance the prediction and interpretation of disease progression, ultimately supporting the development of personalized therapeutic strategies. Applying machine learning to large, high-dimensional multi-omics datasets significantly improves the efficiency of mechanistic studies and clinical practice in cardiovascular disease [3].

The transition from single-omic blind spots to a holistic multi-omic view represents a fundamental evolution in biological research and therapeutic development. By integrating complementary molecular perspectives through advanced AI and deep learning architectures, researchers can now construct comprehensive models of biological systems that more accurately reflect their inherent complexity. The methodologies and protocols outlined in this application note provide a roadmap for implementing robust multi-omics integration strategies that can uncover novel biological insights and accelerate therapeutic innovation.

As the field continues to evolve, we anticipate several key advancements will further enhance multi-omics integration capabilities. Methods that can handle missing data natively will become increasingly important, as missing modalities represent a common challenge in working with complex and heterogeneous clinical samples [1]. Additionally, the integration of emerging data types, particularly imaging modalities such as radiomics and pathomics, with molecular omics data promises to provide even more comprehensive views of biological systems [1]. Finally, the development of more interpretable AI models will be crucial for translating computational findings into biologically and clinically actionable insights, bridging the gap between pattern recognition and mechanistic understanding.

The convergence of sophisticated single-cell technologies, longitudinal study designs, and AI-driven analytical frameworks is poised to transform our approach to biological research and precision medicine. By embracing these integrated approaches, researchers and drug development professionals can look beyond the limitations of single-omics approaches and begin to truly decode the complex, multi-layered nature of health and disease.

The integration of multi-omics data represents a fundamental challenge and opportunity in modern biological research. Deep learning (DL) has emerged as a powerful set of techniques for addressing this challenge, enabling researchers to uncover complex, non-linear relationships across genomic, transcriptomic, epigenomic, proteomic, and metabolomic data layers [6] [7]. These approaches are particularly valuable in cancer research, where molecular heterogeneity necessitates sophisticated analytical methods for subtype classification, biomarker discovery, and therapeutic development [6] [8]. Unlike traditional machine learning methods that often rely on manually engineered features, deep learning automatically learns relevant representations from raw data, reducing human bias and capturing the intricate dynamics of biological systems [9]. This capability is critical for advancing personalized medicine, as it allows for more accurate prediction of disease progression, drug response, and patient outcomes based on comprehensive molecular profiling.

Core Deep Learning Paradigms for Biological Data Integration

Pathway-Guided Interpretable Deep Learning Architectures (PGI-DLA)

Pathway-Guided Interpretable Deep Learning Architectures (PGI-DLA) integrate established biological knowledge directly into model design. Unlike conventional "black box" deep learning models, PGI-DLA structures neural networks based on known biological pathway relationships from databases such as the Kyoto Encyclopedia of Genes and Genomes (KEGG), Gene Ontology (GO), Reactome, and MSigDB [9]. This integration aligns the model's decision-making process with biological mechanisms, significantly enhancing interpretability. The architecture differs fundamentally from approaches that use pathways merely for input feature preprocessing: it embeds domain knowledge into the model's structure and guides learning by mimicking the actual flow of biological information [9] [7].

Several specialized architectural implementations have emerged within the PGI-DLA paradigm. Visible Neural Networks (VNNs), exemplified by models like DCell and DrugCell, organize hidden layers according to the hierarchical structure of biological pathways, creating a direct mapping between network topology and biological relationships [9]. Sparse deep neural networks constrain connectivity using pathway knowledge, so that connections between neurons reflect documented molecular interactions, which substantially improves interpretability. Graph Neural Networks (GNNs) represent biological pathways as graphs with genes or proteins as nodes and their interactions as edges, enabling relational reasoning across the molecular landscape [9]. These architectures show how structural priors from biological knowledge can enhance both performance and interpretability in deep learning applications for multi-omics integration.
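The sparse, pathway-constrained connectivity described above can be illustrated with a gene-to-pathway layer whose weight matrix is masked by pathway membership. This is a generic sketch of the idea, not the DCell or DrugCell architecture; the gene sets are toy examples rather than curated KEGG or Reactome annotations, and the function and class names are hypothetical.

```python
import numpy as np


def pathway_mask(genes, pathways):
    """Binary (genes x pathways) matrix: 1 where a gene is annotated to a pathway."""
    index = {g: i for i, g in enumerate(genes)}
    names = sorted(pathways)
    mask = np.zeros((len(genes), len(names)))
    for j, name in enumerate(names):
        for g in pathways[name]:
            if g in index:  # pathway members not measured in the data are ignored
                mask[index[g], j] = 1.0
    return mask, names


class MaskedLinear:
    """Gene-to-pathway layer: a weight exists only for documented gene-pathway memberships."""

    def __init__(self, mask, seed=0):
        self.mask = mask
        self.weight = np.random.default_rng(seed).normal(0.0, 0.1, size=mask.shape) * mask

    def forward(self, x):
        # Re-applying the mask keeps absent connections at zero even after weight updates.
        return np.maximum(x @ (self.weight * self.mask), 0.0)


if __name__ == "__main__":
    genes = ["PIK3CA", "AKT1", "TP53", "CDK4"]
    gene_sets = {"PI3K_AKT": ["PIK3CA", "AKT1", "PTEN"], "P53": ["TP53", "CDK4", "MDM2"]}  # toy sets
    mask, names = pathway_mask(genes, gene_sets)
    layer = MaskedLinear(mask)
    h = layer.forward(np.array([[1.0, 0.0, 0.0, 0.0]]))  # only PIK3CA is non-zero
    print(dict(zip(names, h[0])))  # the P53 unit receives no input from PIK3CA, so it is 0.0
```

Because each hidden unit corresponds to a named pathway, its activation can be read directly as a pathway-level signal, which is the source of the interpretability these architectures aim for.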

Benchmarking Frameworks for Integration Methods

The rapid proliferation of deep learning methods for single-cell and multi-omics integration has created an urgent need for systematic benchmarking frameworks. Recent research has evaluated 16 different integration methods using a unified variational autoencoder framework that incorporates both batch and cell-type information [10]. These investigations have revealed significant limitations in existing evaluation metrics, particularly the single-cell integration benchmarking (scIB) metrics, which often fail to capture whether intra-cell-type biological variation is preserved during integration [10].

In response to these limitations, researchers have developed enhanced benchmarking strategies including correlation-based loss functions and refined metrics that better capture biological conservation [10]. The proposed scIB-E framework and associated metrics provide deeper insights into the integration process and offer practical guidance for method selection and development. These advancements are particularly important as single-cell technologies continue to generate increasingly complex datasets from diverse biological contexts, including lung and breast cancer atlases [10]. The benchmarking efforts highlight critical trade-offs between batch effect correction and biological signal preservation that must be carefully balanced in analytical workflows.

Table 1: Performance Comparison of Multi-Omics Integration Methods for Breast Cancer Subtype Classification

| Method | Type | F1 Score (Nonlinear) | Pathways Identified | Key Strengths |
|---|---|---|---|---|
| MOFA+ | Statistical-based | 0.75 | 121 | Effective feature selection, superior clustering |
| MoGCN | Deep learning-based | 0.69 | 100 | Captures non-linear relationships, automated feature learning |
| MOGONET | Graph-based DL | N/A | N/A | Integrates heterogeneous networks |
| DCell | Pathway-guided DL | N/A | N/A | Mechanistically interpretable predictions |

Table 2: Pathway Databases for Biologically-Informed Deep Learning Architectures

| Database | Knowledge Scope | Hierarchical Structure | Curation Focus | Common Applications |
|---|---|---|---|---|
| KEGG | Metabolic & signaling pathways | Moderate | Molecular interactions | Cancer mechanisms, metabolism |
| Gene Ontology (GO) | Biological processes, molecular functions, cellular components | High | Functional annotations | Functional enrichment, process analysis |
| Reactome | Detailed biochemical reactions | High | Pathway steps & relationships | Drug mechanisms, disease pathways |
| MSigDB | Curated gene sets | Variable | Expert-curated collections | Signature analysis, translational research |

Application Notes: Multi-Omics Integration for Breast Cancer Subtyping

Experimental Design and Data Processing

A comprehensive comparative analysis of statistical and deep learning-based multi-omics integration was conducted using 960 breast cancer patient samples from The Cancer Genome Atlas (TCGA-PanCanAtlas 2018) [8]. The study incorporated three distinct omics layers: host transcriptomics (20,531 features), epigenomics (22,601 features), and shotgun microbiomics (1,406 features). Samples represented five breast cancer subtypes: Basal (168), Luminal A (485), Luminal B (196), HER2-enriched (76), and Normal-like (35) [8].

Critical data preprocessing steps included batch effect correction using unsupervised ComBat for transcriptomics and microbiomics data, while the Harman method was applied to methylation data [8]. Following batch correction, features with zero expression in 50% of samples were discarded to reduce noise and dimensionality. To ensure a fair comparison between integration methods, the top 100 features from each omics layer were selected using approach-specific criteria: for the statistical method (MOFA+), features were selected based on absolute loadings from the latent factor explaining the highest shared variance, while for the deep learning approach (MoGCN), selection was based on importance scores derived by multiplying absolute encoder weights by the standard deviation of each input feature [8].
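The filtering and feature-ranking steps can be sketched in NumPy. Two assumptions are flagged here: the zero-expression filter is read as "zero in at least 50% of samples", and the MoGCN-style importance aggregates each feature's absolute first-layer encoder weights by summing over hidden units, since the text above does not specify the aggregation. The helper names are hypothetical.

```python
import numpy as np


def drop_sparse_features(x, max_zero_fraction=0.5):
    """Remove feature columns that are zero in at least max_zero_fraction of samples."""
    keep = (x == 0).mean(axis=0) < max_zero_fraction
    return x[:, keep], keep


def encoder_importance(first_layer_weights, x):
    """MoGCN-style score: summed |encoder weight| per input feature times that feature's SD."""
    # first_layer_weights: (n_features, n_hidden); x: (n_samples, n_features).
    # Summing over hidden units is an assumption about how per-feature weights are aggregated.
    return np.abs(first_layer_weights).sum(axis=1) * x.std(axis=0)


def top_k(scores, k=100):
    """Indices of the k highest scores, best first."""
    return np.argsort(scores)[::-1][:k]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    expr = rng.poisson(1.0, size=(50, 300)).astype(float)
    expr_kept, _ = drop_sparse_features(expr)
    w = rng.normal(size=(expr_kept.shape[1], 100))  # stand-in for a trained encoder layer
    selected = top_k(encoder_importance(w, expr_kept), k=100)
    # For MOFA+, the analogous step ranks |loadings| on the top factor, e.g. top_k(np.abs(loadings[:, 0])).
    print(selected.shape)  # (100,)
```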

Performance Evaluation and Biological Validation

The integrated features from both statistical and deep learning approaches were evaluated using multiple complementary strategies. Unsupervised embedding evaluation employed t-SNE visualization alongside the Calinski-Harabasz index (the ratio of between-cluster to within-cluster dispersion; higher is better) and the Davies-Bouldin index (the average similarity of each cluster to its most similar cluster; lower is better) [8]. For supervised evaluation, two classifiers, a Support Vector Classifier with a linear kernel and a Logistic Regression model (reported as the "nonlinear" model in [8], although logistic regression itself learns a linear decision boundary), were trained using grid search with five-fold cross-validation and evaluated using the F1 score to account for class imbalance across breast cancer subtypes [8].
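Both unsupervised indices have short closed forms. The sketch below follows the standard definitions (scikit-learn exposes the same measures as `calinski_harabasz_score` and `davies_bouldin_score` in `sklearn.metrics`); the toy embedding and subtype labels are invented.

```python
import numpy as np


def calinski_harabasz(x, labels):
    """Between-cluster over within-cluster dispersion, each scaled by its degrees of freedom."""
    x, labels = np.asarray(x, dtype=float), np.asarray(labels)
    clusters = np.unique(labels)
    n, k = len(x), len(clusters)
    overall = x.mean(axis=0)
    between = sum(np.sum(labels == c) * np.sum((x[labels == c].mean(axis=0) - overall) ** 2)
                  for c in clusters)
    within = sum(np.sum((x[labels == c] - x[labels == c].mean(axis=0)) ** 2) for c in clusters)
    return float((between / (k - 1)) / (within / (n - k)))


def davies_bouldin(x, labels):
    """Mean over clusters of the worst (scatter_i + scatter_j) / centroid-distance ratio."""
    x, labels = np.asarray(x, dtype=float), np.asarray(labels)
    clusters = np.unique(labels)
    centroids = np.array([x[labels == c].mean(axis=0) for c in clusters])
    # Scatter: mean Euclidean distance of a cluster's points to its centroid.
    scatter = np.array([np.linalg.norm(x[labels == c] - centroids[i], axis=1).mean()
                        for i, c in enumerate(clusters)])
    worst = []
    for i in range(len(clusters)):
        ratios = [(scatter[i] + scatter[j]) / np.linalg.norm(centroids[i] - centroids[j])
                  for j in range(len(clusters)) if j != i]
        worst.append(max(ratios))
    return float(np.mean(worst))


if __name__ == "__main__":
    z = np.array([[0.0, 0.0], [0.0, 2.0], [10.0, 0.0], [10.0, 2.0]])
    labels = ["Basal", "Basal", "LumA", "LumA"]
    print(calinski_harabasz(z, labels), davies_bouldin(z, labels))  # 50.0 0.2
```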

Biological validation constituted a critical component of the analysis, wherein transcriptomic features selected by each method were used to construct molecular networks using OmicsNet 2.0 with the IntAct database [8]. Pathway enrichment analysis identified biologically relevant pathways associated with the selected features, with a particular focus on their implications for breast cancer mechanisms. Additionally, clinical association analysis assessed the relevance of selected features to key clinical variables including tumor stage, lymph node involvement, metastasis, patient age, and race using OncoDB, with significance determined by false discovery rate (FDR < 0.05) [8].
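The text reports significance as FDR < 0.05 without stating which adjustment procedure produced it. Benjamini-Hochberg is the most common choice, so the sketch below assumes it; the p-values are made up.

```python
import numpy as np


def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values (q-values) in the original order."""
    p = np.asarray(pvalues, dtype=float)
    n = len(p)
    order = np.argsort(p)
    ranked = p[order] * n / np.arange(1, n + 1)  # p_(i) * n / i for the i-th smallest p
    # Enforce monotonicity from the largest p-value downward, then cap at 1.
    q_sorted = np.minimum.accumulate(ranked[::-1])[::-1]
    q = np.empty(n)
    q[order] = np.minimum(q_sorted, 1.0)
    return q


if __name__ == "__main__":
    q = benjamini_hochberg([0.01, 0.04, 0.03, 0.20])
    print(q < 0.05)  # [ True False False False]
```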

Experimental Protocols

Protocol 1: Multi-Omics Factor Analysis (MOFA+) Integration

Purpose: Unsupervised integration of multiple omics datasets to identify latent factors representing shared variation across data modalities.

Materials and Reagents:

- Multi-omics datasets (e.g., transcriptomics, epigenomics, microbiomics)

- MOFA+ package (R version 4.3.2 or higher)

- Computational environment with minimum 16GB RAM

Procedure:

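- Data Preparation: Format each omics dataset as a matrix and ensure consistent sample identifiers and ordering across datasets. The MOFA2 R interface expects features as rows and samples as columns, so matrices stored with samples as rows should be transposed before creating the MOFA+ object.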

- Model Configuration: Create a MOFA+ object and specify the three omics layers with appropriate data distributions (Gaussian for continuous data).

- Training Parameters: Set training options including 400,000 maximum iterations and a convergence threshold based on evidence lower bound (ELBO) stabilization.

- Factor Selection: Retain latent factors that explain at least 5% of the variance in at least one data type.

- Feature Extraction: Calculate absolute loadings for each feature in the selected latent factors, prioritizing features with the highest loadings for downstream analysis.

- Validation: Assess model convergence by examining the ELBO trajectory and evaluate factor interpretability through variance explained plots.
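The 5% retention rule in the Factor Selection step relies on the per-factor variance explained (R²) that MOFA+ reports for each view. MOFA2 computes these values itself. The sketch below only spells out the quantity for a centered view. It assumes Y is stored as samples × features and that factor k alone reconstructs Y as the outer product of Z[:, k] and W[:, k], giving R² = 1 − Σ(y − ŷ)² / Σy². The list-of-lists layout and function names are hypothetical.

```python
from typing import Dict, List

Matrix = List[List[float]]


def variance_explained(Y: Matrix, Z: Matrix, W: Matrix, k: int) -> float:
    """R^2 of centered view Y (samples x features) using factor k alone.

    Z: samples x factors (factor values); W: features x factors (loadings).
    """
    ss_res = ss_tot = 0.0
    for i, row in enumerate(Y):
        for j, y in enumerate(row):
            prediction = Z[i][k] * W[j][k]
            ss_res += (y - prediction) ** 2
            ss_tot += y ** 2
    return 1.0 - ss_res / ss_tot


def select_factors(
    views: Dict[str, Matrix], Z: Matrix, weights: Dict[str, Matrix], min_r2: float = 0.05
) -> List[int]:
    """Indices of factors explaining at least `min_r2` of the variance in any view."""
    return [
        k
        for k in range(len(Z[0]))
        if any(variance_explained(views[m], Z, weights[m], k) >= min_r2 for m in views)
    ]
```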

Troubleshooting Tips:

- For non-converging models, increase iteration count or adjust learning rate parameters.

- If factors explain minimal variance, reassess data preprocessing and normalization steps.

- For biological interpretation issues, correlate factors with sample metadata and perform gene set enrichment analysis.

Protocol 2: Multi-Omics Graph Convolutional Network (MoGCN) Implementation

Purpose: Deep learning-based integration of multi-omics data using graph convolutional networks for enhanced feature selection and subtype classification.

Materials and Reagents:

- Preprocessed multi-omics datasets

- Python 3.11.5 with PyTorch and DGL libraries

- GPU acceleration (recommended)

Procedure:

Autoencoder Pretraining:

- Implement separate encoder-decoder pathways for each omics type.

- Configure encoder architecture with hidden layers of 100 neurons each.

- Train autoencoders using a learning rate of 0.001 and a mean squared error reconstruction loss.

- Extract learned representations from the bottleneck layer for each omics type.
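One way to write the pretraining objective is shown below. It assumes one encoder $f_m$ and one decoder $g_m$ per omics layer $m$, each built from 100-unit hidden layers, and an unweighted sum of per-layer mean squared errors. The equal weighting across layers is an assumption; the protocol does not specify it.

$$
\mathbf{z}^{(m)}_i = f_m\!\left(\mathbf{x}^{(m)}_i\right), \qquad
\hat{\mathbf{x}}^{(m)}_i = g_m\!\left(\mathbf{z}^{(m)}_i\right), \qquad
\mathcal{L}_{\mathrm{AE}} = \sum_{m=1}^{M} \frac{1}{N\,d_m} \sum_{i=1}^{N} \left\lVert \mathbf{x}^{(m)}_i - \hat{\mathbf{x}}^{(m)}_i \right\rVert_2^2
$$

Here $N$ is the number of samples and $d_m$ the number of features in layer $m$. The bottleneck vectors $\mathbf{z}^{(m)}_i$ are the learned representations carried forward, and the parameters of every $f_m$ and $g_m$ are updated to minimize $\mathcal{L}_{\mathrm{AE}}$ with learning rate $\eta = 0.001$.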

Graph Construction:

- Create patient similarity networks for each omics modality using k-nearest neighbors.

- Combine omics-specific graphs into a multi-omics heterogeneous network.
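A minimal sketch of the graph-construction step is shown below. It assumes Euclidean distance on each layer's features, for example the autoencoder representations, and merges layers by a simple edge-support vote. The protocol's "heterogeneous network" may be built differently, and similarity network fusion is a common alternative. Function names and the voting rule are hypothetical.

```python
import math
from typing import Dict, List, Set, Tuple

Edge = Tuple[int, int]


def knn_graph(X: List[List[float]], k: int) -> Set[Edge]:
    """Undirected k-nearest-neighbour graph over patients (Euclidean distance).

    Each patient is linked to its k closest patients; edges are stored as (i, j) with i < j.
    """
    edges: Set[Edge] = set()
    for i, xi in enumerate(X):
        nearest = sorted((math.dist(xi, xj), j) for j, xj in enumerate(X) if j != i)
        for _, j in nearest[:k]:
            edges.add((min(i, j), max(i, j)))
    return edges


def combine_graphs(graphs: List[Set[Edge]], min_support: int = 1) -> Set[Edge]:
    """Merge omics-specific graphs, keeping edges supported by >= min_support layers."""
    support: Dict[Edge, int] = {}
    for graph in graphs:
        for edge in graph:
            support[edge] = support.get(edge, 0) + 1
    return {edge for edge, count in support.items() if count >= min_support}
```

With `min_support=1` the merged graph is the union of the per-omics graphs. Raising it keeps only patient links that several layers agree on.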

GCN Training:

- Implement two-layer graph convolutional architecture with ReLU activation.

- Train model with cross-entropy loss for classification tasks.

- Apply dropout regularization (rate=0.5) between layers to prevent overfitting.
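The two-layer GCN can be written with the widely used Kipf and Welling propagation rule. The protocol does not name the exact variant, so this formulation is an assumption. $A$ is the patient similarity graph, $X$ holds the pretrained autoencoder features, $C$ is the number of subtypes, and $\mathcal{V}_L$ is the set of labeled training patients:

$$
\tilde{A} = A + I, \qquad \tilde{D}_{ii} = \sum_j \tilde{A}_{ij}, \qquad \hat{A} = \tilde{D}^{-1/2}\,\tilde{A}\,\tilde{D}^{-1/2}
$$

$$
H^{(1)} = \operatorname{Dropout}_{p=0.5}\!\left(\operatorname{ReLU}\!\left(\hat{A} X W^{(0)}\right)\right), \qquad
\hat{Y} = \operatorname{softmax}\!\left(\hat{A} H^{(1)} W^{(1)}\right)
$$

$$
\mathcal{L}_{\mathrm{CE}} = -\sum_{i \in \mathcal{V}_L} \sum_{c=1}^{C} Y_{ic} \ln \hat{Y}_{ic}
$$

Dropout is active only during training. Each layer mixes a patient's features with those of its graph neighbours before the linear map $W^{(l)}$ is applied.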

Feature Importance Calculation:

- Compute importance scores by multiplying absolute encoder weights by feature standard deviations.

- Select top 100 features per omics layer based on importance scores for downstream analysis.
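The importance score used for MoGCN feature selection can be sketched as follows. The protocol does not pin down several details, so these are assumptions: the weights are those of the first encoder layer, stored as hidden units × input features (the layout of a PyTorch `nn.Linear` weight); absolute weights are summed over hidden units; and the population standard deviation is used. Function names are hypothetical.

```python
from statistics import pstdev
from typing import List, Sequence


def feature_importance(encoder_weights: List[List[float]], X: List[List[float]]) -> List[float]:
    """Importance of each input feature = (sum of |weights| over hidden units) x feature SD.

    encoder_weights: hidden_units x input_features; X: samples x input_features.
    """
    scores = []
    for j in range(len(X[0])):
        weight_mass = sum(abs(unit[j]) for unit in encoder_weights)
        spread = pstdev([sample[j] for sample in X])
        scores.append(weight_mass * spread)
    return scores


def top_features(names: Sequence[str], scores: Sequence[float], k: int = 100) -> List[str]:
    """Names of the k highest-scoring features for one omics layer."""
    order = sorted(range(len(names)), key=lambda j: scores[j], reverse=True)
    return [names[j] for j in order[:k]]
```

Multiplying by the standard deviation keeps a feature with large weights but almost no variation across patients from ranking highly.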

Validation Metrics:

- Classification performance: F1 score, accuracy, precision, recall

- Clustering quality: Calinski-Harabasz index, Davies-Bouldin index

- Biological relevance: Pathway enrichment significance, clinical association FDR
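The protocol does not state how per-subtype F1 scores are averaged. The sketch below shows the two common options: macro averaging, where every subtype counts equally, and support-weighted averaging. Both follow the definitions behind scikit-learn's `f1_score` with `average="macro"` and `average="weighted"`. The function names here are hypothetical.

```python
from collections import Counter
from typing import Dict, Hashable, Sequence


def per_class_f1(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> Dict[Hashable, float]:
    """F1 = 2TP / (2TP + FP + FN) for every label seen in y_true or y_pred."""
    scores = {}
    for c in set(y_true) | set(y_pred):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores[c] = 2 * tp / denom if denom else 0.0
    return scores


def macro_f1(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Unweighted mean over classes: rare subtypes count as much as common ones."""
    scores = per_class_f1(y_true, y_pred)
    return sum(scores.values()) / len(scores)


def weighted_f1(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Mean of per-class F1 weighted by each class's support in y_true."""
    scores = per_class_f1(y_true, y_pred)
    support = Counter(y_true)
    return sum(scores[c] * support[c] for c in scores) / len(y_true)
```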

Visualization of Workflows and Signaling Pathways

Diagram 1: MOFA+ multi-omics integration workflow for breast cancer subtyping.

Diagram 2: Pathway-guided interpretable deep learning architecture (PGI-DLA) framework.

Table 3: Key Computational Tools for Deep Learning-Based Multi-Omics Integration

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| MOFA+ (R package) | Statistical tool | Unsupervised multi-omics factor analysis | Latent pattern discovery, dimensionality reduction |
| MoGCN (Python) | Deep learning framework | Graph convolutional networks for multi-omics | Cancer subtype classification, biomarker discovery |
| DCell | Pathway-guided DL | Visible neural network structured on the GO hierarchy | Predictive modeling with mechanistic interpretation |
| OmicsNet 2.0 | Network analysis | Biological network construction and visualization | Pathway enrichment, molecular interaction mapping |
| IntAct Database | Protein interaction database | Curated molecular interaction data | Network validation, pathway context |
| OncoDB | Clinical genomics database | Gene-clinical association analysis | Clinical relevance assessment, survival analysis |

Table 4: Pathway Databases for Biologically-Informed Model Development

| Database | Key Features | Best Suited For | Access Method |
|---|---|---|---|
| KEGG | Metabolic pathways, disease maps | Modeling metabolic alterations, cancer mechanisms | API, downloadable flat files |
| Gene Ontology (GO) | Three ontologies: BP, MF, CC | Functional enrichment, hierarchical modeling | OBO format, RDF, API |
| Reactome | Detailed reaction knowledgebase | Drug mechanism studies, signaling pathways | REST API, Pathway Browser |
| MSigDB | Curated gene sets, hallmark collections | Translational research, signature analysis | GMT files, web interface |

The complexity of biological systems arises from dynamic interactions across multiple molecular layers, from genetic blueprint to functional phenotype [11]. Multi-omics approaches represent a fundamental shift from traditional reductionist methods that examine single molecular classes in isolation. By integrating disparate biological datasets, researchers can now capture the interconnectedness of cellular systems and recover system-level signals that are often missed by single-modality studies [11]. This holistic perspective is particularly crucial for understanding complex diseases like cancer, where molecular heterogeneity fuels therapeutic resistance and metastasis through coordinated alterations across genomic, transcriptomic, proteomic, and metabolomic strata [11].

The four primary omics layers (genomics, transcriptomics, proteomics, and metabolomics) provide complementary insights into biological processes. Each layer answers a different question:

- Genomics identifies DNA-level alterations that drive disease.
- Transcriptomics reveals gene expression dynamics and regulatory networks.
- Proteomics catalogs the functional effectors of cellular processes.
- Metabolomics profiles the small-molecule endpoints of those processes [11] [12].

Together, these layers construct a comprehensive molecular atlas that helps researchers move beyond correlation toward causation in biological research [12]. Integrating these orthogonal yet interconnected insights has become essential for advancing personalized medicine, identifying novel biomarkers, and understanding complex pathophysiological processes [13].

Unique Insights from Each Omics Layer

Genomics: The Biological Blueprint

Genomics focuses on the comprehensive analysis of an organism's complete set of DNA, including genes and non-coding sequences. This foundational omics layer identifies DNA-level alterations such as single-nucleotide variants (SNVs), copy number variations (CNVs), and structural rearrangements that can drive disease processes like oncogenesis [11]. Next-generation sequencing (NGS) technologies enable comprehensive profiling of cancer-associated genes and pathways including KRAS, BRAF, and TP53 [11]. The static nature of genomic information (with some exceptions like epigenetic modifications) provides the fundamental blueprint that remains relatively constant throughout an organism's lifetime, making it particularly valuable for understanding inherited risk factors and fundamental molecular etiology of diseases [14].

Transcriptomics: Dynamic Gene Expression

Transcriptomics measures the expression levels of RNA transcripts (both mRNA and non-coding RNA) in cells or tissues, providing an indirect measure of DNA activity [12]. Through techniques like RNA sequencing (RNA-seq), researchers can quantify mRNA isoforms, non-coding RNAs, and fusion transcripts that reflect active transcriptional programs and regulatory networks within biological systems [11]. Unlike the relatively static genome, the transcriptome is highly dynamic and responsive to both internal biological signals and external environmental stimuli. This responsiveness makes transcriptomics particularly valuable for understanding how genes are regulated under different conditions, how cells respond to perturbations, and identifying actively dysregulated pathways in disease states [12]. The transcriptome serves as a crucial intermediary between the genetic code and functional proteins, capturing a snapshot of gene activity at a specific moment in time.

Proteomics: Functional Effectors

Proteomics involves the large-scale identification and quantification of proteins, the primary functional effectors of biological processes [12]. Proteins (typically >2 kDa), including enzymes, are the functional products of genes. They play diverse roles in cellular processes, including maintaining cellular structure, facilitating communication, and catalyzing biochemical reactions [12]. Mass spectrometry and affinity-based techniques enable cataloging of post-translational modifications, protein-protein interactions, and signaling pathway activities that directly influence therapeutic responses and cellular behavior [11]. The proteome displays remarkable complexity due to alternative splicing, post-translational modifications, and protein degradation, creating substantial divergence between transcript abundance and protein levels. This layer provides the most direct information about functional cellular states and has become indispensable for understanding disease mechanisms and identifying druggable targets.

Metabolomics: Biochemical Endpoints

Metabolomics comprehensively analyzes small molecules (≤1.5 kDa), known as metabolites, which serve as intermediate or end products of metabolic reactions and as regulators of metabolism [12]. Using NMR spectroscopy and liquid chromatography-mass spectrometry (LC-MS), metabolomics exposes metabolic reprogramming in disease, such as the Warburg effect or oncometabolite accumulation in cancer [11]. As the most downstream products of biological information flow, metabolites provide a direct readout of cellular phenotype and physiological status. The metabolome is highly responsive to both environmental and biological regulatory mechanisms. This makes it particularly valuable for capturing the integrated effects of genetic variation, gene expression, protein activity, and environmental exposures [15]. Lipidomics, a specialized branch of metabolomics, focuses specifically on the lipid composition of samples [12].

Table 1: Comparative Analysis of Key Omics Technologies

| Omics Layer | Analyzed Components | Key Technologies | Temporal Dynamics | Primary Applications |
|---|---|---|---|---|
| Genomics | DNA sequences, SNVs, CNVs, structural variations | Next-generation sequencing | Static (with epigenetic exceptions) | Inherited risk, driver mutations, molecular taxonomy |
| Transcriptomics | mRNA, non-coding RNAs, fusion transcripts | RNA-seq, microarrays | Dynamic (minutes to hours) | Gene regulation, active pathways, transcriptional networks |
| Proteomics | Proteins, post-translational modifications | Mass spectrometry, affinity assays | Moderate (hours to days) | Functional states, signaling activity, drug targets |
| Metabolomics | Metabolites, lipids, biochemical intermediates | LC-MS, NMR spectroscopy | Rapid (seconds to minutes) | Metabolic phenotypes, environmental responses, functional endpoints |

Multi-Omics Integration Strategies and Protocols

Data Integration Methodologies

Integrating multiple omics datasets presents significant computational challenges due to the inherent heterogeneity of the data types, including dimensional disparities, temporal variations, and technical variability from different analytical platforms [11]. Several strategic approaches have been developed to address these challenges:

Pathway- or Biochemical-Ontology-Based Integration leverages predefined biochemical pathways and ontological frameworks to interpret multi-omics data in the context of existing biological knowledge. Tools such as IMPALA, iPEAP, and MetaboAnalyst support integration of different omics platforms through pathway enrichment and overrepresentation analyses [15]. While these approaches benefit from incorporating established domain knowledge, they are limited by the completeness and accuracy of the predefined pathways, which may not fully capture the complexity of biological systems [15].
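Overrepresentation analysis asks whether a pathway contains more of the selected features than chance would predict. A common formulation is the one-sided hypergeometric test sketched below. Whether a given tool uses exactly this test is an assumption to check in its documentation, since background definitions, multiple-testing correction and topology weighting differ between tools. The argument names are hypothetical.

```python
from math import comb


def ora_pvalue(population: int, pathway_size: int, selected: int, overlap: int) -> float:
    """One-sided hypergeometric p-value P(X >= overlap).

    population: background features (N); pathway_size: features annotated to the pathway (K);
    selected: features in the input list (n); overlap: selected features in the pathway (k).
    """
    upper = min(pathway_size, selected)
    hits = sum(
        comb(pathway_size, i) * comb(population - pathway_size, selected - i)
        for i in range(overlap, upper + 1)
    )
    return hits / comb(population, selected)
```

For instance, drawing 3 features from a background of 10, where 3 belong to the pathway, gives a probability of 1/120 that all 3 selected features fall in that pathway by chance.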

Biological-Network-Based Integration utilizes graph-based representations of complex connections among diverse cellular components. Methods implemented in tools like SAMNetWeb, pwOmics, and Metscape map multiple omic experimental results onto biological networks to identify altered graph neighborhoods without relying on predefined pathways [15]. For example, Metscape, a Cytoscape plug-in, facilitates calculation, analysis, and visualization of gene-to-metabolite networks in the context of metabolism [15]. These approaches can reveal novel interactions but may yield limited insights when domain knowledge of molecular interactions is insufficient.

Empirical Correlation Analysis identifies statistical relationships between molecular features across omics layers and is often employed when biochemical domain knowledge is limited. The R package mixOmics identifies co-varying features across datasets with methods such as sparse principal component analysis (sPCA), regularized canonical correlation analysis (rCCA), and sparse partial least squares discriminant analysis (sPLS-DA) [15]. Weighted gene co-expression network analysis (WGCNA) extends correlation concepts to include graph topology measures. It has been widely used to analyze gene coexpression networks and relate them to other data types [15].
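WGCNA differs from a plain correlation matrix mainly through soft thresholding: the absolute correlation is raised to a power β, which keeps strong links and shrinks weak ones. The sketch below builds an unsigned adjacency of this kind from feature profiles measured on the same samples, so rows may come from different omics layers. β = 6 is a commonly used default for unsigned networks, but in practice β is chosen from a scale-free topology fit. Function names are hypothetical.

```python
import math
from typing import List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equally long profiles."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def soft_threshold_adjacency(profiles: List[List[float]], beta: int = 6) -> List[List[float]]:
    """Unsigned adjacency a_ij = |cor(x_i, x_j)| ** beta, with the diagonal set to 1.

    Raising |r| to a power suppresses weak correlations while keeping strong ones.
    """
    n = len(profiles)
    adj = [[1.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            adj[i][j] = adj[j][i] = abs(pearson(profiles[i], profiles[j])) ** beta
    return adj
```

With β = 6, a correlation of 0.8 becomes an adjacency of about 0.26, while a correlation of 0.3 drops below 0.001.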

AI and Deep Learning Integration Protocols

Artificial intelligence, particularly deep learning, has emerged as a powerful approach for multi-omics integration due to its ability to identify non-linear patterns across high-dimensional spaces [11]. The following protocol outlines a typical AI-driven multi-omics integration workflow:

Protocol: Deep Learning-Based Multi-Omics Integration Using Flexynesis

Objective: Integrate genomic, transcriptomic, proteomic, and metabolomic data to predict clinical outcomes such as disease subtypes, survival, or drug response.

Materials:

- Multi-omics datasets (e.g., from TCGA, CCLE, or in-house studies)

- Clinical annotation data

- Flexynesis deep learning toolkit (available via PyPI, Bioconda, or the Galaxy Server)

- Python environment with PyTorch dependencies

- High-performance computing resources (GPU recommended)

Procedure:

Data Preprocessing and Harmonization

- Perform quality control on each omics dataset separately

- Apply platform-specific normalization (e.g., DESeq2 for RNA-seq, quantile normalization for proteomics)

- Address batch effects using ComBat or similar methods

- Impute missing values using appropriate methods (e.g., matrix factorization, K-nearest neighbors)

- Standardize features to have zero mean and unit variance
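Quantile normalization, listed above for proteomics, forces every sample to share the same value distribution, after which features can be standardized. The sketch below assumes a features × samples matrix stored as nested lists. Ties are broken by position rather than averaged, which is a simplification, and the function names are hypothetical.

```python
from statistics import mean, pstdev
from typing import List

Matrix = List[List[float]]  # rows = features, columns = samples


def quantile_normalize(M: Matrix) -> Matrix:
    """Give every sample (column) the same distribution: the mean of the sorted columns."""
    n_rows, n_cols = len(M), len(M[0])
    columns = [[M[i][j] for i in range(n_rows)] for j in range(n_cols)]
    sorted_cols = [sorted(col) for col in columns]
    # Reference value for rank r = mean of the r-th smallest value across samples.
    reference = [mean(col[r] for col in sorted_cols) for r in range(n_rows)]
    out = [[0.0] * n_cols for _ in range(n_rows)]
    for j, col in enumerate(columns):
        # Ties are broken by row order here (a simplification).
        order = sorted(range(n_rows), key=col.__getitem__)
        for rank, i in enumerate(order):
            out[i][j] = reference[rank]
    return out


def zscore_rows(M: Matrix) -> Matrix:
    """Standardize each feature to zero mean and unit (population) variance across samples."""
    result = []
    for row in M:
        mu, sd = mean(row), pstdev(row)
        result.append([(v - mu) / sd if sd > 0 else 0.0 for v in row])
    return result
```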

Feature Selection

- Apply variance-based filtering to remove low-variance features

- Implement domain-specific feature selection (e.g., differentially expressed genes, differentially abundant metabolites)

- Use multi-omics feature selection methods if available
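Variance-based filtering can be as simple as ranking features by their variance across samples and keeping the most variable ones. The sketch assumes a dictionary of feature profiles and a fixed number of features to keep; a variance threshold would work equally well. The function name is hypothetical.

```python
from statistics import pvariance
from typing import Dict, List


def top_variance_features(data: Dict[str, List[float]], n_keep: int) -> List[str]:
    """Keep the n_keep features with the highest variance across samples."""
    variances = {name: pvariance(values) for name, values in data.items()}
    return sorted(variances, key=variances.__getitem__, reverse=True)[:n_keep]
```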

Model Architecture Configuration

- Choose appropriate encoder networks for each data type (fully connected or graph-convolutional)

- Define the multi-task learning architecture based on prediction goals

- Configure supervisor multi-layer perceptrons (MLPs) for each outcome variable

Model Training and Validation

- Split data into training (70%), validation (15%), and test (15%) sets

- Implement cross-validation strategies appropriate for sample size

- Perform hyperparameter optimization using validation set performance

- Train model with early stopping to prevent overfitting
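The 70/15/15 split and early stopping can be expressed independently of any deep learning library. The shuffling seed, the default patience of 10 epochs and the names below are hypothetical choices. With imbalanced outcome classes, a stratified split would usually be preferable to the plain random split shown here. Toolkits such as Flexynesis typically handle these steps internally; this only illustrates the logic.

```python
import random
from typing import List, Sequence, Tuple


def split_indices(
    n: int, fractions: Sequence[float] = (0.70, 0.15, 0.15), seed: int = 0
) -> Tuple[List[int], List[int], List[int]]:
    """Shuffle sample indices into train/validation/test; the test set gets the remainder."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


class EarlyStopping:
    """Signal a stop once validation loss fails to improve for `patience` epochs."""

    def __init__(self, patience: int = 10, min_delta: float = 0.0) -> None:
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.bad_epochs = val_loss, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

The test set is touched only once, after hyperparameters and the stopping epoch have been fixed on the validation set.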

Model Interpretation and Biomarker Discovery

- Apply explainable AI techniques (e.g., SHAP, attention mechanisms)

- Extract feature importance scores across omics layers

- Identify key molecular drivers and biomarkers

- Validate findings in independent cohorts when possible

Troubleshooting Tips:

- For small sample sizes, consider transfer learning or pre-trained models

- If model performance is poor, try simplifying architecture or increasing regularization

- For integration challenges, consider intermediate integration approaches rather than early fusion

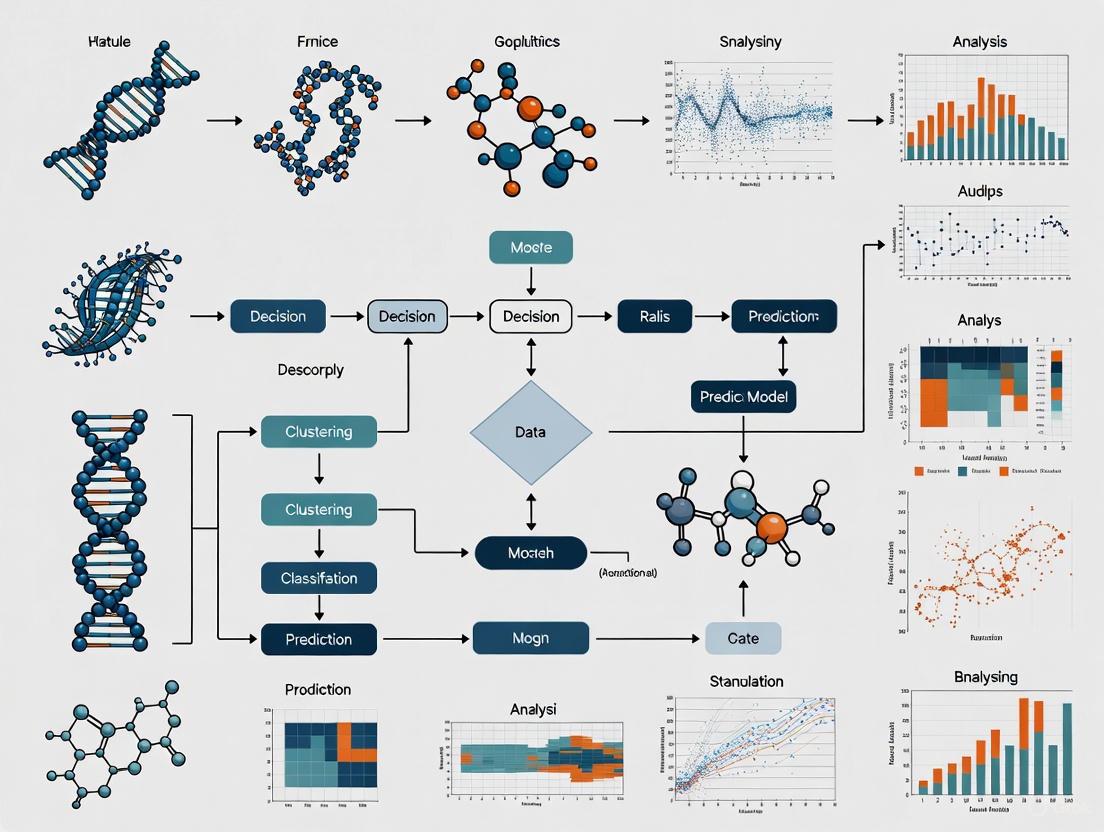

Diagram 1: AI-Driven Multi-Omics Integration Workflow. This illustrates the flow from raw multi-omics data through preprocessing, feature selection, deep learning encoding, and final clinical applications.

Table 2: Essential Research Reagents and Computational Tools for Multi-Omics Research

| Category | Resource | Specific Examples | Function/Purpose |
|---|---|---|---|
| Wet Lab Reagents | Sequencing Kits | Illumina RNA Prep with Enrichment | Library preparation for transcriptomics |
| | Mass Spectrometry Standards | TMT/SILAC labeled peptides | Quantitative proteomics |
| | Metabolomics Kits | Biocrates AbsoluteIDQ p400 HR Kit | Targeted metabolomics quantification |
| Computational Tools | Pathway Analysis | IMPALA, iPEAP, MetaboAnalyst | Pathway-based multi-omics integration |
| | Network Analysis | SAMNetWeb, Metscape, MetaMapR | Biological network construction and analysis |
| | Correlation Analysis | WGCNA, mixOmics, DiffCorr | Identify cross-omics correlations |
| | AI/Deep Learning Platforms | Flexynesis, Graph Neural Networks | Non-linear multi-omics integration |
| Data Resources | Public Repositories | TCGA, CCLE, Answer ALS | Source of validated multi-omics datasets |
| | Knowledge Bases | STRING, KEGG, Reactome | Prior knowledge for biological interpretation |

Application Notes: Multi-Omics in Translational Research

Case Study: Molecular Subtyping in Oncology

Multi-omics approaches have demonstrated particular value in disease subtyping and classification, moving beyond traditional histopathological classifications to molecular taxonomy. For example, integrative analysis of 729 cancer cell lines across 23 tumor types from the Cancer Cell Line Encyclopedia (CCLE) identified 12 distinct clusters using the iClusterPlus tool [16]. While many cell lines grouped by tissue of origin, the analysis revealed novel subgroups characterized by shared molecular alterations regardless of tissue origin. Notably, one cluster contained both non-small cell lung cancer (NSCLC) and pancreatic cancer cell lines linked by the presence of KRAS mutations [16]. This molecular stratification provides insights for drug repurposing and personalized treatment strategies that would not be apparent from single-omics analyses.

Case Study: Biomarker Discovery in Metabolic Disease

A 2025 multi-omics study of childhood central obesity exemplifies the power of integrating lipidomics and proteomics to elucidate disease mechanisms [17]. The researchers conducted a case-control study involving 169 children (aged 7-16 years), measuring plasma lipidomics in all participants and proteomics in a subset of 112 children. Their analysis identified 46 key lipids significantly associated with central obesity (predominantly triglycerides with some diacylglycerols) and six key proteins (PLIN1, PLAT, ADH1A, ADH4, LEP, and INHB) that potentially influence the central obesity phenotype by modulating lipid levels [17]. These proteins exhibited increased expression in children with central obesity and were validated in mouse models, highlighting their potential as biomarkers and therapeutic targets.

Protocol: Knowledge Graph Construction for Multi-Omics Data

Objective: Structure multi-omics data using knowledge graphs to enable sophisticated AI analysis and interpretation.

Materials:

- Multi-omics datasets with appropriate metadata

- Biological knowledge bases (e.g., STRING, KEGG, Reactome)

- Graph database platforms (e.g., Neo4j)

- GraphRAG or similar graph-based AI frameworks

Procedure:

Entity Identification and Extraction

- Identify key entities across omics layers (genes, proteins, metabolites, pathways)

- Extract relationships from established biological databases

- Incorporate experimental measurements as node attributes

Knowledge Graph Construction

- Define node types for each biological entity

- Establish relationship types (e.g., interacts_with, regulates, part_of)

- Integrate quantitative data (e.g., expression levels, fold changes)

Graph-Based AI Analysis

- Implement graph neural networks for pattern detection

- Apply community detection algorithms to identify functional modules

- Utilize graph traversal methods for hypothesis generation

Interpretation and Validation

- Extract subgraphs associated with specific phenotypes

- Identify key network hubs and bottlenecks

- Validate predictions through experimental follow-up

Diagram 2: Knowledge Graph Structure for Multi-Omics Data Integration. This diagram illustrates how different omics layers connect through biological pathways and molecular networks to inform disease understanding and AI-powered insights.
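The construction and analysis steps of this protocol can be prototyped in memory before committing to a graph database such as Neo4j. The sketch below covers typed nodes carrying measurements, a controlled set of relation types, degree-based hub detection, and neighbourhood extraction around a seed node. The class name and the three relation labels are assumptions, and any example entities or edges used with it are illustrative rather than curated database content.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

RELATIONS = {"interacts_with", "regulates", "part_of"}


class OmicsKnowledgeGraph:
    """Tiny in-memory typed graph standing in for a graph database."""

    def __init__(self) -> None:
        self.node_type: Dict[str, str] = {}
        self.measurements: Dict[str, Dict[str, float]] = defaultdict(dict)
        self.edges: List[Tuple[str, str, str]] = []

    def add_node(self, name: str, node_type: str, **measurements: float) -> None:
        """Register an entity (gene, protein, metabolite, pathway) with optional data."""
        self.node_type[name] = node_type
        self.measurements[name].update(measurements)

    def add_edge(self, source: str, relation: str, target: str) -> None:
        if relation not in RELATIONS:
            raise ValueError(f"unknown relation type: {relation}")
        for node in (source, target):
            if node not in self.node_type:
                raise KeyError(f"add node {node!r} before linking it")
        self.edges.append((source, relation, target))

    def hubs(self, top: int = 3) -> List[str]:
        """Highest-degree nodes, ties broken alphabetically."""
        degree: Dict[str, int] = defaultdict(int)
        for source, _, target in self.edges:
            degree[source] += 1
            degree[target] += 1
        return sorted(degree, key=lambda n: (-degree[n], n))[:top]

    def neighborhood(self, seed: str, hops: int = 1) -> Set[str]:
        """Nodes within `hops` undirected steps of `seed`, e.g. around a phenotype node."""
        adjacency: Dict[str, Set[str]] = defaultdict(set)
        for source, _, target in self.edges:
            adjacency[source].add(target)
            adjacency[target].add(source)
        seen, frontier = {seed}, {seed}
        for _ in range(hops):
            frontier = {nbr for node in frontier for nbr in adjacency[node]} - seen
            seen |= frontier
        return seen
```

A graph database adds persistence, indexing and a query language on top of the same node/relation model, and graph neural networks or community detection would operate on the exported adjacency.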

The integration of genomics, transcriptomics, proteomics, and metabolomics provides a comprehensive framework for understanding biological systems at multiple levels of complexity. Each omics layer offers unique and complementary insights: genomics reveals the fundamental blueprint, transcriptomics captures dynamic gene regulation, proteomics identifies functional effectors, and metabolomics reflects the biochemical endpoints of cellular processes. The true power of multi-omics approaches emerges from the strategic integration of these layers, enabled by advanced computational methods including pathway analysis, network modeling, and increasingly, AI and deep learning algorithms.

As multi-omics technologies continue to evolve and computational methods become more sophisticated, we anticipate a paradigm shift toward increasingly dynamic, personalized disease management across therapeutic areas. The integration of spatial omics, single-cell technologies, and temporal profiling will provide unprecedented resolution into biological systems. However, realizing the full potential of multi-omics approaches will require addressing ongoing challenges in data harmonization, method standardization, and result interpretation. By leveraging the unique insights from each omics layer and their integrative power, researchers and clinicians can look forward to transformative advances in understanding disease mechanisms, identifying novel biomarkers, and developing personalized therapeutic strategies.

Precision medicine represents a transformative healthcare model that shifts from conventional, reactive disease management to a proactive approach focused on disease prevention and health preservation. This model utilizes a detailed understanding of an individual’s genome, environment, and lifestyle to deliver customized healthcare [18]. The foundation for realizing this promise was laid by the genomics revolution, but it has become increasingly clear that genotype alone is insufficient to capture the dynamic processes and complex interactions governing health and disease [19]. Multi-omics integration has emerged as the essential methodology to address this complexity, combining diverse biological data layers—including genomics, transcriptomics, epigenomics, proteomics, and metabolomics—to generate comprehensive molecular portraits of biological systems [19] [11].

In oncology, this integrated approach is particularly crucial due to the staggering molecular heterogeneity of cancer, which drives therapeutic resistance, metastasis, and relapse [11]. Traditional single-omics approaches often fail to capture the interconnectedness of molecular pathways, yielding incomplete mechanistic insights and suboptimal clinical predictions [20] [11]. The integration of orthogonal molecular and phenotypic data enables researchers to recover system-level signals, such as spatial subclonality and microenvironment interactions, that are frequently missed by single-modality studies [11]. This multi-omics framework is reshaping biomedical research by providing a synergistic approach to decode cancer's emergent properties, thereby advancing diagnostic accuracy, prognostic evaluation, and therapeutic decision-making [21] [11].

The Computational Challenge and AI-Driven Solutions

The implementation of multi-omics approaches generates unprecedented data volume and heterogeneity, creating formidable analytical challenges characterized by the "four Vs" of big data: volume, velocity, variety, and veracity [11]. The high dimensionality of molecular assays, where the number of features (e.g., >20,000 genes, >500,000 CpG sites) often dwarfs sample sizes, overwhelms conventional biostatistical methods [11]. Furthermore, the inherent technical variability between different sequencing platforms, mass spectrometry configurations, and microarray technologies introduces platform-specific artifacts and batch effects that can obscure biological signals [11].

Artificial intelligence (AI), particularly machine learning (ML) and deep learning (DL), has emerged as the essential scaffold bridging multi-omics data to clinically actionable insights [11] [3]. Unlike traditional statistical methods, AI excels at identifying non-linear patterns across high-dimensional spaces, making it uniquely suited for multi-omics integration [11]. Three primary computational strategies have been developed for this integration:

- Early Integration: Combining raw data from different omics layers at the beginning of the analysis pipeline. This approach can identify correlations between omics layers but may lead to information loss and biases [20].

- Intermediate Integration: Integrating data at the feature selection, feature extraction, or model development stages, allowing more flexibility and control over the integration process [20]. Methods include variational autoencoders and graph neural networks that capture complex, nonlinear structures among omics layers [19].

- Late Integration: Analyzing each omics dataset separately and combining the results at the final stage. This preserves unique characteristics of each dataset but may complicate identifying relationships between different omics layers [20].
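Early and late integration differ in where the omics layers are merged:

- Early integration concatenates each sample's features from every omics layer into one vector before modeling.
- Late integration averages predictions produced by separate per-omics models.

Both are sketched below. Intermediate integration learns a joint representation inside the model, does not reduce to a few lines, and is not shown. The function names and the equal default weights are assumptions.

```python
from typing import Dict, List, Optional


def early_integration(views: Dict[str, List[List[float]]]) -> List[List[float]]:
    """Concatenate each sample's features across omics layers (same sample order in every view)."""
    names = sorted(views)
    n_samples = len(views[names[0]])
    if any(len(views[name]) != n_samples for name in names):
        raise ValueError("all views must contain the same samples")
    return [[v for name in names for v in views[name][i]] for i in range(n_samples)]


def late_integration(
    predictions: Dict[str, List[float]], weights: Optional[Dict[str, float]] = None
) -> List[float]:
    """Combine per-omics model outputs (e.g. predicted probabilities) by weighted averaging."""
    names = sorted(predictions)
    weights = weights or {name: 1.0 for name in names}
    total = sum(weights[name] for name in names)
    n_samples = len(predictions[names[0]])
    return [
        sum(weights[name] * predictions[name][i] for name in names) / total
        for i in range(n_samples)
    ]
```

Early integration lets a single model see cross-omics feature interactions but inflates dimensionality. Late integration keeps each layer's model separate and only reconciles their outputs.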

Advanced AI architectures being applied in this domain include graph neural networks (GNNs) for modeling biological networks perturbed by somatic mutations [11], multi-modal transformers for fusing disparate data types like MRI radiomics with transcriptomic data [11], and explainable AI (XAI) techniques like SHapley Additive exPlanations (SHAP) for interpreting "black box" models to clarify how genomic variants contribute to clinical outcomes [11].

Performance Comparison of AI-Driven Multi-Omics Models

Table 1: Performance metrics of recent AI-driven multi-omics models in oncology and pharmacogenomics.

| Method Name | Reference, Year | AI Method | Use Case | Performance Outcome |
|---|---|---|---|---|
| DeepDRA | Mohammadzadeh-Vardin et al., 2024 [19] | Autoencoders + MLP | Cancer drug sensitivity | AUPRC: 0.99 (internal), 0.72 (external) |
| MOICVAE | Wang et al., 2023 [19] | Variational Autoencoder | Pan-cancer drug sensitivity | AUC up to 0.91 on TCGA |
| Adaptive Framework (Breast Cancer) | Scientific Reports, 2025 [20] | Genetic Programming | Breast cancer survival analysis | C-index: 0.7831 (training), 0.6794 (test) |
| DeepProg | Poirion et al. [20] | Deep/Machine Learning | Liver & breast cancer survival | C-index: 0.68 to 0.80 |
| MSI Classifier | Nature Communications, 2025 [22] | Deep Learning (Flexynesis) | Microsatellite instability classification | AUC = 0.981 |

Available Toolkits for Multi-Omics Analysis

Table 2: Key computational tools and frameworks for AI-driven multi-omics integration.

| Tool/Framework | Primary Methodology | Key Features | Accessibility |
|---|---|---|---|
| Flexynesis | Deep Learning [22] | Modular, multi-task training (regression, classification, survival), standardized input, hyperparameter optimization | PyPI, Bioconda, Galaxy Server, GitHub |
| MOFA+ | Bayesian Group Factor Analysis [20] | Learns shared low-dimensional representation, interpretable latent factors, handles missing data | R/Python package |
| MOGLAM | Dynamic Graph Convolutional Network [20] | Feature selection, multi-omics attention mechanism, interpretable embeddings | Not specified |
| MoAGL-SA | Graph Learning & Self-Attention [20] | Creates patient relationship graphs, adaptive weighting for integration | Not specified |
| SKI-Cox / LASSO-Cox | Classical Statistical Models [20] | Incorporates inter-omics relationships into Cox regression | Not specified |

Application Note: Addressing Intra-Tumoral Heterogeneity in Oncology

Background and Rationale

Intra-tumoral heterogeneity (ITH) represents a formidable barrier in oncology, characterized by the coexistence of genetically and phenotypically diverse subclones within a single tumor [23]. ITH challenges the core assumption of targeted therapy—that a single molecular signature can guide treatment—and directly contributes to drug resistance, disease relapse, and diagnostic uncertainty [23]. Conventional bulk tissue analysis often overlooks subtle cellular heterogeneity, resulting in incomplete or misleading interpretations of tumor biology [23]. Multi-omics technologies enable comprehensive mapping of ITH across molecular layers, facilitating the construction of holistic tumor "state maps" that link molecular variation to phenotypic behavior [23].

Experimental Protocol: Multi-Region Sequencing for ITH Analysis

Objective: To characterize ITH and reconstruct tumor evolutionary history using multi-region bulk sequencing.

Materials: Fresh-frozen or FFPE tumor tissue samples from multiple geographically distinct regions of the same tumor, and matched normal tissue (e.g., blood).

Methods:

- Sample Collection: Obtain 3-5 or more spatially separated biopsies from different regions of the solid tumor, ensuring representative sampling of morphologically distinct areas.

- Nucleic Acid Extraction: Extract high-quality DNA and RNA from each sample using standardized kits. Assess quality and quantity via agarose gel electrophoresis, Bioanalyzer, and spectrophotometry.

- Library Preparation and Sequencing:

- For Whole-Exome Sequencing (WES), perform exome capture using SureSelect or similar kits followed by sequencing on Illumina platforms (150bp paired-end, >100x coverage).

- For RNA Sequencing, prepare poly-A enriched libraries and sequence on Illumina platforms (≥50 million reads per sample).

- Bioinformatic Processing:

- Genomics: Align reads to the reference genome (BWA), call somatic variants (GATK Mutect2), and identify copy number alterations (Control-FREEC).

- Transcriptomics: Align RNA-seq reads (STAR), quantify gene expression (featureCounts), and identify fusion transcripts and alternative splicing events.

- ITH Quantification and Clonal Reconstruction:

- Calculate Cancer Cell Fractions (CCFs) by integrating variant allele frequencies (VAF), tumor purity estimates, and copy number data.

- Use tools like PyClone or EXPANDS to infer subclonal architecture.

- Construct phylogenetic trees using tools such as PhyloWGS to visualize tumor evolution.
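The CCF calculation in the steps above combines these quantities through a standard relationship. For a mutation carried on m copies in a fraction c of cancer cells, the expected VAF is p·c·m / (p·CN_t + 2(1 − p)). Here p is tumor purity, CN_t the local tumor copy number, and normal cells are assumed diploid. Solving for c gives the point estimate sketched below. Tools such as PyClone treat these quantities probabilistically, including unknown multiplicity, so this is only an illustration. The function name is hypothetical.

```python
def cancer_cell_fraction(
    vaf: float, purity: float, tumor_cn: int, multiplicity: int = 1, normal_cn: int = 2
) -> float:
    """Point estimate CCF = VAF * (p * CN_tumor + CN_normal * (1 - p)) / (p * multiplicity)."""
    if not 0 < purity <= 1:
        raise ValueError("purity must be in (0, 1]")
    # Total allele copies at the locus across tumor and contaminating normal cells.
    total_copies = purity * tumor_cn + normal_cn * (1 - purity)
    return vaf * total_copies / (purity * multiplicity)
```

A VAF of 0.25 in a 50%-pure diploid tumor gives a CCF of 1.0, consistent with a truncal (clonal) mutation. Estimates well below 1 suggest subclonal mutations, and values above 1 usually reflect noise in VAF, purity, or copy-number estimates.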

Expected Outcomes: Identification of truncal (clonal) and branch (subclonal) mutations, estimation of subclonal diversity, and reconstruction of tumor evolutionary history. High subclonal diversity is often associated with early relapse and resistance to targeted therapies [23].

Application in Breast Cancer Survival Analysis

A recent study demonstrated the power of adaptive multi-omics integration for breast cancer survival analysis [20]. The framework integrated genomics, transcriptomics, and epigenomics data from The Cancer Genome Atlas (TCGA) to identify complex molecular signatures driving breast cancer progression. The researchers employed genetic programming to optimize the feature selection and integration process, evolving combinations of molecular features associated with survival outcomes [20]. This approach yielded a concordance index (C-index) of 0.7831 during cross-validation and 0.6794 on the test set, demonstrating the potential of adaptive multi-omics integration to improve prognostic accuracy in a heterogeneous disease [20].
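The concordance index measures how well predicted risk ranks patients by survival time: 0.5 corresponds to random ranking and 1.0 to perfect ranking. A minimal sketch of Harrell's C-index follows. It assumes right-censored data and that higher risk scores mean shorter expected survival. Pairs with tied times are skipped, which is a simplification. The function name is hypothetical.

```python
from typing import Sequence


def harrell_c_index(times: Sequence[float], events: Sequence[int], risk: Sequence[float]) -> float:
    """Share of comparable patient pairs whose predicted risks are correctly ordered.

    A pair is comparable when the patient with the shorter follow-up had an event
    (events[i] == 1); censored patients can only be the longer-surviving member.
    Tied risk scores count as half-concordant.
    """
    concordant, comparable = 0.0, 0
    for i in range(len(times)):
        if not events[i]:
            continue
        for j in range(len(times)):
            if times[i] < times[j]:
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable
```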

Application Note: Multi-Omics in Pharmacogenomics and Drug Response Prediction

Background and Rationale

Pharmacogenomics is entering a transformative phase as high-throughput omics techniques are integrated with AI methods [19]. While early pharmacogenetic applications focused on single genes, many drug response phenotypes are governed by intricate networks of genomic variants, epigenetic modifications, and metabolic pathways [19]. Multi-omics approaches address this complexity by capturing genomic, transcriptomic, proteomic, and metabolomic data layers, offering a comprehensive view of patient-specific biology that can inform predictions of drug efficacy, toxicity, and optimal dosage [19]. For example, adding gene expression profiles to genomic variants improved the explained variance of warfarin dose prediction by 8-12% [19].

Experimental Protocol: Predictive Modeling of Drug Sensitivity in Cell Lines

Objective: To build a deep learning model that integrates multi-omics data from cancer cell lines to predict sensitivity to anti-cancer drugs.

Materials: Cell line models (e.g., from CCLE or GDSC databases), multi-omics profiling data (gene expression, copy number variation, methylation), and drug response data (e.g., IC50 values from GDSC).

Methods:

- Data Acquisition: Download multi-omics data (e.g., RNA-seq, CNV, DNA methylation) and drug response data for a panel of cancer cell lines from public databases (CCLE, GDSC).

- Data Preprocessing:

- Perform quantile normalization for gene expression and methylation data.

- Apply ComBat or similar algorithms for batch effect correction.

- Handle missing data using k-nearest neighbors (KNN) imputation or DL-based reconstruction.

- Split data into training (70%), validation (15%), and test (15%) sets.

- Model Training with Flexynesis:

- Utilize the Flexynesis toolkit for its modularity and deployability [22].

- Configure an asymmetric encoder-decoder architecture with fully connected encoders for each omics type.

- Attach a supervisor multi-layer perceptron (MLP) for the regression task (predicting IC50 values).

- Train the model with the Adam optimizer using a Mean Squared Error (MSE) loss for IC50 regression; a Cox Proportional Hazards loss is used instead when the supervised target is a survival outcome.

- Model Validation:

- Evaluate model performance on the held-out test set using Pearson correlation between observed and predicted IC50 values, or the concordance index (C-index) when the target is a survival outcome.

- Apply explainability modules (e.g., SHAP) to identify features driving predictions.
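
The preprocessing and splitting steps above can be sketched in NumPy. This is a minimal illustration under stated assumptions: a features-by-samples layout for quantile normalization, a samples-by-features layout for imputation, a simple nearest-neighbour imputer written from scratch (scikit-learn's `KNNImputer` is a tested alternative), and a random split over cell lines. ComBat is omitted because it needs batch labels and an empirical Bayes model.

```python
import numpy as np


def quantile_normalize(x):
    """Give every sample (column) of a features x samples matrix the same distribution.

    Each value is replaced by the mean, across samples, of the values sharing its rank.
    Ties are broken by sort order, which is a simplification.
    """
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, axis=0)
    reference = np.sort(x, axis=0).mean(axis=1)  # mean value at each rank
    out = np.empty_like(x)
    for j in range(x.shape[1]):
        out[order[:, j], j] = reference
    return out


def knn_impute(x, k=3):
    """Fill NaNs in a samples x features matrix with the mean of the k nearest donors.

    Distance is the root-mean-square difference over features observed in both samples;
    donors must observe the missing feature. Values with no eligible donor stay NaN.
    """
    x = np.asarray(x, dtype=float)
    observed = ~np.isnan(x)
    out = x.copy()
    for i in np.where(~observed.all(axis=1))[0]:
        dists = np.full(x.shape[0], np.inf)
        for j in range(x.shape[0]):
            shared = observed[i] & observed[j]
            if j != i and shared.any():
                dists[j] = np.sqrt(np.mean((x[i, shared] - x[j, shared]) ** 2))
        for f in np.where(~observed[i])[0]:
            donors = [j for j in np.argsort(dists) if np.isfinite(dists[j]) and observed[j, f]][:k]
            if donors:
                out[i, f] = x[donors, f].mean()
    return out


def split_indices(n, fractions=(0.70, 0.15, 0.15), seed=0):
    """Randomly partition n cell-line indices into train/validation/test sets."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(n)
    n_train = int(round(fractions[0] * n))
    n_val = int(round(fractions[1] * n))
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]
```

In a real pipeline the split would usually come first, with the normalization reference and the imputation donors derived from the training cell lines only, so that no test-set information leaks into training.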

Expected Outcomes: A trained model capable of predicting drug sensitivity from multi-omics input. For instance, Flexynesis models trained on CCLE data and validated on GDSC data showed high correlation between known and predicted drug response values [22].
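
To make the architecture in the protocol concrete, here is a forward pass and combined loss for per-omics fully connected encoders, a shared latent space, reconstruction decoders, and a supervisor MLP that regresses IC50, followed by a Pearson correlation for evaluation. It is a hypothetical NumPy illustration, not the Flexynesis API: the layer sizes, ReLU activations, unweighted sum of reconstruction losses, and the class name `MultiOmicsRegressor` are all assumptions, and training with Adam would in practice be handled by a deep learning framework's autograd and optimizer.

```python
import numpy as np

rng = np.random.default_rng(0)


def dense(n_in, n_out):
    """A randomly initialised fully connected layer as (weights, bias)."""
    return rng.normal(0.0, 0.1, size=(n_in, n_out)), np.zeros(n_out)


def relu(z):
    return np.maximum(z, 0.0)


class MultiOmicsRegressor:
    """Hypothetical sketch: one encoder per omics layer, a joint latent, decoders, and an MLP head."""

    def __init__(self, omics_dims, latent_dim=8, hidden_dim=16):
        self.encoders = {name: dense(d, latent_dim) for name, d in omics_dims.items()}
        joint_dim = latent_dim * len(omics_dims)
        # Each decoder reconstructs its omics layer from the shared (concatenated) latent.
        self.decoders = {name: dense(joint_dim, d) for name, d in omics_dims.items()}
        self.head = [dense(joint_dim, hidden_dim), dense(hidden_dim, 1)]

    def forward(self, batch):
        z = np.concatenate(
            [relu(batch[name] @ w + b) for name, (w, b) in self.encoders.items()], axis=1
        )
        recon = {name: z @ w + b for name, (w, b) in self.decoders.items()}
        (w1, b1), (w2, b2) = self.head
        ic50_hat = (relu(z @ w1 + b1) @ w2 + b2).ravel()
        return ic50_hat, recon

    def loss(self, batch, ic50, recon_weight=1.0):
        """MSE on IC50 plus weighted reconstruction MSE for every omics layer."""
        ic50_hat, recon = self.forward(batch)
        supervised = np.mean((ic50_hat - ic50) ** 2)
        reconstruction = sum(np.mean((recon[n] - batch[n]) ** 2) for n in self.decoders)
        return supervised + recon_weight * reconstruction


def pearson_r(observed, predicted):
    """Pearson correlation between observed and predicted IC50 values."""
    return float(np.corrcoef(observed, predicted)[0, 1])


model = MultiOmicsRegressor({"rna": 50, "cnv": 20, "methylation": 30})
batch = {"rna": rng.normal(size=(4, 50)), "cnv": rng.normal(size=(4, 20)),
         "methylation": rng.normal(size=(4, 30))}
ic50_hat, _ = model.forward(batch)
print(ic50_hat.shape)  # (4,)
```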

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key research reagents and platforms for multi-omics experiments.

| Category | Product/Platform Examples | Primary Function in Multi-Omics |
|---|---|---|
| Sequencing Instruments | Illumina NovaSeq, Element Biosciences | High-throughput DNA/RNA sequencing for genomics and transcriptomics [18] [24] |
| Single-cell Multi-omics Solutions | Mission Bio Tapestri, BD NEO/Python Junior | Comprehensive analysis of DNA and protein at single-cell level to resolve cellular heterogeneity [24] |
| Spatial Biology Platforms | Akoya Biosciences (via Thermo Fisher agreement), COSMO Center services | Visualize and map molecular data within tissue architecture, preserving cellular context [24] |
| Library Preparation Kits | QIAGEN QIAseq Multimodal DNA/RNA Library Kit | Enables preparation of DNA and RNA libraries for NGS from a single sample [24] |
| Automation & Robotics | Hamilton Company robotic kits (via BD partnership) | Standardize and automate single-cell multi-omics experiments, minimizing human error [24] |
| Mass Spectrometry | Bruker Corporation, Shimadzu Corporation | Quantify proteins and metabolites for proteomics and metabolomics studies [24] |

The integration of multi-omics data, powered by advanced artificial intelligence, represents a fundamental shift in our approach to precision medicine, particularly in oncology. This paradigm moves beyond single-layer analyses to capture the complex, non-linear interactions across genomic, transcriptomic, proteomic, and metabolomic layers that underlie disease pathogenesis and therapeutic response [19] [11]. As demonstrated in the application notes, this approach enables more accurate patient stratification, biomarker discovery, and prediction of treatment outcomes in complex conditions like cancer [20] [23].

The field is rapidly evolving with several emerging trends. Spatial multi-omics technologies are now enabling the mapping of molecular data within tissue architecture, preserving crucial cellular context and microenvironment interactions [21] [24]. Federated learning approaches are being developed to enable privacy-preserving collaboration across institutions, addressing data-sharing barriers [11]. Furthermore, the concept of "N-of-1" models and in silico "digital twins" promises to shift precision oncology from population-based approaches to truly dynamic, individualized cancer management [11].

Despite the remarkable progress, challenges remain in data harmonization, model interpretability, and regulatory alignment [11]. Translating these computational approaches into routine clinical practice will require continued development of standardized, accessible tools like Flexynesis [22], robust validation in prospective clinical trials, and explainable AI that clinicians can understand and trust. As these hurdles are addressed, AI-driven multi-omics integration is well positioned to continue transforming precision medicine, supporting proactive, personalized healthcare that improves patient outcomes across oncology and beyond.

The convergence of artificial intelligence (AI) with multi-omics data, spanning genomics, transcriptomics, proteomics, metabolomics, and epigenomics, is fundamentally reshaping biomarker discovery and biological research [11] [25]. Cancer's staggering molecular heterogeneity, for instance, demands approaches that go beyond traditional single-omics methods [11]. Integrating these disparate data layers with deep learning and machine learning enables the identification of complex, non-linear patterns that are difficult to detect with conventional statistical methods, thereby uncovering novel biomarkers and biological pathways with high translational potential [26] [27]. This paradigm shift moves research from a reductionist, single-analyte focus toward a holistic, systems-level understanding of disease biology, accelerating the development of precision medicine [13] [28]. This Application Note provides a structured framework and detailed protocols for implementing AI-driven multi-omics integration to uncover robust biological insights and biomarker signatures.

Performance Benchmarks of AI in Multi-Omics Analysis

Evaluating the performance of AI models is critical for assessing their utility in biomarker discovery and biological integration. The table below summarizes key quantitative benchmarks reported in recent literature for various AI applications in multi-omics studies.

Table 1: Performance Benchmarks of AI Models in Multi-Omics Applications

| AI Application | Reported Performance | Clinical or Biological Utility | Data Types Integrated |
|---|---|---|---|
| Integrated Classifiers for Early Detection [11] | AUC: 0.81–0.87 | Improved diagnostic and prognostic accuracy for early-stage cancers. | Genomics, transcriptomics, proteomics, metabolomics |
| AI-Enhanced Multi-Omics Diagnostics [26] | Superior efficacy in cancer type/stage classification vs. traditional methods. | Enhanced early detection and diagnostic precision for breast, lung, brain, and skin cancers. | Radiomics, pathomics, clinical records, genomics |
| Convolutional Neural Networks (CNNs) [11] | Pathologist-level accuracy in IHC staining quantification (e.g., PD-L1, HER2). | Reduces inter-observer variability; provides consistent, quantitative pathology reads. | Digital pathology images (Pathomics) |
| Predictive Biomarker Modeling Framework (PBMF) [26] | Significant improvement in patient survival rates in retrospective studies. | Predicts patient response to therapy; informs personalized treatment plans. | Clinical data, genomics, transcriptomics |
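
AUC values such as those in Table 1 estimate the probability that a randomly chosen positive case (for example, a cancer sample) receives a higher classifier score than a randomly chosen negative case. The pairwise sketch below computes that quantity directly, counting ties as one half. It is O(n_pos × n_neg) and intended only to show the definition; libraries such as scikit-learn's `roc_auc_score` compute it efficiently.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via its pairwise (Mann-Whitney) definition."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0  # positive ranked above negative
            elif p == q:
                wins += 0.5  # ties count as half
    return wins / (len(pos) * len(neg))


print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75
```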

Protocol for AI-Driven Multi-Omics Biomarker Discovery

This protocol outlines a comprehensive workflow for integrating multi-omics datasets to discover and validate biomarker signatures using AI.

The following diagram illustrates the end-to-end logical workflow for AI-driven biomarker discovery, from data collection to clinical interpretation.

Materials and Reagents

Table 2: Essential Research Reagent Solutions for Multi-Omics Studies

| Reagent / Technology | Function in Workflow | Specific Application Example |
|---|---|---|
| Next-Generation Sequencing (NGS) | Comprehensive profiling of genomic, transcriptomic, and epigenomic alterations. | Whole-genome sequencing for variant calling; RNA-seq for gene expression and fusion transcripts [11]. |
| Mass Spectrometry | Quantification of proteins and metabolites, identifying functional effectors and metabolic reprogramming. | LC-MS for proteomic and metabolomic profiling to identify signaling pathway activities [11]. |
| Spatial Transcriptomics | Enables gene expression analysis within the intact tissue context, preserving spatial relationships. | Characterizing tumor microenvironment (TME) and cellular neighborhoods for spatial biomarker discovery [27] [29]. |
| Multiplex Immunohistochemistry (IHC) | Simultaneous detection of multiple protein biomarkers on a single tissue section. | Mapping immune contexture (e.g., T-cell populations) and cell-to-cell interactions within the TME [11] [29]. |
| Organoid and Humanized Models | Pre-clinical platforms that recapitulate human tissue architecture and tumor-immune interactions. | Functional biomarker screening, target validation, and studying immunotherapy response mechanisms [29]. |

Step-by-Step Procedure

Step 1: Multi-Omics Data Collection and Preprocessing

- Action: Collect matched patient samples for genomic, transcriptomic, proteomic, and metabolomic profiling using platforms like NGS and mass spectrometry [11] [13].

- Critical Parameters: Ensure high RNA Integrity Number (RIN > 7) for transcriptomics, and optimize protein yield and purity for proteomics.

- Quality Control: Implement rigorous quality control pipelines. For RNA-seq data, assess read quality with tools such as FastQC before aligning reads with STAR. For batch effect correction, apply algorithms such as ComBat [11].

Step 2: Data Harmonization and Feature Reduction

- Action: Harmonize structurally disparate data types (discrete mutations, continuous intensity values) into a unified analytical framework [11] [25].

- Computational Methods: Address the "curse of dimensionality" using feature reduction techniques such as Principal Component Analysis (PCA) or autoencoders. For missing data, employ advanced imputation strategies like matrix factorization or DL-based reconstruction [11] [13].
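
Per-omics feature reduction followed by concatenation can be sketched with an SVD-based PCA. This sketch assumes samples in rows (in the same order across blocks), z-scored features within each block so that features measured on different scales contribute comparably, and the same number of components per block. The count of 5 components is an arbitrary illustration; an autoencoder would replace this linear projection with a learned non-linear one.

```python
import numpy as np


def pca_scores(x, n_components, standardize=True):
    """Project a samples x features matrix onto its leading principal components.

    Returns the component scores and the fraction of variance each component explains.
    """
    x = np.asarray(x, dtype=float)
    if not 1 <= n_components <= min(x.shape):
        raise ValueError("n_components must be between 1 and min(n_samples, n_features)")
    centered = x - x.mean(axis=0)
    if standardize:
        sd = centered.std(axis=0)
        sd[sd == 0] = 1.0  # leave constant features unscaled instead of dividing by zero
        centered = centered / sd
    # Rows of vt are the principal axes, ordered by decreasing singular value.
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    scores = centered @ vt[:n_components].T
    explained = s ** 2 / np.sum(s ** 2)
    return scores, explained[:n_components]


def reduce_and_concatenate(blocks, n_components=5):
    """Reduce each omics block separately, then join the scores into one samples x features matrix.

    All blocks must list the same samples in the same row order.
    """
    return np.hstack([pca_scores(x, n_components)[0] for x in blocks.values()])


rng = np.random.default_rng(42)
blocks = {"rna": rng.normal(size=(60, 200)), "proteome": rng.normal(size=(60, 40))}
print(reduce_and_concatenate(blocks).shape)  # (60, 10)
```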

Step 3: AI-Driven Data Integration and Model Training

- Action: Apply AI models to integrate the harmonized multi-omics data for pattern recognition.

- Model Selection:

- Graph Neural Networks (GNNs): Ideal for modeling biological networks (e.g., protein-protein interactions) perturbed by disease mutations [11].

- Multi-modal Transformers: Effective for fusing heterogeneous data types, such as MRI radiomics with transcriptomic data, to predict disease progression [11].

- Explainable AI (XAI) Frameworks: Utilize techniques like SHapley Additive exPlanations (SHAP) to interpret model outputs and clarify the contribution of specific features to the prediction [11] [26].

- Implementation: Train models on large-scale multi-omics repositories (e.g., The Cancer Genome Atlas, TCGA) using cloud-based platforms such as AWS HealthOmics and Amazon SageMaker for scalable computation [30].
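
SHAP builds on Shapley values from cooperative game theory: each feature's attribution is its average marginal contribution to the prediction over all orderings of features. The sketch below computes exact Shapley values by enumerating feature subsets, assuming that "absent" features are set to a single baseline vector (for example, the training mean). Its cost is exponential in the number of features, so it is meant only to show what SHAP approximates; the SHAP library itself uses explainers such as KernelSHAP and TreeSHAP and typically averages over a background dataset. The toy model is hypothetical.

```python
from itertools import combinations
from math import factorial


def exact_shapley(f, x, baseline):
    """Exact Shapley values of model f at input x relative to a baseline input.

    phi_i = sum over subsets S of the other features of
            |S|! (n - |S| - 1)! / n! * (v(S + {i}) - v(S)),
    where v(S) evaluates f with features in S taken from x and the rest from baseline.
    """
    n = len(x)

    def value(coalition):
        return f([x[k] if k in coalition else baseline[k] for k in range(n)])

    phi = []
    for i in range(n):
        others = [k for k in range(n) if k != i]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


# Hypothetical toy model: a linear expression term plus an interaction between two features.
def toy_model(z):
    return 2.0 * z[0] + 1.5 * z[1] * z[2]


print(exact_shapley(toy_model, x=[1.0, 1.0, 1.0], baseline=[0.0, 0.0, 0.0]))
```

Up to floating-point rounding, the linear term is attributed entirely to feature 0 and the interaction is split equally between features 1 and 2, and the attributions sum to the difference between the model output at `x` and at the baseline.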

Step 4: Biomarker Signature Identification and Validation

- Action: Extract and validate robust biomarker signatures from the AI model.

- Identification: Use the trained model to identify co-varying features across omics layers that stratify sample groups (e.g., responders vs. non-responders) [26] [25].

- Experimental Validation:

- Clinical Correlation: Correlate biomarker signatures with clinical outcomes such as treatment response, survival rates, and disease recurrence [26].
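
Correlating a signature with survival often starts by comparing Kaplan-Meier curves between signature-defined groups. A minimal product-limit estimator is sketched below, assuming right-censored data with event = 1 for an observed event and group labels (for example, "high" and "low" signature scores) supplied by the user. Formal comparison would typically use a log-rank test or a Cox model, as implemented in libraries such as lifelines. The example data are made up for illustration.

```python
def kaplan_meier(times, events):
    """Product-limit estimate: list of (event time, survival probability after that time)."""
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = removed = 0
        # Group all subjects sharing this time; censored subjects leave the risk set afterwards.
        while i < len(data) and data[i][0] == t:
            deaths += int(data[i][1])
            removed += 1
            i += 1
        if deaths:
            survival *= 1.0 - deaths / at_risk
            curve.append((t, survival))
        at_risk -= removed
    return curve


def kaplan_meier_by_group(times, events, groups):
    """Separate Kaplan-Meier curves for each biomarker-defined group."""
    curves = {}
    for g in sorted(set(groups)):
        members = [k for k, gk in enumerate(groups) if gk == g]
        curves[g] = kaplan_meier([times[k] for k in members], [events[k] for k in members])
    return curves


times = [3, 5, 8, 12, 4, 9, 15, 20]
events = [1, 1, 0, 1, 0, 1, 0, 0]
groups = ["high", "high", "high", "high", "low", "low", "low", "low"]
for group, curve in kaplan_meier_by_group(times, events, groups).items():
    print(group, [(t, round(s, 3)) for t, s in curve])
```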

Protocol for Network Integration and Pathway Analysis

This protocol details the procedure for mapping multi-omics data onto shared biochemical networks to gain mechanistic understanding.

Signaling Pathway Analysis Workflow

The diagram below outlines the process of deriving mechanistic insights from integrated multi-omics data through network and pathway analysis.

Step-by-Step Procedure

Step 1: Network Construction

- Action: Map analytes (genes, proteins, metabolites) from the integrated multi-omics dataset onto shared biochemical networks based on known interactions [25].

- Data Sources: Utilize prior knowledge from databases such as protein-protein interaction networks, transcription factor-target gene databases, and metabolic networks (e.g., KEGG, Reactome) [13].
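
Mapping measured analytes onto a prior-knowledge network, and letting each node's omics values be smoothed by its neighbours, can be sketched with an adjacency matrix and one graph-convolution step of the form popularized by Kipf and Welling, H = ReLU(D^-1/2 (A + I) D^-1/2 X W). The node names, interactions, feature values, and identity weight matrix below are hypothetical placeholders; in practice edges would come from resources such as KEGG or Reactome, and W would be learned during GNN training.

```python
import numpy as np


def build_adjacency(nodes, interactions):
    """Undirected adjacency matrix over measured analytes from a list of (a, b) interactions.

    Interactions involving analytes absent from `nodes` (unmeasured) are dropped.
    """
    index = {name: k for k, name in enumerate(nodes)}
    adj = np.zeros((len(nodes), len(nodes)))
    for a, b in interactions:
        if a in index and b in index and a != b:
            adj[index[a], index[b]] = adj[index[b], index[a]] = 1.0
    return adj


def gcn_layer(adj, features, weight):
    """One graph-convolution step: ReLU(D^-1/2 (A + I) D^-1/2 X W)."""
    a_hat = adj + np.eye(adj.shape[0])             # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))  # degrees are >= 1 thanks to self-loops
    norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.maximum(norm @ features @ weight, 0.0)


# Hypothetical analytes spanning three omics layers, with two measured values each.
nodes = ["gene_A", "protein_B", "metabolite_C"]
interactions = [("gene_A", "protein_B"), ("protein_B", "metabolite_C"), ("gene_A", "unmeasured_D")]
features = np.array([[2.0, 0.5], [1.0, 1.5], [0.0, 3.0]])
adj = build_adjacency(nodes, interactions)
print(gcn_layer(adj, features, np.eye(2)))
```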

Step 2: Integrative Pathway Analysis

- Action: Interweave omics profiles into a single dataset for higher-level analysis to identify dysregulated pathways.