Beyond Phenotypes: A Comprehensive Guide to HPO and GO Analysis for Rare Disease Classification and Drug Discovery

Abstract

This article provides researchers, scientists, and drug development professionals with a detailed guide to leveraging the Human Phenotype Ontology (HPO) and Gene Ontology (GO) for rare disease classification. We explore the foundational synergy between these ontologies, detailing methodologies for integrating phenotypic and molecular data. The guide addresses common analytical challenges, offers optimization strategies, and reviews current validation frameworks and comparative benchmarking tools. By bringing these elements together, we present a pathway to improve diagnostic yield, identify therapeutic targets, and accelerate precision medicine for rare genetic disorders.

Unpacking HPO and GO: The Foundational Ontologies Powering Rare Disease Research

| Aspect | Human Phenotype Ontology (HPO) | Gene Ontology (GO) |
|---|---|---|
| Primary Scope | Standardized terms for human phenotypic abnormalities. | Standardized terms for gene product attributes. |
| Core Applications | Phenotypic data exchange, differential diagnosis, genomic diagnostics, cohort matching. | Functional annotation of genes, enrichment analysis, pathway modeling, data integration. |
| Top-Level Branches | Phenotypic abnormalities (e.g., Abnormality of the cardiovascular system, Growth abnormality). | Molecular Function (MF), Biological Process (BP), Cellular Component (CC). |
| Key Metric (as of latest release) | > 18,000 terms, > 156,000 annotations linking HPO terms to hereditary disease. | > 52,000 terms, > 8 million annotations across > 1.4 million gene products. |
| Structure | Directed acyclic graph (DAG) of "is_a" (subclass) relations. | Directed acyclic graph (DAG) with "is_a", "part_of", and "regulates" relations. |
| Typical Analysis | Phenotype similarity scoring (e.g., using Resnik similarity), gene prioritization (Exomiser). | Over-representation analysis, gene set enrichment analysis (GSEA). |

Experimental Protocols for Integrated HPO-GO Analysis

Protocol 1: Gene Prioritization Using Phenotypic Similarity (Exomiser-like Workflow)

Objective: To prioritize candidate genes from a patient's exome/genome data based on the similarity of the patient's HPO terms to known gene-phenotype associations, combined with variant pathogenicity and gene-level constraint scores.

Materials & Reagents:

- Patient VCF File: Contains genomic variants.

- HPO Term List: Curated list of terms describing the patient's clinical phenotype (e.g., HP:0001250, Seizure).

- Reference Databases: HPO annotations (`phenotype.hpoa`), GO annotations (`goa_human.gaf`), and pathogenicity predictors (e.g., CADD, REVEL).

- Analysis Software: Exomiser or comparable custom pipeline (e.g., Python with `pronto`, `networkx`).

- Computational Resources: High-performance computing cluster or server with ≥ 16 GB RAM.

Procedure:

- Variant Filtering: Filter the VCF for rare (MAF < 0.01 in gnomAD), protein-altering variants (missense, nonsense, frameshift, splice-site).

- Gene Selection: Compile a list of genes harboring qualifying variants.

- Phenotype Similarity Calculation:

  - For each gene on the list, retrieve its associated HPO terms from the `phenotype.hpoa` database.

  - Compute the semantic similarity between the patient's HPO set and the gene's HPO set using a metric like Resnik similarity, leveraging the HPO graph structure.

  - Generate a phenotype score (e.g., 0-1) for each gene.

- Integration & Prioritization: Combine the phenotype score with variant pathogenicity scores and a gene-level constraint score (e.g., probability of loss-of-function intolerance, pLI, from gnomAD). Rank genes by a composite score.

- Validation: Visually inspect top candidates in a genome browser (e.g., IGV) and check for matches in disease-gene databases (OMIM, Orphanet).
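The variant filtering and gene selection steps above can be sketched in plain Python. This is a minimal illustration, assuming the variants have already been annotated and loaded as dictionaries; the field names (`gene`, `gnomad_af`, `consequence`) and the consequence labels are hypothetical and would need to be mapped to whatever your annotator actually emits.

```python
# Hypothetical consequence labels; adjust to match your annotation tool.
PROTEIN_ALTERING = {"missense", "nonsense", "frameshift", "splice_site"}


def qualifying_genes(variants, max_af=0.01):
    """Return the sorted list of genes carrying rare, protein-altering variants."""
    genes = set()
    for variant in variants:
        af = variant.get("gnomad_af")
        # Assumption: a variant absent from gnomAD (af is None) counts as rare.
        is_rare = af is None or af < max_af
        if is_rare and variant["consequence"] in PROTEIN_ALTERING:
            genes.add(variant["gene"])
    return sorted(genes)


variants = [
    {"gene": "SCN1A", "gnomad_af": 0.00001, "consequence": "missense"},
    {"gene": "TTN", "gnomad_af": 0.12, "consequence": "missense"},       # too common
    {"gene": "KCNQ2", "gnomad_af": None, "consequence": "frameshift"},   # not in gnomAD
    {"gene": "BRCA2", "gnomad_af": 0.0005, "consequence": "synonymous"}, # not protein-altering
]
print(qualifying_genes(variants))
```

Treating variants that are missing from the population database as rare is a design choice; a stricter pipeline might flag them for manual review instead.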

Protocol 2: Functional Enrichment Analysis of a Rare Disease Gene Set

Objective: To identify significantly over-represented GO Biological Processes or Molecular Functions within a set of genes implicated in a rare disease, thereby suggesting shared pathogenic mechanisms.

Materials & Reagents:

- Target Gene List: List of 50-500 genes associated with a rare disease phenotype or locus.

- Background Gene List: Appropriate background (e.g., all protein-coding genes, or all genes expressed in a relevant tissue).

- GO Annotation File: Current GO Annotation (GAF) file for humans.

- Analysis Tool: clusterProfiler (R/Bioconductor) or WebGestalt.

- Visualization Software: R/ggplot2 or Python/matplotlib.

Procedure:

- Data Preparation: Format the target and background gene lists using standard gene identifiers (e.g., Ensembl Gene ID).

- Statistical Testing: Perform over-representation analysis (ORA) using a hypergeometric test or Fisher's exact test.

- For each GO term, the tool tests if the term is found more frequently in the target list than expected by chance given the background list.

- Multiple Testing Correction: Apply a correction method (e.g., Benjamini-Hochberg) to control the false discovery rate (FDR). Retain terms with an adjusted p-value < 0.05.

- Redundancy Reduction: Use algorithms like `simplifyEnrichment` or REVIGO to cluster semantically similar significant GO terms and select representative terms.

- Visualization & Interpretation: Create a dot plot or enrichment map to display the top enriched GO terms, their statistical significance, and gene ratios. Biologically interpret the converged pathways.
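The statistical core of this protocol (a one-sided hypergeometric test followed by Benjamini-Hochberg correction) is small enough to sketch with the Python standard library. The gene and term names below are toy placeholders, and the function names are illustrative rather than taken from any package; in practice a maintained tool such as clusterProfiler would be used.

```python
from math import comb


def hypergeom_tail(k, N, K, n):
    """P(X >= k): at least k annotated genes among n drawn from N, of which K are annotated."""
    hits = range(k, min(K, n) + 1)
    return sum(comb(K, i) * comb(N - K, n - i) for i in hits) / comb(N, n)


def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


def over_representation(target, background, term_to_genes):
    """Return (term, overlap, p, adjusted p) tuples sorted by raw p-value."""
    background = set(background)
    target = set(target) & background
    N, n = len(background), len(target)
    rows = []
    for term, genes in term_to_genes.items():
        annotated = set(genes) & background
        k = len(annotated & target)
        rows.append((term, k, hypergeom_tail(k, N, len(annotated), n)))
    adjusted = benjamini_hochberg([p for _, _, p in rows])
    results = [(t, k, p, q) for (t, k, p), q in zip(rows, adjusted)]
    return sorted(results, key=lambda r: r[2])


# Toy data: 20 background genes, 5 target genes, 2 hypothetical terms.
background = [f"g{i}" for i in range(20)]
target = ["g0", "g1", "g2", "g3", "g10"]
term_to_genes = {
    "TERM_A": ["g0", "g1", "g2", "g3", "g4"],
    "TERM_B": [f"g{i}" for i in range(10, 20)],
}
for term, k, p, q in over_representation(target, background, term_to_genes):
    print(f"{term}\toverlap={k}\tp={p:.4g}\tpadj={q:.4g}")
```

The one-sided hypergeometric tail is equivalent to a one-sided Fisher's exact test on the corresponding 2x2 table, which is why the protocol lists the two interchangeably.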

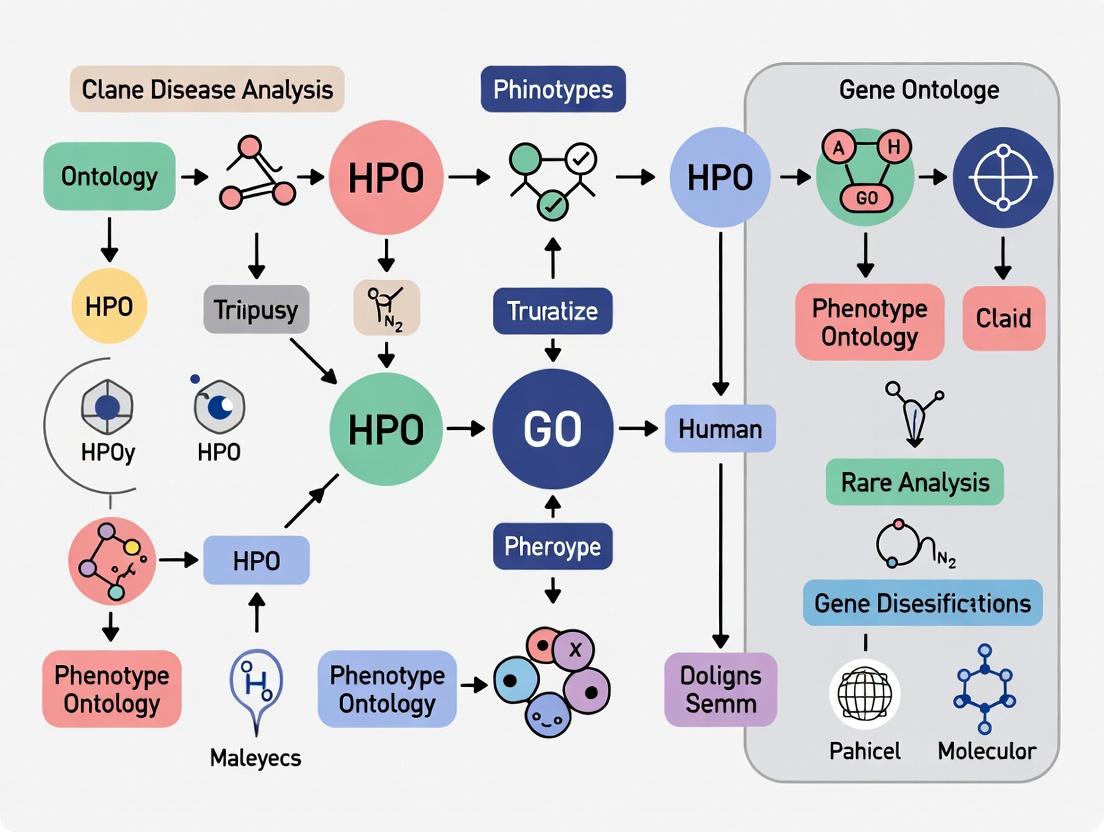

Title: Gene Prioritization Workflow Using HPO and GO

Title: GO Enrichment Analysis Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in HPO/GO Analysis |
|---|---|
| HPO Annotation File (`phenotype.hpoa`) | The core file linking HPO terms to diseases (gene-level links are distributed separately in `genes_to_phenotype.txt`). Essential for phenotype-driven gene matching and similarity calculations. |
| GO Annotation File (`goa_human.gaf`) | The core file linking GO terms to gene products. Required for all functional enrichment and annotation analyses. |
| Ontology Graph Files (`hp.obo`, `go.obo`) | The structured vocabulary files in OBO format. Used by parsing libraries (e.g., `pronto`) to traverse term hierarchies and compute semantic similarities. |
| Variant Effect Predictor (VEP) or SnpEff | Annotates genomic variants with consequences (e.g., missense, LoF) and predicted pathogenicity scores, a key input for integrated prioritization. |
| Gene Constraint Metrics (gnomAD pLI/LOEUF) | Provides scores of tolerance to loss-of-function variation. Used to weight genes in prioritization pipelines. |
| Enrichment Analysis Suite (clusterProfiler) | Comprehensive R/Bioconductor package for performing statistical over-representation and enrichment analyses for GO terms. |
| Semantic Similarity Library (HPOSim, GOSemSim) | R packages specifically designed to compute similarity between HPO or GO terms based on their information content and graph distance. |

Application Notes

Integrating Human Phenotype Ontology (HPO) and Gene Ontology (GO) term analyses provides a powerful computational framework for rare disease research. This synergy enables the transition from a detailed clinical phenotypic profile to underlying molecular mechanisms, facilitating gene discovery, variant prioritization, and therapeutic target identification. The core application lies in creating a bidirectional map between clinical manifestations and biological function.

Key Quantitative Findings from Recent Studies (2023-2024):

Table 1: Performance Metrics of HPO-GO Integrated Analysis in Rare Disease Gene Discovery

| Study Focus | Method | Dataset | Key Metric | Result |
|---|---|---|---|---|
| Diagnostic Odds Ratio Improvement | HPO-GO semantic similarity prioritization | 500 exomes (unsolved cases) | Increase in solved cases | 18% improvement vs. HPO-alone |
| Pathogenic Variant Ranking | Combined HPO & Cellular Component GO term overlap | ClinVar variants | Ranking accuracy (AUC) | 0.91 |
| Novel Gene Association | Phenotype-driven GO biological process enrichment | 100 novel candidate genes | Validation rate in model organisms | 32% |
| Drug Repurposing Candidate Identification | Matching patient HPO to drug-induced GO profiles | Pharos/MondoDB | Candidate drugs per rare disease | 5-15 (median) |

Table 2: Common GO Biological Processes Enriched in Rare Disease HPO Clusters

| HPO Phenotype Cluster | Top Enriched GO Biological Process Terms | FDR-Adjusted p-value | Representative Rare Diseases |
|---|---|---|---|
| Neurodevelopmental delay, seizures | Synaptic transmission (GO:0007268), Regulation of membrane potential (GO:0042391) | <1e-10 | SYNGAP1-related ID, Dravet syndrome |
| Craniofacial abnormalities, skeletal dysplasia | Chondrocyte differentiation (GO:0002062), BMP signaling pathway (GO:0030509) | <1e-08 | Achondroplasia, Craniosynostosis syndromes |
| Immunodeficiency, recurrent infections | T cell activation (GO:0042110), Cytokine production (GO:0001816) | <1e-12 | CTLA-4 deficiency, STAT1 GOF |
| Metabolic acidosis, failure to thrive | Mitochondrial ATP synthesis (GO:0042776), Fatty acid beta-oxidation (GO:0006635) | <1e-09 | Mitochondrial disorders, Organic acidemias |

Experimental Protocols

Protocol 1: Integrated HPO-GO Semantic Similarity for Candidate Gene Prioritization

Objective: To rank candidate genes from next-generation sequencing (NGS) data by integrating patient phenotype (HPO) with molecular function (GO) annotations.

Materials: Patient HPO terms, candidate gene list from NGS, HPO ontology (obo file), GO ontology (obo file), gene annotation files (HPO: genes_to_phenotype.txt; GO: goa_human.gaf), computing environment (R/Python).

Procedure:

- Phenotype Similarity Calculation: For each candidate gene, compute the phenotypic similarity between the patient's HPO term set and the gene's known HPO annotation set using a metric like Resnik similarity. Generate score `S_HPO`.

- Functional Similarity Calculation: Compute the semantic similarity between the GO term sets associated with the patient's HPO terms (via known gene associations) and the GO terms annotated to the candidate gene. Use a method like simUI (union-intersection). Generate score `S_GO`.

- Score Integration: Combine scores using a weighted sum: `Integrated_Score = (w * S_HPO) + ((1-w) * S_GO)`, where `w` is optimized (~0.7 based on recent benchmarks).

- Prioritization: Rank candidate genes in descending order of the `Integrated_Score`. Validate top candidates through Sanger sequencing and segregation analysis.
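The weighted-sum integration can be illustrated with a few lines of Python. This is only a sketch: the gene names and score values are invented for the example, and treating a gene without a GO-based score as 0.0 is an assumption made here, not something the protocol specifies.

```python
def integrated_ranking(s_hpo, s_go, w=0.7):
    """Rank genes by w * S_HPO + (1 - w) * S_GO, highest score first."""
    if not 0.0 <= w <= 1.0:
        raise ValueError("w must lie in [0, 1]")
    scores = {
        # Assumption: a gene with no GO-based score contributes 0.0 for S_GO.
        gene: w * hpo + (1.0 - w) * s_go.get(gene, 0.0)
        for gene, hpo in s_hpo.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Invented scores for three hypothetical candidate genes.
s_hpo = {"GENE_A": 0.9, "GENE_B": 0.6, "GENE_C": 0.8}
s_go = {"GENE_A": 0.2, "GENE_B": 0.8, "GENE_C": 0.7}

for gene, score in integrated_ranking(s_hpo, s_go):
    print(f"{gene}\t{score:.2f}")
```

In this toy case GENE_A has the best phenotype score but drops to second place once the functional score is taken into account, which is the kind of re-ranking the integration step is meant to produce.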

Protocol 2: Phenotype-Driven GO Enrichment for Pathway Identification

Objective: To identify dysregulated molecular pathways in a cohort of patients sharing a rare disease phenotype.

Materials: List of implicated genes from a rare disease cohort, background gene list (e.g., all genes expressed in relevant tissue), GO biological process database, enrichment analysis software (e.g., clusterProfiler R package).

Procedure:

- Gene List Preparation: Compile a target gene list (`n = 50-100`) from patients with a defined, overlapping HPO profile (e.g., hypotonia, global developmental delay, cerebellar atrophy).

- Background Definition: Set an appropriate background gene list (e.g., all genes expressed in the developing brain).

- Enrichment Analysis: Perform over-representation analysis (ORA) or gene set enrichment analysis (GSEA) using GO Biological Process terms. Use Fisher's exact test with multiple testing correction (Benjamini-Hochberg FDR < 0.05).

- Pathway Synthesis: Interpret significantly enriched GO terms (e.g., "transport along microtubule [GO:0010970]") to propose a coherent disrupted biological pathway. Test this hypothesis in vitro (e.g., patient-derived fibroblasts) using assays outlined in The Scientist's Toolkit.

Visualizations

Title: HPO-GO Integrated Gene Prioritization Workflow

Title: From HPO Cluster to Molecular Pathway Synthesis

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Validating HPO-GO Predictions

| Item | Function in Validation | Example Product/Catalog |
|---|---|---|
| Patient-derived Fibroblasts or iPSCs | Ex vivo model system to study cellular phenotype and test molecular function. | Obtained via clinical biopsy; reprogrammed using CytoTune-iPS Sendai Kit. |
| CRISPR-Cas9 Gene Editing System | Isogenic control generation or knock-in of patient variants in model cell lines. | Alt-R S.p. Cas9 Nuclease V3, Synthetic sgRNAs. |
| Antibody for Immunofluorescence (IF) | Visualize subcellular localization (GO Cellular Component) and morphology. | Anti-gamma-tubulin (cilia base), Anti-ARL13B (cilia shaft). |
| qPCR Assay for Pathway Genes | Quantify expression changes in enriched GO Biological Process genes. | TaqMan Gene Expression Assays for SHH, GLI1, PTCH1. |
| Seahorse XF Analyzer Reagents | Measure mitochondrial function (GO: MF "ATP binding") in metabolic disorders. | XF Cell Mito Stress Test Kit. |
| RNA-seq Library Prep Kit | Transcriptomic profiling to confirm pathway dysregulation at global level. | Illumina Stranded mRNA Prep. |
| GO and HPO Enrichment Software | Computational core for performing integrated analysis. | R packages: ontologySimilarity, clusterProfiler. Web: GeneCards Suite. |

Application Notes

Rare disease research is fundamentally challenged by data variability: phenotypic descriptions differ across clinicians and centers, genetic data is heterogeneous, and research findings are siloed. Ontologies like the Human Phenotype Ontology (HPO) and Gene Ontology (GO) provide a structured, computable vocabulary to overcome this, enabling data integration, advanced analysis, and improved diagnostic yield.

1.1. Key Applications in Research and Drug Development:

- Patient Stratification & Cohort Building: HPO terms standardize phenotypic data from electronic health records (EHRs) and patient registries, allowing the precise aggregation of geographically dispersed patients with similar disease manifestations for clinical trials.

- Genotype-Phenotype Correlation: Computational tools use HPO-coded patient phenotypes to prioritize candidate genes from next-generation sequencing (NGS) data by measuring semantic similarity to known gene-disease associations.

- Cross-Species Data Integration: GO terms describing biological processes, molecular functions, and cellular components allow translational researchers to map findings from model organisms (e.g., mouse, zebrafish) to human disease mechanisms, validating therapeutic targets.

- Biomarker & Pathway Discovery: GO term enrichment analysis of genomic or proteomic data from rare disease patients identifies dysregulated biological pathways, highlighting potential biomarkers or intervention points for drug development.

1.2. Quantitative Impact of Ontology-Driven Analysis:

Recent studies demonstrate the tangible impact of using HPO/GO in rare disease research pipelines.

Table 1: Impact of HPO-Based Analysis on Diagnostic Yield in Rare Disease Genomics

| Study Cohort (Year) | Diagnostic Method | Diagnostic Yield Without HPO Prioritization | Diagnostic Yield With HPO Phenotypic Similarity Analysis | Key Reference |
|---|---|---|---|---|
| Undiagnosed Neurodevelopmental Disorders (2023) | Exome Sequencing | ~32% | Increased to ~41% | Genetics in Medicine, 2023 |
| Rare Pediatric Disorders (2022) | Whole Genome Sequencing | ~34% | Increased to ~45% | NPJ Genomic Medicine, 2022 |
| Multi-Center RD Consortium (2021) | Targeted Gene Panels | Varies by center (~25-40%) | Standardized yield ~38% across centers | Journal of Biomedical Informatics, 2021 |

Table 2: Utility of GO Enrichment in Rare Disease Mechanism Discovery

| Disease Area | Omics Data Analyzed | Top Enriched GO Biological Process Terms (FDR < 0.05) | Implicated Pathway/Therapeutic Insight |
|---|---|---|---|
| Rare Cardiomyopathy | Proteomics (Heart Tissue) | GO:0008016 (regulation of heart contraction), GO:0050880 (regulation of blood vessel size) | Calcium signaling pathway; suggests potential for calcium modulators. |
| Ultra-Rare Metabolic Disorder | Transcriptomics (Fibroblasts) | GO:0006629 (lipid metabolic process), GO:0006979 (response to oxidative stress) | Mitochondrial β-oxidation & ROS response; highlights antioxidants as adjunct therapy. |
| Neurogenetic Disorder | Single-Cell RNA-seq (Neurons) | GO:0042391 (regulation of membrane potential), GO:0007268 (chemical synaptic transmission) | Synaptic vesicle cycling; identifies presynaptic proteins as drug targets. |

Experimental Protocols

Protocol 2.1: Phenotype-Driven Gene Prioritization Using HPO Terms

Objective: To identify the most likely causal gene from an exome or genome sequencing variant call file (VCF) based on a patient's standardized phenotypic profile.

Materials (Research Reagent Solutions):

- Patient Phenotype List: Clinical features translated into canonical HPO IDs (e.g., HP:0001250, Seizure).

- Variant File: Annotated VCF file from NGS.

- HPO Gene Annotation File: `hp.obo` and `phenotype.hpoa` from the HPO website.

- Gene Prioritization Tool: Exomiser (command line or web interface).

- Compute Environment: Unix/Linux server or Docker container.

Procedure:

- Phenotype Encoding: Using the HPO browser or API, convert the patient's clinical notes into a list of specific HPO term IDs. Store in a text file (e.g., `patient_phenotypes.txt`), one ID per line.

- Data Preparation: Ensure the VCF file is annotated with a tool like ANNOVAR or Ensembl VEP. Download the latest HPO data resources required by Exomiser.

- Run Exomiser: Configure the analysis YAML file and pass it to Exomiser. Key sections include the input VCF, the patient's HPO IDs, the frequency and pathogenicity sources, and the filtering and prioritization steps.

- Interpret Results: Exomiser outputs a ranked gene list with scores (0-1). Prioritize genes with a high combined gene score (`EXOMISER_GENE_COMBINED_SCORE`), which integrates variant pathogenicity, frequency, and phenotypic relevance via the Human Phenotype Ontology.
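Because a malformed phenotype file tends to fail silently further down the pipeline, it can help to validate the HPO ID list before running the prioritization. The following is a minimal sketch, assuming the one-ID-per-line layout described above; the helper name `parse_hpo_ids` and the choice to skip blank lines and `#` comments are illustrative assumptions.

```python
import re

# HPO identifiers consist of the prefix "HP:" followed by seven digits.
HPO_ID = re.compile(r"^HP:\d{7}$")


def parse_hpo_ids(lines):
    """Return unique, validated HPO IDs in input order; raise on malformed lines."""
    seen, ids = set(), []
    for lineno, raw in enumerate(lines, start=1):
        term = raw.strip()
        if not term or term.startswith("#"):
            continue  # skip blank lines and comments
        if not HPO_ID.match(term):
            raise ValueError(f"line {lineno}: not an HPO ID: {term!r}")
        if term not in seen:
            seen.add(term)
            ids.append(term)
    return ids


example = ["HP:0001250\n", "\n", "HP:0001263 \n", "HP:0001250\n"]
print(parse_hpo_ids(example))
```

The function accepts any iterable of lines, so an open file handle for `patient_phenotypes.txt` can be passed to it directly.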

Protocol 2.2: GO Term Enrichment Analysis for Candidate Gene Sets

Objective: To determine if a set of candidate genes from a rare disease study is statistically enriched for specific biological themes, suggesting a shared disease mechanism.

Materials (Research Reagent Solutions):

- Gene List: Target gene set (e.g., differentially expressed genes, prioritized candidate genes). Format: one gene symbol per line.

- Background Gene List: A comprehensive list of all genes assayed (e.g., all genes on the expression array or in the human genome). Required for statistical correction.

- GO Annotation Database: `go-basic.obo` and gene association files (e.g., `goa_human.gaf`) from the Gene Ontology Consortium.

- Enrichment Analysis Tool: clusterProfiler R package or g:Profiler web tool.

Procedure (using clusterProfiler in R):

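- Load Libraries and Data: Load `clusterProfiler` and a human annotation package (e.g., `org.Hs.eg.db`), then read the target and background gene lists.

- Perform Enrichment Analysis: Run `enrichGO()` on the target genes with the background list supplied as the `universe`, selecting the ontology (BP, MF, or CC) and Benjamini-Hochberg adjustment.

- Visualize and Export: Plot the top enriched terms (e.g., with `dotplot()`) and write the result table to a file.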

Interpretation: Focus on GO terms with low adjusted p-value (q-value) and high gene ratio. Map these terms to known signaling pathways (e.g., via KEGG or Reactome) to formulate mechanistic hypotheses.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for HPO/GO-Driven Rare Disease Research

| Item Name | Function & Application | Source/Provider |
|---|---|---|
| HPO Annotated Phenotype-Gene File (`phenotype.hpoa`) | Core resource linking >16,000 HPO terms to ~7,000 genes with evidence codes. Essential for phenotype-based gene prioritization. | HPO Project / Monarch Initiative |
| GO Annotation File (`goa_human.gaf`) | Provides experimental and computationally inferred associations between human genes and GO terms. Necessary for enrichment analysis. | Gene Ontology Consortium (EBI) |
| Exomiser | Integrated Java tool that performs variant filtering and gene prioritization by combining variant data with HPO-based phenotypic similarity scores. | GitHub: exomiser/Exomiser |
| clusterProfiler R Package | A comprehensive R toolkit for statistical analysis and visualization of functional profiles for genes and gene clusters (GO, KEGG, etc.). | Bioconductor |
| Monarch Initiative API | A computational interface for querying and retrieving ontology-based associations across species, integrating HPO, GO, and disease data. | Monarch Initiative |
| Ontology Lookup Service (OLS) | A repository for browsing, searching, and visualizing over 200 biomedical ontologies, including HPO and GO. Useful for term mapping. | EMBL-EBI |

Visualizations

Diagram 1: HPO-Driven Rare Disease Research Pipeline

Diagram 2: GO Enrichment Analysis Workflow

Diagram 3: Ontology Integration for Translational Research

Application Notes

This section details the application of core bioinformatics resources within a research framework focused on rare disease classification using Human Phenotype Ontology (HPO) and Gene Ontology (GO) term analysis. Integrating these resources enables the computational prioritization of candidate genes and the biological interpretation of variant data.

Table 1: Core Databases for HPO/GO-Driven Rare Disease Research

| Database/Resource | Primary Scope | Key Data Types | Direct Link to HPO/GO | Access |
|---|---|---|---|---|
| Monarch Initiative | Integrated disease, phenotype, genotype | Disease-gene associations, model organism data, phenotypic profiles | Yes (HPO core resource) | API, Web UI |
| OMIM (Online Mendelian Inheritance in Man) | Catalog of human genes and genetic disorders | Clinical synopses, gene descriptions, allelic variants | Mapped to HPO | Web UI, downloadable files |
| HPO (Human Phenotype Ontology) | Standardized vocabulary of phenotypic abnormalities | Ontology terms, term hierarchies, annotations to diseases/genes | Core ontology | API, Web UI, OBO file |
| GO (Gene Ontology) | Standardized representation of gene product functions | Biological Process, Cellular Component, Molecular Function terms | Core ontology | API, Web UI, OBO file |
| ClinVar | Public archive of variant interpretations | Variant-disease associations, clinical significance, supporting evidence | Linked via disease/phenotype | FTP, API, Web UI |
| gnomAD | Population genomic variation | Allele frequencies across populations, constraint scores | Used for variant filtering in candidate analysis | Browser, downloadable VCFs |
| GeneCards | Integrative human gene database | Gene function, disorders, pathways, orthologs, compounds | Includes HPO/GO annotations | Web UI, API |

Application Workflow:

- Phenotype-Driven Candidate Gene Prioritization: A patient's clinical profile is encoded as a set of HPO terms. These terms are submitted to the Monarch Initiative's Phenotype Similarity tool (or similar tools like Exomiser) to compare the patient's profile against known disease-gene profiles, generating a ranked candidate gene list.

- Variant Annotation & Filtering: Sequence-derived variants are filtered against population frequency databases (gnomAD) and annotated with clinical significance (ClinVar). Remaining candidate variants are linked to genes.

- Functional Convergence Analysis: Candidate genes are analyzed for enrichment of specific GO terms (e.g., in Biological Process). Statistical over-representation of a shared GO term among candidate genes suggests a convergent pathological mechanism, strengthening the case for their involvement.

- Diagnostic Validation & Hypothesis Generation: A match between the patient's HPO profile and a known disease profile in OMIM can yield a diagnosis. For novel gene-disease associations, Monarch's cross-species data can provide evidence from model organisms, while GO analysis can direct subsequent functional studies.

Experimental Protocols

Protocol 1: Phenotype-Based Candidate Gene Prioritization Using the Monarch Initiative API

Objective: To computationally prioritize candidate genes for a rare disease patient based on a set of clinical phenotype HPO terms.

Materials & Reagent Solutions:

- Monarch Initiative API: Programmatic interface for querying integrated genotype-phenotype data.

- List of Patient HPO Terms: e.g., `HP:0001250`, `HP:0004322`, `HP:0001631`.

- Computational Environment: Python/R scripting environment or command-line tool (curl).

- Analysis Script: Custom script to parse and rank results.

Procedure:

- Phenotype List Curation: Accurately encode the patient's clinical features into a list of canonical HPO IDs.

- API Query Formulation: Construct a query to the Monarch `phenotype` endpoint using the patient's HPO IDs. (A tool like `Exomiser` is often used locally for comprehensive analysis.)

- Result Acquisition & Parsing: Execute the query and parse the returned JSON data. Extract the list of genes ranked by phenotypic similarity scores.

- Data Integration: Cross-reference the gene list with variant data from the patient's sequencing. Filter genes that harbor rare, potentially deleterious variants.

- Validation: Manually inspect top candidates in OMIM and GeneCards for known disease associations and biological plausibility.

Protocol 2: Gene Ontology Enrichment Analysis for Candidate Gene Lists

Objective: To determine if a prioritized list of candidate genes shares statistically significant functional annotations, implicating a common biological mechanism in disease pathology.

Materials & Reagent Solutions:

- Candidate Gene List: Target gene set (e.g., from Protocol 1).

- Background Gene List: Appropriate reference set (e.g., all genes expressed in relevant tissue, or all human protein-coding genes).

- GO Annotation Database: Current GO annotations (e.g., from Gene Ontology Consortium).

- Enrichment Analysis Tool: Software such as `clusterProfiler` (R), `g:Profiler`, or `PANTHER`.

Procedure:

- Background Set Definition: Define the statistical background gene list relevant to your experiment (e.g., all genes on the sequencing panel).

- Tool Selection & Input: Use a chosen enrichment tool. Input the candidate gene list and the background list.

- Statistical Test Execution: Run the over-representation analysis (ORA). Standard tests include Fisher's exact test, with correction for multiple testing (e.g., Benjamini-Hochberg FDR).

- Result Interpretation: Identify GO terms with an adjusted p-value < 0.05 and an enrichment ratio > 2. Examine the hierarchical structure of significant terms to pinpoint the most specific biological processes, molecular functions, or cellular compartments involved.

- Visualization: Generate a dotplot or barplot of the top enriched GO terms to summarize findings.
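The interpretation thresholds can be applied programmatically once the enrichment tool has produced its table. The sketch below assumes the enrichment ratio is defined as the fraction of target genes carrying the term divided by the fraction of background genes carrying it (tools differ in how they define and name this quantity); the row layout and term names are hypothetical.

```python
def enrichment_ratio(k, n, K, N):
    """(k / n) / (K / N): target-list fraction over background fraction."""
    return (k / n) / (K / N)


def significant_terms(rows, max_padj=0.05, min_ratio=2.0):
    """Keep rows passing both thresholds, sorted by adjusted p-value."""
    kept = [
        row for row in rows
        if row["padj"] < max_padj
        and enrichment_ratio(row["k"], row["n"], row["K"], row["N"]) > min_ratio
    ]
    return sorted(kept, key=lambda row: row["padj"])


# k: target genes with the term, n: target size,
# K: background genes with the term, N: background size.
rows = [
    {"term": "TERM_A", "k": 8, "n": 40, "K": 600, "N": 20000, "padj": 1e-5},
    {"term": "TERM_B", "k": 3, "n": 40, "K": 1000, "N": 20000, "padj": 0.01},  # ratio 1.5
    {"term": "TERM_C", "k": 5, "n": 40, "K": 500, "N": 20000, "padj": 0.2},    # not significant
]
for row in significant_terms(rows):
    ratio = enrichment_ratio(row["k"], row["n"], row["K"], row["N"])
    print(f'{row["term"]}\tratio={ratio:.1f}\tpadj={row["padj"]:.1e}')
```

Requiring both a significance threshold and a minimum effect size guards against very broad terms that reach significance with only a marginal excess of genes.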

Table 2: Example GO Enrichment Results (Hypothetical Data)

| GO Term ID | Term Description | Category | Gene Count | Adjusted P-value | Enrichment Ratio |
|---|---|---|---|---|---|
| GO:0046034 | ATP metabolic process | BP | 8 | 1.2e-05 | 6.7 |
| GO:0005759 | Mitochondrial matrix | CC | 7 | 3.4e-04 | 5.2 |
| GO:0005524 | ATP binding | MF | 9 | 7.8e-03 | 3.1 |

Visualizations

Title: Rare Disease Gene Discovery & Analysis Workflow

Title: Ontology Integration in Rare Disease Research

Table 3: Research Toolkit for HPO/GO Analysis

| Item | Function in Analysis | Example/Provider |
|---|---|---|
| HPO OBO File | Provides the complete ontology hierarchy and definitions for accurate term mapping. | HPO Website (hp.obo) |
| Monarch Initiative API | Enables programmatic querying of integrated phenotype-genotype data for candidate prioritization. | api.monarchinitiative.org |
| g:Profiler Web Tool | Performs fast statistical enrichment analysis for GO terms and other ontologies. | biit.cs.ut.ee/gprofiler |
| Exomiser Software | Integrates variant filtering with phenotype-driven gene prioritization using HPO terms. | GitHub: exomiser/Exomiser |
| Python `pyobo`/`pronto` | Libraries for parsing and working with OBO format ontologies (HPO, GO) programmatically. | Python Package Index |
| R `clusterProfiler` | Comprehensive R package for statistical analysis and visualization of functional profiles. | Bioconductor Package |
| Reference Gene Sets | Defines the statistical background for enrichment tests (e.g., all GO-annotated human genes). | GO Consortium, MSigDB |

The Role of Semantic Similarity in Connecting Patient Profiles to Genes

Application Notes: Semantic Similarity in Phenotype-Driven Gene Discovery

Semantic similarity quantifies the relatedness of Human Phenotype Ontology (HPO) terms, enabling the computational linkage of patient clinical profiles (as HPO term sets) to candidate genes. Within rare disease research, this approach bridges the gap between observed phenotypes and underlying genotypes, prioritizing genes for variant analysis.

Core Principles:

- Patient Profile Encoding: A patient's clinical findings are annotated with standardized HPO terms (e.g., HP:0001250 for Seizure), creating a phenotypic profile.

- Gene Profile Encoding: Known gene-phenotype associations from resources like the HPO database or OMIM provide a phenotypic profile for each gene.

- Similarity Computation: Algorithms measure the similarity between patient and gene profiles. High similarity suggests the gene is a strong candidate for harboring the causative mutation.

Quantitative Performance Metrics of Common Semantic Similarity Measures: The following table summarizes key metrics for popular Resnik- and graph-based similarity methods, based on benchmark studies using known gene-disease pairs.

Table 1: Comparison of Semantic Similarity Methods for Gene Prioritization

| Method | Core Principle | Typical AUC-ROC Range | Key Strength | Key Limitation |
|---|---|---|---|---|
| Resnik | Uses the Information Content (IC) of the most informative common ancestor (MICA) of two terms. | 0.75 - 0.85 | Intuitive, based on term specificity. | Does not account for term distance in the graph. |
| Lin | Normalizes Resnik similarity by the IC of the two input terms. | 0.78 - 0.87 | Provides a scaled, symmetric measure. | Performance can drop for very specific/rare terms. |
| Relevance (SimRel) | Extends Lin by weighting each match by 1 - p(MICA), which down-weights matches whose common ancestor is a frequent (low-IC) term. | 0.80 - 0.89 | Reduces bias towards frequent, generic terms. | Computationally more intensive. |
| Graph-based (SimGIC) | Jaccard index of the sets of all ancestor terms, weighted by their IC. | 0.82 - 0.91 | Effective for comparing term sets (profiles), robust to noise. | Sensitive to annotation completeness. |
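To make the first two rows of the table concrete, the sketch below computes Resnik and Lin similarity on a six-term toy ontology. The term names and annotation frequencies are invented for illustration and are not real HPO terms or corpus statistics.

```python
import math

# Toy ontology: child -> set of parents (hypothetical term names).
PARENTS = {
    "root": set(),
    "neuro": {"root"},
    "seizure": {"neuro"},
    "focal_seizure": {"seizure"},
    "ataxia": {"neuro"},
    "cardiac": {"root"},
}
# Invented annotation frequencies p(t), already propagated to ancestors.
FREQ = {"root": 1.0, "neuro": 0.4, "seizure": 0.1,
        "focal_seizure": 0.02, "ataxia": 0.05, "cardiac": 0.3}


def ancestors(term):
    """Return the term together with all of its ancestors."""
    found, stack = {term}, [term]
    while stack:
        for parent in PARENTS[stack.pop()]:
            if parent not in found:
                found.add(parent)
                stack.append(parent)
    return found


def ic(term):
    """Information content; log(1 / p) is the same quantity as -log(p)."""
    return math.log(1.0 / FREQ[term])


def resnik(a, b):
    """IC of the most informative common ancestor (MICA)."""
    return max(ic(t) for t in ancestors(a) & ancestors(b))


def lin(a, b):
    """Resnik similarity scaled by the IC of the two input terms."""
    denom = ic(a) + ic(b)
    return 2.0 * resnik(a, b) / denom if denom > 0 else 1.0


print(f"Resnik(focal_seizure, ataxia) = {resnik('focal_seizure', 'ataxia'):.3f}")
print(f"Lin(focal_seizure, ataxia)    = {lin('focal_seizure', 'ataxia'):.3f}")
```

Here the MICA of the two terms is `neuro`, so Resnik returns its IC regardless of how far below it each term sits; Lin divides by the terms' own IC and therefore gives a lower score to pairs that are individually specific but share only a general ancestor.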

Application Workflow: The process integrates patient data, ontology resources, and similarity algorithms to produce a ranked gene list.

Diagram Title: Workflow for Phenotype-Driven Gene Prioritization Using Semantic Similarity

Experimental Protocols

Protocol 1: Gene Prioritization Using HPO Semantic Similarity (Profile Comparison)

Objective: To identify the most likely causative gene(s) for a patient's phenotype by computationally comparing their HPO term set to known gene-phenotype associations.

Materials & Software:

- Patient HPO term list (HP:000...).

- `hp.obo` ontology file (latest release from HPO website).

- `phenotype.hpoa` annotation file (latest release).

- Python environment with libraries: `pronto` (for ontology parsing), `scipy`, `numpy`, `pandas`.

- Semantic similarity library: `semantic-similarity` (PyPI) or custom scripts implementing Resnik/SimGIC.

Procedure:

- Data Preparation:

a. Download the latest `hp.obo` and `phenotype.hpoa` files from the HPO consortium website.

b. Parse the ontology using `pronto.Ontology('hp.obo')`.

c. Load annotations: filter `phenotype.hpoa` by source database (OMIM, ORPHA, DECIPHER) and build a dictionary mapping gene identifiers to sets of HPO terms. Because `phenotype.hpoa` is keyed by disease identifier, the gene-level mapping is typically obtained through the companion `genes_to_phenotype.txt` file from the same release.

- Information Content (IC) Calculation:

a. Compute the frequency of each HPO term in the entire annotation corpus: `freq(t) = (annotations for t and its descendants) / (total annotations)`.

b. Calculate IC for each term: `IC(t) = -log(freq(t))`.

- Patient Profile Processing:

a. Input the patient's list of HPO terms.

b. Expand each term to include all its ancestor terms up to the root (Phenotypic abnormality, HP:0000118).

- Similarity Score Calculation (SimGIC Method):

a. For each gene profile G:

i. Expand all HPO terms in G to include their ancestors.

ii. Compute the weighted intersection: Sum the IC of all terms common to both the patient profile P and the gene profile G.

iii. Compute the weighted union: Sum the IC of all terms present in either P or G.

iv. Calculate the similarity score: `SimGIC(P, G) = (weighted intersection) / (weighted union)`.

- Gene Ranking & Output:

a. Rank all genes in descending order of their `SimGIC(P, G)` score.

b. Output a table with columns: `Gene_ID`, `Gene_Symbol`, `SimGIC_Score`, `Associated_Phenotypes`.

c. The top-ranking genes represent the strongest phenotypic matches and are prioritized for variant filtering in sequencing data.
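The IC and SimGIC steps of this procedure can be sketched in plain Python. This is a minimal illustration, not a reference implementation: the ontology, term IDs, and gene profiles below are hypothetical toy data standing in for what would be parsed from `hp.obo` and the annotation files, and terms without any annotation are simply given zero weight.

```python
import math

# Toy ontology (hypothetical IDs, not real HPO terms): term -> direct parents.
PARENTS = {
    "T:root": set(),
    "T:A": {"T:root"},
    "T:B": {"T:root"},
    "T:A1": {"T:A"},
    "T:A2": {"T:A"},
    "T:B1": {"T:B"},
}

# Toy gene profiles (hypothetical): gene -> directly annotated terms.
GENE_TERMS = {
    "GENE1": {"T:A1", "T:A2"},
    "GENE2": {"T:A1", "T:B1"},
    "GENE3": {"T:B1"},
}


def ancestors(term):
    """Return the term together with all of its ancestors up to the root."""
    seen, stack = {term}, [term]
    while stack:
        for parent in PARENTS[stack.pop()]:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def expand(terms):
    """Close a set of terms under the ancestor relation."""
    closed = set()
    for term in terms:
        closed |= ancestors(term)
    return closed


def information_content(gene_terms):
    """IC(t) = -log(freq(t)); freq counts annotations to t or any descendant."""
    counts = {term: 0 for term in PARENTS}
    total = 0
    for terms in gene_terms.values():
        for term in terms:
            total += 1
            for anc in ancestors(term):
                counts[anc] += 1
    return {t: -math.log(c / total) for t, c in counts.items() if c > 0}


def simgic(patient_terms, gene_terms, ic):
    """IC-weighted Jaccard index of the two ancestor-expanded term sets."""
    p, g = expand(patient_terms), expand(gene_terms)
    union = sum(ic.get(t, 0.0) for t in p | g)
    if union == 0:
        return 0.0
    return sum(ic.get(t, 0.0) for t in p & g) / union


def rank_genes(patient_terms, gene_terms, ic):
    """Return (gene, score) pairs sorted by descending SimGIC score."""
    scores = {g: simgic(patient_terms, t, ic) for g, t in gene_terms.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


ic = information_content(GENE_TERMS)
ranking = rank_genes({"T:A1"}, GENE_TERMS, ic)
for gene, score in ranking:
    print(f"{gene}\t{score:.3f}")
```

In a real run, `PARENTS` would be derived from the parsed ontology and `GENE_TERMS` from the gene-level annotation file; the scoring functions would stay the same.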

Protocol 2: Integrating GO Term Similarity for Functional Validation

Objective: To support candidate genes from HPO similarity by assessing the functional relatedness of their Gene Ontology (GO) annotations, revealing potential shared pathogenic mechanisms.

Materials & Software:

- List of candidate genes from Protocol 1.

- `go.obo` ontology file.

- Gene Association File (GAF) for human, or annotations from Ensembl BioMart.

- Similar software stack as Protocol 1.

Procedure:

- Retrieve GO Annotations: a. For each candidate gene, retrieve its associated GO terms (Biological Process, Molecular Function, Cellular Component) using a GAF file or API query to Ensembl.

- Pairwise Gene-Gene Functional Similarity: a. Select a semantic measure (e.g., Resnik) for GO terms. b. For a pair of genes (G1, G2), calculate the best-match average similarity: For each term in G1, find the maximal similarity to any term in G2, and average these maxima, then repeat from G2 to G1, and compute the average of the two averages.

- Construct Functional Network: a. Create a matrix of pairwise functional similarity scores for all candidate genes. b. Apply a similarity threshold (e.g., 0.7) to define edges and construct a gene-gene functional interaction network.

- Analysis: a. Genes forming tight clusters in this network may participate in shared biological processes disrupted in the patient. b. This functional cohesion among phenotypically similar genes strengthens the evidence for their involvement in the disease.
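The network construction and cluster analysis steps can be sketched as follows. This is an illustrative example under stated assumptions: the gene names and pairwise similarity scores are hypothetical, the 0.7 threshold is the example value from the procedure, and "tight clusters" are approximated here by connected components, which is only one of several possible definitions.

```python
from itertools import combinations

# Hypothetical pairwise functional similarity scores (e.g., best-match averages).
SIMILARITY = {
    ("GENE_A", "GENE_B"): 0.82,
    ("GENE_A", "GENE_C"): 0.75,
    ("GENE_B", "GENE_C"): 0.64,
    ("GENE_A", "GENE_D"): 0.20,
    ("GENE_B", "GENE_D"): 0.15,
    ("GENE_C", "GENE_D"): 0.31,
}


def build_network(genes, similarity, threshold=0.7):
    """Return an adjacency map with an edge wherever similarity >= threshold."""
    adjacency = {gene: set() for gene in genes}
    for g1, g2 in combinations(genes, 2):
        score = similarity.get((g1, g2), similarity.get((g2, g1), 0.0))
        if score >= threshold:
            adjacency[g1].add(g2)
            adjacency[g2].add(g1)
    return adjacency


def connected_components(adjacency):
    """Group genes into clusters using a depth-first traversal."""
    seen, components = set(), []
    for start in adjacency:
        if start in seen:
            continue
        component, stack = set(), [start]
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(adjacency[node] - component)
        seen |= component
        components.append(component)
    return components


genes = ["GENE_A", "GENE_B", "GENE_C", "GENE_D"]
network = build_network(genes, SIMILARITY, threshold=0.7)
clusters = connected_components(network)
print(clusters)
```

For larger candidate lists, the same adjacency map can be handed to NetworkX or exported to Cytoscape for community detection and visualization.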

Diagram Title: Two-Layer Validation Linking Phenotypic (HPO) and Functional (GO) Similarity

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Semantic Similarity Analysis

| Item / Resource | Category | Function & Application Notes |

|---|---|---|

| Human Phenotype Ontology (HPO) | Ontology | Provides the standardized vocabulary (terms) for describing human phenotypic abnormalities. Foundational for encoding profiles. |

| `hp.obo` & `phenotype.hpoa` Files | Data | The core ontology structure and curated gene/phenotype associations. Required as input for all similarity calculations. |

| Gene Ontology (GO) & Annotations | Ontology & Data | Provides standardized terms for gene function. Used for functional coherence analysis of candidate genes. |

| Python `pronto` Library | Software Tool | Efficient parser for OBO-format ontology files (HPO, GO). Essential for loading and traversing the ontology graph. |

| `semantic-similarity` Python Package | Software Tool | Implements key similarity measures (Resnik, Lin, Jiang, SimGIC) for both HPO and GO. Standardizes computation. |

| Phenotype Annotation Tools (e.g., ClinPhen) | Software Tool | Extracts HPO terms from free-text clinical notes, automating the creation of patient phenotype profiles. |

| Exomiser / Phen2Gene | Integrated Pipeline | End-to-end gene prioritization tools that incorporate HPO semantic similarity alongside variant frequency and pathogenicity data. |

| Cytoscape / NetworkX | Visualization/Analysis | Used to visualize and analyze gene networks created from phenotypic or functional similarity matrices. |

From Data to Diagnosis: Step-by-Step Methods for HPO/GO Analysis

Within the broader thesis on leveraging Human Phenotype Ontology (HPO) and Gene Ontology (GO) term analysis for rare disease classification, this protocol details the essential translational workflow. It bridges unstructured clinical narratives and structured genomic data, enabling the prioritization of candidate genes and biological pathways for functional validation and therapeutic targeting.

Application Notes & Core Protocol

Phase 1: Clinical Note to HPO Phenotype List

Objective: Extract a standardized, computable phenotypic profile from free-text clinical notes. Protocol:

- De-identification: Use a validated tool (e.g., ClinDeID or `medspacy`) to remove all protected health information (PHI) from clinical narratives.

- Phenotypic Concept Recognition: Process the de-identified text using an NLP tool optimized for biomedical text.

  - Tool Recommendation: `scispaCy` with the `en_ner_bc5cdr_md` model or `MetaMap`.

  - Execution: The tool will identify mentions of clinical signs, symptoms, and abnormalities.

- HPO Concept Mapping: Map the extracted clinical terms to canonical HPO IDs.

  - Primary Method: Utilize the `pyHpo` library or the official HPO `hpo-tool` to perform lexical matching against the HPO database (`hp.obo`).

  - Validation: A clinician or trained curator must review and validate the automated mapping to ensure accuracy, especially for ambiguous terms.

- Phenotypic Series Compression: For patients with longitudinal notes, condense repeated mentions of the same HPO term and record the earliest documented onset age.
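The compression step for longitudinal notes can be illustrated with a short sketch. The input structure is an assumption made for this example: each mention is a hypothetical `(HPO ID, age in months at the note)` pair produced by the mapping step, and the function name `compress_mentions` is not part of any library.

```python
from collections import defaultdict

# Hypothetical mapped mentions: (HPO ID, patient age in months at the note).
mentions = [
    ("HP:0001263", 30),
    ("HP:0000316", 24),
    ("HP:0001263", 24),
    ("HP:0001263", 36),
    ("HP:0000280", 48),
]


def compress_mentions(mentions):
    """Collapse repeated mentions into one record per HPO ID.

    Each record keeps the mention count and the earliest documented age.
    """
    summary = defaultdict(lambda: {"frequency": 0, "earliest_onset_months": None})
    for hpo_id, age in mentions:
        record = summary[hpo_id]
        record["frequency"] += 1
        earliest = record["earliest_onset_months"]
        if earliest is None or age < earliest:
            record["earliest_onset_months"] = age
    return dict(summary)


profile = compress_mentions(mentions)
for hpo_id, record in sorted(profile.items()):
    print(hpo_id, record["frequency"], record["earliest_onset_months"])
```

The resulting per-term frequency corresponds to the Frequency column of the mapping output table.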

Table 1: HPO Concept Mapping Output Example

| Clinical Note Snippet | Extracted Concept | Mapped HPO Term | HPO ID | Frequency |

|---|---|---|---|---|

| "...patient exhibits hypertelorism and a prominent forehead..." | hypertelorism | Hypertelorism | HP:0000316 | 1 |

| "...global developmental delay noted at 24 months..." | developmental delay | Global developmental delay | HP:0001263 | 3 |

| "...subject has coarse facial features..." | coarse facial features | Coarse facial features | HP:0000280 | 1 |

Phase 2: HPO List to Candidate Gene List

Objective: Generate a ranked list of candidate genes associated with the patient's phenotypic profile. Protocol:

- Gene Prioritization Input: Use the list of validated HPO IDs from Phase 1.

- Tool Selection & Execution: Employ a gene prioritization algorithm. A common and effective method is the Phenotypic Similarity Score.

  - Tool: `HPO2Gene` or the `phenomizer` algorithm via the `pyHpo` library.

  - Calculation: The algorithm computes the semantic similarity between the patient's set of HPO terms and the known phenotypic profiles (annotation scores) of all genes in the HPO knowledgebase.

- Ranking & Output: Genes are ranked by their similarity score. A higher score indicates a stronger phenotypic match.

Table 2: Gene Prioritization Results (Hypothetical Output)

| Rank | Gene Symbol | Gene ID (Ensembl) | Phenotype Similarity Score | Known Disease Association (OMIM) |

|---|---|---|---|---|

| 1 | DYNC2H1 | ENSG00000137457 | 0.92 | Short-rib thoracic dysplasia 3 |

| 2 | IFT80 | ENSG00000163468 | 0.87 | Short-rib thoracic dysplasia 2 |

| 3 | WDR35 | ENSG00000145907 | 0.79 | Cranioectodermal dysplasia 2 |

Phase 3: Gene List to Annotated Genomic List with GO Terms

Objective: Annotate the candidate gene list with functional (GO) and pathway information to identify biological themes. Protocol:

- GO Term Enrichment Analysis:

- Input: The ranked gene list from Phase 2 (e.g., top 100 candidates).

- Background Set: Use all protein-coding genes (approx. 20,000) as the statistical background.

- Tool: Perform over-representation analysis using `clusterProfiler` (R) or `g:Profiler` (web/API).

- Parameters: Test for enriched Biological Process (BP) and Cellular Component (CC) GO terms. Apply a multiple testing correction (Benjamini-Hochberg FDR < 0.05).

- Pathway Analysis: In parallel, query pathway databases (KEGG, Reactome) using the same gene list to identify dysregulated pathways.

- Integration: Create a final annotated genomic list that merges phenotypic scores with functional annotations.

Table 3: GO Term Enrichment Results (Hypothetical)

| GO Term ID | GO Term Name | Domain | p-value | Adjusted p-value (FDR) | Gene Ratio (Hit/List) | Associated Candidate Genes |

|---|---|---|---|---|---|---|

| GO:0042073 | Intraflagellar transport | BP | 2.1E-08 | 4.5E-06 | 8/100 | DYNC2H1, IFT80, WDR35, IFT140... |

| GO:0005929 | Cilium | CC | 3.4E-07 | 2.1E-05 | 12/100 | DYNC2H1, IFT80, WDR35, NEK1... |

| GO:0007018 | Microtubule-based movement | BP | 1.5E-05 | 0.003 | 6/100 | DYNC2H1, DNAH5, SPAG1... |

Visualizations

Diagram 1: End-to-End Clinical Genomics Workflow

Diagram 2: Core HPO & GO Analysis for Classification

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for the Clinical-Genomic Workflow

| Item | Function/Description | Source/Example |

|---|---|---|

| HPO Ontology File (`hp.obo`) | The core ontology file containing all HPO terms, definitions, and hierarchical relationships. | HPO Website (latest release) |

| HPO Annotations (`phenotype.hpoa`) | File containing known associations between HPO terms and genes (with evidence scores). | HPO Website / Monarch Initiative |

| Gene Ontology (`go.obo`/`go-basic.obo`) | The core ontology file for GO terms (Biological Process, Cellular Component, Molecular Function). | Gene Ontology Consortium |

| GO Gene Annotations (`goa_human.gaf`) | File containing known associations between human genes and GO terms. | EBI GOA Database |

| `pyHpo` Library | A comprehensive Python library for creating HPO profiles, calculating semantic similarity, and performing gene prioritization. | PyPI Repository |

| `clusterProfiler` (R package) | A widely used R package for statistical analysis and visualization of functional profiles for genes and gene clusters. | Bioconductor |

| `g:Profiler` Tool | A web server and API for functional enrichment analysis, supporting multiple ID types and ontologies (HPO, GO, KEGG). | g:Profiler Website |

| ClinVar Database | Public archive of reports of genotype-phenotype relationships with clinical significance. | NCBI ClinVar |

| OMIM API | Programmatic access to the Online Mendelian Inheritance in Man database for disease-gene summaries. | OMIM.org |

Enrichment analysis is a cornerstone of computational biology, enabling researchers to identify biological themes over-represented within a gene or variant list derived from experiments (e.g., sequencing, microarrays). Within rare disease classification research, analyzing results against structured vocabularies like the Human Phenotype Ontology (HPO) and the Gene Ontology (GO) is essential. HPO terms describe phenotypic abnormalities, aiding in the association of genotype with clinical presentation. GO terms describe molecular functions (MF), biological processes (BP), and cellular components (CC) of gene products. Statistical enrichment analysis determines whether certain HPO or GO terms occur more frequently in a target gene set than expected by chance, guiding hypothesis generation for disease mechanisms.

Core Statistical Method: The Hypergeometric Test

The most commonly used statistical test is the hypergeometric test, which is equivalent to the one-sided Fisher's exact test.

Conceptual Framework

The test models the probability of drawing a specific number of "successes" (genes associated with a particular term) without replacement from a finite population. It is defined by four parameters:

- N: Total number of genes in the background population (the "urn").

- K: Total number of genes in the background population annotated with the specific GO/HPO term.

- n: Size of the target gene list (the "draws").

- x: Number of genes in the target list annotated with the specific term.

Probability Calculation

The probability of observing exactly x genes with the term is given by the hypergeometric probability mass function:

P(X = x) = [C(K, x) * C(N-K, n-x)] / C(N, n)

Where C(a, b) is the binomial coefficient ("a choose b").

The p-value for enrichment is the probability of observing x or more genes with the term by chance:

p-value = Σ P(X = i) for i = x to min(n, K)

A low p-value (typically < 0.05 after multiple testing correction) indicates significant enrichment.
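The probability mass function and the upper-tail p-value defined above can be computed directly with exact integer arithmetic from the standard library. This is a minimal sketch: the function names are chosen for this example, and the input values in the usage lines are hypothetical.

```python
from math import comb


def hypergeom_pmf(x, N, K, n):
    """P(X = x) = C(K, x) * C(N - K, n - x) / C(N, n)."""
    return comb(K, x) * comb(N - K, n - x) / comb(N, n)


def enrichment_pvalue(x, N, K, n):
    """P(X >= x): sum of the PMF from x up to min(n, K).

    The numerator is accumulated as an exact integer and divided once,
    which avoids rounding error from adding many tiny floats.
    """
    upper = min(n, K)
    numerator = sum(comb(K, i) * comb(N - K, n - i) for i in range(x, upper + 1))
    return numerator / comb(N, n)


def fold_enrichment(x, N, K, n):
    """Observed fraction in the target list over the background fraction."""
    return (x / n) / (K / N)


# Hypothetical inputs: 20,000 background genes, 400 annotated with the term,
# a target list of 100 genes, 8 of which carry the term.
p = enrichment_pvalue(x=8, N=20000, K=400, n=100)
fe = fold_enrichment(x=8, N=20000, K=400, n=100)
print(f"fold enrichment = {fe:.2f}, p-value = {p:.3g}")
```

For routine use, `scipy.stats.hypergeom.sf(x - 1, N, K, n)` gives the same upper-tail probability; the explicit version is shown here only to mirror the formulas term by term.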

Key Assumptions and Considerations

- Background Population (N): Must be carefully chosen. For rare disease exome analysis, it should be the set of all genes effectively assayed by the sequencing platform/bioinformatic pipeline.

- Multiple Testing Correction: Essential due to the testing of hundreds/thousands of terms. Benjamini-Hochberg (False Discovery Rate, FDR) is standard.

- Term Filtering: Very broad (e.g., "biological process") or very specific terms (annotated to 1-2 genes) are often filtered out.
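The Benjamini-Hochberg adjustment mentioned above is short enough to write out in full. The sketch below is a plain-Python version for illustration, with a function name chosen for this example; in practice the same adjustment is available in established statistics packages.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values (FDR) in the same order as the input.

    For the i-th smallest p-value out of m, the raw adjustment is p * m / i;
    a running minimum from the largest rank downward enforces monotonicity.
    """
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical raw p-values for four tested terms.
raw = [0.01, 0.04, 0.03, 0.005]
fdr = benjamini_hochberg(raw)
significant = [i for i, q in enumerate(fdr) if q < 0.05]
print(fdr, significant)
```

Term filtering should be applied before this step, because the number of tested terms `m` directly scales every adjusted value.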

Table 1: Illustrative Example of Hypergeometric Test Inputs

| Parameter | Description | Example Value for a GO Term Analysis |

|---|---|---|

| N | Background genes | 18,000 (all protein-coding genes) |

| K | Genes annotated with term "GO:0006915" (apoptosis) | 800 |

| n | Target gene list from rare disease cohort | 150 |

| x | Genes in target list annotated with apoptosis | 25 |

| Expected (n*K/N) | Expected number by chance | 6.7 |

| Fold Enrichment | (x/n) / (K/N) | 3.75 |

| p-value | Hypergeometric test result | 1.2e-05 |

| Adjusted p-value (FDR) | After Benjamini-Hochberg correction | 0.003 |

Detailed Experimental Protocol: Conducting Enrichment Analysis

Protocol Title: GO/HPO Enrichment Analysis of Candidate Genes from a Rare Disease WES Cohort

Objective: To identify biologically coherent themes among a list of candidate pathogenic variants from whole-exome sequencing (WES) of patients with a novel rare syndrome.

Materials & Reagent Solutions (The Scientist's Toolkit)

Table 2: Key Research Reagent Solutions for Enrichment Analysis

| Item/Resource | Function/Description | Example/Source |

|---|---|---|

| Gene List | Target set of gene identifiers (e.g., ARID1B, KMT2D). Output from variant filtering pipeline. | In-house WES pipeline (VCF files) |

| Background List | Comprehensive list of all possible genes considered in the experiment. | ClinGen Panels, Exome Aggregation Consortium list |

| Ontology Annotations | Mappings of genes to GO terms (BP, MF, CC) and HPO terms. | Gene Ontology Consortium, HPO Association File |

| Statistical Software | Tool to perform hypergeometric test and manage multiple testing. | R (clusterProfiler, enrichR), Python (gseapy) |

| Visualization Tool | To generate interpretable plots of results. | R (ggplot2, enrichplot), REVIGO |

| Multiple Testing Method | Algorithm to control false positive rate across many hypothesis tests. | Benjamini-Hochberg FDR |

Step-by-Step Procedure

Step 1: Generate Target Gene List

- Perform WES on proband and family members (trio analysis preferred).

- Apply standard variant calling (GATK), annotation (ANNOVAR, SnpEff), and filtering criteria (population frequency <0.01 in gnomAD, impact severity, inheritance models).

- Compile a final list of strong candidate genes harboring putative pathogenic variants (e.g., 50-200 genes). Use standard gene symbols (HGNC).

Step 2: Define Background Gene Set

- Define the universe of genes from which the target list is drawn. This should reflect the effective capture region of your exome kit (e.g., ~20,000 genes).

- Critical: Exclude genes not reliably sequenced or analyzed to avoid bias.

Step 3: Acquire Current Ontology Annotations

- Download the most recent `goa_human.gaf` file from the GO Consortium and the `phenotype.hpoa` file from HPO.

- Pre-process to create a gene-to-terms mapping, excluding evidence codes like IEA (Inferred from Electronic Annotation) if higher-quality evidence is required.

Step 4: Perform Enrichment Analysis

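- Using R (`clusterProfiler`): run over-representation analysis for GO terms (for example with `enrichGO`), supplying the target gene list from Step 1 and the background set from Step 2.

- For HPO analysis, use the `enrichHP` function from the `DOSE`/`HPOanalyze` packages or similar.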

Step 5: Interpret and Visualize Results

- Sort results by adjusted p-value (FDR) and fold enrichment.

- Generate a dot plot or bar plot showing top enriched terms.

- Generate an enrichment map to cluster related terms.

Advanced Considerations & Alternative Methods

- Other Statistical Tests: Binomial test, Chi-squared test, Fisher's exact test. The hypergeometric is generally preferred for its accuracy with finite populations.

- Gene Set Enrichment Analysis (GSEA): A rank-based method that considers all genes in an experiment without arbitrary significance cutoffs, useful for transcriptomic data.

- Redundancy Reduction: Tools like REVIGO semantically cluster similar GO terms to simplify result interpretation.

- Network-Based Enrichment: Methods like EnrichmentMap or those in Cytoscape visualize term relationships and shared genes.

Pathway and Workflow Visualization

Diagram Title: Enrichment Analysis Workflow for Rare Disease WES

Diagram Title: Hypergeometric Sampling Model

Within a thesis on leveraging Human Phenotype Ontology (HPO) and Gene Ontology (GO) term analysis for rare disease gene prioritization and classification, a structured bioinformatics pipeline is essential. This guide provides detailed Application Notes and Protocols for three pivotal tools: Phen2Gene for rapid candidate gene identification from HPO terms, GOrilla for identifying enriched GO terms from ranked gene lists, and clusterProfiler (R/Bioconductor) for comprehensive functional enrichment analysis. Together, they form a robust workflow from phenotypic description to biological interpretation.

Key Research Reagent Solutions

The following table lists essential computational "reagents" required to execute the analyses described in this guide.

| Item | Function in Analysis | Key Notes |

|---|---|---|

| HPO Term List | Input for Phen2Gene. Represents the patient's clinical phenotype in a standardized, computable format. | Curated from the HPO database (https://hpo.jax.org). Must use exact HPO IDs (e.g., HP:0001250). |

| Ranked Gene List | Input for GOrilla. The output from Phen2Gene or other prioritization tools, ordered by relevance. | File format: a single column text file. Top of list = highest priority. |

| Gene Identifier List | Input for clusterProfiler's ORA (Over-Representation Analysis). A target/universe gene set. | Requires consistent ID type (e.g., Entrez, Ensembl, Symbol). Universe set is recommended for background. |

| Organism Database Package | Provides annotation data for clusterProfiler (e.g., org.Hs.eg.db). | Enables ID conversion and access to GO annotations for the target species. |

| Reference Genome Assembly | Underpins all genomic coordinate-based operations if used upstream. | Ensures consistency in gene annotation versions (e.g., GRCh38). |

Application Notes & Protocols

Phen2Gene: From Phenotype to Candidate Genes

Purpose: To rapidly prioritize candidate genes associated with a set of input HPO terms. Thesis Context: Serves as the initial gene discovery engine, translating the clinical phenotype (HPO terms) into a ranked list of potential causative genes for a rare disease case.

Protocol:

- Input Preparation: Compile a list of relevant HPO IDs from patient phenotyping. Example: HP:0001250 (Seizure), HP:0001166 (Arachnodactyly), HP:0000501 (Glaucoma).

- Tool Execution:

- Web Server (Common): Access the Phen2Gene web interface (http://phen2gene.renalgene.org). Paste HPO IDs, select the desired prediction model (e.g., "Combined"), and run.

- Local Command Line: For batch processing.

- Output Interpretation: The primary output is a tab-separated file ranking genes by a score. The top 10-20 genes are typically taken forward for downstream analysis.

Table: Example Phen2Gene Output (Top 5 Genes)

| Rank | Gene Symbol | Score | Associated Known Diseases (from DisGeNET) |

|---|---|---|---|

| 1 | FBN1 | 0.983 | Marfan syndrome, Weill-Marchesani syndrome |

| 2 | TGFBR2 | 0.721 | Loeys-Dietz syndrome, Marfan syndrome |

| 3 | ADAMTS10 | 0.654 | Weill-Marchesani syndrome 1 |

| 4 | LTBP2 | 0.601 | Primary congenital glaucoma, Marfan syndrome |

| 5 | CBS | 0.588 | Homocystinuria |

GOrilla: Gene Ontology Enrichment of Ranked Lists

Purpose: To identify GO terms that are significantly enriched at the top of a ranked gene list. Thesis Context: Applied to the Phen2Gene output to understand which biological processes, molecular functions, or cellular components are over-represented among the top candidate genes, offering immediate biological insight.

Protocol:

- Input Preparation: Extract the single column ranked gene list from Phen2Gene output (e.g., gene symbols).

- Tool Execution:

- Access GOrilla web tool (http://cbl-gorilla.cs.technion.ac.il).

- Select "Ranked list" mode.

- Paste the ranked gene list into the target list field. For the background/universal set, you can paste all genes analyzed by Phen2Gene or use the default organism-specific set.

- Choose the organism (e.g., Homo sapiens) and run.

- Output Interpretation: GOrilla produces two main result tables: one ordered by enrichment p-value and another hierarchical "tree" view. Focus on terms with low p-value and high enrichment (E-score).

Table: Example GOrilla Enriched GO Terms (Biological Process)

| GO Term | Description | P-value | Enrichment (E-score) | FDR q-value |

|---|---|---|---|---|

| GO:0030198 | extracellular matrix organization | 2.15E-08 | 8.45 | 3.01E-05 |

| GO:0001501 | skeletal system development | 4.67E-07 | 6.12 | 3.27E-04 |

| GO:0043062 | extracellular structure organization | 5.88E-07 | 8.12 | 2.75E-04 |

clusterProfiler: Comprehensive Functional Profiling in R

Purpose: To perform statistical analysis and visualization of functional profiles (GO, KEGG, etc.) for gene clusters. Thesis Context: Used for deeper, customizable enrichment analysis and publication-quality visualization of results from the prioritized gene set. Allows comparison across multiple gene lists.

Protocol: Over-Representation Analysis (ORA)

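- Setup Environment in R: Load `clusterProfiler` and the organism annotation package (e.g., `org.Hs.eg.db`).

- Prepare Gene List: Load the vector of top candidate gene symbols (e.g., from Phen2Gene).

- Run GO Enrichment Analysis: Call `enrichGO` on the gene vector, specifying the ontology (BP, MF, or CC), the identifier type, and a multiple testing correction such as Benjamini-Hochberg.

- Visualize Results: Plot the enrichment result, for example with `dotplot` or `barplot`.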

Integrated Workflow Diagram

Title: Integrated HPO-GO Analysis Workflow for Rare Disease Research

Enrichment Analysis Results Visualization Diagram

Title: Gene Set Annotation to Enriched GO Terms

Within a thesis investigating standardized ontologies for rare disease research, this case study demonstrates the application of Human Phenotype Ontology (HPO) and Gene Ontology (GO) analyses to classify a cohort of patients with a novel, undiagnosed rare disease. The integration of phenotypic (HPO) and molecular functional (GO) data provides a multi-omics stratification strategy, crucial for identifying potential disease mechanisms and therapeutic targets in drug development.

A cohort of 35 probands presented with a novel syndrome characterized by severe neurodevelopmental delay, distinct craniofacial features, and recurrent infections. Whole-exome sequencing (WES) identified variants of uncertain significance (VUS) in 12 candidate genes.

Table 1: Cohort Clinical & Genetic Summary

| Parameter | Value |

|---|---|

| Total Probands | 35 |

| Male / Female | 18 / 17 |

| Median Age (Range) | 4.2 years (0.5-12) |

| Probands with Candidate VUS | 28 (80%) |

| Unique Candidate Genes with VUS | 12 |

| Average HPO Terms per Proband | 9.2 |

Table 2: Top 5 Most Frequent HPO Terms in Cohort

| HPO Term ID | Term Name | Frequency | % of Cohort |

|---|---|---|---|

| HP:0001263 | Global developmental delay | 35 | 100% |

| HP:0001250 | Seizure | 28 | 80% |

| HP:0004322 | Short stature | 25 | 71% |

| HP:0000252 | Microcephaly | 23 | 66% |

| HP:0002719 | Recurrent infections | 20 | 57% |

Detailed Protocols

Protocol 1: HPO-Based Phenotypic Similarity Clustering

Objective: To group patients based on phenotypic similarity for genotype correlation.

- Phenotype Annotation: For each proband, clinical features are annotated using HPO terms via tools like PhenoTips or ClinPhen.

- Similarity Scoring: Calculate pairwise patient similarity using the Resnik semantic similarity measure implemented in the `ontologySim` R package or Python's `pyobo` library.

- Cluster Generation: Perform hierarchical clustering on the similarity matrix (method: Ward's linkage). Determine optimal cluster number via the silhouette method.

- VUS Integration: Map the 12 candidate genes onto patient clusters to identify genotype-phenotype associations.

Protocol 2: GO Enrichment Analysis of Candidate Genes

Objective: To identify significantly overrepresented biological processes among candidate genes.

- Gene List Input: Compile the list of 12 candidate genes (e.g., GENE1, GENE2, ...).

- Background Definition: Define the background gene set as all genes successfully assayed by the WES platform (~20,000 genes).

- Statistical Test: Use a hypergeometric test or Fisher's exact test via tools like g:Profiler, clusterProfiler (R), or DAVID.

- Correction & Threshold: Apply Benjamini-Hochberg false discovery rate (FDR) correction. Retain GO terms with FDR < 0.05.

- Redundancy Reduction: Simplify results using REVIGO to cluster semantically similar GO terms.

Table 3: Top GO Biological Process Enrichment Results (FDR < 0.05)

| GO Term ID | Term Name | Gene Count | Background Count | p-value | FDR |

|---|---|---|---|---|---|

| GO:0045087 | Innate immune response | 7 | 500 | 2.1e-06 | 0.001 |

| GO:0007165 | Signal transduction | 8 | 1500 | 4.5e-05 | 0.018 |

| GO:0007399 | Nervous system development | 6 | 900 | 7.8e-05 | 0.022 |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Reagents & Resources for HPO/GO Rare Disease Analysis

| Item / Resource | Function / Application |

|---|---|

| PhenoTips / ClinPhen | Software for standardized HPO term entry from clinical notes; enables rapid phenotype capture and prioritization. |

| HPO Annotations (`genes_to_phenotype.txt`) | File linking HPO terms to known disease genes; essential for gene prioritization (e.g., Exomiser). |

| g:Profiler / clusterProfiler | Web tool and R package for performing GO enrichment analysis with multiple correction methods. |

| Cytoscape with StringApp | Network visualization software; maps candidate genes onto protein-protein interaction networks enriched for GO terms. |

| Revigo | Web tool for summarizing and visualizing long lists of GO terms by removing redundant entries. |

| SimGIC / Resnik Similarity Scripts | Algorithms for calculating semantic similarity between sets of HPO terms, enabling patient clustering. |

Visualizations

HPO-GO Rare Disease Analysis Workflow

Innate Immune Pathway Enriched in Cohort

Application Notes

This protocol outlines a computational framework for prioritizing candidate genes in rare disease research by integrating phenotypic (Human Phenotype Ontology, HPO) and functional (Gene Ontology, GO) evidence. The framework is designed to score and rank genes based on the convergence of multiple ontological data layers, enhancing the identification of causative variants from next-generation sequencing data.

Theoretical Basis

Rare disease gene discovery often yields a list of candidate genes with plausible variants. The biological interpretation of these candidates is bottlenecked by the need to integrate disparate evidence types. This framework formalizes the integration of HPO-based phenotypic similarity between patient profiles and model organism/knowledgebase data with GO-based functional congruence. The core hypothesis is that the true causative gene will exhibit high scores across multiple independent ontological axes.

Framework Architecture

The framework operates on a scoring system where each gene receives independent scores from HPO and GO analyses, which are then combined into a unified prioritization rank. HPO scoring uses semantic similarity metrics to compare patient phenotype terms (e.g., from clinical evaluation) with known gene-to-phenotype associations (e.g., from HPO annotations). GO scoring assesses the functional coherence of a candidate gene set with known disease mechanisms or pathways.

Table 1: Core Ontological Resources for Evidence Integration

| Resource | Version | Primary Use in Framework | Key Metric |

|---|---|---|---|

| Human Phenotype Ontology (HPO) | Releases (monthly) | Phenotypic similarity calculation | Resnik, Jaccard, or Phenomizer scores |

| Gene Ontology (GO) & Annotations | Releases (monthly) | Functional coherence assessment | Semantic similarity, Enrichment p-value |

| Monarch Initiative Knowledge Graph | Latest Snapshot | Integrated genotype-phenotype data | Association score cross-reference |

| OMIM (Online Mendelian Inheritance in Man) | Updated Catalog | Clinical syndrome validation | Phenotype-Gene confirmed associations |

Table 2: Quantitative Output Example from a Prioritization Run

| Candidate Gene | HPO Score (0-1) | GO Functional Coherence Score (0-1) | Integrated Z-score | Final Rank |

|---|---|---|---|---|

| GENE X | 0.92 | 0.88 | 2.45 | 1 |

| GENE Y | 0.76 | 0.45 | 0.98 | 5 |

| GENE Z | 0.81 | 0.91 | 2.12 | 2 |

| GENE W | 0.34 | 0.87 | 0.87 | 6 |

Experimental Protocols

Protocol 1: HPO-Based Phenotypic Similarity Scoring

Objective: To compute a quantitative score representing the match between a patient's phenotypic profile and known gene-associated phenotypes.

Materials & Software:

- Patient phenotype list (HPO terms).

- `hp.obo` ontology file (latest from HPO website).

- `phenotype.hpoa` annotation file (gene to HPO term associations).

- Computational tools: Python with `pronto`, `scipy`, and `sklearn` libraries, or command-line tool `phenomizer`.

Procedure:

- Data Curation: Format the patient's clinical features as a list of standardized HPO IDs (e.g., HP:0001250, Seizure).

- Ontology Loading: Load the `hp.obo` file to create a traversable ontology graph.

- Annotation Mapping: Load the `phenotype.hpoa` file to create a dictionary linking each gene to its set of annotated HPO terms.

- Similarity Calculation: For each candidate gene:

a. Retrieve its annotated HPO term set (query profile, Q).

b. Define the patient's HPO term set (patient profile, P).

c. Calculate the pairwise semantic similarity between all terms in P and Q using a metric like Resnik (information content-based). Use the ontology graph to find common ancestors.

d. Aggregate pairwise scores using the Best-Match Average (BMA) strategy: BMA = (Avg(max similarity for each p in P) + Avg(max similarity for each q in Q)) / 2.

- Score Normalization: Normalize all gene BMA scores to a 0-1 range using min-max scaling across the candidate list.
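The Resnik, Best-Match Average, and min-max steps can be sketched together on a toy example. Everything concrete below is hypothetical: the term IDs, the IC values, and the gene profiles are invented for illustration, and in a real run the IC values would be computed from the annotation corpus rather than hard-coded.

```python
# Toy ontology (hypothetical IDs): term -> direct parents.
PARENTS = {
    "T:root": set(),
    "T:A": {"T:root"},
    "T:B": {"T:root"},
    "T:A1": {"T:A"},
    "T:A2": {"T:A"},
    "T:B1": {"T:B"},
}

# Hypothetical, precomputed information content per term.
IC = {"T:root": 0.0, "T:A": 0.5, "T:B": 0.9, "T:A1": 1.2, "T:A2": 1.6, "T:B1": 1.1}


def ancestors(term):
    """Return the term together with all of its ancestors up to the root."""
    seen, stack = {term}, [term]
    while stack:
        for parent in PARENTS[stack.pop()]:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def resnik(t1, t2):
    """IC of the most informative common ancestor (MICA) of two terms."""
    return max(IC[t] for t in ancestors(t1) & ancestors(t2))


def best_match_average(P, Q):
    """BMA = (avg of best matches P -> Q + avg of best matches Q -> P) / 2."""
    p_to_q = sum(max(resnik(p, q) for q in Q) for p in P) / len(P)
    q_to_p = sum(max(resnik(p, q) for p in P) for q in Q) / len(Q)
    return (p_to_q + q_to_p) / 2


def min_max(scores):
    """Rescale scores to the 0-1 range across the candidate list."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {gene: 0.0 for gene in scores}
    return {gene: (s - lo) / (hi - lo) for gene, s in scores.items()}


patient = {"T:A1", "T:B1"}
gene_profiles = {"GENE1": {"T:A1", "T:A2"}, "GENE2": {"T:B1"}, "GENE3": {"T:A2"}}
raw = {g: best_match_average(patient, q) for g, q in gene_profiles.items()}
normalized = min_max(raw)
print(raw, normalized)
```

Note that min-max scaling makes scores comparable only within a single candidate list; scores from different patients or runs are not on a shared scale.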

Protocol 2: GO-Based Functional Coherence Assessment

Objective: To evaluate if candidate genes share significant functional biological context, suggesting involvement in a common disease-relevant process.

Materials & Software:

- List of candidate genes (Entrez or Ensembl IDs).

- GO ontology (`go-basic.obo`) and gene association files (e.g., `goa_human.gaf`) from GO Consortium.

- Background gene set (e.g., all protein-coding genes).

- Tools: R with `clusterProfiler`/`topGO` or Python with `goatools`.

Procedure:

- Background Preparation: Create a background list of all genes present in the GO annotation file.

- Enrichment Analysis: Perform statistical over-representation analysis for each candidate gene list (can be run per-gene using a "guilt-by-association" approach with neighbors from STRING database, or on the full list). a. For each GO term (Biological Process subset recommended), perform a Fisher's exact test comparing the frequency of the term in the candidate list vs. the background. b. Apply multiple testing correction (Benjamini-Hochberg FDR) to p-values.

- Coherence Scoring: For each candidate gene `g`:
  a. Identify the set of significantly enriched GO terms (FDR < 0.05) from an analysis run on genes functionally linked to `g`.
  b. Calculate the Functional Congruence Score (FCS): FCS = -log10(minimum FDR among enriched terms shared with at least one other candidate gene). If no shared enriched terms exist, FCS = 0.

- Normalization: Normalize FCS scores to a 0-1 range across the candidate list.
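The statistical core of this procedure (a one-sided Fisher's exact test, Benjamini-Hochberg correction, and the FCS) can be sketched without external dependencies. The function names, the gene labels, and the example FDR values below are illustrative assumptions. A production analysis would more likely rely on `clusterProfiler`, `topGO`, or `goatools`.

```python
from math import comb, log10


def fisher_enrichment_p(k, n, K, N):
    """One-sided Fisher's exact test (hypergeometric upper tail).

    k: candidate genes annotated to the term, n: candidate list size,
    K: background genes annotated to the term, N: background size.
    """
    total = comb(N, n)
    tail = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, min(n, K) + 1))
    return tail / total


def benjamini_hochberg(pvals):
    """Return BH-adjusted p-values (FDR) in the original input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def functional_congruence(enriched, alpha=0.05):
    """FCS per gene from a mapping gene -> {GO term: FDR}.

    Only terms with FDR < alpha that are also significant for at least one
    other candidate gene contribute; genes without such terms score 0.
    """
    sig = {g: {t: q for t, q in terms.items() if q < alpha}
           for g, terms in enriched.items()}
    scores = {}
    for g, terms in sig.items():
        others = set().union(*(set(v) for h, v in sig.items() if h != g))
        shared = [q for t, q in terms.items() if t in others]
        # Guard against log10(0) if a tool reports an FDR of exactly zero.
        scores[g] = -log10(max(min(shared), 1e-300)) if shared else 0.0
    return scores


# Illustrative per-gene enrichment results (FDR values are made up).
enriched = {
    "GENE_A": {"GO:0007268": 0.001, "GO:0006811": 0.2},
    "GENE_B": {"GO:0007268": 0.01},
    "GENE_C": {"GO:0006955": 0.0001},
}
fcs = functional_congruence(enriched)
```

Note that `GENE_C` scores 0 despite having the smallest FDR, because its enriched term is not shared with any other candidate. That is the behavior the FCS definition asks for.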

Protocol 3: Multi-Ontology Evidence Integration & Ranking

Objective: To combine HPO and GO scores into a single, robust prioritization metric.

Materials & Software: Normalized HPO and GO scores for all candidate genes. Scripting environment (Python/R).

Procedure:

- Score Integration: For each gene $i$, calculate a composite score. A standard method is the weighted Z-score method:
  a. Compute Z-scores for each metric: $Z_{\mathrm{HPO},i} = (\mathrm{HPO}_i - \mu_{\mathrm{HPO}}) / \sigma_{\mathrm{HPO}}$ and $Z_{\mathrm{GO},i} = (\mathrm{GO}_i - \mu_{\mathrm{GO}}) / \sigma_{\mathrm{GO}}$.
  b. Calculate a combined Z-score: $Z_{\mathrm{combined},i} = w_1 Z_{\mathrm{HPO},i} + w_2 Z_{\mathrm{GO},i}$. Default weights $w_1$ and $w_2$ can be set to 1.0, or optimized for a specific disease cohort.

- Rank Generation: Sort all candidate genes in descending order based on the `Z_combined` score.

- Visual Inspection: Manually review top-ranked genes in the context of known disease pathways and inheritance patterns from OMIM to finalize candidates for experimental validation.
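As a rough sketch of the integration and ranking steps, the snippet below computes per-metric Z-scores and a weighted sum using only the standard library. The input scores are invented for illustration. The use of the population standard deviation (`pstdev`) is an assumption, since the protocol does not say whether a population or sample estimate is intended.

```python
from statistics import mean, pstdev


def z_scores(values):
    """Z-score each gene's value against the candidate list (population SD)."""
    mu = mean(values.values())
    sigma = pstdev(values.values())
    # If every gene has the same score, the metric carries no ranking signal.
    return {g: (v - mu) / sigma if sigma else 0.0 for g, v in values.items()}


def rank_genes(hpo, go, w1=1.0, w2=1.0):
    """Return (gene, Z_combined) pairs sorted from best to worst."""
    z_hpo, z_go = z_scores(hpo), z_scores(go)
    combined = {g: w1 * z_hpo[g] + w2 * z_go[g] for g in hpo}
    return sorted(combined.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical normalized scores from Protocols 1 and 2.
hpo_scores = {"GENE_A": 1.0, "GENE_B": 0.0, "GENE_C": 0.85, "GENE_D": 0.6}
go_scores = {"GENE_A": 0.7, "GENE_B": 0.1, "GENE_C": 1.0, "GENE_D": 0.0}

ranking = rank_genes(hpo_scores, go_scores)
```

With equal weights, a gene that is second-best on phenotype but clearly best on functional coherence can overtake the top phenotype match. Raising `w1` relative to `w2` shifts the balance back toward phenotypic evidence.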

Diagrams

Diagram 1: Multi-ontology evidence integration workflow for gene prioritization.

Diagram 2: HPO semantic similarity scoring process flow.

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Multi-Ontology Analysis

| Item Name / Resource | Category | Primary Function in Framework |

|---|---|---|

| HPO OBO & Annotation Files | Data Resource | Provide the standardized ontology structure and curated gene/phenotype associations for semantic similarity calculations. |

| GO OBO & GAF Files | Data Resource | Provide the functional ontology and gene/term annotations for functional enrichment and coherence analysis. |

| Python `pronto` Library | Software Tool | Enables parsing and programmatic traversal of OBO-format ontologies (HPO, GO) for custom scoring scripts. |

| R `clusterProfiler` Package | Software Tool | A comprehensive suite for statistical enrichment analysis of GO terms and other functional categories. |

| Phenomizer / Exomiser | Software Tool | Tools for performing HPO-based phenotypic similarity searches against knowledgebases; Phenomizer is a web application, while Exomiser runs as a standalone pipeline. |

| Monarch Initiative API | Web Service | Allows programmatic querying of an integrated genotype-phenotype knowledge graph to validate or cross-reference candidate genes. |

| Cytoscape with StringApp | Visualization Software | Used to visualize the functional interaction network among candidate genes, overlaying GO and HPO scores as node attributes. |

Overcoming Common Pitfalls: Optimizing Your HPO and GO Analysis Pipeline

Context: Within a thesis on HPO (Human Phenotype Ontology) and GO (Gene Ontology) term analysis for rare disease classification, a primary obstacle is the reliance on clinical data with incomplete, missing, or imprecise phenotypic descriptions. This directly impacts the accuracy of computational phenotype-driven gene prioritization and variant classification.

Current annotation databases suffer from gaps. A meta-analysis of data sources reveals the following common issues:

Table 1: Common Issues in Phenotypic Annotation Data Sources

| Data Source Type | Prevalence of Incompleteness | Major Impediment | Typical Impact on HPO Mapping |

|---|---|---|---|

| Legacy Clinical Records (Text) | ~60-80% unstructured notes | Missing standardized terms; narrative descriptions | Manual curation required; high risk of annotation loss |

| Public Biobanks (e.g., UK Biobank) | ~30-50% of rare disease cases | Broad billing codes (ICD-10) instead of granular phenotypes | Imprecise mapping to HPO; loss of specificity |

| Published Case Reports | High precision, but low coverage | Variable reporting standards; emphasis on unique features | Inconsistent annotation depth across similar diseases |

| Patient-Reported Outcomes | Subjective quantification | Imprecise language (e.g., "severe pain") | Difficult mapping to qualifier terms (e.g., HP:0012828 'Severe') |

Table 2: Effect of Annotation Quality on Gene Prioritization Performance

| Annotation Completeness Level | Mean Rank of Causal Gene (Simulated Exome) | Recall @ 10 Genes | Required Curation Time (Hrs/Case) |

|---|---|---|---|

| High (Full HPO terms from expert) | 4.2 | 0.92 | 0.5 (review only) |

| Medium (ICD-10 mapped to HPO) | 18.7 | 0.65 | 2-3 (semi-auto curation) |

| Low (Free-text key symptoms only) | 45.3 | 0.31 | 5+ (full manual curation) |

Experimental Protocols

Protocol 2.1: Semi-Automated Curation & Expansion of Sparse Phenotype Lists

Objective: To transform a short, imprecise list of clinical features (e.g., "seizures, low muscle tone, developmental delay") into a comprehensive, standardized HPO profile.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Input & Pre-processing: Start with the initial phenotype list. Use NLP preprocessing (e.g., Stanford CoreNLP) for tokenization, lemmatization, and negation detection.

- Primary HPO Mapping: Execute an `hpo-toolkit` or `PhenoTagger` API batch query. Manually review all suggested HPO term mappings for accuracy.

- Phenotypic Expansion: For each confirmed core HPO term (e.g., HP:0001250 'Seizure'), query the HPO database via `robot` to retrieve all is-a parent terms and frequent phenotypic abnormality sibling terms documented in similar diseases.

- Clinical Review: Present the expanded term list to a clinical geneticist. Mark terms as confirmed, excluded, or unknown.

- Output: Generate a final validated HPO term set with qualifiers (e.g., onset, severity) where known.
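The mapping, expansion, and review steps can be mocked up as follows. This is only a sketch of the data flow: the phrase lexicon is a hypothetical stand-in for an NLP tagger, the `TOY:` identifiers are placeholders rather than real HPO terms, and only parent expansion is shown (sibling retrieval is omitted). A real pipeline would query the ontology itself.

```python
# Hypothetical lexicon standing in for an NLP tagger: phrase -> (HPO ID, label).
LEXICON = {
    "seizures": ("HP:0001250", "Seizure"),
    "low muscle tone": ("HP:0001252", "Hypotonia"),
    "developmental delay": ("HP:0001263", "Global developmental delay"),
}

# Toy is-a edges (child -> parents). "TOY:" IDs are placeholders, not HPO terms.
TOY_PARENTS = {
    "HP:0001250": ["TOY:nervous_system_physiology"],
    "HP:0001252": ["TOY:muscle_tone"],
    "HP:0001263": ["TOY:neurodevelopment"],
    "TOY:nervous_system_physiology": ["TOY:nervous_system"],
    "TOY:muscle_tone": ["TOY:nervous_system"],
    "TOY:neurodevelopment": ["TOY:nervous_system"],
    "TOY:nervous_system": [],
}


def map_phrases(text):
    """Split a comma-separated feature list and map known phrases to HPO IDs."""
    phrases = [p.strip().lower() for p in text.split(",")]
    mapped = {LEXICON[p][0] for p in phrases if p in LEXICON}
    unmapped = [p for p in phrases if p and p not in LEXICON]
    return mapped, unmapped


def expand(core_terms):
    """Collect all is-a ancestors of the core terms (excluding the core terms)."""
    expanded, stack = set(), list(core_terms)
    while stack:
        for parent in TOY_PARENTS.get(stack.pop(), []):
            if parent not in expanded and parent not in core_terms:
                expanded.add(parent)
                stack.append(parent)
    return expanded


def review_sheet(core, expanded):
    """Core terms start as 'confirmed'; expansions await clinical review."""
    sheet = {t: "confirmed" for t in core}
    sheet.update({t: "unknown" for t in expanded})
    return sheet


core, unmapped = map_phrases(
    "Seizures, low muscle tone, developmental delay, blue sclerae"
)
sheet = review_sheet(core, expand(core))
```

Phrases that fail to map (here, "blue sclerae") are returned separately so that they can be routed to manual curation instead of being silently dropped.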

Protocol 2.2: Benchmarking Classification Robustness to Annotation Noise

Objective: To evaluate the resilience of a rare disease classification pipeline (e.g., Exomiser, Phenomizer) against controlled levels of annotation noise.

Materials: A validated benchmark set of solved rare disease cases with expert-curated HPO lists.

Procedure:

- Create Noise Models: Programmatically degrade the gold-standard HPO lists.

  - Deletion: Randomly remove 10%, 30%, or 50% of terms.

  - Imprecision: Replace specific terms with more generic ancestor terms (e.g., HP:0001629 'Ventricular septal defect' → HP:0001627 'Abnormal heart morphology').

  - False Annotation: Randomly add 1-2 common but incorrect HPO terms.

- Run Classification: Input each degraded phenotype profile alongside the patient's genomic data (if any) into the classification pipeline. Record the rank/score of the true causal gene/disease.

- Analysis: Plot the degradation of performance (Mean Rank, Recall) against noise level for each model. Fit a regression model to quantify sensitivity.
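The three noise models lend themselves to small, seedable helper functions. The sketch below uses placeholder term names (`T0`, `P0`, `X0`, ...) and a hypothetical parent map. It covers only the degradation step, since running the classifier and plotting depend on the pipeline under test.

```python
import random


def delete_terms(terms, fraction, rng):
    """Deletion noise: keep a random subset, dropping roughly `fraction` of terms."""
    keep = max(1, round(len(terms) * (1 - fraction)))
    return set(rng.sample(sorted(terms), keep))


def generalize_terms(terms, parents, fraction, rng):
    """Imprecision noise: swap a random share of terms for their first parent."""
    n = round(len(terms) * fraction)
    chosen = set(rng.sample(sorted(terms), n))
    # Terms without a recorded parent are left unchanged.
    return {(parents.get(t) or [t])[0] if t in chosen else t for t in terms}


def add_false_terms(terms, vocabulary, n_false, rng):
    """False-annotation noise: add terms the patient does not actually have."""
    pool = sorted(set(vocabulary) - set(terms))
    return set(terms) | set(rng.sample(pool, min(n_false, len(pool))))


# Placeholder gold-standard profile and hierarchy (not real HPO identifiers).
gold = {f"T{i}" for i in range(10)}
parent_map = {f"T{i}": [f"P{i // 2}"] for i in range(10)}
decoys = [f"X{i}" for i in range(20)]

rng = random.Random(42)  # fixed seed so degraded profiles are reproducible
degraded = {
    "deletion_30": delete_terms(gold, 0.3, rng),
    "imprecision_50": generalize_terms(gold, parent_map, 0.5, rng),
    "false_2": add_false_terms(gold, decoys, 2, rng),
}
```

Sorting the term set before sampling keeps results reproducible across runs, because Python set iteration order for strings is not stable between interpreter sessions.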

Visualization: Pathways & Workflows

Diagram 1: Workflow for refining incomplete phenotypic annotations.

Diagram 2: Benchmarking pipeline robustness to annotation noise.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Managing Imprecise Phenotypic Data

| Tool / Resource | Type | Primary Function in This Context | Key Parameter / Note |

|---|---|---|---|

| HPO Ontology File (`hp.obo`) | Data Resource | Core ontology for mapping and logical expansion. | Use latest monthly release; ensures term coverage. |

| robot (ROBOT Toolkit) | Software Tool | Command-line tool for ontology processing (reasoning, exporting). | Used for querying term hierarchies and relations. |

| PhenoTagger / ClinPhen | NLP Web Service/ Tool | Extracts HPO terms from free-text clinical notes. | Critical for initial structured data creation. |

| hpo-toolkit (Python Library) | Software Library | Programmatic access to HPO for building custom curation tools. | Enables batch mapping and integration into pipelines. |

| Phenomizer / Exomiser | Analysis Pipeline | Benchmark systems for testing refined HPO profiles. | Provides standard performance metrics. |

| Curation Interface (e.g., Phenopacket Builder) | Software Tool | User-friendly interface for clinical expert review/validation. | Essential for high-fidelity manual curation step. |

Application Notes

Bias in gene set databases (e.g., GO, KEGG, MSigDB) and reference population genomic data directly impacts the validity of HPO/GO term analyses for rare disease gene discovery and classification. A primary source of bias is the over-representation of genes studied in common diseases and model organisms, leading to "ascertainment bias." Similarly, reference populations in resources like gnomAD are predominantly of European ancestry, creating "representation bias" that skews variant frequency filtering and pathogenicity predictions.

Table 1: Quantifying Bias in Common Reference Resources

| Resource / Metric | European Ancestry Proportion | Gene Coverage (OMIM) | Notable Underrepresented Areas |

|---|---|---|---|

| gnomAD v4.0 | ~75% of total samples | N/A | African, Indigenous American, Oceanian ancestries |

| GWAS Catalog | ~88% of participants | N/A | Diverse non-European populations |

| GO Biological Process | N/A | ~70% of annotated genes are human | Plant, microbial-specific processes |

| MSigDB Hallmarks | N/A | Heavy bias towards cancer & immunology | Rare disease, neurodevelopmental pathways |

This bias results in: 1) Reduced diagnostic yield for non-European patients, 2) False positive/negative findings in gene-prioritization pipelines, and 3) Skewed pathway enrichment results that miss rare disease biology.

Protocols

Protocol 1: Bias-Audit for Gene Set Enrichment Analysis

Objective: To identify and mitigate database-driven bias in HPO/GO-based pathway enrichment for a candidate rare disease gene list.

Materials:

- Input gene list (e.g., from WES/WGS).

- Gene set collections (GO, KEGG, custom).

- Background gene list (e.g., all genes assayed, or all protein-coding genes).

- Statistical software (R with clusterProfiler, fgsea, or Python with gseapy).

Procedure:

- Enrichment Analysis: Perform standard over-representation analysis (ORA) using your primary database (e.g., GO-BP).

- Background Correction: Re-run ORA using a "bias-aware" background. Instead of all genes, use a set of genes with comparable annotation "maturity" (e.g., genes with at least one publication in PubMed, derived from a dated PubMed search).

- Cross-Database Validation: Run the same analysis on multiple, specialized databases (e.g., MGI for mouse phenotypes, WormBase for C. elegans). Use a meta-analysis tool to combine p-values (Fisher's method).

- Result Filtering: Filter enriched terms by the "representativeness" score of their constituent genes. Calculate this as the ratio of genes in the term with direct experimental evidence (e.g., EXP, IMP evidence codes in GO) vs. computational predictions (IEA).

- Visual Inspection: Manually review top terms for biological coherence beyond well-studied domains (e.g., "immune response," "cell cycle").
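Two pieces of this audit are easy to prototype: combining p-values across databases with Fisher's method, and the evidence-based "representativeness" score. The sketch below makes two assumptions. First, the score is computed as a bounded fraction (experimental / (experimental + IEA-only)) rather than a raw ratio, to avoid division by zero. Second, the experimental set is limited to the six core GO experimental evidence codes.

```python
from math import exp, log

# Core GO experimental evidence codes (high-throughput codes omitted here).
EXPERIMENTAL = {"EXP", "IDA", "IPI", "IMP", "IGI", "IEP"}


def fisher_combine(pvals):
    """Combine independent p-values with Fisher's method.

    X = -2 * sum(ln p) follows a chi-square distribution with 2k degrees of
    freedom; for even degrees of freedom the survival function has the closed
    form exp(-X/2) * sum_{i<k} (X/2)^i / i!, so no SciPy call is needed.
    """
    k = len(pvals)
    half_x = -sum(log(p) for p in pvals)  # requires every p > 0
    term, total = 1.0, 1.0
    for i in range(1, k):
        term *= half_x / i
        total += term
    return exp(-half_x) * total


def representativeness(term_genes, evidence):
    """Share of a term's genes backed by experimental rather than IEA-only evidence.

    evidence: gene -> set of GO evidence codes supporting this term.
    """
    n_exp = sum(1 for g in term_genes if evidence.get(g, set()) & EXPERIMENTAL)
    n_iea = sum(1 for g in term_genes if evidence.get(g, set()) == {"IEA"})
    denom = n_exp + n_iea
    return n_exp / denom if denom else 0.0


# Illustrative inputs: p-values for one term from two databases, and
# hypothetical evidence codes for the genes annotated to that term.
combined_p = fisher_combine([0.05, 0.05])
score = representativeness(
    ["g1", "g2", "g3", "g4"],
    {"g1": {"IMP"}, "g2": {"IEA"}, "g3": {"IEA", "IDA"}, "g4": {"IEA"}},
)
```

Fisher's method assumes the combined tests are independent. Enrichment results from overlapping databases are usually correlated, so the combined p-value should be read as optimistic.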

Protocol 2: Constructing a Population-Balanced Variant Filtering Pipeline

Objective: To minimize ancestry-based representation bias during variant filtering in a rare disease sequencing cohort.

Materials:

- Cohort VCF files (e.g., from family-based trio WGS).

- Multiple population frequency databases (gnomAD, TOPMed, NHLBI ESP, ancestrally matched cohort databases).

- Variant annotation & filtering pipeline (e.g., ANNOVAR, SnpEff, custom scripts).

Procedure:

- Annotate with Multiple Frequency Sources: Annotate variants with allele frequencies (AF) from gnomAD (broken down by sub-populations: EUR, AFR, AMR, EAS, SAS), TOPMed, and any available matched population database.

- Define Adaptive AF Cutoffs: For each variant, determine the relevant maximum population AF (MPAF). Do not default to the global AF.

  - Rule: If the patient's ancestry is well-represented (e.g., Finnish), use the corresponding sub-population AF.

  - Rule: If the patient's ancestry is underrepresented, use the most genetically similar population or an aggregate of all non-EUR populations as the MPAF.

- Apply Dynamic Filtering: Filter rare variants based on the adaptive MPAF (e.g., < 0.001 for autosomal dominant, < 0.01 for autosomal recessive). Flag variants where the AF disparity between EUR and other populations is >10-fold for manual review.
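The adaptive cutoff logic can be expressed as a small set of functions. This sketch assumes per-variant allele frequencies are already available as a dictionary keyed by population label. It interprets the "aggregate of all non-EUR populations" as the maximum AF across those populations, which is one possible reading. The function names and example frequencies are hypothetical.

```python
# AF thresholds by inheritance mode, taken from the filtering step above.
THRESHOLDS = {"AD": 0.001, "AR": 0.01}


def max_population_af(variant_af, ancestry, well_represented):
    """Pick the AF used for filtering (MPAF) instead of the global AF."""
    if ancestry in well_represented and ancestry in variant_af:
        return variant_af[ancestry]
    # Underrepresented ancestry: fall back to the highest non-EUR frequency.
    non_eur = [af for pop, af in variant_af.items() if pop != "EUR"]
    return max(non_eur) if non_eur else None


def passes_filter(variant_af, ancestry, inheritance, well_represented):
    """Keep the variant if its MPAF is below the inheritance-specific cutoff."""
    mpaf = max_population_af(variant_af, ancestry, well_represented)
    # Variants absent from every database are retained as potentially novel.
    return mpaf is None or mpaf < THRESHOLDS[inheritance]


def af_disparity_flag(variant_af, fold=10.0):
    """Flag variants whose EUR AF differs more than `fold` from any other group."""
    eur = variant_af.get("EUR")
    if eur is None:
        return False
    for pop, af in variant_af.items():
        if pop == "EUR":
            continue
        hi, lo = max(eur, af), min(eur, af)
        if hi > 0 and (lo == 0 or hi / lo > fold):
            return True
    return False


# Hypothetical variant: rare in EUR, markedly more common in AFR.
variant = {"EUR": 0.0001, "AFR": 0.005, "EAS": 0.0002}
represented = {"EUR", "AFR", "AMR", "EAS", "SAS"}

keep_for_eur_patient = passes_filter(variant, "EUR", "AD", represented)
keep_for_other_patient = passes_filter(variant, "OCE", "AD", represented)
review = af_disparity_flag(variant)
```

The same variant passes the dominant-model filter for a EUR patient but is removed for a patient whose ancestry is not represented, which illustrates why the choice of reference population should be recorded alongside each filtering decision.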