Integrating Multi-Omics for Precision Medicine: From Data to Clinical Translation

Abstract

This article provides a comprehensive overview of the multi-omics landscape in precision medicine, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of integrating diverse omics layers—genomics, transcriptomics, proteomics, and metabolomics—to achieve a holistic understanding of disease mechanisms. The scope extends to evaluating advanced data integration methodologies, including statistical and machine learning-based approaches, for applications in biomarker discovery and patient stratification. It further addresses critical challenges such as data heterogeneity and analytical optimization, while offering comparative analyses of integration tools. Finally, the article examines validation frameworks and future directions, underscoring the transformative potential of multi-omics in developing personalized therapeutic strategies.

The Building Blocks: Core Concepts and Omics Layers in Precision Medicine

Defining Precision Medicine and the Multi-Omics Paradigm Shift

Precision medicine represents a transformative healthcare model that moves away from conventional, reactive disease management toward proactive prevention and customized healthcare delivery. This approach utilizes a deep understanding of an individual's genome, environment, lifestyle, and their complex interplay to inform personalized prevention, diagnostic, and treatment strategies [1]. The ultimate potential of precision medicine extends beyond individual patient benefits to population-level impacts, including improved health productivity, enhanced patient trust and satisfaction, and significant health cost-benefits across healthcare systems [1] [2].

The foundational revolution enabling this paradigm shift began with genomics, particularly following the completion of the Human Genome Project in 2003, which provided the first reference sequence for human biology [1]. However, genomics alone presents an incomplete picture: the biological blueprint without the dynamic functional layers. The emergence and integration of multiple "omics" technologies have created the multi-dimensional perspective required to fully realize precision medicine's potential [3] [1]. Integrative multi-omics, the combination of multiple omics data layers including their interconnections and interactions, provides a more comprehensive understanding of human health and disease than any single approach can deliver on its own [1].

The Multi-Omics Ecosystem: Layers of Biological Complexity

The multi-omics approach systematically characterizes and quantifies diverse biological molecules to build a holistic view of biological systems. Each layer provides unique insights into the complex machinery of health and disease.

- Genomics reveals the static DNA sequence and genetic variants that constitute an individual's fundamental biological blueprint and inherited risk profile [3].

- Transcriptomics captures the dynamic expression of genes through RNA measurement, indicating which genetic instructions are actively being used by cells [3].

- Proteomics identifies and quantifies the proteins that execute cellular functions, providing a functional readout of cellular activity [3].

- Epigenomics maps chemical modifications to DNA and histones that regulate gene expression without altering the DNA sequence itself [1].

- Metabolomics measures small-molecule metabolites that serve as direct indicators of physiological state and cellular processes [3].

- Microbiomics characterizes the collective genomes of microbial communities living in symbiosis with the host, which critically modulate immunity, metabolism, and pharmacological response [4].

Table 1: Multi-Omics Data Types and Their Characteristics

| Omics Layer | Molecules Measured | Biological Significance | Common Technologies |
|---|---|---|---|
| Genomics | DNA sequence, variations | Genetic blueprint, disease risk | Whole Genome Sequencing (WGS) |
| Transcriptomics | RNA expression levels | Active gene regulation | RNA Sequencing (RNA-seq) |
| Proteomics | Protein abundance, modifications | Functional effectors, drug targets | Mass Spectrometry |
| Epigenomics | DNA methylation, histone marks | Gene regulation, environmental response | Bisulfite Sequencing, ChIP-seq |
| Metabolomics | Metabolites (sugars, lipids, etc.) | Physiological state, metabolic health | Mass Spectrometry, NMR |
| Microbiomics | Microbial genomes, genes | Host-microbe interactions, immunity | Metagenomic Sequencing |

Core Analytical Challenges in Multi-Omics Integration

The integration of multi-omics data presents substantial technical and analytical hurdles that must be overcome to extract meaningful biological and clinical insights.

Data Heterogeneity and Scale

The fundamental challenge lies in the wide diversity of data types, each with distinct formats, scales, and inherent biases [3]. Genomics data provides a static blueprint spanning roughly 3 billion base pairs, while transcriptomics captures dynamic cellular activity, proteomics reflects functional tissue states, and metabolomics offers the most direct link to observable phenotype [3]. Clinical data from electronic health records (EHRs) adds another dimension of complexity, with both structured information (e.g., lab values) and unstructured data (e.g., physician notes) that requires natural language processing for interpretation [3]. This combination creates the "high-dimensionality problem," where features vastly outnumber samples, which can invalidate traditional statistical methods and inflate false discovery rates [3].
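One common way to keep false discoveries in check when thousands of features are tested at once is the Benjamini-Hochberg procedure. The sketch below is a minimal pure-Python illustration; the p-values are made up, and the 0.05 threshold is an assumed convention rather than anything prescribed by the cited sources.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical p-values for five features (illustrative only)
p = [0.001, 0.008, 0.039, 0.041, 0.60]
q = benjamini_hochberg(p)
significant = [i for i, value in enumerate(q) if value <= 0.05]
```

In this toy example the third and fourth features have raw p-values below 0.05 but adjusted values just above it, which is the kind of correction that matters when features far outnumber samples.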

Technical and Computational Hurdles

Several critical technical challenges must be addressed throughout the multi-omics workflow:

- Data normalization and harmonization: Different laboratory platforms generate data with unique technical characteristics that can obscure true biological signals, requiring sophisticated normalization techniques to make datasets comparable [3].

- Missing data management: Incomplete datasets are common in biomedical research (e.g., a patient with genomic data but missing proteomic measurements) and can seriously bias analyses if not handled with robust imputation methods [3].

- Batch effect correction: Technical variations from different technicians, reagents, or sequencing machines create systematic noise that can obscure biological variation, requiring statistical correction methods like ComBat [3].

- Massive computational requirements: Multi-omics analyses often involve petabytes of data, demanding scalable infrastructure like cloud computing and distributed computing frameworks [3].
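As a rough illustration of the normalization and batch-correction steps listed above, the sketch below removes per-batch mean shifts and then z-scores each feature. It is deliberately simpler than ComBat, which additionally models batch-specific scale and uses empirical Bayes shrinkage; the function names and toy data are hypothetical.

```python
import numpy as np


def center_batches(X, batches):
    """Subtract each batch's per-feature mean and restore the global mean.

    X: samples x features array; batches: one batch label per sample.
    """
    X = np.asarray(X, dtype=float)
    batches = np.asarray(batches)
    corrected = X.copy()
    global_mean = X.mean(axis=0)
    for b in np.unique(batches):
        mask = batches == b
        # Shift this batch so its feature means match the global means
        corrected[mask] -= X[mask].mean(axis=0) - global_mean
    return corrected


def zscore(X):
    """Scale every feature to mean 0 and unit variance across samples."""
    X = np.asarray(X, dtype=float)
    std = X.std(axis=0)
    std[std == 0] = 1.0  # avoid division by zero for constant features
    return (X - X.mean(axis=0)) / std


# Toy data: 4 samples x 2 features; batch "B" carries a +10 shift on feature 0
X = np.array([[1.0, 5.0], [3.0, 7.0], [11.0, 5.0], [13.0, 7.0]])
batches = ["A", "A", "B", "B"]
X_corrected = center_batches(X, batches)
X_scaled = zscore(X_corrected)
```

Mean-centering of this kind also removes any real biological difference that happens to coincide with batch, so it is only reasonable when biological groups are balanced across batches.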

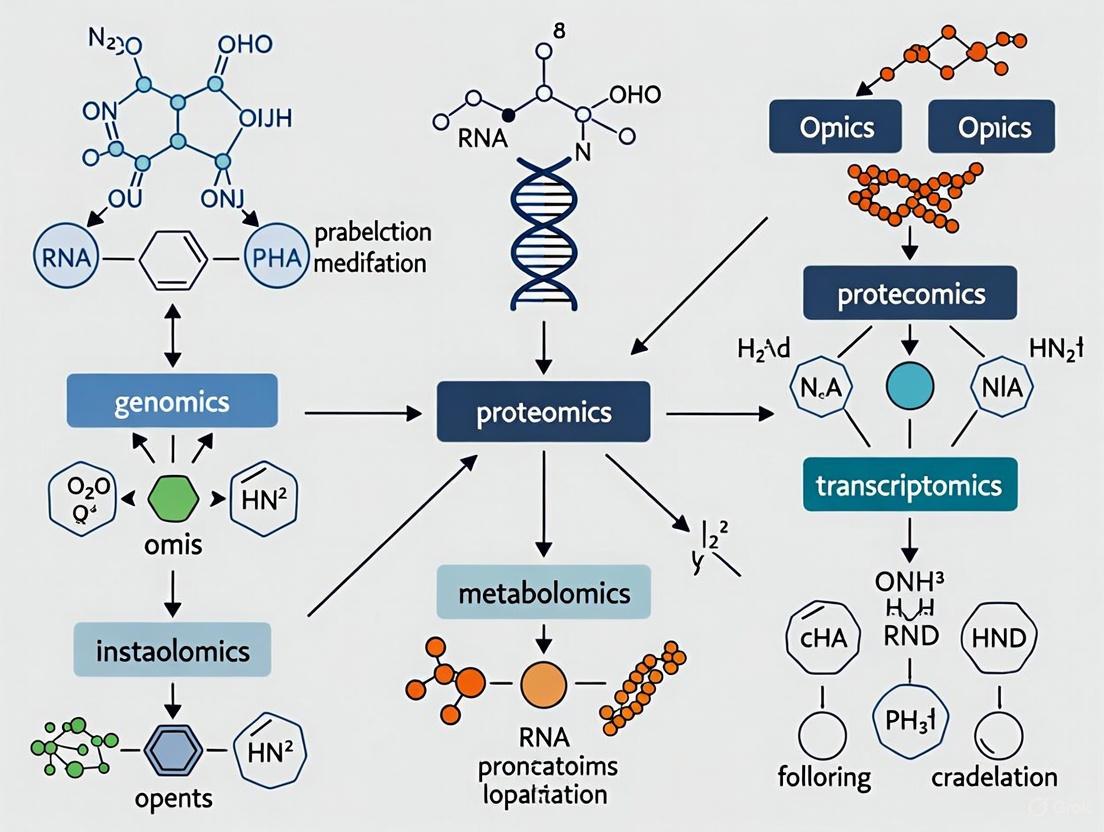

Diagram: Multi-Omics Data Integration Workflow illustrating the pipeline from raw data collection through preprocessing, integration strategies, and AI analysis to biological insights.

AI-Powered Integration Strategies and Computational Frameworks

Artificial intelligence and machine learning have become indispensable for multi-omics integration, providing the pattern recognition capabilities needed to detect subtle connections across millions of data points that remain invisible to conventional analysis [3]. The choice of integration strategy significantly influences what biological relationships can be detected.

Integration Timing Strategies

Researchers typically employ three main strategies differentiated by when integration occurs in the analytical pipeline:

- Early Integration (Feature-level): Merges all raw features into one massive dataset before analysis, potentially capturing complex unforeseen interactions but suffering from extreme dimensionality [3].

- Intermediate Integration: First transforms each omics dataset into a more manageable representation, then combines these representations using network-based methods that incorporate biological context [3].

- Late Integration (Model-level): Builds separate predictive models for each omics type and combines their predictions, offering computational efficiency and robustness to missing data but potentially missing subtle cross-omics interactions [3].
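The difference between early and late integration can be sketched on synthetic data. The example below uses a simple nearest-centroid classifier as a stand-in for whatever model a real study would use; the two "omics layers", their sizes, and the train/test split are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 40
labels = np.repeat([0, 1], n // 2)
# Two synthetic omics layers measured on the same samples (made-up data)
rna = rng.normal(size=(n, 50)) + labels[:, None] * 1.0
protein = rng.normal(size=(n, 20)) + labels[:, None] * 1.0


def centroid_scores(X_train, y_train, X_test):
    """Distance to the class-0 centroid minus distance to the class-1 centroid."""
    c0 = X_train[y_train == 0].mean(axis=0)
    c1 = X_train[y_train == 1].mean(axis=0)
    d0 = np.linalg.norm(X_test - c0, axis=1)
    d1 = np.linalg.norm(X_test - c1, axis=1)
    return d0 - d1  # positive means the sample is closer to class 1


train = np.arange(n) % 2 == 0
test = ~train

# Early integration: concatenate all features and fit a single model
early_X = np.hstack([rna, protein])
early_scores = centroid_scores(early_X[train], labels[train], early_X[test])
early_pred = (early_scores > 0).astype(int)

# Late integration: fit one model per layer, then combine their outputs
layer_scores = [
    centroid_scores(layer[train], labels[train], layer[test])
    for layer in (rna, protein)
]
late_pred = (np.mean(layer_scores, axis=0) > 0).astype(int)

early_acc = (early_pred == labels[test]).mean()
late_acc = (late_pred == labels[test]).mean()
```

In the late-integration branch a sample missing one layer could still be scored from the remaining layers, which is the robustness to missing data mentioned above. Averaging raw scores is a simplification; per-layer outputs would normally be calibrated or combined by a stacking model.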

Table 2: Multi-Omics Integration Strategies and Machine Learning Approaches

| Integration Strategy | Key Machine Learning Methods | Advantages | Ideal Use Cases |
|---|---|---|---|
| Early Integration | Deep Neural Networks, Autoencoders | Can capture complex cross-omics interactions | Biomarker discovery, novel pathway identification |
| Intermediate Integration | Similarity Network Fusion (SNF), Graph Convolutional Networks (GCNs) | Reduces complexity, incorporates biological context | Disease subtyping, patient stratification |
| Late Integration | Ensemble Methods, Stacking | Handles missing data well, computationally efficient | Clinical outcome prediction, diagnostic models |
| Temporal Integration | Recurrent Neural Networks (RNNs), LSTMs | Captures disease progression dynamics | Longitudinal studies, treatment response monitoring |

State-of-the-Art Machine Learning Techniques

Several advanced AI methods have proven particularly effective for multi-omics data:

- Autoencoders (AEs) and Variational Autoencoders (VAEs): Unsupervised neural networks that compress high-dimensional omics data into dense, lower-dimensional "latent spaces" where integration becomes computationally feasible while preserving biological patterns [3].

- Graph Convolutional Networks (GCNs): Specifically designed for network-structured data, making them ideal for biological networks where genes and proteins represent nodes and their interactions form edges [3].

- Similarity Network Fusion (SNF): Creates patient-similarity networks from each omics layer and iteratively fuses them into a single comprehensive network, enabling more accurate disease subtyping [3].

- Transformers: Originally developed for natural language processing, these models adapt well to biological data through self-attention mechanisms that weigh the importance of different features and data types [3].
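To make the self-attention idea in the last item concrete, the sketch below implements scaled dot-product self-attention in NumPy. Treating each row as an embedding of one omics layer for a single patient is an assumption made for illustration, and the projection matrices are random rather than learned.

```python
import numpy as np


def self_attention(X, Wq, Wk, Wv):
    """Scaled dot-product self-attention over the rows (tokens) of X."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[1])
    scores = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)  # row-wise softmax
    return weights @ V, weights


rng = np.random.default_rng(0)
d_model, d_head = 8, 4
# Four hypothetical tokens, e.g. one embedding per omics layer
tokens = rng.normal(size=(4, d_model))
Wq, Wk, Wv = (rng.normal(size=(d_model, d_head)) for _ in range(3))
output, attn = self_attention(tokens, Wq, Wk, Wv)
```

Each row of `attn` sums to one and indicates how strongly that token draws on every other token when its output representation is built.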

Experimental Protocols for Multi-Omics Studies

Implementing robust multi-omics studies requires meticulous experimental design and execution across several critical phases.

Study Design and Cohort Selection

Longitudinal Cohort Establishment: Large prospective cohorts form the backbone of multi-omics research, enabling understanding of genetic determinants, environmental exposures, disease natural history, and treatment response at population level [1]. Key considerations include:

- Ensure representative population diversity to achieve equity in genomic healthcare and extend precision medicine benefits to all populations [1]

- Address the current underrepresentation of non-European populations (participants of European descent account for approximately 86.3% of all genomic studies) through community-based participatory research frameworks [1]

- Develop specialized pediatric cohorts to understand genetic epidemiology of childhood diseases, as many existing cohorts have insufficient child representation [1]

Sample Collection and Processing:

- Implement standardized protocols for biospecimen collection, storage, and processing to maintain sample integrity across multiple analytical platforms [5]

- For limited tissue scenarios (e.g., oncology), consider technologies like ApoStream that capture viable whole cells from liquid biopsies, preserving cellular morphology for downstream multi-omic analysis [5]

- Apply high-resolution multiplexing technologies for simultaneous analysis of multiple molecular layers from minimal sample material [5]

Data Generation and Quality Control

Next-Generation Sequencing (NGS) Applications:

- Utilize sequencing by synthesis (Illumina platforms) for genome and exome sequencing, with modern systems like NovaSeq providing 6-16 Tb output and read lengths up to 2×250 bp [1]

- Implement RNA sequencing for transcriptome profiling with appropriate normalization (TPM, FPKM) to enable cross-sample comparison [3]

- Apply metagenomic sequencing for microbiome characterization, capturing microbial community structure and functional potential [4]
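The TPM normalization mentioned above for RNA sequencing can be sketched in a few lines. The counts and transcript lengths are invented for illustration, and the function name is hypothetical.

```python
def tpm(counts, lengths_bp):
    """Convert raw read counts to transcripts per million (TPM)."""
    # Step 1: reads per kilobase of transcript, correcting for gene length
    rpk = [count / (length / 1000) for count, length in zip(counts, lengths_bp)]
    # Step 2: rescale so the values in the sample sum to one million
    scale = sum(rpk) / 1_000_000
    return [value / scale for value in rpk]


# Hypothetical counts for three genes whose lengths differ threefold
counts = [100, 200, 300]
lengths = [1000, 2000, 3000]
values = tpm(counts, lengths)
```

Because every sample is rescaled to the same total, TPM values are proportions within a sample. FPKM applies the depth and length corrections in the opposite order, so its per-sample totals can differ.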

Proteomic and Metabolomic Profiling:

- Employ mass spectrometry-based platforms for protein identification and quantification, including post-translational modifications [3]

- Utilize targeted and untargeted mass spectrometry approaches for metabolomic profiling, providing snapshots of physiological state [3]

- Implement spectral flow cytometry for deep immune phenotyping, enabling analysis of 60+ markers and theoretical identification of thousands of cellular phenotypes [5]

Quality Control Measures:

- Apply batch effect correction methods (e.g., ComBat) to address technical variations from different processing batches [3]

- Implement rigorous normalization procedures specific to each omics data type to enable valid integration [3]

- Use quality metrics and visualization tools to identify outliers and technical artifacts before integration

The Scientist's Toolkit: Essential Research Reagents and Technologies

Successful multi-omics research requires specialized reagents, platforms, and computational tools. The following essential resources represent critical components of the multi-omics workflow.

Table 3: Essential Research Reagents and Platforms for Multi-Omics Studies

| Category | Specific Tools/Reagents | Primary Function | Application Context |
|---|---|---|---|
| Sequencing Platforms | Illumina NovaSeq, HiSeq | High-throughput DNA/RNA sequencing | Whole genome, exome, transcriptome sequencing |
| Proteomics Technologies | Mass spectrometry platforms | Protein identification and quantification | Proteomic profiling, post-translational modifications |
| Single-Cell Technologies | 10x Genomics, SeqWell | Single-cell RNA sequencing | Cellular heterogeneity, rare cell populations |
| Spatial Omics Platforms | 10x Visium, NanoString GeoMx | Tissue context preservation | Spatial transcriptomics, protein localization |
| Flow Cytometry | Spectral flow cytometers | Deep immunophenotyping | Immune cell characterization, biomarker discovery |
| Liquid Biopsy Technologies | ApoStream | Circulating tumor cell isolation | Non-invasive cancer monitoring, biomarker discovery |
| Variant Interpretation Tools | DeepVariant, GATK, REVEL | Genetic variant calling and annotation | Variant prioritization, pathogenicity prediction |
| AI Analysis Platforms | TensorFlow, PyTorch, custom pipelines | Pattern recognition across omics layers | Biomarker discovery, patient stratification |

The integration of multi-omics data represents a paradigm shift in biomedical research, moving from fragmented biological insights to a comprehensive systems-level understanding of health and disease. As computational capabilities advance and multi-omics technologies become more accessible, the clinical implementation of these approaches will accelerate, ultimately fulfilling the promise of precision medicine to deliver personalized, predictive, preventive, and participatory healthcare [1]. Future directions will need to address ongoing challenges in data standardization, computational infrastructure, diversity in genomic databases, and ethical implementation, but the foundation established by multi-omics integration already provides an unprecedented pathway to understanding and treating complex diseases.

Precision medicine represents a transformative healthcare model that utilizes an understanding of an individual’s genome, environment, and lifestyle to deliver customized healthcare [1]. This approach marks a paradigm shift from conventional, reactive disease control to proactive disease prevention and health preservation. The foundation of this revolution lies in the integration of diverse biological data layers, known as multi-omics. Multi-omics combines genomics, transcriptomics, proteomics, metabolomics, and other omics technologies to create a comprehensive picture of human biology [1] [6]. By 2025, multi-omics is poised to significantly advance personalized medicine, enabling more detailed patient health profiles, accelerating therapeutic development, and refining disease detection [6].

The power of multi-omics stems from its ability to overcome the limitations of single-omics approaches. While genomics provides a blueprint, it cannot fully capture the dynamic complexity of biological systems [7]. Integrative multi-omics, in which multiple omics data types are layered over one another, provides a more holistic understanding of human health and disease than any single approach on its own [1]. This integration is made possible by substantial advances in bioinformatics, data science, and artificial intelligence, which allow researchers to decipher the complex interactions between genes, proteins, metabolites, and environmental factors [1] [6]. The ultimate goal is to move beyond correlative relationships to establish causal mechanisms that can be targeted for therapeutic intervention across various diseases, including cancer, cardiovascular disorders, and neuropsychiatric conditions [8] [9].

Core Omics Technologies: From Genes to Metabolites

Defining the Omics Layers

The four primary omics layers follow the flow of information described by the central dogma of molecular biology (DNA to RNA to protein) and extend it to the metabolites on which proteins act, with each layer providing unique insights into biological systems. Genomics involves the study of a person's complete set of DNA, including all genes and intergenic regions. Unlike genetics, which focuses on individual genes, genomics examines the entire genome and how it is expressed, providing insights into inherited health risks and genetic predispositions to disease [9]. The Human Genome Project, completed in 2003, established the foundational reference sequence and revealed that the human genome contains only 20,000-25,000 protein-coding genes [1].

Transcriptomics focuses on the entire collection of RNA molecules, known as the transcriptome, within a cell. This includes messenger RNA (mRNA), which conveys genetic information for protein synthesis, as well as various non-coding RNAs. The transcriptome dynamically changes in response to cellular state and environmental stimuli, providing a snapshot of gene expression activity [9]. Notably, transcriptomes differ between cell types despite identical underlying DNA, reflecting cellular specialization [9].

Proteomics encompasses the study of the entire set of proteins—the proteome—expressed by a cell, tissue, or organism. Proteins are the functional effectors of cellular processes, and their analysis is more complex than nucleic acids due to post-translational modifications, protein-protein interactions, and structural diversity [9]. Proteomic approaches typically fall into three categories: expression proteomics (quantifying protein levels), structural proteomics (determining protein structures and locations), and functional proteomics (elucidating protein functions and interactions) [9].

Metabolomics analyzes the complete set of small-molecule metabolites (typically <1200 Da) within a biological system. The metabolome represents the downstream output of cellular processes and provides the most dynamic reflection of phenotypic state, serving as a molecular phenotype that integrates genetic, environmental, and lifestyle factors [7] [9]. Metabolites include lipids, amino acids, carbohydrates, and other biochemical intermediates that participate in and result from metabolic pathways [9].

Comparative Analysis of Omics Technologies

Table 1: Comparative analysis of the four core omics technologies

| Omics Field | Molecule Class | Key Technologies | Temporal Resolution | Key Applications |
|---|---|---|---|---|
| Genomics | DNA, genetic variants | Next-generation sequencing (NGS), Sanger sequencing, whole-genome sequencing, microarrays | Static (with exceptions for epigenetic changes) | Disease risk prediction, rare variant discovery, ancestry tracing, pharmacogenomics [1] [9] |
| Transcriptomics | RNA (mRNA, non-coding RNA) | RNA-seq, single-cell RNA-seq, microarrays, spatial transcriptomics | Minutes to hours | Gene expression profiling, alternative splicing analysis, biomarker discovery, response to therapeutics [8] [9] |
| Proteomics | Proteins, peptides | Mass spectrometry, protein microarrays, immunoassays, affinity-based profiling | Hours to days | Drug target identification, biomarker validation, signaling pathway analysis, post-translational modification mapping [9] |
| Metabolomics | Metabolites (lipids, sugars, amino acids, etc.) | Mass spectrometry, NMR spectroscopy, LC/GC-MS | Seconds to minutes | Biomarker discovery, nutrient profiling, toxicology assessment, metabolic pathway analysis [7] [9] |

Quantitative Capabilities of Omics Platforms

Table 2: Technical specifications and throughput of major omics platforms

| Technology Platform | Analytical Depth | Throughput Capacity | Key Limitations |
|---|---|---|---|
| Illumina NovaSeq (NGS) | 20-52 billion reads per run, read lengths up to 2×250 bp [1] | 6-16 terabases per run [1] | Short reads challenge haplotype phasing and structural variant detection |
| Single-cell RNA-seq | Profiles 1,000-10,000 cells per run, detects 1,000-5,000 genes per cell [8] | 10,000-100,000 cells in modern high-throughput systems | Sensitivity to cell viability, technical noise, high cost per cell |
| Mass spectrometry-based proteomics | Identifies 5,000-10,000+ proteins per sample in deep profiling, 500-1,000 proteins in high-throughput mode | 10s-100s of samples per batch | Dynamic range limitations, incomplete proteome coverage |
| LC-MS metabolomics | Detects 100s-1,000s of metabolites depending on chromatography and mass analyzer | 10s-100s of samples per batch | Unknown metabolite identification, spectral annotation challenges |

Methodological Workflows in Multi-Omics Research

Sample Preparation and Experimental Protocols

The integrity of multi-omics research begins with robust sample preparation. For genomic analyses, DNA extraction methods must preserve fragment length and minimize contamination. Modern next-generation sequencing (NGS) has advanced well beyond Sanger sequencing, with platforms like Illumina's NovaSeq providing outputs of 6-16 terabases per run, representing 20-52 billion reads with maximum read lengths of up to 2×250 base pairs [1]. For transcriptomic studies, RNA isolation requires strict RNase-free conditions and rapid stabilization so that the measured transcriptome reflects the sample's true state. Single-cell RNA sequencing protocols typically involve cell dissociation, viability assessment, and either plate-based or droplet-based partitioning [8].

Proteomic sample preparation focuses on protein extraction, digestion, and purification. Typical workflows involve tissue homogenization in denaturing buffers, protein quantification, protease digestion (usually with trypsin), and peptide cleanup prior to mass spectrometry analysis. Metabolomic protocols require immediate quenching of metabolic activity upon sample collection, using cold methanol or other organic solvents to preserve the metabolic snapshot. Different extraction methods are employed for various metabolite classes (e.g., liquid-liquid extraction for lipids, solid-phase extraction for polar metabolites).

Single-Cell Omics Advancements

Single-cell omics technologies have emerged as particularly powerful tools for investigating cellular heterogeneity, especially in complex tissues like the human brain [8]. These techniques have overcome the limitations of bulk tissue analysis, where molecular signals from rare cell types are diluted or obscured. Key methodological developments include fluorescence-activated cell sorting (FACS) and fluorescence-activated nuclei sorting (FANS), which enable semi-automated isolation of specific cell populations based on fluorescent markers [8]. The evolution from manual cell picking to high-throughput droplet-based systems has enabled researchers to profile thousands to millions of individual cells in a single experiment.

Recent innovations in single-cell multi-omics allow simultaneous measurement of multiple molecular layers from the same cell. For example, technologies like CITE-seq (Cellular Indexing of Transcriptomes and Epitopes by Sequencing) enable coupled transcriptome and surface protein quantification, while methods like scNMT-seq (single-cell Nucleosome, Methylation, and Transcription sequencing) provide integrated data on chromatin accessibility, DNA methylation, and transcriptomes from the same single cells [8]. These approaches are particularly valuable for neuropsychiatric research, where they have revealed cell-type-specific molecular alterations in conditions like dementia and depression [8].

Essential Research Reagents and Materials

Table 3: Essential research reagents and materials for multi-omics investigations

| Reagent/Material Category | Specific Examples | Key Functions | Technical Considerations |
|---|---|---|---|
| Nucleic Acid Isolation Kits | DNA extraction kits, RNA stabilization reagents, magnetic bead-based purification systems | Preservation and purification of high-quality nucleic acids free of contaminants | RNase-free environment for RNA work, assessment of DNA integrity numbers (DIN) and RNA integrity numbers (RIN) |
| Enzymes for Molecular Biology | Restriction enzymes, reverse transcriptases, DNA/RNA polymerases, proteases (trypsin) | Nucleic acid modification, amplification, and digestion | Batch-to-batch consistency, activity validation under specific buffer conditions |
| Separation Materials | LC columns (C18, HILIC), electrophoresis gels, solid-phase extraction cartridges | Separation of complex mixtures prior to analysis | Column chemistry selection based on analyte properties, particle size for resolution |
| Detection Reagents | Fluorescent dyes, antibody conjugates, isotopic labels, calibration standards | Signal generation and quantification | Sensitivity, dynamic range, specificity, minimal background interference |
| Cell Isolation Tools | FACS antibodies, nucleus sorting antibodies, dissociation enzymes, microfluidic devices | Isolation of specific cell populations or single cells | Cell viability preservation, surface epitope preservation, sorting efficiency |

Data Integration and Analytical Approaches

Multi-Omics Data Integration Strategies

The integration of multiple omics datasets presents significant computational challenges but offers unparalleled biological insights. Several methodological frameworks have been developed for this purpose. Pathway- or biochemical-ontology-based integration tools like IMPALA, iPEAP, and MetaboAnalyst leverage predefined biological pathways to identify coordinated changes across omics layers [7]. These methods facilitate biological interpretation by integrating domain knowledge with experimental results, though they are constrained by the completeness and accuracy of pathway annotations.
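Pathway-based methods often rest on an over-representation test: given a list of altered molecules, is a pathway hit more often than chance would predict? The sketch below computes a one-sided hypergeometric p-value with the standard library. It illustrates the general statistic only and is not the implementation used by IMPALA, iPEAP, or MetaboAnalyst; the numbers are invented.

```python
from math import comb


def enrichment_p_value(n_background, n_pathway, n_hits, n_overlap):
    """One-sided hypergeometric p-value for pathway over-representation.

    n_background: all measured molecules
    n_pathway:    measured molecules annotated to the pathway
    n_hits:       molecules flagged as altered
    n_overlap:    altered molecules that belong to the pathway
    """
    total = comb(n_background, n_hits)
    upper = min(n_pathway, n_hits)
    # Probability of seeing n_overlap or more pathway members among the hits
    tail = sum(
        comb(n_pathway, k) * comb(n_background - n_pathway, n_hits - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total


# Hypothetical example: 20 measured molecules, 5 in the pathway,
# 4 altered overall, 3 of those altered molecules in the pathway
p_value = enrichment_p_value(20, 5, 4, 3)
```

When several omics layers are tested against the same pathway, per-layer p-values like this one are typically combined or corrected for multiple testing. How that is done differs between tools.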

Biological-network-based integration approaches construct networks representing complex connections between cellular components. Tools such as SAMNetWeb, pwOmics, and Metscape (a Cytoscape plugin) enable the visualization and analysis of gene-protein-metabolite networks, identifying altered graph neighborhoods without relying on predefined pathways [7]. MetaMapR extends this approach by incorporating biochemical reaction information with molecular structural and mass spectral similarity, enabling integration even for molecules with unknown biological function [7].

Empirical correlation analysis methods are particularly valuable when biochemical domain knowledge is limited. The R package mixOmics implements multivariate techniques including sparse principal component analysis (sPCA) and regularized canonical correlation analysis (rCCA) to reduce dimensionality and identify relationships between two high-dimensional datasets [7]. Weighted gene co-expression network analysis (WGCNA) extends correlation analysis to include graph topology measures and has been widely applied to identify clusters of highly connected genes related to clinical traits or other omics data [7].
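The core of a weighted correlation network can be sketched as an unsigned soft-threshold adjacency, where each pairwise correlation is raised to a power so that weak correlations shrink toward zero. The example below is a NumPy illustration of that one step, not the WGCNA R package itself; the power of 6 and the synthetic features are assumptions.

```python
import numpy as np


def soft_threshold_adjacency(X, beta=6):
    """Unsigned weighted adjacency |cor(i, j)| ** beta between features.

    X: samples x features array; beta: soft-thresholding power (assumed value).
    """
    corr = np.corrcoef(X, rowvar=False)
    adjacency = np.abs(corr) ** beta
    np.fill_diagonal(adjacency, 0.0)  # ignore self-connections
    return adjacency


rng = np.random.default_rng(1)
base = rng.normal(size=30)
# Three hypothetical features: two tightly co-varying, one unrelated
X = np.column_stack([
    base,
    base + 0.1 * rng.normal(size=30),
    rng.normal(size=30),
])
A = soft_threshold_adjacency(X)
connectivity = A.sum(axis=1)  # per-feature connectivity used to find hub features
```

The full method goes further, for example by deriving a topological overlap measure and clustering features into modules. Those steps are omitted here.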

Bioinformatics Tools for Multi-Omics Analysis

Table 4: Key bioinformatics tools for multi-omics data integration and analysis

| Tool Name | Primary Function | Input Data Types | Methodology | Access |
|---|---|---|---|---|
| IMPALA | Pathway-level analysis | Gene/protein expression, metabolomics | Pathway enrichment | Web-based [7] |
| MetaboAnalyst | Comprehensive metabolomics analysis | Transcriptomics, metabolomics | Functional enrichment, pathway analysis | Web-based [7] |
| pwOmics | Signaling network analysis | Transcriptomics, proteomics | Time-series consensus networks | R Bioconductor [7] |
| Metscape | Gene-metabolite network analysis | Gene expression, metabolite data | Metabolic pathway enrichment | Cytoscape plugin [7] |
| WGCNA | Correlation network analysis | Any omics data | Weighted correlation network analysis | R package [7] |
| Grinn | Graph-database integration | Genomics, proteomics, metabolomics | Neo4j graph database with correlation analysis | R package [7] |
| mixOmics | Multivariate analysis | Any omics data | sPCA, rCCA, sPLS-DA | R package [7] |

Artificial Intelligence in Multi-Omics Integration

Artificial intelligence and machine learning have become indispensable for analyzing complex multi-omics datasets [6]. AI approaches are particularly valuable for identifying patterns and relationships across diverse data modalities that might escape conventional statistical methods. Machine learning-based variant classification tools offer advantages over statistics-based predictors because they are data-driven and yield probabilistic pathogenicity scores for prioritizing variants of unknown significance [1]. AI also facilitates patient stratification by integrating multi-omics data with clinical outcomes, enabling prediction of disease progression, drug efficacy, and optimal treatment strategies [6].

As multi-omics technologies generate increasingly large and complex datasets, federated computing approaches and advanced data storage infrastructures are emerging to support collaborative research while addressing privacy concerns [6]. These computational advancements are crucial for realizing the full potential of multi-omics in precision medicine, transforming vast biological datasets into clinically actionable insights.

Applications in Precision Medicine and Therapeutic Development

Advancing Rare Disease Diagnosis and Treatment

Multi-omics approaches are revolutionizing rare disease diagnosis by overcoming the limitations of single-omics approaches. Initiatives like the U.K.'s 100,000 Genomes Project have demonstrated how integrating genomic data with other omics layers can provide diagnoses for patients with rare genetic disorders who remained undiagnosed after conventional testing [6]. The genotype-first approach or reverse phenotyping has the potential to identify new genotype-phenotype associations, enhance disease subclassification, and widen the phenotypic spectrum of genetic variants [1]. By combining genomic findings with transcriptomic, proteomic, and metabolomic data, clinicians can better interpret variants of uncertain significance and identify pathological mechanisms that might be amenable to therapeutic intervention.

The clinical impact of multi-omics extends beyond diagnosis to treatment selection and development. In oncology, multi-omics profiling enables the identification of driver mutations and corresponding protein expression patterns that can be targeted with specific therapeutics [9] [6]. Similarly, integrating metabolomic data with genomic information helps identify metabolic vulnerabilities in cancer cells that can be exploited therapeutically. The ability to profile multiple molecular layers from limited clinical samples, such as liquid biopsies, makes multi-omics particularly valuable for monitoring treatment response and detecting emergent resistance mechanisms [6].

Enabling Personalized Therapeutic Strategies

Multi-omics data integration facilitates the development of personalized therapeutic strategies in several key areas. In pharmacogenomics, combining genomic data about drug metabolism pathways with proteomic information about drug targets and metabolomic profiles of drug response enables more precise medication selection and dosing [1]. For cell and gene therapies, multi-omics characterization of starting materials and final products ensures quality control and helps predict therapeutic efficacy [6]. In drug discovery, multi-omics approaches enable target identification and validation through comprehensive understanding of disease pathways across molecular layers [10].

The rise of single-cell multi-omics is particularly transformative for personalized medicine applications. By characterizing cellular heterogeneity in patient samples, these technologies can identify rare cell populations that drive disease progression or treatment resistance [8] [6]. In neuropsychiatric disorders, single-cell omics applied to postmortem brain tissue has revealed cell-type-specific molecular alterations in conditions like dementia and depression, providing new targets for therapeutic intervention [8]. Similarly, in cancer, single-cell multi-omics can identify minority subclones with resistant mutations that would be missed by bulk tumor profiling.

Future Directions and Challenges

Despite significant progress, several challenges remain in the widespread implementation of multi-omics approaches in precision medicine. Data integration hurdles include technical variability between platforms, batch effects, and the computational complexity of integrating heterogeneous datasets [7] [6]. Standardization needs encompass analytical protocols, data quality metrics, and computational workflows to ensure reproducibility across laboratories [6]. Equity in genomic research requires addressing the significant underrepresentation of non-European populations in existing datasets, which currently limits the applicability of findings across diverse populations [1]. It is estimated that participants of European descent constitute 86.3% of all genomic studies conducted worldwide, while African, South Asian, and Hispanic descent participants together constitute less than 10% [1].

Future advancements will likely focus on developing more sophisticated AI-driven integration methods, creating scalable computational infrastructures for multi-omics data, and establishing frameworks for responsible data sharing [6]. The continued evolution of single-cell and spatial omics technologies will provide increasingly detailed maps of cellular organization and function in both health and disease [8]. As these technologies mature and barriers are addressed, multi-omics approaches will become increasingly central to precision medicine, enabling truly personalized approaches to disease prevention, diagnosis, and treatment across diverse populations.

Precision medicine represents a transformative healthcare model that leverages a person’s genomic, environmental, and lifestyle data to deliver customized healthcare [1]. This approach marks a paradigm shift from conventional, reactive disease control to proactive disease prevention and health preservation. The foundation of this revolution lies in the ability to move beyond isolated data types—such as genomics alone—to a holistic, systems biology view that integrates multiple layers of biological information. This integration provides an unprecedented opportunity to decipher the complex and heterogeneous interactions between genes, diet, and lifestyle that underlie human health and disease [1]. The emergence of multi-omics technologies, including transcriptomics, proteomics, epigenomics, metabolomics, and microbiomics, has substantially enhanced our capacity to maximize the applicability of genomics data for improved health outcomes [1]. Integrative multi-omics, defined as the combination of multiple 'omics' data layered over each other along with their interconnections and interactions, delivers a more comprehensive understanding of human biology than any single approach can provide separately.

The Multi-Omics Landscape: From Single Layers to Unified Views

The Omics Cascade and Technological Foundations

The journey toward a systems biology view begins with understanding the distinct yet interconnected layers of biological information. Each omics layer provides a unique perspective on cellular function, from genetic blueprint to metabolic activity.

Table 1: The Multi-Omics Cascade: Data Types, Technologies, and Insights

| Omics Layer | Biological Entity | Key Technologies | Primary Insights |
|---|---|---|---|
| Genomics | DNA | Next-Generation Sequencing (NGS), Whole Genome Sequencing | Genetic blueprint, inherited variations, disease predisposition |
| Epigenomics | DNA modifications | scATAC-seq, snmC-seq | Regulatory landscape, chromatin accessibility, methylation patterns |
| Transcriptomics | RNA | scRNA-seq, RNA-Seq | Gene expression patterns, regulatory responses, cellular activity |
| Proteomics | Proteins | Mass spectrometry | Functional effectors, protein expression and interactions |
| Metabolomics | Metabolites | Mass spectrometry, NMR | Metabolic state, physiological responses, downstream phenotypes |
| Microbiomics | Microorganisms | 16S rRNA sequencing, metagenomics | Microbial communities, host-microbe interactions, ecosystem impacts |

The technological revolution, particularly in next-generation sequencing (NGS), has been instrumental in enabling this multi-omics approach. NGS encompasses several methods, including sequencing by synthesis, pyrosequencing, sequencing by ligation, and ion semiconductor sequencing; of these, sequencing by synthesis using PCR is the most widely used for genome and exome sequencing [1]. Continuous refinement of NGS platforms has raised output per run from 1.6–1.8 terabases (Tb) with HiSeq technology to 6–16 Tb with NovaSeq technology, enabling the generation of billions of reads per run [1].

The Single-Cell Revolution

Single-cell technologies have dramatically enhanced the resolution of multi-omics studies by allowing researchers to probe regulatory maps through multiple omics layers at the individual cell level [11]. Techniques such as single-cell ATAC-sequencing (scATAC-seq) for chromatin accessibility, snmC-seq for DNA methylation, and scRNA-seq for the transcriptome offer a unique opportunity to unveil the regulatory basis for the functions of diverse cell types [11]. The most recent innovation is multimodal single-cell omics, in which two omic profiles (e.g., proteomics and transcriptomics) are captured from the same cell, along with spatially resolved techniques that preserve each cell's spatial context within the tissue [12].

Computational Integration Strategies: Bridging the Feature Space Gap

The Core Challenge of Multi-Omics Integration

A fundamental obstacle in integrating unpaired multi-omics data is that different modalities have distinct feature spaces—for example, accessible chromatin regions in scATAC-seq versus genes in scRNA-seq [11]. This creates a significant computational challenge for creating unified biological models. Additional complexities include data heterogeneity and scale, missing data, batch effects, and staggering computational requirements often involving petabytes of data [3].

Integration Frameworks and Machine Learning Approaches

Table 2: Multi-Omics Integration Strategies: Approaches and Applications

| Integration Strategy | Timing of Integration | Key Advantages | Ideal Use Cases | Example Methods |
|---|---|---|---|---|
| Early Integration (Feature-level) | Before analysis | Captures all cross-omics interactions; preserves raw information | Discovery of novel, unforeseen interactions across modalities | Simple concatenation, Autoencoders |
| Intermediate Integration | During analysis | Reduces complexity; incorporates biological context through networks | Network biology, pathway analysis, functional module discovery | Graph Convolutional Networks, Similarity Network Fusion |
| Late Integration (Model-level) | After individual analysis | Handles missing data well; computationally efficient | Predictive modeling, clinical outcome prediction | Ensemble methods, Stacking, Weighted averaging |
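To make the contrast between the first and last rows of the table concrete, the following minimal sketch shows early integration as scaled feature concatenation and late integration as a weighted average of per-modality prediction scores. The function names, the synthetic data, and the convention of marking a missing modality with NaN are illustrative assumptions, not part of any cited method.

```python
import numpy as np

def zscore(block):
    """Standardize each feature (column) to zero mean and unit variance."""
    block = np.asarray(block, dtype=float)
    std = block.std(axis=0)
    std[std == 0] = 1.0  # guard against constant features
    return (block - block.mean(axis=0)) / std

def early_integration(blocks):
    """Feature-level integration: scale each omics block, then concatenate columns."""
    return np.hstack([zscore(b) for b in blocks])

def late_integration(scores, weights=None):
    """Model-level integration: weighted average of per-modality scores.

    scores has shape (n_modalities, n_samples); NaN marks a modality that is
    missing for a sample (assumed convention) and is skipped for that sample.
    """
    scores = np.asarray(scores, dtype=float)
    if weights is None:
        weights = np.ones(scores.shape[0])
    present = ~np.isnan(scores)
    w = np.asarray(weights, dtype=float)[:, None] * present
    return np.nansum(scores * w, axis=0) / w.sum(axis=0)

# Synthetic example: 5 samples, 4 transcript features and 3 protein features.
rng = np.random.default_rng(0)
rna = rng.normal(size=(5, 4))
protein = rng.normal(size=(5, 3))
fused = early_integration([rna, protein])      # shape (5, 7)

# Two modality-specific models; the first has no score for the third sample.
per_modality_scores = [[0.9, 0.2, np.nan],
                       [0.7, 0.4, 0.6]]
combined = late_integration(per_modality_scores)
```

The late-integration function illustrates why this strategy tolerates missing data: a sample lacking one modality is still scored from the modalities that are present.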

The GLUE (Graph-Linked Unified Embedding) framework represents an advanced approach to addressing the fundamental challenge of distinct feature spaces across omics layers [11]. GLUE uses a knowledge-based "guidance graph" that explicitly models cross-layer regulatory interactions—for example, connecting accessible chromatin regions to their putative downstream genes with signed edges (positive or negative regulatory effects) [11]. This graph then guides the adversarial alignment of cell embeddings learned through variational autoencoders tailored to each omics layer, resulting in accurate integration while simultaneously enabling regulatory inference [11].

Systematic benchmarking has demonstrated that GLUE achieves superior performance in matching corresponding cell states across modalities, producing cell embeddings in which biological variation is faithfully conserved and omics layers are well mixed [11]. Notably, GLUE reduces single-cell level alignment error by 1.5- to 3.6-fold compared to other methods and is robust to inaccuracies in prior knowledge, maintaining performance even when up to 90% of the regulatory interactions in the guidance graph are corrupted [11].
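The text above does not name the alignment-error metric. One metric commonly used for this purpose when ground-truth cell pairing is available is FOSCTTM (fraction of samples closer than the true match); the sketch below assumes that metric and assumes that row i of both embedding matrices comes from the same cell. The function name and toy data are illustrative.

```python
import numpy as np

def foscttm(x, y):
    """Fraction of samples closer than the true match (lower is better).

    x, y: arrays of shape (n_cells, dim); row i of x and row i of y are
    assumed to be embeddings of the same cell in two modalities.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    dist = np.linalg.norm(x[:, None, :] - y[None, :, :], axis=2)
    true_match = np.diag(dist)
    # For each cell in x: fraction of y cells strictly closer than its true partner.
    frac_x = (dist < true_match[:, None]).mean(axis=1)
    # For each cell in y: fraction of x cells strictly closer than its true partner.
    frac_y = (dist < true_match[None, :]).mean(axis=0)
    return float((frac_x.mean() + frac_y.mean()) / 2)

# Perfect alignment: every cell sits exactly on its partner.
aligned = np.array([[0.0], [1.0], [2.0]])
perfect = foscttm(aligned, aligned)

# Poor alignment: the second modality is mirrored, so the outer cells are mismatched.
mirrored = foscttm(aligned, aligned[::-1])
```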

Artificial Intelligence and Machine Learning Solutions

The sheer volume and complexity of multi-modal genomic and multi-omics data make integration for precision medicine impractical without AI and machine learning [3]. These approaches can detect subtle associations across millions of data points that conventional analysis would miss.

Key machine learning techniques powering multi-omics integration include:

- Autoencoders (AEs) and Variational Autoencoders (VAEs): Unsupervised neural networks that compress high-dimensional omics data into a dense, lower-dimensional "latent space," making integration computationally feasible while preserving key biological patterns [3].

- Graph Convolutional Networks (GCNs): Designed for network-structured data, GCNs learn from biological networks by aggregating information from a node's neighbors to make predictions, proving effective for clinical outcome prediction [3].

- Similarity Network Fusion (SNF): Creates patient-similarity networks from each omics layer and iteratively fuses them into a single comprehensive network, enabling more accurate disease subtyping and prognosis prediction [3].

- Transformers: Originally developed for natural language processing, transformers adapt well to biological data through self-attention mechanisms that weigh the importance of different features and data types, helping to identify critical biomarkers in noisy data [3].
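As an illustration of the neighbor aggregation described for graph convolutional networks in the list above, the following sketch implements a single layer with the widely used symmetric normalization of the adjacency matrix. The two-node graph, feature values, and weight matrix are toy assumptions chosen so the result is easy to verify by hand.

```python
import numpy as np

def gcn_layer(adj, features, weights):
    """One graph convolution: ReLU(D^-1/2 (A + I) D^-1/2 @ H @ W)."""
    adj = np.asarray(adj, dtype=float)
    a_hat = adj + np.eye(adj.shape[0])           # add self-loops
    deg = a_hat.sum(axis=1)                      # node degrees including self-loop
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    norm_adj = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.maximum(norm_adj @ features @ weights, 0.0)

# Toy graph: two connected nodes (e.g., two interacting genes), one feature each.
adjacency = np.array([[0, 1],
                      [1, 0]])
node_features = np.array([[1.0],
                          [3.0]])
layer_weights = np.array([[1.0]])
hidden = gcn_layer(adjacency, node_features, layer_weights)
```

After one layer each node holds the average of its own value and its neighbor's, which is the sense in which a GCN aggregates information from a node's neighborhood.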

Experimental Protocols and Research Toolkit

Detailed Methodology for Multi-Omics Integration

Protocol 1: GLUE Framework Implementation for Single-Cell Multi-Omics Integration

This protocol outlines the step-by-step procedure for implementing the GLUE framework to integrate unpaired single-cell multi-omics data, based on the approach described by Cao and Gao [11].

Data Preprocessing and Feature Selection

- For each omics modality (e.g., scRNA-seq, scATAC-seq), perform quality control, normalization, and feature selection.

- For scRNA-seq: Filter cells based on mitochondrial percentage, total counts, and detected genes. Normalize using standard methods (e.g., log(TPM+1)).

- For scATAC-seq: Filter cells based on transcription start site enrichment, total fragments, and nucleosome signal. Create peak count matrices.

- Select highly variable features for each modality to reduce dimensionality and computational requirements.
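The scRNA-seq steps above can be sketched with NumPy alone. All thresholds, the per-cell scaling factor, and the use of plain variance ranking as a stand-in for a dedicated highly-variable-gene method are illustrative assumptions; in practice, dedicated single-cell toolkits would typically be used for these steps.

```python
import numpy as np

def preprocess_counts(counts, mito_mask, max_mito_frac=0.2, min_counts=500,
                      min_genes=200, n_top=2000, scale=1e4):
    """Filter cells, log-normalize, and keep the most variable genes.

    counts: (n_cells, n_genes) raw count matrix.
    mito_mask: boolean vector flagging mitochondrial genes.
    Returns (normalized matrix, boolean cell mask, kept gene indices).
    """
    counts = np.asarray(counts, dtype=float)
    mito_mask = np.asarray(mito_mask, dtype=bool)
    total = counts.sum(axis=1)
    n_detected = (counts > 0).sum(axis=1)
    mito_frac = counts[:, mito_mask].sum(axis=1) / np.maximum(total, 1.0)
    keep = (total >= min_counts) & (n_detected >= min_genes) & (mito_frac <= max_mito_frac)

    kept = counts[keep]
    # Library-size normalization followed by log(1 + x).
    norm = np.log1p(kept / kept.sum(axis=1, keepdims=True) * scale)
    # Crude feature selection: rank genes by variance of normalized expression.
    top = np.sort(np.argsort(norm.var(axis=0))[::-1][:n_top])
    return norm[:, top], keep, top

# Toy matrix: 4 cells x 4 genes; the second gene is mitochondrial.
toy_counts = [[10, 0, 5, 5],
              [1, 0, 0, 0],
              [4, 4, 4, 8],
              [0, 20, 0, 0]]
toy_mito = [False, True, False, False]
matrix, cell_mask, gene_idx = preprocess_counts(
    toy_counts, toy_mito, max_mito_frac=0.5, min_counts=5, min_genes=2, n_top=2)
```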

Guidance Graph Construction

- Construct a knowledge-based bipartite graph connecting features across omics layers.

- For scRNA-seq and scATAC-seq integration: Connect ATAC peaks to genes if they overlap in the gene body or proximal promoter regions (typically ±2 kb from transcription start site).

- Assign edge signs based on known regulatory relationships: positive edges for activating relationships, negative edges for repressive relationships (e.g., gene body DNA methylation typically receives negative edges due to negative correlation with expression).
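The peak-to-gene rule above can be sketched as an interval-overlap test. The `Gene` and `Peak` record types, the closed-interval coordinate convention, and the default positive edge sign are assumptions made for this illustration; they are not the data structures of any particular package.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Gene:
    name: str
    chrom: str
    start: int
    end: int
    strand: str  # "+" or "-"

@dataclass(frozen=True)
class Peak:
    name: str
    chrom: str
    start: int
    end: int

def build_guidance_graph(genes, peaks, promoter_flank=2000, sign=1):
    """Return (peak, gene, sign) edges for peaks overlapping the gene body or promoter."""
    edges = []
    for gene in genes:
        # The TSS is the gene start on the + strand and the gene end on the - strand.
        tss = gene.start if gene.strand == "+" else gene.end
        # Union of the gene body and the +/- promoter_flank window around the TSS.
        window_start = min(gene.start, tss - promoter_flank)
        window_end = max(gene.end, tss + promoter_flank)
        for peak in peaks:
            same_chrom = peak.chrom == gene.chrom
            if same_chrom and peak.start <= window_end and peak.end >= window_start:
                edges.append((peak.name, gene.name, sign))
    return edges

genes = [Gene("G1", "chr1", 10_000, 15_000, "+")]
peaks = [
    Peak("p1", "chr1", 8_500, 9_000),    # inside the promoter window
    Peak("p2", "chr1", 7_000, 7_500),    # too far upstream
    Peak("p3", "chr1", 14_000, 16_000),  # overlaps the gene body
    Peak("p4", "chr2", 10_000, 11_000),  # different chromosome
]
edges = build_guidance_graph(genes, peaks)
```

For layers with a repressive relationship, such as the gene body DNA methylation example above, the same function could be called with `sign=-1`.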

Model Configuration and Training

- Implement separate variational autoencoders for each omics modality with modality-specific probabilistic decoders.

- Configure the adversarial alignment module with a multilayer perceptron discriminator.

- Set hyperparameters: latent dimension (typically 16-64), learning rate (typically 0.001-0.01), and number of training iterations (typically 10,000-50,000).

- Train the model using stochastic gradient descent with adversarial training until convergence.

Integration and Downstream Analysis

- Extract aligned cell embeddings from the trained model.

- Perform clustering, visualization (UMAP/t-SNE), and cell type annotation on the integrated embeddings.

- Transfer labels across modalities using neighborhood-based label transfer.

- Validate integration quality using metrics such as integration consistency score.
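Neighborhood-based label transfer, as listed above, can be sketched as a k-nearest-neighbor majority vote in the shared embedding space. The function name, the Euclidean distance, and the value of k are illustrative assumptions.

```python
from collections import Counter

import numpy as np

def transfer_labels(ref_embeddings, ref_labels, query_embeddings, k=5):
    """Assign each query cell the majority label among its k nearest reference cells."""
    ref = np.asarray(ref_embeddings, dtype=float)
    query = np.asarray(query_embeddings, dtype=float)
    dist = np.linalg.norm(query[:, None, :] - ref[None, :, :], axis=2)
    nearest = np.argsort(dist, axis=1)[:, :k]
    return [Counter(ref_labels[j] for j in row).most_common(1)[0][0]
            for row in nearest]

# Annotated scRNA-seq cells (reference) and unannotated scATAC-seq cells (query),
# both assumed to be already embedded in the same aligned space.
rna_embeddings = [[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]]
rna_labels = ["T cell", "T cell", "T cell", "B cell", "B cell", "B cell"]
atac_embeddings = [[0.2, 0.2], [10.5, 10.5]]
atac_labels = transfer_labels(rna_embeddings, rna_labels, atac_embeddings, k=3)
```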

Regulatory Inference

- Extract feature embeddings from the trained model.

- Refine the guidance graph based on the learned feature embeddings.

- Identify significant regulatory interactions using the refined graph.

- Validate inferred regulations through comparison with known regulatory databases and experimental validation.
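One plausible way to score candidate interactions from learned feature embeddings is the cosine similarity between the embeddings of the two features on each guidance-graph edge, keeping edges above a threshold. This scoring rule, the threshold, and the toy embeddings are assumptions for illustration; a real analysis would also need a significance test, which is omitted here.

```python
import numpy as np

def score_edges(edges, embeddings):
    """Cosine similarity between the feature embeddings at both ends of each edge."""
    scores = {}
    for source, target in edges:
        u = np.asarray(embeddings[source], dtype=float)
        v = np.asarray(embeddings[target], dtype=float)
        scores[(source, target)] = float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))
    return scores

def refine_graph(scores, threshold=0.5):
    """Keep only edges whose similarity exceeds the (assumed) threshold."""
    return [edge for edge, score in scores.items() if score > threshold]

feature_embeddings = {
    "peak1": [1.0, 0.0],
    "geneA": [2.0, 0.0],   # same direction as peak1
    "geneB": [0.0, 3.0],   # orthogonal to peak1
}
candidate_edges = [("peak1", "geneA"), ("peak1", "geneB")]
edge_scores = score_edges(candidate_edges, feature_embeddings)
refined_edges = refine_graph(edge_scores)
```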

Essential Research Reagent Solutions

Table 3: Research Reagent Solutions for Multi-Omics Studies

| Reagent/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Single-Cell Isolation | 10x Genomics Chromium System, Fluidigm C1 | High-throughput single-cell partitioning and barcoding | Preparation of single-cell suspensions for sequencing |
| Multi-Omics Assay Kits | 10X Multiome ATAC + Gene Expression, SHARE-seq, SNARE-seq | Simultaneous measurement of multiple omics modalities from same cells | Paired multi-omics data generation for direct integration |
| Library Preparation | Illumina Nextera, Smart-seq2, ATAC-seq Kits | Preparation of sequencing libraries from specific molecular fractions | Conversion of biological samples to sequence-ready formats |
| Sequencing Reagents | Illumina NovaSeq S-Prime Kits, PacBio SMRTbell | High-throughput DNA/RNA sequencing with various read lengths | Generation of raw sequencing data from prepared libraries |
| Bioinformatics Tools | GLUE, Seurat, Scanpy, Cell Ranger | Computational processing, integration, and analysis of omics data | Downstream data analysis and biological interpretation |

Applications in Precision Medicine and Therapeutic Development

Clinical Translation and Biomarker Discovery

Integrated multi-omics approaches are demonstrating significant impact across multiple clinical domains, particularly in oncology. In glioma research, for example, multi-omics strategies are being used to decipher the molecular taxonomy of adult-type diffuse gliomas, with the integration of multilayer data combined with machine-learning-based algorithms paving the way for advancements in patient prognosis and the development of personalized, targeted therapeutic interventions [13]. By combining genomics, transcriptomics (including sex-dependent differential expression patterns), epigenomics, proteomics, metabolomics, radiomics, single-cell analysis, and spatial omics into a comprehensive framework, researchers can deepen their understanding of glioma biology and enhance diagnostic precision, prognostic accuracy, and treatment efficacy [13].

One of the most impactful applications of integrated omics is the discovery of novel biomarkers that can serve as early warning signs, diagnostic tools, or indicators of treatment response [3]. By integrating genomics, transcriptomics, and proteomics, researchers can uncover complex molecular patterns of disease long before symptoms manifest. Multi-modal approaches are showing particular promise in detecting cancers earlier, where combining liquid biopsy data (circulating tumor DNA) with proteomic markers and clinical risk factors can significantly improve early detection accuracy for multiple cancer types from a single blood draw [3].

Pharmacological Applications and Drug Development

The integration of single-cell technologies with multi-omics approaches has created extraordinary opportunities in pharmacology and therapeutic development. Single-cell biofluorescence analysis, when combined with deep neural networks, can reveal the mechanisms of action of screened drugs [12]. Similarly, the idTRAX algorithm, which combines biofluorescent drug screening with machine learning, has demonstrated success in identifying cancer-selective kinase inhibitors [12].

The trifecta of single-cell omics, systems biology, and machine learning contributes significantly to pharmacological research by enabling:

- Cell-type specific drug targeting: Identifying how drugs target and create side effects in specific cell types by molecularly deconvoluting these populations [12].

- Heterogeneous population targeting: Characterizing and targeting disease-causing cells within heterogeneous populations, particularly relevant in cancer and infectious diseases [12].

- Predictive systems development: Increasing the accuracy of predictive algorithms for drug response by incorporating cell type specificity and heterogeneity characterization [12].

Future Perspectives and Challenges

Despite significant advancements, several challenges remain in the full implementation of integrated multi-omics approaches. Data diversity continues to be a critical issue, with participants of European descent constituting approximately 86.3% of all genomic studies ever conducted worldwide, while participants of African, South Asian, and Hispanic descent together constitute less than 10% of studies [1]. This limited representation creates substantial gaps in our understanding of genetic variation across human populations and hampers the equitable application of precision medicine benefits.

Additional challenges include the accurate interpretation of genomic sequences, with only a quarter of the more than 90,000 known variants having their pathological significance classified while the rest are classified as variants of unknown significance [1]. The development of more sophisticated computational methods that can handle the increasing volume and complexity of multi-omics data while remaining interpretable to biologists and clinicians represents another significant hurdle.

Future directions will likely focus on the development of more advanced knowledge-guided deep learning frameworks, enhanced methods for temporal multi-omics integration to understand disease progression, and improved approaches for translating computational findings into clinically actionable insights. As these technologies mature, the power of integration from single layers to a systems biology view will continue to transform our understanding of human health and disease, ultimately fulfilling the promise of precision medicine for diverse populations worldwide.

Precision medicine represents a transformative healthcare model that utilizes an individual’s genomic, environmental, and lifestyle information to deliver customized healthcare [1]. Multi-omics approaches—which integrate data from genomics, transcriptomics, proteomics, epigenomics, metabolomics, and microbiomics—are fundamental to realizing this vision, providing a systems biology framework for understanding human health and disease [1]. However, the robustness and translational potential of multi-omics research critically depend on two foundational elements: longitudinal study designs and population diversity in research cohorts.

Longitudinal cohorts provide the temporal dimension necessary to understand disease progression, identify dynamic biomarkers, and decipher complex gene-environment interactions [14]. Meanwhile, diverse participant inclusion ensures that scientific discoveries benefit all populations equitably and enhances the statistical power to detect genuine biological signals [15]. This technical guide examines the integral role of longitudinal cohorts and diversity as the backbone of robust multi-omics research within the broader context of precision medicine.

The Scientific Rationale: Why Longitudinal Diversity Matters in Multi-Omics

Capturing Dynamic Biological Processes

Longitudinal multi-omics profiling enables researchers to move beyond static snapshots to capture the dynamic nature of biological systems. These designs are particularly valuable for:

Understanding disease transitions: Deep longitudinal profiling can identify molecular patterns preceding clinical diagnosis, enabling early intervention strategies [14]. For example, longitudinal studies of individuals at risk for type 2 diabetes have revealed multiple pathways to diabetes onset through integrated analysis of omics data [14].

Modeling complex biological interactions: Temporal data allows researchers to investigate the complex web of interactions between genetics, metabolism, environmental factors, and lifestyle [16]. This is especially important for understanding critical developmental periods, such as puberty, which may represent susceptibility windows for metabolic deregulations [16].

Differentiating causality from correlation: Repeated measurements enhance the ability to infer causal relationships in multi-layer omics data [17]. For instance, longitudinal twin studies have helped disentangle genetic versus environmental contributions to proteome-BMI associations [18].

Addressing Representation Gaps in Genomic Research

Despite the recognized importance of diversity, significant representation gaps persist in multi-omics research. Participants of European descent constitute approximately 86.3% of all genomic studies ever conducted worldwide, while participants of African, South Asian, and Hispanic descent together constitute less than 10% [1]. This disparity has profound implications:

Limited generalizability: Genetic variants identified in one population may not transfer effectively to others due to differences in linkage disequilibrium (LD) patterns and allele frequencies [15]. For example, the CYP2C19*2 variant is in high LD with 127 SNPs in European ancestry populations compared to only 49 SNPs in African ancestry populations [15].

Reduced discovery potential: Populations with greater genetic diversity, such as those of African ancestry, harbor more genetic variants, offering enhanced opportunities for discovery [15]. The over-reliance on European-ancestry genomes has constrained our understanding of human genetic diversity and its implications for health and disease.

Perpetuation of health disparities: Without diverse representation, precision medicine advances may disproportionately benefit certain populations while exacerbating existing health disparities [19]. For example, polygenic risk scores developed primarily in European populations show reduced predictive accuracy in other ancestral groups [19].
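Because the generalizability argument above rests on linkage disequilibrium, a short worked example may help. The sketch computes the standard r² statistic between two biallelic loci from phased haplotypes; the haplotype data are invented for illustration and do not represent any real population.

```python
def ld_r2(haplotypes):
    """Squared correlation (r^2) between two biallelic loci.

    haplotypes: list of (allele_a, allele_b) pairs coded 0/1, one per haplotype.
    """
    n = len(haplotypes)
    p_a = sum(a for a, _ in haplotypes) / n
    p_b = sum(b for _, b in haplotypes) / n
    p_ab = sum(1 for a, b in haplotypes if a == 1 and b == 1) / n
    d = p_ab - p_a * p_b                          # LD coefficient D
    denom = p_a * (1 - p_a) * p_b * (1 - p_b)
    return d * d / denom if denom > 0 else float("nan")

# Hypothetical population 1: the two alleles always travel together.
linked = [(1, 1), (1, 1), (0, 0), (0, 0)]
# Hypothetical population 2: the alleles are inherited independently.
independent = [(1, 1), (1, 0), (0, 1), (0, 0)]

r2_linked = ld_r2(linked)
r2_independent = ld_r2(independent)
```

A marker that tags a causal variant well in the first population carries no information about it in the second, which is why associations and risk scores can transfer poorly between ancestries.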

Designing Robust Longitudinal Multi-Omic Cohorts: Methodological Considerations

Cohort Composition and Sampling Strategies

Table 1: Key Considerations for Longitudinal Multi-Omic Cohort Design

| Design Element | Technical Considerations | Best Practices |
|---|---|---|
| Participant Recruitment | Genetic ancestry, environmental exposures, socioeconomic factors, health status | Community-engaged approaches, oversampling underrepresented groups, inclusive eligibility criteria |
| Sampling Frequency | Expected rate of change in omics measures, practical constraints | Higher frequency for rapidly changing systems (e.g., daily for gut microbiome), less frequent for stable systems |
| Sample Collection | Standardized protocols, stability of biomolecules, multi-omic compatibility | Systematic SOPs, consideration of diurnal variation, adequate sample volume for all omics |
| Temporal Duration | Natural history of disease, developmental trajectories, practical constraints | Should capture complete cycles (e.g., seasonal patterns) or critical transitions (e.g., disease onset) |

Multi-Omic Technologies and Integration Approaches

Effective longitudinal multi-omics studies require careful selection of technologies and integration strategies:

Technology selection: The choice of platforms should consider throughput, reproducibility, and compatibility across omics layers. For genomics, the Multi-Ethnic Global Array (MEGA) provides better genotyping coverage across diverse populations compared to earlier platforms [15].

Reference materials: Using common reference materials, such as those developed by the Quartet Project, enables ratio-based quantitative profiling that improves data comparability across batches, labs, and platforms [20]. These materials provide "built-in truth" defined by pedigree relationships and central dogma information flow.

Data integration approaches: Vertical (cross-omics) integration combines diverse datasets from multiple omics types from the same samples, while horizontal (within-omics) integration combines datasets from the same omics type across multiple batches [20]. The integration strategy should align with the research objectives—whether sample classification or feature network identification.
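The ratio-based profiling mentioned above for common reference materials can be illustrated as follows: within each batch, every sample is expressed as a log2 ratio to the reference sample measured in that same batch, which cancels multiplicative batch effects. The function name, the toy intensities, and the assumption that every batch contains at least one reference measurement are illustrative.

```python
import numpy as np

def ratio_to_reference(values, batches, is_reference):
    """Convert absolute intensities to log2 ratios against the in-batch reference.

    values: (n_samples, n_features) positive intensities.
    batches: batch label per sample.
    is_reference: True for reference-material samples.
    Assumes each batch includes at least one reference sample.
    """
    log_values = np.log2(np.asarray(values, dtype=float))
    batches = np.asarray(batches)
    is_reference = np.asarray(is_reference, dtype=bool)
    ratios = np.empty_like(log_values)
    for batch in np.unique(batches):
        in_batch = batches == batch
        ref_profile = log_values[in_batch & is_reference].mean(axis=0)
        ratios[in_batch] = log_values[in_batch] - ref_profile
    return ratios

# The same study sample measured in two batches; batch B reads 3x higher overall.
intensities = [[100, 200],   # batch A, reference material
               [200, 100],   # batch A, study sample
               [300, 600],   # batch B, reference material
               [600, 300]]   # batch B, study sample
batch_labels = ["A", "A", "B", "B"]
reference_flags = [True, False, True, False]
log_ratios = ratio_to_reference(intensities, batch_labels, reference_flags)
```

After conversion, the study sample has the same profile in both batches even though its raw intensities differ threefold.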

Analytical Frameworks for Longitudinal Multi-Omics Data

Statistical Modeling Approaches

Longitudinal omics data presents unique analytical challenges, including imbalanced measurements, high-dimensionality, and complex correlation structures [21]. Key analytical approaches include:

Linear Mixed Models (LMMs): These models account for within-subject correlation through random effects and are widely used for continuous omics features [21]. The basic LMM for an omics feature can be formulated as:

yᵢ = Xᵢβ + Zᵢbᵢ + εᵢ

where yᵢ represents measurements for the i-th subject, Xᵢ is the design matrix for fixed effects, Zᵢ is the design matrix for random effects, bᵢ represents subject-specific random effects, and εᵢ is Gaussian noise.

Generalized Linear Mixed Models (GLMMs): For non-Gaussian omics data (e.g., count data from sequencing), GLMMs extend LMMs through appropriate link functions [21].

Functional Data Analysis (FDA): These approaches model longitudinal trajectories as continuous functions, accommodating irregular sampling intervals [21].
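To connect the linear mixed model formula above to a computation, the sketch below builds the marginal covariance implied by the random effects and estimates the fixed effects β by generalized least squares. It assumes the standard specification bᵢ ~ N(0, G) and εᵢ ~ N(0, σ²I), and it treats G and σ² as known; in practice these variance components are estimated, for example by restricted maximum likelihood. All names and data are illustrative.

```python
import numpy as np

def marginal_covariance(Z, G, sigma2):
    """Var(y_i) = Z_i G Z_i^T + sigma^2 I under the assumed random-effects model."""
    return Z @ G @ Z.T + sigma2 * np.eye(Z.shape[0])

def gls_fixed_effects(subjects, G, sigma2):
    """Generalized least squares estimate of beta from (y_i, X_i, Z_i) triples."""
    n_fixed = subjects[0][1].shape[1]
    xtvx = np.zeros((n_fixed, n_fixed))
    xtvy = np.zeros(n_fixed)
    for y, X, Z in subjects:
        V_inv = np.linalg.inv(marginal_covariance(Z, G, sigma2))
        xtvx += X.T @ V_inv @ X
        xtvy += X.T @ V_inv @ y
    return np.linalg.solve(xtvx, xtvy)

def make_subject(times, intercept_shift, beta):
    """Random-intercept subject: y = X beta + shift, with X = [1, time]."""
    times = np.asarray(times, dtype=float)
    X = np.column_stack([np.ones_like(times), times])
    Z = np.ones((len(times), 1))     # random intercept only
    y = X @ beta + intercept_shift
    return y, X, Z

# Imbalanced design: subjects are measured at different numbers of timepoints.
beta_true = np.array([1.0, 2.0])     # baseline level and slope over time
subjects = [
    make_subject([0, 1, 2], 0.5, beta_true),
    make_subject([0, 3], -0.5, beta_true),
    make_subject([1, 2, 3, 4], 0.0, beta_true),
]
G = np.array([[2.0]])                # assumed random-intercept variance
beta_hat = gls_fixed_effects(subjects, G, sigma2=1.0)
```

The per-subject loop is what lets the model handle the imbalanced measurements noted above: each subject contributes through its own Xᵢ, Zᵢ, and covariance matrix, whatever its number of timepoints.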

Diversity-Aware Analytical Methods

Conventional genomic analysis methods may perform poorly in diverse or admixed populations. Specialized approaches include:

Local Ancestry Inference (LAI): Methods like RFMix, STRUCTURE, and LAMP infer the ancestral origin of chromosomal segments in admixed individuals, enabling more powerful association testing [15].

Ancestry-aware polygenic risk scores: New methods incorporate genetic ancestry to improve risk prediction across diverse populations, helping to address performance disparities [19].

Population-specific variant annotation: Databases like gnomAD provide population-specific allele frequency information that improves variant interpretation across diverse groups [1].

Figure: Workflow for longitudinal multi-omics studies, from cohort design to data integration.

Implementing Diversity in Research Practice: Beyond Recruitment

Community-Engaged Research Frameworks

Meaningful inclusion of historically excluded populations requires more than just recruitment strategies. A comprehensive community-based participatory research framework includes [1]:

- Identifying research questions relevant to community stakeholders

- Establishing diverse, cross-sector stakeholder teams

- Creating genomic infrastructure adaptable to community-centered research

- Collecting culture-sensitive data with stakeholder feedback mechanisms

- Utilizing research results to positively impact community health and policy

The development of diverse reference resources is essential for equitable multi-omics research:

Reference genomes: Nearly three-fourths of the reference genome sequence originates from a single donor, which raises questions about its applicability to diverse populations [1]. Efforts to develop pan-genome references that capture global genetic diversity are underway.

Variant databases: Resources like the Genome Aggregation Database (gnomAD) provide putatively benign variants across populations, serving as critical controls for variant interpretation [1]. However, continued expansion of diverse variant catalogs is needed.

Multi-omics reference materials: Projects like the Quartet Project provide reference materials from a family quartet, enabling quality control and data integration across omics technologies [20]. Expanding such resources to include diverse populations will enhance their utility.

Experimental Protocols and Reagent Solutions

Standardized Methodologies for Longitudinal Multi-Omic Studies

Table 2: Essential Research Reagents and Platforms for Multi-Omic Studies

| Reagent/Platform | Function | Application Notes |
|---|---|---|
| Quartet Reference Materials | Multi-omics quality control and data integration | Provides DNA, RNA, protein, and metabolites from matched samples; enables ratio-based profiling [20] |
| Multi-Ethnic Global Array (MEGA) | Genotyping in diverse populations | Improved coverage across diverse populations compared to earlier arrays [15] |
| LC-MS/MS Platforms | Proteomic and metabolomic profiling | Multiple platforms available; common reference materials improve cross-platform comparability [20] |
| Next-Generation Sequencing | Genomic, transcriptomic, epigenomic profiling | Consider coverage requirements in diverse populations; targeted enrichment may be needed for population-specific variants |

Protocol for Longitudinal Sample Processing

A standardized protocol for longitudinal multi-omics studies includes:

Sample collection: Use consistent collection methods across timepoints, stabilizing biomolecules immediately after collection [17].

Biomolecular extraction: Employ standardized kits and protocols to minimize batch effects. For microbiome studies, consider simultaneous extraction of DNA, RNA, and proteins [17].

Multi-omics data generation: Process samples from multiple timepoints in randomized batches to avoid confounding time effects with batch effects [20].

Quality control: Implement robust QC metrics at each step, using reference materials to monitor technical performance [20]. For quantitative omics, signal-to-noise ratio provides a useful QC metric.

Data processing: Apply reference-independent approaches when studying underrepresented populations or microbial communities without comprehensive references [17].
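The batch randomization step in the protocol above can be sketched as a stratified assignment: samples are shuffled within each timepoint and then dealt across batches in turn, so that no batch is dominated by a single timepoint. The function name, the fixed seed, and the round-robin scheme are illustrative assumptions; other randomization designs are equally valid.

```python
import random
from collections import defaultdict

def randomize_batches(samples, n_batches, seed=0):
    """Spread every timepoint across batches to avoid time/batch confounding.

    samples: list of (sample_id, timepoint) tuples.
    Returns a list of batches, each a list of (sample_id, timepoint).
    """
    rng = random.Random(seed)
    by_timepoint = defaultdict(list)
    for sample_id, timepoint in samples:
        by_timepoint[timepoint].append(sample_id)

    batches = [[] for _ in range(n_batches)]
    slot = 0
    for timepoint in sorted(by_timepoint):
        ids = by_timepoint[timepoint]
        rng.shuffle(ids)                       # random order within the timepoint
        for sample_id in ids:
            batches[slot % n_batches].append((sample_id, timepoint))
            slot += 1
    return batches

# Four participants sampled at three visits, processed in three batches.
samples = [(f"P{p}_T{t}", t) for p in range(1, 5) for t in range(3)]
batches = randomize_batches(samples, n_batches=3)
```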

Figure: Information flow in multi-omics studies and how diversity enhances discovery.

Longitudinal cohorts and population diversity are not merely desirable attributes but fundamental requirements for robust multi-omics research. The integration of these elements enables researchers to capture the dynamic nature of biological systems while ensuring that scientific discoveries benefit all populations. As precision medicine advances, continued attention to these foundational principles will be essential for realizing the full potential of multi-omics approaches to understand human health and disease.

Future directions should include: (1) expanded investment in diverse longitudinal cohorts, particularly in pediatric populations; (2) development of analytical methods that appropriately account for genetic ancestry and population structure; (3) implementation of community-engaged research frameworks that promote equitable partnerships; and (4) standardization of multi-omics technologies using diverse reference materials. Through coordinated efforts across these domains, the research community can ensure that multi-omics approaches fulfill their promise to transform healthcare for all populations.

From Data to Insights: Strategies and Real-World Applications in Drug Discovery

Multi-omics data integration has emerged as a cornerstone of modern precision medicine research, enabling a holistic understanding of biological systems by combining data from different biomolecular levels such as DNA, RNA, proteins, metabolites, and epigenetic marks [22]. This section provides a framework for multi-omics integration strategies, categorizing core methodologies into conceptual, statistical, and model-based approaches. It details the computational tools, experimental protocols, and visualization techniques that researchers and drug development professionals need to translate multi-omics data into clinically actionable insights. With the number of multi-omics publications more than doubling between 2022 and 2023, mastering these integration strategies has become imperative for advancing biomarker discovery, identifying novel drug targets, and personalizing therapeutic interventions [23].

The fundamental premise of multi-omics integration lies in overcoming the limitations of single-omics studies, which provide valuable but incomplete insights into complex biological systems. By simultaneously analyzing data from genomics, transcriptomics, proteomics, metabolomics, and epigenomics, researchers can uncover the complex interactions and causal relationships that underlie health and disease states [22]. This integrated approach has proven particularly valuable in precision medicine, where understanding the interplay between different molecular layers enables better patient stratification, biomarker discovery, and therapeutic optimization.

The rapid advancement of high-throughput technologies has generated an explosion of complex multi-omics datasets, creating both unprecedented opportunities and significant computational challenges [24]. These challenges include data heterogeneity, high dimensionality, experimental noise, missing values, and the complex, often non-linear relationships between different omics layers [25]. Integration is further complicated because each omics data type has its own scale, noise level, and preprocessing requirements, which makes a one-size-fits-all approach ineffective [25].

The Multi-Omics Workflow in Precision Medicine Research

The following diagram illustrates the generalized workflow for multi-omics data integration, from data generation through to biological interpretation in precision medicine contexts.

Core Multi-Omics Integration Approaches

Conceptual Integration Methods

Conceptual integration represents a knowledge-driven approach that leverages existing biological databases and ontologies to link different omics datasets based on shared concepts or entities such as genes, proteins, pathways, or diseases [22]. This method utilizes established biological relationships to generate hypotheses and explore associations between different omics datasets.

A common implementation of conceptual integration involves using gene ontology (GO) terms or pathway databases (e.g., KEGG, Reactome) to annotate and compare different omics datasets, identifying common or specific biological functions and processes [22]. For example, researchers might link differentially expressed genes from transcriptomics data with differentially abundant proteins from proteomics data through their shared pathway membership. Open-source pipelines such as STATegra and OmicsON have demonstrated enhanced capacity to detect specific features overlapping between compared omics sets [22].

Key Implementation Protocol:

- Data Annotation: Annotate each omics dataset using standardized biological ontologies (GO, KEGG, Reactome)

- Identifier Mapping: Convert molecule identifiers across platforms to enable cross-referencing

- Knowledge-Based Linking: Use pathway databases to establish connections between molecular entities

- Hypothesis Generation: Identify enriched biological processes or pathways that span multiple omics layers
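The four protocol steps above can be sketched in a few lines. This is a toy illustration under stated assumptions: the `PATHWAYS` gene sets and the `PROTEIN_TO_GENE` map are small hand-written stand-ins for a real pathway database and identifier-mapping service, the background size of 100 genes is arbitrary, and `shared_pathways` is a hypothetical helper rather than part of STATegra or OmicsON. Enrichment is scored with a one-sided hypergeometric test.

```python
from math import comb

# Toy pathway annotations (stand-ins for KEGG/Reactome gene sets).
PATHWAYS = {
    "glycolysis": {"HK1", "PFKM", "PKM", "GAPDH", "ENO1"},
    "tca_cycle": {"CS", "IDH1", "SDHA", "MDH2", "FH"},
    "apoptosis": {"CASP3", "BAX", "BCL2", "TP53", "CYCS"},
}

# Illustrative identifier map from protein accessions to gene symbols.
PROTEIN_TO_GENE = {"P04406": "GAPDH", "P14618": "PKM", "P06733": "ENO1", "P42574": "CASP3"}


def hypergeom_pvalue(k, K, n, N):
    """P(X >= k) when n genes are drawn from N, of which K belong to the pathway."""
    upper = min(K, n)
    return sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1)) / comb(N, n)


def shared_pathways(de_genes, da_proteins, background_size):
    """Return pathways hit by both the transcriptomic and the proteomic layer."""
    # Identifier mapping: bring proteins into gene-symbol space.
    protein_genes = {PROTEIN_TO_GENE[p] for p in da_proteins if p in PROTEIN_TO_GENE}
    results = {}
    for name, members in PATHWAYS.items():
        gene_hits = de_genes & members
        protein_hits = protein_genes & members
        if gene_hits and protein_hits:  # knowledge-based link across layers
            results[name] = {
                "gene_hits": sorted(gene_hits),
                "protein_hits": sorted(protein_hits),
                "gene_p": hypergeom_pvalue(
                    len(gene_hits), len(members), len(de_genes), background_size
                ),
            }
    return results


de_genes = {"HK1", "PKM", "GAPDH", "TP53"}   # differentially expressed genes
da_proteins = {"P04406", "P14618", "P06733"}  # differentially abundant proteins
result = shared_pathways(de_genes, da_proteins, background_size=100)
for pathway, info in result.items():
    print(pathway, info)
```

Here only the glycolysis set is supported by both layers, which is the kind of cross-layer hypothesis the protocol is meant to surface. A real analysis would also correct the p-values for multiple testing across pathways.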

Table 1: Knowledge Bases for Conceptual Integration

| Resource | Type | Application in Multi-Omics | Reference |
|---|---|---|---|
| Gene Ontology (GO) | Ontology | Functional annotation across omics layers | [22] |
| KEGG Pathways | Pathway Database | Pathway-based integration of molecules | [22] |
| Reactome | Pathway Database | Curated biological pathways | [22] |
| STRING | Protein-Protein Interactions | Physical and functional interactions | [22] |

Statistical Integration Methods

Statistical integration employs quantitative techniques to combine or compare different omics datasets based on statistical measures such as correlation, regression, clustering, or classification [22]. This data-driven approach identifies patterns, trends, and associations within and between omics datasets, though it may not inherently account for causal or mechanistic relationships.

Correlation analysis is one of the most fundamental statistical integration approaches, identifying co-expressed genes or proteins across different omics datasets [22]. For example, researchers might calculate Pearson's or Spearman's correlation coefficients to assess the relationship between gene expression and protein abundance [26]. More advanced implementations include Weighted Gene Co-expression Network Analysis (WGCNA), which identifies clusters (modules) of highly correlated genes across multiple omics datasets [26]. Each module can be summarized by its eigengene and linked to clinically relevant traits to identify functional relationships.
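As a small worked example of the two coefficients, the sketch below computes Pearson's and Spearman's correlation for one gene measured at the mRNA and protein level. The values are invented for illustration, and the helpers are written with the standard library only so the arithmetic stays visible. In practice, library implementations would normally be used.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation: linear association between two equal-length vectors."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Invented values: protein abundance rises monotonically but non-linearly with mRNA.
mrna = [1.0, 2.0, 3.0, 4.0, 5.0]
protein = [1.0, 4.0, 9.0, 16.0, 25.0]
print("Pearson :", round(pearson(mrna, protein), 3))
print("Spearman:", round(spearman(mrna, protein), 3))
```

Because the relationship is monotonic but not linear, Spearman's coefficient reaches 1 while Pearson's stays below it, which is one reason rank-based correlation is often preferred for mRNA-protein comparisons.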

The xMWAS platform performs pairwise association analysis by combining Partial Least Squares (PLS) components and regression coefficients, then generates integrative network graphs where connections represent statistically significant associations [26]. Community detection algorithms can subsequently identify clusters of highly interconnected nodes within these networks.

Key Implementation Protocol:

- Data Normalization: Standardize each omics dataset to comparable scales

- Association Analysis: Calculate correlation matrices between features across omics layers

- Network Construction: Build association networks using correlation thresholds (e.g., R² > 0.8, p-value < 0.05)

- Module Detection: Apply community detection algorithms to identify densely connected subnetworks

- Clinical Integration: Correlate modules with phenotypic traits or clinical outcomes
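The protocol above can be walked through end to end on toy data. This sketch makes several simplifying assumptions that should be read as such: the feature values and the trait are invented, only the R² > 0.8 threshold is applied (the significance test is omitted for brevity), connected components stand in for a proper community detection algorithm, and the module summary is a plain mean of z-scores rather than a WGCNA eigengene. It is not the xMWAS or WGCNA procedure.

```python
from math import sqrt


def zscore(values):
    """Standardize one feature to mean 0 and unit sample variance."""
    mean = sum(values) / len(values)
    sd = sqrt(sum((v - mean) ** 2 for v in values) / (len(values) - 1))
    return [(v - mean) / sd for v in values]


def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


def cross_omics_edges(layer_a, layer_b, r2_threshold=0.8):
    """Keep feature pairs across the two layers whose squared correlation is high."""
    return [
        (name_a, name_b)
        for name_a, va in layer_a.items()
        for name_b, vb in layer_b.items()
        if pearson(va, vb) ** 2 > r2_threshold
    ]


def connected_components(edges):
    """Group nodes into modules; a simple stand-in for community detection."""
    neighbors = {}
    for a, b in edges:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)
    seen, modules = set(), []
    for start in sorted(neighbors):
        if start in seen:
            continue
        stack, module = [start], set()
        while stack:
            node = stack.pop()
            if node not in module:
                module.add(node)
                stack.extend(neighbors[node] - module)
        seen |= module
        modules.append(module)
    return modules


# Invented data: 6 samples, 3 transcripts, 2 proteins, 1 clinical trait.
raw_transcripts = {
    "geneA": [1, 2, 3, 4, 5, 6],
    "geneB": [2.1, 3.9, 6.2, 8.1, 9.8, 12.0],
    "geneC": [5, 1, 4, 2, 6, 3],
}
raw_proteins = {
    "protA": [0.9, 2.2, 2.9, 4.1, 5.2, 5.8],
    "protB": [5.2, 0.8, 4.1, 2.2, 5.9, 3.1],
}
trait = [10, 19, 31, 42, 50, 61]

transcripts = {k: zscore(v) for k, v in raw_transcripts.items()}  # normalization
proteins = {k: zscore(v) for k, v in raw_proteins.items()}
edges = cross_omics_edges(transcripts, proteins)                  # association + network
modules = connected_components(edges)                             # module detection

features = {**transcripts, **proteins}
module_trait_r = []
for module in modules:                                            # clinical integration
    summary = [sum(features[f][i] for f in module) / len(module) for i in range(len(trait))]
    module_trait_r.append(pearson(summary, trait))
    print(sorted(module), "trait r =", round(module_trait_r[-1], 2))
```

With these values the first module (geneA, geneB, protA) tracks the trait closely and the second does not, illustrating how module-level summaries narrow the search for clinically relevant features.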

Table 2: Statistical Integration Methods and Tools

| Method | Algorithm Type | Applications | Tools/Packages |
|---|---|---|---|
| Correlation Analysis | Pairwise Association | Identify co-expressed features | xMWAS [26] |
| WGCNA | Network-Based | Identify co-expression modules | WGCNA [26] |
| Canonical Correlation Analysis | Multivariate | Identify relationships between two omics sets | RGCCA [27] |
| Multi-Omics Factor Analysis | Factor Analysis | Decompose multi-omics data into latent factors | MOFA+ [25] |

Model-Based Integration

Model-based integration utilizes mathematical or computational models to simulate or predict the behavior of biological systems using multi-omics data [22]. This approach aims to capture the dynamics and regulation of biological systems, though it typically requires substantial prior knowledge and assumptions about system parameters and structure.

Network models are a powerful approach for model-based integration, capturing interactions between genes, proteins, and metabolites across different omics datasets [22]. These models range from simple protein-protein interaction networks to complex regulatory networks that incorporate transcription factors, epigenetic modifications, and metabolic constraints. Pharmacokinetic/pharmacodynamic (PK/PD) models are another important application: the pharmacokinetic component describes the absorption, distribution, metabolism, and excretion (ADME) of drugs across different tissues or organs, the pharmacodynamic component describes the resulting drug effect, and both can be informed by multi-omics profiles [22].
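To make the PK idea concrete, the sketch below uses the standard one-compartment model with first-order absorption and elimination, C(t) = F·D·ka / (V·(ka − ke)) · (e^(−ke·t) − e^(−ka·t)) with ke = CL/V. The link to omics data is a deliberately crude assumption introduced here for illustration: clearance is scaled linearly by a hypothetical `enzyme_ratio` (for example, the relative abundance of a metabolizing enzyme from proteomics). All parameter values are invented.

```python
from math import exp


def concentration(t, dose, bioavailability, ka, clearance, volume):
    """Plasma concentration for a one-compartment model with first-order absorption."""
    ke = clearance / volume  # elimination rate constant (1/h)
    if abs(ka - ke) < 1e-12:
        raise ValueError("ka and ke must differ for this closed-form solution")
    scale = bioavailability * dose * ka / (volume * (ka - ke))
    return scale * (exp(-ke * t) - exp(-ka * t))


def auc_trapezoid(times, values):
    """Area under the concentration-time curve by the trapezoidal rule."""
    return sum(
        (t1 - t0) * (v0 + v1) / 2
        for t0, t1, v0, v1 in zip(times, times[1:], values, values[1:])
    )


def exposure(enzyme_ratio, reference_clearance=5.0):
    """AUC for a patient whose clearance is scaled by an omics-derived enzyme ratio.

    The linear scaling is an illustrative assumption, not a validated model.
    """
    clearance = reference_clearance * enzyme_ratio  # L/h
    times = [i * 0.1 for i in range(1001)]          # 0 to 100 h
    conc = [
        concentration(t, dose=100.0, bioavailability=1.0, ka=1.0,
                      clearance=clearance, volume=50.0)
        for t in times
    ]
    return auc_trapezoid(times, conc)


auc_typical = exposure(enzyme_ratio=1.0)
auc_high = exposure(enzyme_ratio=2.0)
print("AUC, typical enzyme level:", round(auc_typical, 2))
print("AUC, doubled enzyme level:", round(auc_high, 2))
```

For this model the total exposure equals F·D/CL, so doubling clearance halves the AUC. The numerical result can be checked against that closed form.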

More recently, deep generative models such as variational autoencoders (VAEs) have emerged as powerful tools for model-based integration, capable of handling non-linear relationships, data imputation, joint embedding creation, and batch effect correction [24]. These methods can learn latent representations that capture the joint structure of multiple omics datasets while accommodating missing data and technical artifacts.

Key Implementation Protocol:

- Network Construction: Build biological networks using prior knowledge (e.g., protein-protein interactions)

- Data Mapping: Overlay multi-omics data onto network components

- Model Parameterization: Estimate model parameters using experimental data

- Simulation and Prediction: Simulate system behavior under different conditions or perturbations

- Experimental Validation: Design experiments to test model predictions (e.g., knockdowns, inhibitors)
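One common way to combine the network construction, data mapping, and simulation steps is network propagation. The sketch below runs a random walk with restart on a toy undirected interaction network, seeded with an omics-derived score. The node names, the edge list, and the seed score are all hypothetical, and the technique is offered as one possible instance of the protocol, not as the method of the cited work.

```python
def random_walk_with_restart(adjacency, seed_scores, restart=0.3, max_iter=200, tol=1e-9):
    """Propagate seed scores over an undirected network.

    adjacency: dict mapping each node to a list of neighbors (symmetric, no isolated nodes).
    seed_scores: dict of non-negative scores for the seed nodes (e.g. |log2 fold change|).
    Returns a dict of steady-state visiting probabilities that sum to 1.
    """
    nodes = sorted(adjacency)
    total = sum(seed_scores.values())
    start = {n: seed_scores.get(n, 0.0) / total for n in nodes}
    current = dict(start)
    for _ in range(max_iter):
        updated = {}
        for n in nodes:
            # Each neighbor m passes on an equal share of its probability.
            inflow = sum(current[m] / len(adjacency[m]) for m in adjacency[n])
            updated[n] = (1 - restart) * inflow + restart * start[n]
        change = max(abs(updated[n] - current[n]) for n in nodes)
        current = updated
        if change < tol:
            break
    return current


# Toy network: G1-G2, G1-G3, G3-G4, G4-G5 (hypothetical interactions).
adjacency = {
    "G1": ["G2", "G3"],
    "G2": ["G1"],
    "G3": ["G1", "G4"],
    "G4": ["G3", "G5"],
    "G5": ["G4"],
}
seed_scores = {"G1": 2.0}  # e.g. a strongly dysregulated gene mapped onto the network
scores = random_walk_with_restart(adjacency, seed_scores)
for node, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(node, round(score, 3))
```

Nodes close to the seed receive higher scores than distant ones, which gives a ranked list of candidates for the experimental validation step. Simulating a perturbation amounts to changing the seed scores or removing edges and comparing the resulting rankings.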

Network and Pathway Integration

Network and pathway integration represents a hybrid approach that uses networks or pathways to represent the structure and function of biological systems based on different omics data [22]. Networks are graphical representations of nodes (e.g., genes, proteins) and their interactions, while pathways are collections of related biological processes that occur in specific contexts.

This approach enables the integration of multiple omics data types at different levels of granularity and complexity. For example, protein-protein interaction (PPI) networks can visualize physical interactions between proteins identified in proteomics data, while metabolic pathways can illustrate biochemical reactions involving metabolites identified through metabolomics [22]. Visualization tools such as the Cellular Overview in Pathway Tools enable simultaneous visualization of up to four types of omics data on organism-scale metabolic network diagrams, using different visual channels (e.g., color and thickness of reaction edges) to represent different omics datasets [28].

Key Implementation Protocol:

- Pathway Database Selection: Choose organism-specific or general pathway databases

- Multi-Omics Mapping: Map each omics dataset to relevant pathway components

- Visual Channel Assignment: Assign different omics types to distinct visual channels (color, thickness)

- Interactive Exploration: Use semantic zooming and filtering to explore integrated data at different scales
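The visual channel assignment step can be illustrated with two small mapping functions: one turns a transcript log2 fold change into a blue-white-red color, the other turns a second omics value into an edge thickness. The function names, the value ranges, and the reaction records are assumptions made for this sketch. This is not the Pathway Tools API.

```python
def diverging_color(log2_fold_change, limit=2.0):
    """Map a log2 fold change to a blue-white-red hex color, clipped at +/- limit."""
    x = max(-1.0, min(1.0, log2_fold_change / limit))
    if x >= 0:  # up-regulated: fade from white to red
        r, g, b = 255, round(255 * (1 - x)), round(255 * (1 - x))
    else:       # down-regulated: fade from white to blue
        r, g, b = round(255 * (1 + x)), round(255 * (1 + x)), 255
    return f"#{r:02x}{g:02x}{b:02x}"


def edge_thickness(value, low, high, min_px=1.0, max_px=8.0):
    """Linearly map a value in [low, high] to a line width in pixels."""
    if high == low:
        return min_px
    fraction = (min(max(value, low), high) - low) / (high - low)
    return min_px + fraction * (max_px - min_px)


# Hypothetical reactions, each annotated with two omics layers.
reactions = {
    "R1": {"transcript_log2fc": 1.0, "protein_abundance": 7.5},
    "R2": {"transcript_log2fc": -2.0, "protein_abundance": 2.0},
}
abundances = [r["protein_abundance"] for r in reactions.values()]
styles = {
    reaction_id: {
        "color": diverging_color(values["transcript_log2fc"]),
        "width_px": edge_thickness(values["protein_abundance"], min(abundances), max(abundances)),
    }
    for reaction_id, values in reactions.items()
}
for reaction_id, style in styles.items():
    print(reaction_id, style)
```

Keeping each omics layer on its own channel, color for one and thickness for another, lets a reader see agreement or disagreement between layers on a single reaction edge.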

The following diagram illustrates the GAUDI (Group Aggregation via UMAP Data Integration) method, which represents an advanced non-linear approach for multi-omics integration that outperforms several state-of-the-art methods in capturing complex relationships [27].

Practical Implementation and Computational Tools

Tool Selection Framework

Selecting appropriate computational tools for multi-omics integration depends on multiple factors, including data types (matched vs. unmatched), sample size, biological question, and computational resources. The following table summarizes key integration tools and their characteristics.

Table 3: Multi-Omics Integration Tools and Applications

| Tool | Integration Type | Core Methodology | Data Types | Reference |
|---|---|---|---|---|
| MOFA+ | Matched/Vertical | Factor Analysis | mRNA, DNA methylation, chromatin accessibility | [25] |
| Seurat v4 | Matched/Vertical | Weighted Nearest-Neighbor | mRNA, spatial coordinates, protein, chromatin | [25] |
| GAUDI | Unmatched/Diagonal | UMAP Embeddings + Density Clustering | Genomics, transcriptomics, proteomics, metabolomics | [27] |
| GLUE | Unmatched/Diagonal | Graph Variational Autoencoder | Chromatin accessibility, DNA methylation, mRNA | [25] |
| intNMF | Unmatched/Diagonal | Non-negative Matrix Factorization | Multiple omics data types | [27] |
| SCHEMA | Matched/Vertical | Metric Learning | Chromatin accessibility, mRNA, proteins | [25] |
| Cobolt | Mosaic | Multimodal Variational Autoencoder | mRNA, chromatin accessibility | [25] |
| StabMap | Mosaic | Mosaic Data Integration | mRNA, chromatin accessibility | [25] |
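As a rough illustration of how the first selection criterion (matched, unmatched, or mosaic data) narrows the choice, the sketch below transcribes the Integration Type and Core Methodology columns of Table 3 into a lookup. The `candidate_tools` helper and the lowercase design labels are hypothetical conveniences. Sample size, biological question, and computational resources would still need to be weighed by hand.

```python
# Entries transcribed from Table 3 (integration type and core methodology only).
TOOLS = [
    {"name": "MOFA+", "integration": "matched", "method": "Factor Analysis"},
    {"name": "Seurat v4", "integration": "matched", "method": "Weighted Nearest-Neighbor"},
    {"name": "GAUDI", "integration": "unmatched", "method": "UMAP Embeddings + Density Clustering"},
    {"name": "GLUE", "integration": "unmatched", "method": "Graph Variational Autoencoder"},
    {"name": "intNMF", "integration": "unmatched", "method": "Non-negative Matrix Factorization"},
    {"name": "SCHEMA", "integration": "matched", "method": "Metric Learning"},
    {"name": "Cobolt", "integration": "mosaic", "method": "Multimodal Variational Autoencoder"},
    {"name": "StabMap", "integration": "mosaic", "method": "Mosaic Data Integration"},
]


def candidate_tools(integration):
    """Return tool names listed in Table 3 for a given study design.

    integration: "matched" (vertical), "unmatched" (diagonal), or "mosaic".
    """
    valid = {tool["integration"] for tool in TOOLS}
    if integration not in valid:
        raise ValueError(f"integration must be one of {sorted(valid)}")
    return [tool["name"] for tool in TOOLS if tool["integration"] == integration]


for design in ("matched", "unmatched", "mosaic"):
    print(design, "->", candidate_tools(design))
```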

Successful multi-omics integration requires both wet-lab reagents and computational resources. The following table details essential components of the multi-omics research toolkit.

Table 4: Essential Research Reagent Solutions for Multi-Omics Studies

| Resource Category | Specific Tools/Reagents | Function in Multi-Omics Pipeline |
|---|---|---|
| Sequencing Platforms | Illumina NovaSeq, PacBio | Generate genomics and transcriptomics data |
| Mass Spectrometry | LC-MS/MS Systems | Quantify proteins and metabolites |
| Single-Cell Multi-Omics | 10x Genomics Multiome | Simultaneous profiling of RNA and chromatin accessibility |
| Spatial Omics | Visium Spatial Technology | Integrate molecular data with spatial context |
| Bioinformatics Suites | Pathway Tools (PTools) | Metabolic reconstruction and multi-omics visualization |
| Reference Databases | gnomAD, ClinVar, KEGG | Variant interpretation and pathway mapping |
| Statistical Environments | R/Bioconductor, Python | Data preprocessing and statistical integration |
| Visualization Platforms | Cytoscape with plugins | Network-based integration and visualization |

Application in Precision Medicine Research

Biomarker Discovery and Validation