Integrative Omics for Understanding Disease Mechanisms: From Multi-Layer Data to Precision Medicine

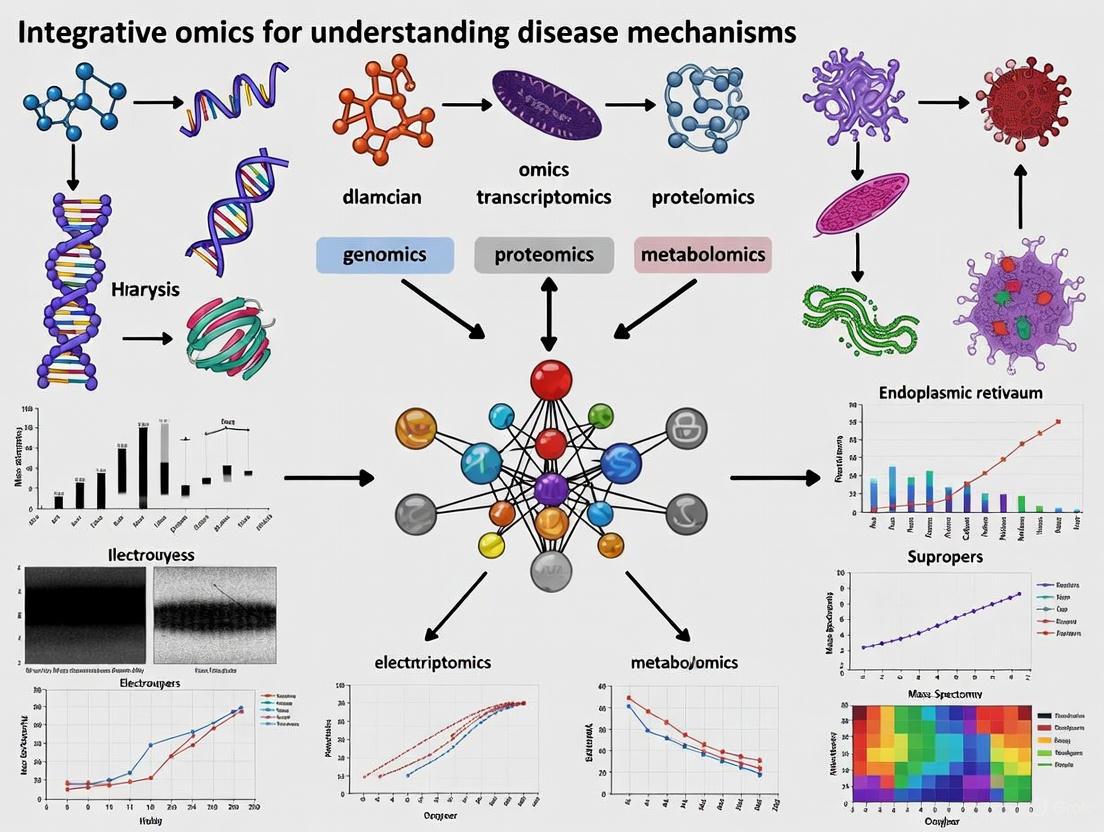

Abstract

This article provides a comprehensive exploration of integrative multi-omics, a transformative approach that combines data from genomics, transcriptomics, proteomics, and metabolomics to unravel complex disease mechanisms. Tailored for researchers, scientists, and drug development professionals, it covers the foundational principles of why single-omics analyses are insufficient for capturing biological complexity. It delves into cutting-edge methodological frameworks, including machine learning, foundation models, and network-based integration, highlighting their application in target discovery and personalized therapy. The content also addresses critical troubleshooting strategies for data heterogeneity and analytical challenges, and presents rigorous validation through case studies in oncology and neurodegeneration. By synthesizing current trends and real-world applications, this article serves as a guide for leveraging integrative omics to accelerate the translation of molecular insights into clinical breakthroughs.

Beyond the Blueprint: How Integrative Omics Reveals the Multi-Layered Nature of Disease

Biological systems, from individual cells to whole organisms, operate through the complex and dynamic interplay of multiple molecular layers. For decades, traditional single-omics approaches, which analyze one type of biological molecule in isolation, have provided valuable but fundamentally limited insights into these systems. The core limitation of single-omics technologies is their inability to capture the multidimensional nature of biological processes, which yields a fragmented view that obscures the complete functional landscape of cells and tissues [1] [2]. Well-established single-omics methods such as bulk RNA sequencing have transformed biomedical science by enabling detailed exploration of gene expression, but they report an average across heterogeneous cell populations, so the measured profile reflects the population as a whole and is often dominated by the most abundant cell types [1]. This averaging inevitably masks critical cellular nuances, including cellular heterogeneity, rare cell populations, and the complex regulatory networks that drive disease mechanisms [2].

The transition from single-omics to multi-omics represents a paradigm shift in biological research, moving from isolated observations to integrated systems-level analysis. Single-cell RNA sequencing (scRNA-seq) marked a significant advancement over bulk sequencing by revealing cellular states and intercellular heterogeneity [1]. However, cellular information extends well beyond RNA, encompassing the genome, epigenome, proteome, and metabolome, as well as spatial relationships and dynamic changes over time [1]. The limitations of single-omics approaches have become increasingly apparent as researchers recognize that most health-related traits result from the interaction of multiple internal molecular features and alterations with multiple external conditions over a lifespan [3]. This review examines the technical and conceptual limitations of single-omics approaches, demonstrates their consequences through case studies, and illustrates how integrative multi-omics frameworks address these shortcomings to provide a more comprehensive understanding of disease mechanisms.

Fundamental Technical Limitations of Single-Omics Approaches

The Averaging Problem: Concealing Cellular Heterogeneity

Traditional bulk omics approaches average signals from heterogeneous cell populations, obscuring important cellular nuances and rare cell populations that may play critical roles in disease development and progression [2]. This averaging effect is particularly problematic in complex tissues like tumors, which contain diverse cell types including cancer stem cells, immune cells, and stromal cells, each contributing differently to disease pathology and treatment response [1]. While single-cell technologies have begun to address this issue, single-cell mono-omics still provides only a partial view of cellular heterogeneity, capturing just one dimension of the complex molecular landscape that defines true cellular identity and function [4].
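A toy calculation makes the averaging problem concrete. The sketch below assumes two hypothetical tissues of 1,000 cells each: in one, a rare 2% subpopulation strongly expresses a marker gene; in the other, every cell expresses it at a uniform, modest level. All values are invented for illustration.

```python
import numpy as np

# Two hypothetical tissues of 1,000 cells each (values invented for illustration).
# Tissue A: 980 cells express a marker at 1 unit; 20 rare cells (2%) express it at 50 units.
tissue_a = np.concatenate([np.full(980, 1.0), np.full(20, 50.0)])
# Tissue B: every cell expresses the marker uniformly at 1.98 units.
tissue_b = np.full(1000, 1.98)

# A bulk assay effectively reports the population mean, so the two tissues look alike.
bulk_a = tissue_a.mean()
bulk_b = tissue_b.mean()

# Per-cell values (single-cell resolution) separate the two scenarios.
rare_a = int((tissue_a > 10).sum())
rare_b = int((tissue_b > 10).sum())

# Share of tissue A's bulk signal that comes from the 2% rare population.
rare_share = tissue_a[tissue_a > 10].sum() / tissue_a.sum()

print(f"bulk A = {bulk_a:.2f}, bulk B = {bulk_b:.2f}")      # both print 1.98
print(f"cells above 10 units: A = {rare_a}, B = {rare_b}")  # 20 vs 0
print(f"rare-cell share of bulk signal in A: {rare_share:.1%}")
```

Roughly half of tissue A's bulk signal comes from 2% of its cells, yet its bulk value is indistinguishable from a uniform, modest expression level; only per-cell measurements reveal the difference.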

Inability to Establish Causal Relationships

Single-omics data cannot establish causal relationships between different molecular layers, a critical limitation for understanding disease mechanisms. For instance, genomic data alone can identify disease-associated mutations, but not all mutations lead to disease phenotypes [5]. Without integrating transcriptomic, proteomic, and other molecular data, researchers cannot determine how genetic variations manifest functionally or distinguish causal mutations from inconsequential ones [5]. This leaves a fundamental gap in our understanding of biological systems: biological processes are driven by interactions between omics layers, and no single data type can capture all the factors relevant to understanding a phenomenon such as a disease [6].

Table 1: Key Limitations of Single-Omics Approaches in Disease Research

| Limitation Category | Specific Technical Shortcoming | Impact on Disease Research |
|---|---|---|
| Resolution Limitations | Averaging effects in bulk sequencing | Masks cellular heterogeneity and rare cell populations critical in cancer and developmental disorders |
| Mechanistic Insight Gaps | Inability to establish causal relationships between molecular layers | Prevents understanding of how genetic variants lead to functional consequences and disease phenotypes |
| Regulatory Blind Spots | Limited view of epigenetic regulation and post-translational modifications | Misses key regulatory mechanisms that drive disease progression without genomic alterations |
| Temporal Limitations | Static snapshots of dynamic processes | Fails to capture disease progression dynamics and cellular state transitions |
| Spatial Limitations | Loss of spatial context in most sequencing approaches | Eliminates crucial information about tissue microenvironment and cell-cell communication |

Regulatory Blind Spots and Missing Context

Single-omics approaches suffer from significant blind spots in capturing regulatory mechanisms. For example, scRNA-seq reveals which genes are being transcribed but cannot determine which transcripts are actually translated into proteins or how protein function is modified post-translationally [5]. Similarly, measuring chromatin accessibility alone without corresponding gene expression data provides an incomplete picture of regulatory activity, as accessible regions do not necessarily correspond to active regulation without transcriptional output [4]. These limitations are particularly problematic for understanding complex diseases like cancer, where epigenetic reprogramming and post-translational modifications often drive malignancy without underlying genomic alterations [7].

Consequences in Practice: Case Studies Highlighting Single-Omics Shortcomings

Limited Predictive Power in Disease Diagnostics

Single-omics approaches have demonstrated limited predictive ability when implemented in clinical or public health settings [3]. The relatively poor predictive ability of genomic data alone can be partly explained by the large share of variation in health-related traits that is explained by non-omics data, such as clinical and epidemiological variables [3]. For instance, in cancer research, single-omics biomarkers discovered in small cohorts often fail to validate across larger populations with different backgrounds, exposures, and comorbidities [5]. This lack of robustness stems from the inability of single-omics approaches to capture the complex, multifactorial nature of disease states, where multiple molecular layers interact to determine phenotypic outcomes.

Incomplete Disease Mechanism Elucidation

The fragmentation inherent in single-omics approaches often leads to incomplete or misleading conclusions about disease mechanisms. A notable example comes from cancer research, where integrating single-cell transcriptomics and metabolomics data was necessary to delineate how nicotinamide N-methyltransferase (NNMT)-mediated metabolic reprogramming drives lymph node metastasis in esophageal squamous cell carcinoma through modulation of E-cadherin expression [6]. This cross-level, multidimensional molecular profiling provided insights into disease mechanisms that neither the transcriptomic nor the metabolomic data could have provided alone. Similarly, in COVID-19 research, integrative approaches were required to understand how the virus alters host gene expression and signaling pathways, which informed drug repurposing strategies [7].

Table 2: Experimental Methodologies Revealing Single-Omics Limitations Through Multi-Omics Integration

| Experimental Methodology | Omics Layers Integrated | Key Finding Enabled by Integration |
|---|---|---|
| Perturb-seq/CROP-seq | RNA expression + genetic (CRISPR) perturbation | Maps information-rich genotype-phenotype landscapes by linking genetic perturbations to transcriptional outcomes [4] [7] |
| CITE-seq/REAP-seq | RNA expression + Protein expression | Reveals discrepancies between transcriptional activity and actual protein abundance, providing more accurate functional profiling [4] [1] |
| SNARE-seq/SHARE-seq | RNA expression + Chromatin accessibility | Identifies active regulatory sequences and their target genes, elucidating gene regulatory mechanisms [4] [8] |
| scNMT-seq | RNA expression + DNA methylation + Chromatin accessibility | Enables triple-omics integration to comprehensively profile epigenetic regulation and its functional outcomes [4] |
| ECCITE-seq | RNA expression + Protein expression + T cell receptor + Perturbation | Provides integrated immune profiling by capturing transcriptome, surface proteins, and immune receptor sequences simultaneously [4] |

Inefficient Drug Target Identification

Target-based drug discovery relying on single-omics data has faced high failure rates due to an incomplete understanding of drug mechanisms and biological complexity [7]. Approaches based on a single data type, such as genomics or transcriptomics alone, often fall short in capturing the causal biological mechanisms underlying disease [5]. For example, in cancer drug discovery, targets identified through genomic approaches alone may not account for post-translational modifications, protein-protein interactions, or metabolic adaptations that significantly influence drug response [6]. The resurgence of phenotypic screening signals a return to a biology-first approach, now made considerably more powerful by modern omics data integration, because it allows researchers to observe how cells or organisms respond to perturbations without presupposing a target [7].

How Multi-Omics Integration Overcomes Single-Omics Limitations

Conceptual Framework for Data Integration

Multi-omics integration methods provide frameworks to overcome the fragmentation of single-omics approaches through several computational strategies. These include feature projection methods like canonical correlation analysis and manifold alignment that investigate relationships between variables by capturing anchors maximally correlated across datasets; Bayesian modeling that uses variational inference to model probabilistic relationships between different molecular layers; regression modeling that establishes quantitative relationships between omics layers; and decomposition approaches that break down complex multi-omics data into interpretable components [4]. More recently, network-based integration methods have emerged as powerful tools that incorporate biological network information (protein-protein interactions, metabolic pathways, gene regulatory networks) to integrate multiple layers of molecular data within their biological context [6]. These methods recognize that biomolecules do not perform their functions alone but interact to form biological networks, and that disease states often result from pathway disruptions rather than isolated molecular alterations [6].
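To make the feature-projection idea concrete, the sketch below computes classical canonical correlation analysis (CCA) between two omics matrices measured on the same samples, using plain NumPy. It is a minimal illustration on simulated data, assuming a small ridge term for numerical stability; it is not the implementation used by any particular integration tool cited here.

```python
import numpy as np

def inv_sqrt(c: np.ndarray) -> np.ndarray:
    """Inverse matrix square root of a symmetric positive-definite matrix."""
    vals, vecs = np.linalg.eigh(c)
    return vecs @ np.diag(1.0 / np.sqrt(vals)) @ vecs.T

def cca(x: np.ndarray, y: np.ndarray, n_components: int = 2, ridge: float = 1e-6):
    """Classical CCA between two views (samples x features) of the same samples.

    Returns the canonical correlations and the weight matrix for each view.
    """
    x = x - x.mean(axis=0)
    y = y - y.mean(axis=0)
    n = x.shape[0]
    cxx = x.T @ x / (n - 1) + ridge * np.eye(x.shape[1])
    cyy = y.T @ y / (n - 1) + ridge * np.eye(y.shape[1])
    cxy = x.T @ y / (n - 1)
    # Whiten both views; the SVD of the whitened cross-covariance yields the canonical pairs.
    wx, wy = inv_sqrt(cxx), inv_sqrt(cyy)
    u, s, vt = np.linalg.svd(wx @ cxy @ wy)
    a = wx @ u[:, :n_components]     # weights for view X (e.g. RNA features)
    b = wy @ vt.T[:, :n_components]  # weights for view Y (e.g. protein features)
    return s[:n_components], a, b

# Simulated example: one shared latent factor drives both hypothetical omics layers.
rng = np.random.default_rng(42)
z = rng.normal(size=(200, 1))  # shared biology
rna = z @ rng.normal(size=(1, 10)) + 0.3 * rng.normal(size=(200, 10))
prot = z @ rng.normal(size=(1, 5)) + 0.3 * rng.normal(size=(200, 5))

corrs, a, b = cca(rna, prot)
print(np.round(corrs, 3))  # the first canonical correlation should be close to 1
```

The projections `rna @ a[:, 0]` and `prot @ b[:, 0]` act as the "anchors" described above: one direction per layer, chosen so that the two are maximally correlated across samples.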

Practical Implementation and Workflow

The practical implementation of multi-omics integration involves sophisticated computational frameworks designed to handle the distinct feature spaces of different omics modalities. Methods like GLUE (graph-linked unified embedding) address the fundamental challenge of integrating unpaired multi-omics data by modeling regulatory interactions across omics layers explicitly through a knowledge-based guidance graph [8]. This approach bridges different omics-specific feature spaces in a biologically intuitive manner while maintaining scalability to large datasets [8]. The integration workflow typically involves several key steps: (1) processing each omics layer using modality-specific models that account for technical noise and biological variability; (2) constructing or incorporating prior knowledge about cross-omics interactions; (3) aligning cells across modalities using advanced algorithms that preserve biological variation while removing technical artifacts; and (4) performing downstream analysis on the integrated space to extract biological insights [8].
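Step (2) can be illustrated with a deliberately simplified guidance graph in which each chromatin-accessibility peak is linked to genes whose body, extended upstream by a fixed promoter window, it overlaps. The gene names, coordinates, and 2 kb window below are hypothetical, and the code is not the scGLUE API; GLUE's own graph construction is richer than this proximity rule.

```python
from dataclasses import dataclass

@dataclass
class Interval:
    name: str
    chrom: str
    start: int  # 0-based, inclusive
    end: int    # exclusive
    strand: str = "+"

def promoter_extended(gene: Interval, upstream: int) -> tuple[int, int]:
    """Gene body extended upstream by `upstream` bp, respecting strand."""
    if gene.strand == "+":
        return max(0, gene.start - upstream), gene.end
    return gene.start, gene.end + upstream

def overlaps(a_start: int, a_end: int, b_start: int, b_end: int) -> bool:
    return a_start < b_end and b_start < a_end

def build_guidance_edges(peaks, genes, upstream=2000):
    """Link each peak to every gene whose body+promoter window it overlaps.

    Returns a sorted list of (peak_name, gene_name) edges: a toy stand-in
    for a knowledge-based graph connecting ATAC and RNA features.
    """
    edges = []
    for g in genes:
        g_start, g_end = promoter_extended(g, upstream)
        for p in peaks:
            if p.chrom == g.chrom and overlaps(p.start, p.end, g_start, g_end):
                edges.append((p.name, g.name))
    return sorted(edges)

# Hypothetical features on one chromosome.
genes = [
    Interval("GENE_A", "chr1", 10_000, 15_000, "+"),
    Interval("GENE_B", "chr1", 30_000, 32_000, "-"),
]
peaks = [
    Interval("peak1", "chr1", 8_500, 9_000),    # in GENE_A's upstream window
    Interval("peak2", "chr1", 12_000, 12_500),  # inside GENE_A's body
    Interval("peak3", "chr1", 33_000, 33_400),  # upstream of minus-strand GENE_B
    Interval("peak4", "chr1", 50_000, 50_500),  # far from both genes
]
edges = build_guidance_edges(peaks, genes)
print(edges)  # [('peak1', 'GENE_A'), ('peak2', 'GENE_A'), ('peak3', 'GENE_B')]
```

In an unpaired-integration setting, edges like these are what allow a model to relate a peak-based feature space to a gene-based one.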

Single-Omics vs. Multi-Omics Approaches

Research Reagent Solutions for Multi-Omics Studies

Table 3: Essential Research Reagents and Platforms for Multi-Omics Investigations

| Reagent/Platform | Function | Key Application in Multi-Omics |
|---|---|---|
| 10X Genomics Multiome | Simultaneous measurement of RNA expression and chromatin accessibility | Linked analysis of gene regulation and transcriptional output [8] |
| CITE-seq Antibodies | Oligo-tagged antibodies for protein detection | Integrated transcriptome and proteome analysis at single-cell resolution [4] [1] |
| Cell Painting Assay | Fluorescent dye-based profiling of cell morphology | Connection of morphological phenotypes with molecular profiles [7] |
| Perturb-seq Libraries | CRISPR guides paired with transcriptomic profiling | High-throughput functional screening linking genetic perturbations to transcriptional outcomes [4] [7] |
| Single-Cell Barcoding | DNA oligonucleotides for sample multiplexing | Reduction of batch effects in large-scale studies through sample pooling [1] |

The limitations of single-omics approaches fundamentally stem from their fragmented nature, which cannot capture the complex, interconnected reality of biological systems. As we have explored, these limitations include the concealment of cellular heterogeneity, the inability to establish causal relationships between molecular layers, regulatory blind spots, and ultimately insufficient predictive power for clinical applications. The consequences manifest in incomplete elucidation of disease mechanisms and inefficient drug target identification, highlighting the critical need for a paradigm shift in how we approach biological investigation.

Multi-omics integration represents this necessary evolution, moving from isolated observations to systems-level understanding. By simultaneously measuring and integrating multiple molecular dimensions, researchers can unravel the complex interactions and regulatory networks that underlie disease pathogenesis. The integrative frameworks and methodologies discussed provide a path forward, enabling researchers to construct comprehensive molecular maps that account for the true complexity of biological systems. As these approaches continue to mature and become more accessible, they hold the promise of revolutionizing our understanding of disease mechanisms and accelerating the development of more effective, personalized therapeutic strategies.

In the pursuit of understanding complex disease mechanisms, biomedical research has undergone a paradigm shift from reductionist approaches to global-integrative strategies that view biological systems as interconnected networks. This transformation has been fueled by the rise of 'omics' sciences—high-throughput technologies that enable the comprehensive study of an organism's molecular constituents. The core omics layers—genomics, transcriptomics, proteomics, and metabolomics—form a hierarchical framework that mirrors the central dogma of biology while capturing the dynamic complexity of living systems [9] [10]. When integrated, these layers provide a multidimensional perspective of biological phenomena, offering unprecedented opportunities to unravel the intricate molecular underpinnings of health and disease [11].

The fundamental value of multi-omics integration lies in its ability to bridge the gap between genetic blueprint and phenotypic manifestation. While genomics provides the static instruction manual, the subsequent layers capture the dynamic responses and functional outputs that ultimately determine cellular fate in both physiological and pathological states [9] [11]. For researchers and drug development professionals, this integrated approach enables the identification of novel biomarkers, reveals dysregulated pathways, uncovers disease subtypes, and identifies potential therapeutic targets that might remain invisible when examining single omics layers in isolation [12]. The following sections delineate each core omics layer, their technologies, methodologies, and their collective power in advancing precision medicine.

Defining the Core Omics Layers

Genomics

Genomics is the study of an organism's complete set of DNA, including all of its genes and the non-coding regions that comprise the majority of the genome [9] [10]. The genome represents the fundamental blueprint of an organism, containing the inherited instructions that guide development, functioning, and reproduction. Beyond merely sequencing DNA, genomics encompasses functional genomics (studying gene functions), comparative genomics (comparing genes across species), and structural genomics (determining 3D protein structures) [9].

In disease research, genomic approaches aim to identify variations in DNA sequence that correlate with or directly cause pathological states. These variations range from single nucleotide polymorphisms (SNPs)—variations at a single DNA base pair—to larger structural variations such as copy number variations (CNVs), insertions, deletions, and inversions [11] [10]. Genome-wide association studies (GWAS) represent a powerful application of genomics, scanning genomes from multiple individuals to identify genetic markers associated with specific diseases [11]. The completion of the Human Genome Project provided the foundational reference sequence against which individual variations can be identified, paving the way for personalized medicine approaches that account for an individual's unique genetic makeup [10].
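At its core, a GWAS repeats a simple association test at each variant. The sketch below shows one common choice, a 1-degree-of-freedom allelic chi-square test on a 2x2 table of allele counts in cases versus controls. The counts are invented, and real analyses typically use regression models with covariates (for example, ancestry principal components) and a genome-wide significance threshold such as p < 5e-8.

```python
import math

def allelic_chi2(case_alt: int, case_ref: int, ctrl_alt: int, ctrl_ref: int):
    """Pearson chi-square test (1 df) on a 2x2 allele-count table.

    Returns (chi2, p_value, odds_ratio). No continuity correction is applied.
    """
    table = [[case_alt, case_ref], [ctrl_alt, ctrl_ref]]
    row = [sum(r) for r in table]
    col = [case_alt + ctrl_alt, case_ref + ctrl_ref]
    n = sum(row)
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            expected = row[i] * col[j] / n
            chi2 += (table[i][j] - expected) ** 2 / expected
    # For 1 degree of freedom, the chi-square survival function equals erfc(sqrt(x / 2)).
    p = math.erfc(math.sqrt(chi2 / 2))
    odds_ratio = (case_alt * ctrl_ref) / (case_ref * ctrl_alt)
    return chi2, p, odds_ratio

# Invented counts for one SNP: 1,000 cases and 1,000 controls (2,000 alleles each).
chi2, p, or_ = allelic_chi2(case_alt=600, case_ref=1400, ctrl_alt=500, ctrl_ref=1500)
print(f"chi2={chi2:.2f}, p={p:.2e}, OR={or_:.2f}")
```

A full scan simply applies such a test to every genotyped variant and then corrects for the very large number of tests performed.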

Transcriptomics

Transcriptomics involves the comprehensive study of an organism's complete set of RNA transcripts, known as the transcriptome [9] [11]. This layer captures the expression dynamics of protein-coding messenger RNAs (mRNAs) and various non-coding RNAs (including long non-coding RNAs, microRNAs, and circular RNAs) that regulate gene expression at multiple levels [11]. The transcriptome serves as a critical intermediary between the static genetic code and the functional protein machinery, reflecting the genes that are actively expressed at a specific time under specific conditions.

Unlike the relatively stable genome, the transcriptome is highly dynamic, changing rapidly in response to environmental stimuli, developmental cues, and disease states [11]. Transcriptomic analyses can reveal how cells regulate gene expression—which genes are turned on or off, and to what degree—in healthy versus diseased tissues. In cancer research, for example, transcriptomics can identify gene fusions and alternative splicing events that contribute to oncogenesis [13]. Single-cell transcriptomics has emerged as a particularly powerful approach, enabling researchers to profile gene expression patterns in individual cells, thereby uncovering cellular heterogeneity within tissues that bulk analyses would average out [11].

Proteomics

Proteomics is the large-scale study of the entire complement of proteins—the proteome—in a biological system at a specific point in time [9]. Proteins serve as the primary functional actors within cells, performing countless tasks including structural support, catalysis of biochemical reactions, signal transduction, and molecular transport. The proteome is exceptionally complex and dynamic, with protein abundance, localization, modifications, and interactions constantly changing in response to intracellular and extracellular signals.

Proteomic analyses provide critical information that cannot be inferred from genomic or transcriptomic data alone, due to post-transcriptional regulation, varying protein half-lives, and extensive post-translational modifications (PTMs) such as phosphorylation, glycosylation, and ubiquitination that profoundly affect protein function [11]. Mass spectrometry-based techniques have become the cornerstone of modern proteomics, enabling the identification and quantification of thousands of proteins simultaneously [13]. Applications in disease research include comparing protein expression profiles between healthy and diseased tissues, mapping protein-protein interaction networks dysregulated in pathology, and identifying PTM patterns that alter cellular signaling in disorders such as Alzheimer's disease and cancer [11].

Metabolomics

Metabolomics focuses on the systematic study of small molecule metabolites, typically under 1,500 Da in molecular weight, that represent the end products of cellular processes [9]. The metabolome provides a direct readout of cellular activity and physiological status, capturing the ultimate response of a biological system to genetic, environmental, or therapeutic influences. Metabolites include substrates, intermediates, and products of metabolic pathways—such as carbohydrates, lipids, amino acids, and nucleotides—that reflect the functional outcome of molecular events at the genomic, transcriptomic, and proteomic levels.

Because metabolites are directly involved in cellular energy production, biosynthesis, and catabolism, their levels can immediately reflect pathological disruptions to homeostasis [9] [11]. Metabolomics is particularly valuable in translational research for identifying diagnostic biomarkers, understanding disease mechanisms, and monitoring therapeutic responses [13]. The close relationship between metabolite profiles and phenotype makes metabolomics a powerful tool for connecting molecular signatures to clinical manifestations, such as distinguishing metabolic subtypes of tumors or identifying circulating biomarkers for early disease detection [11].

Methodologies and Technologies for Omics Data Generation

The technological revolution in high-throughput analytical methods has been the driving force behind the rise of omics sciences. Each omics layer relies on specialized platforms and protocols designed to capture its particular class of biomolecules comprehensively and accurately.

Table 1: Core Technologies for Omics Data Generation

| Omics Layer | Primary Technologies | Key Applications in Disease Research | Sample Requirements |
|---|---|---|---|
| Genomics | Next-generation sequencing (NGS), Sanger sequencing, DNA microarrays, Third-generation sequencing (PacBio, ONT) | GWAS, variant discovery (SNPs, CNVs), whole genome/exome sequencing, cancer genomics | DNA from blood, tissue, or cells; varying input amounts based on platform |
| Transcriptomics | RNA sequencing (RNA-seq), single-cell RNA-seq (scRNA-seq), microarrays, NanoString | Gene expression profiling, alternative splicing analysis, biomarker discovery, single-cell heterogeneity | High-quality RNA (RIN >7-8); fresh-frozen tissue optimal; specific protocols for single-cell |
| Proteomics | Mass spectrometry (Orbitrap, MALDI-TOF, FT-ICR), affinity proteomics, protein microarrays | Protein expression quantification, post-translational modification analysis, protein-protein interactions, biomarker validation | Tissue lysates, biofluids; protein extraction with protease/phosphatase inhibitors |
| Metabolomics | LC-MS/MS, GC-MS, NMR spectroscopy, Raman spectroscopy | Metabolic pathway analysis, biomarker discovery, drug metabolism studies, toxicology | Serum, plasma, urine, tissue; rapid quenching of metabolism required |

Genomic Technologies and Protocols

Genomic analysis has evolved dramatically from low-throughput Sanger sequencing to highly parallelized next-generation sequencing (NGS) platforms [10]. The standard workflow begins with DNA extraction and quality control, followed by library preparation where DNA is fragmented and adapter sequences are ligated. For whole genome sequencing, these libraries are sequenced using platforms such as Illumina, which utilizes sequencing-by-synthesis with fluorescently labeled nucleotides, or third-generation platforms like PacBio and Oxford Nanopore that generate long reads ideal for resolving complex genomic regions [13]. For focused analyses, targeted sequencing panels or exome sequencing capture specific regions of interest using hybridization-based probes. Data analysis involves alignment to a reference genome (e.g., GRCh38), variant calling using tools like GATK, and annotation to determine potential functional consequences of identified variants [10].
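To illustrate what the variant-calling step turns aligned reads into, the toy sketch below assigns a diploid genotype at a single position from the counts of reference and alternate bases in the read pileup. The allele-fraction thresholds and minimum depth are arbitrary assumptions; production callers such as GATK instead rely on statistical models of sequencing error and, in HaplotypeCaller, local re-assembly of haplotypes.

```python
from collections import Counter

def call_genotype(pileup: str, ref_base: str, min_depth: int = 10,
                  het_range: tuple[float, float] = (0.2, 0.8)):
    """Naive diploid genotype call from the bases observed at one position.

    pileup   : bases from all reads covering the position, e.g. "AAGAG...".
    ref_base : reference base at that position.
    Returns (genotype, alt_base, alt_fraction); genotype is None if depth is too low.
    """
    counts = Counter(b.upper() for b in pileup if b.upper() in "ACGT")
    depth = sum(counts.values())
    if depth < min_depth:
        return None, None, 0.0
    # The most frequent non-reference base is the candidate alternate allele.
    alts = [(n, b) for b, n in counts.items() if b != ref_base.upper()]
    if not alts:
        return "0/0", None, 0.0
    alt_count, alt_base = max(alts)
    vaf = alt_count / depth  # variant allele fraction
    lo, hi = het_range
    if vaf < lo:
        genotype = "0/0"
    elif vaf <= hi:
        genotype = "0/1"
    else:
        genotype = "1/1"
    return genotype, alt_base, vaf

print(call_genotype("AAAAAGGGGGAAGGGA", ref_base="A"))  # half G: heterozygous
print(call_genotype("GGGGGGGGGGGG", ref_base="A"))      # all G: homozygous alternate
```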

Transcriptomic Technologies and Protocols

Transcriptomic profiling typically begins with RNA extraction, with careful attention to RNA integrity since degradation significantly impacts data quality. For bulk RNA-seq, mRNA is selected using poly-A capture or ribosomal RNA depletion, followed by cDNA synthesis, library preparation, and sequencing [13]. Single-cell RNA-seq requires specialized protocols such as CEL-seq2 or Drop-seq that incorporate cell-specific barcodes during reverse transcription, enabling transcriptome profiling of thousands of individual cells in parallel [13]. Bioinformatics pipelines for transcriptomics include quality control (FastQC), alignment (STAR, HISAT2), quantification (featureCounts, HTSeq), and differential expression analysis (DESeq2, edgeR) [11]. For non-coding RNA analysis, specific library preparation methods that capture small RNAs or long non-coding RNAs are employed.
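Before differential expression testing, counts must be corrected for differences in sequencing depth between samples. As one concrete example, DESeq2's default size factors use the median-of-ratios method; the NumPy sketch below reimplements that idea on an invented count matrix for illustration only. It is not DESeq2's code, and it simply drops genes with a zero count in any sample when forming the pseudo-reference.

```python
import numpy as np

def median_of_ratios_size_factors(counts: np.ndarray) -> np.ndarray:
    """Size factors from a genes x samples count matrix (median-of-ratios idea).

    1. Build a pseudo-reference sample: the per-gene geometric mean across samples.
    2. For each sample, divide its counts by the pseudo-reference.
    3. The size factor is the median of those ratios over genes.
    Genes with a zero count in any sample are excluded (their geometric mean is zero).
    """
    counts = np.asarray(counts, dtype=float)
    expressed = np.all(counts > 0, axis=1)
    log_counts = np.log(counts[expressed])
    log_geo_means = log_counts.mean(axis=1)           # log of per-gene geometric mean
    log_ratios = log_counts - log_geo_means[:, None]  # log(count / pseudo-reference)
    return np.exp(np.median(log_ratios, axis=0))

# Invented matrix: samples 2 and 3 mimic sample 1's library sequenced 2x and 1.5x as deeply.
counts = np.array([
    [10, 20, 15],
    [100, 200, 150],
    [50, 100, 75],
    [0, 5, 3],  # excluded from the pseudo-reference: zero in sample 1
    [30, 60, 45],
])
sf = median_of_ratios_size_factors(counts)
normalized = counts / sf  # divide each sample (column) by its size factor
print(np.round(sf, 3))
```

Using the median rather than the total count makes the factors robust to a minority of genes that are genuinely differentially expressed.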

Proteomic Technologies and Protocols

Mass spectrometry-based proteomics represents the gold standard for comprehensive protein analysis [13]. The typical workflow involves protein extraction, digestion (usually with trypsin), peptide separation via liquid chromatography, and analysis by tandem mass spectrometry. Label-free quantification compares peptide intensities across runs, while isobaric labeling methods (TMT, iTRAQ) enable multiplexed analysis of multiple samples simultaneously [11]. Post-translational modification analysis requires specialized enrichment before MS analysis, such as immunoprecipitation for ubiquitination and metal oxide chromatography for phosphorylation [11]. Data processing involves peptide identification (using software such as MaxQuant and its Andromeda search engine), protein inference, and quantification. Alternative approaches include affinity-based methods such as antibody arrays or reverse-phase protein arrays that offer higher throughput for targeted protein quantification [13].
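The digestion step can be previewed computationally. The sketch below applies the commonly used simplified trypsin rule (cleave after K or R, except when the next residue is P) to a made-up sequence and can optionally model missed cleavages. Real search engines add further constraints, such as peptide length and mass ranges, which are omitted here.

```python
def tryptic_peptides(sequence: str, missed_cleavages: int = 0) -> list[str]:
    """In silico trypsin digest using the simplified 'K/R not before P' rule."""
    seq = sequence.upper()
    # Cleavage sites lie immediately after K or R, unless the next residue is P.
    sites = [i + 1 for i, aa in enumerate(seq[:-1])
             if aa in "KR" and seq[i + 1] != "P"]
    bounds = [0] + sites + [len(seq)]
    fully_cleaved = [seq[bounds[i]:bounds[i + 1]] for i in range(len(bounds) - 1)]
    peptides = []
    # Join up to `missed_cleavages` adjacent fragments to model incomplete digestion.
    for start in range(len(fully_cleaved)):
        for extra in range(missed_cleavages + 1):
            end = start + extra + 1
            if end <= len(fully_cleaved):
                peptides.append("".join(fully_cleaved[start:end]))
    return peptides

print(tryptic_peptides("AKPLRGKR"))                      # ['AKPLR', 'GK', 'R']
print(tryptic_peptides("AKPLRGKR", missed_cleavages=1))
```

Note that the K in "AKP" is not cleaved because it precedes a proline, so "AKPLR" stays intact.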

Metabolomic Technologies and Protocols

Metabolomic analyses employ either targeted approaches (quantifying a predefined set of metabolites) or untargeted approaches (comprehensively measuring all detectable metabolites) [11]. Sample preparation is critical and varies by analyte class; protein precipitation is common for biofluids, while tissue extraction often uses dual-phase methods to capture both hydrophilic and lipophilic metabolites. Liquid chromatography coupled to mass spectrometry (LC-MS) is the workhorse of metabolomics, with different chromatographic methods (reversed-phase, HILIC, ion-pairing) employed to separate diverse metabolite classes [13]. Gas chromatography-MS (GC-MS) provides excellent separation for volatile compounds, while nuclear magnetic resonance (NMR) spectroscopy offers non-destructive analysis with minimal sample preparation [13]. Data processing includes peak detection, alignment, and metabolite identification using spectral libraries, followed by statistical analysis to identify differentially abundant metabolites.
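Metabolite annotation often begins with accurate-mass matching before confirmation against spectral libraries. The sketch below matches an observed m/z against a tiny, hypothetical library of [M+H]+ ions within a ppm tolerance. Library entries are monoisotopic neutral masses computed from elemental formulas, and the 5 ppm tolerance is an assumption. Glucose and fructose share a formula, so accurate mass alone cannot distinguish them, which is one reason MS/MS spectra and retention times are needed for confirmation.

```python
PROTON_MASS = 1.007276  # Da

# Hypothetical mini-library of monoisotopic neutral masses (Da).
LIBRARY = {
    "glucose (C6H12O6)": 180.063388,
    "fructose (C6H12O6)": 180.063388,
    "alanine (C3H7NO2)": 89.047678,
}

def ppm_error(observed: float, theoretical: float) -> float:
    return (observed - theoretical) / theoretical * 1e6

def match_mz(observed_mz: float, tol_ppm: float = 5.0) -> list[tuple[str, float]]:
    """Return library entries whose [M+H]+ m/z lies within tol_ppm of the observation."""
    hits = []
    for name, neutral_mass in LIBRARY.items():
        theoretical_mz = neutral_mass + PROTON_MASS  # singly protonated ion
        err = ppm_error(observed_mz, theoretical_mz)
        if abs(err) <= tol_ppm:
            hits.append((name, round(err, 2)))
    return hits

print(match_mz(181.0707))  # both hexose isomers match; alanine does not
```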

Multi-Omics Integration for Disease Mechanism Research

The true power of omics approaches emerges when multiple layers are integrated to construct comprehensive molecular models of disease pathogenesis. Multi-omics integration can be categorized into horizontal (within-omics) and vertical (cross-omics) approaches [14]. Horizontal integration combines datasets of the same omics type across different batches, platforms, or studies to increase statistical power and robustness. Vertical integration combines different omics modalities from the same set of samples to reveal interconnected molecular networks and causal relationships [12].

In practice, multi-omics integration serves several critical functions in disease research: (1) detecting disease-associated molecular patterns across multiple biological layers; (2) identifying molecular subtypes of diseases with distinct clinical outcomes; (3) understanding regulatory processes underlying disease pathogenesis; (4) improving diagnosis and prognosis through combinatorial biomarkers; and (5) predicting drug response based on multi-parametric molecular profiles [12]. For example, integrating genomic, transcriptomic, and proteomic data from tumor samples can reveal how specific mutations alter signaling pathways through changes in protein expression and activity, providing insights for targeted therapy development [11].
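Identifying molecular subtypes (function 2 above) is often approached by vertical integration followed by clustering. The sketch below shows one simple "early integration" variant on simulated data: each omics block is standardized, down-weighted by the square root of its feature count so that larger blocks do not dominate, concatenated, and clustered with a small k-means routine. The block weighting is one assumption among many, and dedicated tools such as MOFA use more principled latent-factor models.

```python
import numpy as np

def scale_block(block: np.ndarray) -> np.ndarray:
    """Z-score each feature, then down-weight the block by 1/sqrt(n_features)."""
    z = (block - block.mean(axis=0)) / block.std(axis=0)
    return z / np.sqrt(block.shape[1])

def kmeans(x: np.ndarray, k: int, n_iter: int = 100) -> np.ndarray:
    """Lloyd's k-means with deterministic farthest-point initialization."""
    centers = [x[0]]
    for _ in range(1, k):
        # Squared distance from every sample to its nearest already-chosen center.
        d = np.min([((x - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(x[np.argmax(d)])
    centers = np.array(centers)
    for _ in range(n_iter):
        dists = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        new_centers = np.array([x[labels == j].mean(axis=0) for j in range(k)])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels

# Simulated cohort: 40 samples from two hypothetical subtypes (20 each).
rng = np.random.default_rng(1)
truth = np.repeat([0, 1], 20)
rna = rng.normal(size=(40, 20))
rna[truth == 1, :10] += 3.0     # subtype signal in 10 of 20 transcripts
protein = rng.normal(size=(40, 8))
protein[truth == 1, :4] += 3.0  # subtype signal in 4 of 8 proteins

# Early (concatenation-based) vertical integration of the two blocks.
integrated = np.hstack([scale_block(rna), scale_block(protein)])
labels = kmeans(integrated, k=2)

# Cluster IDs are arbitrary, so compare with the truth up to relabeling.
agreement = max(np.mean(labels == truth), np.mean(labels != truth))
print(f"agreement with simulated subtypes: {agreement:.2f}")
```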

Computational methods for multi-omics integration range from correlation-based approaches that identify associations between different molecular layers, to network-based methods that model complex interactions, to machine learning and deep learning algorithms that predict clinical outcomes from high-dimensional multi-omics data [11]. The emergence of single-cell multi-omics and spatial omics technologies now enables researchers to capture multiple omics layers while preserving cellular resolution and tissue context, providing unprecedented insights into cellular heterogeneity and microenvironmental interactions in diseased tissues [11].
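A minimal example of the correlation-based end of this spectrum is checking, gene by gene, how well mRNA abundance tracks protein abundance across matched samples, a relationship that is often only moderate because of post-transcriptional regulation. The sketch below computes per-gene Spearman correlations (Pearson correlation of average ranks) with NumPy; the gene names and values are invented.

```python
import numpy as np

def average_ranks(x: np.ndarray) -> np.ndarray:
    """Ranks starting at 1, with tied values given their average rank."""
    order = np.argsort(x, kind="mergesort")
    ranks = np.empty(len(x), dtype=float)
    ranks[order] = np.arange(1, len(x) + 1)
    # Average the ranks within each group of tied values.
    for value in np.unique(x):
        tied = x == value
        ranks[tied] = ranks[tied].mean()
    return ranks

def spearman(x, y) -> float:
    """Spearman correlation as the Pearson correlation of average ranks."""
    rx = average_ranks(np.asarray(x, dtype=float))
    ry = average_ranks(np.asarray(y, dtype=float))
    return float(np.corrcoef(rx, ry)[0, 1])

# Invented matched measurements across six samples for two hypothetical genes.
mrna = {
    "GENE_X": [5.0, 7.2, 8.1, 9.9, 12.4, 15.0],
    "GENE_Y": [2.0, 2.5, 3.1, 4.8, 5.0, 6.7],
}
protein = {
    "GENE_X": [1.1, 1.9, 3.2, 3.9, 5.3, 6.0],  # tracks its transcript
    "GENE_Y": [3.0, 1.0, 2.5, 2.0, 3.1, 0.5],  # decoupled from its transcript
}
rho = {g: spearman(mrna[g], protein[g]) for g in mrna}
print({g: round(r, 3) for g, r in rho.items()})
```

Genes with low mRNA-protein concordance are natural candidates for follow-up on translational or post-translational regulation.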

Diagram 1: Multi-Omics Integration in Disease Research. This workflow illustrates the hierarchical relationships between core omics layers and their applications in disease mechanism studies.

Essential Research Reagents and Reference Materials

Robust multi-omics research requires well-characterized reagents and reference materials that ensure analytical validity and reproducibility across experiments and laboratories. The table below outlines essential research solutions for generating high-quality multi-omics data.

Table 2: Essential Research Reagent Solutions for Multi-Omics Studies

| Reagent Category | Specific Examples | Function and Application | Quality Considerations |
|---|---|---|---|
| Reference Materials | Quartet reference materials (DNA, RNA, protein, metabolites), NIST reference materials, Coriell cell lines | Platform calibration, batch effect correction, proficiency testing, quality control | Stability, commutability, well-characterized properties, representation of diversity |
| Nucleic Acid Extraction Kits | Qiagen DNeasy/RNeasy kits, Promega Maxwell kits, Zymo Research kits | High-quality DNA/RNA isolation from diverse sample types | Yield, purity (A260/280 ratio), integrity (RIN for RNA), removal of inhibitors |
| Library Preparation Kits | Illumina Nextera, KAPA HyperPrep, NEB Next kits | Preparation of sequencing libraries from nucleic acids | Efficiency, bias, complexity, compatibility with downstream platforms |
| Mass Spectrometry Standards | Pierce Quantitative standards, iRT kits, Stable isotope-labeled internal standards | Retention time calibration, quantitative accuracy, instrument performance monitoring | Purity, solubility, stability, concentration accuracy |
| Chromatography Columns | Waters Acquity, Thermo Accucore, Agilent ZORBAX | Separation of analytes prior to mass spectrometry analysis | Reproducibility, peak shape, pressure stability, lifetime |
| Bioinformatics Tools | GATK, DESeq2, MaxQuant, XCMS, MOFA | Data processing, quality control, statistical analysis, data integration | Documentation, active development, community support, benchmarking |

The emergence of multi-omics reference materials, such as the Quartet suite developed from B-lymphoblastoid cell lines of a family quartet, represents a significant advancement for quality assurance in integrative studies [14]. These materials provide "built-in truth" defined by genetic relationships and the central dogma of information flow from DNA to RNA to protein, enabling objective assessment of data quality and integration methods across platforms and laboratories [14]. Ratio-based profiling approaches that scale absolute feature values of study samples relative to concurrently measured common reference samples have demonstrated improved reproducibility and comparability for multi-omics data integration [14].
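The ratio-based idea can be sketched in a few lines. This is a minimal illustration that assumes a features × samples matrix of non-negative abundances. It uses the per-feature mean of the reference replicates from the same batch as the denominator. The function name `ratio_profile`, the log2 scale, and the pseudocount are illustrative choices, not the Quartet project's published pipeline.

```python
import numpy as np


def ratio_profile(study, reference, pseudocount=1.0):
    """Log2 ratios of study samples to a concurrently measured common reference.

    study:     (n_features, n_study_samples) absolute values from one batch
    reference: (n_features, n_reference_replicates) reference sample values
               measured in the same batch; their per-feature mean is the
               denominator (an assumption of this sketch)
    """
    study = np.asarray(study, dtype=float)
    reference = np.asarray(reference, dtype=float)
    ref_mean = reference.mean(axis=1, keepdims=True)
    # The pseudocount guards against log2(0) for features absent in a sample.
    return np.log2(study + pseudocount) - np.log2(ref_mean + pseudocount)


# Two batches measure identical biology, but batch 2 carries a 2x scaling artifact.
rng = np.random.default_rng(0)
signal = rng.uniform(10, 100, size=(5, 1))                 # 5 features
batch1_study, batch1_ref = 3 * signal, np.repeat(signal, 3, axis=1)
batch2_study, batch2_ref = 2 * batch1_study, 2 * batch1_ref

r1 = ratio_profile(batch1_study, batch1_ref, pseudocount=0.0)
r2 = ratio_profile(batch2_study, batch2_ref, pseudocount=0.0)
print(np.allclose(r1, r2))  # True: the multiplicative batch factor cancels
```

Because the reference is measured alongside the study samples, any multiplicative batch factor applied to both cancels in the ratio. This is the intuition behind the improved cross-batch comparability described above.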

The core omics layers—genomics, transcriptomics, proteomics, and metabolomics—provide complementary and increasingly comprehensive views of biological systems that are transforming our approach to understanding disease mechanisms. While each layer offers valuable insights independently, their integration through multi-omics strategies captures the complexity and dynamics of pathological processes more completely than any single approach. For researchers and drug development professionals, these technologies offer powerful tools for biomarker discovery, disease subtyping, target identification, and therapeutic monitoring.

Despite remarkable progress, challenges remain in standardizing methodologies, managing computational complexity, integrating diverse data types, and translating findings into clinical applications. The development of robust reference materials, improved computational integration methods, and standardized protocols will be crucial for advancing the field. As technologies continue to evolve, particularly in single-cell and spatial omics, and as large-scale initiatives such as the Multi-Omics for Health and Disease Consortium generate increasingly comprehensive datasets, integrative omics approaches promise to deepen our understanding of disease pathogenesis and accelerate the development of precision medicine interventions [15]. With continued methodological refinement and collaborative science, multi-omics integration is likely to play an increasingly central role in biomedical research and therapeutic development.

The sequencing of the first human genome marked the beginning of a new era in biological research, paving the way for the development of high-throughput technologies that generate massive-scale molecular data across multiple layers of biological regulation [16]. This post-genomic landscape now encompasses various "omics" fields, including genomics, transcriptomics, proteomics, and metabolomics, each providing distinct but interconnected insights into cellular functions and disease processes [17]. While single-omics analyses have yielded valuable discoveries, they offer limited perspectives on the complex, multi-layered nature of biological systems. Multi-omics integration has emerged as a transformative approach that combines data from these different molecular layers to provide a more comprehensive understanding of disease mechanisms and enable the transition from observing correlations to inferring causation in biological pathways [18].

The fundamental premise of multi-omics integration rests on the conceptual framework of the "omics cascade," which represents the sequential flow of biological information from genes to transcripts, proteins, and metabolites [19]. This flow is not strictly linear. It involves complex regulatory interactions and feedback loops that remain poorly understood. Because each omic layer causally influences the next, multi-omics integration aims to disentangle these relationships so that cell phenotype is captured more completely [20]. The core challenge, and opportunity, lies in moving beyond correlative associations to establish the causal relationships that drive disease phenotypes, thereby enabling more effective diagnostic, prognostic, and therapeutic strategies [6].

Computational Frameworks for Multi-Omics Integration

Data Integration Strategies and Challenges

Integrating multi-omics data presents significant computational challenges due to the inherent heterogeneity of the data types, scales, and sources. Biological datasets are complex, noisy, biased, and heterogeneous, with potential errors arising from measurement mistakes or unknown biological variations [6]. The high-dimensional nature of omics data, often comprising thousands of variables but limited samples, further complicates integration efforts [19]. Several strategic frameworks have been developed to address these challenges, each with distinct advantages for specific research contexts.

Integration approaches can be categorized based on the relationship between samples across omics datasets. Matched integration (vertical integration) combines data from different omics layers within the same set of samples or even the same single cell, using the cell itself as an anchor [20]. Unmatched integration (diagonal integration) involves combining data from different cells or different studies, requiring computational methods to project cells into a co-embedded space to find commonality [20]. Mosaic integration represents an intermediate approach that can be used when experiments have various combinations of omics that create sufficient overlap across samples [20].

From a methodological perspective, integration strategies fall into three primary categories: correlation-based methods, multivariate approaches, and machine learning/artificial intelligence techniques [19]. Statistical and correlation-based methods slightly predominate in practical applications, followed by multivariate approaches and machine learning techniques [19]. The selection of an appropriate integration strategy depends on the research question, data characteristics, and desired biological outcomes, with no one-size-fits-all solution available [20].

Classification of Integration Methods

Table 1: Categories of Multi-Omics Integration Methods

| Category | Subtypes | Key Features | Representative Tools |
|---|---|---|---|
| Correlation-Based Methods | Gene co-expression analysis, Gene-metabolite networks, Similarity Network Fusion | Identify statistically significant associations between omics layers; Build correlation networks; Relatively straightforward implementation | WGCNA, xMWAS, Cytoscape [17] [19] |
| Multivariate Methods | Matrix factorization, Factor analysis, Projection-based methods | Simultaneously analyze multiple variables; Reduce dimensionality; Identify latent factors explaining variance across omics | MOFA+, PLS, PCA [20] [19] |
| Machine Learning/AI Approaches | Neural networks, Variational autoencoders, Graph neural networks | Handle complex nonlinear relationships; Pattern recognition in high-dimensional data; Predict disease states or drug responses | SCHEMA, DCCA, DeepMAPS, GLUE [20] [6] |
| Network-Based Methods | Network propagation/diffusion, Network inference models | Incorporate prior biological knowledge; Model biological interactions; Identify key regulatory nodes | Graph neural networks, Network propagation [6] |

Tool Selection for Specific Applications

The selection of computational tools for multi-omics integration must align with the specific analytical goals and data characteristics. For matched multi-omics data (profiled from the same cell), tools such as Seurat v4, MOFA+, and totalVI effectively leverage the cell as a natural anchor for integration [20]. These tools employ diverse computational approaches including weighted nearest-neighbor, factor analysis, and deep generative models respectively [20]. For unmatched data (from different cells), methods such as GLUE, BindSC, and Seurat v3 utilize techniques like variational autoencoders, canonical correlation analysis, and manifold alignment to project cells into a shared space where commonality can be established [20].

More recently, bridge integration and mosaic integration approaches have been developed to handle complex experimental designs where different samples have various combinations of omics measured [20]. Tools such as StabMap and Cobolt can integrate datasets with unique and shared features by creating a single representation of cells across datasets [20]. For temporal dynamics analysis, methods like MultiVelo employ probabilistic latent variable models to integrate mRNA and chromatin accessibility data across timepoints [20].

Network Biology: From Correlation to Causation

Biological Networks as an Integrative Framework

Network biology provides a powerful conceptual and computational framework for multi-omics integration by representing biological systems as interconnected networks of molecular components and their interactions [6]. This approach aligns with the fundamental organization of biological systems, where biomolecules do not function in isolation but rather through complex interactions that form biological networks [6]. Prominent examples include protein-protein interaction (PPI) networks, gene regulatory networks (GRNs), metabolic networks, and drug-target interaction (DTI) networks [6].

In these network representations, nodes represent individual biological entities (genes, proteins, metabolites), while edges represent interactions or relationships between them [6]. Network-based integration methods can be categorized into four primary types: (1) network propagation/diffusion, which spreads information across the network based on connectivity; (2) similarity-based approaches, which leverage topological similarity between nodes; (3) graph neural networks, which use deep learning on graph-structured data; and (4) network inference models, which predict novel interactions [6]. These approaches have demonstrated particular utility in drug discovery applications, including drug target identification, drug response prediction, and drug repurposing [6].
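Network propagation, the first category above, is often implemented as a random walk with restart (RWR). The sketch below is a generic RWR, not the specific algorithm of any tool cited here. The restart probability of 0.3, the convergence tolerance, the function name, and the toy path graph are all assumptions chosen for illustration. Seeds could be genes flagged in any omics layer. The converged scores then rank every node by its network proximity to those seeds.

```python
import numpy as np


def random_walk_with_restart(adjacency, seeds, restart=0.3, tol=1e-10, max_iter=1000):
    """Propagate seed scores over an undirected network (hypothetical helper).

    adjacency: (n, n) symmetric non-negative matrix, e.g. a PPI network
    seeds:     (n,) non-negative initial scores, e.g. 1 for genes altered in
               any omics layer and 0 otherwise
    restart:   probability of jumping back to the seed distribution at each step
    """
    A = np.asarray(adjacency, dtype=float)
    degree = A.sum(axis=0)
    degree[degree == 0] = 1.0              # avoid division by zero for isolated nodes
    W = A / degree                         # column-normalized transition matrix
    p0 = np.asarray(seeds, dtype=float)
    p0 = p0 / p0.sum()                     # seed distribution sums to 1
    p = p0.copy()
    for _ in range(max_iter):
        p_next = (1 - restart) * (W @ p) + restart * p0
        if np.abs(p_next - p).sum() < tol:  # L1 convergence check
            return p_next
        p = p_next
    return p


# Toy path network 0 - 1 - 2 - 3 with node 0 as the only seed.
path = np.array([[0, 1, 0, 0],
                 [1, 0, 1, 0],
                 [0, 1, 0, 1],
                 [0, 0, 1, 0]])
scores = random_walk_with_restart(path, seeds=[1, 0, 0, 0])
print(scores.round(3))  # scores decay with distance from the seed
```

A higher restart probability keeps scores concentrated near the seeds, while a lower one lets them diffuse further through the network.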

Establishing Causal Relationships in Networks

A critical challenge in network biology is distinguishing correlative relationships from causal relationships. While correlation-based methods can identify associations between molecular features, establishing causality requires additional analytical approaches and experimental validation. Several strategies have been developed to address this challenge, including the use of prior biological knowledge from databases of known interactions, temporal sequencing of omics measurements to establish chronology, and causal inference methods that leverage genetic variation or perturbation data [6].

For instance, Graph-Linked Unified Embedding (GLUE) is a network-based method that can achieve triple-omic integration using a graph variational autoencoder framework [20]. GLUE learns how to anchor features using prior biological knowledge, which it uses to link omic data and infer regulatory relationships [20]. Similarly, tools like CellOracle focus specifically on modeling gene regulatory networks by integrating mRNA expression, CRISPR screening, and chromatin accessibility data to infer causal regulatory relationships [20].

Table 2: Network-Based Approaches for Causal Inference

| Method Type | Mechanism | Data Requirements | Causal Inference Strength |
|---|---|---|---|
| Gene Regulatory Network Inference | Models regulatory relationships between transcription factors and target genes | Chromatin accessibility, TF binding motifs, Gene expression | High for transcriptional regulation |
| Network Propagation | Diffuses information through known interaction networks | Protein-protein interactions, Pathway databases | Medium (depends on reference network quality) |
| Causal Mediation Analysis | Tests whether the effect of an independent variable on a dependent variable goes through a mediator | Multi-omics data with intervention or natural variation | High for established mediators |
| Directional Network Models | Incorporates directional relationships using Bayesian or structural equation models | Time-series data, Knockdown/perturbation data | High with appropriate experimental design |

Workflow for Network-Based Multi-Omics Integration

The following diagram illustrates a generalized workflow for network-based multi-omics integration to infer causal relationships:

Experimental Design and Methodological Protocols

Correlation-Based Integration Protocols

Correlation-based methods are a foundational approach to multi-omics integration, particularly for generating initial hypotheses about relationships between molecular layers. Weighted Gene Co-expression Network Analysis (WGCNA) can be extended to integrate transcriptomics and metabolomics data through a systematic protocol [17]. First, co-expression analysis is performed on the transcriptomics data to identify modules of co-expressed genes. Each module is summarized by its eigengene, the first principal component of the module's expression profile, which represents the module's overall expression pattern. In parallel, the metabolomics data are processed and normalized. Correlations between module eigengenes and metabolite abundance patterns are then calculated to identify significant associations [17]. This approach can reveal how coordinated gene expression relates to metabolic changes under specific biological conditions.
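The eigengene step can be sketched with NumPy alone. This is a minimal illustration, not the WGCNA R package. It assumes each module's genes have already been assigned, and it uses simulated data in which a hypothetical latent "driver" controls both the module and one metabolite. Following WGCNA's default convention, the eigengene's arbitrary sign is aligned with the module's average expression.

```python
import numpy as np


def module_eigengene(expr):
    """First principal component of one module's expression matrix.

    expr: (n_samples, n_genes) expression values for the genes in a module.
    The sign of a principal component is arbitrary, so it is flipped to
    agree with the module's average (standardized) expression.
    """
    X = np.asarray(expr, dtype=float)
    X = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)   # standardize each gene
    U, _, _ = np.linalg.svd(X, full_matrices=False)
    eigengene = U[:, 0]
    if np.corrcoef(eigengene, X.mean(axis=1))[0, 1] < 0:
        eigengene = -eigengene
    return eigengene


# Toy data: 10 co-expressed genes and one metabolite share a latent driver.
rng = np.random.default_rng(1)
driver = rng.normal(size=20)                                   # 20 samples
module = driver[:, None] + rng.normal(scale=0.3, size=(20, 10))
metabolite = 2.0 * driver + rng.normal(scale=0.5, size=20)

me = module_eigengene(module)
r = np.corrcoef(me, metabolite)[0, 1]
print(f"eigengene-metabolite correlation: {r:.2f}")
```

In a real analysis this correlation would be computed for every module-metabolite pair and tested for significance.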

For gene-metabolite network construction, a standardized protocol involves collecting matched gene expression and metabolite abundance data from the same biological samples, followed by data normalization [17]. Pairwise correlations (e.g., Pearson or Spearman correlation coefficients) between all gene-metabolite pairs are calculated, and statistical significance is determined. A correlation network is then constructed where nodes represent genes and metabolites, and edges represent significant correlations above predetermined thresholds (e.g., R² > 0.8 and p-value < 0.05) [17]. Network visualization and analysis tools like Cytoscape are employed to identify highly connected regions and key regulatory nodes [17]. This method has been successfully applied to identify key regulatory pathways in various biological contexts, including plant stress responses and cancer metabolism [17].
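The thresholding step of this protocol can be sketched as follows, using `scipy.stats.pearsonr` and the example cut-offs from the text (R² > 0.8, p < 0.05). The helper names and the simulated data are hypothetical. No multiple-testing correction is applied, although one is usually advisable when many gene-metabolite pairs are tested. The `to_sif` helper writes edges in Cytoscape's tab-delimited simple interaction format (source, interaction, target). The `pos_corr`/`neg_corr` interaction labels are an arbitrary choice.

```python
import numpy as np
from scipy import stats


def gene_metabolite_edges(genes, metabolites, gene_names, metabolite_names,
                          r2_min=0.8, p_max=0.05):
    """Pairwise Pearson correlations between genes and metabolites.

    genes:       (n_samples, n_genes) normalized expression
    metabolites: (n_samples, n_metabolites) normalized abundances, same sample order
    Returns (gene, metabolite, r) tuples passing both thresholds.
    """
    edges = []
    for i, gene in enumerate(gene_names):
        for j, met in enumerate(metabolite_names):
            r, p = stats.pearsonr(genes[:, i], metabolites[:, j])
            if r ** 2 > r2_min and p < p_max:
                edges.append((gene, met, float(r)))
    return edges


def to_sif(edges):
    """Format edges as SIF lines (source, interaction, target) for Cytoscape import."""
    return [f"{g}\t{'pos_corr' if r > 0 else 'neg_corr'}\t{m}" for g, m, r in edges]


rng = np.random.default_rng(42)
n = 12
gene_a, gene_b = rng.normal(size=n), rng.normal(size=n)
met_x = 1.5 * gene_a + rng.normal(scale=0.2, size=n)   # driven by gene A
met_y = rng.normal(size=n)                              # unrelated
genes = np.column_stack([gene_a, gene_b])
mets = np.column_stack([met_x, met_y])
edges = gene_metabolite_edges(genes, mets, ["geneA", "geneB"], ["metX", "metY"])
print("\n".join(to_sif(edges)))
```

Spearman correlation (`stats.spearmanr`) can replace Pearson when monotonic but non-linear relationships are expected.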

Machine Learning Integration Protocols

Machine learning approaches offer powerful alternatives for detecting complex, non-linear relationships in multi-omics data. The multi-omics variational autoencoder framework provides a robust protocol for integrating multiple omics layers [20]. Each omics data type is first preprocessed and normalized separately. The model architecture consists of separate encoders for each omics type that map the input data to a shared latent representation, and separate decoders that reconstruct each omics type from the latent representation [20]. The training objective combines reconstruction loss for each omics type with regularization of the latent space. Once trained, the shared latent representation can be used for downstream tasks such as disease classification, subtyping, or survival prediction [20]. This approach has been implemented in tools like scMVAE and DCCA for single-cell multi-omics integration [20].
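To make the encoder, shared latent space, and decoder structure concrete, here is a deliberately minimal NumPy sketch. It uses untrained linear layers and only computes the loss for one forward pass. Fusing the per-omics posteriors with a product of Gaussian experts is one common design choice and is an assumption here. The cited tools, such as scMVAE and DCCA, use their own architectures. Training would require gradient-based optimization in an autodiff framework, which is omitted. All class and function names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)


def init_linear(n_in, n_out):
    """Small random weights for an untrained linear layer (illustration only)."""
    return rng.normal(scale=0.1, size=(n_in, n_out)), np.zeros(n_out)


def linear(x, params):
    weights, bias = params
    return x @ weights + bias


class MultiOmicsVAE:
    """Linear multi-omics VAE sketch: one encoder and one decoder per omics
    layer, all sharing a single latent space. Untrained; shows data flow only."""

    def __init__(self, input_dims, latent_dim):
        self.enc_mu = {k: init_linear(d, latent_dim) for k, d in input_dims.items()}
        self.enc_logvar = {k: init_linear(d, latent_dim) for k, d in input_dims.items()}
        self.dec = {k: init_linear(latent_dim, d) for k, d in input_dims.items()}

    def encode(self, data):
        """Fuse per-omics Gaussian posteriors with a product of experts."""
        precisions, weighted_means = [], []
        for k, x in data.items():
            mu_k = linear(x, self.enc_mu[k])
            precision_k = np.exp(-linear(x, self.enc_logvar[k]))
            precisions.append(precision_k)
            weighted_means.append(mu_k * precision_k)
        var = 1.0 / np.sum(precisions, axis=0)
        mu = var * np.sum(weighted_means, axis=0)
        return mu, np.log(var)

    def loss(self, data):
        """Per-omics reconstruction error plus KL(q(z|x) || N(0, I))."""
        mu, logvar = self.encode(data)
        z = mu + np.exp(0.5 * logvar) * rng.normal(size=mu.shape)  # reparameterization
        recon = sum(np.mean((linear(z, self.dec[k]) - x) ** 2) for k, x in data.items())
        kl = -0.5 * np.mean(np.sum(1 + logvar - mu ** 2 - np.exp(logvar), axis=1))
        return recon + kl, mu


n_samples = 8
data = {"rna": rng.normal(size=(n_samples, 50)),
        "protein": rng.normal(size=(n_samples, 20))}
model = MultiOmicsVAE({"rna": 50, "protein": 20}, latent_dim=4)
total_loss, latent = model.loss(data)
print(latent.shape)  # (8, 4): one shared embedding per sample
```

After training, the `latent` means would serve as the shared representation for downstream classification, subtyping, or survival models.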

For temporal multi-omics integration, a protocol using MultiVelo incorporates RNA velocity concepts to model causal relationships between chromatin accessibility and gene expression [20]. The method requires paired scRNA-seq and scATAC-seq data from the same cells across multiple timepoints. First, RNA velocity is estimated from splicing dynamics, while chromatin velocity is estimated from chromatin accessibility dynamics [20]. The model then uses a probabilistic latent variable model to jointly model these velocities and infer a shared latent time that captures the underlying cellular dynamics. This allows for the prediction of future cellular states and the inference of causal relationships between epigenetic changes and transcriptional outcomes [20].
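The kinetic idea behind this protocol can be illustrated with a small system of ordinary differential equations. The sketch below is a simplified, deterministic chromatin, unspliced mRNA, spliced mRNA model in the spirit of MultiVelo's formulation. The rate values, the single chromatin-closing switch time, and the Euler integration are assumptions for illustration only. The sketch does not perform MultiVelo's per-gene parameter fitting or latent-time inference.

```python
import numpy as np


def simulate_chromatin_rna(alpha_c=0.5, alpha=2.0, beta=1.0, gamma=0.5,
                           t_switch=20.0, t_end=40.0, dt=0.01):
    """Euler integration of a chromatin -> unspliced -> spliced kinetic model.

    dc/dt = k_c * alpha_c - alpha_c * c   (k_c = 1 while opening, 0 after t_switch)
    du/dt = alpha * c - beta * u           (transcription gated by accessibility)
    ds/dt = beta * u - gamma * s           (splicing minus degradation = RNA velocity)
    Returns rows of (t, c, u, s). All parameter values are illustrative.
    """
    n_steps = int(round(t_end / dt))
    c = u = s = 0.0
    trajectory = np.empty((n_steps, 4))
    for i in range(n_steps):
        t = i * dt
        trajectory[i] = (t, c, u, s)
        k_c = 1.0 if t < t_switch else 0.0
        dc = k_c * alpha_c - alpha_c * c
        du = alpha * c - beta * u
        ds = beta * u - gamma * s
        c, u, s = c + dt * dc, u + dt * du, s + dt * ds
    return trajectory


traj = simulate_chromatin_rna()
before_switch = traj[1999]   # t = 19.99, near steady state (c ~ 1, u ~ 2, s ~ 4)
velocity_end = 1.0 * traj[-1, 2] - 0.5 * traj[-1, 3]   # beta*u - gamma*s at t_end
print(before_switch.round(2), velocity_end < 0)
```

After chromatin closes, accessibility falls first, unspliced mRNA follows, and spliced mRNA declines last, so RNA velocity turns negative. This ordering is the kind of lagged relationship that lets such models link epigenetic changes to later transcriptional outcomes.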

Experimental Protocol for Multi-Omics Causal Validation

Establishing causality requires experimental validation beyond computational inference. A multi-phase validation protocol begins with computational identification of candidate causal relationships using the methods described above. The second phase involves perturbation experiments using techniques such as CRISPR/Cas9 for gene knockout, RNA interference for gene knockdown, or small molecule inhibitors for protein inhibition [16]. Following perturbation, multi-omics profiling is repeated to assess the effects on downstream molecular layers. The third phase employs causal mediation analysis to statistically test whether changes in the putative causal mediator (e.g., chromatin accessibility) account for the effect of the perturbation on the outcome (e.g., gene expression) [6]. Finally, functional assays relevant to the disease context are performed to confirm the physiological relevance of the identified causal relationship [16].
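The causal mediation step can be sketched with the classic product-of-coefficients estimator and a percentile bootstrap. This is one standard approach, assumed here for illustration. It presumes linear relationships and no unmeasured confounding of the mediator-outcome relationship. The helper names and the simulated effect sizes are hypothetical.

```python
import numpy as np


def ols(design, y):
    """Ordinary least-squares coefficients for y ~ design."""
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef


def mediation_effects(x, m, y):
    """Product-of-coefficients mediation for the path x -> m -> y.

    x: perturbation indicator (0 = control, 1 = perturbed)
    m: putative mediator (e.g., chromatin accessibility at a locus)
    y: outcome (e.g., expression of the target gene)
    """
    ones = np.ones(len(x))
    a = ols(np.column_stack([ones, x]), m)[1]              # x -> m
    _, direct, b = ols(np.column_stack([ones, x, m]), y)   # x -> y | m, and m -> y | x
    # For linear OLS models, indirect + direct equals the total effect of x on y.
    return {"indirect": a * b, "direct": direct, "total": a * b + direct}


def bootstrap_indirect_ci(x, m, y, n_boot=2000, seed=0):
    """Percentile bootstrap 95% interval for the indirect (mediated) effect."""
    rng = np.random.default_rng(seed)
    n = len(x)
    draws = []
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)
        draws.append(mediation_effects(x[idx], m[idx], y[idx])["indirect"])
    return np.percentile(draws, [2.5, 97.5])


rng = np.random.default_rng(7)
x = np.repeat([0.0, 1.0], 100)                   # 100 controls, 100 perturbed samples
m = 1.5 * x + rng.normal(scale=0.5, size=200)    # perturbation shifts accessibility
y = 2.0 * m + rng.normal(scale=0.5, size=200)    # expression depends only on m
effects = mediation_effects(x, m, y)
ci = bootstrap_indirect_ci(x, m, y)
print(effects, ci)
```

In this simulation the indirect effect should be close to 1.5 × 2 = 3 with a direct effect near zero. That pattern is what full mediation by the putative mediator looks like.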

Case Studies in Disease Research

Alzheimer's Disease Trajectory Prediction

In neurodegenerative disease research, an approach called Machine Learning for Visualizing AD (ML4VisAD) was developed to predict and visualize Alzheimer's disease progression through a color-coded visual output [21]. This method integrated multimodal data including neuroimaging (MRI, PET), neuropsychological test scores, cerebrospinal fluid biomarkers (amyloid beta, phosphorylated tau protein, total tau protein), and risk factors (age, gender, education, ApoE4 gene) [21]. The model used a convolutional neural network architecture that took baseline measurements as input to generate visual images reflecting disease progression at different time points. The approach achieved an accuracy of 0.82±0.03 for 3-way classification and 0.68±0.05 for 5-way classification, illustrating the value of integrating multimodal clinical and molecular data for predicting disease trajectories [21].

The implementation provided not just classification but also visual interpretation of the decision-making process, offering insights into which biomarkers contributed most to disease progression predictions [21]. This addresses the "black box" problem often associated with complex machine learning models and allows clinicians to assess the rationale behind specific classifications, particularly for challenging converter cases (patients who transition between diagnostic categories over time) [21].

Cancer Subtyping and Biomarker Discovery

In oncology, multi-omics integration has revolutionized cancer subtyping and biomarker discovery. The Cancer Genome Atlas (TCGA) is one of the most comprehensive multi-omics resources, housing data for 33 cancer types and more than 20,000 samples [18]. TCGA includes diverse data types: RNA-Seq, DNA-Seq, miRNA-Seq, single-nucleotide variants, copy number variations, DNA methylation, and reverse phase protein array data [18]. Integrated analysis of these data has enabled molecular reclassification of tumors beyond histopathological criteria.

A notable example comes from colon and rectal cancer research, where integrated proteogenomic analysis revealed that the chromosome 20q amplicon was associated with the largest global changes at both mRNA and protein levels [18]. Integration of proteomics data helped identify potential 20q candidates, including HNF4A, TOMM34, and SRC, that might have been missed by genomic or transcriptomic analysis alone [18]. Similarly, in breast cancer, the Molecular Taxonomy of Breast Cancer International Consortium (METABRIC) used integrated analysis of clinical data, gene expression, SNPs, and CNVs to identify 10 molecular subgroups with distinct clinical outcomes and therapeutic vulnerabilities [18].

Pharmacogenomics and Drug Response Prediction

Multi-omics integration has proven particularly valuable in pharmacogenomics, where it helps elucidate the genetic basis of variable drug responses. For example, studies of the antiplatelet drug clopidogrel have demonstrated how integrating genomic and clinical data can explain treatment failure [16]. Clopidogrel is a prodrug that requires activation by the cytochrome P450 2C19 enzyme (CYP2C19). Loss-of-function variants of this enzyme (particularly CYP2C19\*2) result in reduced drug activation and diminished antiplatelet effects [16]. Heterozygous carriers show intermediate activity that may be overcome by dose adjustment, while homozygous carriers derive little benefit even at increased doses [16]. Conversely, the gain-of-function variant CYP2C19\*17 is associated with increased bleeding risk [16].

The Cancer Cell Line Encyclopedia (CCLE) provides another powerful example, containing comprehensive molecular data (gene expression, copy number, sequencing) and pharmacological profiles for 24 anticancer drugs across 479 cancer cell lines [18]. Integration of these multi-omics and drug response data has enabled the identification of novel biomarkers and mechanistic effectors of drug response, facilitating the development of personalized treatment strategies [18].

Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Multi-Omics Studies

| Category | Specific Reagents/Platforms | Function in Multi-Omics Research |
|---|---|---|
| Sequencing Technologies | Next-Generation Sequencing (NGS) platforms, Single-cell RNA-seq, ATAC-seq | Comprehensive profiling of genome, epigenome, transcriptome; Enables identification of genetic variants, chromatin accessibility, and transcriptional networks [16] |
| Proteomics Platforms | Liquid chromatography-mass spectrometry (LC-MS), Isobaric labeling (TMT, iTRAQ), SILAC | Identification and quantification of proteins and post-translational modifications; Provides crucial data on functional effectors of cellular processes [16] [17] |
| Metabolomics Tools | Mass spectrometry, NMR spectroscopy, Liquid/gas chromatography | Comprehensive profiling of small molecule metabolites; Captures ultimate mediators of metabolic processes and regulatory signals [17] |
| Perturbation Reagents | CRISPR/Cas9 systems, RNA interference tools, Small molecule inhibitors | Experimental manipulation of candidate causal genes/proteins; Functional validation of computationally inferred relationships [16] [6] |
| Reference Databases | Protein-protein interaction databases, Pathway databases (KEGG, Reactome), Drug-target networks | Prior biological knowledge for network construction; Context for interpreting multi-omics findings; Grounding computational predictions in established biology [6] |

Visualization and Interpretation of Integrated Data

Color-Coding Systems for Multi-Omics Visualization

Effective visualization is crucial for interpreting complex multi-omics data. The ten simple rules for colorizing biological data visualization provide essential guidance for creating accessible and informative visualizations [22]. The first rule emphasizes identifying the nature of the data, classifying variables as nominal (categorical without order), ordinal (categorical with order), interval (numerical without true zero), or ratio (numerical with true zero) [22]. This classification directly informs color palette selection, with qualitative palettes suited for nominal data, sequential palettes for ordinal and interval data, and diverging palettes for data with critical midpoint values [22].

Rule 2 highlights the importance of selecting an appropriate color space, with recommendations to use perceptually uniform color spaces like CIE Luv and CIE Lab that align with human visual perception [22]. These spaces ensure that equal numerical changes in color values correspond to approximately equal perceived changes, preventing visual distortion of data patterns [22]. Subsequent rules address critical considerations such as checking color context, evaluating color interactions, assessing color deficiencies, and ensuring accessibility for all users, including those with color vision deficiencies [22].
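The idea behind Rule 2 can be checked numerically by converting sRGB colors to CIE Lab and measuring the CIE76 color difference (ΔE*ab, the Euclidean distance in Lab). This is a minimal sketch. It uses the commonly quoted sRGB-to-XYZ matrix and D65 white point. CIE76 is the simplest difference metric, and CIEDE2000 is more accurate for small differences. The helper names are hypothetical.

```python
import numpy as np

# Commonly used sRGB (D65) -> CIE XYZ matrix and D65 reference white.
SRGB_TO_XYZ = np.array([[0.4124564, 0.3575761, 0.1804375],
                        [0.2126729, 0.7151522, 0.0721750],
                        [0.0193339, 0.1191920, 0.9503041]])
WHITE_D65 = np.array([0.95047, 1.00000, 1.08883])


def srgb_to_lab(rgb255):
    """Convert an 8-bit sRGB triple to CIE Lab (L in 0..100)."""
    rgb = np.asarray(rgb255, dtype=float) / 255.0
    # Undo the sRGB transfer curve to obtain linear-light values.
    linear = np.where(rgb <= 0.04045, rgb / 12.92, ((rgb + 0.055) / 1.055) ** 2.4)
    xyz = SRGB_TO_XYZ @ linear / WHITE_D65
    delta = 6 / 29
    f = np.where(xyz > delta ** 3, np.cbrt(xyz), xyz / (3 * delta ** 2) + 4 / 29)
    L = 116 * f[1] - 16
    a = 500 * (f[0] - f[1])
    b = 200 * (f[1] - f[2])
    return np.array([L, a, b])


def delta_e_76(rgb1, rgb2):
    """CIE76 color difference: Euclidean distance between two colors in Lab."""
    return float(np.linalg.norm(srgb_to_lab(rgb1) - srgb_to_lab(rgb2)))


ramp = [(v, v, v) for v in (0, 64, 128, 192, 255)]   # equal steps in sRGB code values
steps = [delta_e_76(c1, c2) for c1, c2 in zip(ramp, ramp[1:])]
print([round(s, 1) for s in steps])   # perceived step sizes are not equal
```

Equal numeric steps in sRGB do not give equal perceived steps. Building a sequential palette with evenly spaced L values in Lab, or a similar perceptually uniform space, avoids exaggerating or hiding data differences.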

Multi-Omics Visualization in Practice

In applied multi-omics research, the ML4VisAD system demonstrates an innovative approach to visualization, generating color-coded visual outputs that reflect disease progression at different time points [21]. This system used a unique tensorization method to transform multimodal data into images that express disease state and progression, allowing clinicians to visually assess the nuances leading to specific classifications or predictions [21]. The visualization was generated rapidly (0.08 msec for a 23×23 output image and 0.17 msec for a 45×45 output image), enabling real-time application in clinical decision support scenarios [21].

For network visualization, tools like Cytoscape enable the creation of multi-omics networks where nodes represent biological entities across different omics layers and edges represent their relationships [17]. Advanced features allow for the encoding of multiple data dimensions through visual properties such as node color, size, shape, and edge thickness, enabling the representation of complex multi-omics relationships in an intuitive visual format [17]. These visualizations facilitate the identification of key regulatory hubs and bridges between different biological processes, guiding hypothesis generation and experimental design.

The integration of multi-omics data represents a paradigm shift in biological research, enabling the transition from observing correlations to inferring causation in disease pathways. While significant challenges remain—including data heterogeneity, computational complexity, and the need for sophisticated statistical methods—the field has developed robust frameworks for addressing these challenges [20] [19]. The synergistic application of correlation-based methods, multivariate approaches, machine learning, and network biology has demonstrated remarkable potential for unraveling complex biological mechanisms and advancing personalized medicine.

Future developments in multi-omics integration will likely focus on incorporating temporal and spatial dynamics more comprehensively, improving model interpretability, and establishing standardized evaluation frameworks [6]. The growing adoption of single-cell multi-omics technologies and spatial transcriptomics/proteomics will provide unprecedented resolution for studying cellular heterogeneity and tissue organization [20]. Additionally, the integration of clinical data with multi-omics measurements will be essential for translating molecular findings into actionable clinical insights.

As the field progresses, the development of more accessible tools and standardized protocols will be crucial for broadening the adoption of multi-omics integration across the research community. The ultimate goal remains the realization of precision medicine approaches that leverage comprehensive molecular profiling to understand disease mechanisms, predict therapeutic responses, and develop targeted interventions tailored to individual patients [16] [18]. Through continued methodological innovation and collaborative science, multi-omics integration will increasingly illuminate the causal pathways underlying human health and disease.

Biological systems are characterized by inherent complexity and variability, operating across multiple interconnected layers including the genome, transcriptome, proteome, and metabolome [23]. A comprehensive understanding of disease requires integrative, multi-omics analyses that capture these dynamic interactions [23]. Within this framework, organelle-level heterogeneity represents a crucial source of cellular "noise" that contributes significantly to intercellular phenotypic variation [24]. Unlike genetic mechanisms, this non-genetic heterogeneity arises from stochastic processes in cellular components and low molecular numbers, leading to fluctuations that can profoundly impact cellular behavior despite identical genetic backgrounds [24].

The study of organelle heterogeneity provides not only insight into normal physiological functions but also fundamental advances in understanding disease pathogenesis. In many clinically important diseases, extensive heterogeneity renders some cells more resistant to treatment than others, presenting significant therapeutic challenges [24]. This technical guide explores how integrative omics approaches are revolutionizing our understanding of organelle dysfunction, signaling networks, and cellular heterogeneity, providing researchers with methodologies to uncover novel disease mechanisms and therapeutic opportunities.

Theoretical Foundations: Organelle Heterogeneity and Cellular Noise

Cellular heterogeneity can be broadly categorized into two classes: "directed" heterogeneities that play specific roles in normal developmental processes, and "non-directed" heterogeneities that arise spontaneously from the inherent stochasticity of molecular processes [24]. A classic example of directed heterogeneity is asymmetric cell division during Drosophila melanogaster development, where ganglion mother cells consistently divide to produce daughter cells of differing fates [24]. In contrast, non-directed heterogeneity is exemplified by the generation of color-specific photoreceptors in the compound eye of D. melanogaster, where each photoreceptor cell independently chooses to express either blue- or green-sensitive rhodopsin [24].

The distinction between molecular-level and organelle-level variation is critical for understanding phenotypic heterogeneity. While molecular events (transcription, translation, protein turnover) have been extensively studied as sources of variation, organelles serve as reaction vessels for biochemical pathways where fluctuations in abundance, size, and shape can significantly influence functional output [24]. For instance, organelle volume affects capacity for storing reaction intermediates, while surface area influences flux of molecules between cytoplasm and organelle lumen [24].
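Why low copy numbers amplify organelle-level variation can be shown with a toy calculation. The model is an assumption, not taken from the source: each organelle independently goes to a given daughter cell with probability p at division (binomial partitioning). Under this model the coefficient of variation (CV) of inherited organelle counts is sqrt((1 - p) / (N p)). For symmetric division (p = 0.5) this is 1/sqrt(N), so cells with few organelles inherit proportionally much noisier amounts. The helper name is hypothetical.

```python
import numpy as np


def partition_cv(n_organelles, p=0.5, n_divisions=100_000, seed=0):
    """Simulated CV of the organelle count inherited by one daughter cell.

    Assumes each organelle segregates independently, going to this daughter
    with probability p (binomial partitioning). Analytically,
    CV = sqrt((1 - p) / (n_organelles * p)), i.e. 1 / sqrt(N) when p = 0.5.
    """
    rng = np.random.default_rng(seed)
    counts = rng.binomial(n_organelles, p, size=n_divisions)
    return counts.std() / counts.mean()


for n in (4, 16, 64, 256):
    print(f"N={n:>3}  simulated CV={partition_cv(n):.3f}  analytic={1 / np.sqrt(n):.3f}")
```

Real cells can partition organelles more or less precisely than this independent-segregation baseline, which makes it a useful reference point for comparison.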

Table 1: Types and Characteristics of Cellular Heterogeneity

| Type of Heterogeneity | Mechanism | Persistence | Example |
|---|---|---|---|
| Directed | Coordinated cellular decision-making | Often irreversible | Asymmetric cell division in Drosophila development |
| Non-directed | Stochastic molecular processes | Often reversible | Photoreceptor color choice in Drosophila eye |
| Molecular-level | Fluctuations in gene expression, signaling | Fluctuating | Variation in transcription factor concentrations |
| Organelle-level | Variations in organelle size, shape, distribution | More stable | Heterogeneity in mitochondrial morphology and function |

Organelle Dysfunction in Disease

Mitochondrial diseases are a paradigm for studying organelle dysfunction. Although they typically originate from mutations in either nuclear or mitochondrial DNA, they exhibit exceptional clinical variability [25]. These primary mitochondrial diseases have an estimated prevalence of 1:2000 to 1:5000 and can manifest at any age, with either systemic or tissue-specific effects across multiple organ systems [25]. Why mitochondrial disorders show such tissue-specific manifestations is still poorly understood, and deficient ATP synthesis alone does not explain the phenotypic spectrum.

Beyond their role as cellular power plants, mitochondria take part in anabolic functions such as the biosynthetic one-carbon cycle and iron-sulfur cluster synthesis, as well as in cellular stress responses [25]. Their contributions to anabolic biosynthetic pathways offer a plausible mechanism for tissue-specific disease manifestations [25]. Mitochondria also contribute to apoptosis and calcium storage and facilitate signaling between cells [25], underlining their multifaceted roles in cellular physiology and disease.

Integrative Omics Methodologies

Multi-Omics Integration Strategies

Integrative omics approaches combine data from genomics, transcriptomics, proteomics, and metabolomics to provide insights into disease mechanisms that no single layer can offer on its own [19]. These methods can be broadly grouped into three categories: statistics-based methods, multivariate methods, and machine learning/artificial intelligence techniques [19]. Each category has distinct advantages depending on the research question and the characteristics of the data.

Statistical and correlation-based methods are the most basic way to assess relationships between omics datasets. Simple scatterplots can visualize expression patterns and reveal consistent or divergent trends between layers [19]. Pearson's or Spearman's correlation tests pairwise associations. Multivariate generalizations such as the RV coefficient test correlations between whole sets of variables, for example differentially expressed genes in different biological contexts [19]. Correlation networks extend this analysis by turning pairwise associations into graphs in which nodes represent biological entities and edges connect pairs whose correlation exceeds a chosen threshold [19].
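The following minimal NumPy sketch covers the correlation-based steps described above. It computes pairwise Pearson or Spearman correlations between the features of two omics blocks, computes the RV coefficient as a single matrix-level measure of association, and builds a thresholded correlation network. The block sizes, the planted association, the threshold of 0.7, and all function names are assumptions for illustration. The ranking helper breaks ties arbitrarily, so data containing ties would need a tie-averaging ranking instead.

```python
import numpy as np


def rank_columns(a):
    """Rank values within each column; ties are broken arbitrarily (no tie averaging)."""
    return np.argsort(np.argsort(a, axis=0), axis=0).astype(float)


def cross_correlation(x, y, method="pearson"):
    """Correlation of every column of x (n x p) with every column of y (n x q); returns p x q."""
    if method == "spearman":
        x, y = rank_columns(x), rank_columns(y)   # Spearman's rho = Pearson's r on ranks
    zx = (x - x.mean(axis=0)) / x.std(axis=0)
    zy = (y - y.mean(axis=0)) / y.std(axis=0)
    return zx.T @ zy / x.shape[0]


def rv_coefficient(x, y):
    """RV coefficient between two blocks measured on the same n samples (0 to 1)."""
    xc, yc = x - x.mean(axis=0), y - y.mean(axis=0)
    sx, sy = xc @ xc.T, yc @ yc.T                  # n x n sample cross-product matrices
    return np.trace(sx @ sy) / np.sqrt(np.trace(sx @ sx) * np.trace(sy @ sy))


def correlation_edges(x_names, y_names, corr, threshold=0.7):
    """Cross-omics pairs with |r| >= threshold become edges (node_x, node_y, r)."""
    rows, cols = np.nonzero(np.abs(corr) >= threshold)
    return [(x_names[i], y_names[j], round(float(corr[i, j]), 3)) for i, j in zip(rows, cols)]


rng = np.random.default_rng(0)
genes = rng.normal(size=(30, 3))                 # hypothetical: 30 samples x 3 transcripts
metabolites = rng.normal(size=(30, 2))           # hypothetical: 30 samples x 2 metabolites
metabolites[:, 0] = 2.0 * genes[:, 1] + 0.1 * rng.normal(size=30)   # planted association
r = cross_correlation(genes, metabolites, method="spearman")
edges = correlation_edges(["g1", "g2", "g3"], ["m1", "m2"], r)
print(edges)
print(round(float(rv_coefficient(genes, metabolites)), 3))
```

For two single variables, the RV coefficient reduces to the squared Pearson correlation. That is why it is described as a multivariate generalization of correlation.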

Table 2: Data-Driven Omics Integration Approaches

| Method Category | Key Techniques | Applications | Tools/Packages |
|---|---|---|---|
| Statistical & Correlation-based | Pearson/Spearman correlation, Correlation networks, WGCNA, xMWAS | Identify relationships between omics variables, Find co-expressed modules | xMWAS [19], WGCNA [19] |
| Multivariate Methods | PCA, PLS, Canonical correlation | Dimension reduction, Identify latent structures | |
| Machine Learning/AI | Integrative network models, Scissor algorithm | Classification, Biomarker discovery, Prognostic stratification | Scissor [26] |

Weighted Gene Correlation Network Analysis (WGCNA) is a more advanced correlation-based approach that identifies clusters of co-expressed, highly correlated genes termed modules [19]. Rather than applying a hard cutoff, WGCNA raises pairwise correlations to a soft-thresholding power chosen so that the network approximates a scale-free topology. This weights gene interactions so that strong correlations are emphasized and weaker connections are suppressed [19]. Each module can be summarized by its module eigengene (the first principal component of the module's expression) and linked to clinically relevant traits, helping to identify functional relationships [19].
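The two core WGCNA quantities described above can be sketched in a few lines of NumPy. In unsigned WGCNA, adjacency is the absolute correlation raised to a soft-thresholding power β. The value β = 6 used below is an assumed starting point; in practice β is chosen so the network approximates scale-free topology. This transformation shrinks weak correlations far more than strong ones. A module eigengene is the first principal component of the module's standardized expression. This sketch omits the topological overlap matrix and the hierarchical clustering that WGCNA uses to define modules, and the function names are hypothetical rather than the WGCNA R package API.

```python
import numpy as np


def soft_threshold_adjacency(expr, beta=6):
    """Unsigned WGCNA-style adjacency a_ij = |cor(gene_i, gene_j)| ** beta (genes in columns)."""
    return np.abs(np.corrcoef(expr, rowvar=False)) ** beta


def module_eigengene(expr_module):
    """First principal component (per-sample scores) of a module's standardized expression."""
    z = (expr_module - expr_module.mean(axis=0)) / expr_module.std(axis=0)
    u, s, _ = np.linalg.svd(z, full_matrices=False)
    eigengene = u[:, 0] * s[0]
    # SVD signs are arbitrary; orient the eigengene to agree with the module's average profile.
    if np.corrcoef(eigengene, z.mean(axis=1))[0, 1] < 0:
        eigengene = -eigengene
    return eigengene


rng = np.random.default_rng(42)
activity = rng.normal(size=50)                                  # hidden module activity, 50 samples
module = activity[:, None] + 0.3 * rng.normal(size=(50, 8))     # 8 hypothetical co-expressed genes
adjacency = soft_threshold_adjacency(module)
eigengene = module_eigengene(module)
print(0.9 ** 6, 0.3 ** 6)     # about 0.53 vs 0.0007: weak links shrink far more
print(np.corrcoef(eigengene, activity)[0, 1])
```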

The xMWAS platform performs pairwise association analysis on omics data organized as matrices. It computes association coefficients from a combination of Partial Least Squares (PLS) components and regression coefficients [19]. These coefficients are used to build multi-data integrative network graphs, in which communities of highly interconnected nodes are identified through multilevel community detection [19].
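An unpenalized PLS-SVD can illustrate the general idea of deriving associations from PLS components rather than from raw pairwise correlations. The association score below correlates each variable with the summed X and Y component scores and multiplies these correlations across the two blocks. It is modeled on the similarity score that PLS-based network tools (for example the mixOmics R package) derive from component correlations. Whether xMWAS follows this exact formula is an assumption. xMWAS itself uses sparse PLS and then applies multilevel community detection, which is not shown here. The simulated data and function names are hypothetical.

```python
import numpy as np


def standardize(a):
    return (a - a.mean(axis=0)) / a.std(axis=0)


def corr_with_scores(data, scores):
    """Pearson correlation of each column of data with each column of scores."""
    return standardize(data).T @ standardize(scores) / data.shape[0]


def pls_association(x, y, n_comp=1):
    """PLS-SVD association matrix: rows are x variables, columns are y variables."""
    xs, ys = standardize(x), standardize(y)
    # Singular vectors of the cross-covariance matrix X'Y are the PLS weight vectors.
    u, _, vt = np.linalg.svd(xs.T @ ys, full_matrices=False)
    t = xs @ u[:, :n_comp]            # X-block component scores
    s = ys @ vt.T[:, :n_comp]         # Y-block component scores
    combined = t + s                  # combine the paired components of both blocks
    # Association = sum over components of cor(x_j, comp) * cor(y_k, comp).
    return corr_with_scores(x, combined) @ corr_with_scores(y, combined).T


rng = np.random.default_rng(7)
shared = rng.normal(size=100)                   # hypothetical latent driver shared by both layers
x = rng.normal(size=(100, 5))
x[:, 0] += 3 * shared
y = rng.normal(size=(100, 4))
y[:, 2] += 3 * shared
assoc = pls_association(x, y, n_comp=1)
i, j = np.unravel_index(np.argmax(np.abs(assoc)), assoc.shape)
print(i, j)                                      # the planted pair: x[:, 0] and y[:, 2]
edges = list(zip(*np.nonzero(np.abs(assoc) >= 0.5)))   # thresholded network edges
```

Because the SVD can flip the signs of a matched pair of singular vectors together, the product of the two correlation matrices is unaffected by that sign ambiguity.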

Experimental Workflows for Multi-Omics Analysis

Figure: Workflow for Multi-Omics Data Integration

Single-Cell and Spatial Omics Technologies

Single-cell RNA sequencing (scRNA-seq) has become a powerful technique for probing cellular heterogeneity: it can discern distinct cell states, identify marker genes, and elucidate their associated functions [26]. Combined with spatial transcriptomics (ST), it makes it possible to map tissues at single-cell and spatial resolution, clarifying how cellular heterogeneity contributes to disease progression and therapy response [26].

In practice, scRNA-seq analysis of lung adenocarcinoma tissues has revealed significant enrichment of proliferating cells compared with normal tissues [26]. Algorithms such as Scissor can identify the cell subgroups within scRNA-seq data that are most closely associated with a given disease phenotype [26]. These approaches have identified proliferating-cell genes with significant prognostic implications and revealed upregulated cell-cycle and oncogenic pathways within specific cell subpopulations [26].
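Scissor's central idea is to use bulk samples that carry clinical labels to score individual cells. It correlates every cell with every bulk sample over shared genes and then fits a phenotype regression on that correlation matrix. Cells with positive or negative coefficients are labeled Scissor+ or Scissor−. The sketch below keeps the first step but, as a simplifying assumption, replaces Scissor's network-regularized sparse regression with plain ridge regression on a binary phenotype. The simulated data, the function name, and the penalty value are hypothetical.

```python
import numpy as np


def scissor_like_scores(bulk, cells, phenotype, ridge=1.0):
    """Simplified Scissor-style cell scores (not the Scissor package).

    bulk:      samples x genes bulk expression
    cells:     cells x genes single-cell expression (same gene order)
    phenotype: one value per bulk sample (e.g. 1 = poor outcome, 0 = good)
    Returns one coefficient per cell; positive values suggest association with phenotype = 1.
    """
    # Step 1: Pearson correlation between every bulk sample and every cell across genes.
    zb = (bulk - bulk.mean(axis=1, keepdims=True)) / bulk.std(axis=1, keepdims=True)
    zc = (cells - cells.mean(axis=1, keepdims=True)) / cells.std(axis=1, keepdims=True)
    corr = zb @ zc.T / bulk.shape[1]                  # samples x cells
    # Step 2: ridge regression of the centered phenotype on the centered correlations
    # (a stand-in for Scissor's network-regularized sparse regression).
    xc = corr - corr.mean(axis=0)
    yc = phenotype - phenotype.mean()
    n_cells = xc.shape[1]
    return np.linalg.solve(xc.T @ xc + ridge * np.eye(n_cells), xc.T @ yc)


rng = np.random.default_rng(3)
n_genes = 200
profile_a, profile_b = rng.normal(size=n_genes), rng.normal(size=n_genes)   # two cell types
frac_a = rng.uniform(size=40)                                              # 40 bulk samples
bulk = (np.outer(frac_a, profile_a) + np.outer(1 - frac_a, profile_b)
        + 0.2 * rng.normal(size=(40, n_genes)))
cells = np.vstack([profile_a + 0.5 * rng.normal(size=(15, n_genes)),       # 15 type-A cells
                   profile_b + 0.5 * rng.normal(size=(15, n_genes))])      # 15 type-B cells
phenotype = (frac_a > 0.5).astype(float)    # hypothetical: phenotype tracks type-A abundance
beta = scissor_like_scores(bulk, cells, phenotype)
print(beta[:15].mean(), beta[15:].mean())   # type-A cells should score higher
```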

Signaling Networks in Cellular Stress Responses

Mitochondrial Integrated Stress Response (ISRmt)

A milestone for multi-omics approaches in mitochondrial research has been the identification of novel regulators of the mitochondrial integrated stress response (ISRmt), a multifaceted, tissue-specific response activated by mitochondrial stress [25]. Although some of its components overlap with the mitochondrial unfolded protein response (UPRmt), the key transcription factors and downstream targets differ [25].

The ISRmt signature comprises gene expression changes mediated by activating transcription factor 4 (ATF4), ATF5, and ATF3. These changes result in de novo synthesis of the metabolic cytokines FGF21 and GDF15 and in remodeling of one-carbon and folate metabolism [25]. The response increases serine and nucleotide pools and is collectively driven by the upstream kinase mTORC1 [25]. Strikingly, inhibiting mTORC1 with rapamycin reverses these molecular changes, as well as the tissue-level pathology of skeletal muscle, in mouse models of mitochondrial myopathy [25].

Figure: Mitochondrial Integrated Stress Response Pathway

Intercellular Communication Networks

Spatial organization and communication between cellular subpopulations play crucial roles in disease progression. In lung adenocarcinoma, single-cell analyses have revealed complex, intersecting differentiation pathways among proliferating cell subsets, with specific clusters such as C3_KRT8 emerging as central nodes [26]. Intercellular communication analysis with tools such as CellChat has identified specific signaling pathways, such as MIF-CD74+CD44, as key mediators of communication among these subpopulations [26]. Spatial transcriptomics has further confirmed the spatial colocalization of specific proliferating cell subtypes, suggesting that they may act synergistically in cancer progression [26].
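CellChat scores a ligand-receptor pair between a sender group and a receiver group with a mass-action style model. The average ligand expression in the sender is multiplied by the average receptor expression in the receiver, and the product is passed through a saturating Hill function. Multi-subunit receptors such as CD74+CD44 are represented by the geometric mean of their subunits. The sketch below keeps only that core. As simplifying assumptions, it uses plain group means and a Hill constant of 0.5, and it omits co-factor terms, cell-number weighting, and the permutation test. The expression values are hypothetical, and this is not CellChat's API.

```python
import math


def complex_level(mean_expr, subunits):
    """Geometric mean of subunit expression, used for multi-subunit ligands or receptors."""
    return math.prod(mean_expr[s] for s in subunits) ** (1.0 / len(subunits))


def interaction_score(sender, receiver, ligand, receptor, k_h=0.5):
    """Saturating (Hill, n = 1) score for one ligand-receptor pair between two cell groups.

    sender, receiver: dicts mapping gene -> mean normalized expression in that group.
    ligand, receptor: lists of subunit gene names, e.g. ["MIF"] and ["CD74", "CD44"].
    """
    lr = complex_level(sender, ligand) * complex_level(receiver, receptor)
    return lr / (k_h + lr)


sender = {"MIF": 2.0}                              # hypothetical sender group
receiver_high = {"CD74": 1.5, "CD44": 1.2}         # hypothetical receiver groups
receiver_low = {"CD74": 0.2, "CD44": 0.1}
high = interaction_score(sender, receiver_high, ["MIF"], ["CD74", "CD44"])
low = interaction_score(sender, receiver_low, ["MIF"], ["CD74", "CD44"])
print(round(high, 3), round(low, 3))
```

The geometric mean means that a heteromeric receptor scores low if any one subunit is barely expressed, which reflects the requirement that all subunits be present.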

Quantitative Data and Biomarker Discovery

Metabolic Remodeling in Mitochondrial Dysfunction

Multi-omics approaches have revealed profound metabolic remodeling in mitochondrial diseases. Studies of mouse models carrying mutations in the mtDNA helicase Twinkle (encoded by Twnk), and of human patients with the same mutations, identified major remodeling of the anabolic, folate-driven one-carbon cycle specifically in affected tissues [25]. This remodeling channels one-carbon units into purine and glutathione synthesis, pointing to the folate cycle as a source of potential therapeutic targets [25]. Similar findings were reported in human cells depleted of mtDNA polymerase-γ (encoded by POLG) [25].

Proteomic and transcriptomic analyses of mouse models with heart-specific conditional knockout of genes essential for mtDNA expression revealed three changes: remodeling of the one-carbon cycle, substantially reduced coenzyme Q (CoQ) levels, and decreased levels of multiple mitochondrial CoQ biosynthesis enzymes [25]. These findings support controlled therapeutic trials of CoQ derivatives in patients with mtDNA maintenance defects [25].

Table 3: Key Biomarkers in Mitochondrial Disease Identified via Multi-Omics

| Biomarker | Biological Role | Detection Method | Clinical Utility |
|---|---|---|---|
| FGF21 | Metabolic hormone | Immunoassay, MS | Sensitive and specific blood biomarker for muscle-manifesting mitochondrial diseases [25] |
| GDF15 | Stress-responsive cytokine | Immunoassay, MS | Specific biomarker for mitochondrial disorders [25] |
| Coenzyme Q | Electron carrier, antioxidant | Mass spectrometry | Potential therapeutic target for mtDNA maintenance defects [25] |
| One-carbon metabolites | Folate cycle intermediates | Metabolomics, MS | Indicators of metabolic remodeling in mitochondrial stress [25] |
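Describing a blood marker as "sensitive and specific" rests on simple confusion-matrix arithmetic at a chosen cutoff and on the ROC AUC across all cutoffs. The sketch below computes both for a generic marker. The values (in arbitrary units), the case labels, and the cutoff are hypothetical toy numbers, not FGF21 or GDF15 measurements.

```python
def sensitivity_specificity(values, is_case, cutoff):
    """Call a sample positive when value >= cutoff; return (sensitivity, specificity)."""
    tp = sum(v >= cutoff and c for v, c in zip(values, is_case))
    fn = sum(v < cutoff and c for v, c in zip(values, is_case))
    tn = sum(v < cutoff and not c for v, c in zip(values, is_case))
    fp = sum(v >= cutoff and not c for v, c in zip(values, is_case))
    return tp / (tp + fn), tn / (tn + fp)


def roc_auc(values, is_case):
    """ROC AUC as the probability that a random case scores above a random control (ties count half)."""
    cases = [v for v, c in zip(values, is_case) if c]
    controls = [v for v, c in zip(values, is_case) if not c]
    wins = sum((a > b) + 0.5 * (a == b) for a in cases for b in controls)
    return wins / (len(cases) * len(controls))


values = [820, 640, 450, 390, 300, 210, 180, 150, 120, 90]      # hypothetical marker levels
is_case = [True, True, True, False, True, False, False, True, False, False]
sens, spec = sensitivity_specificity(values, is_case, cutoff=350)
auc = roc_auc(values, is_case)
print(f"sensitivity = {sens:.2f}, specificity = {spec:.2f}, AUC = {auc:.2f}")
# prints "sensitivity = 0.60, specificity = 0.80, AUC = 0.84"
```

Moving the cutoff trades sensitivity against specificity, whereas the AUC summarizes discrimination across every possible cutoff.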

Prognostic Signatures in Cancer

In lung adenocarcinoma (LUAD), integrative multi-omics and machine learning approaches have clarified the roles of proliferating cells in prognosis and personalized treatment [26]. Using the Scissor algorithm, researchers identified Scissor+ proliferating-cell genes associated with prognosis [26]. An integrative machine learning program comprising 111 algorithms was then used to construct a Scissor+ proliferating cell risk score (SPRS). The SPRS outperformed 30 previously published models in predicting prognosis and clinical outcomes [26].
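Risk scores of this kind are typically a weighted sum of signature-gene expression, with weights taken from a fitted survival model. Their prognostic performance is commonly summarized by Harrell's concordance index (C-index). The sketch below shows both steps plus a median split into high- and low-risk groups. The gene names, weights, and patient data are hypothetical, and the sketch does not reproduce the SPRS model or the 111-algorithm pipeline. Pairs with tied survival times are treated as not comparable, which is one common convention.

```python
import statistics


def risk_scores(expression, weights):
    """Linear risk score per patient: sum of weight * expression over the signature genes."""
    return [sum(weights[g] * patient[g] for g in weights) for patient in expression]


def harrell_c_index(times, events, scores):
    """Fraction of comparable pairs in which the higher-risk patient fails first.

    A pair (i, j) is comparable when patient i has the shorter time and an observed event.
    Ties in risk score count as half-concordant.
    """
    concordant, comparable = 0.0, 0
    for i in range(len(times)):
        for j in range(len(times)):
            if times[i] < times[j] and events[i]:
                comparable += 1
                if scores[i] > scores[j]:
                    concordant += 1
                elif scores[i] == scores[j]:
                    concordant += 0.5
    return concordant / comparable


weights = {"GENE_A": 0.8, "GENE_B": 0.5, "GENE_C": -0.6}      # hypothetical coefficients
patients = [
    {"GENE_A": 2.1, "GENE_B": 1.0, "GENE_C": 0.2},
    {"GENE_A": 1.5, "GENE_B": 0.4, "GENE_C": 0.9},
    {"GENE_A": 0.3, "GENE_B": 0.2, "GENE_C": 1.8},
    {"GENE_A": 0.9, "GENE_B": 1.1, "GENE_C": 0.5},
]
times = [8, 20, 60, 14]        # hypothetical follow-up in months
events = [1, 1, 0, 1]          # 1 = event observed, 0 = censored
scores = risk_scores(patients, weights)
cutoff = statistics.median(scores)
groups = ["high" if s > cutoff else "low" for s in scores]
print(groups, harrell_c_index(times, events, scores))
# prints "['high', 'low', 'low', 'high'] 1.0"
```

A C-index of 0.5 corresponds to random ordering, and 1.0 means every comparable pair is ranked correctly. In the toy data above, the score orders all six comparable pairs correctly.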

Beyond predicting prognosis, the SPRS model also informed therapeutic strategies. The high- and low-SPRS groups differed in biological functions and in immune cell infiltration of the tumor immune microenvironment [26]. Notably, patients with high SPRS showed resistance to immunotherapy but increased sensitivity to chemotherapeutic and targeted agents [26], highlighting the clinical utility of such integrative approaches.

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Research Reagent Solutions for Multi-Omics Studies

| Reagent/Technology | Function | Application Examples |
|---|---|---|
| Next-generation sequencing (NGS) | Comprehensive analysis of genome, exome, transcriptome | Identification of cancer-associated mutations, Transcriptome profiling [23] |
| Mass spectrometry | Sensitive detection and quantification of proteins and metabolites | Proteomic and metabolomic profiling, Biomarker verification [25] [19] |
| Scissor algorithm | Identification of cell subgroups associated with clinical phenotypes | Prognostic stratification of proliferating cells in LUAD [26] |
| xMWAS platform | Pairwise association analysis and network graph generation | Multi-omics integration and community detection [19] |
| WGCNA | Identification of co-expressed gene modules | Finding clusters of highly correlated genes associated with traits [19] |
| CellChat | Analysis of intercellular communication | Inference of signaling pathways between cell subpopulations [26] |

Integrative multi-omics approaches represent a paradigm shift in biological research, enabling unprecedented insights into organelle dysfunction, signaling networks, and cellular heterogeneity. The combination of high-throughput technologies with advanced computational methods has already yielded significant advances in understanding disease mechanisms, particularly in complex areas like mitochondrial diseases and cancer [25] [26] [23]. These approaches have moved beyond single-omics analyses to provide holistic views of the molecular landscape of disease.

Future research will likely focus on standardizing frameworks for multi-omics data integration to address current challenges related to heterogeneity, reproducibility, and data interpretation [23]. As technologies evolve, particularly in single-cell and spatial omics, researchers will gain even finer resolution of cellular heterogeneity and its functional consequences [26]. The continued development of network-based models and machine learning approaches will enhance our ability to extract meaningful biological insights from these complex datasets [19] [23].

For researchers and drug development professionals, these advances offer exciting opportunities to identify novel therapeutic targets and develop personalized treatment strategies. The integration of multi-omics data has already begun to transform diagnostic approaches and therapeutic decision-making, particularly in oncology [26] [23]. As these methodologies become more accessible and standardized, they promise to advance personalized therapies by characterizing the molecular landscape of disease more completely, ultimately improving patient outcomes through more effective, targeted treatment strategies.

Frameworks for Integration: Machine Learning, AI, and Network Biology in Action

In the field of biomedical research, the advent of high-throughput technologies has enabled the comprehensive profiling of biological systems across multiple molecular layers, including genomics, transcriptomics, proteomics, and metabolomics [17]. While single-omics analyses have provided valuable insights, they often fail to capture the complex interactions between different molecular layers that drive disease mechanisms [27]. Multi-omics integration has thus emerged as a pivotal approach for unraveling the complexity of diseases such as cancer, enabling the identification of robust biomarkers and facilitating the development of personalized treatment strategies [28] [27].

The integration of multiple omics data types poses significant computational challenges, which have driven the development of various strategic frameworks. Based on the stage at which data fusion occurs, these strategies are commonly grouped into three main approaches: early, intermediate, and late integration [28] [29]. Another framework distinguishes five categories: early, mixed, intermediate, late, and hierarchical integration [29]. The appropriate strategy depends on the research question, data characteristics, and analytical objectives, and each approach has distinct advantages and limitations [27]. This technical guide examines these computational strategies, their methodologies, applications, and implementation considerations in the context of disease mechanism research.

Core Integration Models

Early Integration

Early integration, also referred to as "combined omics integration," involves the concatenation of raw or preprocessed data from multiple omics layers into a single combined matrix at the beginning of the analytical pipeline [28] [29]. This combined matrix then serves as input for downstream machine learning or statistical models.

The fundamental principle underlying early integration is that simultaneous analysis of all features may capture dependencies and interactions across different omic layers [28]. For example, in a study integrating transcriptomics and metabolomics data, the merged dataset would include all genes and metabolites as features, with samples as observations. This approach allows algorithms to potentially identify complex, cross-omic relationships that might be missed when analyzing each dataset separately.
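The following minimal sketch shows early integration for two preprocessed blocks measured on the same samples in the same row order. Each feature is z-scored so that differently scaled assays become comparable. As one common heuristic (an assumption here, not a universal rule), each block is also divided by the square root of its feature count. This gives every block the same total variance, so a block with many more features does not dominate distance-based or penalized models. The function name and block sizes are hypothetical.

```python
import numpy as np


def early_integrate(blocks, weight_blocks=True):
    """Concatenate omics blocks (each samples x features) into one samples x features matrix."""
    n_samples = blocks[0].shape[0]
    scaled = []
    for block in blocks:
        if block.shape[0] != n_samples:
            raise ValueError("all blocks must share the same samples (rows)")
        z = (block - block.mean(axis=0)) / block.std(axis=0)   # per-feature z-score
        if weight_blocks:
            z = z / np.sqrt(block.shape[1])                    # equalize total block variance
        scaled.append(z)
    return np.hstack(scaled)


rng = np.random.default_rng(0)
transcripts = rng.normal(loc=8.0, scale=2.0, size=(20, 500))   # hypothetical log-expression values
metabolites = rng.lognormal(size=(20, 40))                     # hypothetical raw intensities
combined = early_integrate([transcripts, metabolites])
print(combined.shape)   # (20, 540)
```

Features with zero variance would need to be removed before this step to avoid division by zero. Skewed intensities such as metabolite peak areas are usually log-transformed during preprocessing, before concatenation.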

Table 1: Characteristics of Early Integration

| Aspect | Description |
|---|---|