Multi-Omics for Early Disease Detection: Integrating AI, Biomarkers, and Precision Diagnostics

Abstract

This article provides a comprehensive exploration of multi-omics technologies and their transformative role in early disease detection. Tailored for researchers, scientists, and drug development professionals, it covers the foundational principles of genomics, transcriptomics, proteomics, and metabolomics. It delves into advanced methodological approaches for data integration, including AI and machine learning, and addresses key computational and experimental challenges. Through comparative analysis of statistical versus deep learning methods and examination of real-world clinical applications, this resource offers a holistic guide to developing, optimizing, and validating robust multi-omics strategies for precision medicine and improved patient outcomes.

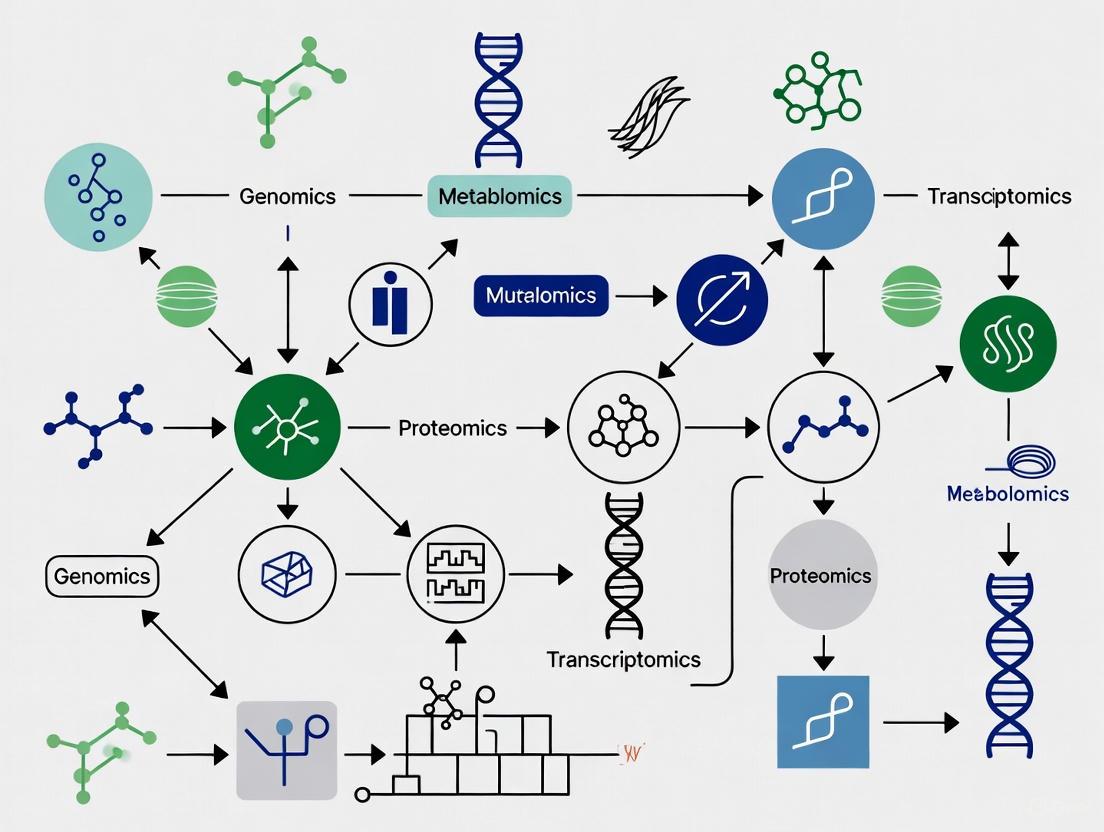

The Foundation of Multi-Omics: Core Technologies and Their Role in Early Disease Signatures

Multi-omics represents a paradigm shift in biological research, moving from the isolated analysis of single molecular layers to the integrated study of an entire biological system. This approach simultaneously measures and analyzes multiple "omes" — including the genome, epigenome, transcriptome, proteome, and metabolome — to construct a comprehensive model of health and disease [1]. For researchers focused on early disease detection, multi-omics provides an unprecedented opportunity to identify molecular dysregulations long before clinical symptoms manifest [2]. The core premise is that complex diseases, including cancer and neurodegenerative disorders, involve intricate interactions across multiple biological levels that cannot be captured by any single omics modality alone [3] [4]. By integrating these diverse datasets, scientists can uncover novel biomarkers, identify key drivers of pathogenesis, and develop more effective preventive strategies and therapeutic interventions [1] [5].

The technological landscape for multi-omics is evolving rapidly, with recent advancements enabling unprecedented resolution and scale. The emergence of single-cell multi-omics technologies allows investigators to correlate specific genomic, transcriptomic, and epigenomic changes within individual cells, providing insights into cellular heterogeneity that were previously obscured in bulk tissue analyses [5]. Simultaneously, innovations in sequencing, such as Illumina's 5-base solution, now permit simultaneous detection of genomic variants and DNA methylation from a single assay, streamlining the workflow for combined genetic and epigenetic analysis [6]. These technological advances, coupled with sophisticated computational methods, are transforming multi-omics from a specialized research area to a mainstream approach for precision medicine [5].

The Multi-Omics Data Universe: From Genotype to Phenotype

The multi-omics workflow encompasses multiple molecular layers, each providing distinct yet interconnected information about the biological system. Understanding the unique characteristics and technological foundations of each layer is crucial for designing effective integration strategies for early disease detection.

Table: The Multi-Omics Data Landscape for Early Disease Detection

| Omics Layer | Measured Entities | Key Technologies | Role in Early Disease Detection |
|---|---|---|---|
| Genomics | DNA sequence, structural variants | Whole Genome Sequencing (WGS), SNP arrays | Identifies genetic predisposition and risk variants [1] |
| Epigenomics | DNA methylation, histone modifications | Bisulfite sequencing, ChIP-seq | Reveals regulatory alterations from environmental exposures [4] [6] |
| Transcriptomics | RNA expression levels | RNA-seq, single-cell RNA-seq | Captures active gene expression changes [1] |
| Proteomics | Protein abundance, modifications | Mass spectrometry, affinity-based arrays | Reflects functional state and signaling activity [1] |
| Metabolomics | Small molecule metabolites | LC-MS, GC-MS | Provides snapshot of physiological state [1] |

The power of multi-omics integration lies in capturing the flow of biological information from genetic blueprint to functional phenotype. Genomic variations establish disease predisposition, while epigenomic mechanisms regulate how these genetic variants are expressed. The transcriptome serves as an intermediate messenger, followed by the proteome, which executes biological functions, and finally the metabolome, which reflects the ultimate biochemical output of the system [1] [4]. In early disease stages, subtle perturbations may occur across multiple layers simultaneously, often in patterns too complex to detect within any single omics modality. For instance, in Alzheimer's disease research, multi-omics approaches have revealed how genetic risk factors like the APOE ε4 allele interact with metabolic dysregulation and protein aggregation processes years before clinical symptoms emerge [4].

Methodological Framework: Multi-Omics Integration Strategies

The integration of diverse omics datasets presents significant computational and statistical challenges, primarily due to the high dimensionality, heterogeneity, and differing statistical properties of each data type [7] [8]. Researchers have developed three principal computational strategies for multi-omics integration, each with distinct advantages and limitations for early detection research.

Early Integration: Data-Level Fusion

Early integration, also referred to as data-level fusion, involves concatenating all omics datasets into a single large matrix before analysis [7] [3]. This approach combines raw or pre-processed features from multiple omics layers into a unified dataset, which is then analyzed using multivariate statistical methods or machine learning algorithms. The primary advantage of early integration is its potential to capture all possible interactions between different omics modalities, as the model has access to the complete feature set simultaneously [1]. However, this method creates an extremely high-dimensional dataset where the number of features (molecular measurements) vastly exceeds the number of samples (patients or subjects), increasing the risk of overfitting and requiring robust regularization techniques [7] [3]. The "curse of dimensionality" is particularly problematic in early disease detection studies, where sample sizes may be limited due to the challenges of recruiting pre-symptomatic individuals.
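As a rough illustration of data-level fusion, the sketch below standardizes each feature within its own omics block and then concatenates the blocks for samples measured in every layer. The function names, sample IDs, and values are hypothetical, and per-block z-scoring is only one assumed way to put layers on a comparable scale before concatenation.

```python
from statistics import mean, pstdev


def zscore_block(block):
    """Standardize each feature (column) of one omics block across samples."""
    sample_ids = sorted(block)
    n_features = len(block[sample_ids[0]])
    scaled = {sid: [] for sid in sample_ids}
    for j in range(n_features):
        column = [block[sid][j] for sid in sample_ids]
        mu, sd = mean(column), pstdev(column)
        for sid in sample_ids:
            # Constant features carry no signal; map them to 0.0 instead of dividing by zero.
            scaled[sid].append((block[sid][j] - mu) / sd if sd > 0 else 0.0)
    return scaled


def early_integration(blocks):
    """Concatenate standardized blocks for samples present in every block."""
    shared = set.intersection(*(set(b) for b in blocks.values()))
    scaled = {name: zscore_block({s: b[s] for s in shared}) for name, b in blocks.items()}
    # Blocks are concatenated in alphabetical order of their names.
    return {s: [v for name in sorted(blocks) for v in scaled[name][s]] for s in sorted(shared)}


# Toy data (hypothetical values): two transcripts and one metabolite per sample.
blocks = {
    "transcriptomics": {"s1": [5.0, 1.0], "s2": [7.0, 1.0], "s3": [9.0, 1.0]},
    "metabolomics": {"s1": [0.2], "s2": [0.4], "s3": [0.6], "s4": [0.9]},
}
fused = early_integration(blocks)
```

Sample `s4` is dropped because it lacks transcriptomic data, which shows one practical cost of this strategy: every sample needs every layer. With real data the fused matrix would have far more columns than rows, so any downstream model would need the regularization discussed above.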

Intermediate Integration: Feature-Level Fusion

Intermediate integration, also known as feature-level fusion, involves transforming each omics dataset into a new representation before combining them for analysis [7] [1]. This approach typically employs dimensionality reduction techniques such as principal component analysis (PCA) or autoencoders to extract meaningful latent features from each omics modality [3]. These transformed representations are then integrated using methods like Multiple Co-Inertia Analysis (MCIA) or Similarity Network Fusion (SNF) [9] [8]. The key advantage of intermediate integration is its ability to reduce noise and computational complexity while preserving the most biologically relevant information from each data type [7]. For early disease detection, network-based intermediate integration methods like SNF are particularly valuable, as they can capture shared patterns of sample similarity across different omics layers, potentially revealing consistent molecular subtypes among individuals with similar pre-symptomatic trajectories [8].

Late Integration: Decision-Level Fusion

Late integration, or decision-level fusion, involves analyzing each omics dataset separately and combining the results or predictions at the final stage [7] [1]. This ensemble approach builds separate models for each data type—for instance, training a classifier on genomic data, another on transcriptomic data, and a third on proteomic data—then aggregates their outputs through methods like weighted voting or stacking [1]. The main advantage of late integration is its robustness to missing data and its computational efficiency, as each omics dataset can be processed independently using optimal methods for that specific data type [1] [3]. However, this approach may miss subtle but biologically important interactions between different molecular layers, as the models never simultaneously "see" all data types [7]. In early detection applications, late integration can be effective when different omics layers provide complementary but relatively independent predictive signals for disease risk.
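A minimal sketch of decision-level fusion by weighted voting follows. It assumes each per-omics classifier has already produced a disease probability for a sample; the weights and probabilities are illustrative values, not results from any study. A missing modality is simply skipped and the remaining weights are renormalized.

```python
def late_fusion(predictions, weights):
    """Weighted average of per-omics probabilities, skipping missing modalities."""
    available = {m: p for m, p in predictions.items() if p is not None}
    if not available:
        raise ValueError("no modality available for this sample")
    total_weight = sum(weights[m] for m in available)
    return sum(weights[m] * p for m, p in available.items()) / total_weight


# Hypothetical weights, e.g. derived from each model's validation performance.
weights = {"genomics": 0.2, "transcriptomics": 0.3, "proteomics": 0.5}

complete = late_fusion(
    {"genomics": 0.4, "transcriptomics": 0.6, "proteomics": 0.8}, weights
)
partial = late_fusion(
    {"genomics": 0.4, "transcriptomics": 0.6, "proteomics": None}, weights
)
```

The second call illustrates the robustness to missing data noted above: the sample without proteomics still receives a score, computed from the two layers that were measured.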

Table: Multi-Omics Integration Strategies Comparison

| Integration Strategy | Key Advantages | Key Limitations | Representative Methods |
|---|---|---|---|
| Early Integration | Captures all cross-omics interactions; Preserves raw information | High dimensionality; Computationally intensive; Prone to overfitting | Concatenation + multivariate analysis [7] [1] |
| Intermediate Integration | Reduces complexity; Incorporates biological context through networks | Requires careful tuning; May lose some raw information | SNF, MOFA, MCIA [9] [8] |
| Late Integration | Handles missing data well; Computationally efficient; Flexible | May miss subtle cross-omics interactions | Separate analysis + result fusion [7] [1] |

Computational Frameworks and Tools for Multi-Omics Analysis

The implementation of multi-omics integration strategies requires specialized computational tools and algorithms. Several well-established software packages have been developed to address the specific challenges of multi-omics data analysis, each with distinct methodological approaches and applications for early detection research.

MOFA (Multi-Omics Factor Analysis) is an unsupervised factorization method that uses a Bayesian probabilistic framework to infer latent factors that capture the principal sources of variability across multiple omics datasets [9] [8]. Unlike traditional single-omics dimensionality reduction techniques, MOFA identifies factors that may be shared across multiple data types or specific to individual omics layers, providing a flexible framework for exploring complex datasets without pre-defined phenotypic groups [8]. This characteristic makes MOFA particularly valuable for early disease detection studies, where the goal is often to discover novel molecular subtypes or trajectories without strong a priori hypotheses. The model decomposes each omics data matrix into a shared factor matrix (representing the latent factors across all samples) and weight matrices for each omics modality, plus residual noise terms [8]. In practice, MOFA has been applied to stratify healthy individuals into subgroups with distinct molecular profiles, potentially reflecting different future disease risks [2].
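The decomposition described above can be written compactly. The notation here is assumed for illustration rather than taken from the cited sources: N samples, M omics modalities, D_m features in modality m, and K latent factors.

```latex
\mathbf{Y}^{(m)} = \mathbf{Z}\,\mathbf{W}^{(m)\top} + \boldsymbol{\varepsilon}^{(m)},
\qquad m = 1, \dots, M,
\qquad \mathbf{Y}^{(m)} \in \mathbb{R}^{N \times D_m},\;
\mathbf{Z} \in \mathbb{R}^{N \times K},\;
\mathbf{W}^{(m)} \in \mathbb{R}^{D_m \times K}
```

The factor matrix Z is shared by all modalities, while each weight matrix W^(m) is modality-specific. A factor whose weights are near zero in all but one W^(m) is therefore specific to that omics layer, and a factor with non-zero weights in several is shared.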

Similarity Network Fusion (SNF) is a network-based integration method that constructs and fuses patient similarity networks from each omics dataset [8]. The algorithm first creates a separate network for each data type, where nodes represent patients and edges encode similarity between patients based on their molecular profiles. These datatype-specific networks are then iteratively fused through a nonlinear process that strengthens consistent similarities across omics layers while dampening inconsistent ones [8]. The result is a fused network that captures complementary information from all omics modalities, which can then be used for clustering patients into molecularly distinct subgroups. For early detection research, SNF offers the advantage of being able to identify patient subgroups that show consistent patterns across multiple omics layers, even when no single data type provides clear separation.
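The cross-diffusion idea can be sketched in a few dozen lines. This is a simplified illustration rather than the published SNF implementation: it assumes a fixed-bandwidth Gaussian kernel, plain row normalization, and a small k-nearest-neighbour kernel, and all function names and toy values are hypothetical.

```python
import math


def affinity(data, sigma=1.0):
    """Gaussian-kernel similarity between all pairs of samples (rows)."""
    n = len(data)
    return [[math.exp(-math.dist(data[i], data[j]) ** 2 / (2 * sigma ** 2))
             for j in range(n)] for i in range(n)]


def row_normalize(m):
    return [[v / sum(row) for v in row] for row in m]


def knn_kernel(w, k):
    """Keep each sample's k most similar neighbours (excluding itself), then row-normalize."""
    n = len(w)
    sparse = []
    for i in range(n):
        neighbours = sorted((j for j in range(n) if j != i),
                            key=lambda j: w[i][j], reverse=True)[:k]
        sparse.append([w[i][j] if j in neighbours else 0.0 for j in range(n)])
    return row_normalize(sparse)


def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    return [list(col) for col in zip(*m)]


def fuse(views, k=2, iterations=10):
    """Iteratively diffuse each view's network through the average of the other views."""
    kernels = [affinity(v) for v in views]
    full = [row_normalize(w) for w in kernels]
    local = [knn_kernel(w, k) for w in kernels]
    for _ in range(iterations):
        updated = []
        for v in range(len(views)):
            others = [full[u] for u in range(len(views)) if u != v]
            avg = [[sum(vals) / len(others) for vals in zip(*rows)] for rows in zip(*others)]
            updated.append(row_normalize(matmul(matmul(local[v], avg), transpose(local[v]))))
        full = updated
    n = len(full[0])
    mean_net = [[sum(p[i][j] for p in full) / len(full) for j in range(n)] for i in range(n)]
    # Symmetrize so the result can be used as an undirected similarity network.
    return [[(mean_net[i][j] + mean_net[j][i]) / 2 for j in range(n)] for i in range(n)]


# Toy data (hypothetical): six patients, two omics views, two underlying groups.
view_a = [[0.0, 0.1], [0.1, 0.0], [0.0, 0.0], [3.0, 3.1], [3.1, 3.0], [3.0, 3.0]]
view_b = [[1.0], [1.1], [0.9], [4.0], [4.2], [3.9]]
fused = fuse([view_a, view_b])
```

In the fused network, patients 0 to 2 are more similar to each other than to patients 3 to 5, so a clustering step applied to `fused` would recover the two groups. Similarities supported by both views are reinforced at each iteration, which is the behaviour the paragraph above describes.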

DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) is a supervised integration method that uses known phenotype labels to guide the integration process and perform feature selection [8]. Based on multiblock sparse Partial Least Squares Discriminant Analysis (sPLS-DA), DIABLO identifies latent components as linear combinations of the original features that maximally covary across omics datasets while being predictive of the outcome of interest [8]. The method incorporates penalization techniques (e.g., Lasso) to select subsets of features from each omics dataset that are most informative for distinguishing between phenotypic groups. This supervised approach makes DIABLO particularly suited for early detection research when clear phenotypic outcomes are available, such as comparing pre-symptomatic individuals who eventually develop disease against those who remain healthy.

Deep Learning Approaches for Multi-Omics Integration

Deep learning (DL) has emerged as a powerful approach for multi-omics data integration, capable of automatically learning complex, non-linear relationships across different molecular layers [3]. DL models, particularly multi-layer neural networks, excel at processing high-dimensional, heterogeneous data—a defining characteristic of multi-omics datasets [3]. Several specialized DL architectures have been developed to address the unique challenges of multi-omics integration for early disease detection.

Autoencoders (AEs) and Variational Autoencoders (VAEs) are unsupervised neural networks that learn to compress high-dimensional omics data into lower-dimensional representations while preserving essential biological information [1] [3]. These models consist of an encoder network that maps the input data to a compressed latent space and a decoder network that reconstructs the original input from this latent representation. By training autoencoders on multiple omics datasets simultaneously or integrating their latent representations, researchers can obtain a unified view of the molecular landscape that emphasizes shared patterns across data types [3]. For early detection applications, the latent representations generated by AEs and VAEs can serve as features for downstream classification tasks, often with better generalization performance than raw data due to the denoising effect of the compression process.

Graph Convolutional Networks (GCNs) are designed specifically for network-structured data, making them naturally suited for multi-omics integration when biological knowledge is incorporated as prior information [1]. In this framework, molecular entities (genes, proteins, metabolites) are represented as nodes in a graph, with edges representing known interactions from databases such as protein-protein interaction networks or metabolic pathways [1]. GCNs learn by aggregating information from a node's neighbors, effectively propagating signals across the network to generate improved node representations. For early disease detection, GCNs can integrate multi-omics measurements by treating them as node attributes while leveraging the topological structure of biological networks to identify dysregulated modules or pathways that might not be apparent from molecular data alone [1].
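The neighbour-aggregation step can be illustrated with a single graph-convolution layer. The sketch assumes the commonly used symmetric normalization with self-loops, ReLU(D^-1/2 (A + I) D^-1/2 X W); the three-gene network, the node attributes, and the identity weight matrix are hypothetical, and a real model would learn W during training.

```python
import math


def gcn_layer(adjacency, features, weight):
    """One graph-convolution step: ReLU(D^-1/2 (A + I) D^-1/2 X W)."""
    n = len(adjacency)
    # Add self-loops so each node keeps its own measurements.
    a_hat = [[adjacency[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    degree = [sum(row) for row in a_hat]
    norm = [[a_hat[i][j] / math.sqrt(degree[i] * degree[j]) for j in range(n)]
            for i in range(n)]
    n_in, n_out = len(weight), len(weight[0])
    # Aggregate neighbour features, then apply the linear map and ReLU.
    aggregated = [[sum(norm[i][k] * features[k][f] for k in range(n)) for f in range(n_in)]
                  for i in range(n)]
    projected = [[sum(row[f] * weight[f][h] for f in range(n_in)) for h in range(n_out)]
                 for row in aggregated]
    return [[max(0.0, v) for v in row] for row in projected]


# Hypothetical path graph of three genes: 0 - 1 - 2.
adjacency = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
# Node attributes: [expression change, methylation change] (illustrative values).
features = [[1.0, 0.0], [0.0, 0.0], [0.0, 1.0]]
weight = [[1.0, 0.0], [0.0, 1.0]]  # identity, standing in for learned parameters

hidden = gcn_layer(adjacency, features, weight)
```

Gene 1 has no signal of its own, yet after one layer it carries information from both neighbours. This is how a perturbation measured in one omics layer on one node can propagate to connected nodes and highlight a dysregulated module.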

Transformers, originally developed for natural language processing, have recently been adapted for multi-omics data analysis [1]. These models use self-attention mechanisms to weigh the importance of different features and data types, effectively learning which molecular measurements and modalities are most relevant for specific predictions [1]. The attention mechanisms in transformers can identify critical biomarkers from a sea of noisy data, making them particularly valuable for early detection research where subtle molecular signals must be distinguished from background biological variation. Additionally, transformers can handle missing data and variable-length inputs, which are common challenges in multi-omics studies [1].

Experimental Design and Workflow for Multi-Omics Studies

Implementing a robust multi-omics study for early disease detection requires careful experimental design and execution across multiple stages. The following workflow outlines key considerations and methodologies for generating high-quality, integration-ready multi-omics data.

Sample Preparation and Quality Control

The foundation of any successful multi-omics study lies in proper sample preparation and rigorous quality control. For matched multi-omics designs—where multiple molecular layers are measured from the same sample—careful partitioning of limited biological material is essential [8]. Best practices include aliquoting samples immediately after collection to minimize freeze-thaw cycles, using preservatives appropriate for each molecular assay (e.g., RNAlater for RNA stabilization, protease inhibitors for protein preservation), and documenting all processing steps in detail [8]. Quality control should be performed at multiple stages: initial assessment of nucleic acid integrity (e.g., RIN scores for RNA), library preparation quality checks (e.g., fragment size distribution), and post-sequencing metrics (e.g., sequencing depth, alignment rates, batch effects) [8]. For blood-based studies, which are particularly relevant for early detection, standardized collection tubes and processing protocols help minimize technical variation that could obscure subtle biological signals [2].
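A simple way to make multi-stage QC auditable is to record, for every sample, which metrics fell below a minimum. The sketch below assumes three metrics mentioned above; the cut-off values, metric names, and sample records are illustrative assumptions and would need to be set per assay and per study.

```python
# Assumed minimum values; real cut-offs depend on the assay and study design.
QC_THRESHOLDS = {"rin": 7.0, "alignment_rate": 0.80, "depth_millions": 20.0}


def qc_failures(sample, thresholds=QC_THRESHOLDS):
    """Return the names of metrics that are missing or below their minimum."""
    return [
        metric
        for metric, minimum in thresholds.items()
        if sample.get(metric) is None or sample[metric] < minimum
    ]


# Hypothetical per-sample QC records.
samples = {
    "P001": {"rin": 8.4, "alignment_rate": 0.93, "depth_millions": 31.0},
    "P002": {"rin": 5.9, "alignment_rate": 0.91, "depth_millions": 28.0},
    "P003": {"rin": 7.8, "alignment_rate": 0.76, "depth_millions": None},
}

report = {sid: qc_failures(metrics) for sid, metrics in samples.items()}
passed = [sid for sid, failures in report.items() if not failures]
```

Keeping the list of failed metrics, rather than a single pass/fail flag, makes it easier to tell degraded input material (low RIN) apart from problems introduced during sequencing.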

Data Generation Technologies and Platforms

Selecting appropriate technologies for each omics layer is crucial for generating data that can be effectively integrated. Recent technological advances have created new opportunities for more comprehensive and efficient multi-omics profiling. Illumina's 5-base solution exemplifies this trend, enabling simultaneous detection of genetic variants and DNA methylation patterns from a single assay through proprietary conversion chemistry that selectively converts methylated cytosine to thymine while preserving genomic complexity [6]. This approach streamlines the workflow for integrated genomic-epigenomic analysis, which is particularly relevant for early cancer detection and rare disease diagnosis [6]. For transcriptomic profiling, bulk RNA-seq remains widely used, but single-cell RNA-seq is increasingly employed to resolve cellular heterogeneity in early disease processes [5]. Proteomic analysis has been transformed by advances in mass spectrometry sensitivity and throughput, while metabolomic profiling increasingly employs complementary LC-MS and GC-MS platforms to cover diverse chemical classes [1].

Data Preprocessing and Normalization

Each omics data type requires specialized preprocessing and normalization to address technology-specific artifacts and make datasets comparable across samples [8] [3]. Genomic data from sequencing platforms typically involves quality filtering, adapter trimming, alignment to reference genomes, and variant calling using established pipelines like GATK [3]. Transcriptomic data requires read alignment, gene quantification, and normalization methods such as TPM or DESeq2's median-of-ratios to account for library size differences [1]. Proteomic data from mass spectrometry needs intensity normalization and protein quantification, often using label-free or isobaric labeling approaches [1]. Metabolomic data processing includes peak detection, alignment, and normalization to account for batch effects and matrix effects [1]. Crucially, the normalization strategies should preserve biological signal while removing technical artifacts, with careful consideration of how normalization choices might affect downstream integration [8].
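The two transcriptomic normalizations named above can be sketched directly from their definitions. This is an illustrative reimplementation on toy counts, not the DESeq2 code; the function names and numbers are assumptions.

```python
import math
from statistics import median


def tpm(counts, lengths_kb):
    """Transcripts per million: length-normalize first, then scale to 1e6."""
    rates = [c / length for c, length in zip(counts, lengths_kb)]
    total = sum(rates)
    return [r / total * 1e6 for r in rates]


def size_factors(count_matrix):
    """Median-of-ratios size factors; count_matrix[g][s] is gene g in sample s."""
    # Genes with a zero count in any sample have no finite geometric mean and are skipped.
    usable = [row for row in count_matrix if all(c > 0 for c in row)]
    log_geo_means = [sum(math.log(c) for c in row) / len(row) for row in usable]
    n_samples = len(count_matrix[0])
    return [
        math.exp(median(math.log(row[s]) - lgm for row, lgm in zip(usable, log_geo_means)))
        for s in range(n_samples)
    ]


# Toy counts (hypothetical): sample 2 was sequenced twice as deeply as sample 1.
counts = [[10, 20], [100, 200], [5, 10], [0, 3]]
factors = size_factors(counts)
normalized = [[c / f for c, f in zip(row, factors)] for row in counts]

tpm_values = tpm([10, 20, 30], lengths_kb=[1.0, 2.0, 3.0])
```

After dividing by the size factors, the first three genes have equal values in both samples, so the depth difference no longer looks like differential expression. In the TPM example all three genes end up equal because their counts are proportional to their lengths.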

The Scientist's Toolkit: Essential Reagents and Platforms

Table: Key Research Reagent Solutions for Multi-Omics Studies

| Product/Platform | Type | Primary Function | Application in Early Detection |
|---|---|---|---|
| Illumina 5-Base DNA Prep | Library Prep Kit | Simultaneous genomic and epigenomic profiling from single sample | Detects methylation episignatures in rare disease; cancer biomarker discovery [6] |
| Illumina Connected Multiomics | Analysis Platform | Statistical visualization and interpretation of multi-omic data | Integrates genetic and epigenetic data for functional genomics insights [6] |
| MOFA+ | R/Python Package | Unsupervised integration of multi-omics data | Discovers latent factors of variation in healthy cohorts [9] [8] |
| DIABLO (mixOmics) | R Package | Supervised integration for biomarker discovery | Identifies multi-omics biomarker panels for disease subtyping [8] |
| Similarity Network Fusion | Algorithm | Network-based integration of multiple data types | Clusters patients by multi-omics similarity for stratification [8] |
| Omics Playground | Web Platform | User-friendly multi-omics analysis with visualization | Enables code-free exploration of multi-omics datasets [8] |

Application in Early Disease Detection: Case Studies

Stratifying Healthy Individuals for Preventive Medicine

A 2025 study published in npj Genomic Medicine exemplifies the power of multi-omics integration for early risk assessment in apparently healthy populations [2]. Researchers performed a cross-sectional analysis of 162 individuals without pathological manifestations, integrating genomic, urine metabolomic, and serum metabolomic/lipoproteomic data [2]. Each omics layer was analyzed separately and after integration, with results demonstrating that multi-omic integration provided optimal stratification capacity compared to any single data type alone [2]. The study identified four distinct subgroups within this ostensibly healthy cohort, with one subgroup showing accumulation of risk factors associated with dyslipoproteinemias—a condition linked to increased cardiovascular risk [2]. Longitudinal follow-up of 61 individuals across two additional timepoints confirmed the temporal stability of these molecular profiles, suggesting that multi-omics stratification could identify individuals who might benefit from targeted monitoring and early preventive interventions [2].

Early Cancer Detection through Liquid Biopsy Multi-Omics

Liquid biopsies represent a promising application of multi-omics for non-invasive early cancer detection [5]. By simultaneously analyzing multiple analyte classes in blood—including cell-free DNA (cfDNA), RNA, proteins, and metabolites—researchers can detect cancer-associated molecular patterns with higher sensitivity and specificity than single-analyte approaches [5]. The multi-omics liquid biopsy approach leverages complementary information across molecular layers: cfDNA fragmentation patterns and methylation signatures provide information about tissue of origin, RNA profiles reveal gene expression alterations, protein biomarkers indicate functional pathway activation, and metabolic shifts reflect systemic physiological changes [5]. The integration of these diverse data types using machine learning algorithms has shown promise for detecting multiple cancer types at early stages, often before they become visible on imaging studies [5]. As these technologies continue to mature, they are expanding beyond oncology into other medical domains, further solidifying the role of multi-omics in early disease detection [5].

Neurodegenerative Disease Risk Assessment

Multi-omics approaches are transforming early detection strategies for neurodegenerative diseases, particularly Alzheimer's disease (AD) [4]. Research has revealed that the pathophysiological process of AD begins years or even decades before clinical symptoms appear, creating a critical window for early intervention [4]. Multi-omics studies integrating genomic, transcriptomic, proteomic, and metabolomic data have identified molecular signatures associated with future AD development in currently asymptomatic individuals [4]. For example, the integration of genomic data (including APOE ε4 status) with proteomic profiles of inflammatory markers and metabolomic signatures of lipid metabolism has improved the prediction of conversion from mild cognitive impairment to full AD dementia [4]. These integrated molecular profiles provide insights into the complex interplay between genetic predisposition, metabolic dysregulation, and neuroinflammatory processes in the earliest stages of neurodegenerative decline [4].

Future Directions and Challenges

Despite significant progress, multi-omics research for early disease detection faces several important challenges that will shape future directions in the field. Technical hurdles include the need for better standardization of preprocessing protocols and integration methods, as the absence of gold standards makes it difficult to compare results across studies or establish clinical-grade analytical pipelines [7] [8]. The computational demands of multi-omics analysis remain substantial, requiring scalable infrastructure and efficient algorithms to handle the increasing volume and complexity of data [1] [5]. From a biological perspective, interpreting integrated multi-omics results remains challenging, as statistical associations must be translated into mechanistic understanding through sophisticated functional validation [8].

Emerging trends point toward several exciting developments. The field is moving toward multi-analyte algorithmic analysis that can simultaneously process data from genomics, transcriptomics, proteomics, and metabolomics using artificial intelligence and machine learning [5]. Single-cell multi-omics technologies are rapidly advancing, enabling researchers to examine larger numbers of cells and a greater fraction of each cell's molecular content [5]. The clinical translation of multi-omics is accelerating, with liquid biopsies exemplifying how integrated molecular profiling can transform non-invasive diagnostics [5]. Perhaps most importantly, there is growing recognition that addressing health disparities requires engaging diverse patient populations in multi-omics research to ensure that biomarker discoveries are broadly applicable across different genetic backgrounds and environmental contexts [5].

Looking ahead, realizing the full potential of multi-omics for early disease detection will require continued collaboration across disciplines—bringing together biologists, clinicians, computational scientists, and engineers to develop more powerful integrative frameworks [5]. As these efforts mature, multi-omics profiling is poised to become a cornerstone of preventive medicine, enabling truly personalized risk assessment and targeted early interventions that can delay or prevent the onset of complex diseases [2].

The rising global burden of complex diseases necessitates a paradigm shift from reactive treatment to proactive detection. Multi-omics technologies, which integrate molecular data from multiple biological layers, are revolutionizing early disease detection for two of humanity's most significant health challenges: cancer and neurodegenerative disorders. By simultaneously analyzing genomic, transcriptomic, epigenomic, proteomic, and metabolomic data, researchers can identify molecular signatures of disease years before clinical symptoms manifest. This whitepaper provides an in-depth technical examination of multi-omics approaches, detailing experimental protocols, key biomarkers, computational frameworks, and reagent solutions that are transforming early intervention strategies and creating new frontiers in precision medicine.

The Multi-Omics Paradigm in Early Disease Detection

Conceptual Framework and Biological Rationale

Complex diseases like cancer and neurodegenerative disorders develop through progressive alterations across multiple biological layers over extended timeframes. Traditional single-marker approaches lack the sensitivity and specificity for early detection because they capture only isolated aspects of a multifaceted pathological process. Multi-omics analysis addresses this limitation by providing a comprehensive systems biology view of disease pathogenesis [10] [11].

The fundamental premise is that diseases create detectable molecular footprints across omics layers long before structural changes or clinical symptoms emerge. In cancer, transformed cells release cell-free DNA (cfDNA) with distinctive fragmentation patterns and methylation profiles into the bloodstream [12] [13]. In Alzheimer's disease (AD), pathological processes trigger cascading changes in mitochondrial function, inflammatory pathways, and metabolic networks years before cognitive decline becomes apparent [14] [15]. Multi-omics integration detects these coordinated changes, significantly enhancing the sensitivity and specificity of early detection compared to any single biomarker class.

Global Health Impact and Clinical Imperative

The World Health Organization identifies both cancer and neurodegenerative diseases as leading causes of mortality and morbidity worldwide, with incidence rates projected to increase with aging populations. Alzheimer's disease alone may affect over 115 million people globally by 2050 [10]. Early detection is clinically imperative because interventions are most effective during initial disease stages. For cancer, detection at localized versus distant stages improves 5-year survival rates by up to 70-90% for many cancer types [16]. For neurodegenerative diseases, identifying at-risk individuals during preclinical stages creates critical windows for therapeutic intervention before irreversible neuronal loss occurs [10] [15].

Multi-Omics Approaches in Cancer Detection

Technological Foundations and Analytical Frameworks

Multi-cancer early detection (MCED) tests represent the most advanced application of multi-omics in oncology. These liquid biopsy approaches analyze cfDNA from standard blood draws using shallow whole-genome sequencing to simultaneously assess multiple genomic and epigenomic features [12] [13]. The leading technological platforms integrate four primary analytical dimensions:

- Copy number aberration: Detection of chromosomal gains and losses characteristic of cancer genomes

- Fragmentomics: Analysis of cfDNA fragmentation patterns reflecting nucleosome positioning in tumor cells

- End motif analysis: Examination of sequence preferences at cfDNA fragment ends

- Methylation profiling: Mapping of epigenetic alterations in circulating DNA [12] [13]

Advanced MCED platforms additionally incorporate protein tumor markers to enhance detection sensitivity, creating a truly multi-analyte approach [13].

Performance Metrics and Clinical Validation

Recent large-scale validation studies demonstrate the remarkable potential of multi-omics MCED tests. The following table summarizes performance characteristics from key clinical studies:

Table 1: Performance Metrics of Multi-Cancer Early Detection Tests

| Study/Cohort | Cancer Types | Overall Sensitivity | Stage I Sensitivity | Stage II Sensitivity | Specificity | Tissue of Origin Accuracy |
|---|---|---|---|---|---|---|
| Independent Validation [12] | Multiple | 87.4% | N/R | N/R | 97.8% | 82.4% |
| Prospective Asymptomatic [12] | Multiple | 53.5% | N/R | N/R | 98.1% | N/R |
| Retrospective (SeekInCare) [13] | 27 types | 60.0% | 37.7% | 50.4% | 98.3% | N/R |
| Prospective (SeekInCare) [13] | Multiple | 70.0% | N/R | N/R | 95.2% | N/R |

N/R = Not Reported

Where stage-specific data are reported, sensitivity rises with stage (37.7% at stage I versus 50.4% at stage II in the retrospective SeekInCare cohort), so the earliest cancers remain the hardest to detect. Specificity nonetheless stays above 95% in all four cohorts, which addresses a critical limitation of traditional screening methods that often lack effectiveness for early-stage disease [12] [13] [16].
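Sensitivity and specificity alone do not say how often a positive result is a true cancer; that also depends on how common cancer is in the screened population. The sketch below applies Bayes' rule to the prospective asymptomatic cohort's figures from Table 1. The 1% prevalence is an assumed value chosen for illustration and is not reported in the cited studies.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Probability that a positive test reflects true disease (Bayes' rule)."""
    true_positive_rate = sensitivity * prevalence
    false_positive_rate = (1.0 - specificity) * (1.0 - prevalence)
    return true_positive_rate / (true_positive_rate + false_positive_rate)


# Sensitivity and specificity from the prospective asymptomatic cohort in Table 1;
# the 1% prevalence is an assumption for illustration only.
ppv = positive_predictive_value(sensitivity=0.535, specificity=0.981, prevalence=0.01)
```

Under that assumption roughly 22% of positive results would be true cancers, which is why high specificity matters so much for tests applied to largely healthy populations.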

Experimental Protocol: Multicancer Early Detection Analysis

Sample Preparation and Sequencing

- Collect peripheral blood (10ml) in Streck Cell-Free DNA BCT or similar cfDNA-preserving tubes

- Process plasma within 6 hours of collection by double centrifugation (1600×g for 10min, 16,000×g for 10min at 4°C)

- Extract cfDNA from 4-8ml plasma using commercially available kits (QIAamp Circulating Nucleic Acid Kit)

- Prepare sequencing libraries with 10-30ng cfDNA using ThruPLEX Plasma-seq or similar library preparation kits

- Perform shallow whole-genome sequencing (0.5-1× coverage) on Illumina platforms (NovaSeq 6000)
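To plan the sequencing run above, the coverage target can be converted into a read count with the usual relation coverage = bases sequenced / genome size. The sketch below is only an estimate: the 3.1 Gb genome size and the 2×150 bp read layout are assumptions, since the protocol does not state a read length, and it ignores duplicates and unmapped reads.

```python
import math


def reads_for_coverage(coverage, genome_size_bp, read_length_bp, paired=True):
    """Estimate reads (or read pairs) needed to reach a mean coverage."""
    bases_needed = coverage * genome_size_bp
    bases_per_fragment = read_length_bp * (2 if paired else 1)
    return math.ceil(bases_needed / bases_per_fragment)


GENOME_SIZE = 3.1e9  # assumed approximate human genome size in bp
READ_LENGTH = 150    # assumed read length in bp (not specified in the protocol)

for cov in (0.5, 1.0):
    n = reads_for_coverage(cov, GENOME_SIZE, READ_LENGTH)
    print(f"{cov}x coverage -> about {n / 1e6:.1f} million read pairs")
```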

Bioinformatic Analysis Workflow

- Quality Control: FastQC for sequence quality assessment

- Alignment: Burrows-Wheeler Aligner (BWA-MEM) to reference genome (GRCh37/hg19)

- Feature Extraction:

  - Copy number alterations: circular binary segmentation

  - Fragment size distribution: compute fragment length from the outer coordinates of each properly paired read alignment

  - End motif analysis: extract first and last 4 bases of each fragment

  - Methylation profiling: bisulfite conversion analysis or inference from fragmentation patterns

- Machine Learning Classification: Ensemble methods (Random Forest, XGBoost) trained on multi-dimensional features to distinguish cancer vs. non-cancer and predict tissue of origin [12] [13]
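The fragment size and end motif steps above can be sketched on toy data. This is a minimal illustration and not the pipeline used in [12] [13]: fragments are passed as plain sequence strings (an assumption; a real workflow would reconstruct them from aligned read pairs), and the function name `fragment_features` is hypothetical.

```python
from collections import Counter


def fragment_features(fragments, motif_len=4):
    """Summarize cfDNA fragments given as sequence strings.

    Returns (length histogram, end-motif frequencies).
    """
    lengths = Counter(len(seq) for seq in fragments)
    motifs = Counter()
    for seq in fragments:
        if len(seq) < motif_len:
            continue  # too short to carry a full end motif
        motifs[seq[:motif_len].upper()] += 1   # first bases of the fragment
        motifs[seq[-motif_len:].upper()] += 1  # last bases of the fragment
    total = sum(motifs.values())
    freqs = {m: c / total for m, c in motifs.items()} if total else {}
    return lengths, freqs


# Toy fragments for illustration only.
lengths, freqs = fragment_features(["CCCAGGTTACGT", "CCCATTTT", "ACG"])
print(dict(lengths))
print(freqs)
```

The sketch follows the protocol text literally by taking the first and last four bases. Some published end motif analyses instead use the 5' end of each strand, which would require reverse-complementing the trailing bases.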

MCED Test Workflow

Multi-Omics Approaches in Neurodegenerative Diseases

Genetic Architecture and Molecular Signatures

Neurodegenerative diseases exhibit complex genetic architectures existing along a continuum from monogenic to polygenic models [11]. The liability-threshold model provides a theoretical framework where cumulative effects of genetic variants and environmental factors eventually exceed a critical threshold, triggering disease onset [11]. Multi-omics approaches are essential for deciphering this complexity by identifying predictive molecular signatures across biological layers.
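The liability-threshold model can be made concrete with a small simulation. The sketch below assumes a standard-normal liability split into a genetic and an environmental component; the prevalence and heritability values are illustrative assumptions, not estimates from [11].

```python
import random
from statistics import NormalDist


def simulate_liability(n, prevalence, heritability, seed=0):
    """Simulate a liability-threshold trait.

    Liability = genetic + environmental component, each normally
    distributed, with total variance 1. Individuals whose liability
    exceeds the threshold are counted as affected.
    """
    rng = random.Random(seed)
    threshold = NormalDist().inv_cdf(1.0 - prevalence)
    sd_g = heritability ** 0.5
    sd_e = (1.0 - heritability) ** 0.5
    affected = 0
    for _ in range(n):
        liability = rng.gauss(0.0, sd_g) + rng.gauss(0.0, sd_e)
        affected += liability > threshold
    return threshold, affected / n


# Assumed values for illustration: 5% prevalence, 60% heritability.
threshold, observed = simulate_liability(n=100_000, prevalence=0.05, heritability=0.6)
print(f"threshold = {threshold:.2f} SD, observed prevalence = {observed:.3f}")
```

The threshold is fixed by the population prevalence alone; heritability only determines how much of each individual's position relative to that threshold is attributable to genetic variants.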

Recent integrated analyses of Alzheimer's disease have revealed consistent dysregulation in specific biological pathways, including:

- Neurotransmitter synapses (dopaminergic, glutamatergic, GABAergic)

- Mitochondrial function and oxidative phosphorylation

- Inflammatory pathways and complement activation

- Vitamin metabolism (B2, B6, pantothenate)

- Complement and coagulation cascades [14] [15]

Cell-type-specific analyses further indicate that microglia, endothelial cells, myeloid, and lymphoid cells show prominent transcriptomic and proteomic alterations in early disease stages [15].

Multi-Omics Biomarker Discovery and Validation

Integrated multi-omics studies have identified robust biomarker signatures for neurodegenerative diseases. The following table summarizes key biomarkers and functional pathways identified through recent studies:

Table 2: Multi-Omics Biomarkers in Neurodegenerative Diseases

| Omics Layer | Specific Biomarkers | Biological Process | Validation Approach |
|---|---|---|---|
| Genomics | APOE ε4, TREM2, ABCA7 | Lipid metabolism, immune response | GWAS, whole-genome sequencing [11] [17] |
| Transcriptomics | SLC6A12, CDKN1A, CLOCK | Mitochondrial function, oxidative stress | RNA-Seq, single-cell sequencing [14] [18] |
| Epigenomics | Differential methylation in cortical tissue | Neuronal development, inflammation | Methylation arrays [14] |
| Proteomics | Complement proteins, synaptic proteins | Synaptic pruning, immune activation | Mass spectrometry [15] [17] |
| Metabolomics | TCA cycle intermediates, lactate | Energy metabolism, oxidative stress | LC-MS, GC-MS [14] [15] |
| MicroRNA | hsa-miR-129-5p | Post-transcriptional regulation | miRNA profiling [14] |

Advanced computational methods have been essential for distinguishing causal drivers from secondary effects in these complex datasets. Machine learning frameworks applied to multi-omics data from large cohorts like ROSMAP and ADNI have successfully identified mitochondrial-related gene signatures with validated associations to AD risk and progression [14].

Experimental Protocol: Integrated Multi-Omics Analysis for Alzheimer's Disease

Cohort Selection and Sample Processing

- Utilize well-characterized longitudinal cohorts (ROSMAP, ADNI) with neuropathological confirmation

- Process post-mortem brain tissues (prefrontal cortex, hippocampus) and/or biofluids (CSF, blood)

- Extract analytes using standardized protocols:

- DNA: DNeasy Blood & Tissue Kit for genotyping and methylation analysis

- RNA: RNeasy Kit with DNase treatment for transcriptomics

- Protein: Tissue homogenization in RIPA buffer with protease inhibitors

- Metabolites: Methanol:water extraction for LC-MS analysis

Multi-Omics Data Generation

- Genomics: Whole-genome sequencing (30× coverage) or genotyping arrays (Illumina OmniExpress)

- Epigenomics: Methylation profiling (Illumina EPIC array)

- Transcriptomics: RNA sequencing (Illumina, 50M reads/sample) or single-cell RNA-seq (10X Genomics)

- Proteomics: High-resolution mass spectrometry (TMT labeling, Orbitrap Lumos)

- Metabolomics: LC-MS/MS with reverse-phase chromatography (Q-Exactive HF)

Computational Integration and Validation

- Quality Control: Platform-specific QC metrics, batch effect correction (ComBat)

- Differential Analysis: limma-voom for RNA, linear models for proteomics/metabolomics

- Pathway Integration: Multi-omics factor analysis (MOFA), integrative clustering

- Network Analysis: Weighted gene co-expression network analysis (WGCNA), protein-protein interaction networks

- Machine Learning: Supervised classifiers (Random Forest, SVM) with cross-validation

- Experimental Validation:

Neurodegenerative Disease Multi-Omics Pipeline

Computational and Methodological Frameworks

Machine Learning Integration for Predictive Modeling

The complexity and dimensionality of multi-omics data necessitate advanced computational approaches. Ensemble machine learning frameworks have demonstrated particular utility for disease prediction. The MILTON (Machine Learning with Phenotype Associations) framework exemplifies this approach, integrating 67 diverse biomarkers including blood biochemistry, cell counts, urine assays, spirometry, and anthropometric measures to predict disease risk [19].

This framework employs multiple algorithms including Random Forest, Gradient Boosting, and Regularized Regression to generate disease-specific signatures. When applied to the UK Biobank dataset encompassing 484,230 genome-sequenced samples, MILTON significantly outperformed polygenic risk scores alone for 111 out of 151 disease codes, achieving AUC ≥ 0.7 for 1,091 ICD10 codes [19]. The model also identified "cryptic cases" (individuals with high predicted disease probability who were subsequently diagnosed during follow-up), enabling earlier detection and potentially augmenting genetic association studies.

Single-Cell Multi-Omics and Spatial Resolution

Emerging single-cell technologies provide unprecedented resolution for detecting cell-type-specific changes in early disease. Single-cell RNA sequencing (scRNA-seq) has revealed novel cellular subpopulations and molecular subtypes of vulnerable neurons in neurodegenerative diseases [18]. Computational integration of single-cell multi-omics data enables the construction of detailed cellular maps and lineage trajectories that capture disease progression dynamics.

Bibliometric analysis reveals rapidly growing adoption of single-cell multi-omics in neurodegeneration research, with annual publications increasing from 1 in 2015 to 155 in 2023 [18]. These approaches are particularly valuable for identifying early, cell-type-specific pathological changes that precede bulk tissue alterations and clinical symptom onset.

Research Reagent Solutions

Table 3: Essential Research Reagents for Multi-Omics Studies

| Reagent Category | Specific Products | Application | Key Features |
|---|---|---|---|
| cfDNA Collection Tubes | Streck Cell-Free DNA BCT, PAXgene Blood ccfDNA Tubes | Blood collection for liquid biopsy | Preserves cfDNA, prevents genomic DNA contamination |
| Nucleic Acid Extraction | QIAamp Circulating Nucleic Acid Kit, AllPrep DNA/RNA/miRNA Universal Kit | cfDNA isolation from plasma; simultaneous DNA/RNA extraction | High recovery from small volumes, maintains integrity |
| Library Preparation | ThruPLEX Plasma-seq, SMARTer Stranded Total RNA-seq | NGS library preparation | Low input requirements, unique molecular identifiers |
| Bisulfite Conversion | EZ DNA Methylation Kit, Premium Bisulfite Kit | DNA methylation analysis | High conversion efficiency, minimal DNA degradation |
| Single-Cell Isolation | 10X Genomics Chromium, BD Rhapsody | Single-cell omics profiling | High-throughput, cell multiplexing capabilities |
| Protein Digestion | S-Trap Micro Spin Columns, Filter-Aided Sample Preparation | Proteomics sample prep | Efficient digestion, compatibility with detergents |
| Mass Spectrometry | TMTpro 16plex, iRT Kit | Proteomic quantification | Multiplexing, retention time calibration |
| Metabolite Extraction | Biocrates AbsoluteIDQ p400 HR Kit, Methanol:Chloroform | Metabolite profiling | Broad coverage, high reproducibility |

Multi-omics technologies represent a transformative approach for addressing the global health challenges of cancer and neurodegenerative diseases through early detection. The integration of genomic, transcriptomic, proteomic, epigenomic, and metabolomic data provides unprecedented sensitivity for identifying molecular signatures of disease during preclinical stages when interventions are most effective. Continued advances in single-cell technologies, computational integration methods, and large-scale biomarker validation will accelerate the translation of these approaches into clinical practice, ultimately enabling a shift from reactive treatment to proactive prevention and early intervention for these devastating diseases.

The integration of multi-omics data represents a paradigm shift in biomedical research, enabling the elucidation of complex disease pathways across multiple biological layers. By simultaneously analyzing genomics, transcriptomics, proteomics, metabolomics, and other molecular data types, researchers can now construct comprehensive models of disease pathogenesis that account for the intricate interactions between various biological subsystems. This technical guide examines cutting-edge methodologies for multi-omics integration, with a specific focus on applications in early disease detection and the identification of comprehensive biological pathways underlying disease progression. Through advanced machine learning frameworks, network-based analysis, and cross-omic correlation studies, multi-omics approaches are transforming our understanding of biological hierarchies and creating new opportunities for predictive medicine and therapeutic development.

Multi-omics refers to the integrated analysis of multiple omics datasets collected from the same individuals, including genomics, transcriptomics, proteomics, metabolomics, epigenomics, and metagenomics [20]. This approach provides a holistic perspective on biological systems by capturing information across different molecular layers, enabling researchers to understand how variations at one level propagate through biological hierarchies to influence phenotype manifestation. The fundamental premise of multi-omics integration is that combined analysis of these complementary data types provides more biological insight than could be obtained from any single omics layer alone.

In translational medicine, multi-omics applications typically address five key objectives: (i) detecting disease-associated molecular patterns, (ii) identifying disease subtypes, (iii) improving diagnosis and prognosis, (iv) predicting drug response, and (v) understanding regulatory processes [20]. Each of these objectives benefits from the comprehensive view of biological systems that multi-omics data provides, particularly for complex diseases where pathogenesis involves dysregulation across multiple biological subsystems.

The analytical challenge lies in developing methods that can effectively integrate these heterogeneous data types while accounting for their distinct statistical properties, dimensionalities, and biological contexts. Successfully addressing this challenge requires sophisticated computational approaches that can identify meaningful patterns across omics layers and relate them to clinical outcomes.

Methodological Frameworks for Multi-Omics Integration

Machine Learning Approaches

Machine learning frameworks have demonstrated remarkable utility for multi-omics integration, particularly for disease prediction tasks. The MILTON (machine learning with phenotype associations) framework exemplifies this approach, leveraging an ensemble of biomarkers to predict disease states from multi-omics data [19]. MILTON utilizes 67 features including 30 blood biochemistry measures, 20 blood count measures, four urine assay measures, three spirometry measures, four body size measures, three blood pressure measures, sex, age, and fasting time to predict 3,213 diseases in the UK Biobank.

The framework employs three distinct time-models for training: prognostic models using individuals diagnosed up to 10 years after biomarker collection, diagnostic models using individuals diagnosed up to 10 years before biomarker collection, and time-agnostic models using all diagnosed individuals regardless of temporal relationship to sample collection [19]. This temporal stratification is crucial for addressing the clinical reality that biomarker samples may be collected years before or after disease diagnosis.
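The three time-models can be read as a rule that assigns each diagnosed case to one or more training cohorts according to the lag between sample collection and diagnosis. The sketch below is an interpretation of the description above, not MILTON's actual code: the function name is hypothetical, and treating a diagnosis made at the time of collection (lag of zero) as "diagnostic" is an assumption.

```python
def time_models(collection_year, diagnosis_year, window_years=10):
    """Return the training cohorts a diagnosed case could join.

    A positive lag means the diagnosis came after biomarker collection.
    """
    lag = diagnosis_year - collection_year
    models = ["time_agnostic"]        # every diagnosed case qualifies
    if 0 < lag <= window_years:
        models.append("prognostic")   # diagnosed up to 10 years after sampling
    elif -window_years <= lag <= 0:
        models.append("diagnostic")   # diagnosed up to 10 years before sampling
    return models


print(time_models(2010.0, 2015.5))  # diagnosis 5.5 years after collection
print(time_models(2010.0, 2004.0))  # diagnosis 6 years before collection
print(time_models(2010.0, 1995.0))  # outside both 10-year windows
```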

For the challenging "big p, small n" problem (high-dimensional features with small sample sizes) common in multi-omics data, the Multi-view Factorization AutoEncoder (MAE) with network constraints provides an effective solution [21]. This approach combines multi-view learning and matrix factorization with deep learning, incorporating domain knowledge such as biological interaction networks as regularization constraints to improve model generalizability. The model consists of multiple autoencoders (one for each omics view) and learns both feature and patient embeddings simultaneously while ensuring consistency with prior biological knowledge.

Multi-Omics Integration Techniques

Different computational strategies have been developed for multi-omics integration, each with distinct strengths and applications:

Table 1: Multi-Omics Data Integration Methods

| Integration Type | Description | Common Algorithms | Best Use Cases |
|---|---|---|---|
| Early Integration | Combining raw datasets before analysis | Matrix concatenation | Pattern discovery across omics layers |
| Intermediate Integration | Learning joint representations of separate datasets | Multi-view Factorization AutoEncoder (MAE) [21], Similarity Network Fusion | Subtype identification, dimensionality reduction |
| Late Integration | Analyzing datasets separately then combining results | Ensemble methods, statistical meta-analysis | Leveraging existing single-omics tools |
| Knowledge-Guided Integration | Incorporating biological networks as constraints | Network-based regularization | Pathway analysis, mechanistic insights |

Intermediate integration approaches, which learn joint representations of separate datasets, have proven particularly valuable for identifying patient subtypes and disease-associated molecular patterns [20]. These methods effectively balance the need to respect the unique characteristics of each omics data type while still enabling cross-omics pattern recognition.

Multi-Omics in Disease Research Applications

Enhanced Disease Prediction and Early Detection

Multi-omics approaches have demonstrated superior predictive performance compared to traditional single-omics models or polygenic risk scores (PRS) alone. In comprehensive analyses of the UK Biobank dataset, the MILTON framework achieved area under the curve (AUC) ≥ 0.7 for 1,091 ICD10 codes, AUC ≥ 0.8 for 384 ICD10 codes, and AUC ≥ 0.9 for 121 ICD10 codes across all time-models and ancestries [19]. MILTON also significantly outperformed disease-specific PRS, showing superior predictive accuracy for 111 out of 151 ICD10 codes (median AUC 0.71 vs. 0.66, MWU two-sided P = 2.71 × 10⁻⁸) [19].
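For readers less familiar with the metric, AUC is the probability that a randomly chosen case receives a higher model score than a randomly chosen control, which is the Mann-Whitney U statistic rescaled to the range 0 to 1. A minimal sketch on invented scores (the data are illustrative only, and the quadratic loop is chosen for clarity rather than speed):

```python
def auc_from_scores(scores, labels):
    """AUC as P(case score > control score), counting ties as one half."""
    cases = [s for s, y in zip(scores, labels) if y == 1]
    controls = [s for s, y in zip(scores, labels) if y == 0]
    if not cases or not controls:
        raise ValueError("need at least one case and one control")
    wins = 0.0
    for c in cases:
        for k in controls:
            if c > k:
                wins += 1.0
            elif c == k:
                wins += 0.5
    return wins / (len(cases) * len(controls))


# Toy predictions: three cases (label 1) and three controls (label 0).
scores = [0.9, 0.8, 0.35, 0.6, 0.4, 0.2]
labels = [1, 1, 1, 0, 0, 0]
print(f"AUC = {auc_from_scores(scores, labels):.3f}")
```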

Critically, multi-omics models demonstrate strong prognostic capability, successfully identifying individuals who would later develop disease. When trained solely on cases diagnosed before January 1, 2018, MILTON models with AUC ≥ 0.6 were significantly enriched for participants diagnosed after this date in 97.41% of 1,740 ICD10 codes analyzed (Fisher's exact test one-sided P < 0.05) [19]. This demonstrates the potential of multi-omics approaches for genuine early detection before clinical manifestation.
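The enrichment test mentioned above asks whether later-diagnosed participants are over-represented among those the model scored highly. A minimal sketch of a one-sided Fisher's exact test on a 2×2 table follows; the counts are hypothetical and the table layout is an assumption about how such an analysis could be framed, not a reproduction of the analysis in [19].

```python
from math import comb


def fisher_enrichment_p(a, b, c, d):
    """One-sided Fisher's exact test, P(X >= a), for the 2x2 table

                          later diagnosed   not diagnosed
        high model score         a                b
        low model score          c                d
    """
    row1, col1, n = a + b, a + c, a + b + c + d
    denom = comb(n, col1)
    upper = min(row1, col1)
    # Sum hypergeometric probabilities for tables at least as extreme as observed.
    tail = sum(comb(row1, k) * comb(n - row1, col1 - k) for k in range(a, upper + 1))
    return tail / denom


# Hypothetical counts for illustration only.
p = fisher_enrichment_p(a=12, b=88, c=20, d=880)
print(f"one-sided P = {p:.3g}")
```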

Alzheimer's Disease Pathway Mapping

In Alzheimer's disease (AD) research, multi-omics approaches have been particularly valuable for elucidating the complex pathways underlying disease pathogenesis. AD involves dysfunction across multiple biological systems, including amyloid-beta plaque accumulation, tau neurofibrillary tangle formation, neuroinflammation, and impaired glymphatic function [4]. Multi-omics analysis has revealed how these processes interact across biological hierarchies, from genetic predisposition to metabolic dysregulation.

Sex differences in AD development exemplify how multi-omics data reveals cross-hierarchical interactions. Research shows that women generally have lower synapse density but higher tau and amyloid-beta levels than men, differences linked to gonadal hormones and sex chromosomes [4]. Estrogen plays a vital role in processes involving mitochondrial function, inflammation, glucose transport and metabolism, and cholesterol homeostasis, with both estrogen and testosterone regulating apolipoprotein E (ApoE), a key AD biomarker [4]. The loss of Y chromosome in male AD patients can increase Aβ toxicity and lead to premature cell death [4]. These findings demonstrate how multi-omics integration connects chromosomal, hormonal, proteomic, and metabolic factors into a coherent pathway model.

Multi-omics studies have also clarified the relationship between AD and comorbidities such as cardiovascular disease and diabetes. In Type 2 diabetes mellitus, chronic hyperglycemia exacerbates amyloid beta production and tau hyperphosphorylation, while impaired insulin signaling disrupts neuronal energy metabolism [4]. Elevated blood glucose levels trigger the formation of advanced glycation end-products (AGEs), which promote Aβ accumulation and tau phosphorylation, creating a direct metabolic pathway to neurodegeneration.

Cardiovascular Risk Stratification in Healthy Populations

Multi-omics profiling shows particular promise for early risk detection in ostensibly healthy populations. In a study of 162 individuals without pathological manifestations, integrated analysis of genomics, urine metabolomics, and serum metabolomics/lipoproteomics identified four distinct subgroups with different metabolic profiles [2]. Longitudinal data for 61 individuals across two additional time-points demonstrated temporal stability in these molecular profiles, supporting their utility for ongoing risk assessment.

This approach enabled identification of a subgroup with accumulation of risk factors associated with dyslipoproteinemias, suggesting targeted monitoring could reduce future cardiovascular risks [2]. The polygenic score analysis within this cohort identified 28 traits with potential stratification value, with glycine and triglycerides in medium HDL showing particularly strong associations (odds ratio close to 6) [2]. This demonstrates how multi-omics integration can reveal disease-relevant biological variation even in the absence of clinical symptoms.

Table 2: Multi-Omics Performance in Disease Prediction

| Disease Area | Omic Layers Used | Key Findings | Performance Metrics |
|---|---|---|---|
| General Disease Prediction | Blood biochemistry, blood counts, urine assays, spirometry, vital signs | 1,091 ICD10 codes with AUC ≥ 0.7; outperformed PRS for 111/151 codes | Median AUC 0.71 vs 0.66 for PRS (P = 2.71×10⁻⁸) |
| Alzheimer's Disease | Genomics, epigenomics, transcriptomics, proteomics, metabolomics | Identified sex-specific pathways, metabolic links to diabetes | Revealed hormonal regulation of ApoE |
| Cardiovascular Risk | Genomics, urine metabolomics, serum metabolomics/lipoproteomics | Identified 4 subgroups in healthy cohort; one with dyslipoproteinemia risk | Odds ratio ~6 for glycine and triglycerides in medium HDL |

Experimental Protocols and Methodologies

Multi-Omics Study Design Framework

Effective multi-omics research requires careful study design to ensure data quality and analytical robustness. The following protocol outlines a comprehensive approach:

Sample Collection and Processing:

- Collect appropriate biospecimens (blood, urine, tissue) with standardized protocols

- Process samples immediately or store at appropriate temperatures (-80°C for most omics analyses)

- Record detailed metadata including fasting status, time of collection, and processing parameters

Multi-Omic Data Generation:

- Genomics: Perform whole genome sequencing or genotyping using platforms such as Illumina or Oxford Nanopore

- Transcriptomics: Conduct RNA sequencing with minimum 30 million reads per sample

- Proteomics: Implement mass spectrometry-based quantification (e.g., LC-MS/MS) or immunoassays

- Metabolomics: Employ targeted or untargeted mass spectrometry with quality control pools

- Epigenomics: Conduct DNA methylation arrays or sequencing-based approaches

Data Preprocessing and Quality Control:

- Apply platform-specific normalization and batch correction

- Remove low-quality samples based on quality metrics

- Impute missing values using appropriate algorithms (e.g., k-nearest neighbors)

- Perform principal component analysis to identify outliers and batch effects

Multi-view Factorization AutoEncoder (MAE) Implementation

The MAE framework provides a powerful approach for integrating multi-omics data with biological networks [21]. The implementation protocol includes:

Data Preparation:

- Represent each omics data type as a sample-feature matrix $M^{(i)} \in \mathbb{R}^{N \times p^{(i)}}$

- Obtain biological interaction networks G⁽ⁱ⁾ for each feature type from databases such as STRING or Reactome

- Normalize each data matrix to have zero mean and unit variance

Model Architecture:

- Construct multiple autoencoders (one for each omics view)

- Implement a shared latent space that integrates information across views

- Include graph Laplacian regularization terms to incorporate biological network constraints

Training Procedure:

- Initialize model weights using Xavier initialization

- Train using mini-batch gradient descent with Adam optimizer

- Employ early stopping based on reconstruction loss on validation set

- Monitor training and validation loss to avoid overfitting

Hyperparameter Tuning:

- Optimize latent dimension size using cross-validation

- Tune regularization strength for network constraints

- Adjust learning rate and batch size based on dataset size

The graph Laplacian regularization term for a feature network $G$ is implemented as $L_i = \mathrm{Tr}\left(Y^{(i)} L^{G} Y^{(i)\top}\right)$, where $L^{G} = D - G$ is the graph Laplacian, $D$ is the degree matrix, and $Y^{(i)}$ is the feature embedding for view $i$ [21].
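A small numerical sketch may help show what this penalty measures. For a symmetric network, Tr(Y L Yᵀ) equals the sum over edges of the edge weight times the squared distance between the two connected features' embeddings, so it is small when interacting features are embedded close together. The code below assumes Y is stored as a k × p matrix (one column per feature), which is an assumption about orientation made to match the formula as written; it uses plain Python lists rather than a deep learning framework.

```python
def laplacian_penalty(Y, G):
    """Compute Tr(Y L Y^T) with L = D - G.

    Y: k x p feature embedding (k rows, one column per feature).
    G: p x p symmetric adjacency matrix of the feature network.
    """
    p = len(G)
    degree = [sum(row) for row in G]
    # Build the graph Laplacian L = D - G explicitly.
    L = [[(degree[u] if u == v else 0.0) - G[u][v] for v in range(p)]
         for u in range(p)]
    penalty = 0.0
    for row in Y:  # the trace sums the quadratic form over embedding dimensions
        for u in range(p):
            for v in range(p):
                penalty += row[u] * L[u][v] * row[v]
    return penalty


# Toy path network over three features: 0 - 1 - 2.
G = [[0.0, 1.0, 0.0],
     [1.0, 0.0, 1.0],
     [0.0, 1.0, 0.0]]
print(laplacian_penalty([[1.0, 2.0, 4.0]], G))  # embeddings differ along edges
print(laplacian_penalty([[3.0, 3.0, 3.0]], G))  # identical embeddings
```

In training, this term would be added to the reconstruction loss with a tunable weight, which is the regularization strength referred to in the hyperparameter tuning step.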

Visualization of Multi-Omics Workflows

Multi-Omics Integration and Analysis Workflow

Multi-view Factorization AutoEncoder Architecture

Table 3: Publicly Available Multi-Omics Data Resources

| Resource Name | Omic Content | Species | Primary Use Cases | Access Link |
|---|---|---|---|---|
| The Cancer Genome Atlas (TCGA) | Genomics, epigenomics, transcriptomics, proteomics | Human | Cancer pathway analysis, biomarker discovery | https://portal.gdc.cancer.gov/ |
| Answer ALS | Whole-genome sequencing, RNA transcriptomics, ATAC-sequencing, proteomics, clinical data | Human | Neurodegenerative disease mechanisms, motor activity correlation | https://dataportal.answerals.org/ |
| UK Biobank | Genomic sequencing, blood biochemistry, proteomics, metabolomics, imaging | Human | Population-scale disease prediction, biomarker discovery | https://www.ukbiobank.ac.uk/ |
| jMorp | Genomics, methylomics, transcriptomics, metabolomics | Human | Multi-omics correlation studies, metabolic pathway analysis | https://jmorp.megabank.tohoku.ac.jp/ |
| DevOmics | Gene expression, DNA methylation, histone modifications, chromatin accessibility | Human/Mouse | Developmental biology, epigenetic regulation | http://devomics.cn/ |

Computational Tools and Platforms

Table 4: Multi-Omics Data Analysis Tools and Platforms

| Tool/Method | Functionality | Integration Type | Key Features |
|---|---|---|---|
| Multi-view Factorization AutoEncoder (MAE) | Deep learning with network constraints | Intermediate | Incorporates biological networks as regularization |
| MILTON | Ensemble machine learning for disease prediction | Late | Utilizes 67 biomarkers for 3,213 disease prediction |
| Similarity Network Fusion (SNF) | Patient similarity integration | Intermediate | Combines multiple patient similarity networks |
| iCluster | Bayesian clustering for subtype identification | Early | Joint clustering across omics data types |
| OMICSPRED | Polygenic score calculation | Late | Genetic predisposition estimation for biomolecular traits |

Multi-omics data integration represents a transformative approach for decoding biological hierarchies and elucidating comprehensive disease pathways. By simultaneously analyzing multiple molecular layers and their interactions, researchers can construct more complete models of disease pathogenesis that account for the complex, hierarchical nature of biological systems. The methodologies and applications described in this technical guide demonstrate the power of multi-omics approaches for advancing early disease detection, identifying novel biomarkers, and revealing previously unrecognized disease subtypes.

As multi-omics technologies continue to evolve and computational methods become increasingly sophisticated, we can anticipate further breakthroughs in understanding biological hierarchies and their relationship to disease. The integration of multi-omics data with clinical information, environmental factors, and digital health metrics will create even more comprehensive models of health and disease, ultimately enabling truly personalized preventive medicine and targeted therapeutic interventions.

The advent of high-throughput technologies has positioned multi-omics strategies at the forefront of biomedical research, particularly for early biomarker discovery. By integrating multiple molecular layers, researchers can now obtain a comprehensive view of biological systems, moving beyond the limitations of single-marker approaches. Early disease detection represents one of the most promising applications of multi-omics integration, as molecular alterations often precede clinical symptoms by years. This technical guide examines the core omics layers—genomics, transcriptomics, proteomics, metabolomics, and epigenomics—detailing their unique strengths, technological platforms, and specific applications in early biomarker discovery within the framework of multi-omics research.

Genomics

Biological Basis and Strengths

Genomics investigates the complete set of DNA within an organism, including genes, non-coding regions, and structural elements. It provides the foundational blueprint of biological systems, identifying hereditary factors and somatic mutations that drive disease pathogenesis. The primary strength of genomics lies in its stability; the DNA sequence remains largely constant throughout life and across most cell types, making it ideal for identifying permanent risk markers and inherited predispositions [22]. Genomic biomarkers can reveal disease susceptibility long before clinical manifestations appear, enabling truly proactive healthcare interventions.

Key Technologies and Applications

Next-generation sequencing (NGS) platforms, including whole genome sequencing (WGS) and whole exome sequencing (WES), have revolutionized genomic analysis by enabling comprehensive detection of single nucleotide polymorphisms (SNPs), copy number variations (CNVs), and structural rearrangements [22]. Genome-wide association studies (GWAS) leverage these technologies to identify cancer-associated genetic variations across populations.

In clinical applications, the tumor mutational burden (TMB), validated in the KEYNOTE-158 trial, has been approved by the FDA as a predictive biomarker for pembrolizumab treatment across solid tumors [22]. Large-scale sequencing efforts like MSK-IMPACT have demonstrated that approximately 37% of tumors harbor actionable genomic alterations, highlighting the substantial potential of genomic biomarkers in personalized oncology [22].

Experimental Protocol: Whole Genome Sequencing for Biomarker Discovery

Sample Preparation: Extract high-molecular-weight DNA from tissue (≥100mg) or blood (3-5mL) using silica-column or magnetic bead-based methods. Assess quality via spectrophotometry (A260/280 ratio ~1.8) and fluorometry (Qubit), with DNA integrity number (DIN) ≥7.0.

Library Preparation: Fragment DNA via acoustic shearing (350bp target size). Perform end-repair, A-tailing, and adapter ligation using commercially available kits (e.g., Illumina DNA Prep). Amplify library with 8-10 PCR cycles and validate using Bioanalyzer.

Sequencing: Load library onto Illumina NovaSeq X for 2x150bp paired-end sequencing at ≥30x coverage. For nanopore sequencing (Oxford Nanopore Technologies), use ligation sequencing kit SQK-LSK114 and MinION R10.4.1 flow cell.

Data Analysis: Perform adapter trimming with Trimmomatic, align to reference genome (GRCh38) using BWA-MEM, and call variants with GATK HaplotypeCaller. Annotate variants with ANNOVAR and prioritize based on population frequency (gnomAD <0.1%), predicted pathogenicity (CADD >20), and association databases (ClinVar, COSMIC).
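The prioritization criteria in the data analysis step can be expressed as a simple filter over annotated variants. The sketch below uses plain dictionaries with hypothetical field names (`gnomad_af`, `cadd`) and invented records; real ANNOVAR output uses its own column names, and treating a missing gnomAD frequency as "rare" is an assumption.

```python
def prioritize_variants(variants, max_af=0.001, min_cadd=20.0):
    """Keep rare, predicted-deleterious variants and rank them by CADD score.

    max_af=0.001 corresponds to the gnomAD <0.1% threshold.
    A missing gnomAD frequency (None) is treated as absent from gnomAD.
    """
    kept = []
    for v in variants:
        af = v.get("gnomad_af")
        cadd = v.get("cadd")
        if cadd is None or cadd <= min_cadd:
            continue  # not predicted deleterious enough
        if af is not None and af >= max_af:
            continue  # too common in the population
        kept.append(v)
    return sorted(kept, key=lambda v: v["cadd"], reverse=True)


# Hypothetical annotated records; identifiers and values are illustrative only.
variants = [
    {"id": "var1", "gnomad_af": 0.00002, "cadd": 27.5},
    {"id": "var2", "gnomad_af": 0.15, "cadd": 31.0},
    {"id": "var3", "gnomad_af": None, "cadd": 22.1},
    {"id": "var4", "gnomad_af": 0.0004, "cadd": 12.0},
]
print([v["id"] for v in prioritize_variants(variants)])
```

A further step, omitted here, would cross-reference the surviving variants against ClinVar and COSMIC as the protocol describes.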

Transcriptomics

Biological Basis and Strengths

Transcriptomics explores the complete set of RNA transcripts, including messenger RNA (mRNA), long non-coding RNAs (lncRNAs), microRNAs (miRNAs), and other non-coding RNAs. Unlike the static genome, the transcriptome dynamically reflects active cellular processes, providing a real-time snapshot of gene expression patterns in response to disease states [22]. This responsiveness makes transcriptomic biomarkers exceptionally valuable for detecting early functional changes in cellular physiology, often before morphological alterations occur. The high sensitivity and cost-effectiveness of RNA sequencing have established transcriptomics as a dominant component of multi-omics research [22].

Key Technologies and Applications

RNA sequencing (RNA-Seq) and microarray technologies enable comprehensive transcriptome profiling. Recent advances include single-cell RNA sequencing (scRNA-Seq), which resolves cellular heterogeneity, and spatial transcriptomics, which preserves geographical context within tissues [22] [23].

Clinically validated gene-expression signatures demonstrate the utility of transcriptomic biomarkers in therapeutic decision-making. The Oncotype DX 21-gene assay (TAILORx trial) and MammaPrint 70-gene signature (MINDACT trial) guide adjuvant chemotherapy decisions in breast cancer patients by predicting recurrence risk [22]. Emerging applications leverage transcriptomic profiles for early cancer detection, with AI-powered models analyzing complex gene expression patterns to identify molecular signatures of nascent malignancies.

Experimental Protocol: Bulk RNA Sequencing Analysis

Sample Collection: Stabilize tissue (10-30mg) in RNAlater within 5 minutes of collection or collect blood in PAXgene tubes. Store at -80°C until processing.

RNA Extraction: Homogenize tissue in TRIzol reagent or use silica-membrane columns (RNeasy Kit). Include DNase I treatment. Assess RNA quality via Bioanalyzer (RIN ≥8.0) and quantify by Qubit.

Library Preparation: Deplete ribosomal RNA using commercially available kits or perform poly-A selection. Fragment RNA (200-300bp), synthesize cDNA, add adapters, and amplify with 10-12 PCR cycles. Validate library size distribution (Bioanalyzer).

Sequencing: Sequence on an Illumina platform (e.g., NovaSeq 6000) with 2×100 bp paired-end reads, targeting 30-50 million reads per sample.

Data Analysis: Perform quality control (FastQC), trim adapters (Cutadapt), align to reference genome (STAR aligner), and quantify gene expression (HTSeq-count). Conduct differential expression analysis (DESeq2, edgeR) with false discovery rate (FDR) correction. Perform pathway enrichment analysis (GSEA, Enrichr).
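The FDR correction named in the data analysis step can be illustrated with the Benjamini-Hochberg procedure. The sketch below is a minimal pure-Python version for illustration only; DESeq2 and edgeR apply their own implementations internally, and the gene names and p-values here are hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical per-gene p-values from a differential expression test.
p_by_gene = {
    "GENE_A": 0.001,
    "GENE_B": 0.008,
    "GENE_C": 0.039,
    "GENE_D": 0.041,
    "GENE_E": 0.60,
}
genes = list(p_by_gene)
q_values = benjamini_hochberg([p_by_gene[g] for g in genes])

# Keep genes whose adjusted p-value is below an assumed 5% FDR cutoff.
significant = [g for g, q in zip(genes, q_values) if q < 0.05]
print(significant)
```

Note that GENE_C and GENE_D have raw p-values below 0.05 but do not survive correction, which is the behaviour the FDR step is meant to enforce.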

Figure 1: Bulk RNA-Seq analysis workflow for transcriptomic biomarker discovery.

Proteomics

Biological Basis and Strengths

Proteomics characterizes the complete set of proteins, including their abundances, post-translational modifications (PTMs), and interactions. As the primary functional executors of biological processes, proteins most closely reflect cellular activities and disease states [22]. The plasma proteome is particularly valuable for biomarker discovery, as plasma proteins reflect both health and disease status [24]. Proteomic biomarkers offer direct insight into pathway dysregulation and drug target engagement, bridging the gap between genomic potential and phenotypic manifestation. Technological innovations in mass spectrometry have dramatically enhanced proteomic coverage and throughput, positioning proteomics as an essential component for early disease detection.

Key Technologies and Applications

Liquid chromatography-mass spectrometry (LC-MS/MS) and reverse-phase protein arrays enable high-throughput proteomic profiling. Affinity-based platforms such as Olink offer highly multiplexed protein quantification with exceptional sensitivity [25] [24]. Recent advances in single-cell proteomics and spatial proteomics are expanding applications to cellular heterogeneity and tissue microenvironment characterization [22].

Studies by the Clinical Proteomic Tumor Analysis Consortium (CPTAC) have demonstrated that proteomics can identify functional cancer subtypes and reveal druggable vulnerabilities missed by genomics alone [22]. The global proteomics market, valued at USD 31.41 billion in 2025, reflects the growing importance of protein biomarkers in drug discovery and clinical diagnostics [25].

Experimental Protocol: LC-MS/MS Plasma Proteomics

Sample Collection: Collect blood in EDTA tubes, process within 30 minutes to separate plasma (2,000×g, 10min). Aliquot and store at -80°C.

Protein Digestion: Deplete high-abundance proteins (e.g., albumin, IgG) using affinity columns. Reduce with dithiothreitol (5mM, 30min, 60°C), alkylate with iodoacetamide (15mM, 30min, dark), and digest with trypsin (1:50 enzyme:protein, 37°C, 16h).

LC-MS/MS Analysis: Desalt peptides with C18 stage tips. Separate on nanoflow LC system (C18 column, 75μm×25cm) with 120min gradient (3-80% acetonitrile). Analyze on timsTOF Pro mass spectrometer in DDA-PASEF mode.

Data Processing: Identify proteins with a search engine (e.g., MaxQuant, Proteome Discoverer) against the Swiss-Prot database, first converting raw files to an open format such as MGF if the chosen engine requires it. Set FDR<1%. Quantify with label-free algorithms (MaxLFQ) or isobaric labeling (TMT).
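One common way to reach an FDR<1% cutoff is the target-decoy strategy, in which spectra are also searched against decoy (e.g., reversed) sequences and the decoy hit rate estimates the false-positive rate. The sketch below assumes this strategy and a simple decoys/targets estimator; the function name, scores, and data are hypothetical, and search engines such as MaxQuant use their own, more elaborate implementations.

```python
def score_cutoff_at_fdr(psms, max_fdr=0.01):
    """Return the lowest score cutoff whose estimated FDR stays below max_fdr.

    psms: list of (score, is_decoy) tuples, where higher scores are better.
    FDR at a cutoff is estimated as (#decoys / #targets) at or above it.
    Returns None if no cutoff satisfies the limit.
    """
    ranked = sorted(psms, key=lambda p: p[0], reverse=True)
    targets = decoys = 0
    cutoff = None
    for score, is_decoy in ranked:
        if is_decoy:
            decoys += 1
        else:
            targets += 1
        # Record the deepest point in the ranking that is still under the limit.
        if targets and decoys / targets < max_fdr:
            cutoff = score
    return cutoff


# Hypothetical peptide-spectrum matches: 150 target hits scoring 250..101,
# followed by two decoy hits and one further target hit.
psms = [(250 - i, False) for i in range(150)]
psms += [(100, True), (99, True), (98, False)]

cutoff = score_cutoff_at_fdr(psms, max_fdr=0.01)
accepted = [s for s, is_decoy in psms if not is_decoy and s >= cutoff]
print(cutoff, len(accepted))
```

The second decoy pushes the estimate to 2/150, above 1%, so the cutoff stays at the score where only one decoy had been seen.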

Metabolomics

Biological Basis and Strengths

Metabolomics investigates the complete set of small-molecule metabolites (<1,500 Da), including carbohydrates, lipids, amino acids, and nucleotides. As the molecular endpoints of cellular processes, metabolites provide the most immediate reflection of physiological status, responding to perturbations within minutes to hours [26]. This rapid responsiveness positions metabolomic biomarkers as exceptionally sensitive indicators of early disease processes. Metabolites integrate information from genomics, transcriptomics, and proteomics while incorporating influences from environmental factors, diet, and the microbiome, offering a comprehensive functional readout of biological state.

Key Technologies and Applications

Mass spectrometry (MS) and nuclear magnetic resonance (NMR) spectroscopy serve as the primary analytical platforms for metabolomics. LC-MS/MS systems can now detect and quantify over 1,200 metabolites in a single sample, with sensitivity reaching the femtomolar range [26]. NMR spectroscopy provides complementary structural information and absolute quantification without requiring compound-specific reference standards.

A classic example of metabolomic biomarker application is IDH1/2-mutant glioma, where the oncometabolite 2-hydroxyglutarate (2-HG) serves as both a diagnostic and mechanistic biomarker [22]. Recent research has identified a 10-metabolite plasma signature for gastric cancer that demonstrates superior diagnostic accuracy compared to conventional tumor markers [22]. In Alzheimer's disease, metabolomic signatures can predict cognitive decline 2-3 years before clinical symptoms appear, creating a crucial window for early intervention [26].

Experimental Protocol: Untargeted Metabolomics

Sample Preparation: Precipitate proteins from plasma (50μL) with 200μL cold methanol:acetonitrile (1:1). Vortex, incubate (-20°C, 1h), centrifuge (14,000×g, 15min, 4°C). Collect supernatant and dry in SpeedVac.

LC-MS Analysis: Reconstitute in 100μL water:acetonitrile (1:1). Analyze using reversed-phase (C18) and HILIC chromatography coupled to Q-TOF mass spectrometer in both positive and negative ESI modes.

Data Processing: Convert raw data to mzML format. Perform peak detection, alignment, and gap filling (XCMS). Annotate metabolites using in-house (retention time, m/z) and public databases (HMDB, METLIN). Normalize to quality controls and internal standards.

Statistical Analysis: Apply multivariate statistics (PCA, PLS-DA) alongside univariate tests to identify differentially abundant metabolites (PLS-DA VIP>1.5 and univariate p<0.05). Conduct pathway enrichment analysis (MetaboAnalyst).
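The normalization to quality controls and internal standards mentioned in the protocol can be sketched as follows. This is an illustrative example rather than the protocol's prescribed method: it divides each feature by a single internal standard per injection, then drops features whose coefficient of variation (CV) across pooled-QC injections exceeds a threshold. The 30% threshold, the feature names, and the intensities are all assumptions.

```python
from statistics import mean, stdev


def normalize_to_internal_standard(intensities, istd_intensities):
    """Divide each injection's feature intensity by its internal-standard intensity."""
    return [x / s for x, s in zip(intensities, istd_intensities)]


def coefficient_of_variation(values):
    """Sample standard deviation divided by the mean."""
    return stdev(values) / mean(values)


# Hypothetical intensities from three pooled-QC injections.
istd_qc = [1000.0, 1100.0, 900.0]
features_qc = {
    "feature_stable": [500.0, 550.0, 450.0],  # tracks the internal standard
    "feature_noisy": [500.0, 900.0, 200.0],   # varies independently of it
}

MAX_QC_CV = 0.30  # assumed acceptance threshold, not taken from the protocol

kept = []
for name, raw in features_qc.items():
    normalized = normalize_to_internal_standard(raw, istd_qc)
    # Retain only features that are reproducible across the QC injections.
    if coefficient_of_variation(normalized) <= MAX_QC_CV:
        kept.append(name)

print(kept)
```

Filtering on QC reproducibility before the statistical analysis keeps technically unstable features from appearing as spurious group differences.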

Epigenomics

Biological Basis and Strengths

Epigenomics investigates heritable changes in gene expression that do not involve alterations to the underlying DNA sequence, including DNA methylation, histone modifications, chromatin accessibility, and non-coding RNA regulation. Epigenetic marks represent the interface between genetic predisposition and environmental exposures, making them particularly valuable for understanding disease etiology. Unlike genetic mutations, epigenetic modifications are reversible yet stable enough to serve as reliable biomarkers. The dynamic nature of epigenetic regulation allows it to capture early adaptive responses to disease processes, often before fixed genetic changes occur.

Key Technologies and Applications

Whole genome bisulfite sequencing (WGBS) provides comprehensive DNA methylation profiling, while chromatin immunoprecipitation sequencing (ChIP-seq) maps histone modifications and transcription factor binding sites. Assay for Transposase-Accessible Chromatin using sequencing (ATAC-seq) assesses chromatin accessibility genome-wide.

MGMT promoter methylation status is a well-established clinical biomarker that predicts benefit from temozolomide chemotherapy in glioblastoma patients [22]. DNA methylation-based multi-cancer early detection (MCED) assays, such as the Galleri test, are under clinical evaluation and demonstrate the potential of epigenomic biomarkers for pan-cancer screening [22]. FDA-approved DNMT and HDAC inhibitors further validate epigenetic regulators as therapeutic targets [22].

Experimental Protocol: Whole Genome Bisulfite Sequencing

DNA Treatment: Fragment genomic DNA (100-300bp) by sonication. Treat 100ng DNA with sodium bisulfite (EZ DNA Methylation-Lightning Kit) to convert unmethylated cytosines to uracils.

Library Preparation: Repair DNA ends, add methylated adapters, and amplify with 8-10 PCR cycles. Clean up with AMPure XP beads. Validate library quality (Bioanalyzer).

Sequencing & Analysis: Sequence on Illumina platform (2x150bp, 30x coverage). Align to bisulfite-converted reference genome (Bismark, BWA-meth). Calculate methylation ratios as #C/(#C+#T) at each CpG. Identify differentially methylated regions (DMRs) with methylKit (≥25% difference, FDR<0.05).
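The per-CpG methylation ratio #C/(#C+#T) and the ≥25% difference criterion can be made concrete with a short sketch. It covers only the ratio and effect-size filter; the statistical test and FDR control that methylKit performs are omitted, and the site labels and read counts are hypothetical.

```python
def methylation_ratio(c_count, t_count):
    """Fraction of reads supporting methylation at one CpG: #C / (#C + #T).

    After bisulfite conversion, methylated cytosines still read as C, while
    unmethylated cytosines read as T. Returns None when there is no coverage.
    """
    total = c_count + t_count
    return c_count / total if total else None


# Hypothetical (C reads, T reads) per CpG site in two groups.
case = {"chr1:1000": (27, 3), "chr1:1050": (12, 18), "chr1:1100": (0, 0)}
control = {"chr1:1000": (6, 24), "chr1:1050": (11, 19), "chr1:1100": (5, 5)}

MIN_DIFF = 0.25  # the >=25% methylation difference criterion

candidates = {}
for site in case:
    ratio_case = methylation_ratio(*case[site])
    ratio_control = methylation_ratio(*control[site])
    if ratio_case is None or ratio_control is None:
        continue  # skip sites without coverage in either group
    diff = ratio_case - ratio_control
    if abs(diff) >= MIN_DIFF:
        candidates[site] = diff

print(candidates)
```

Only the first site passes: its ratio moves from 0.2 to 0.9, whereas the second changes by about 3 percentage points and the third has no coverage in the case group.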

Comparative Analysis of Omics Modalities

Table 1: Technical comparison of key omics technologies for biomarker discovery

| Omics Layer | Analytical Platforms | Coverage Capacity | Temporal Resolution | Key Advantages |
|---|---|---|---|---|
| Genomics | NGS (WGS, WES), microarrays | Complete genome (3×10⁹ bases) | Static (lifelong) | Identifies hereditary risk factors; stable markers |
| Transcriptomics | RNA-Seq, microarrays, Nanostring | Complete transcriptome (~60,000 transcripts) | Dynamic (minutes-hours) | Reveals active pathways; high sensitivity |
| Proteomics | LC-MS/MS, affinity arrays, Olink | >10,000 proteins | Medium (hours-days) | Direct functional readout; drug target engagement |
| Metabolomics | LC-MS, GC-MS, NMR | 1,200+ metabolites | Rapid (minutes) | Most proximal to phenotype; integrates environment |
| Epigenomics | WGBS, ChIP-seq, ATAC-seq | Complete epigenome | Medium (days-weeks) | Links genotype to environment; reversible markers |

Table 2: Clinical applications of omics biomarkers in early disease detection

| Omics Layer | Representative Biomarkers | Clinical Applications | Development Stage |
|---|---|---|---|
| Genomics | Tumor mutational burden (TMB), BRCA1/2 mutations | Immunotherapy response prediction [22], hereditary cancer risk assessment [27] | FDA-approved (TMB), routine clinical testing (BRCA) |
| Transcriptomics | Oncotype DX (21-gene), MammaPrint (70-gene) | Breast cancer recurrence prediction, chemotherapy guidance [22] | Commercialized, guideline-recommended |
| Proteomics | OVA1 (5-protein panel), 4Kscore (4 kallikreins) | Ovarian cancer detection [27], prostate cancer risk stratification [27] | FDA-cleared, commercially available |
| Metabolomics | 2-hydroxyglutarate (2-HG), 10-metabolite gastric signature | Glioma diagnosis [22], gastric cancer detection [22] | Clinical validation ongoing |
| Epigenomics | MGMT promoter methylation, multi-cancer methylation signatures | Glioblastoma treatment response [22], multi-cancer early detection [22] | Clinical implementation (MGMT), advanced development (MCED) |

Integrated Multi-Omics Workflow

Figure 2: Integrated multi-omics workflow for biomarker discovery, spanning from study design to clinical validation.

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Essential research solutions for omics biomarker discovery

| Category | Specific Products/Platforms | Key Applications |
|---|---|---|
| Sequencing Platforms | Illumina NovaSeq X, Oxford Nanopore PromethION | Whole genome sequencing, transcriptomics, epigenomics |
| Mass Spectrometry Systems | timsTOF Pro (Bruker), Orbitrap Exploris (Thermo) | High-sensitivity proteomics and metabolomics |
| Proteomics Reagents | Olink panels, TMTpro 16plex, Evosep Eno system | Multiplexed protein quantification, high-throughput proteomics [25] |
| Single-Cell Technologies | 10x Genomics Chromium, BD Rhapsody | Single-cell multi-omics, cellular heterogeneity analysis |
| Spatial Biology Platforms | 10x Visium, Nanostring GeoMx | Spatially resolved transcriptomics and proteomics [23] |
| Automation Systems | Opentrons OT-2, Agilent Bravo | High-throughput sample preparation for multi-omics studies [25] |
| Bioinformatics Tools | GATK, MaxQuant, MetaboAnalyst 6.0 | Omics data processing, analysis, and interpretation [26] |

Each omics layer offers unique advantages for early biomarker discovery: genomics provides stable hereditary information, transcriptomics reveals dynamic gene expression, proteomics captures functional effectors, metabolomics reflects immediate physiological status, and epigenomics links genetic predisposition with environmental influences. Integrating these complementary modalities gives a more complete basis for biomarker discovery than any single layer can provide. As technological innovations continue to enhance the resolution, throughput, and accessibility of each omics layer, and as computational methods for data integration advance, multi-omics approaches will increasingly enable the detection of diseases at their earliest, most treatable stages. The result is a shift from reactive disease treatment toward proactive health maintenance.

The study of complex diseases has evolved significantly with the advent of high-throughput technologies. While single-omics approaches have provided valuable insights into individual molecular layers, they fail to capture the intricate interactions between genomic, transcriptomic, proteomic, and metabolomic dimensions that drive disease pathogenesis. This technical review examines the critical transition from single-omics investigations to integrated multi-omics frameworks, highlighting how a holistic view enables deeper understanding of complex disease mechanisms. We present comprehensive methodological guidance, including experimental design considerations, data integration strategies, and analytical frameworks that leverage artificial intelligence and machine learning. Within the context of early disease detection research, we demonstrate how multi-omics profiling identifies novel biomarkers, reveals previously unrecognized disease subtypes, and enables predictive modeling of disease onset and progression. The integration of diverse molecular datasets provides unprecedented opportunities for advancing precision medicine through improved diagnostic accuracy, therapeutic target discovery, and personalized treatment strategies.