Multi-Omics for Elucidating Molecular Pathways: A Comprehensive Guide for Researchers and Drug Developers

This article provides a comprehensive overview of how multi-omics approaches are revolutionizing the elucidation of complex molecular pathways in biomedical research and drug discovery. Tailored for researchers, scientists, and drug development professionals, it covers the foundational principles of integrating genomics, transcriptomics, proteomics, and metabolomics data. The scope extends to advanced methodological strategies for data integration and analysis, practical solutions for overcoming technical and computational challenges, and validation frameworks for translating discoveries into clinically actionable insights. By synthesizing current trends, tools, and real-world applications, this resource aims to equip professionals with the knowledge to leverage multi-omics for uncovering disease mechanisms and accelerating therapeutic development.


Demystifying Multi-Omics: Core Concepts and System-Level Insights for Pathway Analysis

High-throughput technologies have revolutionized biological research, enabling comprehensive analysis of molecular systems at multiple levels. The integration of genomics, transcriptomics, proteomics, and metabolomics—collectively termed multi-omics—provides unprecedented insights into the complex flow of information underlying biological processes and disease mechanisms. This technical guide delineates the core omics layers, their respective technologies, and their roles in elucidating molecular pathways. By presenting structured comparisons, experimental protocols, and visualization frameworks, we aim to equip researchers with methodologies for effective data integration to advance biomarker discovery, therapeutic target identification, and systems-level understanding in biomedical research.

Omics technologies provide a global assessment of complete sets of biological molecules, moving beyond single-molecule studies to system-wide analyses [1]. The field has been driven largely by technological advances that have made cost-efficient, high-throughput analysis of biological molecules possible. Each omics layer interrogates a distinct level of biological organization, from genetic blueprint to functional metabolites, offering unique insights into different aspects of biological systems [2] [1]. When integrated, these technologies enable researchers to understand the flow of information that underlies disease, moving beyond correlations to identify potential causative changes [1]. This multi-omics approach is particularly valuable for interpreting complex diseases where genetic variants alone explain only a fraction of heritability, and dysregulation across multiple molecular layers contributes to pathogenesis [3] [1].

Core Omics Layers: Technologies and Biological Significance

The four primary omics layers provide complementary insights into biological systems, each capturing a different dimension of the central dogma of biology and its regulatory networks.

Table 1: Core Omics Technologies and Their Applications

| Omics Layer | Molecules Analyzed | Key Technologies | Primary Biological Information | Common Applications |
|---|---|---|---|---|
| Genomics | DNA sequences, genetic variants | Genotyping arrays, Whole Genome Sequencing (WGS), Exome sequencing [1] | Genetic blueprint, inherited variations, disease-associated polymorphisms [1] | Genome-wide association studies (GWAS), identification of disease-risk alleles [3] [1] |
| Transcriptomics | RNA transcripts (coding, non-coding) | Microarrays, RNA-Seq, single-cell RNA-Seq [1] | Dynamic gene expression, alternative splicing, regulatory RNAs [2] [1] | Expression quantitative trait loci (eQTL) mapping, pathway activity inference, biomarker discovery [3] [4] |
| Proteomics | Proteins, peptides | Mass spectrometry (MS), affinity purification, protein arrays [1] | Protein abundance, post-translational modifications, protein-protein interactions [2] [1] | Signaling pathway analysis, drug target identification, metabolic engineering [2] [4] |
| Metabolomics | Metabolites (≤1.5 kDa) | Mass spectrometry (MS), NMR spectroscopy [2] [1] | End products of cellular processes, metabolic fluxes, physiological status [2] [1] | Biomarker development, disease diagnosis, metabolic pathway analysis [2] [1] |

Biological Roles and Workflow Relationships

Each omics layer provides unique insights into different stages of biological information flow. Genomics offers a static view of genetic potential, while transcriptomics captures dynamic regulatory responses. Proteomics reveals the functional effectors of cellular processes, and metabolomics reflects the ultimate biochemical outcomes [2] [1]. This hierarchical relationship creates a comprehensive picture of biological systems when integrated.

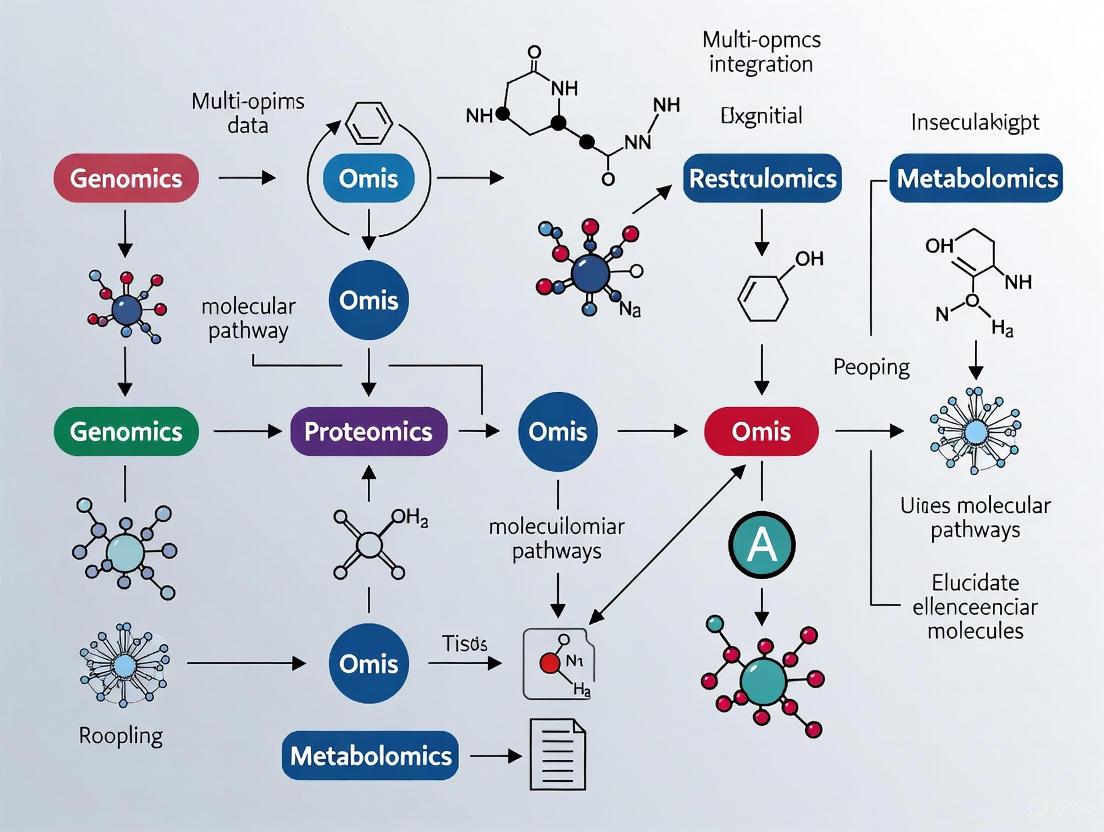

Diagram 1: Information flow through omics layers

Methodologies for Multi-Omics Integration

Data Integration Approaches and Workflows

Integrating multiple omics data sets is challenging but necessary to fully understand complex biological systems [2]. Several methodological frameworks have been developed, which can be categorized into three primary approaches: correlation-based strategies, combined omics integration, and machine learning techniques [2].

Table 2: Multi-Omics Data Integration Approaches

| Integration Approach | Key Methods | Omics Data Types | Primary Application | Tools/Examples |
|---|---|---|---|---|
| Correlation-based | Co-expression analysis, Gene-metabolite networks, Similarity Network Fusion [2] | Transcriptomics & Metabolomics, Proteomics & Metabolomics [2] | Identify co-regulated modules, construct interaction networks [2] | WGCNA, Cytoscape, PCC analysis [2] |
| Statistical & Enrichment | Pathway enrichment, Signaling Pathway Impact Analysis (SPIA) [4] | Genomics, Transcriptomics, Proteomics [4] | Pathway activation assessment, functional interpretation [4] | IMPaLA, PaintOmics, ActivePathways, SPIA [4] |
| Machine Learning | Supervised/unsupervised learning, multivariate modeling [2] [3] | All omics layers [2] [3] | Disease classification, risk prediction, pattern recognition [3] | DIABLO, OmicsAnalyst, random forest, elastic-net [3] [4] |
| Network-based | Topology-based pathway analysis, protein-protein interaction networks [4] | Transcriptomics, Proteomics, Metabolomics [4] | Identify key regulatory nodes, drug targeting [4] | Oncobox, TAPPA, Pathway-Express, iPANDA [4] |
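As a rough illustration of the correlation-based row in the table above, the sketch below computes Pearson correlation coefficients (PCC) between gene and metabolite profiles measured on the same samples and keeps strongly correlated pairs as network edges. The gene and metabolite names, the toy values, and the 0.8 cutoff are all hypothetical; tools such as WGCNA use considerably more elaborate procedures.

```python
from itertools import product
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient (PCC) of two equal-length profiles.

    Assumes neither profile is constant (non-zero variance).
    """
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def correlation_edges(genes, metabolites, threshold=0.8):
    """Return (gene, metabolite, r) edges whose |r| meets the threshold."""
    edges = []
    for (g, g_profile), (m, m_profile) in product(genes.items(), metabolites.items()):
        r = pearson(g_profile, m_profile)
        if abs(r) >= threshold:
            edges.append((g, m, round(r, 3)))
    return edges


# Hypothetical toy data: abundances across the same five samples.
genes = {
    "GENE_A": [1.0, 2.0, 3.0, 4.0, 5.0],
    "GENE_B": [2.0, 5.0, 1.0, 4.0, 3.0],
}
metabolites = {
    "MET_X": [2.1, 3.9, 6.2, 8.0, 9.8],
    "MET_Y": [1.0, 1.2, 0.9, 1.1, 1.0],
}

edges = correlation_edges(genes, metabolites)
for gene, metabolite, r in edges:
    print(f"{gene} -- {metabolite} (r = {r})")
```

The resulting edge list could be exported to a network viewer such as Cytoscape. With real data, the correlation threshold would normally be paired with a significance test and multiple-testing correction.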

Experimental Protocol: Multi-Omics Pathway Analysis

The following workflow represents a comprehensive approach for integrating multiple omics layers to assess pathway activation and drug efficacy, adapted from established methodologies in the field [4].

Diagram 2: Multi-omics pathway activation workflow

Step-by-Step Protocol:

Multi-omics Data Collection: Generate molecular profiles using high-throughput technologies. Essential data types include:

- DNA methylation arrays or sequencing for epigenomic regulation

- mRNA-seq for protein-coding transcript levels

- miRNA-seq for microRNA expression profiling

- lncRNA/asRNA-seq for long non-coding and antisense RNA quantification [4]

Differential Expression Analysis: Identify statistically significant molecular differences between case and control samples for each omics layer using appropriate statistical methods (e.g., moderated t-tests, or DESeq2 or edgeR for count data).

Pathway Database Integration: Utilize curated pathway databases (e.g., OncoboxPD containing 51,672 uniformly processed human molecular pathways) with annotated gene functions and interaction topologies [4].

Signaling Pathway Impact Analysis (SPIA): Calculate pathway activation levels using topology-based algorithms that consider:

- Perturbation factors (PF) for all genes in a pathway

- Direction of interactions (activation/inhibition)

- Pathway accumulation accuracy scores [4]

Drug Efficiency Index (DEI) Calculation: Evaluate potential therapeutic efficacy by integrating pathway activation data with drug target information to generate personalized drug rankings [4].

Biological Interpretation: Integrate results across omics layers to identify dysregulated pathways, key regulatory nodes, and potential therapeutic targets.
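The differential analysis step of the protocol above ultimately supplies a per-gene effect size to the pathway scoring steps. The sketch below shows only that effect-size calculation, a log2 fold change between case and control means, under stated assumptions: the pseudocount, the gene names, and the values are hypothetical, and no statistical testing is performed. Dedicated packages such as DESeq2 or edgeR fit proper count models instead.

```python
from math import log2
from statistics import mean


def log2_fold_changes(case, control, pseudocount=1.0):
    """Per-gene log2((mean case + c) / (mean control + c)).

    The pseudocount c avoids division by zero for unexpressed genes.
    """
    return {
        gene: log2((mean(case[gene]) + pseudocount) / (mean(control[gene]) + pseudocount))
        for gene in case
    }


def select_changed(lfc, min_abs_lfc=1.0):
    """Keep genes whose absolute log2 fold change reaches the cutoff."""
    return {gene: value for gene, value in lfc.items() if abs(value) >= min_abs_lfc}


# Hypothetical normalized expression values, three samples per group.
case = {"GENE_A": [30.0, 34.0, 32.0], "GENE_B": [7.0, 7.0, 7.0]}
control = {"GENE_A": [7.0, 8.0, 6.0], "GENE_B": [7.0, 7.0, 7.0]}

lfc = log2_fold_changes(case, control)
changed = select_changed(lfc)
print(changed)
```

Only `GENE_A` passes the cutoff here. In a real analysis the selection would also require an adjusted p-value from the chosen statistical method.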

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful multi-omics research requires specialized reagents and computational tools to ensure data quality and integration capabilities.

Table 3: Essential Research Reagents and Solutions for Multi-Omics Studies

| Reagent/Tool Category | Specific Examples | Function and Application | Technical Considerations |
|---|---|---|---|
| Nucleic Acid Extraction Kits | DNA/RNA co-extraction kits, miRNA-specific isolation kits | High-quality nucleic acid preservation for parallel genomic/transcriptomic analysis | Maintain RNA integrity (RIN >8), prevent degradation [4] |
| Mass Spectrometry-Grade Solvents | LC-MS/MS compatible solvents, digest buffers | Optimal protein extraction, digestion, and metabolite preservation | Minimize contaminants, ensure batch-to-batch reproducibility [1] |
| Pathway Analysis Databases | OncoboxPD, KEGG, Reactome, Gene Ontology | Pathway topology information for activation calculations | Uniform pathway curation, functional annotations [4] |
| Reference Data Repositories | 1000 Genomes Project, GTEx, ARIC Study, NIAGADS | Control datasets, QTL mapping references, normalization | Population-matched controls, consistent processing [3] |
| Statistical Computing Environments | R/Bioconductor, Python, specialized omics packages | Data normalization, integration, and visualization | Implement reproducible workflows, version control [2] [5] |

Case Study: Multi-Omics in Alzheimer's Disease Research

A recent study demonstrates the power of integrative multi-omics approaches for complex disease characterization [3]. Researchers conducted genome-, transcriptome-, and proteome-wide association studies (GWAS, TWAS, PWAS) on 15,480 individuals from the Alzheimer's Disease Sequencing Project (ADSP) to identify AD-associated molecular signals [3]. The analysis revealed 104 genomic, 319 transcriptomic, and 17 proteomic associations with AD, with novel associations enriched in signaling, myeloid differentiation, and immune pathways [3]. Integrative Risk Models (IRMs) developed using genetically-regulated components of gene and protein expression significantly outperformed traditional polygenic score models, with the best-performing random forest classifier achieving an AUROC of 0.703 and AUPRC of 0.622 [3]. This case study illustrates how multi-omics integration enhances both biological insight and predictive accuracy for complex diseases.

The strategic integration of genomics, transcriptomics, proteomics, and metabolomics provides a powerful framework for elucidating complex biological systems and disease mechanisms. By leveraging the complementary strengths of each omics layer and applying appropriate integration methodologies, researchers can uncover molecular pathways and interactions that remain invisible to single-omics approaches. As technologies advance and analytical methods mature, multi-omics integration will increasingly drive discoveries in basic research, biomarker development, and therapeutic innovation, ultimately enabling more personalized and effective medical interventions.

Biological systems are fundamentally complex, driven by the dynamic interplay among the genetic blueprint, epigenetic regulation, gene expression, protein translation, and metabolic activity. Traditional single-omics approaches, which analyze one biological layer (such as the genome or transcriptome) in isolation, provide a valuable but inherently limited snapshot of this intricate system. While genomics identifies DNA-level alterations and transcriptomics reveals gene expression dynamics, neither alone captures the cascading effects and regulatory feedback loops that characterize complex pathways [6]. The fundamental shortcoming of single-omics is its reductionist nature: it attempts to explain a system's behavior by examining a single component. Bulk measurements compound the problem by averaging signals across heterogeneous cell populations, obscuring critical cellular nuances and rare but consequential cell states [7]. As a result, single-omics strategies often yield incomplete mechanistic insights and suboptimal clinical predictions, and cannot fully elucidate the molecular mechanisms underlying disease pathogenesis, drug response, or therapeutic resistance [6].

This review argues that a multi-omics integrative framework is not merely an enhancement but a necessity for accurately modeling complex biological pathways. By simultaneously measuring and integrating data from multiple molecular layers, researchers can move from observing correlations to understanding causality, ultimately constructing a more holistic and predictive model of cellular behavior.

The Biological Hierarchy: A Cascade of Information Flow

The flow of biological information is not perfectly linear, but it follows a general hierarchical structure from static genetic instruction to dynamic functional outcome. A perturbation at one level can propagate through subsequent layers, but feedback mechanisms can also exert influence upstream. Single-omics approaches, which focus on a single tier of this hierarchy, cannot capture these complex inter-layer dynamics.

- Genomics: Provides the foundational blueprint, identifying inherited variations and acquired mutations (e.g., SNVs, CNVs) [6].

- Epigenomics: Regulates genomic accessibility through dynamic, reversible modifications like DNA methylation and histone changes, influencing gene expression without altering the DNA sequence itself [6] [8].

- Transcriptomics: Captures the immediate downstream effect, quantifying RNA expression levels that reflect the active transcriptional programs of the cell [6].

- Proteomics: Represents the functional effectors, cataloging protein expression, post-translational modifications (e.g., phosphorylation), and interactions that directly execute cellular processes [9] [6].

- Metabolomics: Profiles the biochemical endpoints, revealing small-molecule metabolites that constitute the final response to genomic, environmental, and therapeutic influences [6].

The following diagram illustrates this hierarchical flow of biological information and the feedback loops that a multi-omics approach is required to capture:

Biological Information Flow and Multi-Layer Regulation

For example, unraveling the cause of a disease may reveal a metabolite deficiency caused by the failure of an enzyme to be phosphorylated because a gene is not expressed due to aberrant methylation as a result of a rare germline variant [9]. This cascade of events, spanning multiple biological layers, is invisible to any single-omics investigation.

The Multi-Omics Experimental Workflow: From Sample to Insight

Implementing a multi-omics study requires a structured workflow that encompasses sample preparation, high-throughput data generation, computational integration, and biological interpretation. The following diagram outlines a generalized protocol for a multi-omics study, integrating steps from single-cell isolation to final data integration:

Generalized Multi-Omics Experimental Workflow

Key Research Reagent Solutions

The execution of a multi-omics experiment relies on a suite of specialized reagents and platforms. The following table details essential materials and their functions in a typical workflow.

Table 1: Essential Research Reagents and Platforms for Multi-Omics Studies

| Item Name | Function |
|---|---|
| Bacterial Artificial Chromosomes (BACs) | Used in hierarchical shotgun sequencing to clone large (150-200 kb) fragments of the genome for amplification and sequencing [9]. |
| Hairpin Adapters | Ligated to DNA fragments in PacBio SMRT sequencing to circularize the template, enabling multiple passes of the same fragment by the polymerase for high-fidelity (HiFi) reads [9]. |
| Template-Switching Oligos (TSOs) | Enable the construction of full-length cDNA libraries in single-cell RNA-seq methods (e.g., SMART-seq3, FLASH-seq), allowing for the identification of 5' transcript ends and isoforms [7]. |
| Cell Barcodes (DNA Oligos) | Unique nucleotide sequences attached to molecules from individual cells during library preparation, allowing samples from thousands of cells to be pooled and sequenced simultaneously while retaining cell-of-origin information [7] [10]. |
| Unique Molecular Identifiers (UMIs) | Random nucleotide tags incorporated during reverse transcription in scRNA-seq protocols to label individual mRNA molecules, mitigating PCR amplification bias and enabling accurate transcript quantification [7]. |
| Zero-Mode Waveguides (ZMWs) | Microscopic wells in PacBio SMRT cells where single molecules of DNA polymerase are immobilized, enabling real-time observation of DNA synthesis for long-read sequencing [9]. |
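To make the roles of cell barcodes and UMIs in the table above concrete, the following sketch collapses reads that share the same cell barcode, gene, and UMI into a single molecule, which is how PCR duplicates are removed before counting. The barcode, UMI, and gene strings are hypothetical, and the sketch assumes exact matching; production pipelines typically also correct sequencing errors within barcodes and UMIs.

```python
from collections import defaultdict


def count_molecules(reads):
    """Count unique UMIs per (cell barcode, gene).

    Reads sharing cell barcode, gene, and UMI are treated as PCR copies
    of one original mRNA molecule and are counted once.
    """
    unique_umis = defaultdict(set)
    for cell_barcode, gene, umi in reads:
        unique_umis[(cell_barcode, gene)].add(umi)
    return {key: len(umis) for key, umis in unique_umis.items()}


# Hypothetical aligned reads as (cell barcode, gene, UMI) tuples.
reads = [
    ("ACGT", "GENE_A", "TTAGC"),
    ("ACGT", "GENE_A", "TTAGC"),  # PCR duplicate of the read above
    ("ACGT", "GENE_A", "GGCAT"),  # second molecule in the same cell
    ("TGCA", "GENE_A", "TTAGC"),  # same UMI but a different cell
]

counts = count_molecules(reads)
print(counts)
```

Four reads reduce to two molecules of `GENE_A` in cell `ACGT` and one in cell `TGCA`, so amplification depth no longer inflates the expression estimate.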

Analytical Technologies and Sequencing Platforms

The choice of sequencing technology is critical and involves trade-offs between read length, accuracy, throughput, and cost. The table below compares the major sequencing platforms.

Table 2: Comparison of Sequencing Technology Generations

| Platform (Generation) | Sequencing Technology | Read Length | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Sanger (First) | Chain termination | 800-1,000 bp | High accuracy, low analysis difficulty | Low throughput, high historical cost [9] |
| Illumina (Second/Next) | Sequencing by synthesis | 100-300 bp | High throughput, high accuracy, moderate cost | Short reads struggle with repetitive regions [9] |
| PacBio (Third) | Circular consensus sequencing | 10,000-25,000 bp | Very long reads, moderate accuracy | High cost, high computing needs [9] |
| Oxford Nanopore (Third) | Electrical detection | 10,000-30,000 bp | Very long reads, portable devices | Lower read accuracy, high computing needs [9] |

Data Integration Strategies and Computational Methodologies

The core challenge of multi-omics lies in the computational integration of disparate data types. Several conceptual strategies have been developed, each with distinct advantages and limitations.

Table 3: Multi-Omics Data Integration Strategies

| Integration Strategy | Description | Advantages | Disadvantages |
|---|---|---|---|
| Early Integration | Raw or pre-processed data from different omics layers are concatenated into a single large matrix before analysis [11] [12]. | Simple to implement. | Creates a high-dimension, noisy dataset; discounts data distribution differences [12]. |
| Intermediate Integration | Datasets are integrated by identifying common latent structures (e.g., via joint matrix decomposition) [11] [12]. | Reduces dimensionality; can separate shared and omics-specific signals [11]. | Often requires robust pre-processing to handle data heterogeneity [12]. |
| Late Integration | Each omics dataset is analyzed separately, and the results (e.g., model predictions) are combined at the final stage [11] [12]. | Avoids challenges of merging raw data; uses optimized models for each data type. | Fails to capture inter-omics interactions during analysis [12]. |
| Hierarchical Integration | Incorporates prior knowledge of regulatory relationships between different omics layers (e.g., genomic variants influencing transcript levels) [12]. | Most accurately reflects biological causality; true trans-omics analysis. | Methods are often specific to certain omics types; less generalizable [12]. |
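The difference between early and late integration in the table above can be sketched in a few lines. In this minimal example, early integration standardizes each feature and concatenates the layers into one sample-by-feature matrix, while late integration simply averages per-sample scores that are assumed to come from separately trained, layer-specific models. The matrices, the scores, and the choice of z-scoring and unweighted averaging are illustrative assumptions, not a prescribed method.

```python
from statistics import mean, pstdev


def zscore_columns(matrix):
    """Standardize each feature (column) to mean 0 and unit variance."""
    scaled_columns = []
    for column in zip(*matrix):
        mu, sigma = mean(column), pstdev(column)
        scaled_columns.append([(v - mu) / sigma if sigma else 0.0 for v in column])
    return [list(row) for row in zip(*scaled_columns)]


def early_integration(layers):
    """Concatenate standardized features from every layer, sample by sample."""
    scaled_layers = [zscore_columns(matrix) for matrix in layers]
    return [[v for row in rows for v in row] for rows in zip(*scaled_layers)]


def late_integration(per_layer_scores):
    """Average per-sample scores from separately analyzed layers."""
    return [mean(scores) for scores in zip(*per_layer_scores)]


# Hypothetical data: three samples, two transcripts and one metabolite.
transcriptome = [[10.0, 200.0], [12.0, 180.0], [14.0, 220.0]]
metabolome = [[0.5], [0.7], [0.9]]

combined = early_integration([transcriptome, metabolome])  # 3 samples x 3 features
consensus = late_integration([[0.2, 0.6, 0.9], [0.4, 0.8, 0.7]])
print(combined)
print(consensus)
```

Standardizing before concatenation is one simple way to soften the distribution differences noted as a drawback of early integration. The late-integration average, by design, never sees cross-layer feature interactions.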

The Role of Artificial Intelligence and Machine Learning

Machine learning (ML) and deep learning (DL) are well suited to the high dimensionality and non-linear relationships in multi-omics data. Where classical univariate tests evaluate one feature at a time, ML models can learn multivariate patterns that span biological layers.

- Supervised Learning: Used with labeled datasets to predict outcomes such as patient survival or drug response. Algorithms like Random Forest (RF) and Support Vector Machines (SVM) are trained on omics data to create predictive classifiers, requiring careful feature labeling and parameter tuning to avoid overfitting [13].

- Unsupervised Learning: Applied to explore hidden structures without pre-defined labels. Methods like k-means clustering and principal component analysis (PCA) are used for dimensionality reduction and to identify novel cellular subpopulations or disease subtypes from omics data [13].

- Deep Learning: Utilizes artificial neural networks for automatic feature extraction from raw data. Graph Neural Networks (GNNs) can model biological networks, while multi-modal transformers can fuse disparate data types like MRI radiomics and transcriptomics to predict disease progression [6] [13].

- Transfer Learning: A technique where a model developed for one task is reused as the starting point for a model on a second task, which is particularly useful for leveraging knowledge from large public omics databases for specific clinical questions with limited data [13].

Case Study: Single-Cell Multi-Omics in Cancer Research

The transition from bulk to single-cell analysis represents a paradigm shift, moving beyond tissue-level averages to dissect the cellular heterogeneity that drives complex diseases like cancer. Single-cell multi-omics technologies now allow for the simultaneous measurement of multiple modalities—such as genome, epigenome, transcriptome, and proteome—within the same individual cell [7] [10].

The following diagram illustrates a specific workflow for single-cell multi-omics profiling that integrates transcriptomic and epigenomic data:

Single-Cell Multi-Omics Profiling Workflow

Application in Cancer: This approach has been pivotal in characterizing the tumor microenvironment. For example, in breast cancer, an adaptive multi-omics integration framework that combined genomics, transcriptomics, and epigenomics data achieved a concordance index (C-index) of 78.31 for survival prediction, significantly outperforming single-omics models [11]. Similarly, integrating single-cell transcriptomics with T-cell receptor sequencing (scTCR-seq) can identify clonally expanded T-cells and link their transcriptional state to antigen specificity, providing critical insights into anti-tumor immunity and immunotherapy resistance [10].
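For readers unfamiliar with the concordance index mentioned above, the sketch below implements a simplified version of Harrell's C-index: among pairs of patients whose survival ordering is known, it measures how often the patient with the shorter survival time was assigned the higher predicted risk. The survival times, censoring flags, and risk scores are hypothetical, and pairs with tied times are skipped for brevity. The function returns a value between 0 and 1, whereas the figure quoted above appears to be on a percentage scale.

```python
from itertools import combinations


def concordance_index(times, events, risks):
    """Simplified Harrell's C-index.

    times:  observed follow-up times
    events: 1 if the event (e.g., death) was observed, 0 if censored
    risks:  predicted risk scores (higher means shorter expected survival)
    """
    concordant, comparable = 0.0, 0
    for i, j in combinations(range(len(times)), 2):
        if times[i] == times[j]:
            continue  # tied times are skipped in this simplified sketch
        first, second = (i, j) if times[i] < times[j] else (j, i)
        if not events[first]:
            continue  # earlier subject was censored, so the ordering is unknown
        comparable += 1
        if risks[first] > risks[second]:
            concordant += 1.0
        elif risks[first] == risks[second]:
            concordant += 0.5
    return concordant / comparable


# Hypothetical cohort of four patients.
times = [5, 8, 12, 20]
events = [1, 1, 0, 1]
risks = [0.9, 0.4, 0.6, 0.1]

c_index = concordance_index(times, events, risks)
print(c_index)
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking, which is the scale on which multi-omics and single-omics survival models are compared.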

The evidence is conclusive: single-omics approaches are fundamentally insufficient for deconstructing the complex, dynamic, and interconnected pathways that govern biological systems and disease states. The imperative for multi-omics integration is not merely a trend but a necessary evolution in biological research. By simultaneously querying multiple layers of biological information and leveraging advanced computational strategies, including machine learning, researchers can move from descriptive snapshots to predictive, causal models. This holistic perspective is crucial for transforming our understanding of biology and accelerating the development of precise diagnostics and effective therapeutics for complex human diseases.

The complexity of biological systems extends far beyond the scope of single-omics studies. Multi-omics represents a fundamental shift in biological research, integrating data from various molecular layers—including genomics, transcriptomics, proteomics, epigenomics, and metabolomics—to construct a comprehensive view of how living systems function and interact [14]. This approach is revolutionizing molecular pathways research by enabling scientists to move from observing correlations to understanding causal relationships and regulatory mechanisms across different biological levels.

The power of multi-omics lies in its ability to capture the flow of biological information from DNA to RNA to proteins and metabolites, revealing how perturbations at one level propagate through the system [15]. For researchers and drug development professionals, this integrated perspective is invaluable for identifying robust biomarkers, understanding disease mechanisms, and discovering novel therapeutic targets that might remain hidden when examining single omics layers in isolation [16]. As we advance into an era of precision medicine, multi-omics provides the analytical framework necessary to decipher the complexity of human diseases and develop targeted interventions based on a holistic understanding of molecular pathways.

Multi-Omics Data Integration Methodologies

Effective integration of diverse omics datasets is both a technical challenge and a critical success factor in multi-omics research. Integration strategies can be grouped into distinct methodological approaches, each with specific strengths and applications in pathway analysis and biological discovery.

Table 1: Multi-Omics Data Integration Approaches

| Integration Method | Core Principle | Common Applications | Key Advantages |
|---|---|---|---|
| Conceptual Integration | Links omics data through shared biological concepts or entities | Hypothesis generation, exploratory analysis | Leverages existing knowledge bases; intuitive interpretation |
| Statistical Integration | Applies quantitative techniques to combine or compare datasets | Pattern identification, biomarker discovery | Identifies co-expression patterns; handles large datasets |
| Model-Based Integration | Uses mathematical models to simulate system behavior | Dynamic pathway modeling, drug response prediction | Captures system dynamics; enables predictive simulations |
| Network & Pathway Integration | Represents data within biological network structures | Pathway analysis, target prioritization | Contextualizes findings; integrates multiple granularity levels |

More advanced topology-based methods have emerged that incorporate the biological reality of pathways by considering the type, direction, and function of molecular interactions [4]. Methods such as Signaling Pathway Impact Analysis (SPIA) and Drug Efficiency Index (DEI) utilize pathway topology databases to calculate pathway activation levels (PALs), providing more biologically realistic assessments of pathway dysregulation than non-topological approaches [4].

A critical consideration in data integration is the vertical integration of different omics modalities from the same samples, which requires specialized approaches to handle varying statistical properties, technological noise, and feature dimensions across datasets [15]. The Quartet Project has developed reference materials and ratio-based profiling methods that address fundamental reproducibility challenges by scaling absolute feature values of study samples relative to a common reference sample, significantly improving data comparability across platforms and laboratories [15].
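The ratio-based idea described above can be illustrated with a minimal sketch: each feature in a study sample is expressed as a log2 ratio to the same feature measured in a common reference sample within the same batch. The protein names and intensity values are hypothetical, and the use of log2 ratios is an assumption about one reasonable way to express the scaling, not a description of the Quartet Project's exact pipeline.

```python
from math import log2


def ratio_profile(study_sample, reference_sample):
    """Express each feature as log2(study value / reference value).

    Features that are missing or non-positive in either sample are dropped.
    """
    return {
        feature: log2(value / reference_sample[feature])
        for feature, value in study_sample.items()
        if value > 0 and reference_sample.get(feature, 0) > 0
    }


# Hypothetical: the same biological sample measured on two platforms
# whose absolute intensity scales differ by a factor of 50.
lab_a_study = {"PROT_1": 400.0, "PROT_2": 50.0}
lab_a_reference = {"PROT_1": 100.0, "PROT_2": 100.0}
lab_b_study = {"PROT_1": 8.0, "PROT_2": 1.0}
lab_b_reference = {"PROT_1": 2.0, "PROT_2": 2.0}

profile_a = ratio_profile(lab_a_study, lab_a_reference)
profile_b = ratio_profile(lab_b_study, lab_b_reference)
print(profile_a, profile_b)
```

Although the absolute values differ widely between the two laboratories, both ratio profiles agree, which is the property that makes ratio-scaled data comparable across platforms.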

Experimental Workflows and Analytical Frameworks

Standardized Multi-Omics Workflow

Implementing a robust multi-omics study requires careful experimental design and execution. The following diagram illustrates a generalized workflow that can be adapted for various research objectives:

Alzheimer's Disease Case Study: Experimental Protocol

A recent investigation exemplifies the application of multi-omics to elucidate complex disease pathways. Researchers performed an integrative multi-omics analysis on 15,480 individuals from the Alzheimer's Disease Sequencing Project (ADSP) to characterize AD risk and identify molecular pathways [3].

Experimental Methodology:

- Cohort Description: 15,480 individuals from ADSP R4 release

- Omics Profiling:

  - Genome-wide association study (GWAS) for genomic variants

  - Transcriptome-wide association study (TWAS) for gene expression

  - Proteome-wide association study (PWAS) for protein expression

- Statistical Analysis: Association testing at predefined significance thresholds with multiple-testing correction

- Pathway Analysis: Enrichment analysis of identified associations using functional annotation databases

- Risk Modeling: Development of integrative risk models (IRMs) using elastic-net logistic regression and random forest classifiers
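The multiple-testing correction mentioned in the methodology above can be illustrated with the Benjamini-Hochberg procedure, which controls the false discovery rate. This is offered only as one common choice; the text does not say which correction the study applied, and the p-values below are hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value to the smallest so that each adjusted
    # value is the minimum of p * m / rank over all equal or higher ranks.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical association p-values for four genes.
p_values = [0.001, 0.02, 0.03, 0.5]
q_values = benjamini_hochberg(p_values)
significant = [q <= 0.05 for q in q_values]
print(q_values, significant)
```

With a 5% false discovery rate, the first three associations remain significant after adjustment and the fourth does not.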

Key Findings:

- Identification of 104 genomic, 319 transcriptomic, and 17 proteomic associations with AD

- Novel associations enriched in signaling, myeloid differentiation, and immune pathways

- Random forest model with transcriptomic and covariate features achieved AUROC of 0.703, significantly outperforming polygenic risk scores alone [3]
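The AUROC reported in the findings above summarizes how well a risk model ranks cases above controls. A minimal sketch of the metric follows, computed directly from its pairwise definition; the labels and scores are hypothetical and unrelated to the study's data.

```python
def auroc(labels, scores):
    """Area under the ROC curve from its pairwise definition.

    Equals the probability that a randomly chosen case (label 1) receives
    a higher score than a randomly chosen control (label 0); ties count half.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical predicted risks for three cases and three controls.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2]

value = auroc(labels, scores)
print(round(value, 3))
```

An AUROC of 0.5 indicates ranking no better than chance and 1.0 indicates perfect separation. The quadratic pairwise loop is fine for illustration, but rank-based implementations are preferable for cohorts of thousands of individuals.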

This study demonstrates how multi-omics approaches can enhance both biological understanding and predictive modeling for complex diseases.

Pathway Analysis and Computational Framework

Topology-Based Pathway Analysis

Understanding how multi-omics data influences biological pathways requires specialized computational approaches that consider the structure and dynamics of molecular networks. The following diagram illustrates how different omics layers are integrated into topology-based pathway analysis:

Key Computational Tools for Multi-Omics Pathway Analysis

Table 2: Computational Frameworks for Multi-Omics Pathway Analysis

| Tool/Method | Integration Approach | Analytical Output | Application Context |
|---|---|---|---|
| SPIA | Topology-based pathway impact | Pathway activation scores, perturbation factors | Signaling pathway dysregulation analysis |
| DIABLO | Multivariate supervised integration | Patient stratification, feature selection | Biomarker discovery, subtype identification |
| MultiGSEA | Statistical enrichment | Gene set enrichment p-values | Functional profiling across omics layers |
| iPANDA | Network decomposition | Pathway activation levels | Disease stratification, drug response |
| ActivePathways | Data fusion across omics | Integrated pathway p-values | Multi-omics data prioritization |

The SPIA (Signaling Pathway Impact Analysis) framework exemplifies advanced topology-based approaches, calculating pathway perturbation by considering both the enrichment of differentially expressed genes and the propagation of perturbations through pathway topologies [4]. This method incorporates the type and direction of molecular interactions, providing more biologically meaningful pathway activation scores than enrichment-based methods alone.
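The propagation idea behind SPIA can be sketched with the perturbation factor recursion from the original SPIA formulation, in which each gene's perturbation factor (PF) is its own expression change plus the signed PF of each upstream gene divided by that upstream gene's number of downstream targets. The pathway, gene names, and fold changes below are hypothetical, and the fixed-point iteration is a simplification chosen for readability: it is exact for acyclic pathways, whereas the published method solves the equivalent linear system and adds a significance assessment that is omitted here.

```python
def perturbation_factors(delta_e, edges, iterations=100):
    """Iterate PF(g) = dE(g) + sum over upstream u of beta(u, g) * PF(u) / N_ds(u).

    delta_e: gene -> log fold change (genes not listed default to 0)
    edges:   (upstream, target, beta) with beta = +1 activation, -1 inhibition
    """
    genes = set(delta_e) | {g for u, t, _ in edges for g in (u, t)}
    base = {g: delta_e.get(g, 0.0) for g in genes}
    n_downstream = {}
    for upstream, _target, _beta in edges:
        n_downstream[upstream] = n_downstream.get(upstream, 0) + 1
    pf = dict(base)
    for _ in range(iterations):
        updated = dict(base)
        for upstream, target, beta in edges:
            updated[target] += beta * pf[upstream] / n_downstream[upstream]
        pf = updated
    return pf, base


# Hypothetical four-gene signaling pathway.
edges = [
    ("RECEPTOR", "KINASE", +1),
    ("RECEPTOR", "PHOSPHATASE", +1),
    ("PHOSPHATASE", "KINASE", -1),
    ("KINASE", "TF", +1),
]
delta_e = {"RECEPTOR": 2.0, "PHOSPHATASE": 1.0}

pf, base = perturbation_factors(delta_e, edges)
# Net accumulation per gene is the part of PF not explained by its own change.
accumulation = {g: pf[g] - base[g] for g in pf}
total_accumulation = sum(accumulation.values())
print(pf, total_accumulation)
```

Here the inhibitory edge from the phosphatase outweighs the activating input from the receptor, so the kinase and the downstream transcription factor end up with negative perturbation factors even though their own expression is unchanged. A plain enrichment test would miss this effect.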

Recent advances enable the integration of non-coding RNA and DNA methylation data into pathway analysis by accounting for their regulatory effects. For instance, methylation-based and ncRNA-based SPIA values are calculated with negative signs compared to standard mRNA-based values, reflecting their repressive effects on gene expression while utilizing the same pathway topology graphs [4].
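One simple way to picture this sign convention is to flip the sign of the per-gene input for repressive layers before it is mapped onto the shared pathway graph. The sketch below does exactly that under stated assumptions: the layer names and the sign table are hypothetical, and flipping the inputs is equivalent to negating the final score only when the downstream pathway calculation is linear in its inputs.

```python
# Assumed convention: layers that repress gene expression contribute with
# a flipped sign relative to mRNA-level changes.
LAYER_SIGN = {
    "mrna": 1.0,
    "methylation": -1.0,
    "mirna": -1.0,
    "lncrna_antisense": -1.0,
}


def signed_pathway_input(layer, log_fold_changes):
    """Orient per-gene changes so that positive means 'more target expression'."""
    sign = LAYER_SIGN[layer]
    return {gene: sign * value for gene, value in log_fold_changes.items()}


# Hypothetical: promoter hypermethylation of GENE_A, hypomethylation of GENE_B.
methylation_changes = {"GENE_A": 1.5, "GENE_B": -0.5}
oriented = signed_pathway_input("methylation", methylation_changes)
print(oriented)
```

After orientation, increased methylation of `GENE_A` is treated like decreased expression, so the same pathway topology can be reused across layers.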

Essential Research Reagents and Reference Materials

Successful multi-omics studies require carefully selected reagents and reference materials to ensure data quality and reproducibility. The following table details key solutions used in advanced multi-omics research:

Table 3: Essential Research Reagent Solutions for Multi-Omics Studies

| Reagent/Resource | Type | Function | Application Example |

|---|---|---|---|

| Quartet Reference Materials | Multi-omics reference standards | Provides ground truth for data integration and QC | Cross-platform standardization [15] |

| Laser-Capture Microdissection | Tissue processing | Isolation of specific cell populations | Rare neuron analysis in schizophrenia [17] |

| GTEx v8 Reference | Transcriptome database | Tissue-specific expression reference | Transcriptomic imputation [3] |

| ARIC Study References | Proteomic database | Protein quantitative trait loci (pQTL) | Proteome-wide association studies [3] |

| OncoboxPD Pathway Database | Pathway knowledge base | 51,672 uniformly processed human pathways | Topology-based pathway analysis [4] |

| AAV Vector Systems | Gene delivery vehicle | Therapeutic gene transfer | Gene therapy safety assessment [17] |

| Single-Cell Multi-Omics Kits | Library preparation | Simultaneous profiling of multiple modalities | Cellular heterogeneity resolution |

The Quartet reference materials represent a particularly significant advancement, providing matched DNA, RNA, protein, and metabolites derived from immortalized cell lines from a family quartet [15]. These materials establish "built-in truth" defined by genetic relationships and central dogma information flow, enabling objective assessment of data quality and integration performance across laboratories and platforms.

For drug discovery applications, AAV vector systems require specialized reagents to assess integration sites and potential genotoxicity. Methods such as target enrichment sequencing, whole genome sequencing, and shearing extension primer tag selection are employed to identify junctions between vector DNA and host DNA, ensuring therapeutic safety [17].

Applications in Drug Discovery and Therapeutic Development

Multi-omics approaches are transforming pharmaceutical development by providing comprehensive insights into disease mechanisms and therapeutic responses. Several exemplar applications demonstrate their impact across the drug development pipeline:

Target Identification and Validation

In schizophrenia research, investigators used laser-capture microdissection combined with RNA-seq to characterize rare parvalbumin interneurons implicated in disease pathology [17]. This approach enabled precise profiling of this limited neuronal subpopulation, identifying GluN2D—a subunit of the glutamate receptor—as a potential drug target that would have been difficult to detect using conventional transcriptomic methods.

Biomarker Discovery for Biologics

For biologic therapies, multi-omics facilitates identification of biomarkers predicting immune responses. Researchers employed single-cell RNA-seq with VDJ capture to identify T-cell clones activated by therapeutic exposure [17]. By comparing bulk and single-cell data, they validated clonal expansion patterns and established methods for early detection of immunogenic responses, enabling proactive management of adverse effects.

Gene Therapy Safety Assessment

Comprehensive integration site analysis using multiple sequencing methods demonstrated that AAV vectors integrate randomly throughout the human genome without enrichment in cancer-associated loci [17]. This multi-omics safety assessment provided critical evidence for the therapeutic profile of AAV-based gene therapies, highlighting their low oncogenic risk compared to earlier vector systems.

Integrative Risk Prediction

As demonstrated in the Alzheimer's Disease case study, multi-omics data significantly enhances disease risk prediction compared to traditional approaches [3]. Integrative risk models combining transcriptomic features with clinical covariates achieved superior performance (AUROC: 0.703) over polygenic scores alone, highlighting the clinical value of multi-dimensional molecular profiling for complex diseases.

These applications underscore how multi-omics approaches provide the comprehensive molecular perspective necessary for informed decision-making throughout the therapeutic development process, from initial target identification to post-market safety monitoring.

Major Research Consortia and Public Data Repositories (e.g., TCGA)

Major research consortia and public data repositories are foundational to modern multi-omics research, providing the large-scale, integrated datasets necessary to elucidate complex molecular pathways. Initiatives like The Cancer Genome Atlas (TCGA) and the Alzheimer's Disease Sequencing Project (ADSP) have generated petabytes of genomic, transcriptomic, proteomic, and epigenomic data, enabling researchers to move beyond single-layer analysis to a more holistic understanding of disease biology [18] [19]. The effective use of these resources requires navigating specific data portals, understanding consortium governance, and applying sophisticated computational integration strategies to uncover the interconnected regulatory and metabolic networks that define physiological and pathological states [20] [21] [22]. This guide provides a technical overview of these key resources, their data structures, and the methodologies for their integration, serving as a roadmap for researchers aiming to leverage these assets for pathway discovery and therapeutic development.

Landscape of Major Consortia and Repositories

Large-scale collaborative efforts are crucial for generating the sample sizes and data diversity required for robust multi-omics discovery. The table below summarizes key resources relevant to multi-omics pathway research.

Table 1: Major Multi-Omics Research Consortia and Data Repositories

| Name | Primary Focus | Key Data Types | Access Portal | Notable Scale & Features |

|---|---|---|---|---|

| The Cancer Genome Atlas (TCGA) [19] [23] | Cancer Genomics | Genomic, Epigenomic, Transcriptomic, Proteomic | Genomic Data Commons (GDC) Portal [19] | >20,000 patients; 33 cancer types; over 2.5 petabytes of data [19] |

| Alzheimer's Disease Sequencing Project (ADSP) [18] | Neurodegenerative Disease | Whole Genome Sequencing, Transcriptomic, Proteomic | NIAGADS [18] | 15,480 individuals (in focused analysis); genome-, transcriptome-, proteome-wide association studies [18] |

| NCI Cohort Consortium [24] | Cancer Epidemiology & Risk | Genomic, Biospecimens, Epidemiologic Data | dbGaP [24] | >50 cohorts; >7 million people; international scope [24] |

| Qatar Metabolomics Study of Diabetes (QMDiab) [21] | Diabetes & Metabolic Disease | Genomic, Methylation, Transcriptomic, Proteomic, Metabolomic | "The Molecular Human" Web Interface [21] | 391 participants; 18 diverse omics platforms; 6,304 molecular traits per sample [21] |

| MLOmics [25] | Pan-Cancer Machine Learning | mRNA, miRNA, DNA Methylation, Copy Number Variation | MLOmics Database [25] | 8,314 TCGA patient samples; 32 cancer types; pre-processed, model-ready data [25] |

| Cancer Imaging Archive (TCIA) [23] | Cancer Imaging | Medical Images, Radiomics, Clinical Data | TCIA Website [23] | Curated archive of medical images; linked with TCGA and other molecular data [23] |

These resources are supported by central data portals and knowledgebases designed to facilitate access and analysis:

- Genomic Data Commons (GDC): A unified data repository that enables data sharing across cancer genomic studies in support of precision medicine, hosting data from TCGA and other NCI programs [23].

- Database of Genotypes and Phenotypes (dbGaP): Developed by NIH to archive and distribute results from studies investigating genotype-phenotype interactions. Many NCI-funded genomic datasets are available here [26] [23] [24].

- Omics Discovery Index (OmicsDI): A knowledge discovery framework that provides a searchable index across heterogeneous public omics data from genomics, proteomics, transcriptomics, and metabolomics studies [27].

Experimental Protocols for Multi-Omic Data Generation and Integration

Leveraging data from consortia requires an understanding of both the experimental protocols used for data generation and the computational workflows for integration. The following methodology, adapted from a large-scale multi-omics study on Alzheimer's disease, provides a robust framework [18].

Genome-Wide Association Studies (GWAS)

- Objective: Identify genetic loci associated with disease risk or specific molecular traits (quantitative trait loci, QTLs).

- Protocol:

- Quality Control (QC): Perform rigorous QC on genetic variant data. Remove variants with low minor allele count (e.g., MAC < 20), low call rate (e.g., < 95%), and duplicate samples [18].

- Association Testing: Conduct association testing using an additive genetic model in tools like PLINK v2.0. Adjust for covariates including age, sex, and genetic principal components to account for population stratification [18].

- Significance Thresholding: Apply a genome-wide significance threshold (e.g., \( p < 5 \times 10^{-8} \)) to identify significant associations [18].
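The QC and thresholding steps above can be sketched in plain Python. This is a minimal illustration that assumes per-variant summary records are already in memory; in practice these filters are applied by tools such as PLINK. The record layout, variant identifiers, and values are hypothetical.

```python
GENOME_WIDE_ALPHA = 5e-8  # conventional genome-wide threshold cited in the text
MIN_MAC = 20              # minimum minor allele count (example value from the text)
MIN_CALL_RATE = 0.95      # minimum call rate (example value from the text)


def passes_qc(variant):
    """Keep variants with sufficient minor allele count and call rate."""
    return variant["mac"] >= MIN_MAC and variant["call_rate"] >= MIN_CALL_RATE


def significant_hits(variants):
    """Return IDs of QC-passing variants below the genome-wide threshold."""
    return [
        v["id"]
        for v in variants
        if passes_qc(v) and v["p"] < GENOME_WIDE_ALPHA
    ]


# Hypothetical association results.
variants = [
    {"id": "var1", "mac": 150, "call_rate": 0.99, "p": 3e-9},
    {"id": "var2", "mac": 12, "call_rate": 0.99, "p": 1e-10},  # fails MAC filter
    {"id": "var3", "mac": 300, "call_rate": 0.90, "p": 2e-9},  # fails call rate
    {"id": "var4", "mac": 500, "call_rate": 0.98, "p": 4e-6},  # not significant
]
hits = significant_hits(variants)
print(hits)
```

The threshold of 5e-8 corresponds to a Bonferroni correction of 0.05 over roughly one million independent tests, which is why it is applied regardless of how many variants a particular study actually tests.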

Transcriptome-Wide and Proteome-Wide Association Studies (TWAS/PWAS)

- Objective: Identify genes and proteins whose expression levels are associated with a trait, using genetic variation as an anchor to infer causality.

- Protocol:

- Reference Panel: Use genetically regulated expression or protein models (e.g., from GTEx Project v8 via PredictDB) that predict molecular abundance based on genetic variation [18].

- Imputation & Association: Impute the genetically regulated component of gene or protein expression into the study cohort and test for association with the phenotype of interest.

- Validation: Perform mediation analysis to test if the effect of a genetic variant on the trait is mediated through the expression level of a specific gene or protein [18].
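The imputation step can be pictured as a weighted sum of allele dosages. The sketch below assumes a linear prediction model with one weight per variant, which is the general form of the reference models described above; the weights, variant names, and sample dosages are hypothetical, and treating a missing variant as dosage 0 is a simplifying assumption.

```python
def impute_expression(dosages, weights):
    """Predict the genetically regulated expression of one gene for one sample.

    dosages: {variant_id: allele dosage in [0, 2]}
    weights: {variant_id: effect of one allele on expression}
    Variants missing from `dosages` are treated as dosage 0 (an assumption).
    """
    return sum(w * dosages.get(variant, 0.0) for variant, w in weights.items())


# Hypothetical prediction weights for a single gene.
weights = {"var1": 0.5, "var2": -0.25}

# Hypothetical genotype dosages for three study samples.
cohort = {
    "s1": {"var1": 2, "var2": 0},
    "s2": {"var1": 1, "var2": 2},
    "s3": {"var1": 0, "var2": 1},
}

predicted = {
    sample: impute_expression(dosages, weights)
    for sample, dosages in cohort.items()
}
print(predicted)
```

The association step then tests the predicted values against the phenotype, for example with a regression that includes the same covariates used in the GWAS.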

Multi-Omics Integration and Pathway Analysis

- Objective: Integrate signals from multiple molecular layers to identify coherent biological pathways and build predictive models.

- Protocol:

- Univariate Discovery: Conduct GWAS, TWAS, and PWAS independently to identify significant associations within each molecular layer [18].

- Pathway Enrichment Analysis: Use tools like GOrilla or GSEA to test for over-representation of significant genes/proteins in known biological pathways (e.g., cholesterol metabolism, immune signaling) [18].

- Multivariate Predictive Modeling:

  - Feature Construction: Use genetically regulated components of gene and protein expression as features in predictive models [18].

  - Model Training: Employ machine learning algorithms such as:

    - Elastic-net logistic regression for feature selection and classification.

    - Random Forest to capture non-linear effects and complex interactions [18].

  - Model Evaluation: Assess performance using metrics like Area Under the Receiver Operating Characteristic curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC), comparing against baseline models like polygenic risk scores [18].
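The evaluation step compares models by AUROC, which can be read as the probability that a randomly chosen case receives a higher score than a randomly chosen control. The sketch below computes it directly from that definition. The labels and both sets of scores are hypothetical and do not reproduce results from the cited study.

```python
def auroc(labels, scores):
    """AUROC as the fraction of case/control pairs ranked correctly.

    Ties between a case and a control count as half a correct pair.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one case and one control")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Hypothetical data: 1 = case, 0 = control.
labels = [1, 1, 1, 0, 0, 0]
baseline_scores = [0.6, 0.4, 0.7, 0.5, 0.3, 0.65]    # e.g. a polygenic score
integrated_scores = [0.8, 0.55, 0.9, 0.5, 0.2, 0.6]  # e.g. an integrative model

print(round(auroc(labels, baseline_scores), 3))
print(round(auroc(labels, integrated_scores), 3))
```

The pairwise form is quadratic in sample size, so it is suited to illustration; rank-based implementations in standard libraries give the same value more efficiently.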

Figure 1: A high-level workflow for a multi-omics study, from data generation to integration and interpretation.

Computational Strategies for Multi-Omics Integration

The integration of disparate omics layers is a central challenge. The choice of method depends on whether the data is matched (from the same sample) or unmatched (from different samples) [20].

Table 2: Multi-Omics Integration Methods and Their Applications

| Method | Type | Underlying Methodology | Best Suited For | Key Features |

|---|---|---|---|---|

| MOFA+ [20] [22] | Matched (Vertical) | Unsupervised Bayesian factor analysis | Identifying latent sources of variation across omics layers; exploratory analysis. | Infers factors that capture co-variation across modalities; no phenotype supervision required. |

| DIABLO [22] | Matched (Vertical) | Supervised multiblock sPLS-DA | Building predictive models for a known phenotype; biomarker discovery. | Uses phenotype labels to identify features that are discriminative and correlated across omics. |

| Similarity Network Fusion (SNF) [22] | Matched (Vertical) | Network-based integration | Data clustering and subtyping; identifying sample groups with multi-omics concordance. | Fuses sample-similarity networks from each omics layer into a single network. |

| GLUE [20] | Unmatched (Diagonal) | Graph-linked variational autoencoder | Integrating multiple omics from different cells or studies. | Uses prior biological knowledge to guide the integration of unpaired data. |

| Seurat v4/v5 [20] | Matched & Unmatched | Weighted nearest neighbors / Bridge integration | Single-cell multi-omics integration; transferring labels across datasets. | Robust and widely used framework for single-cell data; can integrate RNA, protein, ATAC-seq. |

| MCIA [22] | Matched (Vertical) | Multiple co-inertia analysis | Jointly visualizing relationships between samples and features across multiple omics tables. | Multivariate statistical method that projects multiple datasets into a shared space. |

Figure 2: Overview of core multi-omics integration strategies and their primary analytical outputs.

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential computational tools and data resources that form the backbone of multi-omics research.

Table 3: Essential Computational Tools and Data Resources for Multi-Omics Research

| Item/Reagent | Function | Specific Application in Multi-Omics |

|---|---|---|

| GTEx eQTL Models | Reference panels of genetically regulated gene expression. | Imputing transcriptomic abundance in TWAS; available via PredictDB [18]. |

| PLINK v2.0 | Whole-genome association analysis toolset. | Performing QC and GWAS on large-scale sequencing data [18]. |

| MOFA+ | Unsupervised integration tool for multi-omics data. | Decomposing multi-omics datasets into latent factors that capture shared biology [20] [22]. |

| Seurat Suite | R toolkit for single-cell genomics. | Integrating and analyzing matched single-cell multi-omics data (RNA, ATAC, protein) [20]. |

| MLOmics Database | Pre-processed, machine-learning-ready cancer multi-omics database. | Training and evaluating ML models on pan-cancer classification and subtyping tasks [25]. |

| Omics Playground | Integrated, code-free platform for multi-omics analysis. | Providing access to multiple integration methods (MOFA, DIABLO, SNF) for biologists and translational researchers [22]. |

| COmics Web Interface | Interactive tool for exploring molecular networks. | Visualizing and hypothesis generation from integrated multi-omics networks, as demonstrated in the QMDiab study [21]. |

Major research consortia and their associated public data repositories have fundamentally transformed the scale and scope of multi-omics research. By providing standardized, high-quality data from thousands of individuals, resources like TCGA and ADSP empower the scientific community to dissect the complex, interconnected molecular pathways underlying human disease. The full potential of these assets is realized through sophisticated computational integration strategies—from unsupervised factor analysis to supervised machine learning—that can weave disparate data types into a coherent molecular narrative. As these datasets continue to grow in size and diversity, and as integration methodologies become more powerful and accessible, the path to discovering novel disease mechanisms, predictive biomarkers, and therapeutic targets becomes increasingly clear.

Multi-Omics in Action: Integration Strategies and Applications in Drug Discovery

The overarching goal of multi-omics research is to achieve a holistic understanding of biological systems by integrating complementary molecular data layers. Biological systems are complex, with numerous regulatory layers, including DNA, mRNA, proteins, metabolites, and epigenetic factors, each of which can be altered by disease and can in turn change cell signaling cascades and phenotypes [28]. The fundamental challenge lies in synthesizing these diverse data types, each with unique scales, noise characteristics, and technological limitations, to reveal how genes, proteins, and epigenetic factors collectively influence disease phenotypes [28].

Multi-omics data integration methods have evolved to address this complexity, generally falling into three primary categories: conceptual integration, which combines findings at the interpretation stage; statistical integration, which identifies relationships across datasets; and model-based integration, which uses mathematical frameworks to predict system behavior [28]. The choice of integration strategy is critical, as it determines the biological insights that can be gleaned, from discovering novel biomarkers to unraveling complex molecular pathways in diseases such as cancer [29] [20].

This technical guide provides a comprehensive overview of these integration approaches, focusing on their application in elucidating molecular pathways. We detail methodologies, present comparative analyses in structured tables, and provide visualization workflows to assist researchers in selecting and implementing appropriate integration strategies for their multi-omics investigations.

Classification of Integration Approaches

Multi-omics integration methodologies can be categorized based on their underlying principles and the stage at which integration occurs. These approaches are not mutually exclusive, and hybrid methods are increasingly common. The three primary frameworks—conceptual, statistical, and model-based—offer distinct advantages and are suited to different research objectives.

Table 1: Core Data Integration Approaches in Multi-Omics Research

| Integration Approach | Core Principle | Typical Methods | Primary Use Cases |

|---|---|---|---|

| Conceptual Integration | Independent analysis of each omics layer with integration during biological interpretation | Pathway enrichment analysis, network mapping | Hypothesis generation, functional validation, placing results in biological context |

| Statistical Integration | Identification of statistical relationships and correlations across omics datasets | Correlation analysis, co-expression networks (WGCNA), multivariate (PCA, CCA) | Identifying co-regulated features, biomarker discovery, data reduction |

| Model-Based Integration | Using mathematical models to predict system behavior from multi-omics inputs | Constraint-based modeling, deep learning (AE, VAE, GAN), machine learning | Predictive modeling, classification, disentangling regulatory mechanisms |

The integration process can also be characterized by its architecture, particularly in computational approaches:

- Early Integration: Features from each modality are concatenated before being processed by a model [30].

- Intermediate Integration: Modalities remain separate, but the model learns inter-modality relationships to generate an integrated representation or shared latent space [30].

- Late Integration: Separate models are trained for each modality, with predictions combined for a final aggregated result [30].
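The difference between early and late integration can be shown on toy data. The sketch below assumes per-sample feature vectors and per-modality predicted probabilities already exist; the function names and values are hypothetical. Intermediate integration is omitted because it requires a learned shared representation.

```python
def early_integration(modalities):
    """Concatenate the feature vectors of all modalities for one sample."""
    return [value for features in modalities for value in features]


def late_integration(predictions):
    """Average per-modality predicted probabilities into one prediction.

    Simple averaging is one possible aggregation rule, used here as an
    assumption; weighted votes or a meta-model are common alternatives.
    """
    return sum(predictions) / len(predictions)


# Hypothetical features for a single sample.
rna = [0.2, 1.4]
protein = [0.9]
combined = early_integration([rna, protein])

# Hypothetical outputs of three modality-specific models.
final = late_integration([0.5, 0.75, 1.0])

print(combined, final)
```

Early integration lets a single model see cross-modality interactions but inflates dimensionality, while late integration keeps each model small at the cost of ignoring those interactions.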

Furthermore, integration strategies must account for data pairing. Matched (vertical) integration combines omics data profiled from the same cell or sample, using the biological unit itself as an anchor. In contrast, unmatched (diagonal) integration combines data from different cells, samples, or studies, requiring computational alignment in a latent space [20].

Conceptual Integration Approaches

Conceptual integration represents a knowledge-driven framework where multi-omics data are analyzed independently and combined during the interpretation phase using established biological knowledge. This approach leverages curated pathway databases and molecular interaction networks to contextualize findings across omics layers.

Pathway-Based Integration

Pathway analysis facilitates conceptual integration by transforming molecular-level abundance data into pathway-level activity scores. Methods like single-sample Pathway Analysis (ssPA) condense molecular measurements into pathway activity scores for each sample, creating a pathway-level matrix that can be used for downstream analysis and integration [31]. Tools such as PathIntegrate employ ssPA to transform multi-omics datasets from molecular to pathway-level, then apply predictive models to integrate the data [31]. This approach outputs multi-omics pathways ranked by their contribution to outcome prediction, the contribution of each omics layer, and the importance of individual molecules within pathways.

Pathway-based integration offers several advantages: it provides a more parsimonious model when there are fewer input pathways than molecules, enables detection of multiple small correlated signals that may be missed in molecular-level data, and increases robustness to data noise by maximizing biological variation while reducing technical variation [31].
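The molecular-to-pathway transformation can be sketched with a deliberately simple scoring rule: the mean z-score of a pathway's members per sample. This is a stand-in chosen for brevity and is not the PCA-based scoring used by PathIntegrate. The molecule names, pathway definitions, and abundances are hypothetical.

```python
from statistics import mean, pstdev


def zscores(values):
    """Standardize one molecule across samples; constant rows map to zeros."""
    mu, sd = mean(values), pstdev(values)
    return [(v - mu) / sd if sd > 0 else 0.0 for v in values]


def pathway_scores(abundance, pathways):
    """Return {pathway: [score per sample]} using the mean member z-score.

    abundance: {molecule: [abundance per sample]}
    pathways:  {pathway name: [member molecules]}
    Members that were not measured are ignored; pathways with no measured
    members are skipped.
    """
    z = {molecule: zscores(values) for molecule, values in abundance.items()}
    n_samples = len(next(iter(abundance.values())))
    scores = {}
    for name, members in pathways.items():
        present = [m for m in members if m in z]
        if not present:
            continue
        scores[name] = [mean(z[m][i] for m in present) for i in range(n_samples)]
    return scores


# Hypothetical abundances for three samples.
abundance = {
    "geneA": [1.0, 2.0, 3.0],
    "geneB": [2.0, 4.0, 6.0],
    "metC": [5.0, 5.0, 5.0],
}
pathways = {
    "pathway_1": ["geneA", "geneB"],
    "pathway_2": ["metC", "geneX"],  # geneX was not measured
}
scores = pathway_scores(abundance, pathways)
print(scores)
```

The resulting samples-by-pathways matrix has one column per pathway rather than per molecule, which is the source of the parsimony described above when pathways are fewer than molecules.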

Network-Based Integration

Network-based approaches construct molecular interaction networks that incorporate multiple omics layers, using prior knowledge of biological interactions. These networks can include protein-protein interactions, gene regulatory networks, and metabolic pathways, providing a framework for interpreting multi-omics data in the context of established biology.

Gene-metabolite networks exemplify this approach, visualizing interactions between genes and metabolites in a biological system. These networks are generated by collecting gene expression and metabolite abundance data from the same biological samples, then integrating them using correlation analysis or other statistical methods to identify co-regulated or co-expressed genes and metabolites [32]. Visualization software such as Cytoscape or igraph enables the construction of these networks, where genes and metabolites are represented as nodes connected by edges representing the strength and direction of their interactions [32].

Statistical Integration Approaches

Statistical integration methods identify quantitative relationships across omics datasets through correlation measures, co-expression patterns, and multivariate analyses. These approaches are particularly valuable for identifying coordinated changes across molecular layers and for dimension reduction.

Correlation-Based Methods

Correlation analysis is a fundamental statistical integration approach that quantifies the degree to which variables from different omics datasets are related. Simple scatterplots can visualize expression patterns and identify consistent or divergent trends between omics layers [33]. For example, transcript-to-protein ratios can be examined in scatterplot quadrants that represent discordant or concordant up- or down-regulation [33].

Pearson's or Spearman's correlation analysis and their multivariate generalizations, such as the RV coefficient, are employed to test correlations between whole sets of differentially expressed features across different biological contexts [33]. These analyses can determine the extent and nature of interaction between sets of differentially expressed proteins and metabolites, assess whether up-regulated proteins correlate with increased metabolites, identify molecular regulatory pathways of correlated genes and proteins, or assess transcript-protein correspondence [33].
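A minimal sketch of these correlation measures, together with the quadrant labels used in transcript-versus-protein scatterplots, is given below. It uses only the standard definitions of Pearson and Spearman correlation; the helper names, quadrant labels, and log fold-change values are hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


def quadrant(mrna_lfc, protein_lfc):
    """Label one gene by the direction of its transcript and protein change."""
    product = mrna_lfc * protein_lfc
    if product > 0:
        return "concordant up" if mrna_lfc > 0 else "concordant down"
    if product < 0:
        return "discordant"
    return "unchanged in at least one layer"


# Hypothetical log fold-changes for four genes.
mrna = [1.0, 2.0, 3.0, 4.0]
protein = [1.0, 4.0, 9.0, 16.0]
print(round(pearson(mrna, protein), 3), round(spearman(mrna, protein), 3))
print(quadrant(1.2, 0.8), quadrant(1.2, -0.5))
```

In this toy example the relationship is monotonic but not linear, so the Spearman coefficient reaches 1 while the Pearson coefficient stays slightly below it, which illustrates why both are commonly reported.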

Correlation Networks and Co-Expression Analysis

Correlation networks extend basic correlation analysis by transforming pairwise associations into graphical representations. In these networks, nodes represent biological entities, and edges are constructed based on correlation thresholds, facilitating visualization and analysis of complex relationships within and between datasets [33].

Weighted Gene Co-Expression Network Analysis (WGCNA) is a widely used method that identifies clusters (modules) of highly correlated, co-expressed genes [33] [32]. WGCNA constructs a scale-free network that assigns weights to gene interactions, emphasizing strong correlations while reducing the impact of weaker connections. These modules can be summarized by their eigengenes (representative expression profiles) and linked to clinically relevant traits or other omics data [33] [32]. For example, co-expression analysis can be performed on transcriptomics data to identify gene modules, which are then linked to metabolites from metabolomics data to identify metabolic pathways co-regulated with the identified gene modules [32].
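The weighting step can be illustrated with the soft-thresholding rule commonly used for unsigned co-expression networks, in which the adjacency between two genes is the absolute correlation raised to a power. The sketch below assumes pairwise correlations are already available; the gene names, correlation values, and the choice of power 6 are illustrative assumptions, and in practice the power is chosen from the data.

```python
def soft_threshold_adjacency(correlations, beta=6):
    """Map each pairwise correlation r to the adjacency |r| ** beta.

    correlations: {(gene_i, gene_j): correlation coefficient}
    beta:         soft-thresholding power (assumed value for this sketch)
    """
    return {pair: abs(r) ** beta for pair, r in correlations.items()}


# Hypothetical pairwise correlations between three genes.
correlations = {
    ("g1", "g2"): 0.9,
    ("g1", "g3"): -0.5,
    ("g2", "g3"): 0.2,
}
adjacency = soft_threshold_adjacency(correlations)
for pair, weight in adjacency.items():
    print(pair, round(weight, 6))
```

Raising to a power keeps the edge for the strongly correlated pair substantial while shrinking the weak pairs toward zero, without imposing a hard cutoff.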

xMWAS is an integrated tool that performs correlation and multivariate analyses for multi-omics integration. It performs pairwise association analysis using Partial Least Squares (PLS) components and regression coefficients, then employs these coefficients to generate integrative network graphs [33]. Communities of highly interconnected nodes can be identified using multilevel community detection methods that maximize modularity—a measure of how well the network is divided into modules with higher internal connectivity [33].

Figure 1: Workflow for Statistical Integration via Correlation Networks

Model-Based Integration Approaches

Model-based integration employs mathematical and computational models to synthesize multi-omics data, often with predictive capabilities. These approaches range from constraint-based biochemical models to sophisticated machine learning and deep learning architectures.

Constraint-Based Modeling

Constraint-based models use stoichiometric metabolic networks as a scaffold for integrating multi-omics data, particularly transcriptomics and metabolomics. INTEGRATE is an example pipeline that uses constraint-based stoichiometric metabolic models to characterize multi-level metabolic regulation [34]. It computes differential reaction expression from transcriptomics data and uses constraint-based modeling to predict if differential expression of metabolic enzymes directly causes differences in metabolic fluxes. Concurrently, it uses metabolomics to predict how differences in substrate availability translate into flux differences [34].

This approach helps discriminate fluxes regulated at different levels:

- Transcriptional control: Flux variations mainly determined by enzyme abundance changes

- Metabolic control: Flux variations mainly determined by substrate abundance changes

- Combined control: Flux variations determined by concerted changes in both substrates and enzymes [34]
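The three categories above can be expressed as a simple decision rule. The sketch below is an illustrative simplification and not the INTEGRATE algorithm: it assumes each reaction has a signed flux change plus signed changes predicted from enzyme abundance and from substrate abundance, and it attributes control to a layer when the signs agree. All names and values are hypothetical.

```python
def same_direction(a, b):
    """True when both changes are non-zero and have the same sign."""
    return a * b > 0


def classify_control(flux_change, enzyme_change, substrate_change):
    """Assign a reaction to a regulation category by sign agreement."""
    by_enzyme = same_direction(flux_change, enzyme_change)
    by_substrate = same_direction(flux_change, substrate_change)
    if by_enzyme and by_substrate:
        return "combined"
    if by_enzyme:
        return "transcriptional"
    if by_substrate:
        return "metabolic"
    return "unexplained"


# Hypothetical reactions: (flux change, enzyme change, substrate change).
reactions = {
    "R1": (1.0, 0.5, 0.0),
    "R2": (-2.0, 0.1, -0.3),
    "R3": (1.0, 1.0, 1.0),
}
labels = {name: classify_control(*changes) for name, changes in reactions.items()}
print(labels)
```

A fourth label is included for reactions whose flux change agrees with neither layer, since real data will contain such cases even though the text lists only three categories.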

Machine Learning and Deep Learning Approaches

Machine learning, particularly deep learning, has revolutionized model-based multi-omics integration by handling high-dimensional, heterogeneous data and capturing non-linear relationships.

Table 2: Deep Learning Approaches for Multi-Omics Integration

| Method Category | Key Examples | Integration Strategy | Key Features |

|---|---|---|---|

| Non-Generative Models | MOLI [30], MOGONET [35] | Late or intermediate integration | Modality-specific encoding, graph convolutional networks |

| Autoencoders | Variational Autoencoders (VAE) [20] [30] | Intermediate integration | Learn shared latent representation, dimensionality reduction |

| Generative Models | Generative Adversarial Networks (GAN) [30] | Intermediate integration | Handle missing data, generate synthetic samples |

| Multi-View Models | Multi-block PLS, PathIntegrate Multi-View [31] | Simultaneous integration | Model interactions between omics datasets |

Deep learning architectures can be further categorized by their integration strategy:

Feedforward Neural Networks (FNNs): Methods like MOLI use modality-specific encoding FNNs to learn features separately before concatenation and final prediction [30]. To address inter-modality interactions, superlayered neural networks (SNN) include separate FNN superlayers for each modality with cross-connections allowing information flow between modalities [30].

Graph Convolutional Networks (GCNs): Methods like MOGONET leverage biological relationships by constructing graphs for each omics data type and applying graph convolutional networks to learn features, which are then integrated for classification [35].

Autoencoders: These learn compressed representations of input data through encoder-decoder structures. Variational autoencoders and other autoencoder architectures can integrate multi-omics data by learning a shared latent representation that captures the essential biological signal across modalities [30].

Multi-View Models: Frameworks like PathIntegrate Multi-View use multi-block partial least squares regression (MB-PLS) to model interactions between pathway-transformed omics datasets [31].

Figure 2: Model-Based Multi-Omics Integration Approaches

Experimental Protocols for Multi-Omics Integration

Implementing robust multi-omics integration requires systematic experimental and computational workflows. Below, we detail two representative protocols for pathway-based and model-based integration.

Protocol 1: Pathway-Based Integration with PathIntegrate

Objective: To integrate multi-omics data at the pathway level for improved interpretability and signal detection in low signal-to-noise scenarios.

Materials:

- Multi-omics datasets (e.g., transcriptomics, proteomics, metabolomics)

- Pathway databases (e.g., KEGG, Reactome)

- PathIntegrate Python package

Methodology:

- Data Preprocessing: Normalize and scale each omics dataset separately to address technical variation.

- Pathway Transformation: Apply single-sample pathway analysis (ssPA) using principal component analysis (PCA) or kernel PCA to transform molecular-level data into pathway activity scores.

- Model Training:

  - For single-view integration: Concatenate pathway-transformed datasets and apply classification or regression models.

  - For multi-view integration: Use multi-block PLS to model interactions between pathway-transformed omics datasets.

- Model Interpretation: Extract important pathways ranked by contribution to prediction, assess contribution of each omics layer, and identify key molecules within significant pathways.

Validation: Use semi-synthetic data with inserted known signals to benchmark performance against molecular-level integration methods [31].

Protocol 2: Model-Based Integration with Constraint-Based Modeling

Objective: To characterize multi-level metabolic regulation by integrating transcriptomics and metabolomics data.

Materials:

- Transcriptomics data (RNA-seq or microarray)

- Metabolomics data (targeted or untargeted)

- Stoichiometric metabolic model (e.g., Recon)

- INTEGRATE computational pipeline

Methodology:

- Data Processing: Identify differentially expressed genes and differentially abundant metabolites between conditions.

- Flux Prediction from Transcriptomics: Use Gene-Protein-Reaction associations in the metabolic model to predict metabolic fluxes from transcriptomics data.

- Flux Prediction from Metabolomics: Use substrate abundance data to predict metabolic fluxes through constraint-based modeling.

- Integration and Regulation Assessment: Intersect flux predictions from both omics layers to classify each reaction as being under:

  - Transcriptional control if flux variation correlates with enzyme abundance changes

  - Metabolic control if flux variation correlates with substrate abundance changes

  - Combined control if both mechanisms are involved
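The Gene-Protein-Reaction step in the methodology above maps gene expression onto reactions. A common convention, assumed here and not confirmed as the one INTEGRATE uses, treats AND (enzyme complex subunits) as the minimum and OR (isoenzymes) as the sum of the operand values. The rule encoding as nested tuples, the gene names, and the expression values are hypothetical.

```python
def gpr_score(rule, expression):
    """Evaluate a Gene-Protein-Reaction rule against gene expression values.

    rule: either a gene name, or a tuple ("and" | "or", operand, operand, ...)
          whose operands are themselves rules.
    AND is scored as the minimum (the scarcest subunit limits a complex);
    OR is scored as the sum (isoenzymes contribute additively).
    """
    if isinstance(rule, str):
        return expression[rule]
    operator, *operands = rule
    values = [gpr_score(operand, expression) for operand in operands]
    if operator == "and":
        return min(values)
    if operator == "or":
        return sum(values)
    raise ValueError(f"unknown operator: {operator}")


# Hypothetical expression values and rule: (geneA AND geneB) OR geneC.
expression = {"geneA": 4.0, "geneB": 2.0, "geneC": 1.0}
rule = ("or", ("and", "geneA", "geneB"), "geneC")
score = gpr_score(rule, expression)
print(score)
```

Computing this score per condition and taking the ratio between conditions gives a reaction-level expression change that can be compared with metabolomics-derived predictions.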

Application: This approach has been demonstrated using immortalized normal and cancer breast cell lines to identify therapeutic targets [34].

Table 3: Key Research Reagent Solutions for Multi-Omics Integration

| Resource Category | Specific Tools/Databases | Function and Application |
|---|---|---|
| Data Repositories | TCGA, CPTAC, ICGC, CCLE, METABRIC [29] | Provide curated multi-omics datasets from various cancer types and cell lines for method development and validation |
| Pathway Resources | KEGG, Reactome, GO | Curated pathway knowledge for conceptual integration and pathway-based analysis |
| Statistical Tools | WGCNA, xMWAS [33] [32] | Perform correlation network analysis and identify co-expression modules across omics layers |
| Model-Based Platforms | INTEGRATE [34], PathIntegrate [31], MOFA+ [20] | Implement specific model-based integration approaches for disentangling regulatory mechanisms |
| Deep Learning Frameworks | MOLI [30], MOGONET [35], CustOmics [35] | Provide specialized deep learning architectures for multi-omics data integration and classification |
| Visualization Software | Cytoscape [32], igraph [32] | Enable network visualization and exploration of multi-omics relationships |

Successful multi-omics integration requires careful consideration of biological and technical factors. Biological complexity (including varying numbers of genes and proteins across organisms, wide dynamic ranges of molecular abundance, and differences in the lifetimes of mRNAs and proteins) must be accounted for in study design and interpretation [28]. Technical considerations include handling missing data, high dimensionality, batch effects, and platform-specific limitations [30] [33]. Furthermore, emerging evidence highlights the importance of considering microbiome influences on host gene and protein expression, as microbiota and their metabolites can affect the host epigenetic landscape and therapeutic responses [28].

As multi-omics technologies continue to advance, integration methods will increasingly need to handle spatial data, single-cell resolutions, and ever-larger datasets. The development of more interpretable deep learning models and standardized benchmarking frameworks will be crucial for translating multi-omics integration into clinical applications and personalized medicine.

Network and pathway-based integration represents a sophisticated computational approach for analyzing multi-omics datasets by mapping diverse molecular measurements onto shared biochemical networks. This methodology moves beyond simple gene lists to leverage the known topology and directional relationships within biological pathways, enabling more accurate interpretation of complex molecular data in health and disease. By considering the structural and functional relationships between genes, proteins, and metabolites, researchers can identify dysregulated pathways, discover novel therapeutic targets, and understand compensatory mechanisms in drug resistance. This technical guide explores the fundamental principles, methodologies, and applications of network-based integration approaches, providing researchers and drug development professionals with practical frameworks for implementing these advanced analytical techniques in multi-omics research.

Network and pathway-based integration has emerged as a powerful paradigm for analyzing multi-omics data by leveraging the inherent structure of biological systems. Unlike earlier enrichment methods that treated pathways as simple gene lists, modern network-based approaches incorporate the topological organization of pathways—including the directionality of interactions, regulatory relationships, and biochemical reaction flows—to provide more biologically meaningful interpretations of multi-omics datasets. This methodology recognizes that cellular functions emerge from complex networks of molecular interactions rather than from individual molecules acting in isolation.

The fundamental premise of network-based integration is that different omics layers—genomics, transcriptomics, proteomics, epigenomics, and metabolomics—provide complementary views of the same underlying biological processes. By mapping these diverse measurements onto unified pathway representations, researchers can identify consistent patterns across molecular layers that might be missed when analyzing each dataset separately. This approach has proven particularly valuable in cancer research, where pathway-level analyses have revealed convergent biological processes despite heterogeneous genetic alterations across patients. Network-based methods effectively address the "high-dimensionality" challenge in multi-omics studies, where the number of measured features vastly exceeds the number of samples, by leveraging prior biological knowledge to constrain possible interpretations [36].

Core Methodologies and Analytical Frameworks

Topology-Based Pathway Analysis

Topology-based methods incorporate the biological reality of pathways by considering the type, direction, and functional role of molecular interactions. These approaches have consistently outperformed non-topological methods in benchmarking studies by more accurately reflecting biological mechanisms [4]. The core mathematical framework for many topology-based methods involves calculating pathway perturbation by accounting for upstream and downstream effects within the network.

The Pathway-Express (PE) algorithm calculates a pathway score combining traditional enrichment statistics with perturbation factors propagated through the network topology [4]. For a pathway K, the PE-score is computed as:

\[ PE(K) = -\log\left(P_{hypergeometric}(K)\right) \times \frac{\sum_{g \in K} PF(g)}{N_{de}(K)} \]

Where \(P_{hypergeometric}\) is the hypergeometric p-value for enrichment of differentially expressed genes, \(PF(g)\) is the perturbation factor for gene g, and \(N_{de}(K)\) is the number of differentially expressed genes in pathway K. The perturbation factor for each gene is calculated as:

\[ PF(g) = \Delta E(g) + \sum_{u=1}^{n} \frac{\beta_{ug} \cdot PF(u)}{N_{ds}(u)} \]

Where \(\Delta E(g)\) represents the normalized expression change of gene g, \(\beta_{ug}\) is the interaction coefficient between genes u and g, and \(N_{ds}(u)\) is the number of downstream genes of u [4].
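To make the recursion concrete, the following minimal sketch evaluates the two formulas as written above on a toy pathway. The gene names, edge weights, expression changes, and the p-value are invented for illustration. The sketch also assumes that the pathway graph is acyclic (so simple recursion terminates), that the sum runs over genes directly upstream of g, and that the logarithm is natural.

```python
import math
from functools import lru_cache

# Hypothetical toy pathway: each key is (upstream gene u, downstream gene g),
# each value is beta_ug (+1 for activation, -1 for inhibition).
edges = {("A", "B"): 1.0, ("A", "C"): -1.0, ("B", "D"): 1.0}

# Hypothetical normalized expression changes, Delta E(g).
delta_e = {"A": 2.0, "B": 0.0, "C": 1.0, "D": 0.5}

# N_ds(u): number of genes directly downstream of u.
downstream_count = {}
for (u, _g) in edges:
    downstream_count[u] = downstream_count.get(u, 0) + 1


@lru_cache(maxsize=None)
def perturbation_factor(g: str) -> float:
    """PF(g) = Delta E(g) + sum over upstream u of beta_ug * PF(u) / N_ds(u)."""
    upstream_term = sum(
        beta * perturbation_factor(u) / downstream_count[u]
        for (u, target), beta in edges.items()
        if target == g
    )
    return delta_e[g] + upstream_term


def pe_score(p_hypergeometric: float, genes, n_de: int) -> float:
    """PE(K) as given in the text: -log(p) times the mean PF per DE gene."""
    total_pf = sum(perturbation_factor(g) for g in genes)
    return -math.log(p_hypergeometric) * total_pf / n_de


genes = ["A", "B", "C", "D"]
n_de = sum(1 for g in genes if delta_e[g] != 0.0)  # A, C and D are changed
score = pe_score(0.01, genes, n_de)
```

Gene B has no expression change of its own but still receives a perturbation of 1.0 from A, which is the behavior that distinguishes topology-aware scoring from a plain gene-list test. Pathways with feedback loops would need an iterative or linear-algebra solution instead of recursion.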

The Signaling Pathway Impact Analysis (SPIA) method extends this approach by combining the probability of observing a certain number of differentially expressed genes in a pathway (PNDE) with the probability of observing a certain amount of pathway perturbation (PPERT) calculated from the topology [4]. The combined evidence is computed as:

\[ c = P_{NDE} \times P_{PERT} \]

This product is then converted into a global p-value, \(P_G = c - c \ln c\), which assesses the overall significance of pathway perturbation [4].
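As a small worked example, the sketch below combines the two SPIA probabilities. It assumes the combination rule from the original SPIA formulation, in which the product c = P_NDE × P_PERT is converted to a global p-value as c − c·ln(c), the probability that the product of two independent uniform p-values is at most c. The function name is illustrative.

```python
import math


def spia_global_p(p_nde: float, p_pert: float) -> float:
    """Combine the over-representation and perturbation p-values.

    Assumes the two p-values are independent and uniformly distributed
    under the null hypothesis.
    """
    c = p_nde * p_pert
    if c <= 0.0:
        return 0.0  # limit of c - c*ln(c) as c approaches 0
    return c - c * math.log(c)


# Two moderately significant lines of evidence for the same pathway
p_global = spia_global_p(0.05, 0.05)
```

Because the global value is always larger than the raw product, a pathway cannot appear significant merely because two unremarkable p-values were multiplied together.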

Directional Integration Methods

Directional integration methods incorporate expected relationships between different omics layers based on biological principles or experimental design. The Directional P-value Merging (DPM) method enables researchers to define directional constraints when integrating multiple datasets, prioritizing genes with consistent directional changes across omics layers while penalizing those with inconsistent patterns [37].

The DPM method computes a directionally weighted score across k datasets as:

\[ X_{DPM} = -2\left(-\left|\sum_{i=1}^{j} \ln(P_i)\, o_i\, e_i\right| + \sum_{i=j+1}^{k} \ln(P_i)\right) \]

Where \(P_i\) represents the p-value from dataset i, \(o_i\) is the observed directional change (e.g., +1 for upregulation, -1 for downregulation), and \(e_i\) is the expected direction defined by the constraints vector [37]. Datasets 1 through j carry directional information, whereas datasets j+1 through k are non-directional and contribute only their p-values. This approach allows explicit testing of hypotheses based on biological principles, such as the expected inverse relationship between promoter methylation and gene expression, or the positive relationship between mRNA and protein expression implied by the central dogma.
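The score can be computed directly from the formula. The sketch below is an illustration rather than the published implementation: marking non-directional datasets with an expected direction of 0 is a convention chosen for this example, and the conversion to a p-value uses a chi-square distribution with 2k degrees of freedom, which assumes independent datasets (Fisher-style merging).

```python
import math


def dpm_score(p_values, observed, expected):
    """X_DPM for one gene across k datasets.

    expected[i] is +1 or -1 for directional datasets and 0 (a convention of
    this sketch) for datasets without directional information.
    """
    directional = sum(
        math.log(p) * o * e
        for p, o, e in zip(p_values, observed, expected)
        if e != 0
    )
    non_directional = sum(
        math.log(p) for p, e in zip(p_values, expected) if e == 0
    )
    return -2.0 * (-abs(directional) + non_directional)


def chi2_sf_even_df(x: float, k: int) -> float:
    """Upper tail of a chi-square distribution with 2k degrees of freedom."""
    half = x / 2.0
    return math.exp(-half) * sum(half ** i / math.factorial(i) for i in range(k))


# Constraints: mRNA expected +1, promoter methylation expected -1.
expected = [1, -1]
p_values = [0.01, 0.01]

# Gene 1: expression up, methylation down, so both agree with the constraints.
consistent = dpm_score(p_values, [1, -1], expected)
# Gene 2: expression up, methylation up, so the evidence conflicts and cancels.
conflicting = dpm_score(p_values, [1, 1], expected)

p_consistent = chi2_sf_even_df(consistent, k=2)
p_conflicting = chi2_sf_even_df(conflicting, k=2)
```

With identical input p-values, the consistent gene keeps its full Fisher-style score, while the conflicting gene is penalized down to a score of zero and a merged p-value of 1.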

Table 1: Comparison of Network-Based Integration Methods

| Method | Statistical Approach | Data Types Supported | Key Features | Applications |
|---|---|---|---|---|
| ActivePathways [38] | Brown's combined probability test + ranked hypergeometric test | Genomic mutations, expression, epigenetic data | Identifies pathways enriched across multiple datasets; highlights contributing evidence | Pan-cancer analysis of coding and non-coding drivers |
| SPIA [4] | Topology-based perturbation analysis | Gene expression, non-coding RNA, methylation | Incorporates pathway topology; calculates net pathway perturbation | Drug efficiency indexing; pathway activation assessment |
| DPM [37] | Directional P-value merging | Any with directional information (e.g., expression, methylation) | User-defined directional constraints; integrates directional and non-directional data | Biomarker discovery; pathway regulation in gliomas |
| PARADIGM [36] | Bayesian network inference | Multiple omics layers simultaneously | Integrates diverse evidence types; estimates pathway activity | Patient stratification; causal network identification |
| TIGERS [39] | Tensor imputation + trajectory analysis | Single-cell transcriptomics | Predicts missing drug responses; identifies pathway trajectories | Drug mechanism of action at single-cell level |

Tensor-Based Methods for Single-Cell Data

The TIGERS (Tensor-based Imputation of Gene-Expression Data at the Single-Cell Level) method addresses the challenge of analyzing drug-induced single-cell transcriptomic data with high missing value rates [39]. This approach represents data as a third-order tensor (drugs × genes × cells) and uses tensor-train decomposition to impute missing values while preserving biological structure.

The performance evaluation of TIGERS demonstrated significantly lower relative standard errors (RSE mean = 0.527 at 10% missing rate) compared to standard imputation methods like MAGIC and SAVER (RSE mean = 2.136) [39]. The method successfully preserved cell-type-specific expression patterns for marker genes such as insulin (beta cells) and glucagon (alpha cells) in pancreatic islets, enabling accurate pathway trajectory analysis across inferred cell states.

Experimental Protocols and Workflows

Protocol for Integrative Pathway Analysis of Multi-Omics Data

Step 1: Data Preprocessing and Quality Control

- Perform platform-specific normalization and quality control for each omics dataset

- Map all features to common gene identifiers using reference databases

- Generate statistical significance measures (P-values) and directional effects (e.g., fold-changes) for each gene in each dataset

- For mutation data, annotate functional impact and calculate gene-level burden scores

Step 2: Define Integration Strategy and Directional Constraints

- Select appropriate integration method based on data types and research question

- For directional methods like DPM, define constraints vector based on biological relationships (e.g., mRNA-protein: +1; methylation-expression: -1)

- Configure analysis parameters (significance thresholds, multiple testing correction)
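The protocol does not prescribe a particular multiple testing correction. As one common choice, the sketch below implements the Benjamini-Hochberg procedure for controlling the false discovery rate; the function name and the example p-values are illustrative.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical merged p-values for four pathways
raw = [0.01, 0.04, 0.03, 0.20]
fdr = benjamini_hochberg(raw)
significant = [i for i, q in enumerate(fdr) if q < 0.05]
```

In this example only the first pathway passes a 5% FDR threshold, even though three of the four raw p-values are below 0.05.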

Step 3: Perform Data Integration and Pathway Analysis

- Execute chosen integration method (e.g., ActivePathways, SPIA, DPM)

- Calculate combined significance scores across omics datasets

- Perform pathway enrichment analysis using comprehensive pathway databases

- Identify significantly enriched pathways with evidence from individual omics layers
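The enrichment step in its simplest form is a one-sided hypergeometric (over-representation) test. The sketch below shows that basic test only. Tools such as ActivePathways use a ranked variant, which is not reproduced here, and the function and argument names are illustrative.

```python
from math import comb


def hypergeom_enrichment_p(n_universe: int, n_pathway: int,
                           n_selected: int, n_overlap: int) -> float:
    """P(X >= n_overlap) for a pathway over-representation test.

    n_universe: number of genes in the background
    n_pathway:  background genes annotated to the pathway
    n_selected: genes called significant after integration
    n_overlap:  significant genes that belong to the pathway
    """
    upper = min(n_pathway, n_selected)
    total = comb(n_universe, n_selected)
    tail = sum(
        comb(n_pathway, x) * comb(n_universe - n_pathway, n_selected - x)
        for x in range(n_overlap, upper + 1)
    )
    return tail / total


# Toy numbers: 10 background genes, 3 in the pathway, 3 selected, all 3 overlap
p_enriched = hypergeom_enrichment_p(10, 3, 3, 3)
```

The resulting p-values, one per pathway, are what the multiple testing correction configured in Step 2 is applied to.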

Step 4: Result Interpretation and Validation

- Visualize enriched pathways as network maps highlighting multi-omics evidence

- Identify master regulator genes and key bottlenecks in dysregulated pathways

- Perform experimental validation of key findings using targeted assays

- Correlate pathway activities with clinical outcomes where available [38] [37]

Protocol for Resistance Pathway Mapping

Step 1: Generate Resistance Models

- Treat sensitive cell lines with increasing drug concentrations to develop resistance

- Use combinatorial approaches: ORF overexpression, CRISPR activation, or chemical libraries

- Select for resistant clones over 4-8 weeks with appropriate drug selection

Step 2: Multi-Omics Profiling of Resistant Models

- Perform whole-exome or whole-genome sequencing to identify acquired mutations

- Conduct RNA sequencing to identify transcriptional adaptations

- Perform proteomic profiling to assess protein expression and phosphorylation changes

- Analyze epigenetic modifications (methylation, chromatin accessibility)

Step 3: Pathway-Centric Data Integration

- Map alterations onto curated signaling pathways (MAPK, PI3K, apoptotic, etc.)

- Identify consistently altered pathways across multiple resistant models

- Distinguish driver from passenger alterations using functional impact scores

- Validate candidate resistance pathways using pharmacological or genetic inhibition [40]
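Identifying pathways that are altered consistently across resistant models reduces to counting recurrences once alterations have been mapped to pathways. The sketch below assumes that mapping is already done; the clone names, pathway labels, and the `min_models` threshold are hypothetical.

```python
from collections import Counter


def recurrent_pathways(altered_by_model, min_models=2):
    """Return pathways altered in at least `min_models` resistant models,
    ordered by how many models they recur in (ties broken alphabetically)."""
    counts = Counter(
        pathway
        for pathways in altered_by_model.values()
        for pathway in set(pathways)  # count each pathway once per model
    )
    hits = [p for p, n in counts.items() if n >= min_models]
    return sorted(hits, key=lambda p: (-counts[p], p))


# Hypothetical pathway-level alterations in three resistant clones
altered = {
    "clone_1": ["MAPK", "PI3K"],
    "clone_2": ["MAPK"],
    "clone_3": ["MAPK", "PI3K", "apoptosis"],
}
shared = recurrent_pathways(altered)
```

Pathways that recur in independently derived clones are stronger candidates for the validation experiments in the final step than alterations seen in a single model.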

Table 2: Research Reagent Solutions for Multi-Omics Pathway Studies

| Reagent/Resource | Type | Function | Example Sources |
|---|---|---|---|
| Quartet Reference Materials [15] | Reference standards | Multi-omics proficiency testing; batch effect correction | Chinese Quartet Project; National Reference Materials |
| Oncobox Pathway Databank [4] | Pathway database | 51,672 uniformly processed human pathways for activation analysis | OncoboxPD |
| Lentiviral ORF Libraries [40] | Functional screening | Gain-of-function resistance gene identification | Addgene, commercial vendors |
| CRISPR Activation Libraries [40] | Functional screening | Identification of resistance drivers via transcriptional activation | Commercial vendors |
| Tensor Decomposition Algorithms [39] | Computational tool | Missing data imputation for single-cell drug response data | TIGERS implementation |
| Pathway Annotations [38] [36] | Knowledge base | Gene set collections for enrichment analysis | GO, Reactome, KEGG, MSigDB |

Pathway Visualization and Data Representation

Effective visualization of integrated pathway networks requires careful consideration of color theory and accessibility principles. The following diagrams adhere to WCAG 2.1 contrast standards, using a restricted palette to ensure clarity while maintaining sufficient visual distinction between network elements [41].

[Figure: Network Integration Workflow]

[Figure: Resistance Pathway Mapping]
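Whether a palette meets the WCAG 2.1 contrast thresholds mentioned above can be checked programmatically. The sketch below implements the relative luminance and contrast ratio definitions from the WCAG specification; the example colors are arbitrary and are not the palette used in the diagrams.

```python
def relative_luminance(rgb):
    """WCAG relative luminance of an sRGB color given as 0-255 integers."""
    def linearize(channel):
        c = channel / 255.0
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio, from 1 (identical) to 21 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


# Hypothetical node label color against a white canvas
label_color = (32, 33, 36)
canvas = (255, 255, 255)
passes_aa_text = contrast_ratio(label_color, canvas) >= 4.5
```

WCAG 2.1 level AA asks for at least 4.5:1 for normal text and 3:1 for large text and for graphical objects such as network edges and node outlines, so both thresholds are relevant when styling pathway diagrams.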

Applications in Biomedical Research

Cancer Driver Discovery