Multi-Omics in Biomarker Discovery: Integrating Genomics, Proteomics, and AI for Precision Diagnosis

This article provides a comprehensive analysis of how multi-omics approaches are revolutionizing biomarker discovery and diagnostic applications. By integrating genomics, transcriptomics, proteomics, metabolomics, and epigenomics, researchers can now identify more robust biomarkers for cancer and complex diseases. The content explores foundational concepts, methodological workflows, computational integration strategies using machine learning, current challenges in data harmonization and clinical validation, and real-world case studies demonstrating successful translation into clinical practice. Targeted at researchers, scientists, and drug development professionals, this review synthesizes cutting-edge advancements while addressing practical implementation barriers and future directions for personalized medicine.


The Multi-Omics Revolution: From Single Layers to Integrated Biomarker Discovery

Multi-omics represents an integrative approach in biological sciences that combines data from various "omes"—including genomics, transcriptomics, proteomics, metabolomics, and epigenomics—to construct a comprehensive model of complex biological systems [1]. This paradigm shift from single-omics investigations enables researchers to capture the intricate interactions and regulatory mechanisms that underlie health and disease states. In the context of biomarker discovery and diagnostic research, multi-omics provides unprecedented opportunities to identify robust, clinically actionable biomarkers that can transform personalized medicine [2].

The fundamental principle of multi-omics lies in recognizing that biological entities are complex systems where information flows across multiple molecular layers. While genomic variations provide risk associations, their functional consequences are mediated through transcriptomic, proteomic, and metabolomic alterations [3]. By integrating these disparate data modalities, researchers can distinguish causal molecular events from incidental associations, thereby identifying biomarkers with higher predictive value and biological relevance [4]. This integrated approach is particularly valuable for addressing complex diseases like cancer, neurological disorders, and metabolic conditions, where pathogenesis involves dynamic interactions across multiple biological domains [1] [5].

Core Omics Technologies: Principles and Applications

Defining the Omics Layers

The multi-omics framework comprises distinct yet interconnected molecular layers, each providing unique insights into biological processes. The table below summarizes the core omics technologies, their molecular foci, and primary analytical platforms.

Table 1: Core Components of the Multi-Omics Landscape

| Omics Layer | Molecule Class Analyzed | Key Technologies | Primary Applications in Biomarker Discovery |
|---|---|---|---|
| Genomics | DNA sequence and variation | Next-generation sequencing (NGS), Whole Genome/Exome Sequencing (WGS/WES) [2] | Identification of hereditary disease risk, cancer driver mutations, pharmacogenomic variants [6] |
| Transcriptomics | RNA expression and regulation | RNA sequencing (RNA-seq), single-cell RNA-seq, microarrays | Gene expression signatures, alternative splicing events, non-coding RNA biomarkers [1] |
| Proteomics | Protein structure, function, and abundance | Mass spectrometry (LC-MS/MS), iTRAQ, antibody arrays [5] | Direct functional readout of cellular activity, post-translational modifications, signaling pathway activity [3] |
| Metabolomics | Small molecule metabolites | Mass spectrometry (MS), Nuclear Magnetic Resonance (NMR) | Dynamic physiological status, metabolic pathway disruptions, therapeutic response monitoring [1] [5] |
| Epigenomics | DNA and histone modifications | Bisulfite sequencing, ChIP-seq, ATAC-seq | Reversible regulatory mechanisms, gene-environment interactions, cellular memory markers [2] |

Integration Approaches for Biomarker Discovery

Multi-omics integration strategies can be categorized into horizontal, vertical, and diagonal approaches, each with distinct advantages for biomarker discovery. Horizontal integration combines the same type of omics data across multiple samples or cohorts to identify consensus patterns, enabling the discovery of population-level biomarkers with enhanced generalizability [1]. Vertical integration analyzes multiple omics layers from the same biological samples, which helps link events across molecular layers and prioritize candidate regulatory biomarkers that may drive pathological processes [7]. Diagonal integration employs advanced computational methods to combine both cross-omics and cross-sample data, creating network models that capture system-wide properties and identify biomarkers that would remain invisible in isolated analyses [8].
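The difference between horizontal and vertical integration is largely a difference in how the data tables are combined. The following is a minimal sketch, assuming each dataset is a mapping from sample ID to feature values; the sample IDs, feature names, values, and the helper names `horizontal_integrate` and `vertical_integrate` are all invented for illustration and do not come from any cited tool.

```python
# Toy data: keys are sample IDs, values map feature name -> measurement.
# Every ID, feature name, and number here is hypothetical.
cohort_a_rna = {"S1": {"TP53": 5.1, "KRAS": 2.3}, "S2": {"TP53": 4.8, "KRAS": 2.9}}
cohort_b_rna = {"S3": {"TP53": 6.0, "KRAS": 1.7}}
cohort_a_protein = {"S1": {"TP53_prot": 0.8}, "S2": {"TP53_prot": 1.1}}


def horizontal_integrate(*cohorts):
    """Stack samples from several cohorts that share one omics layer."""
    merged = {}
    for cohort in cohorts:
        merged.update(cohort)  # assumes sample IDs are unique across cohorts
    return merged


def vertical_integrate(*layers):
    """Join several omics layers on the sample IDs present in every layer."""
    shared = set(layers[0])
    for layer in layers[1:]:
        shared &= set(layer)
    joined = {}
    for sample in sorted(shared):
        row = {}
        for layer in layers:
            row.update(layer[sample])  # assumes feature names do not collide
        joined[sample] = row
    return joined


# Horizontal: more samples, same features.
rna_all = horizontal_integrate(cohort_a_rna, cohort_b_rna)
# Vertical: same samples, more features.
matched = vertical_integrate(cohort_a_rna, cohort_a_protein)
```

Diagonal integration has no comparably simple join, because neither samples nor features are fully shared, which is why it depends on the more advanced computational methods mentioned above.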

The integration of these omics technologies has demonstrated particular value in oncology, where biomarkers derived from multiple molecular layers can guide diagnosis, prognosis, and treatment selection. For example, in precision oncology, multi-omics approaches have yielded biomarker panels that integrate genomic alterations, transcriptomic signatures, and proteomic profiles to predict therapeutic responses and resistance mechanisms [1]. Similarly, in metabolic diseases like prediabetes, multi-omics biomarkers combining genetic predisposition, epigenetic modifications, and metabolic profiles offer enhanced predictive power for disease progression compared to traditional clinical parameters alone [5].

Experimental Methodologies in Multi-Omics Research

Sample Processing and Data Generation

Robust multi-omics biomarker discovery begins with appropriate sample collection, processing, and data generation protocols. The following workflow outlines a standardized pipeline for multi-omics sample processing:

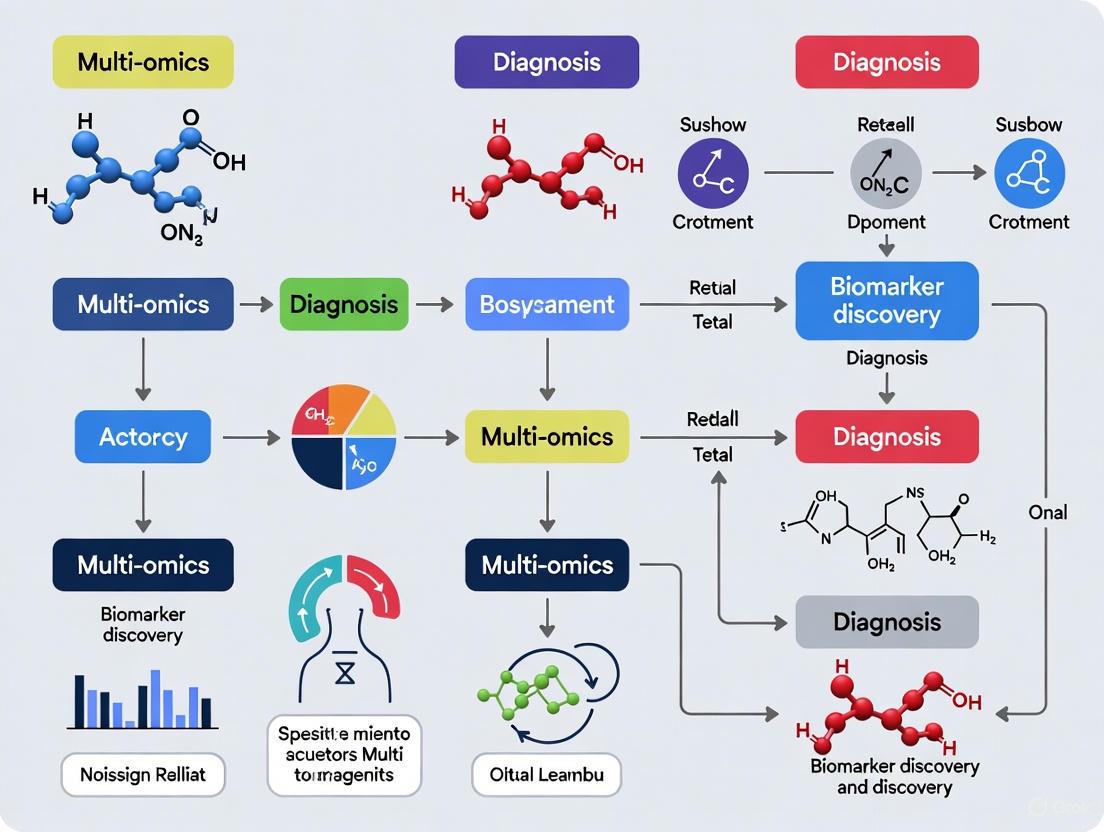

Figure 1: Multi-omics sample processing workflow from collection to raw data generation.

For nucleic acid extraction, quality control metrics are critical. DNA samples for genomics and epigenomics should have A260/A280 ratios between 1.8 and 2.0 and, for WGS, a minimum concentration of 10 ng/μL. RNA samples for transcriptomics require RNA Integrity Number (RIN) values >8.0 for bulk sequencing and >9.0 for single-cell applications [6]. Protein extraction for proteomics typically uses lysis buffers compatible with downstream LC-MS/MS analysis, with quantification via BCA or Bradford assays [5]. Metabolite extraction employs methanol-acetonitrile-water mixtures to preserve labile metabolites, with immediate processing at 4°C to prevent degradation.
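The nucleic acid thresholds quoted above can be encoded as a simple pre-sequencing gate. This is a sketch only: the function name, the sample-type labels, and the decision to fail samples with missing measurements are assumptions made for illustration, and a real laboratory would apply its own validated acceptance criteria.

```python
def passes_nucleic_acid_qc(sample_type, a260_a280=None, conc_ng_per_ul=None, rin=None):
    """Check a sample against the thresholds quoted in the text.

    sample_type is one of the hypothetical labels
    "dna_wgs", "rna_bulk", or "rna_single_cell".
    Returns (ok, reasons), where reasons lists every failed criterion.
    """
    reasons = []
    if sample_type == "dna_wgs":
        # Purity: A260/A280 between 1.8 and 2.0 (inclusive in this sketch).
        if a260_a280 is None or not (1.8 <= a260_a280 <= 2.0):
            reasons.append("A260/A280 outside 1.8-2.0")
        # Quantity: at least 10 ng/uL for WGS.
        if conc_ng_per_ul is None or conc_ng_per_ul < 10:
            reasons.append("concentration below 10 ng/uL")
    elif sample_type in ("rna_bulk", "rna_single_cell"):
        # Integrity: RIN must exceed 8.0 (bulk) or 9.0 (single-cell).
        min_rin = 8.0 if sample_type == "rna_bulk" else 9.0
        if rin is None or rin <= min_rin:
            reasons.append(f"RIN not above {min_rin}")
    else:
        raise ValueError(f"unknown sample type: {sample_type}")
    return (not reasons, reasons)
```

For example, an RNA sample with RIN 8.5 would pass for bulk sequencing but fail for single-cell work under these thresholds.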

Analytical Techniques by Omics Layer

Each omics domain employs specialized analytical techniques optimized for its specific molecular class:

Genomics and Epigenomics: NGS platforms (Illumina, PacBio, Oxford Nanopore) enable comprehensive variant detection, with WGS identifying approximately 4-5 million variants per individual [2]. Target enrichment approaches (hybridization capture or amplicon-based) focus on specific gene panels with reduced sequencing costs. For epigenomics, bisulfite conversion-based methods distinguish methylated from unmethylated cytosine residues, while ATAC-seq identifies open chromatin regions using hyperactive Tn5 transposase [6].

Transcriptomics: Bulk RNA-seq provides average gene expression across cell populations, while single-cell RNA-seq (10x Genomics, Smart-seq2) resolves cellular heterogeneity, identifying rare cell populations that may serve as biomarker sources [9]. Spatial transcriptomics technologies (10x Visium, Nanostring GeoMx) preserve tissue architecture context, correlating molecular profiles with histological features [1].

Proteomics: Liquid chromatography-tandem mass spectrometry (LC-MS/MS) enables high-throughput protein identification and quantification, with isobaric labeling (TMT, iTRAQ) allowing multiplexed analysis of 8-16 samples simultaneously [5]. Novel affinity-based proteomics platforms (Olink, SomaScan) expand proteome coverage to low-abundance proteins, potentially discovering biomarkers previously undetectable by MS.

Metabolomics: Both untargeted and targeted MS approaches are employed, with untargeted methods capturing thousands of metabolic features for hypothesis generation, and targeted MRM (Multiple Reaction Monitoring) assays providing precise quantification of predefined metabolite panels for validation [5].

Computational Integration and Analysis

Data Processing and Normalization

The computational workflow for multi-omics integration begins with quality control and normalization of individual omics datasets. Genomics data processing includes alignment to a reference genome (GRCh38), variant calling (GATK), and annotation (ANNOVAR, VEP). Transcriptomics data processing involves alignment (STAR, HISAT2), quantification (featureCounts, Salmon), and normalization (for example, TPM or the median-of-ratios method implemented in DESeq2). Proteomics data processing encompasses spectrum identification (MaxQuant, Spectronaut), imputation of missing values, and batch effect correction. Metabolomics data processing includes peak detection, compound identification, and normalization using quality control samples [10].
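Of the normalization steps named above, TPM is simple enough to show in full. The sketch below follows the standard definition (length-normalize, then rescale each sample to sum to one million); the function name, gene names, counts, and lengths are illustrative assumptions, and production pipelines would normally take TPM directly from a quantifier such as Salmon.

```python
def tpm(counts, lengths_bp):
    """Convert raw read counts for one sample to transcripts per million.

    counts:     gene -> read count
    lengths_bp: gene -> effective transcript length in base pairs
    """
    # Step 1: length-normalize to reads per kilobase (RPK).
    rpk = {gene: counts[gene] / (lengths_bp[gene] / 1000) for gene in counts}
    # Step 2: rescale so that all values in the sample sum to one million.
    scale = sum(rpk.values()) / 1_000_000
    return {gene: value / scale for gene, value in rpk.items()}


# geneB has three times the reads but is three times as long,
# so both genes end up with the same TPM.
example = tpm({"geneA": 100, "geneB": 300}, {"geneA": 1000, "geneB": 3000})
```

Because every sample is rescaled to the same total, TPM values are comparable within a sample but remain compositional, which is one reason count-based methods are usually preferred for differential expression testing.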

Integration Architectures and Machine Learning Approaches

Multi-omics data integration employs sophisticated computational architectures to extract biologically meaningful patterns. The following diagram illustrates a deep learning framework for multi-omics integration:

Figure 2: Deep learning framework for multi-omics data integration and biomarker discovery.

Machine learning approaches for multi-omics integration include early fusion (concatenating features from multiple omics before model training), intermediate fusion (learning joint representations using autoencoders or graph neural networks), and late fusion (training separate models for each omics type and combining predictions) [10]. Deep learning tools like Flexynesis provide flexible architectures for multi-omics integration, supporting various modeling tasks including classification (disease subtyping), regression (drug response prediction), and survival analysis [10].
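The contrast between early and late fusion can be made concrete with a deliberately tiny classifier. This sketch is not how Flexynesis or any cited tool works: the `NearestCentroid` class, the toy measurements, and the equal-weight averaging in late fusion are all assumptions chosen to keep the example dependency-free. Intermediate fusion is omitted because it needs a learned joint representation.

```python
def centroid(rows):
    """Column-wise mean of a list of feature vectors."""
    return [sum(col) / len(col) for col in zip(*rows)]


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


class NearestCentroid:
    """Tiny two-class model; score() above 0.5 favors class 1.

    The score is a distance ratio, not a calibrated probability.
    """

    def fit(self, X, y):
        self.c0 = centroid([x for x, label in zip(X, y) if label == 0])
        self.c1 = centroid([x for x, label in zip(X, y) if label == 1])
        return self

    def score(self, x):
        d0, d1 = sq_dist(x, self.c0), sq_dist(x, self.c1)
        return d0 / (d0 + d1) if d0 + d1 else 0.5


# Hypothetical matched measurements for four samples (two per class).
rna = [[1.0, 0.9], [1.2, 1.1], [3.0, 3.2], [2.8, 3.1]]
prot = [[0.2], [0.3], [0.9], [1.0]]
labels = [0, 0, 1, 1]
new_rna, new_prot = [2.9, 3.0], [0.95]

# Early fusion: concatenate features, then train a single model.
early_model = NearestCentroid().fit([r + p for r, p in zip(rna, prot)], labels)
early_score = early_model.score(new_rna + new_prot)

# Late fusion: train one model per layer, then average their scores.
rna_model = NearestCentroid().fit(rna, labels)
prot_model = NearestCentroid().fit(prot, labels)
late_score = (rna_model.score(new_rna) + prot_model.score(new_prot)) / 2
```

One practical consequence of the design: early fusion lets large-scale features dominate the distance unless layers are rescaled first, whereas late fusion keeps each layer's model independent and tolerates a missing layer more gracefully.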

For biomarker discovery, feature selection methods are critical to identify the most informative molecular signatures from high-dimensional omics data. Regularization techniques (LASSO, elastic net), tree-based methods (Random Forest, XGBoost), and neural network attention mechanisms can prioritize biomarkers with the highest predictive power for clinical outcomes [4].
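To illustrate why LASSO acts as a feature selector, the sketch below implements coordinate descent with soft-thresholding in plain Python. It assumes features are already centered and that no intercept is needed; the feature names and the four-sample design matrix are invented, and a real analysis would use a tested library implementation with cross-validated penalty selection.

```python
def soft_threshold(value, penalty):
    """Shrink value toward zero by penalty; values inside the band become 0."""
    if value > penalty:
        return value - penalty
    if value < -penalty:
        return value + penalty
    return 0.0


def lasso_coordinate_descent(X, y, penalty, n_iter=100):
    """Minimize (1/2n)*||y - Xb||^2 + penalty*||b||_1 (no intercept)."""
    n, p = len(X), len(X[0])
    coef = [0.0] * p
    for _ in range(n_iter):
        for j in range(p):
            # Correlation of feature j with the residual that ignores feature j.
            rho = 0.0
            for i in range(n):
                pred_without_j = sum(X[i][k] * coef[k] for k in range(p) if k != j)
                rho += X[i][j] * (y[i] - pred_without_j)
            rho /= n
            z = sum(X[i][j] ** 2 for i in range(n)) / n
            coef[j] = soft_threshold(rho, penalty) / z
    return coef


# Hypothetical centered features; the outcome depends strongly on the first
# feature, weakly on the second, and not at all on the third.
feature_names = ["geneA_expr", "cpg1_meth", "metabX_level"]
X = [[1, 1, 1], [1, -1, -1], [-1, 1, -1], [-1, -1, 1]]
y = [2.1, 1.9, -1.9, -2.1]  # equals 2.0*feature0 + 0.1*feature1

coef = lasso_coordinate_descent(X, y, penalty=0.5)
selected = [name for name, c in zip(feature_names, coef) if c != 0.0]
```

In this toy design the weak and the irrelevant features are set exactly to zero while the strong feature is kept but shrunk, which is the behavior that makes L1 penalties useful for pruning high-dimensional omics panels.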

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful multi-omics biomarker discovery requires carefully selected reagents, platforms, and computational tools. The following table catalogs essential components of the multi-omics research toolkit.

Table 2: Essential Research Reagents and Platforms for Multi-Omics Studies

| Category | Specific Tools/Reagents | Function and Application |
|---|---|---|
| Sequencing Reagents | Illumina Nextera XT, PacBio SMRTbell, 10x Genomics Single Cell Kits | Library preparation for genomic, transcriptomic, and epigenomic profiling across various sequencing platforms [6] |
| Mass Spectrometry Reagents | iTRAQ/TMT labeling kits, Trypsin/Lys-C proteases, SCIEX Selex kits | Protein digestion, labeling, and metabolite detection for proteomic and metabolomic analyses [5] |
| Single-Cell Analysis Platforms | 10x Genomics Chromium, BD Rhapsody, Parse Biosciences | Partitioning cells for single-cell multi-omics profiling, enabling resolution of cellular heterogeneity in biomarker identification [9] |
| Spatial Omics Technologies | 10x Visium, Nanostring GeoMx, Akoya CODEX | Molecular profiling within tissue architectural context, correlating biomarker location with pathological features [1] |
| Computational Tools | Flexynesis [10], MOFA+ [7], Galaxy Server [10] | Integration of multi-omics datasets, statistical analysis, and visualization for biomarker discovery and validation |
| Reference Databases | gnomAD [2], TCGA [10], ClinVar [2] | Population frequency data, disease associations, and clinical interpretations for variant and biomarker prioritization |

Applications in Diagnostic Biomarker Discovery

Case Study: Prediabetes Biomarker Identification

Multi-omics approaches have demonstrated particular utility in identifying biomarkers for complex metabolic disorders like prediabetes, where traditional diagnostic parameters (HbA1c, fasting glucose) have limitations in sensitivity and specificity [5]. Integrated multi-omics studies have revealed that the progression from normoglycemia to prediabetes involves coordinated alterations across multiple molecular layers, including genetic predisposition (TCF7L2, PPARG variants), epigenetic modifications (DNA methylation of insulin signaling genes), proteomic changes (altered adipokine profiles), and metabolic disturbances (elevated branched-chain amino acids, phospholipid alterations) [5].

The integration of these multi-omics biomarkers has improved prediction of prediabetes progression compared to clinical parameters alone. For example, a combined model incorporating genetic variants, DNA methylation markers, and plasma metabolites achieved an AUC of 0.89 for predicting conversion to type 2 diabetes within 5 years, significantly outperforming models based solely on clinical parameters (AUC=0.72) [5]. These findings highlight the clinical potential of multi-omics biomarkers for early intervention in at-risk populations.
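The AUC values compared above have a direct interpretation: the probability that a randomly chosen progressor receives a higher risk score than a randomly chosen non-progressor. The sketch below computes AUC from that definition. The labels and scores are invented toy values and are not intended to reproduce the 0.89 and 0.72 figures reported in [5].

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (case, control) pairs ranked correctly.

    labels: 1 for cases (e.g. progressed to type 2 diabetes), 0 for controls
    scores: model risk scores; ties between a case and a control count half
    """
    cases = [s for s, y in zip(scores, labels) if y == 1]
    controls = [s for s, y in zip(scores, labels) if y == 0]
    if not cases or not controls:
        raise ValueError("need at least one case and one control")
    wins = 0.0
    for case_score in cases:
        for control_score in controls:
            if case_score > control_score:
                wins += 1.0
            elif case_score == control_score:
                wins += 0.5
    return wins / (len(cases) * len(controls))


# Hypothetical risk scores for six individuals from two competing models.
labels = [1, 1, 1, 0, 0, 0]
clinical_scores = [0.60, 0.40, 0.70, 0.50, 0.30, 0.65]
multiomics_scores = [0.80, 0.55, 0.90, 0.50, 0.20, 0.60]

auc_clinical = roc_auc(labels, clinical_scores)      # 6 of 9 pairs correct
auc_multiomics = roc_auc(labels, multiomics_scores)  # 8 of 9 pairs correct
```

The pairwise loop is quadratic in sample size, which is fine for illustration; rank-based formulas give the same value more efficiently on large cohorts.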

Case Study: Oncology Biomarker Panels

In precision oncology, multi-omics approaches have generated biomarker panels that inform diagnosis, prognosis, and treatment selection. For instance, in colorectal cancer, integrated analysis of genomic (APC, KRAS, TP53 mutations), transcriptomic (consensus molecular subtype classification), and immunoproteomic (PD-L1, immune cell signatures) biomarkers can stratify patients for targeted therapies, immunotherapies, and conventional chemotherapy [1]. Multi-omics profiling also enables monitoring of minimal residual disease and early detection of resistance mechanisms through liquid biopsy approaches that simultaneously analyze circulating tumor DNA, RNA, proteins, and metabolites [6].

Tools like Flexynesis have demonstrated capability in predicting cancer drug response by integrating multi-omics data from cell lines and patient samples. For example, models trained on CCLE (Cancer Cell Line Encyclopedia) multi-omics data successfully predicted sensitivity to targeted therapies (Lapatinib, Selumetinib) in independent datasets, with correlations of r=0.72-0.85 between predicted and observed drug response values [10]. Similarly, multi-omics classifiers combining gene expression and methylation profiles accurately identified microsatellite instability (MSI) status in gastrointestinal and gynecological cancers (AUC=0.981), a biomarker with implications for immunotherapy selection [10].
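The r values quoted for drug response prediction are correlation coefficients between predicted and observed responses; the sketch below assumes they are Pearson correlations, which the text does not state explicitly. The function name and the example vectors are illustrative only.

```python
import math


def pearson_r(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical observed vs. predicted drug response values for five cell lines.
observed = [0.2, 0.5, 0.9, 1.4, 1.8]
predicted = [0.3, 0.4, 1.1, 1.2, 1.9]
r = pearson_r(observed, predicted)
```

A high r shows that predictions track the ranking and spread of observed responses, but it does not by itself show that predicted values are well calibrated in absolute terms.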

Future Directions and Challenges

The multi-omics field continues to evolve rapidly, with several emerging trends shaping its application in biomarker discovery. Single-cell multi-omics technologies are advancing to provide higher-resolution views of cellular heterogeneity in health and disease, enabling identification of rare cell populations that may serve as biomarker sources or therapeutic targets [9]. Spatial multi-omics methods are maturing to correlate molecular profiles with tissue morphology and cellular neighborhood contexts, adding critical spatial dimensions to biomarker discovery [1]. Artificial intelligence approaches, particularly deep learning and large language models, are being increasingly applied to integrate multi-omics data, extract biologically meaningful patterns, and generate actionable biomarkers [4] [7].

Despite these advances, significant challenges remain in multi-omics biomarker discovery. Technical challenges include data heterogeneity, with different omics layers exhibiting varying scales, resolutions, and noise characteristics that complicate integration [3]. Analytical challenges encompass the high dimensionality of multi-omics data, requiring sophisticated statistical methods to avoid overfitting and ensure biomarker robustness [10]. Clinical translation challenges involve the need for large-scale validation across diverse populations, standardization of analytical protocols, and demonstration of clinical utility for regulatory approval [2] [8].

Addressing these challenges will require coordinated efforts across academia, industry, and regulatory bodies to establish standards, share resources, and prioritize biomarkers with the greatest potential impact on patient care. As these efforts progress, multi-omics approaches are poised to fundamentally transform biomarker discovery and precision medicine, enabling earlier disease detection, more accurate prognosis, and personalized therapeutic interventions tailored to individual molecular profiles.

The Limitations of Single-Omics Approaches in Capturing Disease Complexity

Modern biomedical research relies heavily on high-throughput technologies to unravel disease mechanisms. While single-omics approaches have revolutionized our understanding of biology, they provide inherently limited insights into complex disease pathologies. This technical review examines the fundamental constraints of genomics, transcriptomics, proteomics, and metabolomics when employed in isolation, highlighting how their individual limitations necessitate integrated multi-omics strategies for comprehensive biomarker discovery and accurate disease characterization. Through critical analysis of experimental evidence and methodological constraints, we demonstrate how the averaging effect in bulk analyses, inability to establish molecular causality, and missing critical regulatory layers fundamentally restrict the clinical utility of single-omics approaches in precision medicine.

The advent of high-throughput technologies has transformed biomedical research, enabling unprecedented molecular profiling across biological scales. Single-omics approaches—including genomics, transcriptomics, proteomics, and metabolomics—have each contributed valuable insights into disease mechanisms and potential diagnostic biomarkers [11]. However, these methodologies suffer from intrinsic limitations when deployed independently, ultimately providing fragmented perspectives that fail to capture the dynamic, multi-layered complexity of disease pathogenesis [12].

The fundamental challenge stems from biological reality: diseases emerge from intricate, nonlinear interactions across molecular, cellular, and tissue levels. Single-omics approaches, by their reductionist nature, capture only one dimension of this complexity, potentially leading to incomplete or misleading conclusions [13]. This limitation becomes particularly problematic in biomarker discovery, where candidate markers identified through single-omics platforms frequently fail clinical validation due to insufficient specificity or inability to account for post-transcriptional and post-translational regulation [4].

This review systematically analyzes the technical and biological constraints of single-omics methodologies, supported by experimental evidence and case studies. By examining these limitations within the context of biomarker discovery and diagnostic research, we build a compelling case for integrated multi-omics frameworks as essential for advancing precision medicine.

Methodological Limitations of Individual Omics Approaches

Genomics: The Blueprint Without Context

Genomics, focusing on DNA sequence and structure variations, provides the foundational blueprint of biological systems but reveals little about dynamic functional states. While identifying mutations like BRCA1/2 has proven clinically valuable for cancer risk assessment, genomic data alone cannot predict how genetic variations manifest phenotypically due to extensive regulatory mechanisms operating at other molecular levels [12].

Key Limitations:

- Static Snapshot: DNA sequence information remains largely constant across cell types and physiological states, unable to capture dynamic responses to environmental stimuli or disease progression [11].

- Limited Predictive Power: The majority of disease-associated genetic variants identified through genome-wide association studies (GWAS) explain only a small fraction of disease heritability, highlighting the "missing heritability" problem [11].

- Epigenetic Blindness: Conventional genomics fails to account for regulatory modifications such as DNA methylation and histone modifications that profoundly influence gene expression without altering sequence information [11].

Table 1: Limitations of Genomic Approaches in Disease Research

| Limitation | Technical Basis | Clinical Impact |
|---|---|---|
| Static information content | DNA sequence changes slowly relative to disease processes | Limited ability to monitor disease progression or treatment response |
| Poor phenotype prediction | Complex gene-environment interactions unmeasured | Incomplete risk assessment despite identified variants |
| Epigenetic regulation not captured | Standard sequencing does not detect functional chromatin states | Critical regulatory mechanisms missed in disease association |

Transcriptomics: The Messenger Without the Message

Transcriptomic profiling, particularly through RNA sequencing, reveals gene expression patterns but suffers from critical limitations in predicting functional protein outcomes. While methodologies like single-cell RNA sequencing (scRNA-seq) have resolved cellular heterogeneity to some extent, bulk transcriptomics averages expression across cell populations, potentially masking biologically significant rare cell states [13].

Experimental Evidence of Discordance: The Clinical Proteomic Tumor Analysis Consortium (CPTAC) demonstrated that proteomic data could identify functional cancer subtypes and druggable vulnerabilities missed by genomics alone [11]. In ovarian and breast cancers, proteomic profiles revealed critical disease mechanisms not apparent from transcriptomic data, highlighting the poor correlation between mRNA and protein abundance due to post-transcriptional regulation and differential protein degradation [11].

Technical Constraints:

- Post-Transcriptional Blindness: RNA expression levels frequently correlate poorly with protein abundance due to translational regulation, miRNA activity, and varying protein half-lives [11] [12].

- Bulk Averaging Artifacts: Conventional RNA-seq masks cellular heterogeneity, potentially obscuring rare but clinically relevant cell populations, such as drug-resistant clones in cancer [13].

- Functional Ambiguity: Transcript levels provide limited information about actual protein function, which depends on post-translational modifications, localization, and complex interaction networks [11].

Proteomics: The Effectors Without Regulation

Proteomics directly characterizes the primary effector molecules of biological processes yet fails to capture the regulatory programs directing their expression. While proteins ultimately execute cellular functions and represent most drug targets, understanding their dysregulation requires integration with upstream omics layers [11].

Critical Limitations:

- Regulatory Context Missing: Protein abundance measurements alone cannot distinguish whether alterations stem from genetic, transcriptional, or post-transcriptional mechanisms [12].

- Dynamic Range Challenges: Protein concentrations in biological systems span an enormous range (up to 12 orders of magnitude), exceeding the dynamic range that current mass spectrometry platforms can cover in a single run, so low-abundance regulators may be missed [11].

- Modification Complexity: A single gene can produce multiple proteoforms with distinct functions through alternative splicing and post-translational modifications, creating analytical challenges for comprehensive profiling [11].

Table 2: Comparative Limitations of Major Single-Omics Approaches

| Omics Layer | Primary Limitation | Key Uncaptured Biology | Clinical Impact Example |
|---|---|---|---|
| Genomics | Static blueprint | Dynamic regulatory responses | Inability to monitor treatment response |
| Transcriptomics | Poor protein correlation | Post-translational regulation | mRNA signatures failing to predict drug efficacy |
| Proteomics | Missing upstream regulation | Genetic and epigenetic drivers | Incomplete understanding of resistance mechanisms |
| Metabolomics | Downstream snapshot | Causal molecular pathways | Late-stage detection limits intervention timing |

Metabolomics: The Endpoints Without Causality

Metabolomics provides the most proximal readout of phenotype by profiling small molecules but represents the downstream convergence of multiple regulatory layers. While metabolomic signatures can offer sensitive disease detection, they often lack mechanistic insights needed for targeted therapeutic development [11].

Inherent Constraints:

- Distance from Causal Events: Metabolic changes represent endpoints far removed from primary pathological triggers, making causal inference challenging [12].

- Environmental Confounding: Metabolite levels are profoundly influenced by diet, microbiota, and other environmental factors, complicating discrimination between primary disease effects and secondary consequences [11].

- Regulatory Blindness: Metabolic profiles cannot reveal the genetic, epigenetic, or transcriptional alterations responsible for observed changes [11].

The Cellular Heterogeneity Challenge: Beyond Bulk Analyses

Conventional bulk omics approaches fundamentally mask biological complexity by averaging measurements across thousands to millions of cells. This limitation becomes critically important in diseases characterized by cellular heterogeneity, such as cancer, where rare subpopulations drive therapy resistance and disease progression [13].

The Averaging Problem: Mathematical Limitations

Bulk sequencing methods generate population-averaged signals that mathematically dilute minority cell populations. For example, if a transcriptionally distinct subpopulation makes up 5% of cells, a gene must be roughly 20-fold higher in those cells to move the bulk RNA-seq signal about two-fold (0.95 × 1 + 0.05 × 20 = 1.95), a difference larger than many functionally important genes ever show [13].
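The dilution arithmetic behind the 5% example can be written out explicitly. This is a simplified sketch that assumes every cell contributes equally to the bulk signal and that baseline expression is identical in all unaffected cells; the function names are illustrative.

```python
def bulk_fold_change(subpop_fraction, subpop_fold_change):
    """Fold change observed in bulk when only a subpopulation changes.

    Baseline expression is taken as 1.0 per cell, so the bulk signal is the
    weighted average of unchanged cells and the altered subpopulation.
    """
    return (1 - subpop_fraction) * 1.0 + subpop_fraction * subpop_fold_change


def required_subpop_fold_change(subpop_fraction, target_bulk_fold_change):
    """Subpopulation fold change needed to reach a target bulk fold change."""
    return (target_bulk_fold_change - (1 - subpop_fraction)) / subpop_fraction


# A 20-fold change in 5% of cells appears as just under 2-fold in bulk.
bulk_signal = bulk_fold_change(0.05, 20)
# Reaching exactly 2-fold in bulk would need a 21-fold change in those cells.
needed = required_subpop_fold_change(0.05, 2.0)
```

The same formula shows how quickly the problem worsens for rarer populations: at a 1% fraction, a two-fold bulk change would require roughly a 101-fold change within the subpopulation.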

Rare Cell Blindness: Clinical Consequences

In oncology, rare drug-resistant clones present at frequencies as low as 0.1% can ultimately cause disease relapse but remain undetectable by bulk genomic or transcriptomic approaches [13]. This limitation has direct clinical implications, as conventional sequencing may fail to identify emerging resistance mechanisms until they become dominant populations.
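A simple sampling model shows why a clone at 0.1% is hard to see at typical sequencing depths. The sketch below treats each read as an independent draw and ignores sequencing error; it also assumes the variant allele fraction equals the clone frequency (for a heterozygous variant in a diploid region it would be about half that). The function name and the three-read calling threshold are hypothetical.

```python
from math import comb


def prob_at_least_k_reads(depth, allele_fraction, k):
    """P(at least k variant-supporting reads) under a binomial model."""
    p_fewer_than_k = sum(
        comb(depth, i) * allele_fraction**i * (1 - allele_fraction) ** (depth - i)
        for i in range(k)
    )
    return 1 - p_fewer_than_k


# At 100x depth, even a single supporting read is unlikely (under 10%).
p_one_read_100x = prob_at_least_k_reads(100, 0.001, 1)
# At 10,000x depth, three or more supporting reads are very likely.
p_three_reads_10000x = prob_at_least_k_reads(10_000, 0.001, 3)
```

In practice the sequencing error rate sets a floor on detectable allele fractions, so very deep sequencing alone is not sufficient without error suppression.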

Figure 1: Comparative workflow of bulk versus single-cell omics approaches. Bulk methods average signals across cell populations, masking biologically significant rare clones, while single-cell technologies resolve cellular heterogeneity at the cost of increased computational complexity and technical noise.

The Causality Dilemma: Correlation Without Mechanism

Single-omics approaches fundamentally struggle to establish causal relationships in biological systems, typically generating correlative associations that lack mechanistic validation.

The Genotype-Phenotype Gap

Genomic studies frequently identify statistical associations between genetic variants and disease susceptibility but provide limited insights into the functional mechanisms connecting genotype to phenotype [11]. For example, while GWAS have identified hundreds of genetic loci associated with type 2 diabetes, the causal genes, molecular pathways, and cellular contexts remain largely unknown for most associations [14].

Transcriptional-Translational Discordance

The assumption that mRNA levels reliably predict protein abundance is a fundamental weakness of transcriptomic inference. Systematic comparisons across omics layers have repeatedly found only modest correlations between transcript and protein levels, with reported correlation coefficients typically ranging from 0.4 to 0.7 [11]. This discordance stems from extensive post-transcriptional regulation, including:

- Variable translation rates

- Differences in protein stability and turnover

- miRNA-mediated repression

- Alternative splicing patterns

Experimental Evidence: Case Studies in Clinical Limitations

Oncology: The MSK-IMPACT Experience

The MSK-IMPACT genomic profiling study demonstrated that approximately 37% of tumors harbor potentially actionable genetic alterations [11]. While clinically impactful, this finding also means that roughly 63% of tumors had no actionable alteration identified, underscoring the limitations of genomics alone in guiding therapy. Subsequent integration of transcriptomic and proteomic data has revealed additional biomarkers and therapeutic opportunities invisible to genomic profiling alone [11].

Metabolic Disease: Prediabetes Diagnosis Challenges

In prediabetes research, reliance on single biomarkers like HbA1c has proven inadequate for accurate risk stratification. HbA1c demonstrates weak correlation with impaired fasting glucose (IFG) and impaired glucose tolerance (IGT), failing to capture important glycemic excursions and providing limited insights into underlying pathophysiology [5]. Multi-omics approaches have identified numerous molecular signatures across genomics, proteomics, and metabolomics that complement traditional biomarkers, enabling more precise risk prediction and mechanistic insights [5].

Cardiovascular Disease: Spatial Context Limitations

In cardiovascular research, single-cell transcriptomics has revealed remarkable cellular heterogeneity in human hearts, identifying previously unrecognized subpopulations of cardiomyocytes, fibroblasts, and immune cells [15]. However, without spatial context, these dissociated cell data cannot resolve critical tissue-level organization and cell-cell communication networks that drive cardiac pathophysiology. Emerging spatial transcriptomic technologies address this limitation by preserving architectural context [15].

Technical and Analytical Constraints

Data Integration Barriers

Single-omics datasets generated through different technologies present significant integration challenges due to:

- Technical variability: Platform-specific biases and batch effects

- Dimensionality mismatch: Different feature spaces across omics layers

- Temporal resolution differences: Varying molecular turnover rates
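One common first step toward making layers with different scales comparable is per-feature standardization. The sketch below z-scores each column using population standard deviation; the function name and values are illustrative. It addresses scale differences only and does not correct batch effects, which need dedicated methods.

```python
import statistics


def zscore_features(matrix):
    """Standardize each feature (column) to mean 0 and unit standard deviation.

    matrix is a list of samples, each a list of feature values.
    Constant features are mapped to 0.0 to avoid division by zero.
    """
    scaled_columns = []
    for column in zip(*matrix):
        mean = statistics.fmean(column)
        sd = statistics.pstdev(column)
        scaled_columns.append([(v - mean) / sd if sd > 0 else 0.0 for v in column])
    return [list(row) for row in zip(*scaled_columns)]


# Hypothetical samples: column 0 is an RNA count, column 1 a metabolite
# concentration on a scale four orders of magnitude smaller.
raw = [[100, 0.01], [200, 0.02], [300, 0.03]]
scaled = zscore_features(raw)
```

After scaling, both columns carry the same values per sample, so neither layer dominates a distance-based or concatenation-based model simply because of its units.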

Computational Methodological Gaps

Analytical approaches for single-omics data frequently assume linear relationships and normal distributions, failing to capture the complex, nonlinear interactions that characterize biological systems [4]. Network-based analyses remain challenging without complementary data from multiple molecular layers to establish directed relationships.

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Research Reagent Solutions for Multi-Omics Research

| Reagent/Platform | Function | Single-Omics Limitation Addressed |

|---|---|---|

| 10x Genomics Single Cell Multiome | Simultaneous profiling of chromatin accessibility and gene expression | Resolves cellular heterogeneity and connects regulatory elements to transcription |

| Isobaric Labeling (e.g., TMT, iTRAQ) | Multiplexed protein quantification across samples | Enables high-throughput proteomic correlation with transcriptomic data |

| LC-MS/MS Systems | Liquid chromatography-mass spectrometry for proteomic/metabolomic profiling | Direct measurement of functional effectors beyond genetic blueprint |

| Spatial Transcriptomics Slides | Tissue-preserving molecular profiling with morphological context | Bridges single-cell resolution with architectural information |

| CSP#X Cell Sorting | Indexed cell sorting for cross-omics validation | Enables same-cell multi-omics measurement to establish causality |

The limitations of single-omics approaches fundamentally stem from their reductionist nature in studying complex biological systems. Genomics provides a static blueprint without functional context, transcriptomics captures dynamic messages but not their functional execution, proteomics characterizes effectors without their regulatory programs, and metabolomics offers endpoint readouts without causal mechanisms. These individual constraints collectively necessitate integrated multi-omics strategies that can capture the emergent properties of biological systems through simultaneous measurement of multiple molecular layers. The future of biomarker discovery and precision medicine depends on transcending these single-omics limitations through computational and technological frameworks that embrace, rather than reduce, biological complexity.

Key Biological Insights Gained from Multi-Layer Integration in Cancer Research

The advent of multi-omics technologies has fundamentally transformed our approach to understanding cancer biology, moving beyond single-layer analysis to integrated perspectives that capture the complex molecular interactions driving oncogenesis. Multi-omics encompasses large-scale, high-throughput analyses of multiple molecular layers including genomics, transcriptomics, proteomics, metabolomics, and epigenomics [11]. Collectively, these approaches provide a comprehensive understanding of cellular dynamics, facilitating biomarker identification that is crucial for cancer diagnosis, prognosis, and therapeutic decision-making [11]. Biological systems operate through interconnected layers, with genetic information flowing from one layer to the next to shape observable traits [16]. Elucidating the genetic basis of complex cancer phenotypes therefore demands an analytical framework that captures these dynamic, multi-layered interactions [16].

Landmark projects such as The Cancer Genome Atlas (TCGA) Pan-Cancer Atlas, the Pan-Cancer Analysis of Whole Genomes (PCAWG), MSK-IMPACT, and the Clinical Proteomic Tumor Analysis Consortium (CPTAC) have collectively demonstrated the utility of multi-omics in uncovering cancer biology and clinically actionable biomarkers [11]. These initiatives have established foundational resources that enable researchers to correlate molecular profiles with clinical features, refining predictions of therapeutic responses and patient outcomes [16]. The integration of diverse omics datasets presents substantial computational challenges that require advanced statistical, network-based, and machine learning methods to model interdependencies and extract meaningful biological insights [16].

Table 1: Overview of Major Omics Technologies in Cancer Research

| Omics Layer | Key Elements Analyzed | Primary Technologies | Biological Insights Provided |

|---|---|---|---|

| Genomics | DNA sequences, mutations, copy number variations, structural variants | Whole genome sequencing, whole exome sequencing | Driver mutations, tumor mutational burden, copy number alterations, inherited susceptibility |

| Transcriptomics | mRNA, non-coding RNAs, gene expression levels | RNA sequencing, microarrays | Gene expression signatures, alternative splicing, regulatory networks |

| Proteomics | Protein abundance, post-translational modifications, protein complexes | Mass spectrometry, reverse-phase protein arrays | Functional protein states, signaling pathway activity, drug targets |

| Epigenomics | DNA methylation, histone modifications, chromatin accessibility | Whole genome bisulfite sequencing, ChIP-seq, ATAC-seq | Gene regulation mechanisms, transcriptional control, cellular identity |

| Metabolomics | Metabolites, small molecules, metabolic intermediates | LC-MS, GC-MS, NMR spectroscopy | Metabolic pathway activity, nutrient utilization, tumor microenvironment |

Recent technological advances have further sharpened this picture: single-cell and spatial multi-omics technologies now resolve tumor heterogeneity and the tumor microenvironment at the level of individual cells and their tissue locations [11] [17]. These approaches have illuminated tumor biology, immune escape mechanisms, treatment resistance, and patient-specific immune response mechanisms, thereby substantially advancing precision oncology strategies [17]. The integration of these diverse data types enables researchers to construct comprehensive models of cancer biology that account for the complex interactions between different molecular layers.

Key Biological Insights from Multi-Omics Integration

Unraveling Tumor Heterogeneity and Evolution

Multi-omics approaches have revealed the profound tumor heterogeneity that exists not only between different patients but also within individual tumors, contributing significantly to therapeutic resistance and metastatic progression [17]. Single-cell multi-omics technologies have been particularly transformative in this domain, enabling researchers to deconstruct tumors at unprecedented resolution and identify rare cellular subsets that may drive cancer progression and treatment resistance [17]. For example, integrated analysis of multi-omics data has enabled the characterization of cellular states and trajectories in tumor evolution, revealing how genomic alterations propagate through molecular layers to influence phenotypic outcomes [17].

The application of multi-omics to minimal residual disease (MRD) monitoring has provided critical insights into the cellular populations that persist after therapy and eventually lead to disease recurrence [17]. By combining genomic, transcriptomic, and epigenomic profiling, researchers can identify the resistant clones that survive treatment and understand the molecular mechanisms underlying their persistence. Similarly, multi-omics approaches have advanced neoantigen discovery, enabling the comprehensive identification of tumor-specific antigens that can be targeted by immunotherapies through integrated analysis of genomic mutations, transcript expression, and human leukocyte antigen (HLA) presentation [17].

Identification of Molecular Subtypes and Biomarkers

Multi-omics integration has enabled the discovery of previously unrecognized molecular subtypes across various cancers that transcend traditional histopathological classifications [18]. These refined classifications have profound implications for prognosis and treatment selection. For example, in endometrial cancer, integrated genomic analysis has identified four distinct subtypes with different clinical outcomes and therapeutic vulnerabilities, including an ultra-mutated subgroup with favorable prognosis and a copy-number altered subgroup with poor outcomes [18]. Similar approaches in colorectal cancer and glioblastoma have revealed molecular subtypes with distinct pathway activations and clinical behaviors [18].

The convergence of multiple omics layers has also facilitated the discovery of robust biomarker panels at the single-molecule, multi-molecule, and cross-omics levels [11]. These include:

- Tumor mutational burden (TMB), validated in the KEYNOTE-158 trial as a predictive biomarker for pembrolizumab treatment across solid tumors [11]

- Gene-expression signatures such as Oncotype DX (21-gene) and MammaPrint (70-gene) that guide adjuvant chemotherapy decisions in breast cancer [11]

- Proteomic classifiers that identify functional subtypes and reveal druggable vulnerabilities missed by genomics alone [11]

- Metabolomic signatures such as the 10-metabolite plasma profile that demonstrates superior diagnostic accuracy compared to conventional tumor markers in gastric cancer [11]

- Epigenetic biomarkers including MGMT promoter methylation that predicts benefit from temozolomide in glioblastoma [11]
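As a worked example of the first item, TMB is conventionally reported as somatic mutations per megabase of sequenced territory. The sketch below assumes a cutoff of 10 mutations/Mb, the threshold associated with the pembrolizumab indication; the cutoff, function names, and input counts are illustrative assumptions rather than details taken from [11], and real assays differ in which variant classes they count.

```python
def tumor_mutational_burden(n_somatic_mutations: int, covered_bases: int) -> float:
    """Return TMB in mutations per megabase of sequenced territory."""
    if covered_bases <= 0:
        raise ValueError("covered_bases must be positive")
    return n_somatic_mutations / (covered_bases / 1_000_000)

def is_tmb_high(tmb: float, cutoff: float = 10.0) -> bool:
    """Flag a sample as TMB-high; the default cutoff is an assumption."""
    return tmb >= cutoff

# Hypothetical sample: 384 eligible somatic mutations over a 32 Mb exome footprint.
tmb = tumor_mutational_burden(n_somatic_mutations=384, covered_bases=32_000_000)
print(f"TMB = {tmb:.1f} mut/Mb, TMB-high: {is_tmb_high(tmb)}")
```

Because the denominator depends on the panel or exome footprint, TMB values are only comparable across assays after harmonization.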

Mapping Signaling and Regulatory Networks

Multi-omics integration enables the reconstruction of comprehensive regulatory networks that span multiple molecular layers, providing systems-level insights into cancer biology. A prominent example comes from neuroblastoma research, where integrated analysis of mRNA-seq, miRNA-seq, and methylation data revealed a coordinated regulatory network centered on the MYCN oncogene [19]. This approach identified three transcription factors (MYCN, POU2F2, and SPI1) and seven miRNAs as key regulatory hubs, demonstrating how multi-omics data can elucidate the complex interplay between transcriptional and post-transcriptional regulation in cancer [19].

Network-based analysis of multi-omics data has proven particularly powerful for identifying master regulatory nodes and disease modules that drive oncogenic processes [16]. By modeling molecular features as nodes and their functional relationships as edges, these frameworks capture complex biological interactions and can identify key subnetworks associated with disease phenotypes [16]. Many network-based techniques can incorporate prior biological knowledge, enhancing interpretability and predictive power for identifying novel therapeutic targets [16].

Table 2: Clinically Actionable Multi-Omics Biomarkers in Oncology

| Cancer Type | Multi-Omics Biomarker | Omics Layers Involved | Clinical Application |

|---|---|---|---|

| Multiple Solid Tumors | Tumor Mutational Burden (TMB) | Genomics | Predicts response to immune checkpoint inhibitors |

| Breast Cancer | Oncotype DX (21-gene signature) | Transcriptomics | Guides adjuvant chemotherapy decisions |

| Glioblastoma | MGMT promoter methylation | Epigenomics | Predicts benefit from temozolomide chemotherapy |

| HER2-positive Breast Cancer | HER2 gene amplification | Genomics, Transcriptomics | Selection for HER2-targeted therapies |

| IDH-mutant Gliomas | 2-hydroxyglutarate (2-HG) | Metabolomics, Genomics | Diagnostic and mechanistic biomarker |

| Multiple Cancers | DNA methylation panels | Epigenomics | Multi-cancer early detection (e.g., Galleri test) |

Understanding the Tumor Microenvironment and Immune Response

Spatial multi-omics technologies have provided unprecedented insights into the tumor microenvironment (TME) and its role in cancer progression and therapy response [17]. By preserving spatial context while measuring multiple molecular layers, these approaches enable researchers to map the cellular architecture of tumors and understand how spatial relationships influence cellular behavior and treatment efficacy [17]. For example, integrated spatial transcriptomics and proteomics has revealed how immune cell distributions within tumors correlate with response to immunotherapy, identifying exclusionary patterns that mediate resistance [17].

Single-cell multi-omics has been particularly instrumental in dissecting the immune landscape of tumors, revealing diverse immune cell states and their functional roles in anti-tumor immunity [17]. Integrated analysis of transcriptomic, epigenomic, and proteomic data at single-cell resolution has identified exhausted T cell states that limit effective immune responses and regulatory cell populations that suppress anti-tumor immunity [17]. These insights are informing the development of next-generation immunotherapies that target specific immune cell states or combinations thereof to overcome resistance mechanisms.

Experimental Protocols and Methodologies

Multi-Omics Data Integration Strategies

Multi-omics integration methods can be broadly categorized along two axes: the timing of integration (early, intermediate, or late) and the nature of the data combined (vertical or horizontal) [20]. The resulting approaches are:

Early Integration involves concatenating measurements from different omics sources before any analysis, creating a single integrated dataset for downstream applications [20]. While this approach allows direct analysis of cross-omics interactions, it often fails to account for platform heterogeneity and differences in data structure between omics types.

Intermediate Integration employs methods that transform each omics dataset separately before modeling them together, respecting the diversity of platforms while enabling integrated analysis [20]. Techniques include matrix factorization approaches, multi-omics factor analysis, and deep learning architectures that learn joint representations.

Late Integration involves analyzing each omics dataset separately and then combining the results, such as in cluster-of-clusters analysis (CoCA) which identifies consensus groups across different omics analyses [20]. While this approach avoids challenges of data heterogeneity, it may miss important interactions between molecular layers.

Vertical Integration (N-integration) combines different omics data from the same samples, enabling the study of concurrent observations across functional levels [20]. This approach is particularly powerful for understanding how variations at one molecular level influence others within the same biological system.

Horizontal Integration (P-integration) combines studies of the same molecular level from different subjects to increase sample size and statistical power [20]. This approach is valuable for meta-analyses and increasing cohort diversity.

Case Study: Neuroblastoma Biomarker Discovery

A comprehensive multi-omics workflow for neuroblastoma biomarker discovery illustrates the practical application of integration methodologies [19]:

Step 1: Data Acquisition and Preprocessing

- Obtain multi-omics data for 99 patients, including mRNA-seq, miRNA-seq, and methylation array data

- Perform quality control, normalization, and batch effect correction for each data type separately

- Convert each data type into a patient similarity matrix using appropriate distance metrics

Step 2: Data Integration Using Similarity Network Fusion (SNF)

- Apply SNF to integrate the three similarity matrices into a single fused similarity matrix

- Optimize hyperparameters (T=15 fusion iterations, k=20 nearest neighbors, kernel scaling α=0.5) through iterative evaluation to achieve convergence

- Perform spectral clustering on the fused graph to identify patient subgroups (c=4 clusters)
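The fusion step can be sketched in a few lines of NumPy. This is a simplified rendering of the SNF idea (scaled exponential kernel, a full and a k-nearest-neighbor kernel per layer, and iterative cross-diffusion), not the implementation used in [19]; the toy data, function names, and the small k are assumptions chosen so the example runs on eight synthetic patients.

```python
import numpy as np

def affinity(X, k, alpha):
    """Scaled exponential similarity kernel built from Euclidean distances."""
    d = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    # Mean distance from each sample to its k nearest neighbours (self excluded).
    knn_mean = np.sort(d, axis=1)[:, 1:k + 1].mean(axis=1)
    eps = (knn_mean[:, None] + knn_mean[None, :] + d) / 3.0
    return np.exp(-d ** 2 / (alpha * np.maximum(eps, 1e-12)))

def full_kernel(W):
    """Row-normalized kernel with 1/2 on the diagonal."""
    W = W.copy()
    np.fill_diagonal(W, 0.0)
    P = W / (2.0 * W.sum(axis=1, keepdims=True))
    np.fill_diagonal(P, 0.5)
    return P

def local_kernel(W, k):
    """Sparse kernel that keeps only each sample's k most similar neighbours."""
    W = W.copy()
    np.fill_diagonal(W, 0.0)
    idx = np.argsort(-W, axis=1)[:, :k]
    rows = np.arange(W.shape[0])[:, None]
    S = np.zeros_like(W)
    S[rows, idx] = W[rows, idx]
    return S / S.sum(axis=1, keepdims=True)

def snf(layers, k=3, alpha=0.5, T=15):
    """Fuse per-layer patient similarity networks by cross-diffusion."""
    if len(layers) < 2:
        raise ValueError("SNF needs at least two data layers")
    Ws = [affinity(X, k, alpha) for X in layers]
    Ps = [full_kernel(W) for W in Ws]
    Ss = [local_kernel(W, k) for W in Ws]
    m = len(layers)
    for _ in range(T):
        updated = []
        for v in range(m):
            # Diffuse the average of the other layers through this layer's
            # local neighbourhood structure.
            others = sum(Ps[u] for u in range(m) if u != v) / (m - 1)
            P = Ss[v] @ others @ Ss[v].T
            updated.append(full_kernel((P + P.T) / 2.0))
        Ps = updated
    fused = sum(Ps) / m
    return (fused + fused.T) / 2.0

# Toy data: two hypothetical patient subgroups measured in two layers.
rng = np.random.default_rng(0)
groups = np.repeat([0, 1], 4)
centers = groups[:, None] * 5.0
mrna = centers + rng.normal(size=(8, 5))
methylation = centers + rng.normal(size=(8, 6))

fused = snf([mrna, methylation], k=3, alpha=0.5, T=15)
print(np.round(fused, 3))
```

The fused matrix is what a spectral clustering routine would take as its precomputed affinity input in the final bullet of this step.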

Step 3: Feature Selection and Ranking

- Use ranked SNF (rSNF) to assign importance scores to all features across omics layers

- Select the top 10% of high-rank features from each data type

- Identify 803 essential genes common to both methylation and mRNA-seq data, plus 160 high-rank miRNAs
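The selection logic in this step reduces to ranking features within each layer, keeping the top fraction, and intersecting the gene-level layers. The sketch below assumes importance scores are already available (in the workflow they would come from rSNF); the gene names and scores are placeholders, not values from [19].

```python
def top_fraction(scores: dict, fraction: float = 0.10) -> set:
    """Return the names of the highest-scoring features (at least one)."""
    n_keep = max(1, int(round(len(scores) * fraction)))
    ranked = sorted(scores, key=scores.get, reverse=True)
    return set(ranked[:n_keep])

# Hypothetical importance scores for 20 genes in two gene-level layers.
mrna_scores = {f"GENE{i}": float(i) for i in range(20)}
methylation_scores = dict(mrna_scores)
methylation_scores["GENE18"] = 0.0    # important in mRNA only
methylation_scores["GENE3"] = 25.0    # important in methylation only

top_mrna = top_fraction(mrna_scores)
top_methylation = top_fraction(methylation_scores)

# Genes ranked highly in both layers are carried forward.
shared_genes = top_mrna & top_methylation
print(sorted(shared_genes))
```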

Step 4: Regulatory Network Construction

- Retrieve TF-miRNA and miRNA-target interactions from the TransmiR 2.0 and TarBase v8 databases

- Integrate interactions to construct a regulatory network comprising 90 miRNAs, 23 TFs, and 199 target genes

- Apply maximal clique centrality (MCC) algorithm to identify hub nodes as potential biomarkers
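Maximal clique centrality scores a node by summing (|C| - 1)! over every maximal clique C that contains it, so nodes embedded in dense, overlapping cliques rank highest. The sketch below is a plain-Python rendering of that definition on an undirected toy graph; the node names and edges are invented for illustration, and edge direction in the regulatory network is ignored.

```python
from math import factorial

def maximal_cliques(adj):
    """Enumerate maximal cliques with the Bron-Kerbosch algorithm (no pivoting)."""
    cliques = []

    def expand(r, p, x):
        if not p and not x:
            cliques.append(r)          # r cannot be extended: it is maximal
            return
        for v in list(p):
            expand(r | {v}, p & adj[v], x & adj[v])
            p = p - {v}
            x = x | {v}

    expand(set(), set(adj), set())
    return cliques

def mcc_scores(edges):
    """Return {node: MCC score} for an undirected edge list without self-loops."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    scores = {node: 0 for node in adj}
    for clique in maximal_cliques(adj):
        for node in clique:
            scores[node] += factorial(len(clique) - 1)
    return scores

# Hypothetical regulatory network: one TF, two miRNAs, two target genes.
toy_edges = [
    ("TF1", "miR_a"), ("TF1", "miR_b"), ("miR_a", "miR_b"),
    ("TF1", "GENE1"), ("GENE1", "GENE2"),
]
scores = mcc_scores(toy_edges)
hub = max(scores, key=scores.get)
print(scores, "hub:", hub)
```

Clique enumeration is exponential in the worst case, which is acceptable for networks of a few hundred nodes such as the one described here but would need a pivoting variant or a dedicated graph library at larger scale.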

Step 5: Validation and Clinical Correlation

- Perform survival analysis to correlate hub node expression with patient prognosis

- Validate findings in an independent cohort of 498 neuroblastoma patients (GSE62564)

- Confirm prognostic significance of three transcription factors (MYCN, POU2F2, SPI1) and three miRNAs (hsa-mir-137, hsa-mir-421, hsa-mir-760)
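The survival comparison in this step is typically built on Kaplan-Meier estimates for patients split by marker expression. The sketch below implements the estimator directly and applies it to a median split; the expression values, follow-up times, and event flags are fabricated toy inputs, and a real analysis would add a log-rank test or Cox model to assess significance.

```python
from statistics import median

def kaplan_meier(times, events):
    """Return [(time, survival_probability)] at each distinct event time.

    events[i] is 1 if the event (e.g., death) was observed at times[i],
    and 0 if the patient was censored at that time.
    """
    data = sorted(zip(times, events))
    n_at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = removed = 0
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            removed += 1
            i += 1
        if deaths:
            survival *= 1 - deaths / n_at_risk
            curve.append((t, survival))
        n_at_risk -= removed
    return curve

# Hypothetical hub-marker expression, follow-up in months, and event flags.
expression = [2.1, 8.4, 3.3, 9.0, 1.5, 7.7]
months = [60, 12, 48, 8, 72, 20]
event = [0, 1, 1, 1, 0, 1]

cutoff = median(expression)
high = [i for i, e in enumerate(expression) if e > cutoff]
low = [i for i, e in enumerate(expression) if e <= cutoff]

km_high = kaplan_meier([months[i] for i in high], [event[i] for i in high])
km_low = kaplan_meier([months[i] for i in low], [event[i] for i in low])
print("high expression:", km_high)
print("low expression: ", km_low)
```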

Diagram 1: Neuroblastoma Multi-Omics Biomarker Discovery Workflow. This flowchart illustrates the step-by-step process for identifying biomarkers from multi-omics data in neuroblastoma, from data acquisition through validation.

Advanced Computational Frameworks

Deep Learning Approaches have emerged as powerful tools for multi-omics integration, particularly for cancer subtype classification. The DeepMoIC framework exemplifies this approach, combining autoencoders for feature extraction with deep graph convolutional networks (GCNs) for classification [21]:

Component 1: Autoencoder Architecture

- Employ separate multi-layer autoencoders for each omics type to learn compressed representations

- Use sigmoid activation functions and mean squared error (MSE) loss for reconstruction

- Apply weighted integration of omics-specific representations based on prior knowledge

Component 2: Patient Similarity Network

- Construct patient similarity matrices for each omics type using Euclidean distance

- Apply Similarity Network Fusion (SNF) algorithm to integrate matrices into a unified graph

- Generate adjacency matrices that capture complex patient relationships across omics types

Component 3: Deep Graph Convolutional Network

- Implement deep GCN with residual connections and identity mapping to address over-smoothing

- Enable propagation of information to remote neighbors in the patient similarity network

- Integrate feature matrices and patient similarity network for supervised classification

This framework has demonstrated superior performance in pan-cancer classification and subtype identification, highlighting the value of deep learning for capturing complex relationships in multi-omics data [21].
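To make Component 3 more concrete, the sketch below shows a forward pass through a stack of graph convolution layers that combine an initial residual connection with an identity mapping, in the style of GCNII. Treating DeepMoIC's layers as GCNII-style is an assumption based on the description above, not a reproduction of the code in [21]; the adjacency matrix, embedding sizes, random weights, and the values of alpha and the beta schedule are all illustrative.

```python
import numpy as np

def normalized_adjacency(A):
    """Symmetric normalization D^-1/2 (A + I) D^-1/2 commonly used by GCNs."""
    A_hat = A + np.eye(A.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    return A_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def deep_gcn_layer(H, H0, A_norm, W, alpha=0.1, beta=0.5):
    """One layer with an initial residual (alpha) and identity mapping (beta)."""
    # Initial residual: mix propagated features with the first-layer input H0,
    # which counteracts over-smoothing as depth grows.
    propagated = (1 - alpha) * (A_norm @ H) + alpha * H0
    # Identity mapping: blend the propagated features with their linear transform.
    transformed = (1 - beta) * propagated + beta * (propagated @ W)
    return np.maximum(transformed, 0.0)    # ReLU

rng = np.random.default_rng(0)
# Hypothetical patient similarity network for four patients.
A = np.array([[0, 1, 1, 0],
              [1, 0, 1, 0],
              [1, 1, 0, 1],
              [0, 0, 1, 0]], dtype=float)
A_norm = normalized_adjacency(A)

H0 = rng.normal(size=(4, 3))   # stand-in for autoencoder embeddings
H = H0
for layer in range(1, 9):      # eight stacked layers
    W = rng.normal(scale=0.1, size=(3, 3))   # untrained weights, illustration only
    beta = np.log(0.5 / layer + 1)           # identity mapping strengthens with depth
    H = deep_gcn_layer(H, H0, A_norm, W, alpha=0.1, beta=beta)
print(np.round(H, 3))
```

In a trained model the final representation would feed a softmax classification layer, and the weights would be learned by backpropagation rather than drawn at random.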

Successful multi-omics research requires both wet-lab reagents for data generation and computational tools for data analysis and integration. The following table summarizes key resources essential for multi-omics cancer research:

Table 3: Essential Research Reagents and Computational Resources for Multi-Omics Cancer Research

| Category | Specific Tools/Reagents | Function/Purpose | Application Examples |

|---|---|---|---|

| Sequencing Technologies | 10x Genomics Chromium X, BD Rhapsody HT-Xpress | Single-cell RNA sequencing with high throughput | Profiling tumor heterogeneity at single-cell resolution [17] |

| Proteomic Platforms | Liquid chromatography-mass spectrometry (LC-MS), Reverse-phase protein arrays | Protein identification and quantification | Measuring protein abundance and post-translational modifications [11] |

| Spatial Omics Technologies | Spatial transcriptomics, Multiplexed immunofluorescence | Preserving spatial context in molecular profiling | Mapping tumor microenvironment architecture [17] |

| Computational Frameworks | Similarity Network Fusion (SNF), DeepMoIC, MOFA | Multi-omics data integration | Identifying cancer subtypes and biomarkers [19] [21] |

| Data Resources | TCGA, CPTAC, DriverDBv4, GliomaDB | Providing annotated multi-omics datasets | Accessing processed multi-omics data for analysis [11] |

| Network Analysis Tools | Cytoscape, MCC algorithms | Visualizing and analyzing molecular networks | Identifying hub genes in regulatory networks [19] |

Diagram 2: Comprehensive Multi-Omics Research Workflow. This diagram illustrates the end-to-end process of multi-omics research, from sample collection through computational analysis to validation.

The integration of multi-layer omics data has fundamentally advanced our understanding of cancer biology, revealing intricate molecular networks, tumor heterogeneity, and regulatory mechanisms that were previously inaccessible. Through methodologies ranging from similarity network fusion to deep graph convolutional networks, researchers can now identify robust biomarkers, define molecular subtypes, and reconstruct signaling pathways with unprecedented precision. As single-cell and spatial multi-omics technologies continue to evolve, they promise to further refine our molecular portraits of cancer, enabling truly personalized therapeutic approaches that match the complexity of the disease. The biological insights gained from these integrated approaches are already transforming oncology, bridging the gap between molecular discoveries and clinical applications to improve patient outcomes.

Large-scale multi-omics consortia have fundamentally transformed the landscape of cancer research by generating comprehensive, publicly available datasets that bridge molecular biology with clinical medicine. These initiatives provide the foundational data infrastructure required for biomarker discovery, enabling researchers to identify molecular signatures with diagnostic, prognostic, and therapeutic applications. The integration of diverse molecular datasets from genomics, transcriptomics, proteomics, and epigenomics has revealed complex biological networks driving tumorigenesis, moving beyond the limitations of single-omics approaches [11]. By establishing standardized protocols for data generation and analysis, these consortia have accelerated the translation of basic research findings into clinically actionable biomarkers, thereby advancing the core mission of precision oncology to match patients with optimal treatments based on their unique molecular profiles [1].

The evolution of these consortia reflects the rapid technological advancements in high-throughput sequencing, mass spectrometry, and computational biology. Landmark projects such as The Cancer Genome Atlas (TCGA) and the Clinical Proteomic Tumor Analysis Consortium (CPTAC) have demonstrated the power of collaborative science in characterizing the molecular architecture of cancer across thousands of patients [11]. These efforts have not only cataloged driver mutations but also elucidated their functional consequences across multiple biological layers, providing insights into therapeutic resistance mechanisms and novel therapeutic vulnerabilities [22]. As the field progresses, emerging consortia are incorporating cutting-edge technologies including single-cell multi-omics and spatial transcriptomics, further deepening our understanding of tumor heterogeneity and the tumor microenvironment [11].

Table 1: Overview of Major Multi-Omics Consortia in Cancer Research

| Consortium Name | Primary Focus | Key Omics Data Types | Notable Contributions |

|---|---|---|---|

| The Cancer Genome Atlas (TCGA) | Pan-cancer molecular atlas | Genomics, transcriptomics, epigenomics, clinical data | Comprehensive molecular characterization of 33 cancer types; identification of molecular subtypes across cancers [11] [23]. |

| Clinical Proteomic Tumor Analysis Consortium (CPTAC) | Proteogenomic integration | Proteomics, genomics, transcriptomics, post-translational modifications | Identification of functional cancer subtypes and druggable vulnerabilities missed by genomics alone [11]. |

| International Cancer Genome Consortium (ICGC) | International genomic data sharing | Genomics, transcriptomics, epigenomics from international cohorts | Expanded diversity of cancer genomic data through global collaboration [23]. |

| Cancer Cell Line Encyclopedia (CCLE) | Preclinical model characterization | Genomics, transcriptomics, drug response data | Molecular profiling of cancer cell lines to facilitate drug discovery [23]. |

| DriverDBv4 | Multi-omics driver characterization | Genomic, epigenomic, transcriptomic, proteomic data | Integration of data from ~24,000 patients across 70+ cancer cohorts using multi-omics algorithms [11]. |

| GliomaDB | Glioma-specific database | Multi-omics data from TCGA, GEO, CGGA, MSK-IMPACT | Integrated 21,086 glioma samples from 4,303 patients for specialized brain tumor research [11]. |

The Cancer Genome Atlas (TCGA): A Blueprint for Multi-Omics Profiling

Experimental Design and Methodological Framework

TCGA established a systematic approach for large-scale molecular characterization of human cancers, employing standardized protocols across multiple processing centers to ensure data quality and reproducibility. The project utilized comprehensive molecular profiling across multiple platforms, including whole exome sequencing (WES), whole genome sequencing (WGS), RNA sequencing, DNA methylation arrays, and miRNA sequencing [11] [23]. This multidimensional data generation was complemented by detailed clinical data annotation, enabling correlation of molecular features with patient outcomes, treatment responses, and pathological characteristics.

The experimental workflow began with quality-controlled biospecimens from participating institutions, followed by centralized DNA/RNA extraction and distribution to designated genome characterization centers. Genomic analyses identified somatic mutations, copy number variations (CNVs), and structural variants, while transcriptomic approaches quantified gene expression levels, alternative splicing events, and non-coding RNA expression [23]. Epigenomic profiling focused on DNA methylation patterns, measured primarily on methylation arrays and supplemented by whole genome bisulfite sequencing (WGBS), providing insights into regulatory mechanisms beyond the genetic code [11]. The integration of these diverse data types enabled researchers to move beyond single-dimensional analyses and develop unified molecular classifications of cancer subtypes with distinct clinical behaviors.

Biomarker Discoveries and Clinical Impact

TCGA's multi-omics approach has contributed to numerous clinically relevant biomarkers that have advanced precision oncology. Tumor mutational burden (TMB), a genomic biomarker, predicts response to immune checkpoint inhibitors across multiple cancer types, and the FDA approved pembrolizumab for TMB-high solid tumors on the basis of the KEYNOTE-158 trial [11]. Transcriptomic analyses have yielded gene expression signatures with prognostic utility, such as the 21-gene Oncotype DX and 70-gene MammaPrint assays that guide adjuvant chemotherapy decisions in breast cancer, as validated in the TAILORx and MINDACT trials [11].

Epigenomic profiling of TCGA glioblastoma cohorts corroborated MGMT promoter methylation as a predictive biomarker for temozolomide response, which is now part of standard clinical practice [11]. Integrated molecular analyses of this kind have also informed the development of multi-cancer early detection assays based on DNA methylation patterns, such as the Galleri test currently under clinical evaluation [11]. Beyond these specific biomarkers, TCGA data has facilitated the discovery of molecular subtypes within traditional histopathological classifications, revealing distinct disease entities with different therapeutic vulnerabilities and outcomes.

Table 2: Key Biomarker Classes Discovered Through Multi-Omics Consortia

| Biomarker Class | Omics Level | Example Biomarker | Clinical Application |

|---|---|---|---|

| Diagnostic | Genomic | IDH1/2 mutations | Classification of glioma subtypes [11] |

| Diagnostic | Metabolomic | 2-hydroxyglutarate (2-HG) | Detection of IDH-mutant gliomas [11] |

| Diagnostic | Epigenomic | Multi-cancer methylation signatures | Early cancer detection (e.g., Galleri test) [11] |

| Prognostic | Transcriptomic | 21-gene Oncotype DX signature | Breast cancer recurrence risk stratification [11] |

| Prognostic | Proteomic | Protein signaling pathways | Functional subtyping and outcome prediction [11] |

| Predictive | Genomic | EGFR mutations | Response to EGFR inhibitors in lung cancer [22] |

| Predictive | Epigenomic | MGMT promoter methylation | Temozolomide response in glioblastoma [11] |

| Predictive | Genomic | Tumor mutational burden (TMB) | Immunotherapy response prediction [11] |

Clinical Proteomic Tumor Analysis Consortium (CPTAC): Bridging Genotype to Phenotype

Proteogenomic Integration Methodology

CPTAC was established to complement genomic initiatives like TCGA by adding deep proteomic and phosphoproteomic characterization to existing molecular profiles, creating powerful proteogenomic datasets. The consortium employs liquid chromatography-tandem mass spectrometry (LC-MS/MS)-based proteomics to quantify protein abundance and post-translational modifications, including phosphorylation, acetylation, and ubiquitination [11] [22]. These proteomic measurements are integrated with genomic and transcriptomic data from the same samples, enabling researchers to connect genetic alterations to their functional protein-level consequences and identify regulatory mechanisms that operate independently of transcriptional control.

The experimental protocol involves tissue lysis and protein extraction followed by enzymatic digestion (typically with trypsin) to generate peptides, which are then fractionated and analyzed by high-resolution mass spectrometry. CPTAC has developed standardized sample processing protocols across participating centers to ensure data reproducibility, including reference standards and quality control metrics [11]. Advanced computational pipelines map the identified peptides to their corresponding proteins and quantify their abundance, while phosphoproteomic analyses identify phosphorylation sites and infer kinase activity. The resulting datasets reveal how genomic alterations translate to functional proteomic changes, providing insights into cancer signaling networks that are not apparent from genomic data alone.
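The enzymatic digestion step has a direct computational counterpart: search engines generate candidate peptides by digesting protein sequences in silico. The sketch below applies the standard trypsin rule (cleave after lysine or arginine unless the next residue is proline) and optionally allows missed cleavages. The sequence is a made-up string, and the function name and parameters are illustrative rather than part of any CPTAC pipeline.

```python
import re

def tryptic_digest(protein: str, missed_cleavages: int = 0, min_length: int = 1):
    """Return peptides produced by an in silico trypsin digest."""
    # Split after K or R, except when the following residue is P.
    fragments = [f for f in re.split(r"(?<=[KR])(?!P)", protein) if f]
    peptides = []
    for i in range(len(fragments)):
        # Join up to `missed_cleavages` additional consecutive fragments.
        last = min(i + missed_cleavages, len(fragments) - 1)
        for j in range(i, last + 1):
            peptide = "".join(fragments[i:j + 1])
            if len(peptide) >= min_length:
                peptides.append(peptide)
    return peptides

# Hypothetical sequence; the K at position 3 is followed by P and is not cleaved.
sequence = "AGKPLRSTKMR"
fully_cleaved = tryptic_digest(sequence)
with_one_missed = tryptic_digest(sequence, missed_cleavages=1)
print(fully_cleaved)
print(with_one_missed)
```

Allowing one or two missed cleavages is common practice because digestion is rarely complete, at the cost of a larger peptide search space.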

Therapeutic Insights and Biomarker Applications

CPTAC's proteogenomic approach has demonstrated that proteomic data can reveal functional subtypes of cancer that are not discernible from genomic or transcriptomic data alone. For example, CPTAC studies of ovarian and breast cancers identified proteomic signatures associated with therapeutic vulnerability, including phosphorylation patterns that indicate activated signaling pathways targetable with existing drugs [11]. These findings have important implications for biomarker development, as they suggest that protein-level measurements may provide more direct assessment of druggable pathway activity than genomic or transcriptomic proxies.

The integration of proteomic with genomic data has also enabled the discovery of non-genomic mechanisms of therapeutic resistance, such as post-translational modifications that reactivate signaling pathways despite inhibitory genomic alterations [22]. Additionally, CPTAC has contributed to the identification of neoantigens and immunogenic proteins that may serve as targets for cancer immunotherapy or as biomarkers for immune recognition. The consortium's publicly available datasets continue to serve as a valuable resource for the research community, facilitating the discovery and validation of protein-based biomarkers across multiple cancer types.

Complementary International Multi-Omics Initiatives

Expanding the Genomic Landscape: ICGC and CCLE

The International Cancer Genome Consortium (ICGC) represents a global effort to coordinate large-scale cancer genomics research across multiple countries and institutions. ICGC's Pan-Cancer Analysis of Whole Genomes (PCAWG) project complemented TCGA by providing whole genome sequencing data that encompasses both coding and non-coding regions, enabling the discovery of regulatory mutations and structural variants that may drive cancer development [11]. The consortium's decentralized model, with participating countries leading projects on specific cancer types, has facilitated the inclusion of more diverse patient populations and cancer subtypes, expanding the scope of discoveries beyond those possible in single-nation initiatives.

The Cancer Cell Line Encyclopedia (CCLE) provides another critical resource for translational research by offering comprehensive molecular characterization of human cancer cell lines alongside drug sensitivity data [23]. This dataset enables researchers to correlate molecular features with therapeutic response in preclinical models, facilitating biomarker hypothesis generation and validation. The integration of CCLE data with clinical datasets from TCGA and other consortia allows for triangulation of findings across model systems and human tumors, strengthening the evidence for candidate biomarkers before embarking on costly clinical validation studies.

Specialized multi-omics databases have emerged to address the research questions posed by specific cancer types. GliomaDB focuses on glioma, covering both glioblastoma multiforme (GBM) and lower-grade glioma, and integrates 21,086 samples from 4,303 patients across multiple sources including TCGA, GEO, the Chinese Glioma Genome Atlas (CGGA), and MSK-IMPACT [11]. This disease-specific focus enables deeper investigation into the molecular drivers of glioma progression and therapeutic resistance. Similarly, HCCDBv2 provides a comprehensive resource for liver cancer research, incorporating clinical phenotype data, bulk transcriptomics, single-cell transcriptomics, and spatial transcriptomics to explore hepatocellular carcinoma heterogeneity [11].

More recently, initiatives such as the ONCare Alliance biobank have adopted longitudinal sampling designs, collecting blood samples at multiple timepoints during the patient journey to capture dynamic changes in multi-omics profiles during treatment and disease progression [24]. These prospective cohorts linked to detailed clinical data represent the next generation of multi-omics resources, enabling researchers to study temporal patterns of biomarker evolution and identify molecular predictors of treatment response and resistance.

Methodological Framework for Multi-Omics Data Integration

Computational Strategies and Analytical Workflows

The integration of heterogeneous multi-omics data requires computational approaches that can handle differences in scale, distribution, and biological meaning across data types. Horizontal integration combines data within the same omics layer (e.g., combining single-cell RNA sequencing with spatial transcriptomics) to address the limitations of individual technologies, such as the loss of spatial context in scRNA-seq or mixed-cell signals in spatial transcriptomics [22]. Vertical integration, in contrast, connects different biological layers (e.g., genomics to transcriptomics to metabolomics) to trace how genetic alterations lead to their functional consequences [22].
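As a minimal sketch of the vertical case, the snippet below aligns two layers on their shared sample IDs and z-scores each feature, so that layers measured on different scales become comparable before being concatenated. The layer names, sample IDs, feature names, and values are all invented for illustration. `vertical_integrate` is a hypothetical helper, not a function from any of the tools cited here, and it assumes every shared sample carries the same features within a layer.

```python
from statistics import mean, pstdev


def zscore(values):
    """Scale one feature to zero mean and unit variance (0.0 if constant)."""
    mu, sd = mean(values), pstdev(values)
    return [(v - mu) / sd if sd > 0 else 0.0 for v in values]


def vertical_integrate(layers):
    """Concatenate z-scored features from several omics layers.

    layers: {layer_name: {sample_id: {feature: value}}}
    Returns (samples, feature_names, matrix), restricted to the samples
    present in every layer, with one row per sample.
    """
    shared = sorted(set.intersection(*(set(t) for t in layers.values())))
    if not shared:
        raise ValueError("no sample is present in every layer")
    names, columns = [], []
    for layer_name, table in layers.items():
        for feature in sorted(table[shared[0]]):
            names.append(f"{layer_name}:{feature}")
            columns.append(zscore([table[s][feature] for s in shared]))
    matrix = [list(row) for row in zip(*columns)]
    return shared, names, matrix


# Toy input (assumed): only S1 and S2 were profiled in both layers.
layers = {
    "rna": {"S1": {"EGFR": 5.0}, "S2": {"EGFR": 7.0}, "S3": {"EGFR": 9.0}},
    "phospho": {"S1": {"EGFR_pY": 0.2}, "S2": {"EGFR_pY": 0.4},
                "S4": {"EGFR_pY": 0.9}},
}
samples, names, matrix = vertical_integrate(layers)
print(samples, names, matrix)
```

Simple concatenation of this kind is often called early integration. The methods discussed in this section generally go further, for example by modeling each layer separately before fusing them.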

Machine learning and deep learning approaches have become indispensable for multi-omics integration, with methods such as iClusterBayes, Subtype-GAN, and Similarity Network Fusion (SNF) demonstrating strong performance in cancer subtyping applications [25]. Benchmarking studies have evaluated these methods on clustering accuracy, clinical relevance, robustness, and computational efficiency. For example, NEMO and PINS ranked highly for clinical significance, with reported log-rank-based scores of 0.78 and 0.79, respectively, in identifying meaningful cancer subtypes, while iClusterBayes achieved a silhouette score of 0.89 at its optimal k, indicating well-separated clusters [25]. The choice of integration method depends on the research question, the data types available, and the desired output; no single method performs best across all scenarios.
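For readers unfamiliar with the silhouette score used in such benchmarks, the following sketch computes it from scratch for a toy clustering. It uses the standard definition: for each point, a is the mean distance to its own cluster, b is the mean distance to the nearest other cluster, and the score is (b - a) / max(a, b). The points and labels are invented, and the function is an illustration rather than the implementation used in the cited benchmark.

```python
from math import dist
from statistics import mean


def silhouette_score(points, labels):
    """Mean silhouette coefficient using Euclidean distance."""
    clusters = {}
    for p, label in zip(points, labels):
        clusters.setdefault(label, []).append(p)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")
    scores = []
    for p, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            scores.append(0.0)  # convention for singleton clusters
            continue
        # dist(p, p) is 0, so summing over the whole cluster and dividing
        # by (size - 1) gives the mean distance to the *other* members.
        a = sum(dist(p, q) for q in own) / (len(own) - 1)
        b = min(mean(dist(p, q) for q in other)
                for key, other in clusters.items() if key != label)
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return mean(scores)


# Toy data (assumed): two tight, well-separated groups of samples.
points = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
good = silhouette_score(points, [0, 0, 1, 1])
bad = silhouette_score(points, [0, 1, 0, 1])
print(f"good labels: {good:.2f}, bad labels: {bad:.2f}")
```

Scores near 1 indicate compact, well-separated clusters, while scores near or below 0 indicate overlapping or misassigned samples. A high silhouette score says nothing about clinical relevance on its own, which is why benchmarks pair it with survival-based measures.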

Diagram 1: Multi-Omics Data Integration Workflow. This diagram illustrates the flow from major data sources through different omics data types and integration methods to research outputs.

Experimental Design Considerations for Robust Biomarker Discovery

Effective multi-omics study design requires careful consideration of multiple factors that influence analytical robustness and biological validity. Benchmarking studies using TCGA datasets have provided evidence-based recommendations for multi-omics study design (MOSD), identifying nine critical factors across computational and biological domains [23]. Computational factors include sample size, feature selection, preprocessing strategy, noise characterization, class balance, and number of classes, while biological factors encompass cancer subtype combinations, omics combinations, and clinical feature correlation.

Research indicates that robust cancer subtype discrimination requires at least 26 samples per class, with feature selection retaining fewer than 10% of omics features to reduce dimensionality while preserving biological signal [23]. Keeping the class imbalance below a 3:1 ratio and noise levels below 30% further improves analytical performance. Feature selection has been shown to improve clustering performance by up to 34%, which underscores its central role in multi-omics analysis [23]. Omics combinations should be chosen on biological rationale rather than for completeness, because combinations of two or three omics types frequently outperform configurations with four or more, owing to reduced noise and redundancy [25].
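These thresholds lend themselves to a simple pre-analysis checklist. The sketch below encodes the four numeric recommendations quoted above. The function name, argument names, and the strict reading of "under 3:1" as a ratio below 3 are assumptions made for illustration, not part of the cited guidelines.

```python
from collections import Counter


def check_design(labels, n_features_total, n_features_selected, noise_fraction):
    """Check a study design against the quoted MOSD thresholds.

    labels: one class label per sample.
    noise_fraction: estimated noise level as a fraction between 0 and 1.
    Returns a dict mapping each criterion to True (met) or False.
    """
    counts = Counter(labels)
    smallest, largest = min(counts.values()), max(counts.values())
    return {
        "min_samples_per_class": smallest >= 26,   # at least 26 per class
        "class_balance": largest / smallest < 3,   # imbalance under 3:1
        "feature_fraction": n_features_selected / n_features_total < 0.10,
        "noise_level": noise_fraction < 0.30,      # noise below 30%
    }


# Toy designs (assumed numbers).
ok = check_design(["A"] * 30 + ["B"] * 60, 20000, 1500, 0.20)
weak = check_design(["A"] * 10 + ["B"] * 40, 20000, 5000, 0.35)
print(ok)
print(weak)
```

A check like this only flags designs that fall outside the benchmarked ranges. Meeting every criterion does not by itself guarantee that subtypes will separate.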

Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for Multi-Omics Experiments

| Category | Specific Reagents/Tools | Application in Multi-Omics |
|---|---|---|
| Sequencing Reagents | Whole exome/genome sequencing kits | Genomic variant identification (mutations, CNVs, structural variants) [11] |
| | RNA sequencing library prep kits | Transcriptome profiling (mRNA, lncRNA, miRNA expression) [11] |
| | Single-cell RNA sequencing kits | Cellular heterogeneity analysis at single-cell resolution [11] |
| Proteomics Reagents | Liquid chromatography-mass spectrometry systems | Protein and phosphoprotein quantification [11] |
| | Trypsin and other proteolytic enzymes | Protein digestion for mass spectrometry analysis [11] |
| | Immunoaffinity enrichment kits | Phosphopeptide enrichment for phosphoproteomics [11] |
| Epigenomics Reagents | Whole genome bisulfite sequencing kits | DNA methylation profiling [11] |
| | ChIP-seq kits | Histone modification mapping [11] |
| Computational Tools | Seurat v5, Cell2location, Muon | Single-cell and spatial multi-omics integration [22] |
| | iCluster, MOFA, NEMO | Multi-omics factor analysis and subtype discovery [25] [22] |
| | DriverDBv4, LinkedOmics | Multi-omics database exploration and visualization [11] |

Signaling Pathways Elucidated Through Multi-Omics Integration

Multi-omics consortia have enabled unprecedented mapping of complex signaling pathways across genomic, transcriptomic, and proteomic layers, revealing how genetic alterations propagate through biological systems to drive cancer phenotypes. The vertical integration approach connects driver mutations identified through WES/WGS with downstream transcriptional dysregulation measured by RNA-seq, and ultimately with protein-level pathway activation captured by phosphoproteomics [22]. This cross-layer analysis has been particularly powerful for understanding pathway rewiring in response to targeted therapies, revealing both innate and acquired resistance mechanisms.

In lung cancer, multi-omics analyses have delineated how EGFR mutations trigger downstream signaling through MAPK and PI3K-AKT pathways, with proteogenomic data revealing compensatory signaling changes that enable resistance to EGFR inhibitors [22]. Similarly, integrated analyses of metabolic pathways have shown how IDH1/2 mutations in glioma alter the cellular metabolome through production of the oncometabolite 2-hydroxyglutarate (2-HG), which competitively inhibits α-ketoglutarate-dependent dioxygenases and reshapes the epigenome [11]. These insights have facilitated the development of combinatorial therapeutic strategies that target multiple nodes in these rewired signaling networks simultaneously.
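The competitive inhibition mentioned above can be made concrete with the standard Michaelis-Menten rate law for a competitive inhibitor. Here $[S]$ stands for α-ketoglutarate, $[I]$ for 2-HG, and $K_i$ for the inhibition constant of a given dioxygenase. These symbol assignments are illustrative assumptions, and treating the enzyme as single-substrate is a simplification, since these dioxygenases also require oxygen and Fe(II).

```latex
v = \frac{V_{\max}\,[S]}{K_m\left(1 + \dfrac{[I]}{K_i}\right) + [S]}
```

Under this model 2-HG leaves $V_{\max}$ unchanged but raises the apparent $K_m$ by the factor $1 + [I]/K_i$. Inhibition therefore becomes substantial only when 2-HG accumulates to concentrations that are high relative to $K_i$, and it can in principle be relieved by raising α-ketoglutarate.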

Diagram 2: Multi-Omics Elucidation of Signaling Pathways. This diagram shows how driver mutations identified through genomics propagate through transcriptomic and proteomic layers to drive cancer phenotypes and generate clinically applicable biomarkers.

Major multi-omics consortia including TCGA, CPTAC, and international initiatives have established a new paradigm for cancer research, generating foundational datasets that continue to drive biomarker discovery and therapeutic innovation. The integration of diverse molecular data types has revealed the complex, multidimensional nature of cancer biology, enabling molecular reclassification of tumors and identification of novel therapeutic vulnerabilities. These resources have supported the development of clinically actionable biomarkers across genomic, transcriptomic, proteomic, and epigenomic domains, advancing the implementation of precision oncology.

The future evolution of multi-omics consortia will likely incorporate emerging technologies such as single-cell multi-omics and spatial transcriptomics at larger scales, providing unprecedented resolution to study tumor heterogeneity and microenvironment interactions [11]. Longitudinal sampling designs, as implemented in initiatives like the ONCare Alliance biobank, will capture dynamic biomarker changes during treatment, enabling the identification of resistance mechanisms and adaptive signaling pathways [24]. As these datasets grow in size and complexity, advanced computational methods including artificial intelligence and deep learning will become increasingly essential for extracting biologically meaningful insights and translating them into clinical practice. The continued collaboration between basic researchers, computational biologists, and clinicians will ensure that multi-omics discoveries ultimately benefit patients through improved diagnosis, treatment selection, and outcomes in cancer care.

The complex heterogeneity of tumors, encompassing both diverse malignant cell populations and the intricate ecosystem of the tumor microenvironment (TME), represents a fundamental challenge in cancer biology and therapeutic development. Spatial and single-cell multi-omics technologies have emerged as transformative approaches that simultaneously profile multiple molecular layers (genomics, transcriptomics, epigenomics, proteomics, and metabolomics) at single-cell resolution while preserving crucial spatial context. These integrated methodologies are reshaping biomarker discovery and diagnostic research by resolving cellular diversity, cell states, and cell-cell interactions within native tissue architecture. This section examines how these technologies are uncovering novel diagnostic and prognostic biomarkers, identifying therapeutic targets, and revealing mechanisms of treatment resistance that were previously obscured by bulk tissue analysis.

Advanced multi-omics integration moves beyond traditional single-omics approaches, each of which is limited in its ability to capture the full complexity of cancer biology. As a review in Molecular Biomedicine puts it, multi-omics strategies provide "a holistic framework for constructing detailed tumor ecosystem landscapes, thereby facilitating the development of a more robust classification system for precision diagnosis and treatment" [1]. This comprehensive profiling is particularly valuable for deciphering the functional states and spatial relationships of immune and stromal cells within the TME, which critically influence disease progression and therapeutic response [26]. Combining artificial intelligence and machine learning with multi-omics data further strengthens biomarker discovery by identifying patterns in complex, high-dimensional datasets that predict diagnosis, prognosis, and treatment response [4].

Core Technologies and Methodologies

Single-Cell Multi-Omics Platforms

Single-cell technologies enable the dissection of tumor heterogeneity by characterizing individual cells across multiple molecular dimensions, moving beyond the limitations of bulk tissue analysis that averages signals across diverse cell populations.

Single-Cell Isolation and Barcoding: Critical first steps involve efficient isolation of individual cells using methods such as fluorescence-activated cell sorting (FACS), magnetic-activated cell sorting (MACS), or microfluidic technologies [17]. Following isolation, each cell's transcripts are tagged during reverse transcription and amplification with a cell-specific barcode, which identifies the cell of origin, and with unique molecular identifiers (UMIs), which identify individual molecules. Together these tags enable high-throughput parallel analysis while allowing amplification duplicates to be collapsed, minimizing technical noise [17].
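The role of barcodes and UMIs in reducing amplification noise can be sketched as follows: reads sharing the same cell barcode, gene, and UMI are assumed to be copies of one original molecule and are counted once. The barcode and UMI sequences and the read list are invented. `umi_count_matrix` is a hypothetical helper, and real pipelines additionally correct sequencing errors within barcodes and UMIs, which this sketch omits.

```python
from collections import defaultdict


def umi_count_matrix(reads):
    """Collapse PCR duplicates into per-cell, per-gene molecule counts.

    reads: iterable of (cell_barcode, umi, gene) tuples, one per aligned read.
    Returns {cell_barcode: {gene: number_of_distinct_umis}}.
    """
    seen = defaultdict(set)
    for cell, umi, gene in reads:
        seen[(cell, gene)].add(umi)  # duplicate UMIs are absorbed by the set
    counts = defaultdict(dict)
    for (cell, gene), umis in seen.items():
        counts[cell][gene] = len(umis)
    return dict(counts)


# Toy reads (assumed): the first two are PCR duplicates of one molecule.
reads = [
    ("AAAC", "TTGCA", "EGFR"),
    ("AAAC", "TTGCA", "EGFR"),
    ("AAAC", "GGATC", "EGFR"),
    ("AAAC", "TTGCA", "TP53"),
    ("CCGT", "TTGCA", "EGFR"),
]
counts = umi_count_matrix(reads)
print(counts)
```

Note that the same UMI sequence may legitimately appear in different cells or on different genes, which is why deduplication is keyed on the full (cell, gene, UMI) combination.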

Multi-Omic Profiling Modalities:

- Single-Cell RNA Sequencing (scRNA-seq) characterizes gene expression programs and identifies rare cell types and intermediate cell states through platforms such as Drop-seq and 10x Genomics [17].

- Single-Cell DNA Sequencing (scDNA-seq) profiles genomic landscapes, including copy number variations and single nucleotide variants, using whole-genome amplification techniques [17].

- Single-Cell Epigenomics includes methods such as scATAC-seq for chromatin accessibility mapping, bisulfite sequencing for DNA methylation profiling, and scCUT&Tag for histone modification mapping [17].

- Single-Cell Proteomics utilizes antibody-derived tags in conjunction with sequencing to quantify protein abundance at single-cell resolution [17].

Spatial Multi-Omics Technologies

Spatial multi-omics technologies preserve the architectural context of tissues while providing multi-dimensional molecular data, enabling researchers to map cellular interactions within the tumor microenvironment.

Spatial Transcriptomics (ST) Approaches:

- In Situ Barcoding (ISB): Methods including 10x Genomics Visium, Slide-seq, and DBiT-seq utilize DNA-barcoded arrays or microfluidic channels to capture and label mRNA directly in tissue sections [27].

- In Situ Sequencing (ISS): Technologies such as STARmap and FISSEQ perform targeted or untargeted sequencing directly in fixed tissues without tissue transfer [27].

- In Situ Hybridization (ISH): Multiplexed error-robust FISH (MERFISH) and sequential FISH (seqFISH) use combinatorial labeling to detect hundreds to thousands of RNA species with subcellular resolution [27].

- Laser Capture Microdissection (LCM): Techniques including Geo-seq and LCM-seq isolate specific tissue regions for subsequent RNA sequencing [27].
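The error-robust combinatorial labeling used by MERFISH-style methods can be illustrated with a toy decoder. Each RNA species is assigned a binary codeword whose bits correspond to imaging rounds. Because codewords are chosen to differ from one another in at least four positions, a readout with a single flipped bit can still be assigned unambiguously. The 8-bit codebook and its gene assignments below are invented for illustration and are not taken from any published panel.

```python
def hamming(a, b):
    """Number of positions at which two equal-length bit strings differ."""
    return sum(x != y for x, y in zip(a, b))


def decode(bits, codebook, max_errors=1):
    """Assign a measured bit pattern to a gene, tolerating a few bit errors.

    Returns the gene name, or None if no codeword (or more than one)
    lies within max_errors of the measurement.
    """
    matches = [gene for gene, code in codebook.items()
               if hamming(bits, code) <= max_errors]
    return matches[0] if len(matches) == 1 else None


# Hypothetical codebook: every pair of codewords differs in >= 4 bits.
codebook = {
    "EGFR": "11110000",
    "TP53": "00001111",
    "IDH1": "11001100",
}

exact = decode("11110000", codebook)      # perfect readout
corrected = decode("11110100", codebook)  # one bit flipped
rejected = decode("11111100", codebook)   # two bits off: ambiguous
print(exact, corrected, rejected)
```

Discarding readouts that are two or more bits away from every codeword trades some sensitivity for a lower misidentification rate, which is the design choice that the "error-robust" label refers to.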

Spatial Proteomics and Metabolomics: