Multi-Omics Integration: Revolutionizing Personalized Medicine from Discovery to Clinical Application

This article provides a comprehensive overview of how multi-omics approaches are transforming personalized medicine strategies for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive overview of how multi-omics approaches are transforming personalized medicine strategies for researchers, scientists, and drug development professionals. It explores the foundational principles of integrating genomics, transcriptomics, proteomics, and metabolomics data to understand individual health profiles. The content examines advanced computational methodologies including machine learning and AI for data integration, addresses critical challenges in data complexity and standardization, and evaluates validation frameworks through case studies in oncology, cardiovascular, and neurological diseases. By synthesizing current research and emerging trends, this resource offers actionable insights for implementing multi-omics strategies across the therapeutic development pipeline.

The Multi-Omics Landscape: Building a Comprehensive Framework for Personalized Medicine

Multi-omics represents a paradigm shift in biomedical research, moving beyond single-layer biological analysis to a holistic, systems-level approach. By integrating data from several molecular layers (the genome, epigenome, transcriptome, proteome, and metabolome), it provides a comprehensive view of the complex mechanisms underlying health and disease. This guide details the core components of multi-omics, the methodologies for data integration, and the computational tools driving its application. Framed within the context of personalized medicine, it shows how multi-omics strategies are changing the discovery of biomarkers and therapeutic targets and enabling precise, individualized diagnostic and treatment strategies.

Traditional biological research has often relied on a single-omics approach, studying one molecular layer in isolation (e.g., only genomics or only transcriptomics). While valuable, this method can only provide a partial and often disconnected view of a system's biology, as it fails to capture the complex, dynamic interactions between different molecular levels [1]. Multi-omics is the concerted approach in which the data from multiple "omes" are combined to study life in an integrated fashion [2]. This synergy is foundational to systems medicine, which views disease not as an aberration of a single gene or protein, but as a perturbation within a complex, interconnected biological network [3].

The central hypothesis of multi-omics is that by layering different types of molecular data, scientists can construct a coherent map of the geno-pheno-envirotype relationships, thereby identifying novel associations, pinpointing robust biomarkers, and understanding the functional mechanisms driving physiology and disease [2] [4]. This approach is particularly crucial for personalized medicine, where the goal is to tailor medical treatment to the individual characteristics of each patient by understanding their unique genetic, molecular, and biochemical profile [5] [3].

The Core Components of a Multi-Omics Approach

A multi-omics analysis leverages several high-throughput technologies to probe different levels of biological information. The primary omics layers are summarized in the table below.

Table 1: The Core Omes in Multi-Omics Analysis

| Omic Layer | Molecular Entity Analyzed | Key Technologies | Primary Insight Provided |
|---|---|---|---|
| Genomics | DNA sequence, structural variants | Next-Generation Sequencing (NGS), GWAS, Whole Genome Sequencing [3] [1] | Genetic blueprint, inherited variations, and mutations associated with disease susceptibility. |
| Epigenomics | Chemical modifications to DNA/histones (e.g., methylation) | Bisulfite Sequencing, ChIP-Seq, ATAC-Seq [6] [1] | Heritable regulation of gene expression activity without changing the DNA sequence. |
| Transcriptomics | All RNA transcripts (mRNA, non-coding RNA) | RNA-Seq, Single-Cell RNA-Seq (scRNA-seq) [6] [4] | Dynamic gene expression patterns and the bridge between the genome and the functional cellular state. |
| Proteomics | Proteins, their structures, modifications, and abundances | Mass Spectrometry (MS), Affinity Proteomics, Proximity Extension Assays (PEA) [6] [4] | Functional executors of cellular processes, including post-translational modifications critical for signaling. |
| Metabolomics | Small-molecule metabolites (e.g., sugars, lipids, amino acids) | Mass Spectrometry (MS), Nuclear Magnetic Resonance (NMR) [7] [4] | Downstream readout of cellular activity and the ultimate response to physiological and pathophysiological changes. |

More recent advancements have further refined the resolution of multi-omics studies:

- Single-Cell Multi-Omics: This branch allows for the analysis of multiple omic layers (e.g., genome and transcriptome, or transcriptome and epigenome) at the level of individual cells. This mitigates confounding factors from cell-to-cell variation and uncovers heterogeneous tissue architectures that are lost in bulk tissue analysis [2] [7].

- Spatial Multi-Omics: These technologies profile the molecular features of cells while preserving their spatial location within a tissue. This provides critical context about the local microenvironment, cell-cell interactions, and the architectural organization of tissues, which is vital for understanding diseases like cancer and neurodegenerative disorders [1] [7].

Methodologies for Multi-Omic Data Collection and Integration

Combined Multi-Omic Data Collection Workflows

A significant innovation in the field is the move towards simultaneous extraction and analysis of multiple molecular classes from a single sample. This reduces technical variation, processing time, and sample requirements compared to traditional methods that process samples separately [2]. Key technologies include:

- TRIzol-based Sequential Isolation: A reagent traditionally used for RNA isolation that can be adapted to sequentially extract DNA, RNA, proteins, and metabolites from a single sample [2].

- Multi-Omic Single-Shot Technology (MOST): Integrates proteome and lipidome analysis in a single liquid chromatography-mass spectrometry (LC-MS) run [2].

- Omni-MS: A proprietary multi-omic assay that simultaneously profiles proteins, lipids, electrolytes, and metabolites in a single preparation and single LC-MS analysis, which has been applied to biomarker discovery in conditions like COVID-19 and 22q11.2 deletion syndrome [2].

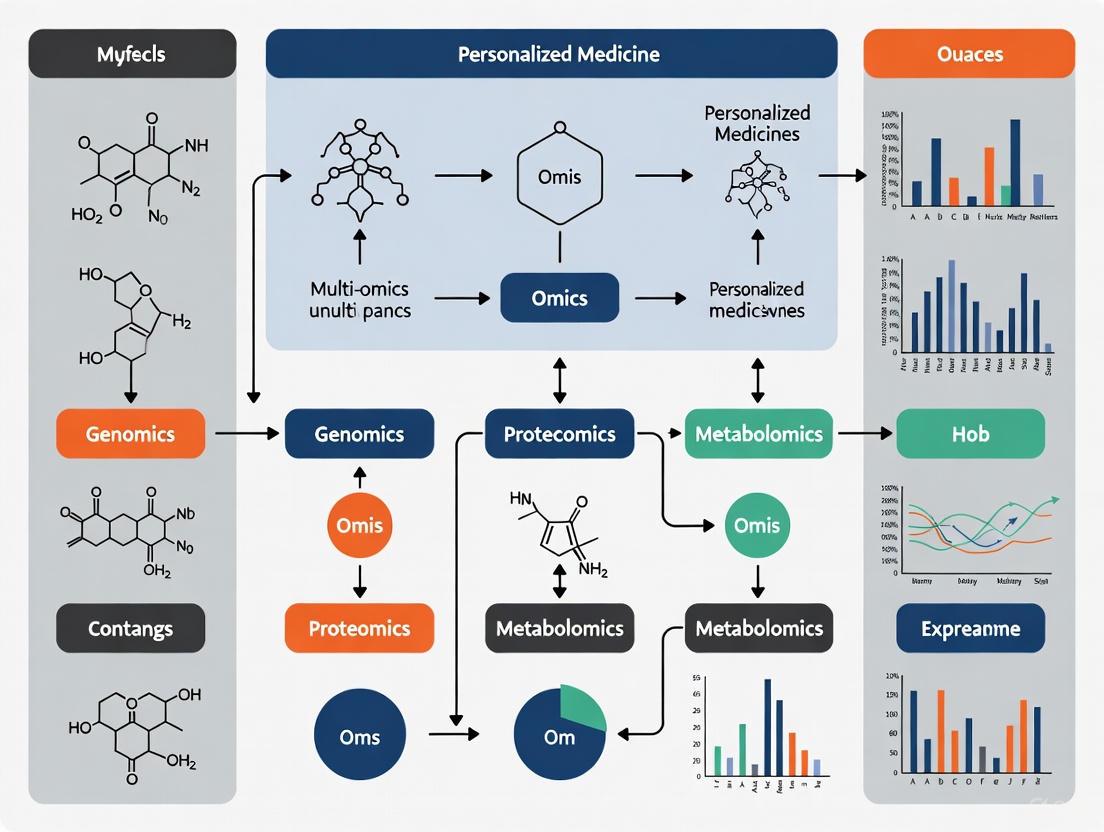

The following diagram illustrates a generalized workflow for a multi-omics study, from sample to insight.

Diagram 1: Generalized Multi-Omics Workflow.

Data Integration Strategies and Computational Tools

Data integration is the most critical and challenging step in multi-omics analysis, often requiring sophisticated computational and statistical methods [1] [4]. The integration strategy can be broadly categorized as follows:

- Horizontal Integration: Combines the same type of omics data from different studies or cohorts to increase statistical power.

- Vertical Integration: Combines different types of omics data (e.g., genomics, proteomics) from the same individuals to understand causal pathways across biological layers [8].
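The difference between the two strategies can be sketched with plain dictionaries. This is a minimal illustration, assuming each dataset is keyed by sample ID; the cohort names, gene symbols, values, and the helper functions `integrate_horizontal` and `integrate_vertical` are hypothetical and not taken from any cited tool.

```python
# Hypothetical toy data: sample ID -> {feature: value}. All names are illustrative.
cohort_a_rna = {"P1": {"TP53": 5.2, "EGFR": 7.1}, "P2": {"TP53": 4.8, "EGFR": 6.3}}
cohort_b_rna = {"P3": {"TP53": 6.0, "EGFR": 5.9}}
cohort_a_protein = {"P1": {"p53": 1.4}, "P2": {"p53": 0.9}}


def integrate_horizontal(*cohorts):
    """Stack samples from several cohorts that measured the same omics layer."""
    merged = {}
    for cohort in cohorts:
        overlap = merged.keys() & cohort.keys()
        if overlap:
            raise ValueError(f"duplicate sample IDs: {sorted(overlap)}")
        merged.update(cohort)
    return merged


def integrate_vertical(**layers):
    """Join different omics layers on the samples they share.

    Feature names are prefixed with the layer name so that a transcript
    and a protein with the same symbol stay distinct.
    """
    shared = set.intersection(*(set(layer) for layer in layers.values()))
    return {
        sample: {
            f"{name}:{feature}": value
            for name, layer in layers.items()
            for feature, value in layer[sample].items()
        }
        for sample in sorted(shared)
    }


horizontal = integrate_horizontal(cohort_a_rna, cohort_b_rna)
vertical = integrate_vertical(rna=cohort_a_rna, protein=cohort_a_protein)
print(sorted(horizontal))  # sample IDs pooled from both cohorts
print(vertical["P1"])      # RNA and protein features for one patient
```

Horizontal integration adds rows (more samples for the same features), whereas vertical integration adds columns (more features for the same samples), which is why the latter requires matched sample identifiers.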

Machine learning (ML) and artificial intelligence (AI) are increasingly central to interpreting complex multi-omics data [2] [4]. Key applications include:

- Dimensionality Reduction: Techniques like Principal Component Analysis (PCA) and t-Distributed Stochastic Neighbor Embedding (t-SNE) are used to visualize high-dimensional data in lower dimensions [7].

- Biomarker Discovery: Multivariate methods like sparse Partial Least Squares (sPLS) and Regularized Generalized Canonical Correlation Analysis (RGCCA) can identify features (putative biomarkers) that are correlated across different omics datasets [2].

- Predictive Modeling: ML algorithms (e.g., random forests, support vector machines) and deep learning models are used to predict disease subtypes, patient prognosis, and treatment response based on integrated multi-omics profiles [8] [7].
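The idea behind cross-omics biomarker discovery, finding features that co-vary across layers, can be illustrated with a plain pairwise Pearson correlation. This is a deliberately simple stand-in, not sPLS or RGCCA, and the feature names and values are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical measurements for the same five patients (same order in every list).
transcripts = {"GENE_A": [1.0, 2.0, 3.0, 4.0, 5.0], "GENE_B": [5.0, 3.0, 4.0, 1.0, 2.0]}
metabolites = {"MET_X": [2.1, 3.9, 6.2, 8.0, 9.8], "MET_Y": [1.0, 1.2, 0.9, 1.1, 1.0]}

# Rank every transcript-metabolite pair by absolute correlation.
pairs = sorted(
    (
        (gene, met, pearson(g, m))
        for gene, g in transcripts.items()
        for met, m in metabolites.items()
    ),
    key=lambda item: abs(item[2]),
    reverse=True,
)
for gene, met, r in pairs:
    print(f"{gene} ~ {met}: r = {r:+.2f}")
```

With thousands of features per layer, exhaustive pairwise testing produces many spurious hits, which is one reason sparse multivariate methods such as sPLS are preferred in practice.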

Table 2: Key Software and Tools for Multi-Omic Analysis

| Tool/Package Name | Primary Function | Access/Platform |
|---|---|---|
| mixOmics [2] | Suite of multivariate methods for data integration (e.g., sPLS). | R/Bioconductor |
| RGCCA [2] | Flexible statistical framework for heterogeneous data integration. | R/CRAN |
| MultiAssayExperiment [2] | Bioconductor interface for managing and analyzing overlapping multi-omics samples. | R/Bioconductor |
| PaintOmics [2] | Web-based resource for visualization of multi-omics datasets. | Web application |
| Illumina Connected Multiomics [6] | Integrated software for multiomic data analysis and visualization. | Commercial Platform |

Multi-Omics in Personalized Medicine: Translating Data into Clinical Strategy

The integration of multi-omics is a cornerstone of modern precision medicine, enabling a move from a reactive "one-size-fits-all" approach to a proactive, personalized healthcare model [5] [3]. Its clinical applications are vast and impactful:

- Precision Diagnostics and Biomarker Discovery: Multi-omics allows for precise disease classification by identifying unique molecular signatures. For example, The Cancer Genome Atlas (TCGA) has used multi-omics to characterize over 11,000 cancer samples, leading to the discovery of new biomarkers and therapeutic targets [7]. Multi-omics strategies are also being used to improve the molecular classification of gliomas, enhancing diagnostic precision and prognostic accuracy [9].

- Targeted Therapies and Drug Development: By identifying specific molecular targets and understanding mechanisms of drug response, multi-omics informs the development of targeted therapies. This is evident in oncology with drugs like HER2 inhibitors for breast cancer and EGFR inhibitors for lung cancer, which are prescribed based on the tumor's genetic profile [5] [8].

- Predictive Modeling for Treatment Response: Machine learning models applied to multi-omics data can predict an individual patient's response to a specific treatment, optimizing therapeutic efficacy and minimizing adverse effects [5] [7]. This has been applied in systems vaccinology to understand the immune response to vaccines [2].

- Disease Prevention: Understanding the genetic and molecular basis of diseases through multi-omics allows for the development of preventive strategies tailored to an individual's unique risk profile [7].

The following diagram conceptualizes how multi-omics data informs the personalized medicine feedback loop.

Diagram 2: Multi-Omics in the Personalized Medicine Cycle.

The Scientist's Toolkit: Essential Reagents and Technologies

Successful multi-omics research relies on a suite of reliable reagents and platforms. The following table details key solutions used in featured experiments and workflows.

Table 3: Research Reagent Solutions for Multi-Omics Experiments

| Item/Technology | Function in Multi-Omics Workflow | Example Application |
|---|---|---|
| NovaSeq X Series Sequencer [6] | Production-scale sequencing platform for generating high-throughput genomic, transcriptomic, and epigenomic data. | Enables broad and deep coverage for comprehensive multi-omic profiling. |
| Illumina DNA Prep Kit [6] | Prepares sequencing-ready libraries from DNA samples. | Used in the genomics arm of a multi-omics workflow for variant discovery. |
| Single Cell 3' RNA Prep Kit [6] | Enables accessible and scalable single-cell RNA sequencing for transcriptomic analysis. | Used to profile gene expression and cellular heterogeneity at single-cell resolution. |
| ApoStream Technology [10] | Proprietary platform for isolating viable whole cells from liquid biopsies. | Preserves cellular morphology for downstream multi-omic analysis of circulating tumor cells. |
| Proximity Extension Assay (PEA) [2] | Technology using DNA-barcoded antibodies for highly multiplexed protein detection. | Allows for integration of proteomic and transcriptomic data, often at the single-cell level. |
| TRIzol Reagent [2] | Monophasic reagent for the simultaneous isolation of RNA, DNA, and proteins from a single sample. | Reduces sample requirement and technical variation in multi-omic studies. |

Challenges and Future Directions

Despite its transformative potential, the widespread adoption of multi-omics in clinical practice faces several hurdles:

- Data Complexity and Integration: Harmonizing vast, heterogeneous datasets from different omics platforms remains a significant computational challenge [7] [4].

- Cost and Accessibility: High-throughput multi-omics technologies can be expensive, limiting access for some research groups and healthcare systems [7].

- Standardization and Reproducibility: A lack of standardized protocols from sample collection to data analysis can affect reproducibility and hinder clinical translation [7] [10].

- Data Privacy and Ethical Considerations: The use of sensitive genetic and health information raises important questions about data privacy, security, and ethical usage [5].

- Clinical Implementation and Interpretation: Translating complex multi-omics findings into actionable clinical insights requires robust validation and training for clinicians [7].

The future of multi-omics lies in addressing these challenges through technological refinement, collaborative efforts, and the development of more user-friendly analytical tools. The continued evolution of single-cell and spatial multi-omics technologies, coupled with more powerful AI-driven integration algorithms, will further deepen our understanding of human biology and accelerate the realization of truly personalized systems medicine [8] [3] [4].

Precision medicine represents a transformative healthcare model that leverages an individual's genomic, environmental, and lifestyle data to deliver customized healthcare [3]. This approach has shifted medicine from a conventional, reactive disease control model toward proactive prevention and health preservation. The foundation of this transformation lies in the "omics" revolution—high-throughput technologies that enable comprehensive measurement of biological molecules at unprecedented scale and resolution [11].

Integrative multi-omics, the combination of multiple biological data layers, provides a more complete understanding of human health and disease than any single approach can offer separately [3]. By combining genomics, transcriptomics, proteomics, and metabolomics with advanced computational methods, researchers can now decipher the complex interactions between genes, proteins, and metabolites that drive health and disease states, enabling more precise diagnostic, prognostic, and therapeutic strategies [9] [11].

Core Omics Technologies: From Blueprint to Function

Genomics: The Biological Blueprint

Genomics involves the systematic study of an organism's complete set of DNA, including all of its genes and intergenic regions [12]. The primary goal of genomics is to identify the physiological functions of genes and their roles in disease susceptibility. Single nucleotide polymorphisms (SNPs) serve as the most commonly used markers for disease association studies [12]. Modern array-based genotyping techniques allow simultaneous assessment of up to one million SNPs per assay, enabling genome-wide association studies (GWAS) that scan the entire genome for disease-linked variants [12].
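The core of a single-SNP association test can be sketched as a chi-square test on a 2×2 table of allele counts in cases and controls. The counts below are hypothetical, and the function name `allelic_chi_square` is illustrative; a real GWAS would repeat this kind of test across the genome and adjust for covariates and population structure.

```python
def allelic_chi_square(case_alt, case_ref, control_alt, control_ref):
    """Pearson chi-square statistic (1 degree of freedom) for a 2x2 allele table."""
    table = [[case_alt, case_ref], [control_alt, control_ref]]
    total = case_alt + case_ref + control_alt + control_ref
    row_sums = [sum(row) for row in table]
    col_sums = [case_alt + control_alt, case_ref + control_ref]
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_sums[i] * col_sums[j] / total
            chi2 += (table[i][j] - expected) ** 2 / expected
    return chi2


# Hypothetical allele counts for one SNP (two alleles per person).
chi2 = allelic_chi_square(case_alt=60, case_ref=140, control_alt=40, control_ref=160)
odds_ratio = (60 * 160) / (140 * 40)

# 3.841 is the chi-square critical value for p = 0.05 with 1 degree of freedom.
# Testing many SNPs requires a far stricter threshold to control false positives.
print(f"chi2 = {chi2:.2f}, odds ratio = {odds_ratio:.2f}, nominal hit = {chi2 > 3.841}")
```

Because roughly a million markers are tested at once, a nominal p < 0.05 is not meaningful on its own; genome-wide studies conventionally require a much smaller p-value before reporting an association.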

Table 1: Genomics Technologies and Applications

| Aspect | Description |
|---|---|
| Primary Focus | Analysis of DNA sequence, structure, and variation |
| Key Technology | Next-generation sequencing (NGS), Sanger sequencing |
| Common Parameters | SNPs, copy number variations (CNVs), structural variants |
| Applications | Identification of disease-associated genes, genetic risk assessment |

The Human Genome Project, completed in 2003, established the reference human genome sequence and revealed that humans possess only 20,000-25,000 protein-coding genes [3]. While the Sanger sequencing method used in this project provided excellent accuracy, newer NGS technologies enable massively parallel sequencing with dramatically increased throughput and reduced cost [3]. These advances have made large-scale genomic studies feasible and have provided the foundation for precision medicine approaches.

Transcriptomics: Dynamic Gene Expression

Transcriptomics provides a quantitative overview of the mRNA transcripts present in a biological sample at the time of collection, offering insights into which genes are actively being expressed [12]. Unlike the relatively static genome, the transcriptome is highly dynamic, changing in response to environmental conditions, developmental stages, and disease states [12]. Gene expression profiling studies typically compare expression patterns between groups with different phenotypes (e.g., disease state versus healthy controls) to identify differentially expressed genes [12].
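The group comparison described above usually starts from an effect size such as the log2 fold change. The following sketch computes it for hypothetical normalized expression values; the gene names, values, and the two-fold cutoff are assumptions for illustration, and it omits the statistical testing that dedicated differential expression tools perform.

```python
import math
from statistics import mean


def log2_fold_change(disease, control, pseudocount=1.0):
    """log2 ratio of mean expression; the pseudocount avoids division by zero."""
    return math.log2((mean(disease) + pseudocount) / (mean(control) + pseudocount))


# Hypothetical normalized expression values (three samples per group).
expression = {
    "GENE_UP": {"disease": [30.0, 33.0, 30.0], "control": [7.0, 6.0, 8.0]},
    "GENE_FLAT": {"disease": [10.0, 11.0, 9.0], "control": [10.0, 9.0, 11.0]},
    "GENE_DOWN": {"disease": [1.0, 0.0, 2.0], "control": [15.0, 14.0, 16.0]},
}

# Flag genes whose mean expression changes at least two-fold in either direction.
for gene, groups in expression.items():
    lfc = log2_fold_change(groups["disease"], groups["control"])
    status = "changed" if abs(lfc) >= 1.0 else "unchanged"
    print(f"{gene}: log2FC = {lfc:+.2f} ({status})")
```

A fold change alone ignores within-group variability, so it is normally paired with a significance test and multiple-testing correction before a gene is called differentially expressed.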

Table 2: Transcriptomics Technologies and Applications

| Aspect | Description |
|---|---|
| Primary Focus | Analysis of complete set of RNA transcripts |
| Key Technology | RNA sequencing (RNA-seq), microarrays |
| Common Parameters | Gene expression levels, alternative splicing, non-coding RNA |
| Applications | Identification of differentially expressed genes, pathway activation |

The connection between transcriptomics and genomics is fundamental—while genomics reveals the potential of what might occur based on genetic code, transcriptomics reveals what is actually being executed from that code in specific contexts. This dynamic information is crucial for understanding how genetic variations manifest in different tissue types, disease states, and in response to treatments [13].

Proteomics: The Functional Effectors

Proteomics involves the large-scale study of proteins, including their expression levels, modifications, and interactions [12]. The proteome consists of all proteins present in specific cell types or tissues and is highly variable over time and in response to environmental changes [12]. Although every protein is translated from an mRNA template, post-translational modifications (PTMs) and environmental interactions mean that protein behavior cannot be predicted from gene expression analysis alone [12]. Mass spectrometry (MS) is the most common analytical platform for proteomic studies, though protein microarrays that use capture agents such as antibodies are also employed [12].

Table 3: Proteomics Technologies and Applications

| Aspect | Description |
|---|---|
| Primary Focus | Analysis of protein expression, structure, function, and interactions |
| Key Technology | Mass spectrometry, protein microarrays |
| Common Parameters | Protein abundance, post-translational modifications, protein-protein interactions |
| Applications | Biomarker discovery, drug target identification, signaling pathway analysis |

The proteome provides a more direct representation of cellular function than transcriptomic data, as proteins serve as the primary functional actors in biological systems. Proteomic analyses can identify not only which proteins are present but also how they are modified through processes like phosphorylation, acetylation, and glycosylation, which dramatically alter protein function [13].

Metabolomics: The Physiological Phenotype

Metabolomics focuses on the comprehensive analysis of small-molecule metabolites (typically <1 kDa) within a biological system [12]. The metabolome includes metabolic intermediates, hormones, signaling molecules, and other biochemical entities that represent the end products of cellular processes [14]. Metabolic phenotypes are the by-products of interactions between genetic, environmental, lifestyle, and other factors, making metabolomics the most direct readout of a system's physiological state [13]. The metabolome is highly variable and time-dependent, consisting of a wide range of chemical structures that present analytical challenges [12].

Table 4: Metabolomics Technologies and Applications

| Aspect | Description |
|---|---|
| Primary Focus | Analysis of complete set of small-molecule metabolites |
| Key Technology | Mass spectrometry (MS), nuclear magnetic resonance (NMR) |
| Common Parameters | Metabolite identification and quantification, metabolic pathway analysis |
| Applications | Biomarker discovery, toxicology studies, nutrient metabolism |

Metabolomics provides a unique window into the current physiological state of an organism, as metabolite concentrations can change rapidly in response to perturbations, treatments, or disease progression. This responsiveness makes metabolomics particularly valuable for monitoring treatment responses and disease dynamics in precision medicine applications [14].

Multi-Omics Integration Strategies and Methodologies

Data Integration Approaches

Integrating data across multiple omics layers presents significant computational and analytical challenges due to the heterogeneity, scale, and complexity of the datasets [11]. Three primary strategies have emerged for multi-omics integration:

Early integration combines all features from different omics datasets into one massive dataset before analysis. This approach preserves all raw information and can capture complex, unforeseen interactions between modalities but suffers from extremely high dimensionality and computational intensity [11].

Intermediate integration first transforms each omics dataset into a more manageable representation, then combines these representations. Network-based methods exemplify this approach: a biological network is constructed for each omics layer (e.g., gene co-expression, protein-protein interactions), and the networks are subsequently integrated to reveal the functional relationships and modules driving disease [11].

Late integration builds separate predictive models for each omics type and combines their predictions at the end. This ensemble approach is robust, computationally efficient, and handles missing data well, but may miss subtle cross-omics interactions not strong enough to be captured by any single model [11].
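The contrast between early and late integration can be made concrete with a small sketch. It assumes per-patient feature vectors and per-layer predicted probabilities that are entirely hypothetical; `early_integration` and `late_integration` are illustrative helper names, and simple averaging is only one of several ways to combine model outputs.

```python
# Hypothetical per-patient feature vectors for two omics layers.
rna = {"P1": [0.2, 1.5], "P2": [0.9, 0.3]}
protein = {"P1": [3.1], "P2": [2.2]}


def early_integration(*layers):
    """Concatenate feature vectors for samples present in every layer."""
    shared = set.intersection(*(set(layer) for layer in layers))
    return {s: [v for layer in layers for v in layer[s]] for s in sorted(shared)}


def late_integration(predictions):
    """Average per-layer predicted probabilities, skipping layers with no prediction."""
    available = [p for p in predictions.values() if p is not None]
    if not available:
        raise ValueError("no layer produced a prediction")
    return sum(available) / len(available)


combined_features = early_integration(rna, protein)
print(combined_features)

# Stand-in outputs of three separately trained (hypothetical) models for one patient;
# the metabolomics sample is missing, which late integration tolerates.
ensemble_score = late_integration({"rna": 0.80, "protein": 0.60, "metabolome": None})
print(f"ensemble probability: {ensemble_score:.2f}")
```

The early route hands one wide matrix to a single model, so dimensionality grows with every added layer; the late route keeps each model small and degrades gracefully when a layer is missing, at the cost of never seeing cross-layer feature interactions.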

Computational and AI-Based Integration Tools

Advanced computational methods are essential for effective multi-omics integration. Several software tools and platforms have been developed to support different integration approaches:

Table 5: Multi-Omics Integration Tools and Methods

| Tool/Method | Approach | Key Features | Applications |
|---|---|---|---|
| IMPALA | Pathway-based | Integrated pathway-level analysis from gene/protein expression and metabolomics data | Identification of additional pathways from combined datasets |
| iPEAP | Pathway-based | Pathway enrichment analysis integrating multiple omic platforms | Supports transcriptomics, proteomics, metabolomics, and GWAS data |
| MetaboAnalyst | Pathway-based | Comprehensive metabolomics data processing and functional enrichment analysis | Integrated pathway analysis from gene expression and metabolomics data |
| SAMNetWeb | Network-based | Generates biological networks representing changes in protein and gene expression | Integrated network and pathway enrichment analysis |
| mixOmics | Correlation-based | Multivariate analysis and visualization for comparing heterogeneous datasets | Dimensionality reduction, multilevel analysis |
| WGCNA | Correlation-based | Correlation and network topology analysis with hierarchical clustering | Gene co-expression network analysis |

Artificial intelligence and machine learning have become indispensable for multi-omics integration, with several state-of-the-art techniques showing particular promise [11]:

Autoencoders and Variational Autoencoders are unsupervised neural networks that compress high-dimensional omics data into lower-dimensional "latent spaces," making integration computationally feasible while preserving key biological patterns [11].

Graph Convolutional Networks are designed for network-structured data and can learn from biological networks where genes and proteins represent nodes and their interactions represent edges. These have proven effective for clinical outcome prediction by integrating multi-omics data onto biological networks [11].

Similarity Network Fusion creates patient-similarity networks from each omics layer and iteratively fuses them into a single comprehensive network, strengthening robust similarities and removing weak ones for more accurate disease subtyping [11].
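The first step of the patient-similarity approach, building one similarity network per omics layer, can be sketched as follows. Note the simplification: the networks are combined here by a plain element-wise average, which is not the iterative cross-network update that Similarity Network Fusion performs. The profiles, the Gaussian kernel width `sigma`, and the function names are assumptions for illustration.

```python
import math


def similarity_matrix(profiles, sigma=1.0):
    """Gaussian-kernel similarity between every pair of patients in one omics layer."""
    ids = sorted(profiles)
    matrix = {}
    for a in ids:
        for b in ids:
            dist_sq = sum((x - y) ** 2 for x, y in zip(profiles[a], profiles[b]))
            matrix[a, b] = math.exp(-dist_sq / (2 * sigma ** 2))
    return matrix


def average_networks(*matrices):
    """Element-wise mean of similarity matrices (NOT the iterative SNF update)."""
    return {pair: sum(m[pair] for m in matrices) / len(matrices) for pair in matrices[0]}


# Hypothetical profiles: P1 and P2 resemble each other in both layers, P3 differs.
rna = {"P1": [1.0, 2.0], "P2": [1.1, 2.1], "P3": [4.0, 0.5]}
methylation = {"P1": [0.2], "P2": [0.3], "P3": [0.9]}

fused = average_networks(similarity_matrix(rna), similarity_matrix(methylation))
print(f"P1-P2: {fused['P1', 'P2']:.2f}  P1-P3: {fused['P1', 'P3']:.2f}")
```

Pairs that are similar in every layer keep a high fused score, while a pair that looks alike in only one layer is pulled down, which is the intuition behind using fused networks for subtyping.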

Experimental Workflows and Methodologies

Integrated Multi-Omics Workflow

[Diagram: generalized workflow for integrated multi-omics studies, from sample collection through data integration and interpretation.]

Detailed Methodologies for Core Omics Layers

Genomics Experimental Protocol: DNA extraction typically begins with cell lysis, followed by removal of proteins and RNA, and final DNA purification. For whole-genome sequencing, DNA is fragmented, adapters are ligated, and fragments are amplified and sequenced using NGS platforms like Illumina's NovaSeq technology, which can generate 20-52 billion reads per run with read lengths up to 2×250 base pairs [3]. Variant calling involves alignment to reference genomes (e.g., GRCh38) followed by identification of SNPs, indels, and structural variants using tools like GATK or DeepVariant [3].

Transcriptomics Experimental Protocol: RNA extraction must preserve RNA integrity, typically using guanidinium thiocyanate-phenol-chloroform extraction. RNA quality is assessed (RIN > 8), followed by library preparation including poly-A selection or rRNA depletion, cDNA synthesis, and adapter ligation. Sequencing is performed on platforms like Illumina, with subsequent analysis including quality control (FastQC), alignment (STAR, HISAT2), quantification (featureCounts), and differential expression analysis (DESeq2, edgeR) [12] [13].

Proteomics Experimental Protocol: Protein extraction involves cell lysis with detergent-based buffers, quantification, and digestion with trypsin. Liquid chromatography-tandem mass spectrometry (LC-MS/MS) separates peptides which are ionized and fragmented, with mass-to-charge ratios recorded. Data analysis includes peptide identification (MaxQuant, Proteome Discoverer), quantification (label-free or isobaric tagging), and statistical analysis to identify differentially expressed proteins [12] [13].
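The trypsin digestion step can be mimicked in silico, which is how search engines generate candidate peptides to match against spectra. The sketch below applies the commonly used rule that trypsin cleaves after lysine (K) or arginine (R) unless the next residue is proline (P); the protein sequence is hypothetical, and missed cleavages are not modeled.

```python
def tryptic_digest(sequence):
    """Split a protein sequence after K or R, except when followed by P."""
    peptides, start = [], 0
    for i, residue in enumerate(sequence):
        next_residue = sequence[i + 1] if i + 1 < len(sequence) else ""
        if residue in "KR" and next_residue != "P":
            peptides.append(sequence[start:i + 1])
            start = i + 1
    if start < len(sequence):
        # Keep the C-terminal remainder when the sequence does not end in K or R.
        peptides.append(sequence[start:])
    return peptides


# Hypothetical sequence chosen to show the proline exception (the "RP" site is not cut).
print(tryptic_digest("MKTAYRPGLKSEER"))  # ['MK', 'TAYRPGLK', 'SEER']
```

Predictable cleavage sites are what make peptide identification tractable: the search space is limited to peptides that the enzyme could plausibly have produced.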

Metabolomics Experimental Protocol: Metabolite extraction uses methanol:acetonitrile:water mixtures to precipitate proteins while maintaining metabolite stability. Analysis employs either LC-MS (for broad coverage) or GC-MS (for volatile compounds), with NMR providing structural information. Data processing includes peak detection, alignment, and annotation using databases like HMDB, followed by statistical analysis to identify differential metabolites [12] [13].
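The annotation step in the metabolomics protocol typically matches an observed mass-to-charge ratio against reference masses within a parts-per-million tolerance. The sketch below assumes positive-mode [M+H]+ ions, a 10 ppm window, and a three-entry stand-in library with approximate monoisotopic masses; the observed peaks are hypothetical, and real annotation would also use retention time and fragmentation data.

```python
PROTON_MASS = 1.007276  # mass added for an [M+H]+ ion, in daltons

# Approximate monoisotopic masses (Da) for a tiny stand-in reference library;
# a real workflow would query a database such as HMDB instead.
REFERENCE = {"alanine": 89.04768, "lactic acid": 90.03169, "glucose": 180.06339}


def annotate_peak(observed_mz, tolerance_ppm=10.0):
    """Return reference metabolites whose [M+H]+ m/z lies within the ppm tolerance."""
    hits = []
    for name, mass in REFERENCE.items():
        theoretical_mz = mass + PROTON_MASS
        error_ppm = (observed_mz - theoretical_mz) / theoretical_mz * 1e6
        if abs(error_ppm) <= tolerance_ppm:
            hits.append((name, round(error_ppm, 1)))
    return hits


# Hypothetical observed peaks from a positive-mode LC-MS run.
for mz in (181.0712, 90.0551, 250.0000):
    print(mz, annotate_peak(mz))
```

A mass match alone is only a putative annotation, since isomers share the same mass; an empty result, as for the third peak, simply means the library has no candidate within tolerance.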

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful multi-omics research requires specialized reagents and materials for each analytical platform. The following table details essential components for integrated multi-omics studies:

Table 6: Essential Research Reagents and Materials for Multi-Omics Studies

| Reagent/Material | Omics Application | Function | Technical Notes |
|---|---|---|---|
| TRIzol/RNAlater | Transcriptomics | RNA stabilization and preservation | Maintains RNA integrity during sample storage and processing; critical for accurate gene expression profiling |
| RIPA Buffer | Proteomics | Protein extraction and solubilization | Effective lysis while maintaining protein stability and preventing degradation |
| Methanol:Acetonitrile:Water | Metabolomics | Metabolite extraction | Precipitates proteins while maintaining metabolite stability and solubility |
| Trypsin | Proteomics | Protein digestion | Cleaves proteins at specific sites for mass spectrometry analysis |
| DNase/RNase-free Water | All omics | Molecular biology reactions | Prevents nucleic acid degradation during sample processing and analysis |
| Solid Phase Extraction Columns | Metabolomics | Sample cleanup | Removes interfering compounds and concentrates analytes prior to analysis |
| Isobaric Tags (TMT, iTRAQ) | Proteomics | Multiplexed protein quantification | Enables simultaneous analysis of multiple samples in a single MS run |
| Poly-A Selection Beads | Transcriptomics | mRNA enrichment | Isolates mRNA from total RNA for RNA-seq library preparation |
| Restriction Enzymes | Genomics | DNA fragmentation | Used in various genomic applications including library preparation |
| Library Preparation Kits | All sequencing | NGS library construction | Facilitates adapter ligation and amplification for sequencing platforms |

Applications in Precision Medicine and Health

Integrative multi-omics approaches have demonstrated significant impact across multiple areas of precision medicine:

Disease Subtyping and Classification: Multi-omics data enables refined molecular classification of diseases beyond traditional histopathological approaches. In neuroblastoma and other cancers, integrated omics profiles have identified novel subtypes with distinct clinical outcomes and therapeutic vulnerabilities [11]. Similar approaches are being applied to cardiovascular, neurological, and metabolic disorders to redefine disease classification systems based on molecular mechanisms rather than symptomatic presentation.

Biomarker Discovery: Multi-omics approaches accelerate the discovery of novel diagnostic, prognostic, and predictive biomarkers. By integrating genomics, transcriptomics, and proteomics, researchers can identify complex molecular signatures that serve as early warning signs for disease or indicators of treatment response [11]. For example, combining liquid biopsy data (circulating tumor DNA) with proteomic markers and clinical risk factors has improved early cancer detection accuracy from blood samples [11].

Therapeutic Target Identification: Integrated analyses reveal novel therapeutic targets by mapping complete biological pathways and identifying key regulatory nodes. In glioma research, multi-omics integration combining genetic features with transcriptomic, epigenomic, and proteomic data has identified potential therapeutic targets that would be missed by isolated genetic analyses [9]. Similar approaches are being applied to identify drug targets for complex diseases including metabolic disorders, autoimmune conditions, and neurodegenerative diseases.

Drug Development and Clinical Trials: Multi-omics data enhances clinical trial design through improved patient stratification. By identifying molecular subtypes most likely to respond to specific therapies, researchers can enrich trial populations and increase success rates [11]. Additionally, multi-omics biomarkers can serve as intermediate endpoints for monitoring treatment response and understanding mechanisms of drug action or resistance.

The continued advancement and integration of genomics, transcriptomics, proteomics, and metabolomics technologies promises to further transform precision medicine, enabling increasingly personalized approaches to disease prevention, diagnosis, and treatment tailored to an individual's unique molecular profile.

The completion of the Human Genome Project (HGP) in 2003 marked a transformative milestone in biological science, providing the first reference sequence of the human genome and launching a new era of genomic medicine [3] [15]. This monumental international collaboration, which involved 20 centers across six countries and cost approximately $3 billion, fundamentally reshaped research approaches to human biology, disease states, and their treatment [3] [16]. The project not only provided a fundamental reference map for future discovery but also established powerful precedents for international collaboration and open-source data sharing that would become critical enablers for subsequent scientific advances [17] [3].

Over the past two decades, this foundation has enabled a dramatic evolution from focusing on a single omics layer—genomics—toward increasingly sophisticated integrated multi-omics approaches that combine genomic, transcriptomic, proteomic, metabolomic, and epigenomic data [3] [18]. This paradigm shift has been driven by the recognition that while genomics provides invaluable insights into DNA sequences, it represents only one piece of the complex puzzle of biological systems [18]. The integration of multiple biological data layers has emerged as an essential strategy for obtaining a comprehensive understanding of health and disease, enabling researchers to link genetic information with molecular function and phenotypic outcomes [3] [18]. This technical guide examines the historical progression, methodological frameworks, and translational applications of this evolution, with particular emphasis on implications for personalized medicine strategies in research and drug development.

Historical Timeline: From Genome Sequencing to Multi-Omics Integration

The journey from the initial conception of the HGP to contemporary multi-omics frameworks has been characterized by continuous technological innovation, dramatically reduced sequencing costs, and increasingly sophisticated computational approaches. Table 1 summarizes key quantitative metrics that highlight this remarkable evolution.

Table 1: Evolution of Genomic and Multi-Omics Technologies: Key Quantitative Metrics

| Metric | Human Genome Project (2003) | Current State (2025) | Fold Improvement |
|---|---|---|---|
| Time to Sequence Human Genome | 13 years [17] | ~5 hours (record) [16] | ~23,000x |
| Cost per Human Genome | ~$2.7 billion [17] | ~$200-$500 [17] [16] | ~10,000,000x |
| Sequencing Output (per run) | N/A (project total) | 6-16 Terabases (NovaSeq X) [18] | N/A |
| Primary Technology | Sanger sequencing [3] | Next-Generation Sequencing (NGS) [3] [18] | - |
| Data Integration Approach | Single-omics (Genomics) | Multi-omics (Genomics, Transcriptomics, Proteomics, Metabolomics, Epigenomics) [3] [18] | - |
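
The fold-improvement figures in Table 1 follow from simple division. As a rough check, assuming a 365.25-day year and taking the table's own inputs at face value, the arithmetic can be sketched as:

```python
# Rough fold-improvement arithmetic for the time and cost rows of Table 1.
HOURS_PER_YEAR = 365.25 * 24          # assumption: average year length

hgp_hours = 13 * HOURS_PER_YEAR       # 13 years, as listed in the table
record_hours = 5                      # ~5 hours, as listed in the table
time_fold = hgp_hours / record_hours

hgp_cost = 2.7e9                      # ~$2.7 billion, as listed in the table
cost_fold_low = hgp_cost / 500        # at ~$500 per genome
cost_fold_high = hgp_cost / 200       # at ~$200 per genome

print(f"time: ~{time_fold:,.0f}x")
print(f"cost: ~{cost_fold_low:,.0f}x to ~{cost_fold_high:,.0f}x")
```

The cost ratio spans roughly 5 to 14 million depending on which end of the current price range is used, which is why the table reports an order-of-magnitude figure.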

The Human Genome Project: A Foundation for Future Discovery

The HGP was officially launched in 1990 with a 15-year timetable and substantial funding from the US National Institutes of Health and Department of Energy [3] [16]. The project utilized Sanger sequencing, known for its excellent accuracy in base calling but limited throughput, as it could only sequence small DNA fragments at a time [3]. A significant turning point came in 1998 with the emergence of Celera Genomics, a private company that promised to sequence the genome ahead of the public consortium and potentially lock the data behind paywalls or patents [17] [16]. This competition created urgency within the public project, leading to accelerated efforts and the eventual publication of the first draft sequence in 2000—five years ahead of the original schedule [17] [16]. On July 7, 2000, a team at the University of California, Santa Cruz, posted the first human genome sequence online, making it freely available to the global scientific community and establishing a powerful ethos of open-access science that would fuel subsequent innovation [16].

The project's final completion in 2003 revealed that the human genome contains only 20,000-25,000 protein-coding genes, far fewer than previously anticipated, highlighting the complexity of gene regulation and the importance of non-coding regions [3]. This fundamental discovery created the need to decipher complex interactions within the human body at both microscopic and macroscopic levels, establishing the importance of a systems biology approach in biomedical research [3].

The Rise of Next-Generation Sequencing and Multi-Omics Technologies

The period following the HGP witnessed rapid development of Next-Generation Sequencing (NGS) technologies that addressed the throughput limitations of Sanger sequencing [3] [18]. These massively parallel DNA sequencing platforms, including sequencing by synthesis, pyrosequencing, sequencing by ligation, and ion semiconductor sequencing, enabled simultaneous sequencing of millions of DNA fragments [3] [18]. This technological leap democratized genomic research, making large-scale DNA and RNA sequencing faster, cheaper, and more accessible than ever before [18]. Illumina's sequencing platforms exemplify this dramatic progress: while the HiSeq technology in 2014 had an output capacity of 1.6-1.8 Terabases with a maximum read length of 2×150 base pairs, current NovaSeq technology can deliver 6-16 Terabases with read lengths up to 2×250 base pairs [3].

The development of these high-throughput technologies enabled researchers to move beyond genomics alone and begin systematically integrating multiple molecular data layers. Multi-omics approaches emerged as a strategic framework for understanding biology across interconnected layers, combining genomics (DNA sequences), transcriptomics (RNA expression), proteomics (protein abundance and interactions), metabolomics (metabolic pathways and compounds), and epigenomics (epigenetic modifications) [3] [10] [18]. This integration provides a more comprehensive view of biological systems than any single omics layer can provide alone, enabling researchers to link genetic information with molecular function and phenotypic outcomes [18].

Technical Methodologies: Experimental Frameworks for Multi-Omics Integration

Multi-Omics Data Generation and Workflow Integration

The successful implementation of multi-omics studies requires careful experimental design and execution across multiple technical domains. Table 2 outlines essential research reagents and platforms critical for generating robust multi-omics datasets.

Table 2: Essential Research Reagents and Platforms for Multi-Omics Studies

| Category | Specific Technology/Reagent | Key Function | Application in Multi-Omics |
|---|---|---|---|
| Sequencing Platforms | Illumina NovaSeq X [18] | High-throughput DNA/RNA sequencing | Genomics, Transcriptomics |
| Sequencing Platforms | Oxford Nanopore Technologies [18] | Long-read, real-time sequencing | Structural variant detection, full-length transcript sequencing |
| Proteomics Technologies | Mass Spectrometry [19] | Protein identification and quantification | Proteomics, Post-translational modifications |
| Spatial Technologies | Spatial Transcriptomics [16] [18] | Gene expression mapping in tissue context | Tissue heterogeneity, tumor microenvironment |
| Single-Cell Technologies | Single-Cell RNA Sequencing [8] [18] | Gene expression profiling at single-cell resolution | Cellular heterogeneity, rare cell populations |
| Bioinformatic Tools | AI/ML Platforms (e.g., DeepVariant) [3] [18] | Variant calling, pattern recognition | Data integration, biomarker discovery |
| Specialized Platforms | ApoStream [10] | Isolation of circulating tumor cells | Liquid biopsy, cancer monitoring |

The integration of multiple omics layers follows a structured workflow that begins with experimental design and proceeds through data generation, processing, integration, and interpretation. The following diagram illustrates this comprehensive multi-omics workflow, highlighting the interconnected nature of these processes and the role of artificial intelligence in extracting biologically meaningful insights.

Data Integration Strategies and Computational Frameworks

The integration of diverse omics datasets presents significant computational challenges due to data heterogeneity, varying scales, resolutions, and noise levels across different molecular layers [19]. Two primary strategies have emerged for addressing these challenges: horizontal integration and vertical integration [8]. Horizontal integration combines the same type of omics data across different samples or cohorts to identify patterns and associations, while vertical integration combines different types of omics data from the same samples to build a comprehensive view of biological systems [8].
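
The distinction between the two strategies can be made concrete with a toy example. The sketch below is illustrative only: the sample IDs, feature names, values, and both function names are hypothetical, and real pipelines would typically use a data-frame library rather than nested dictionaries.

```python
# Toy data: each dataset maps sample ID -> {feature: value}. All names are hypothetical.
cohort_a_rna = {"s1": {"TP53": 5.1, "EGFR": 2.0}, "s2": {"TP53": 4.2, "EGFR": 3.3}}
cohort_b_rna = {"s3": {"TP53": 6.0, "EGFR": 1.1}}
cohort_a_protein = {"s1": {"p53": 0.8}, "s2": {"p53": 0.5}}

def integrate_horizontal(*cohorts):
    """Same omics type, different samples: stack the samples (rows)."""
    merged = {}
    for cohort in cohorts:
        overlap = merged.keys() & cohort.keys()
        if overlap:
            raise ValueError(f"duplicate sample IDs: {sorted(overlap)}")
        merged.update(cohort)
    return merged

def integrate_vertical(**layers):
    """Different omics types, same samples: join the features (columns)."""
    shared = set.intersection(*(set(layer) for layer in layers.values()))
    return {
        sample: {
            f"{name}:{feature}": value
            for name, layer in layers.items()
            for feature, value in layer[sample].items()
        }
        for sample in sorted(shared)
    }

horizontal = integrate_horizontal(cohort_a_rna, cohort_b_rna)
vertical = integrate_vertical(rna=cohort_a_rna, protein=cohort_a_protein)
print(sorted(horizontal))   # samples from both cohorts
print(vertical["s1"])       # RNA and protein features for one sample
```

Horizontal integration grows the number of samples, while vertical integration grows the number of features per sample, which is where the dimensionality problems discussed elsewhere in this guide originate.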

Advanced computational tools, particularly artificial intelligence (AI) and machine learning (ML) algorithms, have become indispensable for analyzing these complex, high-dimensional datasets [8] [18]. Deep learning models such as convolutional neural networks and graph neural networks can detect hidden patterns, fill gaps in incomplete datasets, and enable in silico simulations of treatment responses [20]. Tools like Google's DeepVariant utilize deep learning to identify genetic variants with greater accuracy than traditional methods, while other AI models analyze polygenic risk scores to predict individual susceptibility to complex diseases [18]. The synergy of AI with multi-omics data has significantly enhanced predictive accuracy and mechanistic insights, revealing how gene-gene and gene-environment interactions shape therapeutic outcomes [20].
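
For orientation, a polygenic risk score in its conventional additive form is a weighted sum of risk-allele dosages. The minimal sketch below assumes that form; the variant IDs, effect weights, and function name are hypothetical, and it does not represent the AI models cited above.

```python
def polygenic_risk_score(dosages, weights):
    """Additive score: sum of (effect weight x risk-allele dosage) per variant.

    dosages: variant ID -> copies of the risk allele carried (0, 1, or 2)
    weights: variant ID -> per-allele effect size (e.g., a log odds ratio)
    """
    missing = set(weights) - set(dosages)
    if missing:
        raise KeyError(f"no genotype for variants: {sorted(missing)}")
    return sum(weights[variant] * dosages[variant] for variant in weights)

# Hypothetical effect weights and one individual's genotypes.
weights = {"rsA": 0.2, "rsB": -0.1, "rsC": 0.5}
dosages = {"rsA": 2, "rsB": 1, "rsC": 0}
score = polygenic_risk_score(dosages, weights)
print(round(score, 3))
```

In practice the raw score is usually interpreted relative to a reference population distribution rather than as an absolute risk.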

Cloud computing platforms such as Amazon Web Services (AWS) and Google Cloud Genomics have emerged as essential infrastructure for multi-omics research, providing scalable resources to store, process, and analyze the massive datasets generated by these approaches [18]. These platforms enable global collaboration, allowing researchers from different institutions to work on the same datasets in real time while complying with regulatory frameworks such as HIPAA and GDPR that ensure the secure handling of sensitive genomic data [18].

Applications in Personalized Medicine and Drug Development

Biomarker Discovery and Patient Stratification

Multi-omics approaches have revolutionized biomarker discovery by enabling the identification of molecular signatures at multiple biological levels, facilitating more precise patient stratification for targeted interventions [8] [21]. In oncology, for example, multi-omics strategies have yielded promising biomarker panels at the single-molecule, multi-molecule, and cross-omics levels, supporting cancer diagnosis, prognosis, and therapeutic decision-making [8]. These approaches help dissect the tumor microenvironment, revealing interactions between cancer cells and their surroundings that would be invisible to single-omics approaches [18].

A key application of multi-omics in patient stratification is demonstrated in a 2025 study that performed a cross-sectional integrative analysis of genomics, urine metabolomics, and serum metabolomics/lipoproteomics on a cohort of 162 healthy individuals [21]. The research concluded that multi-omics integration provided optimal stratification capacity, identifying four distinct subgroups with different molecular profiles [21]. For a subset of 61 individuals with longitudinal data, the study evaluated the temporal stability of these molecular profiles, finding that certain clusters displayed classification consistency over time—a critical aspect for implementing effective prevention strategies [21]. This approach exemplifies how multi-omics profiling can serve as a framework for precision medicine aimed at early prevention strategies in apparently healthy populations.

Advancing Drug Discovery and Development

Multi-omics approaches are transforming drug discovery by breaking down traditional silos where genomic data alone was used to identify mutations associated with disease without integrating other datasets that inform downstream impacts on cellular functions [19]. By layering transcriptomics, translatomics (analysis of translated RNA), proteomics, and metabolomics, researchers can better understand biological pathways and distinguish causal mutations from inconsequential ones [19]. This multidimensional insight enables the discovery of functionally relevant drug targets that might otherwise be overlooked, enhancing the potential to deliver meaningful benefits to patients [19].

The integration of multi-omics with real-world data (RWD) and AI represents a particularly powerful paradigm shift in drug development [19]. This combination allows researchers to move from static snapshots of biology to dynamic, predictive models of disease that can inform drug development in real time [19]. By training AI models on RWD that includes wearable device outputs, imaging, and electronic health records, researchers can identify subgroups of patients most likely to benefit from particular treatments and monitor how multi-omics markers evolve over time in dynamic patient populations [19]. This approach improves the external validity of findings and enhances clinical relevance.

Pharmacogenomics has particularly benefited from multi-omics integration, as many drug response phenotypes are governed by intricate networks of genomic variants, epigenetic modifications, and metabolic pathways rather than single-gene effects [20]. Multi-omics approaches capture these complex data layers, offering a comprehensive view of patient-specific biology that enables more accurate prediction of individual responses to medications, helping to optimize dosage and minimize side effects [18] [20].

Future Perspectives and Challenges

As multi-omics approaches continue to evolve, several cutting-edge technologies are poised to expand their scope and impact. Single-cell multi-omics and spatial multi-omics technologies represent particularly promising frontiers, enabling researchers to map molecular activity at the level of individual cells within the spatial context of their native tissue environment [8] [19]. These approaches reveal cellular heterogeneity that bulk analyses cannot detect, providing critical insights for understanding complex diseases like cancer and autoimmune disorders [18] [19]. Additionally, the emerging human pangenome project—an inclusive set of reference genome sequences that captures global genomic diversity—is improving our ability to detect rare conditions and address historical biases in genomic research [16].

Despite these promising advances, significant challenges remain in the widespread implementation of multi-omics approaches. Data integration continues to present technical hurdles due to the heterogeneous nature of different omics datasets with varying scales, resolutions, and noise levels [19]. Infrastructure limitations also represent a bottleneck, as multi-omics approaches generate enormous volumes of data that require advanced storage, processing power, and cloud-based computational resources [19]. Additionally, ensuring equitable representation in genomic and multi-omics datasets remains a critical concern, as individuals of European descent currently account for approximately 86% of participants in genomic studies worldwide, potentially limiting the applicability of findings across diverse populations [3].

Looking ahead, the next five years are poised to see multi-omics approaches increasingly support in silico drug discovery through rapid screening of compounds, simulation of biological interactions, and prediction of off-target effects [19]. As AI models become more sophisticated and data-sharing practices expand, multi-omics integration will likely become standard practice in both basic research and clinical applications, ultimately fulfilling the promise of precision medicine that motivated the Human Genome Project more than three decades ago [19]. Continued investment in technology, policy-making, and international collaboration will be essential to overcome current limitations and realize the full potential of integrated multi-omics approaches for improving human health.

The conventional "one-size-fits-all" approach to medicine has demonstrated limited efficacy in addressing the complex heterogeneity of human diseases [22]. Precision medicine represents a fundamental paradigm shift from this reactive disease control model to a proactive framework for disease prevention and health preservation [3]. This transformation utilizes a deep understanding of an individual's genomic makeup, environmental exposures, and lifestyle factors to deliver customized healthcare strategies for prevention, diagnosis, and treatment [3]. The emergence of large-scale biological datasets and sophisticated analytical technologies has enabled this transition, moving medical practice from population-wide averages to individualized strategies based on each patient's unique molecular profile [11] [3].

Multi-omics technologies form the cornerstone of this transformed approach, providing comprehensive insights into the complex biological networks governing health and disease states [4]. While single-omics approaches have yielded valuable insights, they cannot capture the intricate interactions between different biological layers that drive disease pathogenesis [4]. Multi-omics integration systematically combines diverse molecular datasets—including genomic, transcriptomic, proteomic, epigenomic, metabolomic, and microbiomic profiles—to construct a clinically relevant understanding of disease biology that reflects the true complexity of human physiological systems [4] [10]. The integration of these multidimensional datasets with clinical information from electronic health records (EHRs) creates unprecedented opportunities for understanding disease etiology, identifying novel biomarkers, and developing targeted therapeutic interventions [11] [23].

The Multi-Omics Technological Framework

Omics Technologies and Their Clinical Applications

The multi-omics framework encompasses several distinct but interconnected technological domains, each providing unique insights into different aspects of biological systems. The table below summarizes the key omics technologies and their clinical applications in precision medicine.

Table 1: Multi-Omics Technologies and Their Clinical Applications in Precision Medicine

| Omics Domain | Molecular Focus | Key Technologies | Primary Clinical Applications |
|---|---|---|---|
| Genomics | Entire DNA sequence, genetic variations | Next-generation sequencing (NGS), whole-genome sequencing, exome sequencing, genotyping arrays [4] [3] | Genetic risk assessment, variant discovery, pharmacogenomics, carrier screening [4] [3] |
| Transcriptomics | RNA expression patterns (coding and non-coding RNAs) | RNA-seq, single-cell RNA sequencing (scRNA-seq) [4] | Gene expression profiling, alternative splicing analysis, biomarker discovery, therapeutic target identification [4] |
| Proteomics | Protein expression, post-translational modifications | Mass spectrometry, affinity proteomics, protein microarrays [4] | Functional pathway analysis, signaling network mapping, therapeutic response monitoring [4] |
| Epigenomics | DNA methylation, histone modifications | Bisulfite sequencing, ChIP-seq [3] [23] | Environmental exposure assessment, gene regulation studies, developmental biology [3] |
| Metabolomics | Small molecule metabolites | Mass spectrometry, NMR spectroscopy [4] | Metabolic pathway analysis, nutritional interventions, toxicity assessment [4] |
| Microbiomics | Microbial communities | 16S rRNA sequencing, metagenomics [3] | Gut-brain axis studies, infectious disease profiling, microbiome therapeutics [3] |

Analytical Challenges in Multi-Omics Integration

The integration of multi-omics data presents significant computational and analytical challenges that must be addressed to derive clinically meaningful insights. Data heterogeneity arises from the fundamentally different nature of each biological layer, where each data type has distinct formats, scales, and technical characteristics that can obscure true biological signals [11]. Missing data is a common issue in biomedical research, where patients may have complete genomic data but lack proteomic measurements, potentially introducing bias if not handled with robust imputation methods such as k-nearest neighbors (k-NN) or matrix factorization [11]. The high-dimensionality problem emerges when dealing with far more features than samples, which can break traditional analytical methods and increase the risk of identifying spurious correlations [11].
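
To illustrate the k-NN imputation idea mentioned above, the following minimal sketch fills each missing value with the mean of that feature across the nearest complete neighbors. The distance definition (root-mean-square difference over features both samples have observed), the function name, and the toy matrix are assumptions made for illustration, not a description of any specific tool.

```python
import math

def knn_impute(rows, k=2):
    """Replace None entries with the mean of that feature over the k nearest rows."""
    def distance(a, b):
        # Compare only the features that both rows have observed.
        shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
        if not shared:
            return math.inf
        return math.sqrt(sum((x - y) ** 2 for x, y in shared) / len(shared))

    imputed = [list(row) for row in rows]
    for i, row in enumerate(rows):
        for j, value in enumerate(row):
            if value is not None:
                continue
            # Candidate donors: other rows where feature j was measured.
            candidates = [
                (distance(row, other), other[j])
                for n, other in enumerate(rows)
                if n != i and other[j] is not None
            ]
            nearest = sorted(c for c in candidates if math.isfinite(c[0]))[:k]
            if nearest:
                imputed[i][j] = sum(v for _, v in nearest) / len(nearest)
    return imputed

# Rows are patients; columns are features (e.g., two transcripts and one protein).
samples = [
    [1.0, 2.0, None],   # proteomic value missing
    [1.1, 2.1, 3.0],
    [0.9, 1.9, 5.0],
    [9.0, 9.0, 40.0],   # dissimilar patient, should not be a donor
]
filled = knn_impute(samples, k=2)
print(filled[0])
```

Features should normally be placed on comparable scales before computing distances; otherwise the layer with the largest numeric range dominates the choice of neighbors.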

Batch effects represent another critical challenge, where variations introduced by different technicians, reagents, sequencing machines, or processing times can create systematic noise that masks genuine biological variation [11]. These technical artifacts require careful experimental design and statistical correction methods like ComBat to ensure data quality and reproducibility [11]. Furthermore, the massive computational requirements for processing and storing multi-omics data often involve petabyte-scale datasets, demanding scalable infrastructure such as cloud-based solutions and distributed computing frameworks [11]. Finally, researchers must develop robust statistical models that can handle this complexity while producing biologically interpretable results, balancing computational sophistication with deep biological understanding [11].
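
The intuition behind batch correction can be shown with a deliberately simplified stand-in. The sketch below is not ComBat, which additionally adjusts scale and pools information across features with an empirical Bayes model; it only removes a per-batch mean shift for a single feature. The function name and toy values are hypothetical.

```python
from collections import defaultdict
from statistics import mean

def center_by_batch(values, batches):
    """Subtract each batch's mean from its samples, then restore the overall mean."""
    grand_mean = mean(values)
    grouped = defaultdict(list)
    for value, batch in zip(values, batches):
        grouped[batch].append(value)
    batch_mean = {batch: mean(vals) for batch, vals in grouped.items()}
    return [value - batch_mean[batch] + grand_mean
            for value, batch in zip(values, batches)]

# One feature measured in two batches; batch B reads about 10 units higher.
values = [1.0, 2.0, 3.0, 11.0, 12.0, 13.0]
batches = ["A", "A", "A", "B", "B", "B"]
corrected = center_by_batch(values, batches)
print(corrected)
```

This kind of adjustment is only safe when batches are balanced with respect to the biology of interest. If all cases were processed in one batch and all controls in another, centering would remove the biological signal along with the technical one, which is why experimental design is stressed above.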

Methodological Approaches to Multi-Omics Integration

Data Integration Strategies

The successful integration of multi-omics data requires sophisticated methodological approaches that can harmonize diverse datasets into a coherent analytical framework. Researchers typically employ three primary integration strategies, differentiated by the timing of integration in the analytical workflow.

Early integration (feature-level integration) merges all omics features into a single massive dataset before analysis [11]. This approach typically involves simple concatenation of data vectors from different omics layers, preserving all raw information and potentially capturing complex, unforeseen interactions between modalities [11]. However, early integration is computationally intensive and highly susceptible to the "curse of dimensionality," where the extremely high number of features relative to samples can lead to model overfitting and spurious correlations [11].
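
A minimal sketch of early integration by concatenation is shown below. The per-feature z-scoring step is an added assumption, included because layers measured on very different scales would otherwise be dominated by the largest one; the function names and toy matrices are hypothetical.

```python
from statistics import mean, pstdev

def zscore_columns(matrix):
    """Standardize each feature (column) to zero mean and unit variance."""
    stats = [(mean(col), pstdev(col)) for col in zip(*matrix)]
    return [
        [(x - m) / s if s > 0 else 0.0 for x, (m, s) in zip(row, stats)]
        for row in matrix
    ]

def early_integrate(*layers):
    """Concatenate feature vectors from each omics layer, sample by sample."""
    n_samples = len(layers[0])
    if any(len(layer) != n_samples for layer in layers):
        raise ValueError("all layers must contain the same samples in the same order")
    scaled = [zscore_columns(layer) for layer in layers]
    return [sum((layer[i] for layer in scaled), []) for i in range(n_samples)]

# Rows are the same two patients in both layers.
rna = [[1.0, 10.0], [3.0, 30.0]]
protein = [[100.0], [300.0]]
combined = early_integrate(rna, protein)
print(combined)
```

With realistic data the combined matrix can have tens of thousands of columns for a few hundred rows, which is the dimensionality problem described above.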

Intermediate integration involves transforming each omics dataset into a more manageable representation before combination [11]. Network-based methods exemplify this approach, where each omics layer is used to construct biological networks (e.g., gene co-expression, protein-protein interactions) that are subsequently integrated to reveal functional relationships and modules driving disease processes [11]. This strategy reduces complexity and incorporates valuable biological context but may lose some raw information and requires substantial domain knowledge to implement effectively [11].

Late integration (model-level integration) builds separate predictive models for each omics type and combines their predictions at the final stage [11]. This ensemble approach uses methods like weighted averaging or stacking, offering computational efficiency and robust handling of missing data [11]. However, late integration may miss subtle cross-omics interactions that are not strong enough to be captured by any single model, potentially overlooking important biological insights that emerge only from the integration of multiple data layers [11].
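
Weighted averaging, the simplest of the late-integration combiners mentioned above, can be sketched as follows. The per-layer probabilities, the weights, and the function name are hypothetical; in practice the weights might reflect each model's validation performance.

```python
def late_integrate(predictions, weights):
    """Weighted average of per-layer predicted probabilities.

    Layers with no prediction (None) are skipped and the remaining weights
    are renormalized, which is how this scheme tolerates missing data.
    """
    available = {layer: p for layer, p in predictions.items() if p is not None}
    if not available:
        raise ValueError("no layer produced a prediction")
    total_weight = sum(weights[layer] for layer in available)
    return sum(weights[layer] * p for layer, p in available.items()) / total_weight

# One patient: the proteomics model has no input, so it abstains.
predictions = {"genomics": 0.8, "proteomics": None, "metabolomics": 0.4}
weights = {"genomics": 2.0, "proteomics": 1.0, "metabolomics": 2.0}
risk = late_integrate(predictions, weights)
print(round(risk, 3))
```

Because each model only ever sees its own layer, an interaction that requires two layers to detect cannot influence the combined score, which is the limitation noted above.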

Table 2: Comparison of Multi-Omics Data Integration Strategies

| Integration Strategy | Timing of Integration | Advantages | Limitations | Common Algorithms |
|---|---|---|---|---|
| Early Integration | Before analysis | Captures all cross-omics interactions; preserves raw information | Extremely high dimensionality; computationally intensive | Simple concatenation, regularized regression |
| Intermediate Integration | During analysis | Reduces complexity; incorporates biological context through networks | Requires domain knowledge; may lose some raw information | Similarity Network Fusion (SNF), matrix factorization |
| Late Integration | After individual analysis | Handles missing data well; computationally efficient | May miss subtle cross-omics interactions | Stacking, weighted averaging, Bayesian models |

Artificial Intelligence and Machine Learning Approaches

Without advanced artificial intelligence (AI) and machine learning (ML) techniques, integrating multi-modal genomic and multi-omics data for precision medicine would be practically impossible due to the sheer volume and complexity of the data [11]. These computational approaches provide powerful pattern recognition capabilities that can detect subtle connections across millions of data points that remain invisible to conventional analytical methods [11].

Autoencoders (AEs) and Variational Autoencoders (VAEs) are unsupervised neural networks that compress high-dimensional omics data into dense, lower-dimensional "latent spaces" [11]. This dimensionality reduction makes integration computationally feasible while preserving key biological patterns, creating a unified representation where data from different omics layers can be effectively combined [11]. Graph Convolutional Networks (GCNs) are specifically designed for network-structured data, representing biological components (genes, proteins) as nodes and their interactions as edges [11]. GCNs learn from this structure by aggregating information from a node's neighbors to make predictions, proving particularly effective for clinical outcome prediction in complex conditions [11].
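
The neighbor-aggregation step at the heart of a GCN can be illustrated without any deep learning framework. The sketch below performs one round of mean aggregation over each node and its neighbors; it omits the learned weight matrix and nonlinearity that a real GCN layer applies afterwards, and uses a plain mean rather than the symmetric degree normalization often used in practice. Node names, features, and the function name are hypothetical.

```python
def aggregate_neighbors(features, edges):
    """Average each node's feature vector with those of its neighbors."""
    # Every node is its own neighbor (self-loop), so isolated nodes keep their features.
    neighbors = {node: {node} for node in features}
    for a, b in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)
    dim = len(next(iter(features.values())))
    return {
        node: [sum(features[n][d] for n in nbrs) / len(nbrs) for d in range(dim)]
        for node, nbrs in neighbors.items()
    }

# Nodes are genes with two-dimensional features; one known interaction.
features = {"geneA": [1.0, 0.0], "geneB": [0.0, 1.0], "geneC": [4.0, 4.0]}
edges = [("geneA", "geneB")]
updated = aggregate_neighbors(features, edges)
print(updated)
```

Stacking several such rounds lets information propagate across multi-step paths in the network, so a node's representation comes to reflect its wider pathway context.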

Similarity Network Fusion (SNF) creates patient-similarity networks from each omics layer and iteratively fuses them into a single comprehensive network [11]. This process strengthens robust similarities while removing weak associations, enabling more accurate disease subtyping and prognosis prediction [11]. Recurrent Neural Networks (RNNs), including Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) networks, excel at analyzing longitudinal data by capturing temporal dependencies [11]. This capability is crucial for modeling how biological systems evolve over time, enabling predictions of disease progression and treatment responses from time-series clinical and omics data [11].

More recently, Transformer architectures originally developed for natural language processing have been adapted for biological data analysis [11]. Their self-attention mechanisms dynamically weigh the importance of different features and data types, learning which modalities matter most for specific predictions and enabling identification of critical biomarkers from noisy, high-dimensional datasets [11].
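
The weighting behavior of self-attention can be sketched with scaled dot-product attention over one query and a few keys. Here each key stands for an embedded omics modality; the vectors, modality names, and function name are hypothetical, and a real Transformer would learn the query and key projections rather than take them as given.

```python
import math

def attention_weights(query, keys):
    """Softmax of scaled dot products between a query and each key vector."""
    scale = math.sqrt(len(query))
    scores = {
        name: sum(q * k for q, k in zip(query, key)) / scale
        for name, key in keys.items()
    }
    peak = max(scores.values())                      # subtract max for numerical stability
    exps = {name: math.exp(s - peak) for name, s in scores.items()}
    total = sum(exps.values())
    return {name: e / total for name, e in exps.items()}

query = [1.0, 0.0]
keys = {
    "genomics": [2.0, 0.0],       # aligned with the query
    "proteomics": [0.0, 2.0],     # orthogonal to the query
    "metabolomics": [2.0, 0.0],   # aligned with the query
}
weights = attention_weights(query, keys)
print(weights)
```

Modalities whose keys align with the query receive more weight, and the weights always sum to one, which is what allows them to be read as relative importance for a given prediction.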

Diagram 1: AI and Machine Learning Framework for Multi-Omics Data Integration. This workflow illustrates how different AI approaches process various omics data types to generate clinically actionable insights.

Experimental Protocols and Workflows

Multi-Omics Data Generation Pipeline

A standardized experimental workflow is essential for generating high-quality multi-omics data suitable for integration and analysis. The process begins with sample collection and preparation, where biospecimens (tissue, blood, etc.) are obtained and processed according to standardized protocols to maintain sample integrity [10]. For limited tissue scenarios, innovative technologies like ApoStream—a proprietary platform that captures viable whole cells from liquid biopsies—can be employed to enable downstream multi-omic analysis when traditional biopsies are not feasible [10].

The DNA sequencing phase utilizes next-generation sequencing (NGS) technologies, with sequencing by synthesis using polymerase chain reaction (PCR) being the most widely adopted method for genome and exome sequencing [3]. Modern NGS platforms like Illumina's NovaSeq technology can generate outputs of 6–16 terabases with read lengths up to 2×250 base pairs, providing comprehensive genomic coverage [3]. For RNA sequencing, RNA-seq protocols capture both protein-coding mRNAs and non-coding RNAs, with single-cell RNA sequencing (scRNA-seq) enabling transcriptome profiling at cellular resolution to understand heterogeneity within tissues [4].

Proteomic profiling typically employs mass spectrometry-based methods, with stable isotope labeling approaches reducing detection time and minimizing batch effects between samples [4]. Advanced techniques combining immunoprecipitation with mass spectrometry enable the identification of protein-protein interactions and post-translational modifications that regulate protein activity [4]. Metabolomic analysis utilizes either untargeted or targeted mass spectrometry approaches to quantify small molecule metabolites, providing immediate insights into cellular physiological states and metabolic pathway activities [4].

Quality Control and Data Preprocessing

Rigorous quality control and standardized preprocessing are critical for ensuring data quality and comparability across different omics platforms. Data normalization and harmonization address the challenge of different labs and platforms generating data with unique technical characteristics that can mask true biological signals [11]. RNA-seq data requires normalization (e.g., TPM, FPKM) to enable cross-sample gene expression comparisons, while proteomics data needs intensity normalization to correct for technical variations [11].
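
As a concrete instance of the normalization step, TPM (transcripts per million) divides each gene's read count by its length and then rescales so that the values in a sample sum to one million. A minimal sketch, with hypothetical gene names, counts, and lengths:

```python
def tpm(counts, lengths_kb):
    """Transcripts per million for one sample.

    counts: gene -> read count
    lengths_kb: gene -> transcript length in kilobases
    """
    # Step 1: length-normalize so long genes are not favored.
    rates = {gene: counts[gene] / lengths_kb[gene] for gene in counts}
    # Step 2: rescale so the sample sums to one million.
    total = sum(rates.values())
    return {gene: rate / total * 1e6 for gene, rate in rates.items()}

# Two genes with equal read counts but different lengths.
counts = {"geneA": 100, "geneB": 100}
lengths_kb = {"geneA": 1.0, "geneB": 4.0}
normalized = tpm(counts, lengths_kb)
print(normalized)
```

The shorter gene receives the higher TPM because the same number of reads is spread over fewer bases. Since every sample sums to the same total, TPM values are proportions within a sample, which should be kept in mind when comparing samples whose overall RNA composition differs.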

Batch effect correction employs statistical methods like ComBat to remove systematic technical noise introduced by different processing dates, reagent batches, or personnel [11]. Missing data imputation uses algorithms such as k-nearest neighbors (k-NN) or matrix factorization to estimate missing values based on patterns in the existing data, preventing bias from incomplete datasets [11]. Finally, feature selection reduces dimensionality by identifying and retaining the most biologically informative variables, improving model performance and interpretability while mitigating the curse of dimensionality [11].
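
One simple, unsupervised form of feature selection keeps the features with the highest variance across samples. The sketch below assumes that criterion purely for illustration; high variance is not the same as biological relevance, and supervised or knowledge-driven filters are common alternatives. Feature names and values are hypothetical.

```python
from statistics import pvariance

def top_variance_features(matrix, names, k):
    """Return the names of the k features with the largest variance across samples."""
    variances = [pvariance(column) for column in zip(*matrix)]
    ranked = sorted(zip(names, variances), key=lambda pair: pair[1], reverse=True)
    return [name for name, _ in ranked[:k]]

# Rows are samples; columns are features.
matrix = [
    [1.0, 5.0, 0.0],
    [1.0, 7.0, 10.0],
    [1.0, 6.0, 20.0],
]
names = ["f_const", "f_small", "f_large"]
selected = top_variance_features(matrix, names, k=2)
print(selected)
```

Variance-based filtering should be applied after normalization, since raw scale differences between features would otherwise determine the ranking.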

Diagram 2: Comprehensive Multi-Omics Experimental Workflow. This diagram outlines the complete pipeline from sample collection through data generation and processing to analytical applications.

Research Reagent Solutions for Multi-Omics Studies

Table 3: Essential Research Reagents and Platforms for Multi-Omics Investigations

| Reagent/Platform | Type | Primary Function | Application Context |
|---|---|---|---|
| Next-generation sequencers | Instrumentation | High-throughput DNA/RNA sequencing | Whole genome sequencing, transcriptome profiling, variant discovery [3] |
| Mass spectrometers | Instrumentation | Protein and metabolite identification and quantification | Proteomic and metabolomic profiling, post-translational modification analysis [4] |
| ApoStream technology | Platform | Isolation and profiling of circulating tumor cells from liquid biopsies | Cellular profiling when traditional biopsies are not feasible [10] |
| Spectral flow cytometry | Technology | High-parameter analysis of cellular phenotypes (60+ markers) | Immune cell profiling, tumor microenvironment characterization [10] |
| Single-cell RNA sequencing kits | Reagent | Transcriptome profiling at single-cell resolution | Cellular heterogeneity studies, tumor subpopulation identification [4] |
| Stable isotope labels | Reagent | Quantitative proteomics using mass spectrometry | Protein expression and turnover measurements [4] |

Applications in Precision Medicine

Biomarker Discovery and Diagnostics

One of the most impactful applications of integrated multi-omics is the discovery of novel biomarkers for early disease detection, diagnosis, and prognosis [11]. By combining genomics, transcriptomics, and proteomics, researchers can uncover complex molecular patterns associated with disease states long before clinical symptoms manifest [11]. Multi-modal approaches have shown particular promise in oncology, where combining liquid biopsy data (circulating tumor DNA) with proteomic markers and clinical risk factors significantly improves early cancer detection accuracy while requiring only minimal specimen volumes [11].

Integrated omics also enables the identification of prognostic markers that predict disease progression trajectories and predictive markers that forecast treatment responses [11]. For example, in cardiovascular medicine, researchers have investigated genes associated with heart failure, atrial fibrillation, and other conditions using machine learning to predict individual risk from integrated multi-omics profiles [11]. AI-powered pathology tools enhance these discoveries by extracting quantitative features from medical images and correlating them with molecular signatures, creating comprehensive diagnostic frameworks [11] [10].

Drug Target Discovery and Therapeutic Development

Multi-omics approaches are revolutionizing drug discovery by identifying novel therapeutic targets and enabling more efficient clinical trial designs [11] [10]. Integrative analysis can pinpoint key drivers of disease pathogenesis across multiple biological layers, highlighting potential intervention points that might be missed when examining single omics datasets in isolation [4]. This approach is particularly valuable for understanding complex diseases with heterogeneous etiologies, such as neurodegenerative disorders and autoimmune conditions, where multiple interconnected pathways contribute to disease progression [4].

Pharmacogenomics—the study of how an individual's genetic makeup influences their response to medications—exemplifies the clinical translation of multi-omics insights [10]. By integrating pharmacology and genomics, researchers can develop safer, more effective therapies tailored to each person's genetic profile [10]. Furthermore, multi-omics data enhances clinical trial efficiency through improved patient stratification, ensuring that participants most likely to respond to investigational therapies are enrolled, thereby increasing trial success rates while reducing costs and timelines [11] [10].
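The effect of biomarker-based stratification on a trial can be illustrated with simple arithmetic. The sketch below compares the expected response rate in an unselected population with that of a biomarker-enriched population, and shows the screening burden that enrichment implies. The prevalence and response rates are assumed values for illustration, not figures from the cited sources.

```python
def unselected_response_rate(prevalence, rate_pos, rate_neg):
    """Expected response rate when enrolling patients regardless of biomarker status."""
    return prevalence * rate_pos + (1.0 - prevalence) * rate_neg

def screened_per_enrolled(prevalence):
    """Average number of patients screened to enroll one biomarker-positive patient."""
    return 1.0 / prevalence

# Assumed values: 25% of patients are biomarker-positive; responders are
# 60% of biomarker-positive and 10% of biomarker-negative patients.
prevalence, rate_pos, rate_neg = 0.25, 0.60, 0.10

all_comers = unselected_response_rate(prevalence, rate_pos, rate_neg)
enriched = rate_pos  # an enriched trial enrolls biomarker-positive patients only

print(f"all-comers response rate: {all_comers:.3f}")
print(f"enriched response rate:   {enriched:.3f}")
print(f"screened per enrolled:    {screened_per_enrolled(prevalence):.1f}")
```

Under these assumptions enrichment raises the expected response rate, but every enrolled participant requires screening several candidates, so the gain has to be weighed against screening cost and biomarker assay accuracy.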

Clinical Implementation and Case Studies

The real-world implementation of multi-omics strategies is already demonstrating significant clinical impact across various therapeutic areas. In oncology, circulating tumor cell profiling using ApoStream technology in non-small cell lung cancer patients has enabled identification of antibody-drug conjugate (ADC) targets such as folate receptor alpha (FRA), supporting personalized treatment selection while meeting regulatory requirements [10]. AI-powered genomic analysis has improved diagnostic accuracy and reduced turnaround time by detecting subtle patterns across genetic variants and expression profiles that traditional bioinformatics approaches often miss [10].

In neurodegenerative diseases like Alzheimer's, multi-omics integration has helped unravel complex pathogenic mechanisms that cannot be explained by single-omics approaches alone [4]. Similarly, multi-omics profiling in cardiovascular diseases has supported systems biology analyses of disease pathogenesis and helped identify novel therapeutic interventions [4]. The integration of single-cell transcriptomics with spatial transcriptomics has resolved the spatial organization of immune-malignant cell networks in human colorectal cancer, providing insights into tumor microenvironment dynamics that inform immunotherapeutic strategies [4].

Future Perspectives and Challenges

Emerging Technologies and Approaches

The field of multi-omics integration continues to evolve rapidly, with several emerging technologies poised to enhance its capabilities further. Single-cell multi-omics represents a significant advancement, enabling simultaneous measurement of multiple molecular layers within individual cells [4] [23]. This approach provides unprecedented resolution for understanding cellular heterogeneity and identifying rare cell populations that may drive disease processes [4]. Spatial omics technologies add another dimension by preserving the architectural context of tissues, allowing researchers to correlate molecular profiles with spatial localization and cell-cell interactions [4].

Large-scale population biobanks are transforming multi-omics research by providing extensive, diverse datasets that combine multi-omics profiles with rich clinical and demographic information [23]. Initiatives such as the Integrated Personal 'Omics Project (IPOP) and various national biobanks incorporate heterogeneous phenotypic data derived from EHRs alongside conventional multi-omics data, enabling population-level analyses that capture the full spectrum of human diversity [23]. Longitudinal multi-omics represents another frontier, tracking molecular profiles over time to understand dynamic biological processes, disease progression trajectories, and response to interventions [23].

The emergence of large language models in artificial intelligence is anticipated to significantly influence multi-omics data integration approaches, potentially revolutionizing how researchers extract meaningful patterns from complex, high-dimensional datasets [23]. These models can incorporate prior biological knowledge and contextual relationships, enhancing interpretability and biological plausibility of findings [23].

Implementation Challenges and Ethical Considerations

Despite the tremendous promise of multi-omics approaches, significant challenges remain in their widespread clinical implementation. Data standardization across different platforms and institutions is essential for ensuring reproducibility and comparability of results [11]. Computational infrastructure requirements for storing and processing petabyte-scale datasets present substantial logistical and financial barriers, particularly for smaller healthcare institutions [11].
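One simple form of cross-site harmonization is to standardize each measurement within its site of origin before pooling. The sketch below does this with per-site z-scores; the record layout and the function name `zscore_within_site` are hypothetical, and real pipelines typically use more elaborate batch-correction methods.

```python
from collections import defaultdict
from statistics import mean, pstdev

def zscore_within_site(records):
    """Standardize values per site.

    records: list of (site, value) pairs.
    Returns z-scores in the same order as the input.
    """
    by_site = defaultdict(list)
    for site, value in records:
        by_site[site].append(value)
    # Per-site mean and population standard deviation.
    stats = {site: (mean(vals), pstdev(vals)) for site, vals in by_site.items()}
    scores = []
    for site, value in records:
        mu, sd = stats[site]
        scores.append(0.0 if sd == 0 else (value - mu) / sd)
    return scores

# Toy data: site B reports the same analyte on a scale ten times larger.
records = [("A", 10.0), ("A", 12.0), ("A", 14.0),
           ("B", 100.0), ("B", 120.0), ("B", 140.0)]
z = zscore_within_site(records)
print([round(v, 3) for v in z])
```

Per-site standardization assumes the sites sample comparable populations; if one site enrolls sicker patients, centering within site would also remove real biological signal.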

Data diversity represents a critical challenge: individuals of European descent currently account for approximately 86% of participants in genomic studies worldwide, limiting the generalizability of findings across diverse populations [3]. Addressing this disparity requires community-engaged research frameworks that build trust and ensure equitable representation in research cohorts [3]. Variant interpretation complexity also presents obstacles, with over 90,000 known variants of uncertain significance requiring functional characterization to determine their clinical relevance [3].

Ethical considerations surrounding data privacy, informed consent, and equitable access to precision medicine advancements must be carefully addressed [3]. The integration of multi-omics data with EHR systems raises important questions about data security, patient autonomy, and the potential for genetic discrimination [3]. Additionally, the regulatory framework for validating and approving multi-omics-based diagnostic and therapeutic approaches continues to evolve, requiring ongoing dialogue between researchers, clinicians, industry partners, and regulatory agencies [10].

The precision medicine paradigm, powered by integrated multi-omics approaches, represents a fundamental transformation in healthcare from reactive, population-wide interventions to proactive, individualized strategies. By comprehensively characterizing the complex interactions between genes, proteins, metabolites, and environmental factors, multi-omics integration provides unprecedented insights into disease mechanisms and therapeutic opportunities. While significant challenges remain in standardization, computational infrastructure, and equitable implementation, the rapid advancement of analytical technologies and AI methodologies continues to accelerate progress toward truly personalized healthcare. As multi-omics approaches become increasingly integrated into clinical practice, they hold the potential to redefine therapeutic strategies, improve patient outcomes, and ultimately transform the practice of medicine from a one-size-fits-all model to a precisely tailored, individualized approach.

The emergence of multi-omics technologies represents a transformative approach in biomedical research, enabling a comprehensive understanding of human health and disease by integrating data across multiple molecular layers. This integration allows researchers to move beyond single-dimensional analysis to capture the complex, systemic properties of biological systems and disease pathologies [24]. The foundational premise of multi-omics is that the combination of various 'omics' technologies—including genomics, transcriptomics, proteomics, metabolomics, epigenomics, and microbiomics—generates a more holistic molecular profile than any single approach can provide [24] [3]. This profile serves as a critical stepping stone for ambitious objectives in precision medicine, including detecting disease-associated molecular patterns, identifying disease subtypes, improving diagnosis and prognosis, predicting drug response, and understanding regulatory processes [24].

The shift toward multi-omics aligns with the broader vision of personalized or precision medicine, which aims to tailor medical decisions and treatments to individual patient characteristics [5]. Rather than creating therapies uniquely tailored to each patient, personalized medicine focuses on categorizing individuals into subpopulations based on their susceptibility to particular diseases or their response to specific treatments [5]. Multi-omics data provides the molecular foundation for this categorization, enabling preventive or therapeutic interventions to be concentrated on those who will benefit, thereby sparing expense and side effects for those who will not [5]. The integration of these complex datasets is made possible by major advances in bioinformatics, data science, and artificial intelligence, which together help unravel the heterogeneous etiopathogenesis of complex diseases and create a framework for precision medicine approaches [3].

Revealing Disease Mechanisms Through Multi-Omics Integration

Elucidating Complex Pathomechanisms

Multi-omics integration has proven particularly valuable for elucidating molecular pathways in diseases with complex and poorly understood underlying mechanisms. Methylmalonic aciduria (MMA), an inherited metabolic disorder, serves as an illustrative example where multi-omics approaches have revealed previously unknown aspects of pathogenesis [25]. By integrating genomic, transcriptomic, proteomic, and metabolomic profiling with biochemical and clinical data from 210 patients, researchers identified glutathione metabolism as critically important in MMA pathogenesis, a finding substantiated by evidence across multiple molecular layers [25]. Integrating protein quantitative trait loci (pQTL) analysis with correlation networks of proteomics and metabolomics data further revealed that lysosomal function, which is critical for maintaining metabolic balance, is compromised in MMA patients [25].

This systematic approach to multi-omics integration provides a framework for decoding disease mechanisms by accumulating evidence from multiple biological levels. The analysis demonstrated how genetic variation influences protein abundance through pQTL mapping, then connected these findings to metabolic disturbances through correlation network analysis [25]. This methodology represents a powerful paradigm for investigating complex disorders where single-omics approaches have provided incomplete understanding.
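The two analytical steps described above, pQTL mapping followed by correlation network analysis, can be sketched on toy data. The example below regresses a protein's abundance on allele dosage to estimate a pQTL effect, then links features whose Pearson correlation exceeds a threshold. All data values, feature names, and the 0.8 threshold are invented for illustration; this is not the pipeline used in the cited MMA study, and real analyses adjust for covariates and multiple testing.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def pqtl_slope(dosage, abundance):
    """Least-squares slope of protein abundance on allele dosage (0, 1, or 2)."""
    n = len(dosage)
    mx, my = sum(dosage) / n, sum(abundance) / n
    sxy = sum((d - mx) * (a - my) for d, a in zip(dosage, abundance))
    sxx = sum((d - mx) ** 2 for d in dosage)
    return sxy / sxx

def correlation_edges(features, threshold=0.8):
    """Return (name_a, name_b, r) for feature pairs with |r| >= threshold."""
    names = sorted(features)
    edges = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            r = pearson(features[a], features[b])
            if abs(r) >= threshold:
                edges.append((a, b, round(r, 3)))
    return edges

# Toy cohort of six individuals (hypothetical values).
dosage = [0, 0, 1, 1, 2, 2]
features = {
    "protein_P": [10.0, 10.4, 8.1, 7.9, 6.0, 5.8],
    "metabolite_M": [1.0, 1.1, 1.9, 2.1, 3.0, 3.2],
    "metabolite_N": [5.0, 4.2, 5.1, 4.4, 4.9, 4.6],
}

slope = pqtl_slope(dosage, features["protein_P"])
edges = correlation_edges(features)
print("pQTL effect per allele:", round(slope, 3))
print("network edges:", edges)
```

In this toy example the variant lowers protein_P abundance, and protein_P is in turn strongly anti-correlated with metabolite_M, which is the kind of chained evidence across layers that the paragraph describes.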

Understanding Regulatory Processes and Molecular Interactions

Multi-omics approaches enable researchers to understand regulatory processes and interactions between different molecular layers that would remain invisible when examining each layer in isolation. Biological processes are inherently complex and orchestrated by billions of molecules, with interactions and crosstalk between these biomolecules enabling the regulation of essential processes including cell division, gene expression, signal transduction, and metabolism [25]. In disease, these processes become dysregulated, leading to pathological conditions.

The integration of epigenomic and transcriptomic data is especially valuable for associating regulatory regions with changes in gene expression. For paired single-cell multi-omics data, joint dimensionality reduction of multiple molecular measurements can identify patterns of co-variation between genomic features, potentially revealing how chromatin accessibility in specific regions influences gene expression patterns [26]. Deep generative models like multiDGD provide a probabilistic framework to learn shared representations of transcriptome and chromatin accessibility, enabling detection of statistical associations between genes and regulatory regions conditioned on the learned representations [26].
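A much simpler stand-in for the model-based approach above is to rank candidate regulatory regions by how strongly their accessibility co-varies with a gene's expression across paired cells. The sketch below uses Spearman rank correlation with tie handling, since single-cell counts contain many repeated values. It is not multiDGD and does not learn a shared representation; the peak names and counts are hypothetical.

```python
import math

def average_ranks(values):
    """Rank values from 1..n, assigning tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    if sxx == 0 or syy == 0:
        return 0.0  # a constant vector carries no rank information
    return sxy / math.sqrt(sxx * syy)

def rank_peaks_for_gene(gene_expr, peak_access):
    """Sort peaks by decreasing absolute correlation with gene expression."""
    scores = {peak: spearman(acc, gene_expr) for peak, acc in peak_access.items()}
    return sorted(scores.items(), key=lambda kv: -abs(kv[1]))

# Six paired cells: expression of one gene and accessibility of two peaks.
gene_expr = [0, 1, 3, 5, 8, 13]
peak_access = {
    "peak_A": [0, 0, 1, 2, 2, 4],
    "peak_B": [3, 0, 2, 0, 1, 1],
}

ranked = rank_peaks_for_gene(gene_expr, peak_access)
for peak, rho in ranked:
    print(peak, round(rho, 3))
```

Correlation across cells suggests a candidate gene-region link but does not establish regulation; cell-type composition alone can induce such associations, which is one motivation for conditioning on a learned representation as described above.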

Table 1: Multi-Omics Technologies and Their Contributions to Understanding Disease Mechanisms

| Omics Layer | Molecular Entities Measured | Biological Insight Provided | Contribution to Disease Mechanism |
|---|---|---|---|
| Genomics | DNA sequences, genetic variants | Genetic predisposition, mutation identification | Reveals inherited or acquired genetic variations that predispose to or cause disease |
| Transcriptomics | RNA expression levels | Gene activity, alternative splicing | Identifies dysregulated genes and pathways in disease states |
| Proteomics | Protein abundance, modifications | Functional effectors, signaling pathways | Reveals post-translational modifications and protein pathway alterations |
| Metabolomics | Metabolite concentrations | Biochemical activity, metabolic state | Provides direct reflection of biochemical activities and metabolic state in disease |
| Epigenomics | DNA methylation, histone modifications | Regulatory mechanisms without DNA sequence changes | Shows how environmental factors influence gene expression in disease development |

Analytical Frameworks for Multi-Omics Data