PDB Validation Reports: A Practical Guide to Assessing Crystallographic Structure Quality for Research and Drug Discovery


Abstract

This article provides a comprehensive guide to PDB validation reports for crystallographic structures, which are essential tools for researchers, scientists, and drug development professionals. It covers the foundational principles of structural validation, explains how to interpret key quality metrics like resolution, R-factors, and electron density fit, and offers practical troubleshooting advice for addressing common issues. The guide also explores comparative validation across different experimental methods and emerging computational models like AlphaFold, empowering readers to critically assess structural data reliability for biomedical applications.

The Essential Guide to PDB Validation: Why Every Structural Biologist Needs Validation Reports

What are PDB Validation Reports and Why Were They Developed?

PDB Validation Reports are detailed documents produced by the Worldwide Protein Data Bank (wwPDB) that provide an objective assessment of the quality of macromolecular structures. They were developed to standardize the evaluation of structural models using community-established criteria, thereby ensuring the reliability and reproducibility of structural data in the PDB archive, which is crucial for fields like biomedical research and drug discovery [1] [2] [3].

The Critical Need for Validation in Structural Biology

The initiative to create standardized validation reports was driven by several key factors:

- Preventing Errors: Instances of high-profile structures containing serious errors highlighted the need for more rigorous, community-wide validation criteria to detect problems ranging from incorrect ligand fitting to entirely incorrect chain tracing [3].

- Managing Growth: As the PDB archive expanded massively, growing from a handful of structures to over 170,000, it became both possible and necessary to validate new structures by comparing them against the entire database of existing structures [2] [3].

- Ensuring Data Utility: The PDB is a foundational resource for millions of users. Ensuring the quality of its data is critical for downstream applications, including understanding biochemical function and facilitating structure-based drug discovery [2] [4].

Evolution and Implementation of Validation Reports

The development of PDB Validation Reports was a formal, community-driven process. The wwPDB established expert Validation Task Forces (VTFs) for different methods (X-ray crystallography, NMR, and 3D Electron Microscopy) to develop consensus recommendations for validation [5] [2].

The following timeline summarizes key milestones:

The system is integrated into the OneDep deposition and validation portal [5]. Depositors can generate preliminary reports via a stand-alone server before formal submission and must review the official report as part of the deposition process [1] [6]. Upon public release of a structure, its validation report also becomes publicly available [5].

Inside the Validation Report: A Guide to Key Metrics

PDB Validation Reports provide a multi-faceted assessment of a structural model and its fit to the experimental data. The reports are available in both PDF and XML formats [2].

Table: Core Components of a PDB Validation Report

| Validation Category | Specific Metrics Assessed | Purpose and Significance |
|---|---|---|
| Polymer Geometry | Bond lengths, bond angles, torsion angles (Ramachandran plot), sidechain rotamers [2] [3] | Identifies deviations from ideal stereochemistry and unlikely conformations, indicating potential errors in model building [3]. |
| Fit to Experimental Data | X-ray: Real-Space R (RSR) & Correlation (RSCC); EM: Fit to map volume & FSC curves [6] [2] | Evaluates how well the atomic model explains the experimental data it was derived from [2]. |
| Ligand & Carbohydrate Validation | Geometry (e.g., with Mogul software), chirality, fit to electron density (X-ray) [2] | Critical for confidence in small-molecule conformation and interactions, directly impacting drug discovery [2]. |

Scores are often presented as percentiles relative to all structures in the PDB or to a specific resolution class, making it easy to see how a given structure compares to the existing database [3].
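To show what such a percentile means in practice, the following minimal Python sketch ranks one structure's clashscore against a reference distribution in which lower values are better. The reference values are invented, and the tie-handling rule is an illustrative convention rather than the wwPDB's documented formula.

```python
from bisect import bisect_left, bisect_right


def percentile_lower_is_better(value, reference):
    """Percentile rank of `value` within `reference` for a metric where lower is better.

    Counts the share of reference entries that score worse (higher) than `value`;
    ties count half. This tie rule is an illustrative convention, not necessarily
    the one used by the wwPDB.
    """
    if not reference:
        raise ValueError("reference distribution is empty")
    ordered = sorted(reference)
    n = len(ordered)
    at_or_below = bisect_right(ordered, value)          # entries <= value
    ties = at_or_below - bisect_left(ordered, value)    # entries == value
    worse = n - at_or_below                             # entries > value, i.e. worse
    return 100.0 * (worse + 0.5 * ties) / n


# Hypothetical clashscores standing in for the whole archive
archive_clashscores = [2.1, 3.5, 4.0, 6.2, 8.8, 12.5, 15.0, 20.3, 25.1, 40.0]
print(percentile_lower_is_better(4.0, archive_clashscores))  # → 75.0
```

For a higher-is-better metric the comparison would simply be reversed.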

Journal Mandates and Industry Impact

The adoption of PDB Validation Reports by the scientific community has been accelerated by their integration into the manuscript review process. Many leading journals now require the report to be submitted alongside a manuscript describing a new structure [1] [6] [7].

- Journal Requirements: Prominent journals requiring validation reports include Nature, eLife, Journal of Biological Chemistry, IUCr journals, FEBS journals, and Angewandte Chemie [6].

- Drug Discovery Applications: Access to validated PDB structures, especially those with reliably modeled small-molecule ligands, is fundamental to structure-based drug design. A 2024 analysis found that the PDB facilitated the discovery and development of 100% of the 34 new low molecular weight antineoplastic agents approved by the US FDA from 2019-2023 [4].

Researchers engaging with structural data and validation reports rely on several key resources.

Table: Key Resources for Structural Validation

| Resource Name | Type | Function and Utility |
|---|---|---|
| OneDep System [5] | Online Portal | Unified wwPDB platform for deposition, validation, and biocuration of structural data. |
| Stand-alone Validation Server [5] [1] | Web Tool | Allows experimentalists to generate validation reports privately to verify structure quality before formal deposition. |
| Validation Web Service API [5] [2] | Programming Interface | Enables automated generation of validation reports, supporting integration into computational workflows. |
| Mogul [2] | Software | Used internally by wwPDB to check ligand geometry and chirality against the Cambridge Structural Database. |
| Sample Validation Reports [1] | Educational Resource | Pre-publication examples (e.g., 1CBS for good quality, 1FCC for poorer quality) to help users interpret reports. |

PDB Validation Reports represent a cornerstone of modern structural biology, transforming the PDB from a simple data archive into a quality-controlled knowledge resource. By providing a standardized, objective assessment of structural models, these reports empower researchers across the biological and chemical sciences to make confident use of 3D structures, thereby accelerating scientific discovery and therapeutic development.

The Role of the Worldwide PDB (wwPDB) and Validation Task Forces

The Worldwide Protein Data Bank (wwPDB) represents a cornerstone of structural biology, serving as the single global archive for three-dimensional structural data of biological macromolecules. Since its establishment as an international consortium in 2003, the wwPDB has managed the PDB archive through its partners: RCSB PDB in the United States, PDBe in Europe, PDBj in Japan, and the Biological Magnetic Resonance Data Bank (BMRB) [8]. A critical innovation in ensuring the quality and reliability of the structural data within this archive has been the establishment of method-specific Validation Task Forces (VTFs). These expert groups develop community-wide consensus on validation standards, which are implemented through the wwPDB validation pipeline to assess both the coordinate models and their supporting experimental evidence [9] [8]. For researchers, drug developers, and the broader scientific community, these validation processes provide essential, standardized metrics for judging the quality of any given structure, thereby underpinning confident scientific conclusions and decisions in areas such as drug design.

The Worldwide PDB (wwPDB) Organization

The wwPDB's mission extends beyond simple data archiving to encompass comprehensive data deposition, biocuration, validation, and dissemination. The archive, founded in 1971, had grown to over 137,000 structures by early 2018, determined primarily by X-ray crystallography, Nuclear Magnetic Resonance (NMR) spectroscopy, and three-dimensional Electron Microscopy (3DEM) [8]. To manage an increasingly large and complex data-handling workflow, the wwPDB developed the OneDep system, an integrated platform that combines deposition, biocuration, and validation into a unified process [8]. This system ensures that all incoming structures undergo consistent and rigorous processing. Geographically, deposition and biocuration responsibilities are distributed among the wwPDB partners: the RCSB PDB handles the Americas; PDBe covers Europe and Africa; PDBj processes entries from Asia (except China); and the associate member PDBc manages depositions from China [10].

A foundational principle of the wwPDB is that experimental data must accompany coordinate models. This policy mandates the deposition of structure-factor data for X-ray crystallography, restraint and chemical shift data for NMR, and map volumes to the Electron Microscopy Data Bank (EMDB) for 3DEM structures [11]. This ensures that the empirical evidence supporting a structural model is available for validation and reuse. The wwPDB accepts structures determined by experimental methods on actual biological macromolecules, with specific criteria for different polymer types. For example, biologically relevant polypeptide structures must contain at least three residues, while polynucleotide and polysaccharide structures require four or more residues [11]. These criteria help keep the archive a focused, high-quality resource for the scientific community.

Validation Task Forces: Origin and Mandate

The initiative to establish Validation Task Forces (VTFs) arose from a critical need to systematically assess the quality of macromolecular structures in the PDB archive. Realizing that users—including non-specialists—required reliable tools to evaluate structural models, the wwPDB partners convened method-specific VTFs comprising leading experts from the structural biology community [8]. The primary mandate of these task forces was to collect recommendations and develop a consensus on the additional validation checks that should be performed for structures determined by X-ray crystallography, NMR spectroscopy, and 3DEM [9]. Furthermore, they were tasked with identifying the software applications best suited to perform these validation tasks.

The recommendations from the three principal VTFs (for X-ray, NMR, and 3DEM) have fundamentally shaped the modern validation landscape [8]. Their work has ensured that the validation process is not limited to basic geometric checks but extends to a comprehensive assessment of the agreement between the atomic model and the experimental data, as well as the quality of the experimental data itself. This community-driven, consensus-based approach has been vital for the widespread adoption and authority of the resulting validation reports. The wwPDB continues to work closely with these VTFs to incorporate new scientific insights and methodological advancements, ensuring that the validation pipeline remains at the forefront of structural biology quality control.

The wwPDB Validation Pipeline and Reports

The wwPDB validation pipeline is the operational engine that translates VTF recommendations into actionable quality metrics. Integrated directly into the OneDep deposition system, this pipeline performs automated checks on both the structural model and its experimental data [8]. The output is a comprehensive validation report (VR), provided in both human-readable (PDF) and machine-readable (XML) formats, which offers depositors, reviewers, and users a detailed assessment of a structure's quality [8] [1].

Key Validation Metrics and the "Slider Plot"

The validation report employs a range of validation metrics to evaluate different aspects of a structure. A central feature, recommended by the X-ray VTF, is the "slider plot" (see Figure 1), which provides an at-a-glance summary of overall quality [8]. This plot maps key metrics to a percentile score, visually indicating how a given structure compares to all other structures in the archive and, crucially, to other structures determined at a similar resolution. The slider plot uses a color code from blue (high percentile, best quality) to red (low percentile, poorer quality), making it accessible even to non-experts [8].
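The difference between the archive-wide and the resolution-matched comparison can be sketched by filtering the reference set by resolution before ranking, and the blue-to-red coloring by a linear interpolation. The ±0.25 Å window, the minimum subset size of three entries, the fallback to the whole archive, and the linear color ramp are all hypothetical choices made for illustration; they are not the parameters the wwPDB uses.

```python
def relative_percentile(value, resolution, archive, window=0.25, min_entries=3):
    """Percentile of a lower-is-better metric among entries of similar resolution.

    archive: list of (resolution_in_angstrom, metric_value) pairs.
    `window` and `min_entries` are hypothetical parameters; if too few entries
    fall inside the window, the whole archive is used instead.
    """
    similar = [m for res, m in archive if abs(res - resolution) <= window]
    if len(similar) < min_entries:
        similar = [m for _, m in archive]
    worse = sum(1 for m in similar if m > value)   # higher value = worse
    ties = sum(1 for m in similar if m == value)
    return 100.0 * (worse + 0.5 * ties) / len(similar)


def slider_rgb(percentile):
    """Linear red (0th percentile) to blue (100th percentile) color as an RGB tuple."""
    p = max(0.0, min(100.0, percentile)) / 100.0
    return (round(255 * (1 - p)), 0, round(255 * p))


# Invented (resolution, clashscore) pairs
archive = [(1.5, 2.0), (1.6, 3.0), (1.7, 5.0), (2.8, 9.0), (2.9, 12.0), (3.0, 15.0)]
print(relative_percentile(5.0, 1.6, archive))               # vs. similar resolution
print(relative_percentile(5.0, 1.6, archive, window=10.0))  # vs. whole archive
```

With these invented numbers, a clashscore of 5.0 ranks near the 58th percentile of the whole set but only near the 17th percentile among the three entries close to 1.6 Å, which is the kind of discrepancy a resolution-matched comparison is meant to expose.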

Table 1: Key Validation Metrics in the wwPDB Validation Report

| Validation Metric | Description | Method(s) | Interpretation |
|---|---|---|---|
| Ramachandran Outliers | Percentage of protein residues in disallowed regions of the Ramachandran plot [8]. | X-ray, EM, NMR | Lower percentages indicate better protein backbone geometry. |
| Rotamer Outliers | Percentage of protein side chains with unlikely conformations [8]. | X-ray, EM, NMR | Lower percentages indicate better side-chain packing. |
| Clashscore | Number of severe atomic overlaps per 1000 atoms [8]. | X-ray, EM, NMR | Lower scores indicate fewer steric clashes. |
| Bond Length RMSZ | Root-mean-square Z-score of deviations from ideal bond lengths [12]. | X-ray, EM, NMR | Values close to 0 indicate good geometric agreement. |
| Angle RMSZ | Root-mean-square Z-score of deviations from ideal bond angles [12]. | X-ray, EM, NMR | Values close to 0 indicate good geometric agreement. |
| Q-score | Measures the agreement between atomic model and EM map [10]. | 3DEM | Higher scores (closer to 1) indicate better model-map fit. |
| Ligand Fit | Assessment of electron density fit for small-molecule ligands [8]. | X-ray, 3DEM | Good fit supports ligand identity, position, and conformation. |
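The two RMSZ rows can be illustrated with a short calculation: each observed bond length is converted to a Z-score against its ideal value and expected standard deviation, and the root mean square of those Z-scores is reported. The ideal values and sigmas below are placeholders, not entries from the restraint dictionaries the wwPDB pipeline actually uses.

```python
import math


def rmsz(observed, ideal, sigma):
    """Root-mean-square of Z = (observed - ideal) / sigma over all restraints."""
    if not observed or not (len(observed) == len(ideal) == len(sigma)):
        raise ValueError("inputs must be non-empty and of equal length")
    z_scores = [(o - i) / s for o, i, s in zip(observed, ideal, sigma)]
    return math.sqrt(sum(z * z for z in z_scores) / len(z_scores))


# Hypothetical bond lengths in Å: observed, ideal, and the sigma of each ideal value
observed = [1.530, 1.335, 1.240]
ideal = [1.525, 1.330, 1.230]
sigma = [0.020, 0.010, 0.020]
print(round(rmsz(observed, ideal, sigma), 3))
```

Because each deviation is expressed in units of its expected spread, RMSZ is unitless and can be compared across bond types of different stiffness.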

Experimental Data and Model Fit

Beyond internal geometry, the pipeline rigorously assesses how well the atomic model fits the experimental data. For X-ray structures, this includes analyses with tools like phenix.xtriage and the calculation of real-space correlation coefficients [8]. A particularly critical check involves small-molecule ligands, where the pipeline uses the Mogul software to compare ligand geometry against high-quality small-molecule structures from the Cambridge Structural Database (CSD) and assesses the electron density fit to validate the ligand's presence and conformation [8]. For 3DEM structures, a recent and significant advancement has been the introduction of a Q-score percentile slider in the validation report. The Q-score measures the resolvability of atoms in a cryo-EM map, and the slider allows users to compare a structure's average Q-score against the entire archive and a resolution-similar subset, helping to flag issues with model-map fit or map quality [10].

Comparative Analysis of Validation Across Experimental Methods

The wwPDB validation system applies a consistent philosophy of quality assessment across all supported experimental methods, while employing technique-specific metrics and software as defined by the respective VTFs. The following section and table provide a comparative overview.

Table 2: Comparison of wwPDB Validation by Experimental Method

| Aspect | X-ray Crystallography | NMR Spectroscopy | 3DEM |
|---|---|---|---|
| Mandatory Data | Structure factors [11]. | Restraints and chemical shifts [11]. | EMDB map volume [11]. |
| Key Model Metrics | Ramachandran, Rotamer, Clashscore, RSRZ, ligand fit [8]. | Ramachandran, Rotamer, Clashscore, restraint analysis [13]. | Ramachandran, Rotamer, Clashscore, Q-score [10]. |
| Key Data Metrics | Data completeness, R-work/R-free, twinning analysis [8]. | Restraint violations, chemical shift completeness [13]. | Map resolution, FSC curve, model-map Q-score [10] [14]. |
| Specialized Software | MolProbity, phenix.xtriage, Mogul [8]. | PDBStat, analysis of restraint violations [13]. | TEMPy, Q-score analysis [14]. |
| Recent Advances | Archive-wide updates of validation statistics. | Public availability of validation reports for all NMR entries [1]. | Introduction of Q-score percentile slider (2025) [10]. |

Method-Specific Workflows and Outputs

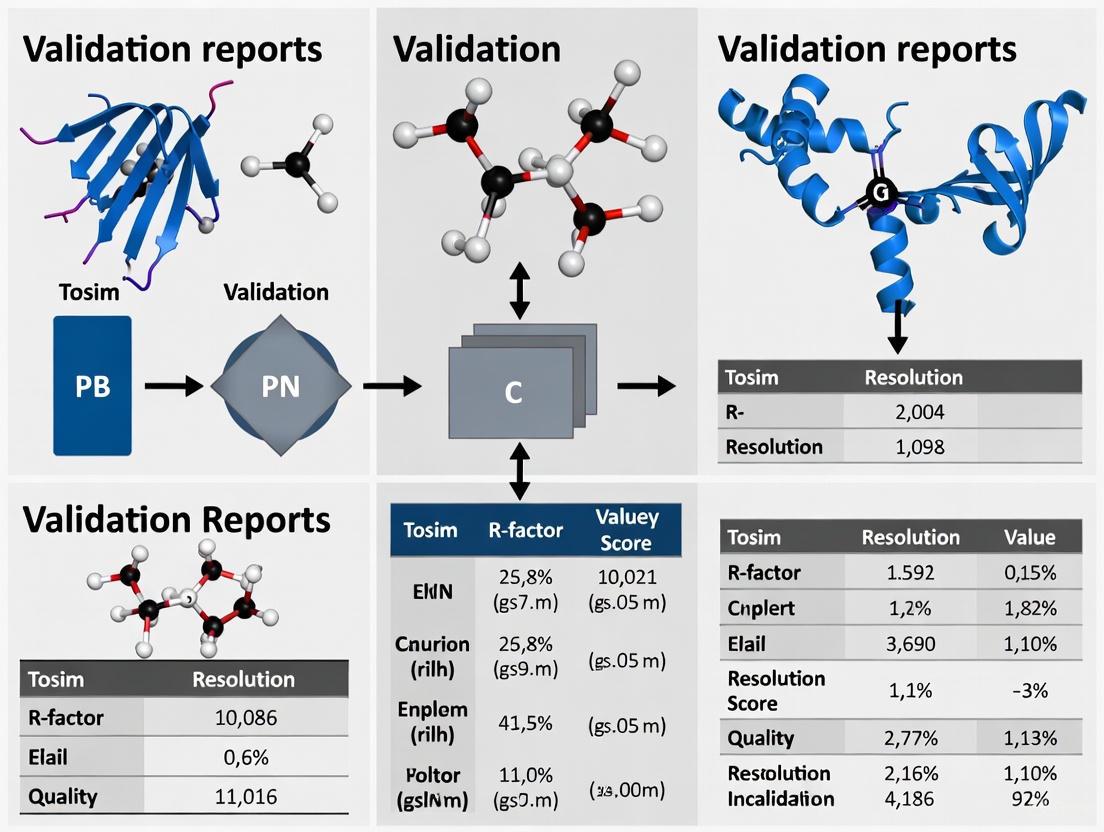

The validation workflow, while unified in the OneDep system, branches to accommodate the specific requirements of each method. The diagram below illustrates this integrated process.

Figure 1: Integrated wwPDB Validation Workflow. The process, governed by OneDep and VTF recommendations, branches for method-specific validation before generating the final report.

Leveraging wwPDB validation data requires awareness of key resources. The following table details essential tools and access points for researchers.

Table 3: Key Resources for Structural Validation

| Resource Name | Type | Function & Purpose | Access / Provider |
|---|---|---|---|
| wwPDB Validation Server | Web Server | Allows experimentalists to run the official validation pipeline on their models prior to deposition, enabling quality improvement [1]. | https://validate.wwpdb.org [8] |
| RCSB PDB Data API | Programming Interface | Enables programmatic retrieval of validation report data, allowing integration into custom analysis pipelines and tools [12]. | RCSB PDB API [12] |
| MolProbity | Software Suite | Provides all-atom contact, torsional, and geometry analysis. Integrated into the wwPDB pipeline to generate Clashscore and rotamer/Ramachandran statistics [8]. | Richardson Lab / Duke University |
| PDBx/mmCIF Format | Data Format | The standard format for PDB deposition and data representation. Required for accurately representing complex modern structural data [10]. | wwPDB / IUCr |
| Coot | Model Building Software | A tool for model building and refinement that can display per-residue validation information from the wwPDB validation reports for released entries [8]. | MRC Laboratory of Molecular Biology |
| MolViewSpec | Visualization Spec | A Mol* extension to create, share, and reproduce molecular scenes, ensuring visualization reproducibility [10]. | Mol* |

Experimental Protocols for Utilizing Validation Data

To ensure robust scientific outcomes, researchers should adopt a systematic protocol for reviewing validation data. The first step is the acquisition of the validation report. For any PDB entry of interest, the official validation report (PDF) can be downloaded directly from the entry page on any of the wwPDB partner sites (RCSB PDB, PDBe, PDBj) [1]. For large-scale analyses, the machine-readable XML files for all released entries are available via FTP/HTTP, or programmatically through the RCSB PDB Data API, which allows retrieval of specific validation metrics like MolProbity scores or Ramachandran outliers [12].
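A minimal sketch of the acquisition step follows. It assumes the RCSB file-server layout for validation XML (`https://files.rcsb.org/pub/pdb/validation_reports/<middle two characters>/<id>/<id>_validation.xml.gz`, classic four-character IDs only) and assumes that global scores appear as attributes of an `Entry` element and per-residue scores as attributes of `ModelledSubgroup` elements; the path, element names, and attribute names should all be checked against the current wwPDB validation XML schema before use. The parser is demonstrated on an inline, invented sample so that it runs without network access.

```python
import gzip
import urllib.request
import xml.etree.ElementTree as ET


def validation_xml_url(pdb_id):
    """Assumed RCSB archive path for a validation XML file (verify before relying on it)."""
    pid = pdb_id.lower()  # classic 4-character IDs; middle two characters name the folder
    return (f"https://files.rcsb.org/pub/pdb/validation_reports/"
            f"{pid[1:3]}/{pid}/{pid}_validation.xml.gz")


def download_validation_xml(pdb_id):
    """Fetch and decompress the validation XML (requires network access)."""
    with urllib.request.urlopen(validation_xml_url(pdb_id)) as response:
        return gzip.decompress(response.read())


def parse_validation(xml_text):
    """Return (global attributes of <Entry>, list of per-residue attribute dicts)."""
    root = ET.fromstring(xml_text)
    entry = root.find("Entry")
    global_metrics = dict(entry.attrib) if entry is not None else {}
    residues = [dict(sub.attrib) for sub in root.iter("ModelledSubgroup")]
    return global_metrics, residues


# Invented, heavily truncated sample in the assumed layout
SAMPLE = """<wwPDB-validation-information>
  <Entry pdbid="1abc" clashscore="4.2" percent-rama-outliers="0.3" percent-rota-outliers="1.1"/>
  <ModelledSubgroup chain="A" resnum="57" resname="HIS" rscc="0.95" rsr="0.12"/>
  <ModelledSubgroup chain="A" resnum="102" resname="ASP" rscc="0.71" rsr="0.45"/>
</wwPDB-validation-information>"""

metrics, residues = parse_validation(SAMPLE)
print(metrics["clashscore"], len(residues))
```

Attribute values come back as strings, so numeric comparisons need an explicit `float()` conversion.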

The core of the protocol is a hierarchical analysis of the report. The initial assessment should focus on the Overall Quality at a Glance slider plot [8]. Investigators should look for a preponderance of blue (high percentile) indicators, with particular attention to the key model quality metrics: Ramachandran outliers, rotamer outliers, and clashscore. A structure with multiple metrics in the red (low percentile) should be treated with caution. Subsequently, a detailed investigation of specific areas is required. This includes checking the fit of key residues in the active site or at protein-protein interfaces using real-space correlation data, and scrutinizing the geometry and electron density fit of any small-molecule ligands, cofactors, or ions [8]. For 3DEM structures, the newly introduced Q-score slider and residue-level Q-score data should be used to assess the local and global model-map fit [10].
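The first two steps of this protocol can be sketched as a simple filter: count how many global metrics fall in the low-percentile range, then check the real-space fit of residues in a site of interest. The 25th-percentile cut-off, the "more than one red metric" rule, and the record layout are hypothetical choices; the 0.8 RSCC threshold is the one quoted in the local-outlier table later in this guide. All values are invented.

```python
def triage(percentiles, red_cutoff=25.0, max_red=1):
    """Flag metrics whose percentile falls below `red_cutoff` (a hypothetical threshold)."""
    red = sorted(name for name, pct in percentiles.items() if pct < red_cutoff)
    verdict = "treat with caution" if len(red) > max_red else "proceed to local checks"
    return verdict, red


def poorly_fit_site_residues(residues, site, rscc_cutoff=0.8):
    """Residues in `site`, given as (chain, residue number) pairs, whose RSCC is below the cut-off."""
    return [r for r in residues
            if (r["chain"], int(r["resnum"])) in site and float(r["rscc"]) < rscc_cutoff]


# Invented percentile scores and per-residue records (string values, as parsed from XML)
scores = {"clashscore": 12.0, "ramachandran_outliers": 8.0,
          "sidechain_outliers": 55.0, "rfree": 60.0}
residues = [{"chain": "A", "resnum": "57", "rscc": "0.95"},
            {"chain": "A", "resnum": "102", "rscc": "0.71"},
            {"chain": "B", "resnum": "10", "rscc": "0.60"}]
active_site = {("A", 57), ("A", 102)}

print(triage(scores))
print(poorly_fit_site_residues(residues, active_site))
```

Note that residue B10 is ignored despite its low RSCC, because it lies outside the site of interest; a full review would still inspect it.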

Finally, the protocol requires contextual and comparative analysis. Validation metrics must always be interpreted in the context of the structure's resolution. For example, a higher percentage of Ramachandran outliers is expected in a lower-resolution X-ray or EM structure. Comparing the validation metrics of several structures within the same family can help identify which one is most reliable for detailed mechanistic analysis or as a starting point for molecular docking. This multi-layered protocol ensures that researchers can effectively triage and select the highest-quality structural data for their specific research or drug development projects.
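When several entries of the same protein family are available, a first-pass comparison can be reduced to a sort over a few validation fields. The ranking key used here (resolution first, then R-free, then clashscore) is one hypothetical choice; projects may reasonably weigh these metrics differently, and the entry identifiers and values are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    entry_id: str       # hypothetical identifiers in the example below
    resolution: float   # Å; lower is better
    rfree: float        # lower is better
    clashscore: float   # lower is better


def rank_candidates(candidates):
    """Order candidates best-first by resolution, then R-free, then clashscore."""
    return sorted(candidates, key=lambda c: (c.resolution, c.rfree, c.clashscore))


family = [
    Candidate("XRAY_A", 2.10, 0.24, 6.0),
    Candidate("XRAY_B", 1.80, 0.27, 15.0),
    Candidate("XRAY_C", 1.80, 0.22, 4.0),
]
print([c.entry_id for c in rank_candidates(family)])
```

A strict lexicographic key lets a tiny resolution difference override a large R-free difference, so a weighted score or resolution binning may be preferable in practice.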

Validation reports for Protein Data Bank (PDB) crystallographic structures provide a standardized assessment of structural model quality, serving as critical tools for researchers, scientists, and drug development professionals. These reports, generated by the Worldwide PDB (wwPDB) consortium, implement community-recommended standards to evaluate both the overall reliability of macromolecular structures and specific local features that may require careful scrutiny. For professionals relying on structural data for drug design and functional analysis, understanding these components is essential for interpreting structural models accurately and avoiding erroneous conclusions based on potentially problematic regions. This guide examines the key elements of these validation reports, from high-level global quality indicators to residue-level outlier identification, providing a framework for critical assessment of crystallographic structures within the research pipeline.

The wwPDB validation report provides an executive summary section titled "Overall quality at a glance" that serves as a rapid evaluation dashboard for researchers. This section displays key information about the entry including the experimental technique and a proxy measure of information content (resolution for crystal structures) [15]. The most visually distinctive elements are the percentile sliders, which compare the validated structure against the entire PDB archive, providing immediate context for interpretation [15]. This overview enables drug development professionals to quickly assess whether a structure meets minimum quality thresholds for their specific applications, whether for high-resolution mechanistic studies or lower-resolution molecular placement.

Global Validation Metrics: The Big Picture

Global metrics provide an overall assessment of structure quality, allowing for rapid comparison between structures and evaluation against archival norms. These metrics are particularly valuable for journal reviewers and editors who need to assess structural reliability during manuscript evaluation, and for scientists selecting appropriate structures for their research programs.

Table 1: Global Validation Metrics for X-ray Crystallographic Structures

| Metric Category | Specific Metrics | Interpretation Guidelines | Comparative Context |
|---|---|---|---|
| Model-Data Fit | R-factor, R-free [16] | Lower values indicate better agreement (perfect = 0); R-free should be slightly higher (~0.05) than R-factor; large differences suggest potential over-fitting | Percentile scores compared to similar-resolution structures in the PDB archive [15] |
| Experimental Data Quality | Resolution [16] | Lower values (e.g., 1.8 Å vs 3.0 Å) indicate better resolvability of adjacent atoms | Direct numerical value with established quality ranges (e.g., <2.0 Å = high, >3.0 Å = low) |
| Geometric Quality | Clashscore, Ramachandran outliers, sidechain outliers [17] | Lower clashscores and lower percentage of outliers indicate better stereochemistry | Percentile rankings compared to entire PDB archive [15] |

The R-free value deserves particular attention in drug development contexts, as it serves as an unbiased validation metric calculated against experimental data not used during structure refinement [16]. A significant divergence between R-factor and R-free may indicate over-interpretation of the experimental data, potentially compromising the reliability of ligand-binding sites or active regions—critical information for structure-based drug design initiatives.
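The relationship between the two statistics can be made concrete with the conventional crystallographic R-factor, R = Σ||F_obs| − |F_calc|| / Σ|F_obs|, computed once over the working reflections and once over the held-out test reflections. The amplitudes and test-set flags below are invented, and real refinement programs scale F_calc to F_obs before this step, which the sketch omits.

```python
def r_factor(f_obs, f_calc):
    """R = sum(| |Fo| - |Fc| |) / sum(|Fo|); assumes Fo and Fc are already on a common scale."""
    numerator = sum(abs(abs(fo) - abs(fc)) for fo, fc in zip(f_obs, f_calc))
    denominator = sum(abs(fo) for fo in f_obs)
    return numerator / denominator


def r_work_and_r_free(f_obs, f_calc, is_free):
    """Split reflections by the test-set flag and compute R for each subset."""
    work = [(fo, fc) for fo, fc, free in zip(f_obs, f_calc, is_free) if not free]
    test = [(fo, fc) for fo, fc, free in zip(f_obs, f_calc, is_free) if free]
    r_work = r_factor([fo for fo, _ in work], [fc for _, fc in work])
    r_free = r_factor([fo for fo, _ in test], [fc for _, fc in test])
    return r_work, r_free


# Invented structure-factor amplitudes; the last two reflections form the test set
f_obs = [100, 80, 60, 50, 40, 40]
f_calc = [82, 95, 47, 62, 30, 50]
is_free = [False, False, False, False, True, True]

r_work, r_free = r_work_and_r_free(f_obs, f_calc, is_free)
print(f"R-work = {r_work:.3f}, R-free = {r_free:.3f}, gap = {r_free - r_work:.3f}")
```

Because the test reflections never enter refinement, fitting noise lowers R-work without lowering R-free, which is why a widening gap is read as a sign of over-fitting.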

Local Outlier Analysis: The Devil in the Details

While global metrics provide overall quality assessment, local outlier analysis identifies specific regions of concern within the structural model. For researchers focusing on particular binding sites, enzyme active regions, or molecular interfaces, these local validations are often more informative than global scores, which can sometimes mask localized issues [15].

Table 2: Local Outlier Analysis in Validation Reports

| Validation Type | Specific Checks | Outlier Identification | Research Implications |
|---|---|---|---|
| Rotamer Analysis | Sidechain conformations [17] | Unfavorable rotamers of Asn, Gln, and other residues [18] | Potential errors in ligand-interacting residues; functional implications |
| Ramachandran Assessment | Phi/psi dihedral angles [17] | Residues in disallowed regions of Ramachandran plot | Possible backbone errors affecting protein folding interpretation |
| Real-Space Fit | Fit to electron density [15] | RSCC < 0.8 and RSR > 0.4 indicate poor fit [19] | Low confidence in atomic coordinates for specific residues |
| Steric Clashes | Non-bonded atom contacts [17] | Atoms positioned too closely without appropriate bonding | Structurally unrealistic models affecting binding site geometry |

The validation report provides both summary information (typically up to five outliers per metric) and complete listings of all outliers, enabling focused investigation of potentially problematic regions [15]. This granular approach is particularly valuable for drug development professionals who need to assess the reliability of specific binding pockets or catalytic sites when selecting structural templates for virtual screening or lead optimization.
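The split between a short summary and a complete listing can be mimicked by collecting every residue that fails a criterion and then truncating the list for display. The sketch applies the RSCC < 0.8 and RSR > 0.4 thresholds from Table 2 as two separate per-metric checks (an assumption about how they are combined), uses the five-entry cap mentioned above, and runs on invented residue records; the "OUTLIER" label is likewise an illustrative assumption.

```python
# Per-metric outlier criteria (illustrative; each threshold is applied on its own)
CRITERIA = {
    "RSCC < 0.8": lambda r: r["rscc"] < 0.8,
    "RSR > 0.4": lambda r: r["rsr"] > 0.4,
    "Ramachandran outlier": lambda r: r["rama"] == "OUTLIER",
}


def outlier_report(residues, summary_size=5):
    """For each metric, return a capped summary plus the complete outlier list."""
    report = {}
    for metric, is_outlier in CRITERIA.items():
        hits = [f'{r["chain"]}:{r["resname"]}{r["resnum"]}'
                for r in residues if is_outlier(r)]
        report[metric] = {"summary": hits[:summary_size], "total": len(hits), "all": hits}
    return report


# Invented records: seven glycines with weak density and one Asp backbone outlier
residues = [{"chain": "A", "resname": "GLY", "resnum": i,
             "rscc": 0.70, "rsr": 0.30, "rama": "Favored"} for i in range(1, 8)]
residues.append({"chain": "A", "resname": "ASP", "resnum": 102,
                 "rscc": 0.90, "rsr": 0.45, "rama": "OUTLIER"})

for metric, entry in outlier_report(residues).items():
    print(f"{metric}: {entry['total']} total, summary {entry['summary']}")
```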

Experimental Protocols and Methodologies

The validation pipeline incorporates multiple sophisticated analytical methods, each with specific experimental or computational protocols:

Electron Density Fit Analysis

The Real Space Correlation Coefficient (RSCC) validation uses electron density maps calculated from deposited structure factors [16]. The protocol involves: (1) calculating electron density from the atomic model; (2) comparing calculated density with experimental electron density; (3) computing correlation coefficients on a per-residue basis; (4) identifying outliers with RSCC<0.8 [19]. This methodology provides residue-level validation of the fit between atomic coordinates and experimental data, highlighting regions where the model may be unsupported by experimental evidence.
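Steps (2) and (3) reduce to a Pearson correlation between observed and model-calculated density values sampled at the same grid points around a residue. The simplified sketch below assumes that sampling has already been done (the real pipeline selects grid points from atomic positions and radii, which is not modeled here) and uses invented density values.

```python
import math


def rscc(rho_obs, rho_calc):
    """Pearson correlation between observed and calculated density at matching grid points."""
    if len(rho_obs) != len(rho_calc) or len(rho_obs) < 2:
        raise ValueError("need two equally sized samples with at least two points")
    n = len(rho_obs)
    mean_o = sum(rho_obs) / n
    mean_c = sum(rho_calc) / n
    cov = sum((o - mean_o) * (c - mean_c) for o, c in zip(rho_obs, rho_calc))
    var_o = sum((o - mean_o) ** 2 for o in rho_obs)
    var_c = sum((c - mean_c) ** 2 for c in rho_calc)
    if var_o == 0 or var_c == 0:
        raise ValueError("density sample has zero variance")
    return cov / math.sqrt(var_o * var_c)


# Invented density values at five grid points around one residue
observed = [0.1, 0.5, 1.2, 0.9, 0.3]
well_placed = [0.2, 0.6, 1.1, 1.0, 0.2]   # model density follows the map
misplaced = [0.9, 0.2, 0.4, 0.1, 0.8]     # model density does not
for label, calc in (("well placed", well_placed), ("misplaced", misplaced)):
    value = rscc(observed, calc)
    print(label, round(value, 3), "outlier" if value < 0.8 else "ok")
```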

Geometry Validation Protocols

MolProbity validation implements all-atom contact analysis using updated geometrical criteria for phi/psi angles, sidechain rotamers, and Cβ deviations [18] [17]. The methodology involves: (1) adding hydrogen atoms to the model; (2) analyzing all interatomic distances to identify clashes; (3) evaluating rotamer distributions against high-quality reference data; (4) calculating Ramachandran preferences based on dihedral angle distributions [17]. This comprehensive geometric analysis identifies steric problems and conformational outliers that may indicate modeling errors.
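The clash-detection and normalization steps can be sketched as a pairwise overlap test followed by scaling to 1000 atoms. In MolProbity, an overlap of 0.4 Å or more between van der Waals surfaces counts as a serious clash; the sketch keeps that cut-off but replaces Probe's dot-surface method with a simple radius-sum test, skips hydrogen addition and hydrogen-bond allowances, and uses approximate Bondi-style radii and invented coordinates purely for illustration.

```python
import itertools
import math

# Approximate van der Waals radii in Å (illustrative values only)
VDW_RADII = {"C": 1.70, "N": 1.55, "O": 1.52}


def serious_clashes(atoms, bonded, cutoff=0.4):
    """atoms: list of (name, element, (x, y, z)); bonded: set of frozenset name pairs to skip."""
    clashes = []
    for (n1, e1, p1), (n2, e2, p2) in itertools.combinations(atoms, 2):
        if frozenset((n1, n2)) in bonded:
            continue  # covalently bonded atoms are expected to be close
        overlap = VDW_RADII[e1] + VDW_RADII[e2] - math.dist(p1, p2)
        if overlap >= cutoff:
            clashes.append((n1, n2, round(overlap, 2)))
    return clashes


def clashscore(n_clashes, n_atoms):
    """Serious clashes per 1000 atoms."""
    return 1000.0 * n_clashes / n_atoms


atoms = [
    ("A1", "C", (0.0, 0.0, 0.0)),
    ("A2", "C", (1.53, 0.0, 0.0)),   # bonded to A1
    ("B1", "O", (0.0, 2.5, 0.0)),    # too close to A1 for a non-bonded contact
    ("C1", "N", (6.0, 0.0, 0.0)),    # far from everything
]
bonded = {frozenset(("A1", "A2"))}
found = serious_clashes(atoms, bonded)
print(found, clashscore(len(found), len(atoms)))
```

The all-pairs loop is quadratic in the number of atoms; real tools use spatial binning to keep this tractable for large structures.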

Visualization of Validation Workflows

The validation process follows a systematic workflow that integrates multiple validation approaches to assess different aspects of structure quality, as illustrated in the following diagram:

Validation Workflow for PDB Structures

The relationships between different validation metrics and their collective interpretation can be visualized as an interconnected system:

Validation Metrics Interrelationship

Researchers working with PDB validation reports utilize several key resources that facilitate structure validation and quality assessment:

Table 3: Essential Validation Tools and Resources

| Tool/Resource | Primary Function | Application in Research |
|---|---|---|
| wwPDB Validation Server (https://validate.wwpdb.org) | Stand-alone validation prior to deposition [15] | Pre-submission quality check for structural biologists |
| MolProbity | All-atom contact analysis and geometry validation [18] | Identification of steric clashes, rotamer outliers, and Ramachandran issues |
| OneDep System | Unified deposition, biocuration, and validation platform [15] | Centralized workflow for structure submission to PDB |
| RCSB PDB Validation Resources | Access to validation reports and documentation [1] | Retrieval and interpretation of validation data for existing entries |
| Coot | Molecular graphics with validation visualization [15] | Interactive model building with validation outlier display |

Validation reports for PDB crystallographic structures provide an essential framework for assessing the reliability of macromolecular models in structural biology research and drug development. These reports integrate global metrics that offer overall quality assessment with detailed local outlier analysis that identifies specific regions requiring careful scrutiny. For researchers relying on structural data for drug discovery, understanding both components—from R-free values and resolution limits to residue-specific geometry outliers and electron density fit—is crucial for appropriate interpretation and application of structural models. As the wwPDB continues to refine these reports based on community recommendations [15], they remain living documents that evolve alongside methodological advances in structural biology, continually enhancing their utility for critical assessment of the structural data that underpins modern drug development.

Within structural biology and structure-based drug design, the reliability of a molecular model is paramount. The Slider Plot, featured prominently on the RCSB PDB's Structure Summary pages, serves as a critical visual dashboard for the global quality of experimentally determined protein structures [20]. This guide objectively compares this integrated visualization tool with standalone validation alternatives, providing researchers with the data and context needed to make informed decisions in their computational analyses and therapeutic development workflows.

What is the Slider Plot?

The Slider Plot is a component of the wwPDB validation report, graphically summarizing key global quality indicators for a PDB entry [20] [21]. It provides an at-a-glance assessment of a structure's quality by presenting its performance across several validation metrics relative to all structures in the PDB and to structures of comparable resolution [20].

- Purpose: To offer a quick, intuitive overview of a structure's overall quality.

- Location: Found in the Header section of the Structure Summary page for any experimental structure on RCSB.org [20].

- Presentation: Each row represents a specific quality measure, displayed as a horizontal slider with the structure's percentile score indicated by vertical bars [20].
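To make the row layout concrete, the sketch below draws a plain-text version of a single slider, with one marker for the percentile relative to the whole archive and one for the resolution-matched subset. The 40-character track, the marker symbols, and the example percentiles are arbitrary illustrative choices; the actual Slider Plot is a colored graphic.

```python
def slider_row(label, absolute_pct, relative_pct, width=40):
    """Render one text slider row.

    Left end = 0th percentile (worse), right end = 100th percentile (better).
    'A' marks the archive-wide percentile, 'R' the resolution-matched one,
    and '*' is used when both fall in the same cell.
    """
    def cell(pct):
        pct = max(0.0, min(100.0, pct))
        return min(width - 1, int(pct / 100.0 * width))

    track = ["-"] * width
    a, r = cell(absolute_pct), cell(relative_pct)
    track[a] = "A"
    track[r] = "R"
    if a == r:
        track[a] = "*"
    return f"{label:<24}|{''.join(track)}|"


# Invented percentiles for three metrics
print(slider_row("Clashscore", 75, 40))
print(slider_row("Ramachandran outliers", 90, 85))
print(slider_row("Rfree", 50, 50))
```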

Table 1: Core Quality Metrics Represented in the Slider Plot

| Metric | Description | Interpretation |
|---|---|---|
| Clashscore | Measures steric overlaps between atoms; a lower score indicates fewer clashes [22]. | Lower values are better and place the structure toward the right (blue) end of the slider [20]. |
| Ramachandran Outliers | Percentage of protein residues in disallowed regions of the Ramachandran plot [21]. | Lower percentages are better and place the structure toward the right (blue) end [20]. |
| Sidechain Outliers | Percentage of protein residues with unlikely sidechain rotamers. | Lower percentages are better and place the structure toward the right (blue) end [20]. |
| Rfree value | Cross-validation statistic indicating agreement with experimental data not used in refinement [16]. | Lower values are better and place the structure toward the right (blue) end [20] [16]. |
| RSRZ Outliers | Real Space R Z-score; identifies residues with poor fit to the experimental electron density [16]. | Fewer outliers are better and place the structure toward the right (blue) end [20]. |

Comparative Analysis: Slider Plot vs. Alternative Validation Tools

While the Slider Plot offers a streamlined summary, a comprehensive validation strategy often requires deeper analysis. The following table compares its capabilities against other widely used validation resources.

Table 2: Objective Comparison of the Slider Plot and Alternative Validation Methods

| Validation Tool | Key Features | Data Sources | Primary Outputs | Best Use Case |
|---|---|---|---|---|
| Slider Plot (RCSB PDB) | Integrated on the PDB entry page; provides percentile-based visual summary [20]. | wwPDB validation data; PDB-wide statistics for comparison [20]. | Global quality metrics relative to the entire PDB and similar-resolution structures [20]. | Quick, initial assessment of overall structure quality during data retrieval. |
| MolProbity | All-atom contact analysis; modern geometrical criteria for dihedrals and Cβ deviations [18]. | User-uploaded coordinate files or PDB IDs [18]. | Detailed, residue-level reports on clashes, rotamers, Ramachandran plots, and Cβ deviations [18]. | In-depth, per-residue quality evaluation before detailed analysis or publication. |
| PROCHECK | Validates stereochemical quality of protein structures [18]. | User-uploaded coordinate files [18]. | Ramachandran plot quality and detailed stereochemical statistics [18]. | Complementary analysis of protein backbone conformation. |
| EMRinger | Scores the fit of a model into its cryo-EM density map, particularly for side chains. | Cryo-EM map and atomic model. | EMRinger score, indicating model-to-map fit. | Validating models built into mid-resolution cryo-EM maps. |
| Q-Score | Measures atom resolvability in cryo-EM maps [16]. | Cryo-EM map and atomic model coordinates [16]. | Per-atom and average Q-scores for the model; included in 3DEM validation reports [16]. | Assessing the local fit and interpretability of cryo-EM models. |

Supporting Experimental Data and Performance Gaps

The Slider Plot's percentile rankings are derived from the statistical analysis of the entire PDB archive [20]. For example, an X-ray structure's Slider Plot will display:

- A solid bar representing the structure's quality percentile relative to all X-ray structures in the PDB [20].

- A hollow bar representing its quality percentile relative to other X-ray structures of similar resolution [20].

This allows a researcher to see immediately whether a 2.5 Å structure is of high quality for its resolution. However, a key performance gap is its focus on global metrics. It does not provide residue-level or ligand-specific validation data. For instance, a structure might have excellent global scores but contain a poorly fitted active-site inhibitor. Identifying such issues requires tools like MolProbity or the 3D visualization of validation metrics available in the Mol* viewer on RCSB.org, which can map local quality measures like RSRZ or clash hotspots directly onto the 3D structure [22].

Detailed Methodologies for Key Validation Experiments

Understanding the protocols behind the metrics is essential for their correct interpretation.

Experimental Protocol 1: Generating the Slider Plot

- Data Deposition & Processing: The depositor submits the atomic coordinates and the associated experimental data (e.g., structure factors for X-ray crystallography) to the wwPDB [16].

- Automated Validation: The wwPDB validation pipeline runs, using standards and recommendations from international Validation Task Forces (VTFs) for X-ray, NMR, and 3DEM methods [16].

- Percentile Calculation: The pipeline calculates key metrics (Clashscore, Ramachandran outliers, etc.) for the deposited structure and compares them against the distribution of these metrics across all relevant PDB structures.

- Report Generation: The Slider Plot is generated as part of the full validation report, with the position of the percentile bars determined by the structure's rank within the calculated distributions [20].
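The percentile step can be sketched as follows. This is a minimal illustration, not the wwPDB implementation: `Entry`, `slider_percentiles`, and the ±0.1 Å "similar resolution" window are hypothetical assumptions, and the real pipeline defines its own resolution-matched reference set. For a lower-is-better metric such as Clashscore, the sketch takes the percentile to be the share of reference structures that score worse, so a higher percentile sits toward the better end of the slider. The two returned values correspond to the solid bar (all X-ray entries) and the hollow bar (similar-resolution entries).

```python
from typing import NamedTuple


class Entry(NamedTuple):
    """Hypothetical reference record: one archived X-ray structure."""
    resolution: float  # Å
    clashscore: float  # lower is better


def percentile_lower_is_better(value: float, reference: list[float]) -> float:
    """Percentage of reference values that are strictly worse (higher) than `value`."""
    if not reference:
        raise ValueError("reference distribution is empty")
    worse = sum(1 for r in reference if r > value)
    return 100.0 * worse / len(reference)


def slider_percentiles(value: float, resolution: float,
                       archive: list[Entry], window: float = 0.1) -> tuple[float, float]:
    """Return (percentile vs. all entries, percentile vs. similar-resolution entries).

    The +/- `window` Å band is an assumed stand-in for the pipeline's own
    definition of "similar resolution".
    """
    everything = [e.clashscore for e in archive]
    similar = [e.clashscore for e in archive
               if abs(e.resolution - resolution) <= window]
    return (percentile_lower_is_better(value, everything),
            percentile_lower_is_better(value, similar))
```

Because the comparison set changes, the same Clashscore can land at different positions on the two bars, which is exactly the information the solid and hollow markers are meant to convey.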

Experimental Protocol 2: Assessing Local Density Fit with RSCC

The Real-Space Correlation Coefficient (RSCC) is a critical local measure referenced in validation reports and viewable in 3D [16].

- Data Input: The protocol requires the atomic coordinates of a residue and the experimental electron density map.

- Calculation: For each residue, a simulated electron density map is calculated based on the atomic model. The RSCC is computed by comparing this simulated density to the experimental density in the region surrounding the residue.

- Interpretation: An RSCC value of 1.0 indicates perfect agreement. Residues with RSCC values in the lowest 1% of distributions for their amino acid type and resolution should not be trusted, while those in the lowest 1-5% should be treated with caution [16].
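The RSCC calculation and the percentile-based trust rule can be sketched in plain Python. The sketch assumes that the experimental and model densities have already been sampled at the same grid points around one residue, and that a reference RSCC distribution for that residue type and resolution is available; `trust_flag` and its labels are illustrative names, not wwPDB terminology.

```python
import math


def rscc(rho_exp: list[float], rho_calc: list[float]) -> float:
    """Pearson correlation between experimental and model density samples."""
    if len(rho_exp) != len(rho_calc) or len(rho_exp) < 2:
        raise ValueError("need two equally sized samples with at least 2 points")
    mu_e = sum(rho_exp) / len(rho_exp)
    mu_c = sum(rho_calc) / len(rho_calc)
    cov = sum((e - mu_e) * (c - mu_c) for e, c in zip(rho_exp, rho_calc))
    var_e = sum((e - mu_e) ** 2 for e in rho_exp)
    var_c = sum((c - mu_c) ** 2 for c in rho_calc)
    if var_e == 0 or var_c == 0:
        raise ValueError("a flat density sample has no defined correlation")
    return cov / math.sqrt(var_e * var_c)


def trust_flag(value: float, reference: list[float]) -> str:
    """Classify a residue's RSCC by where it falls in a reference distribution."""
    if not reference:
        raise ValueError("reference distribution is empty")
    pct_below = 100.0 * sum(1 for r in reference if r < value) / len(reference)
    if pct_below < 1.0:   # lowest 1%: should not be trusted
        return "do not trust"
    if pct_below < 5.0:   # lowest 1-5%: treat with caution
        return "treat with caution"
    return "acceptable"
```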

Slider Plot Generation Workflow: This diagram illustrates the automated pipeline from data deposition to the generation of the Slider Plot and full validation report on the RCSB PDB website.

Table 3: Essential Resources for Structure Validation and Analysis

| Resource Name | Type | Primary Function in Validation |
|---|---|---|
| RCSB PDB Structure Summary Page | Web Portal | Central hub for accessing the Slider Plot, full validation report PDF, and links to 3D visualization [20]. |
| Mol* Viewer | 3D Visualization Software | Enables 3D mapping of quality metrics like clashscores and density fit (RSRZ/RSCC) onto the molecular structure for local assessment [22]. |
| wwPDB Validation Report | Data Report | The comprehensive PDF report containing the Slider Plot, detailed analyses, and specific outlier listings [21]. |
| MolProbity Server | Validation Web Service | Provides all-atom contact analysis, updated Ramachandran evaluations, and rotamer outlier checks for in-depth, residue-level validation [18]. |
| CheckMyMetal | Specialized Validation Service | Validates the geometry and identity of metal-binding sites in metalloprotein structures [18]. |

Structure Quality Assessment Strategy: This diagram outlines a multi-tiered validation strategy, from initial Slider Plot review to in-depth analysis with external tools, leading to an informed decision on a structure's suitability for research.

How Validation Serves the Broader Scientific Community and Journals

Validation is a cornerstone of structural biology, transforming raw experimental data into reliable, publicly accessible knowledge that fuels further scientific discovery. In the context of crystallographic structures, validation refers to the comprehensive process of assessing the quality, reliability, and chemical correctness of structural models before they enter the scientific record. This process serves as a critical quality control mechanism that ensures the scientific integrity of structures housed in repositories like the Protein Data Bank (PDB), which in turn enables their effective reuse across diverse research domains [23].

The PDB, maintained by the Worldwide Protein Data Bank (wwPDB) consortium, represents one of biology's richest open-source repositories, housing over 242,000 macromolecular structural models alongside their experimental data [24]. Since its establishment in 1971, systematic archiving, validation, and indexing of these structures have accelerated discoveries across structural biology, enabling researchers to compare new entries against a vast archive of solved structures [24]. The democratization of this structural data, amplified by modern computational tools, has empowered a broad community of researchers to drive new scientific discoveries—but this widespread usage is fundamentally dependent on robust validation protocols that ensure data reliability [24].

Validation Metrics and Methodologies for Structural Data

Core Validation Metrics for Crystallographic Structures

Crystallographic validation employs multiple quantitative metrics to assess different aspects of structural models. While the R factor remains the most widely recognized measure describing the fit of the model to the experimental data, numerous additional quality metrics provide valuable insights into refinement quality and model validity [25]. The Cambridge Structural Database (CSD), a comprehensive repository of over 1.3 million unique crystallographic datasets, has identified several key metrics particularly valuable for assessing structural quality [25].

Table 1: Essential Validation Metrics for Crystallographic Structures

| Metric Category | Specific Metric | Interpretation | Optimal Range |
|---|---|---|---|
| Fit to Experimental Data | R-factor (_refine_ls_R_factor_gt) | Difference between observed and calculated structure-factor amplitudes | Lower values indicate better fit (typically <0.20) |
| | Weighted R-factor (_refine_ls_wR_factor_ref) | R-factor with a weighting scheme applied | Lower values preferred |
| | Goodness of fit (_refine_ls_goodness_of_fit_ref) | How well the model fits the experimental data, given the weighting scheme | Values close to 1.0 are ideal |
| Refinement Convergence | Maximum shift/su (_refine_ls_shift/su_max) | Maximum parameter shift per standard uncertainty in the last refinement cycle | Values <0.05 indicate convergence |
| Electron Density | Maximum difference density (_refine_diff_density_max) | Highest peak in the difference density map | Should be small relative to map values |
| | Minimum difference density (_refine_diff_density_min) | Deepest hole (most negative value) in the difference density map | Should be small in magnitude relative to map values |
| Data Resolution | Theta max (_diffrn_reflns_theta_max) | Maximum diffraction angle used for data collection | Higher values indicate higher resolution (for a given wavelength) |
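The link between the maximum diffraction angle and resolution is Bragg's law, d = λ / (2 sin θ): for a fixed wavelength, a larger θmax gives a smaller (better) resolution limit. The sketch below assumes θ is the Bragg angle in degrees (half the scattering angle 2θ), which is how CIF items such as _diffrn_reflns_theta_max report it; the function name is hypothetical.

```python
import math


def d_min_from_theta_max(theta_max_deg: float, wavelength_angstrom: float) -> float:
    """Resolution limit d_min (Å) from Bragg's law, d = lambda / (2 sin theta).

    theta_max_deg: Bragg angle in degrees (half of the scattering angle 2-theta).
    """
    theta = math.radians(theta_max_deg)
    if not 0.0 < theta <= math.pi / 2:
        raise ValueError("theta_max must lie in (0, 90] degrees")
    return wavelength_angstrom / (2.0 * math.sin(theta))
```

With Mo Kα radiation (λ = 0.71073 Å) and θmax = 25°, the sketch gives d_min ≈ 0.84 Å, the kind of value typical of small-molecule data sets.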

These metrics focus primarily on the technical aspects of refinement rather than "chemical correctness," which can be assessed using additional tools like the CCDC's Mogul software for evaluating molecular geometry [25]. The IUCr's checkCIF service provides automated validation checks that are often required prior to publication, though structure validation remains an evolving field with ongoing discussions about metric applicability and weaknesses [25].

Experimental Protocols for Structural Validation

The validation workflow for crystallographic structures follows a systematic protocol that begins with data collection and continues through the entire refinement process. The following diagram illustrates this comprehensive validation workflow:

Diagram 1: Structural Validation Workflow

As outlined in the workflow, validation is not a single step but an iterative process integrated throughout structure determination. Refinement software provides continuous quality assessment through indicators like the color-coded GUI of Olex2, allowing crystallographers to identify and address issues during model building [25]. The final validation stage occurs during deposition to the PDB, where structures undergo automated checking against both experimental data and geometric expectations [23].

For integrative structural biology methods, which combine data from multiple experimental and computational approaches, specialized validation frameworks have been developed. The PDB-IHM system provides standards and software tools specifically designed for validating integrative structures that span diverse spatiotemporal scales and conformational states [23]. These mechanisms validate structures based on the experimental data underpinning them, ensuring reliability even for complex macromolecular assemblies determined through hybrid approaches.

The Scientist's Toolkit: Essential Research Reagent Solutions

Structural biologists rely on a sophisticated toolkit of databases, software, and computational resources to perform validation and analysis of crystallographic structures. These resources have been developed and refined through community-wide efforts to establish standards and best practices.

Table 2: Essential Research Reagent Solutions for Structural Validation

| Tool/Resource | Type | Primary Function | Access |
|---|---|---|---|
| wwPDB Validation Server | Web Service | Comprehensive validation during deposition | Online |
| checkCIF (IUCr) | Web Service | Identification of potential issues in CIFs | Online |
| Mogul (CCDC) | Software | Assessment of molecular geometry | Licensed |
| PISCES Server | Web Service | Sequence culling and selection | Online |
| FATCAT | Web Service | Flexible structure alignment | Online |
| MMseqs2 | Algorithm | Sequence clustering and alignment | Open Source |
| Good Tables | Library | Validation of tabular data | Open Source |

The wwPDB consortium provides a unified deposition system that ensures structures are consistently validated and mirrored worldwide within 24 hours of release [24]. This global infrastructure is maintained through regional data centers (RCSB PDB in the United States, PDBe in Europe, PDBj in Japan), each providing unique portals, visualization tools, and database integrations tailored to their respective communities while maintaining consistent validation standards [24].

Specialized software like the CCDC's Mogul enables assessment of the "chemical correctness" of a structure by comparing its molecular geometry against knowledge-based expectations derived from the CSD [25]. For sequence-level analyses, tools like the PISCES server automate the removal of redundant sequences above a chosen identity threshold while keeping the highest-quality structure from each group, which is essential for many structural bioinformatics analyses [24].

Impact of Validation on Scientific Communities and Applications

Enabling Drug Discovery and Development

Rigorous validation of PDB structures has profoundly impacted drug discovery, particularly in oncology. A recent analysis revealed that open access to validated three-dimensional biostructure information from the PDB facilitated the discovery and development of 100% of the 34 new low molecular weight, protein-targeted antineoplastic agents approved by the US FDA between 2019 and 2023 [26]. These drugs target diverse protein classes including kinases, enzymes, nuclear hormone receptors, and transcription factors.

The median time between the first PDB deposition of each drug target structure and FDA approval of the corresponding drug exceeded 17 years, demonstrating how validated structural information provides a foundation for long-term drug development pipelines [26]. For approximately 74% (25/34) of these new molecular entities, validated PDB structures reveal at atomic-level precision how the drug binds to its target protein, providing crucial insights for understanding mechanism of action and optimizing therapeutic efficacy [26].

The relationship between validated structures and drug discovery is illustrated in the following pathway:

Diagram 2: From Structure to Drug Pathway

Supporting Methodological Advances and Community Reuse

Validation enables the collective use of structural data to discover new knowledge that "transcends the results of individual experiments," fulfilling the original vision of structural databases [25]. Throughout the Cambridge Structural Database's 60-year history, validation has facilitated numerous discoveries through data mining, including proof of hydrogen bonds, insights into ring geometry, and the characterization of Bürgi-Dunitz angles [25].

The essential role of validation in promoting data reuse extends beyond structural biology to other scientific domains. At eLife, validation of shared research data using tools like Good Tables, which checks both structural integrity and adherence to published schema, has been crucial for improving data reusability [27]. Analyses revealed that researchers often present data for visual inspection rather than computational reuse, for example using colored cells to separate data groups, a formatting choice that hinders machine readability [27]. Validation identified these issues, allowing journals to educate researchers about preparing data in "machine-friendly" ways that facilitate reproduction and comparison of results [27].

Validation studies also play a critical role in establishing the credibility of predictive methods across scientific disciplines. In toxicology, validation frameworks help establish confidence in new approaches based on in vitro methods and computational modeling, though the multiplicity of assessment frameworks can sometimes hinder cross-disciplinary acceptance [28]. Method-agnostic credibility factors have been proposed to facilitate communication between method developers and users, ultimately increasing acceptance of predictive approaches in regulatory contexts [28].

Comparative Analysis of Validation Approaches Across Disciplines

Community-Engaged Validation in Public Health Research

While structural biology has developed sophisticated technical validation metrics, other fields employ complementary approaches that emphasize community engagement. In public health research, validation often involves returning findings to community participants for feedback, which serves to check researcher interpretation, support relationship building, and empower communities [29]. This approach is particularly valuable for ensuring research reflects the realities of those it aims to serve.

The Community Engagement for Pandemic Preparedness (CEPP) project exemplifies this approach through validation workshops where findings were presented to participants using fictional stories representative of overall findings [29]. This methodology made research accessible and relatable, encouraging open dialogue across diverse groups. Participants noted how digital exclusion aspects "were on point" with their experiences, while also identifying missing elements like the pandemic's impact on youth mental health—leading to a more nuanced understanding of the data [29].

Validation of Predictive Computational Models

The emergence of AI/ML tools for protein structure prediction represents a seismic shift in structural biology, and their validation against experimental data is crucial for establishing reliability. AlphaFold 2 has revolutionized protein structure prediction, yet systematic evaluations reveal specific limitations in capturing biologically relevant states [30]. For nuclear receptors, AlphaFold 2 shows high accuracy for stable conformations but misses the full spectrum of biologically relevant states, systematically underestimating ligand-binding pocket volumes by 8.4% on average and capturing only single conformational states where experimental structures show functionally important asymmetry [30].

Recent advances in protein complex prediction, such as DeepSCFold, demonstrate how validation against experimental benchmarks drives methodological improvements. DeepSCFold uses sequence-derived structure complementarity to improve protein complex modeling, achieving an 11.6% improvement in TM-score compared to AlphaFold-Multimer on CASP15 targets [31]. For antibody-antigen complexes from the SAbDab database, it enhances prediction success rates for binding interfaces by 24.7% over AlphaFold-Multimer [31]. These improvements are validated through rigorous benchmarking against experimental structures, highlighting how the PDB's repository of validated structures enables advancement of predictive algorithms.

Validation serves as the critical bridge between raw structural data and scientific knowledge that can be reliably used by the broader research community. For journals, robust validation processes ensure the integrity of published findings and enable reproducibility—cornerstones of scientific credibility. For researchers and drug development professionals, validated structures provide a trustworthy foundation for designing experiments, interpreting results, and developing therapeutics. The continued evolution of validation methodologies—from technical metric development to community engagement approaches—will further enhance the utility of structural data across scientific disciplines, ultimately accelerating the translation of structural insights into practical applications that benefit society.

Decoding the Report: A Step-by-Step Guide to Key Crystallographic Validation Metrics

In the field of structural biology, the assessment of macromolecular structure quality is paramount for ensuring biological validity and reliability in downstream applications, including drug discovery. The Protein Data Bank (PDB) serves as the central repository for experimentally determined structures, and the worldwide PDB (wwPDB) has established standardized validation protocols to assess structure quality. For structures determined by X-ray crystallography, three fundamental global quality indicators provide the initial assessment of model reliability: resolution, R-work, and R-free. These metrics offer researchers a quantitative foundation for evaluating the precision of atomic coordinates, the agreement between the model and experimental data, and the potential for overfitting during refinement. Understanding the interpretation, interrelationships, and limitations of these indicators is essential for structural biologists, computational researchers, and drug development professionals who rely on these models for mechanistic insights and structure-based drug design. This guide provides a comprehensive comparison of these essential indicators, detailing their theoretical basis, practical interpretation, and role within the broader context of PDB validation reports.

Defining the Global Quality Indicators

Resolution

Resolution, measured in Ångströms (Å), is the most frequently cited indicator of structural quality. It represents the smallest distance between two points in the crystal that can be distinguished as separate features in the electron density map. In practical terms, it sets the theoretical limit on the precision of a structural model. Higher resolution (indicated by a lower numerical value) provides finer atomic detail, allowing for more confident placement of atoms, discrimination of alternative conformations, and identification of water molecules and ions. Conventions vary between sources, but one common scheme describes structures at resolutions better than 1.5 Å as "atomic," 1.5-2.5 Å as "high," 2.5-3.5 Å as "medium," and worse than 3.5 Å as "low." In cryo-electron microscopy (cryo-EM), resolution is estimated differently, using the Fourier Shell Correlation (FSC) between two independently reconstructed half-maps [24].

R-work and R-free

R-work (also called the R-factor) and R-free are complementary measures that quantify how well the atomic model explains the experimental X-ray diffraction data.

- R-work is calculated as $R_{\text{work}} = \dfrac{\sum_{hkl} \big|\, |F_{\text{obs}}| - |F_{\text{calc}}| \,\big|}{\sum_{hkl} |F_{\text{obs}}|}$, where $F_{\text{obs}}$ are the observed structure-factor amplitudes from the experimental data and $F_{\text{calc}}$ are the calculated structure-factor amplitudes derived from the model. It measures the agreement between the model and the data used in refinement.

- R-free serves as a safeguard against overfitting. It is calculated identically to R-work but uses a small subset of the reflection data (typically 5-10%) that was excluded from the refinement process. A significant divergence between R-work and R-free suggests that the model may be over-parameterized, fitting noise in the working data set rather than the true signal [24].

Both R-values are reported as decimals or percentages, with lower values indicating better agreement. They are strongly correlated with the resolution of the diffraction data [32].
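The relationship between the two R-values can be sketched with the formula above and a fixed test-set flag per reflection. This is a minimal illustration under stated assumptions: the calculated amplitudes are taken to be already on the same scale as the observed ones (refinement programs fit this scale), the 5% random free-set selection is one common choice, and all function names are hypothetical.

```python
import random


def choose_free_set(n_reflections: int, fraction: float = 0.05, seed: int = 0) -> list[bool]:
    """Randomly flag about `fraction` of reflections as the R-free test set.

    The flags are chosen once, before refinement starts, and never change.
    """
    rng = random.Random(seed)
    return [rng.random() < fraction for _ in range(n_reflections)]


def r_factor(f_obs: list[float], f_calc: list[float]) -> float:
    """sum(| |Fobs| - |Fcalc| |) / sum(|Fobs|), assuming Fcalc is on the Fobs scale."""
    numerator = sum(abs(abs(fo) - abs(fc)) for fo, fc in zip(f_obs, f_calc))
    denominator = sum(abs(fo) for fo in f_obs)
    return numerator / denominator


def r_work_r_free(f_obs: list[float], f_calc: list[float],
                  is_test: list[bool]) -> tuple[float, float]:
    """Split reflections by their test-set flag and compute both R-values."""
    work = [(fo, fc) for fo, fc, t in zip(f_obs, f_calc, is_test) if not t]
    free = [(fo, fc) for fo, fc, t in zip(f_obs, f_calc, is_test) if t]
    r_work = r_factor([fo for fo, _ in work], [fc for _, fc in work])
    r_free = r_factor([fo for fo, _ in free], [fc for _, fc in free])
    return r_work, r_free
```

For instance, with observed amplitudes [100, 80, 60, 40, 20], calculated amplitudes [90, 85, 60, 30, 25], and only the fourth reflection flagged as test data, `r_work_r_free` returns roughly (0.077, 0.25). A gap this large between R-work and R-free would be the overfitting warning sign described above.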

Table 1: Summary of Key Global Quality Indicators

| Quality Indicator | Definition | Interpretation (Typical Values for Good Structures) | Primary Function |
|---|---|---|---|
| Resolution | The smallest distinguishable distance in the electron density map. | < 2.0 Å (High); 2.0-3.0 Å (Medium); > 3.0 Å (Low) [24] | Sets the theoretical limit of model precision. |
| R-work | Agreement between the model and the diffraction data used in refinement. | Should be close to R-free. A value < 0.25 is typical for high-resolution structures. | Measures model fit to the refinement data. |
| R-free | Agreement between the model and a subset of data excluded from refinement. | Should be close to R-work (difference typically < 0.05). A value < 0.30 is typical for high-resolution structures [32]. | Guards against overfitting; a key cross-validation metric. |

Experimental Protocols for Structure Determination and Validation

The journey from protein crystal to a validated PDB entry follows a rigorous pipeline. The following diagram illustrates the key stages of this process, highlighting where global quality indicators are calculated and assessed.

Diagram 1: The workflow of an X-ray crystallographic structure determination, showing the generation of key quality indicators.

The Structure Determination Workflow

The process begins with the growth of a protein crystal and the collection of X-ray diffraction data. The resolution of the structure is determined at this initial stage from the quality and extent of the diffraction pattern. Following data collection, the "phase problem" is solved, often using molecular replacement (as is common for kinase families like PKA) or experimental phasing methods [32]. The initial model then undergoes cycles of iterative model refinement, where atomic coordinates and B-factors are adjusted to improve the fit between the calculated (Fcalc) and observed (Fobs) structure factors. Refinement programs typically optimize a maximum-likelihood or least-squares target rather than R-work itself, but an improving fit drives R-work down. Critically, the R-free value is calculated using a test set of reflections that is excluded from these refinement calculations from the very beginning. The stability and reasonableness of the R-free value throughout refinement is a key check for the model's validity. Upon completion, the structure, along with its primary experimental data (structure factors), is deposited into the PDB, where it undergoes automated wwPDB validation [6]. This process generates a validation report that provides a comprehensive assessment of model quality, including the global indicators and detailed geometric analyses.

Advanced Refinement and Validation Protocols

Beyond standard refinement, several advanced protocols exist to improve model quality and extract more information from the experimental data.

- PDB-REDO Pipeline: This is an automated method for the re-refinement of PDB structures using a uniform protocol. A recent analysis of cAMP-dependent protein kinase (PKA) structures found that PDB-REDO significantly improved some older structures, although its success was generally limited for more modern, high-quality deposits [32]. This highlights that standardized re-refinement can be beneficial but is not a panacea.

- Ensemble Refinement: This method addresses the limitation of single "snapshot" models by using molecular dynamics simulations with time-averaged X-ray restraints to generate an ensemble of structures. This approach better accounts for protein dynamics and disorder, often leading to a better fit to the data, as evidenced by reduced Rfree values (reductions of 0.3–4.9% were reported) [33]. This technique can reveal functionally important "molten cores" and dynamics that are obscured in single-conformer models.

- Radiation Damage Metrics (Bnet): Specific radiation damage from X-ray exposure can induce structural and chemical changes. The Bnet metric helps quantify this damage by comparing the B-factors of damage-prone atoms (like aspartate/glutamate carboxyl groups) to all other atoms in a similar local environment [34]. This is an example of a more nuanced quality check that goes beyond global indicators.

Researchers have access to a powerful suite of databases and software tools for assessing and analyzing structural quality.

Table 2: Key Research Reagent Solutions for Structural Validation

| Resource Name | Type | Primary Function in Quality Assessment |
|---|---|---|
| wwPDB Validation Server [6] | Database/Report | Provides standardized validation reports for all PDB entries, featuring the quality "slider" for global and geometric indicators. |
| PDB-REDO [32] | Software Pipeline | Automatically re-refines X-ray structures to improve model quality and identify potential issues in the original deposition. |
| MolProbity [14] [21] | Software/Service | Provides all-atom contact analysis, identifying steric clashes, rotamer outliers, and Ramachandran plot quality. |
| Phenix [35] | Software Suite | A comprehensive package for macromolecular structure determination, including refinement tools that output R-work and R-free. |
| KLIFS Database [32] | Specialized Database | A kinase-specific database that, like others, can be used to assess the relative quality of structures within a specific protein family. |

Comparative Analysis and Practical Guidance for Researchers

Global quality indicators must be interpreted in concert, not in isolation. A high-resolution structure with a poor R-free value may be over-refined, while a low-resolution structure with excellent R-values might still lack the detail needed for specific analyses like drug design. The wwPDB validation reports synthesize these metrics into an accessible format, providing percentiles that show how a structure compares to all other same-method structures in the PDB archive [6] [21].

When planning a structural bioinformatics project, it is crucial to define biological selection criteria and then determine how you will quality control your data [24]. For instance, if your analysis requires precise side-chain positioning for a kinase, you might filter for PKA structures with resolutions better than 2.5 Å and consult the top-quality structures identified in specialized analyses [32]. Be aware that legacy structures, many deposited without structure factors, may have less reliable quality metrics [32]. Furthermore, always consider the fit of key regions, like active sites or ligand-binding pockets, to the electron density, as global indicators can mask local errors.
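A selection step like this can be sketched as a simple filter over locally held metadata. `StructureRecord`, the `ENTRY_*` identifiers, and the R-free ceiling of 0.30 are hypothetical choices for illustration; in practice the resolution, R-free, and structure-factor availability would come from PDB metadata, and the density fit of the active site would still need to be checked separately.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StructureRecord:
    """Hypothetical, locally held summary of one PDB entry."""
    entry_id: str
    resolution: Optional[float]  # Å; None if not reported
    r_free: Optional[float]      # None if not reported
    has_structure_factors: bool


def select_for_sidechain_analysis(records: list[StructureRecord],
                                  max_resolution: float = 2.5,
                                  max_r_free: float = 0.30) -> list[StructureRecord]:
    """Keep entries better than `max_resolution` with acceptable R-free and deposited data,
    ordered best resolution first (ties broken by lower R-free)."""
    keep = [r for r in records
            if r.resolution is not None and r.resolution < max_resolution
            and r.r_free is not None and r.r_free <= max_r_free
            and r.has_structure_factors]  # legacy entries without data are skipped
    return sorted(keep, key=lambda r: (r.resolution, r.r_free))
```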

In summary, resolution, R-work, and R-free form the foundational triad for assessing the global quality of crystallographic models. A rigorous understanding of these indicators, complemented by the use of modern validation resources and specialized databases, empowers researchers to select the most reliable structural data, thereby ensuring the robustness of their scientific conclusions in structural biology and drug development.

In the field of structural biology, the validation of three-dimensional atomic models against experimental crystallographic data is fundamental to ensuring scientific reliability. Real-space validation methods provide a residue-by-residue and ligand-by-ligand assessment of how well an atomic structure agrees with the experimental electron density map. For researchers, drug developers, and scientists relying on Protein Data Bank (PDB) structures, understanding these metrics is crucial for distinguishing well-determined regions from potentially unreliable areas in molecular models. The worldwide PDB (wwPDB) validation system employs these metrics in its official validation reports to provide a standardized assessment of structure quality [19] [36]. These reports are increasingly required by major scientific journals during manuscript submission and play a vital role in structural bioinformatics analyses and structure-guided drug discovery efforts [24] [19].

Among the various validation metrics, the Real-Space Correlation Coefficient (RSCC) and Real-Space R-Factor (RSR) have emerged as cornerstone measures for evaluating local fit to electron density. Their importance is particularly evident in ligand binding site analysis, where accurate modeling is often critical for understanding biological function and informing drug design [37]. This guide provides a comprehensive comparison of these essential metrics, detailing their calculation, interpretation, and practical application for assessing the quality of crystallographic structures.

Core Metrics: RSCC and RSR Explained

Fundamental Principles and Calculations

Real-Space Correlation Coefficient (RSCC) quantifies the linear correlation between the experimental electron density (ρexp) and the density calculated from the atomic model (ρcalc) within a specific region of the structure, typically around a residue or ligand [38]. It ranges from -1 to 1, and values closer to 1 indicate stronger agreement between the model and the experimental data. The calculation samples both density maps at the same grid points within a defined volume surrounding the atom or residue of interest:

$$\mathrm{RSCC} = \frac{\sum (\rho_{\mathrm{exp}} - \mu_{\mathrm{exp}})(\rho_{\mathrm{calc}} - \mu_{\mathrm{calc}})}{\sqrt{\sum (\rho_{\mathrm{exp}} - \mu_{\mathrm{exp}})^{2} \, \sum (\rho_{\mathrm{calc}} - \mu_{\mathrm{calc}})^{2}}}$$

Here the sums run over the sampled grid points. μexp and μcalc are the mean densities of the experimental and calculated maps, respectively, within the evaluated region.
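The RSCC formula translates directly into code. This is a minimal sketch in plain Python. It assumes the experimental and calculated densities have already been sampled at the same grid points within the region of interest. Map reading, masking and scaling are not shown.

```python
import math


def rscc(rho_exp, rho_calc):
    """Real-space correlation coefficient between two matched lists of density samples."""
    if len(rho_exp) != len(rho_calc) or not rho_exp:
        raise ValueError("need two non-empty samples of equal length")
    mu_exp = sum(rho_exp) / len(rho_exp)     # mean experimental density in the region
    mu_calc = sum(rho_calc) / len(rho_calc)  # mean calculated density in the region
    d_exp = [x - mu_exp for x in rho_exp]
    d_calc = [y - mu_calc for y in rho_calc]
    num = sum(a * b for a, b in zip(d_exp, d_calc))
    den = math.sqrt(sum(a * a for a in d_exp) * sum(b * b for b in d_calc))
    if den == 0:
        raise ValueError("density is constant over the region; RSCC is undefined")
    return num / den


# Toy samples for one residue (values are invented for illustration).
exp_samples = [0.10, 0.55, 0.90, 0.40]
calc_samples = [0.15, 0.60, 0.80, 0.45]
print(round(rscc(exp_samples, calc_samples), 3))
```

The mean densities are subtracted before the products are summed. As a result, RSCC ignores a uniform offset between the maps and rewards matching shape, which is why Table 1 describes it as sensitive to shape correspondence.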

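Real-Space R-Factor (RSR) measures the absolute difference between the experimental and calculated density maps. The difference is summed over the grid points of the region and normalized by the summed magnitude of the two densities [16]. Unlike RSCC, RSR measures discrepancy rather than correlation, so lower values indicate better fit. Because RSR compares density magnitudes directly, the calculated density must first be placed on the same scale as the experimental map. The conventional form is:

$$\mathrm{RSR} = \frac{\sum |\rho_{\mathrm{exp}} - \rho_{\mathrm{calc}}|}{\sum |\rho_{\mathrm{exp}} + \rho_{\mathrm{calc}}|}$$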

In practice, both metrics are calculated for each residue or ligand in a structure, providing a localized assessment of model quality that complements global statistics like R-work and R-free [16] [36].
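RSR can be sketched the same way and applied residue by residue. This minimal sketch uses the conventional sum|exp - calc| / sum|exp + calc| form. It assumes the calculated density has already been scaled to the experimental map. The residue labels and density samples are hypothetical.

```python
def rsr(rho_exp, rho_calc):
    """Real-space R-factor: sum|exp - calc| / sum|exp + calc| over matched grid samples."""
    if len(rho_exp) != len(rho_calc) or not rho_exp:
        raise ValueError("need two non-empty samples of equal length")
    num = sum(abs(x - y) for x, y in zip(rho_exp, rho_calc))
    den = sum(abs(x + y) for x, y in zip(rho_exp, rho_calc))
    if den == 0:
        raise ValueError("zero total density; RSR is undefined")
    return num / den


# Hypothetical per-residue samples: (experimental, scaled calculated) density values.
samples = {
    "A/45/LYS": ([0.8, 1.2, 0.9], [0.9, 1.1, 1.0]),
    "A/46/GLU": ([0.1, 0.3, 0.2], [0.9, 1.0, 0.8]),  # model predicts density the map lacks
}
for residue, (e, c) in samples.items():
    print(residue, round(rsr(e, c), 3))  # the second residue exceeds the 0.4 threshold
```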

Comparative Analysis of RSCC and RSR

Table 1: Direct Comparison of RSCC and RSR Metrics

| Feature | RSCC (Real-Space Correlation Coefficient) | RSR (Real-Space R-Factor) |
|---|---|---|
| Fundamental Principle | Measures linear correlation between experimental and calculated density | Measures summed absolute difference between densities, normalized by their summed magnitude |
| Value Range | -1 to 1 | 0 to 1 (theoretical range), typically ~0.05-0.6 in practice |
| Interpretation | Higher values indicate better fit (closer to 1.0) | Lower values indicate better fit (closer to 0.0) |
| Sensitivity | More sensitive to shape correspondence | More sensitive to density magnitude differences |
| Common Thresholds | Excellent: >0.9; Good: 0.8-0.9; Poor: <0.8 [38] | Excellent: <0.2; Problematic: >0.4 [19] |
| Outlier Identification | RSCC <0.8 often flags concerning regions [16] | RSR >0.4 used to identify poor fit [19] |
| Standardization | Usually reported as an absolute value | Often converted to a Z-score (RSRZ) for comparison across resolutions [36] |
| Ligand Validation | Combined with RSR for comprehensive ligand assessment [19] | Used with RSCC for ligand electron density fit |

The wwPDB validation pipeline employs both metrics in tandem to provide a comprehensive picture of local structure quality. Since 2017, the validation reports have used a combination of RSR > 0.4 and RSCC < 0.8 to identify ligands that do not fit the electron density well, replacing the previously used LLDF statistic which produced substantial false positives and negatives [37] [19]. This dual-metric approach provides a more robust assessment of ligand fit, which is particularly important for structure-guided drug discovery where accurate ligand modeling is critical.
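The dual criterion can be expressed as a small function. In this sketch, a ligand counts as a poor fit only when both RSCC < 0.8 and RSR > 0.4 hold, matching the combination described above. The intermediate "borderline" tier, for ligands that fail just one criterion, is an illustrative addition and not a wwPDB category. The ligand names and values are hypothetical.

```python
def ligand_fit_category(rscc, rsr, rscc_min=0.8, rsr_max=0.4):
    """Classify ligand density fit from RSCC and RSR using the dual thresholds."""
    low_correlation = rscc < rscc_min
    high_r_factor = rsr > rsr_max
    if low_correlation and high_r_factor:
        return "poor"        # both poor-fit criteria are met
    if low_correlation or high_r_factor:
        return "borderline"  # illustrative extra tier: only one criterion is met
    return "ok"


# Hypothetical ligands: name -> (RSCC, RSR)
ligands = {"LIG_A": (0.93, 0.15), "LIG_B": (0.76, 0.33), "LIG_C": (0.62, 0.51)}
for name, (c, r) in ligands.items():
    print(name, ligand_fit_category(c, r))
# LIG_A ok
# LIG_B borderline
# LIG_C poor
```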

Experimental Protocols and Workflows

Implementation in wwPDB Validation Pipeline

The calculation of RSCC and RSR within the wwPDB validation infrastructure follows a standardized workflow that ensures consistent application across all deposited structures. The process begins when a depositor submits both atomic coordinates and structure factors to the PDB. The validation pipeline processes these data through multiple steps to generate the comprehensive validation report that accompanies each PDB entry.

Diagram 1: Workflow for RSCC/RSR calculation in wwPDB validation. The pipeline processes experimental data and coordinates through standardized steps to generate local fit metrics.

The calculation involves sampling the experimental and calculated electron density maps on a grid surrounding each residue or ligand. The specific volume considered is typically determined by a contour level that encompasses the region where the atomic model is expected to contribute meaningfully to the density. The wwPDB system utilizes the DCC (Density-Count-Correlation) software for these calculations, which has been validated against other community-standard tools [36] [38]. For ligands, additional validation using the Mogul program from the Cambridge Crystallographic Data Centre (CCDC) assesses geometric features against small-molecule crystal structure data, providing complementary information to the electron density fit metrics [37] [36].
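The grid-sampling step can be illustrated with a simplified region definition. This sketch selects every grid point within a fixed radius of any atom. The fixed-radius sphere is an assumption made for clarity. It is not necessarily how DCC defines the evaluated volume, and the brute-force loop is meant for readability, not speed.

```python
import itertools
import math


def grid_points_near_atoms(atoms, origin, spacing, shape, radius):
    """Return grid indices (i, j, k) lying within `radius` Å of any atom.

    atoms: list of (x, y, z) coordinates in Å
    origin: Cartesian position of grid index (0, 0, 0)
    spacing: grid step in Å (assumed equal along all axes)
    shape: number of grid points along each axis (nx, ny, nz)
    """
    selected = []
    for i, j, k in itertools.product(*(range(n) for n in shape)):
        point = (origin[0] + i * spacing, origin[1] + j * spacing, origin[2] + k * spacing)
        if any(math.dist(point, atom) <= radius for atom in atoms):
            selected.append((i, j, k))
    return selected


def sample(density, indices):
    """Read density values (a dict keyed by grid index) at the selected points."""
    return [density[idx] for idx in indices]


idx = grid_points_near_atoms([(1.0, 1.0, 1.0)], origin=(0.0, 0.0, 0.0),
                             spacing=1.0, shape=(3, 3, 3), radius=1.0)
print(len(idx))  # 7: the atom's own grid point plus its six face neighbours
toy_map = {(i, j, k): 1.0 for i in range(3) for j in range(3) for k in range(3)}
values = sample(toy_map, idx)
```

Sampling the experimental and calculated maps with the same index list gives the matched lists that the RSCC and RSR formulas require.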

Practical Application in Structure Analysis

For researchers analyzing specific structures, several tools and resources are available for calculating and visualizing RSCC and RSR values:

- wwPDB Validation Reports: Provide standardized RSCC and RSR values for all residues and ligands in publicly available PDB structures [16] [19]

- PDB-REDO/density-fitness: A specialized application for calculating density statistics including RSR, RSCC, and related metrics [39]

- PDBe website: Offers interactive visualization of electron density alongside RSCC/RSR values for individual ligands and residues [37]

- ValTrendsDB: Allows analysis of validation metric distributions across multiple structures, though it currently averages ligand metrics per PDB entry [37]

When performing structural bioinformatics analyses involving multiple structures, researchers should extract and compare these real-space validation metrics to identify the most reliable regions or structures for their specific research questions [24]. This is particularly important for studies focusing on ligand-binding sites, conformational changes, or catalytic residues, where local model accuracy is crucial for valid biological interpretations.
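Per-residue metrics can be pulled from a downloaded validation report for this kind of comparison. This sketch parses an XML report with the standard library. The element name `ModelledSubgroup` and the attributes `rscc`, `rsr` and `rsrz` are assumptions about the wwPDB validation XML layout, so check them against the current schema before relying on them. The excerpt below is hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical excerpt; element and attribute names are assumed, not verified.
XML = """<wwPDB-validation-information>
  <ModelledSubgroup chain="A" resnum="45" resname="LYS" rscc="0.93" rsr="0.12" rsrz="-0.4"/>
  <ModelledSubgroup chain="A" resnum="46" resname="GLU" rscc="0.71" rsr="0.38" rsrz="2.6"/>
  <ModelledSubgroup chain="B" resnum="301" resname="LIG" rscc="0.78" rsr="0.45"/>
  <ModelledSubgroup chain="A" resnum="47" resname="GLY"/>
</wwPDB-validation-information>"""


def _num(elem, name):
    """Return an attribute as float, or None when it is absent."""
    value = elem.get(name)
    return float(value) if value is not None else None


def real_space_metrics(xml_text):
    """Collect RSCC, RSR and RSRZ for every subgroup that reports RSCC."""
    rows = []
    for sg in ET.fromstring(xml_text).iter("ModelledSubgroup"):
        if sg.get("rscc") is None:
            continue  # no density-fit metrics for this subgroup
        rows.append({
            "residue": f'{sg.get("chain")}/{sg.get("resnum")}/{sg.get("resname")}',
            "rscc": _num(sg, "rscc"),
            "rsr": _num(sg, "rsr"),
            "rsrz": _num(sg, "rsrz"),
        })
    return rows


rows = real_space_metrics(XML)
rsrz_outliers = [r["residue"] for r in rows if r["rsrz"] is not None and r["rsrz"] > 2.0]
print(rsrz_outliers)  # ['A/46/GLU']
```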

Quantitative Data and Interpretation Guidelines

Statistical Distributions and Threshold Values

Large-scale analysis of over 100 million individual amino acid residues across approximately 150,000 PDB crystal structures has established robust statistical distributions for RSCC values [38]. These distributions enable the identification of statistically significant outliers that may indicate problematic regions in structural models.

Table 2: RSCC Value Interpretation and Statistical Guidance

| RSCC Range | Interpretation | Recommended Action | Statistical Prevalence |
|---|---|---|---|
| > 0.95 | Excellent fit | High confidence in atomic coordinates | Top quartile of structures |
| 0.90 - 0.95 | Very good fit | Reliable for most analyses | Better than average |
| 0.80 - 0.90 | Acceptable fit | Use with minor caution | Typical for well-built regions |
| 0.70 - 0.80 | Questionable fit | Scrutinize carefully, especially side chains | ~4% of residues [38] |
| < 0.70 | Poor fit | Atomic coordinates not well supported | ~1% of residues (outliers) [38] |
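Table 2 can be applied programmatically to a list of residue RSCC values. The table's ranges share their endpoints, so this sketch resolves them as an assumption: exactly 0.95 counts as "Very good", 0.90 as "Very good", 0.80 as "Acceptable" and 0.70 as "Questionable". The input values are hypothetical.

```python
from collections import Counter


def classify_rscc(value):
    """Map an RSCC value onto the Table 2 bands (boundary handling is an assumption)."""
    if value > 0.95:
        return "Excellent fit"
    if value >= 0.90:
        return "Very good fit"
    if value >= 0.80:
        return "Acceptable fit"
    if value >= 0.70:
        return "Questionable fit"
    return "Poor fit"


# Hypothetical per-residue RSCC values for one chain.
residue_rscc = [0.97, 0.93, 0.85, 0.74, 0.62, 0.91]
print(Counter(classify_rscc(v) for v in residue_rscc))
```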

For RSR values, the wwPDB validation system utilizes a threshold of RSR > 0.4 to identify problematic regions, particularly for ligand fit assessment [19]. When RSR is converted to a Z-score (RSRZ), values greater than 2.0 typically indicate regions where the fit to electron density is significantly worse than expected for structures at similar resolution [36].
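An RSRZ value is a standard Z-score of a residue's RSR against a reference distribution. In the validation reports, that reference comes from comparable residues in the archive. In this sketch, the reference list is hypothetical, and the pipeline's actual binning by residue type and resolution is not reproduced.

```python
import statistics


def rsrz(rsr_value, reference_rsr):
    """Z-score of an RSR value against reference RSRs (e.g., same residue type, similar resolution)."""
    mean = statistics.mean(reference_rsr)
    sd = statistics.stdev(reference_rsr)  # sample standard deviation
    return (rsr_value - mean) / sd


# Hypothetical reference RSR values for one residue type at similar resolution.
reference = [0.10, 0.12, 0.14, 0.16, 0.18]
z = rsrz(0.22, reference)
print(round(z, 2), "flag" if z > 2.0 else "ok")
```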

The resolution of the crystallographic data significantly influences the expected ranges for both RSCC and RSR values. Lower-resolution structures (e.g., >3.0 Å) naturally exhibit lower average RSCC values due to increased uncertainty in electron density maps, while high-resolution structures (<1.5 Å) typically show RSCC values approaching 0.95 or higher for well-ordered regions [38]. The wwPDB validation reports address this resolution dependence by providing percentile scores that compare a structure's metrics against all PDB entries determined at similar resolution [16] [36].
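The idea behind resolution-matched percentiles can be sketched as follows. First, select reference entries within a resolution window. Then compute what percentage of them the structure outperforms. The ±0.1 Å window, the tie handling and the archive values are assumptions for illustration. The wwPDB reports define their own comparison sets.

```python
def resolution_matched(archive, resolution, window=0.1):
    """Metric values from (resolution, metric) pairs within +/- window Å of `resolution`."""
    return [metric for res, metric in archive if abs(res - resolution) <= window]


def percentile_rank(value, reference, higher_is_better=True):
    """Percentage of reference values that `value` outperforms (ties count half)."""
    if not reference:
        raise ValueError("empty reference set")
    better = sum(1 for r in reference if (value > r if higher_is_better else value < r))
    ties = sum(1 for r in reference if r == value)
    return 100.0 * (better + 0.5 * ties) / len(reference)


# Hypothetical archive: (resolution in Å, percentage of RSRZ outliers); lower is better.
archive = [(2.0, 3.1), (2.04, 1.8), (1.97, 4.0), (3.0, 8.5), (2.3, 2.2)]
ref = resolution_matched(archive, 2.0)
print(ref, round(percentile_rank(2.5, ref, higher_is_better=False), 1))
```

Comparing within a resolution window keeps a 3.0 Å entry from being judged against 1.5 Å entries, and avoiding that mismatch is the purpose of the percentile scores in the reports.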

Comparison with Alternative Quality Metrics

RSCC and RSR provide complementary information to other structure quality metrics. While global statistics like R-free and resolution offer overall structure quality assessments, RSCC and RSR deliver localized validation at the residue and ligand level.

Compared to geometry-based validation metrics (clashscores, Ramachandran outliers, rotamer outliers), real-space metrics directly assess the agreement with experimental data rather than conformity to expected stereochemistry [16] [36]. This makes them particularly valuable for identifying regions where the model may be stereochemically reasonable but poorly supported by the experimental evidence.

Recent comparisons between experimental structures and AlphaFold2 predictions have shown that RSCC values are moderately correlated with predicted local distance difference test (pLDDT) scores (median correlation coefficient ~0.41) [38]. Importantly, these analyses indicate that experimentally determined structures at 3.5 Å resolution or better are generally more reliable than computational predictions and should be preferred when available [38].
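A statistic such as the median correlation can be computed by correlating per-residue RSCC with pLDDT for each structure and then taking the median across structures. This sketch shows only that arithmetic. It assumes residues are already matched between the experimental model and the prediction. The data are hypothetical, and the cited study's residue matching is not reproduced.

```python
import math
import statistics


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def median_correlation(structures):
    """Median per-structure correlation; `structures` holds (rscc_list, plddt_list) pairs."""
    return statistics.median(pearson(r, p) for r, p in structures)


# Hypothetical residue-matched RSCC and pLDDT values for three structures.
structures = [
    ([0.90, 0.80, 0.70], [90, 80, 70]),
    ([1.0, 2.0, 3.0], [3.0, 2.0, 1.0]),
    ([0.95, 0.85, 0.60, 0.90], [92, 70, 65, 88]),
]
print(round(median_correlation(structures), 2))
```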

Table 3: Key Research Reagents and Computational Tools for Real-Space Validation

| Resource Name | Type | Primary Function | Access Method |
|---|---|---|---|
| wwPDB Validation Server | Web Service | Pre-deposition validation of structures | http://validate.wwpdb.org [36] |
| PDB-REDO/density-fitness | Software | Calculate RSCC, RSR, and related density statistics | GitHub repository [39] |
| MolProbity | Software Suite | All-atom contact analysis, rotamer, and Ramachandran validation | Web service or standalone [40] [36] |
| Mogul | Database Tool | Geometric validation of ligands against CSD | Integrated in wwPDB pipeline [37] [36] |
| CCP4 Suite | Software Package | Comprehensive crystallographic computation | Program suite installation [40] |
| PDBe Ligand Page | Web Resource | Interactive visualization of ligand density fit | https://pdbe.org [37] |
| Uppsala EDS | Web Service | Electron density server for map calculation | Online database [40] |
| ValTrendsDB | Database | Analysis of validation metric trends across PDB | http://ncbr.muni.cz/ValTrendsDB [37] |

These resources represent the essential toolkit for researchers working with crystallographic structures. The wwPDB validation server is particularly valuable as it allows depositors to check their structures before formal submission and provides the same validation pipeline used for official PDB deposition [36]. For specialized analyses, the PDB-REDO/density-fitness tool offers advanced capabilities for calculating density statistics beyond the standard RSCC and RSR metrics [39].

Applications in Structural Biology and Drug Discovery

Ligand Validation and Drug Discovery Applications

The accurate assessment of ligand fit to electron density is arguably one of the most critical applications of real-space validation metrics. In structure-guided drug discovery, misleading ligand geometry or placement can derail entire research programs. The combination of RSCC and RSR has become the standard for identifying problematic ligands in the PDB [37] [19].

Analysis of ligand validation trends reveals that, while overall protein structure quality has improved since the enhanced wwPDB validation protocols were introduced, ligand quality has improved less markedly [36]. This underscores the importance of careful ligand validation in macromolecular complexes. Common issues include misidentifying buffer molecules or water networks as ligands, especially when ligands bind with partial occupancy or in low-resolution structures (worse than 3.0 Å) [37].

For drug discovery researchers, the following practical approach is recommended when analyzing ligand-containing structures:

- Consult the wwPDB validation report for RSCC and RSR values of all ligands

- Visualize the electron density around ligands using PDBe or similar tools

- Check for geometric outliers using Mogul statistics in the validation report

- Be particularly cautious with ligands showing RSCC < 0.8 and RSR > 0.4 [19]

- Consider resolution limitations: waters mediating protein-ligand interactions are rarely visible at resolutions worse than 3.0 Å [37]

Integration in Structural Bioinformatics Pipelines

For large-scale analyses across multiple structures, such as comparative studies of protein families or conformational analyses, integrating real-space validation metrics provides crucial quality filtering. Studies of kinase structures, for example, have employed these metrics to identify the most reliable structures for detailed mechanistic analysis [41].

When designing structural bioinformatics studies, researchers should:

- Define quality thresholds based on RSCC/RSR values appropriate for their specific research question

- Consider resolution-dependent expectations for real-space metrics

- Use percentile rankings from validation reports to compare structures determined at different resolutions

- Pay special attention to regions of biological interest (active sites, binding pockets, interface regions)
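Several of these recommendations can be combined into a region-focused check. This sketch reports residues in a region of interest that fail thresholds chosen for the study. It also reports residues that have no metrics, for example because they are not modelled. The residue labels, the values and the default thresholds (RSCC 0.8, RSRZ 2.0, taken from the guidance above) are hypothetical choices to adapt per project.

```python
def check_region(metrics, region, rscc_min=0.8, rsrz_max=2.0):
    """Return {residue_id: reason} for residues in `region` failing the chosen thresholds.

    metrics: dict mapping residue_id -> (rscc, rsrz)
    region: iterable of residue_ids (e.g., active-site or binding-pocket residues)
    """
    flagged = {}
    for rid in region:
        if rid not in metrics:
            flagged[rid] = "not modelled / no metrics"
            continue
        rscc, rsrz = metrics[rid]
        reasons = []
        if rscc < rscc_min:
            reasons.append(f"RSCC {rscc:.2f} < {rscc_min}")
        if rsrz > rsrz_max:
            reasons.append(f"RSRZ {rsrz:.1f} > {rsrz_max}")
        if reasons:
            flagged[rid] = "; ".join(reasons)
    return flagged


# Hypothetical metrics and binding-site definition.
metrics = {"A/72/LYS": (0.94, -0.3), "A/91/GLU": (0.76, 2.4), "A/184/ASP": (0.88, 1.1)}
site = ["A/72/LYS", "A/91/GLU", "A/184/ASP", "A/185/PHE"]
for rid, reason in check_region(metrics, site).items():
    print(rid, reason)
```

A structure can pass global filters and still fail this local check. The region-level check is the one that matters for mechanistic or ligand-binding conclusions.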

The systematic application of these real-space validation metrics across the PDB has revealed that structures determined more recently generally show better quality metrics, though even some older structures remain remarkably accurate in their well-ordered regions [41] [36].

Real-space validation metrics, particularly RSCC and RSR, provide indispensable tools for assessing the local fit of atomic models to experimental electron density. Their implementation in the wwPDB validation pipeline has standardized quality assessment across the archive, enabling researchers to identify reliable regions in crystallographic structures and avoid potentially misleading areas. For the structural biology and drug discovery community, understanding and applying these metrics is essential for rigorous structural analysis. As structural bioinformatics continues to evolve, with increasing integration of experimental and computational approaches, real-space validation will remain fundamental to ensuring the reliability of structural insights guiding biological discovery and therapeutic development.