Protein Structure Validation: Essential Tools and Metrics for Accurate Biomedical Research

Abstract

This article provides a comprehensive guide to protein structure validation for researchers and drug development professionals. It covers the foundational principles of why structure validation is critical for reliable biomedical conclusions and explores the key methodologies and tools, from established suites like MolProbity to AI-era metrics like pLDDT and ipTM. The content details practical strategies for troubleshooting common model errors and optimizing predictions from tools like AlphaFold and ColabFold. Finally, it offers a comparative analysis of validation approaches for different structure types, including monomers, complexes, and challenging targets like antibodies, empowering scientists to critically assess and confidently use structural models in their work.

Why Protein Structure Validation Matters: Ensuring Reliability in Biomedical Data

The Critical Role of Accurate Structures in Drug Design and Functional Analysis

The field of structural biology has undergone a revolutionary transformation, enabling unprecedented advances in drug discovery and functional protein analysis. Accurate three-dimensional protein structures have become indispensable tools for understanding disease mechanisms, identifying druggable targets, and designing novel therapeutics with enhanced specificity and safety profiles. The emergence of artificial intelligence (AI)-driven prediction tools, particularly AlphaFold, has dramatically expanded the structural coverage of the proteome while introducing new considerations for validation and application in pharmaceutical development. This guide provides an objective comparison of current structural determination and validation technologies, examining their performance characteristics, limitations, and optimal applications within drug discovery pipelines. As the structural biology landscape evolves rapidly, with AlphaFold 3 and RoseTTAFold All-Atom now enabling predictions of molecular complexes beyond single proteins, researchers require clear frameworks for selecting appropriate methodologies based on their specific research objectives and validation requirements [1].

Comparative Analysis of Protein Structure Determination Methods

The accurate determination of protein structures relies on both experimental and computational approaches, each with distinct strengths, limitations, and optimal use cases. The following comparison summarizes the key performance metrics of major structural biology techniques used in pharmaceutical research and development.

Table 1: Performance Comparison of Major Protein Structure Determination Methods

| Method | Typical Resolution | Throughput | Key Advantages | Major Limitations |
|---|---|---|---|---|
| X-ray Crystallography | 1.5-3.0 Å | Low-Medium | High resolution; Direct atomic visualization | Requires crystallization; Static snapshot |
| Cryo-Electron Microscopy | 2.5-4.5 Å | Low-Medium | No crystallization needed; Handles large complexes | Expensive equipment; Complex sample prep |
| NMR Spectroscopy | Atomic (solution state) | Low | Studies dynamics in solution; Atomic details | Size limitations; Complex data analysis |
| AlphaFold Prediction | ~1-5 Å (confidence measure) | Very High | No experimental data needed; High coverage | Limited complex data; Confidence metrics vary |
| Homology Modeling | Varies with template identity | High | Computationally efficient; Well-established | Template-dependent accuracy |
| De Novo Modeling | Variable | Medium | No template required | Computationally intensive; Lower accuracy |

Experimental methods like X-ray crystallography and cryo-electron microscopy (cryo-EM) provide high-resolution structural information but require significant time, resources, and technical expertise [2]. The recent "structural revolution" in membrane proteins, including G protein-coupled receptors (GPCRs) and ion channels, has been largely driven by advances in cryo-EM technology [3]. These experimental structures serve as crucial benchmarks for validating computational predictions and provide definitive evidence for regulatory submissions.

Computational approaches offer complementary advantages, particularly in throughput and accessibility. Homology modeling, which predicts protein structure based on evolutionarily related templates, remains valuable when templates with high sequence similarity are available [2]. By contrast, de novo modeling predicts protein structures from scratch using physical principles and statistical methods, making it applicable to proteins without structural homologs [2]. The revolutionary emergence of AI-based prediction tools like AlphaFold has dramatically expanded structural coverage, with the AlphaFold Protein Structure Database now containing over 214 million unique protein structures [3]. This comprehensive coverage enables structure-based drug discovery for targets previously considered intractable.

Experimental Validation Protocols for Computational Predictions

As computational predictions become increasingly integrated into drug discovery pipelines, robust validation protocols are essential to assess model quality and determine appropriate applications. The following workflow outlines a comprehensive framework for validating computationally-derived protein structures.

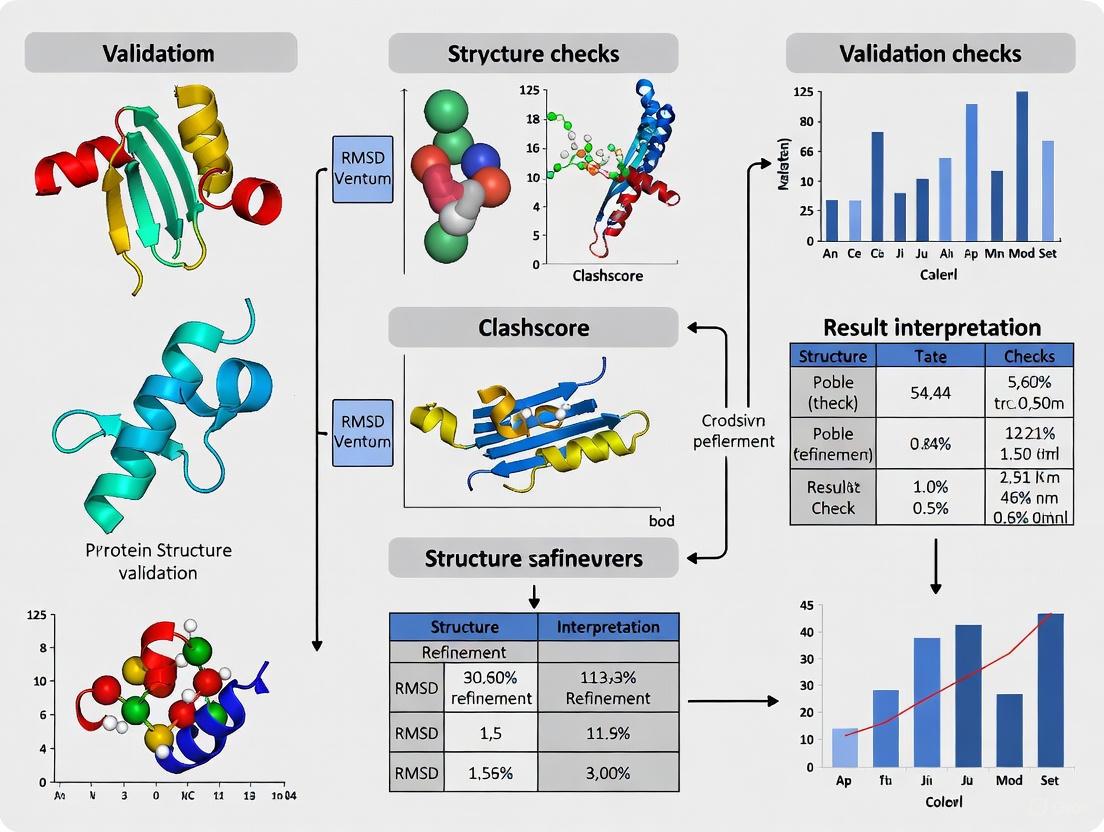

Diagram 1: Protein structure validation workflow showing key assessment stages.

Global Quality Assessment Metrics

The initial validation phase focuses on global structure quality using computational metrics. For AlphaFold models, the pLDDT (predicted Local Distance Difference Test) score provides a per-residue estimate of confidence on a scale of 0-100, with scores above 90 indicating high confidence, 70-90 good confidence, 50-70 low confidence, and below 50 very low confidence [4] [1]. The Template Modeling (TM) score measures global topology similarity to reference structures, with scores >0.5 indicating correct folding and <0.17 representing random similarity [2]. Additional geometric validation checks include Ramachandran plot analysis for backbone torsion angles, rotamer analysis for side-chain conformations, and clash score assessment for steric overlaps [2].
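As a rough illustration of how these two metrics are applied, the sketch below bins pLDDT values into the confidence bands quoted above and evaluates the standard TM-score sum for a single, already fixed superposition. The function names are hypothetical, the handling of exact boundary values (70 and 90) is an assumption, and the 0.5 Å floor on d0 for very short chains is a simplification; a full TM-score calculation also searches for the superposition that maximizes the sum, which is not done here.

```python
def plddt_band(score: float) -> str:
    """Map a pLDDT value (0-100) to the confidence bands quoted in the text."""
    if not 0.0 <= score <= 100.0:
        raise ValueError("pLDDT must lie in [0, 100]")
    if score > 90:
        return "high"
    if score >= 70:   # assumption: boundary values fall in the lower band
        return "good"
    if score >= 50:
        return "low"
    return "very low"


def tm_score(distances, target_length: int) -> float:
    """TM-score sum for ONE fixed superposition.

    distances: distance in angstroms for each aligned residue pair.
    target_length: number of residues in the reference structure.
    """
    if target_length <= 0:
        raise ValueError("target_length must be positive")
    # Length-dependent distance scale d0 from the TM-score definition.
    if target_length > 15:
        d0 = 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8
    else:
        d0 = 0.5
    d0 = max(d0, 0.5)  # assumption: simple floor for very short chains
    # Unaligned residues contribute nothing, so the sum runs over pairs only.
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


per_residue_plddt = [96.1, 88.4, 63.0, 41.7]
print([plddt_band(p) for p in per_residue_plddt])
print(round(tm_score([0.0] * 50, 100), 3))  # half the residues aligned perfectly
```

Because the sum is normalized by the reference length, a model that aligns only half of the residues cannot score above 0.5 no matter how precise the aligned part is.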

Experimental Validation Techniques

While computational metrics provide initial quality indicators, experimental validation remains essential for confirming structural accuracy. Hydrogen-deuterium exchange mass spectrometry (HDX-MS) probes protein dynamics and solvent accessibility by measuring the rate at which backbone amide hydrogens exchange with deuterium in solution [5]. This technique can validate predicted flexible regions and binding interfaces. Cross-linking mass spectrometry identifies spatially proximate amino acids, providing distance constraints that can validate predicted folds [5]. Small-angle X-ray scattering (SAXS) offers solution-state structural information that complements static predictions, particularly for validating conformational flexibility [3].
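One simple way to use cross-linking data as a distance constraint is to measure the Cα-Cα separation of each cross-linked residue pair in the model and flag pairs that exceed what the linker can span. The sketch below assumes the Cα coordinates have already been extracted into a dictionary; the function name, the example coordinates, and the 30 Å default cutoff are all illustrative assumptions, and the cutoff in particular must be set for the cross-linker actually used.

```python
import math


def check_crosslinks(ca_coords, crosslinks, max_distance=30.0):
    """Compare model C-alpha distances with cross-link constraints.

    ca_coords: {residue_number: (x, y, z)} in angstroms.
    crosslinks: iterable of (residue_i, residue_j) pairs from XL-MS.
    max_distance: assumed upper bound for the linker (placeholder value).
    Returns a list of (residue_i, residue_j, distance, satisfied).
    """
    results = []
    for res_i, res_j in crosslinks:
        distance = math.dist(ca_coords[res_i], ca_coords[res_j])
        results.append((res_i, res_j, round(distance, 2), distance <= max_distance))
    return results


# Hypothetical coordinates and cross-links, for illustration only.
model_ca = {10: (0.0, 0.0, 0.0), 25: (12.0, 5.0, 0.0), 80: (40.0, 0.0, 0.0)}
observed = [(10, 25), (10, 80)]

for res_i, res_j, dist, ok in check_crosslinks(model_ca, observed):
    status = "satisfied" if ok else "VIOLATED"
    print(f"{res_i}-{res_j}: {dist} A ({status})")
```

A violated cross-link does not by itself prove the model wrong, since the cross-link may report on an alternative conformation, but a cluster of violations in one region is a reasonable prompt for closer inspection.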

Performance Comparison of AI-Based Structure Prediction Platforms

The landscape of AI-based protein structure prediction has evolved rapidly, with multiple platforms now offering distinct capabilities and licensing models. The following comparison examines the leading solutions available in 2025.

Table 2: Comparison of AI-Based Protein Structure Prediction Platforms (2025)

| Platform | Developer | Prediction Capabilities | Availability | Key Advantages | Documented Limitations |
|---|---|---|---|---|---|
| AlphaFold3 | Google DeepMind | Proteins, multimeric complexes, ligands | Non-commercial only | High accuracy; Complex prediction | Restricted commercial use |
| RoseTTAFold All-Atom | David Baker Lab, UW | Proteins, complexes, small molecules | Non-commercial only | Good performance; Active development | Limited to non-commercial |
| OpenFold | OpenFold Consortium | Protein structures | Fully open-source | Commercial friendly; Community-driven | Primarily single chains |
| Boltz-1 | Academic Consortium | Protein structures | Fully open-source | Open license; Modifiable code | Early development stage |

AlphaFold3 represents a significant advancement beyond its predecessor, with capabilities extending to molecular complexes comprising multiple proteins or protein-ligand interactions [1]. However, its restricted availability for non-commercial use only has prompted development of open-source alternatives. RoseTTAFold All-Atom from David Baker's lab at the University of Washington offers competitive performance for molecular complexes but shares similar licensing restrictions [1]. The emergence of fully open-source initiatives like OpenFold and Boltz-1 addresses the need for commercially applicable prediction tools, though these platforms may lag slightly in accuracy and feature completeness compared to their proprietary counterparts [1].

Performance benchmarking studies indicate that AlphaFold3 generally provides superior accuracy for single-chain predictions, with median backbone accuracy approaching experimental resolution in high-confidence regions [4] [1]. However, performance varies substantially for different protein classes, with membrane proteins and large complexes presenting greater challenges. The RoseTTAFold All-Atom algorithm demonstrates particular strength in predicting protein-protein interfaces, making it valuable for studying signaling complexes and drug targets involving multiple subunits [1].

Application in Structure-Based Drug Design

Accurate protein structures enable rational drug design by revealing precise atomic-level interactions between targets and potential therapeutics. The ligand binding pocket—a cavity or depression on the protein surface where small molecules bind—plays a crucial role in determining binding affinity, specificity, and overall druggability [2]. Structure-based drug design (SBDD) leverages this structural information to identify and optimize compounds with desired pharmacological properties.

Molecular Docking and Virtual Screening

Molecular docking simulations computationally predict how small molecules bind to protein targets, generating binding poses and affinity scores [2] [3]. These tools enable virtual screening of ultra-large compound libraries, significantly reducing experimental costs and time requirements. Modern screening libraries, such as the REAL database, contain billions of readily synthesizable compounds, with virtual hit rates typically ranging from 10-40% in experimental testing [3]. The exponential growth of accessible chemical space, combined with cloud computing resources, now enables screening campaigns that would have been computationally impossible just years ago [3].

The following diagram illustrates the integrated workflow for structure-based drug discovery, combining computational predictions with experimental validation.

Diagram 2: Structure-based drug discovery workflow integrating computational and experimental approaches.

Accounting for Structural Flexibility

Traditional docking approaches often treat proteins as rigid structures, but increasing evidence demonstrates that target flexibility significantly impacts drug binding [3]. Molecular dynamics (MD) simulations address this limitation by modeling conformational changes in solution, revealing cryptic pockets not apparent in static structures [3]. The Relaxed Complex Method represents a systematic approach that selects representative target conformations from MD simulations for docking studies, significantly improving hit rates for flexible targets [3]. Accelerated MD (aMD) methods enhance sampling efficiency by smoothing energy barriers, enabling more comprehensive exploration of conformational landscapes within feasible simulation timescales [3].

Essential Research Reagent Solutions

The following table details key reagents, tools, and platforms essential for protein structure analysis and validation in drug discovery research.

Table 3: Essential Research Reagent Solutions for Protein Structure Analysis

| Category | Specific Tools/Platforms | Primary Function | Application Context |
|---|---|---|---|
| Structure Prediction | AlphaFold3, RoseTTAFold All-Atom, OpenFold | Protein and complex structure prediction | Initial model generation; Template for docking |
| Molecular Docking | AutoDock, Schrödinger Suite, MOE | Ligand pose prediction and scoring | Virtual screening; Binding mode analysis |
| Dynamics Simulation | GROMACS, AMBER, CHARMM | Molecular dynamics simulations | Flexibility assessment; Cryptic pocket discovery |
| Experimental Validation | HDX-MS, XL-MS, Cryo-EM | Experimental structure verification | Prediction validation; Quality assessment |
| Compound Libraries | REAL Database, SAVI Library | Source of screening compounds | Ultra-large virtual screening |
| Structure Analysis | PyMOL, ChimeraX, Byos | Visualization and analysis | Model inspection; Quality assessment |

These tools represent the essential infrastructure for modern structure-based drug discovery. The REAL Database by Enamine deserves particular note, having grown from approximately 170 million compounds in 2017 to more than 6.7 billion compounds in 2024, dramatically expanding accessible chemical space for virtual screening [3]. Similarly, platforms like Byos from Protein Metrics provide automated workflows for mass spectrometry-based structural validation, including hydrogen-deuterium exchange and cross-linking analyses [5].

The critical role of accurate protein structures in drug design and functional analysis continues to expand as computational and experimental methods evolve in synergy. AI-based prediction tools have democratized access to structural information while creating new imperatives for rigorous validation and thoughtful application. The optimal structure-based drug discovery pipeline integrates multiple methodologies—leveraging the unprecedented coverage of AlphaFold predictions, the high resolution of experimental structures, the binding insights from molecular docking, and the dynamic perspective of MD simulations. As open-source initiatives develop commercially accessible alternatives to restricted platforms and validation methodologies become increasingly sophisticated, researchers are positioned to leverage structural information more effectively than ever before. This convergence of technologies promises to accelerate the discovery of novel therapeutics targeting previously intractable diseases, fundamentally advancing both pharmaceutical development and our understanding of biological function at the molecular level.

The revolutionary advancements in protein structure prediction, acknowledged by the 2024 Nobel Prize in Chemistry, have created a paradigm shift in structural biology and drug discovery [6]. Sophisticated artificial intelligence (AI) systems like AlphaFold now provide researchers with millions of structurally predicted proteins, offering unprecedented insights into molecular machinery [7]. However, beneath this remarkable progress lies a complex landscape of methodological constraints that impact the accuracy and biological relevance of protein models. Both experimental techniques for structure determination and computational approaches for prediction carry inherent limitations in capturing the dynamic reality of proteins in their native biological environments [6] [8]. This guide provides an objective comparison of current protein structure validation tools and metrics, examining the sources of error across methodological boundaries to inform researchers and drug development professionals in their structural analysis workflows.

Fundamental Epistemological Challenges in Protein Structure Determination

The interpretation of protein structures faces several foundational challenges that affect both experimental and computational approaches. The Levinthal paradox highlights the astronomical number of possible conformations a protein could theoretically adopt, making random sampling computationally infeasible [6] [9]. This paradox suggests that protein folding must proceed along specific pathways rather than through random conformational searches, yet these pathways remain incompletely understood. Anfinsen's dogma, which posits that a protein's native structure is determined solely by its amino acid sequence, provides a fundamental principle while facing limitations in interpretation, particularly for proteins with significant conformational flexibility or those requiring chaperones for proper folding [6] [9]. Additionally, the environmental dependence of protein conformations creates substantial barriers to predicting functional structures through static computational means alone [6]. The presence of cellular components, pH variations, temperature fluctuations, and molecular interactions all influence protein folding and stability in ways that are difficult to capture in isolation.
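The scale of the Levinthal paradox is easiest to appreciate with a back-of-the-envelope calculation. The sketch below uses the textbook illustrative assumptions of a 100-residue chain, three accessible conformations per residue, and a sampling rate of 10^13 conformations per second; none of these numbers comes from the cited sources, and the function name is hypothetical.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def levinthal_search_years(n_residues, states_per_residue, samples_per_second):
    """Return (number of conformations, years needed to enumerate them all)."""
    conformations = states_per_residue ** n_residues
    years = conformations / samples_per_second / SECONDS_PER_YEAR
    return conformations, years


# Illustrative assumptions only: 100 residues, 3 states each, 1e13 samples/s.
conformations, years = levinthal_search_years(100, 3, 1e13)
print(f"{conformations:.2e} conformations, about {years:.1e} years to enumerate")
```

Even under these generous assumptions the exhaustive search time exceeds the age of the universe by many orders of magnitude, which is why folding is thought to follow directed pathways rather than a random search.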

Limitations of Experimental Structure Determination Methods

Experimental methods for protein structure determination provide crucial reference data but face significant constraints in resolving dynamic biological reality. The table below summarizes the key limitations of major experimental approaches:

Table 1: Limitations of Experimental Protein Structure Determination Methods

| Method | Resolution | Key Limitations | Impact on Structure Quality |
|---|---|---|---|
| X-ray Crystallography | Atomic (~1-3 Å) | Requires rigid crystallization; static representation; crystal packing artifacts; radiation damage | Cannot capture flexibility or disordered regions; may reflect non-physiological states |
| NMR Spectroscopy | Atomic (~1-5 Å) | Limited to smaller proteins (<50 kDa); challenging data interpretation; technical complexity | Provides dynamic information but with molecular size constraints |
| Cryo-Electron Microscopy | Near-atomic (~1.5-4 Å) | Sample preparation artifacts; potential structural alterations during vitrification; high equipment cost | Potential freezing-induced conformational changes; heterogeneous resolution |

These experimental limitations have profound implications for structural biology databases and computational modeling. The Protein Data Bank (PDB), while invaluable, contains structures determined under conditions that may not fully represent the thermodynamic environment controlling protein conformation at functional sites [6]. This creates a fundamental dependency for computational methods, as AI-based prediction tools are trained primarily on these experimentally determined structures, potentially propagating and amplifying their limitations [8].

Limitations of Computational Protein Structure Prediction

Computational methods have dramatically advanced but face distinct challenges in accurately representing protein dynamics and diverse structural classes:

Table 2: Limitations of Computational Protein Structure Prediction Methods

| Method Type | Representative Tools | Key Limitations | Impact on Prediction Accuracy |
|---|---|---|---|
| Template-Based Modeling (TBM) | MODELLER, SwissPDBViewer | Limited by template availability; template bias; alignment errors | Accuracy deteriorates with decreasing sequence identity to templates (<30%) |
| Template-Free Modeling (TFM) | AlphaFold2/3, RoseTTAFold, ESMFold | Training data dependency; MSA quality sensitivity; static structure bias | Reduced accuracy for proteins lacking homologous sequences in databases |
| Ab Initio Methods | Physical principle-based approaches | Computationally intensive; force field inaccuracies; sampling limitations | Challenging for larger proteins; limited practical application |

A critical limitation of current AI-based predictors is their inadequate handling of chimeric or fused proteins. Recent research demonstrates that AlphaFold-2, AlphaFold-3, and ESMFold consistently mispredict the experimentally determined structure of small, folded peptide targets when presented as N or C terminus sequence fusions with common scaffold proteins [10]. This occurs despite accurate prediction of the individual components, revealing a fundamental gap in handling non-natural protein constructs common in experimental biology.

The multiple sequence alignment (MSA) step represents a particular vulnerability. For chimeric proteins, the MSA-based structural signals for the target protein are lost in the fused sequence form when using default parameters [10]. This limitation has prompted the development of specialized approaches like the Windowed MSA method, which independently computes MSAs for target and scaffold regions before merging them, significantly improving prediction accuracy for fusion constructs [10].

Comparative Analysis of Structure Prediction Performance

To objectively evaluate contemporary structure prediction tools, we examine quantitative performance metrics across different protein classes and contexts:

Table 3: Performance Comparison of AI-Based Protein Structure Prediction Tools

| Tool | Peptide Prediction Accuracy (RMSD <1 Å) | Scaffold Fusion Performance | Key Strengths | Key Limitations |
|---|---|---|---|---|
| AlphaFold-3 | 90/394 targets | Significant accuracy deterioration | Highest accuracy on isolated peptides | Loses accuracy in fusion contexts |
| AlphaFold-2 | 34/394 targets | Significant accuracy deterioration | Robust MSA utilization | Lower baseline accuracy than AF3 |
| ESMFold-iterative | 21/394 targets | Significant accuracy deterioration | Faster inference; language model-based | Lowest accuracy of evaluated tools |

The Windowed MSA approach demonstrates a marked improvement for chimeric protein prediction, producing strictly lower RMSD values than standard MSA in 65% of test cases without compromising scaffold structural integrity [10]. This specialized method addresses the MSA construction artifacts that occur when attempting to align entire chimeric sequences at once, highlighting how understanding specific failure modes can lead to targeted improvements.
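The peptide accuracy column in Table 3 counts targets predicted within 1 Å RMSD of the experimental structure. The sketch below shows the RMSD formula for two coordinate sets that are assumed to be already superposed and in matching atom order (no optimal superposition is computed here), and converts the reported counts out of 394 targets into percentages. The function names are hypothetical.

```python
import math


def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two pre-superposed coordinate lists."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))


def success_rate(n_success, n_total):
    """Percentage of targets that meet the accuracy threshold."""
    return 100.0 * n_success / n_total


# Counts taken from Table 3; 394 targets were evaluated for each tool.
counts = {"AlphaFold-3": 90, "AlphaFold-2": 34, "ESMFold-iterative": 21}
for tool, n_success in counts.items():
    print(f"{tool}: {success_rate(n_success, 394):.1f}% of targets below 1 A RMSD")
```

Expressed this way, even the best-performing tool places fewer than a quarter of the isolated peptide targets under the 1 Å threshold.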

Protein Dynamics: The Critical Frontier

A fundamental limitation shared by many experimental and computational methods is their focus on static structures rather than dynamic conformational ensembles [8]. Protein function is not solely determined by static three-dimensional structures but is fundamentally governed by dynamic transitions between multiple conformational states [8]. This representation gap is significant because the millions of possible conformations that proteins can adopt, especially those with flexible regions or intrinsic disorder, cannot be adequately represented by single static models derived from crystallographic and related databases [6].

The structural alphabet approach used in tools like Foldseek represents an important innovation, describing tertiary amino acid interactions within proteins as sequences to enable rapid structure comparison [7]. Foldseek decreases computation times by four to five orders of magnitude while maintaining 86% and 88% of the sensitivities of Dali and TM-align, respectively [7]. This demonstrates how alternative representations can overcome specific computational limitations while introducing different trade-offs in sensitivity and accuracy.

Research Reagent Solutions for Protein Structure Analysis

Table 4: Essential Research Reagents and Tools for Protein Structure Analysis

| Reagent/Tool | Function | Application Context |
|---|---|---|
| MMseqs2 | Rapid sequence search and MSA generation | Identifying homologous sequences for template-based modeling |
| Foldseek | Fast protein structure search and alignment | Structural similarity detection in large databases |
| Windowed MSA | Specialized MSA construction for chimeric proteins | Improving prediction accuracy for fusion constructs |
| GROMACS | Molecular dynamics simulation package | Exploring protein dynamic conformations and flexibility |
| PDB2PQR | Structure preparation for simulations | Adding hydrogen atoms and preparing files for MD input |
| UniRef30 | Non-redundant protein sequence database | MSA construction for deep learning-based prediction |

Experimental Protocols for Method Validation

Windowed MSA Protocol for Chimeric Protein Prediction

The Windowed MSA approach addresses critical limitations in predicting fused protein structures [10]:

- Independent MSA Generation: Generate separate MSAs for scaffold and tag regions using MMseqs2 against UniRef30

- Sub-alignment Specification: Scaffold sub-alignment includes homologs spanning the scaffold sequence with explicit "GLY-SER" linker incorporation

- Peptide-specific Alignment: Peptide sub-alignment built exclusively from peptide homologs

- Alignment Merging: Concatenate scaffold and peptide MSAs with gap characters (-) inserted to fill non-homologous positions

- Spatial Preservation: Peptide-derived sequences carry gaps across scaffold region, and scaffold-derived sequences carry gaps across peptide region

- Structure Prediction: Use finalized windowed MSAs as inputs to AlphaFold-2 or AlphaFold-3
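The merging and gap-padding steps above can be sketched in a few lines. This is a minimal illustration rather than the published implementation: it assumes each sub-alignment is a list of equal-length aligned rows with the query in the first row, that the linker is already part of the scaffold rows, and that the peptide is fused at the C terminus of the scaffold. The function name and the toy sequences are hypothetical.

```python
GAP = "-"


def merge_windowed_msa(scaffold_msa, peptide_msa):
    """Merge independently built scaffold and peptide alignments.

    Row 0 of each input is the query for that region. Homolog rows are
    padded with gaps over the region they were not aligned to.
    """
    scaffold_len = len(scaffold_msa[0])
    peptide_len = len(peptide_msa[0])
    if any(len(row) != scaffold_len for row in scaffold_msa):
        raise ValueError("scaffold rows must all have the same length")
    if any(len(row) != peptide_len for row in peptide_msa):
        raise ValueError("peptide rows must all have the same length")

    # First row: the full chimeric query (scaffold + linker, then peptide).
    merged = [scaffold_msa[0] + peptide_msa[0]]
    # Scaffold homologs carry gaps across the peptide window.
    merged += [row + GAP * peptide_len for row in scaffold_msa[1:]]
    # Peptide homologs carry gaps across the scaffold window.
    merged += [GAP * scaffold_len + row for row in peptide_msa[1:]]
    return merged


# Toy alignments for illustration only (scaffold rows end in a "GS" linker).
scaffold = ["MKTAGS", "MRTAGS", "MK-AGS"]
peptide = ["WLYE", "WIYE"]
for row in merge_windowed_msa(scaffold, peptide):
    print(row)
```

For an N-terminal fusion the same idea applies with the concatenation order reversed. The merged rows would still need to be written in whatever alignment format the chosen predictor accepts.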

Molecular Dynamics Validation Protocol

Molecular dynamics simulations provide critical validation of structural stability [10]:

- Structure Preparation: Use PDB2PQR server to add hydrogen atoms and prepare files for MD input

- Force Field Application: Apply Amber 99sb-ildnp force field to normal amino acids and ions; SPC model for water molecules

- System Solvation: Solvate in cubic box with addition of Cl⁻ and Na⁺ ions to balance charge

- System Equilibration: Energy minimization followed by heating to 300 K with equilibration under NVT and NPT conditions (50 ps each)

- Production Run: Perform 50 ns simulation under NPT conditions with 2 fs time step, maintaining temperature (300 K) and pressure (1 bar)
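MD engines are usually configured with a number of integration steps rather than a duration, so the protocol above has to be converted before a run is set up. The short sketch below does that arithmetic for the stated 2 fs time step, using integer femtoseconds to avoid floating-point rounding; the stage labels and function name are hypothetical.

```python
FS_PER_PS = 1_000
FS_PER_NS = 1_000_000


def n_steps(duration_fs: int, timestep_fs: int = 2) -> int:
    """Number of integration steps needed to cover a duration."""
    if duration_fs % timestep_fs:
        raise ValueError("duration is not a whole number of time steps")
    return duration_fs // timestep_fs


# Durations from the protocol: 50 ps per equilibration stage, 50 ns production.
protocol_fs = {
    "NVT equilibration": 50 * FS_PER_PS,
    "NPT equilibration": 50 * FS_PER_PS,
    "NPT production": 50 * FS_PER_NS,
}
steps = {stage: n_steps(duration) for stage, duration in protocol_fs.items()}
for stage, count in steps.items():
    print(f"{stage}: {count:,} steps")
```

With these settings each equilibration stage corresponds to 25,000 steps and the production run to 25,000,000 steps.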

Visualization of Methodological Relationships and Workflows

Methodological Relationships in Protein Structure Determination

Windowed MSA Workflow for Improved Chimeric Protein Prediction

The field of protein structure validation continues to evolve with a growing recognition that both experimental and computational methods provide complementary yet incomplete representations of structural reality. The limitations discussed in this guide—from the static nature of crystallographic structures to the MSA dependencies of AI predictors—highlight critical areas for methodological improvement. Future directions point toward increased integration of experimental data with computational modeling, enhanced sampling of conformational ensembles, and specialized approaches for challenging protein classes including chimeric constructs, disordered proteins, and membrane-associated complexes. By understanding these fundamental sources of error, researchers can make more informed decisions in selecting appropriate methods, interpreting structural data, and developing improved tools for protein structure analysis in drug discovery and basic research.

In protein structure prediction, accurately estimating the quality of computational models is as crucial as generating the models themselves. For researchers and drug development professionals, this process, known as Estimation of Model Accuracy (EMA) or model quality assessment, relies on distinct classes of metrics that evaluate different aspects of a predicted structure. Understanding the differences between global, local, and interface-specific accuracy is fundamental to selecting the right models for downstream applications like function annotation and drug discovery [11].

The following table defines these core concepts and their significance in structural biology.

| Accuracy Type | Description | Significance in Protein Complex Validation |
|---|---|---|
| Global Accuracy | Measures the overall correctness of the entire protein complex structural model, treating the complex as a single unit. [11] | Provides a single score for the overall model quality, useful for initial ranking and selection from a large pool of predictions. [11] |
| Local Accuracy | Assesses the quality of specific, localized regions within the complex, such as individual secondary structure elements or domains. [11] | Identifies regions of high and low confidence within a model; crucial for interpreting functional sites that may not be reflected in the global score. [11] |
| Interface Accuracy | Evaluates the correctness specifically at the binding interfaces between different protein chains within the complex. [11] | Essential for understanding biological function, as it directly measures the predicted quality of inter-chain interactions and binding. [11] |

Quantitative Comparison of Accuracy Metrics for Protein Complexes

The development of robust EMA methods depends on benchmark datasets annotated with multiple quality scores. Frameworks like PSBench provide over one million structural models annotated with at least 10 complementary quality scores spanning global, local, and interface levels [11]. The performance of different modeling pipelines can be quantitatively compared using these metrics, as shown in the table below which summarizes benchmark results on CASP15 targets.

Table: Benchmark Performance of Protein Complex Structure Prediction Methods (CASP15 Data)

| Method | Global Accuracy (Average TM-score) | Key Distinguishing Approach |
|---|---|---|
| DeepSCFold | 11.6% higher than AlphaFold-Multimer [12] | Uses sequence-derived structural complementarity and interaction probability to build paired Multiple Sequence Alignments (pMSAs). [12] |
| AlphaFold-Multimer | Baseline (0% change) | An extension of AlphaFold2 tailored for multimers; relies on co-evolutionary signals from paired MSAs. [12] |
| AlphaFold3 | 10.3% lower than DeepSCFold [12] | A recently released model for predicting structures of protein complexes and other biomolecules. [12] |

Experimental results demonstrate that DeepSCFold's focus on sequence-derived structure complementarity significantly enhances accuracy. On antibody-antigen complexes from the SAbDab database, DeepSCFold improved the prediction success rate for binding interfaces by 24.7% and 12.4% over AlphaFold-Multimer and AlphaFold3, respectively [12]. This highlights a major advantage in capturing difficult inter-chain interactions, such as those in antibody-antigen systems, which often lack clear co-evolutionary signals [12].

Experimental Protocols for Evaluating Protein Complex Accuracy

Rigorous evaluation of protein complex models relies on standardized, blind experiments. The community-wide Critical Assessment of protein Structure Prediction (CASP) experiments provide the gold-standard framework for such assessments [11]. The following workflow outlines a typical experimental protocol for benchmarking EMA methods.

Key Experimental Steps:

- Dataset Curation and Blind Testing: Benchmarks use carefully selected protein complex targets from CASP experiments, ensuring a diverse range of sequence lengths, stoichiometries, and difficulties [11]. Models are generated before the experimental structures are released, simulating a real-world prediction scenario and preventing data leakage [11].

- Structural Model Generation: Thousands of models are generated for each target, primarily using state-of-the-art AI methods like AlphaFold2-Multimer and AlphaFold3 [11] [12]. This creates a large pool of predictions with varying quality.

- Model Annotation with Ground Truth: Each predicted model is rigorously labeled with quality scores by comparing it to the experimentally determined native structure. PSBench, for instance, annotates models with 10 different scores capturing global, local, and interface accuracy [11].

- EMA Method Training and Testing: Machine learning-based EMA methods like GATE (a graph transformer-based method) are trained on datasets like CASP15_inhouse_dataset [11]. Their performance is then blindly tested on a separate dataset, such as one from CASP16, to evaluate their ability to rank models by quality without knowledge of the true structure [11].

- Performance Evaluation: The success of an EMA method is measured by how well its estimated scores correlate with the true quality of models and its ability to identify the best model from a pool of candidates [11].
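The two evaluation criteria in the last step can be sketched in a few lines of Python. This is a minimal illustration, not the PSBench or GATE code: the function names `pearson` and `ranking_loss` and the five-model score pool are hypothetical, and the scores stand in for any single quality measure where higher is better.

```python
import math

def pearson(xs, ys):
    """Pearson correlation between two equal-length score lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def ranking_loss(predicted, true):
    """True quality of the best model minus true quality of the top-ranked model."""
    top_ranked = max(range(len(predicted)), key=lambda i: predicted[i])
    return max(true) - true[top_ranked]

# Hypothetical pool of five models for one target (higher score = better model)
predicted_scores = [0.62, 0.80, 0.55, 0.71, 0.40]  # EMA estimates
true_scores = [0.60, 0.74, 0.58, 0.78, 0.35]       # scores against the native structure

print("correlation:", round(pearson(predicted_scores, true_scores), 3))
print("ranking loss:", round(ranking_loss(predicted_scores, true_scores), 3))
```

A ranking loss of zero means the method picked the best model in the pool; correlation and ranking loss can disagree, which is why benchmarks usually report both.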

| Resource Name | Function in Protein Structure Validation |
|---|---|
| PSBench | A large-scale benchmark suite providing over one million labeled protein complex structural models for training and testing EMA methods. [11] |
| CASP Datasets | Community-wide blind test datasets (e.g., CASP15, CASP16) used for rigorous and temporally unbiased evaluation of prediction methods. [11] [12] |
| AlphaFold-Multimer | A foundational deep learning tool for predicting protein complex structures, often used as a baseline and model generator in benchmarks. [12] |
| DeepUMQA-X | An in-house model quality assessment method used in pipelines like DeepSCFold to select the top-ranked model for further refinement. [12] |
| pSS-score & pIA-score | Deep learning-predicted scores for protein-protein structural similarity and interaction probability, used to construct biologically informed paired MSAs. [12] |

Protein structure validation is a critical step in structural biology, ensuring that three-dimensional atomic models accurately represent the experimental data and conform to known physical and chemical principles. As the single global archive for macromolecular structures, the Worldwide Protein Data Bank (wwPDB) has established standardized validation processes that are integral to the deposition pipeline. Alongside these official processes, third-party tools like MolProbity and the Protein Structure Validation Suite (PSVS) provide complementary and often more detailed analyses, creating a multi-layered ecosystem for quality assessment. These resources are indispensable for researchers, referees, and journal editors, providing confidence in structural models used for downstream applications in drug discovery and functional analysis. This guide objectively compares the capabilities, methodologies, and outputs of these three major validation resources, providing a framework for scientists to select the most appropriate tools for their specific research contexts.

The landscape of protein structure validation is served by both official deposition pipeline tools and advanced third-party resources. The following table summarizes the core characteristics, primary functions, and key outputs of the wwPDB validation system, MolProbity, and the PSVS suite for direct comparison.

Table 1: Core Features of Major Validation Resources

| Resource | Provider/Scope | Primary Function | Key Validation Metrics | Access Method |
|---|---|---|---|---|
| wwPDB Validation | Worldwide PDB Partnership (Official) | Standardized validation for deposition and archival [13] | Clashscore, Rfree, Ramachandran outliers, Rotamer outliers, RSRZ outliers [13] | Integrated into OneDep deposition system; public reports [14] |
| MolProbity | Richardson Lab (Duke University) | All-atom contact analysis & comprehensive geometry validation [15] | All-atom clashscore, Ramachandran distribution, Rotamer distribution, Cβ deviations, CaBLAM [15] | Web server (Duke/Manchester), integrated in Phenix, command-line [15] [16] |
| PSVS Suite | BioMagResBank (BMRB) | Protein structure quality assessment for NMR and X-ray [17] | NMR validation: AVS, LACS, SPARTA+; General quality scores [17] | Web server, downloadable tools [17] |

The wwPDB Validation System

The wwPDB validation system is an official, integrated component of the OneDep deposition and annotation pipeline, deployed globally for X-ray crystallography, NMR, and electron microscopy (3DEM) methods [13]. This system operationalizes recommendations from expert Validation Task Forces (VTFs) convened for each structure determination method [13]. Its implementation represents a systematic effort to standardize quality assessment across the entire PDB archive.

Core Validation Methodology and Metrics

The wwPDB system employs a comprehensive software pipeline that utilizes community-standard tools including DCC, EDS, Mogul, MolProbity, and Xtriage [13]. The validation output is summarized in an official wwPDB Validation Report that features five primary graphical slider metrics, allowing for intuitive assessment of structure quality relative to the entire archive and similar resolution structures [13]:

- Rfree: Measures the agreement between the model and a subset of experimental data not used in refinement.

- Clashscore: A MolProbity-derived metric quantifying the number of serious steric overlaps per 1000 atoms [13].

- Ramachandran Outliers: Percentage of residues in disallowed regions of the Ramachandran plot.

- Rotamer Outliers: Percentage of sidechains in unfavorable conformations.

- RSRZ Outliers: Percentage of residues with poor real-space fit to electron density.

The system also provides specific validation for ligands, assessing the geometry of small molecules against the Cambridge Structural Database using Mogul and evaluating their fit to experimental electron density [13].
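To make the Rfree slider concrete, the sketch below computes the standard crystallographic R-factor over working and held-out reflections. It is an illustration under stated assumptions: the reflection amplitudes are invented, the calculated amplitudes are assumed to be already scaled to the observed ones, and `r_factor` is a hypothetical helper rather than part of any wwPDB tool.

```python
def r_factor(f_obs, f_calc):
    """R = sum(| |Fobs| - |Fcalc| |) / sum(|Fobs|) over the supplied reflections."""
    numerator = sum(abs(abs(o) - abs(c)) for o, c in zip(f_obs, f_calc))
    denominator = sum(abs(o) for o in f_obs)
    return numerator / denominator

# Hypothetical structure-factor amplitudes; "test" marks reflections
# that were excluded from refinement (the free set).
reflections = [
    {"f_obs": 100.0, "f_calc": 90.0, "test": False},
    {"f_obs": 50.0, "f_calc": 55.0, "test": False},
    {"f_obs": 80.0, "f_calc": 76.0, "test": False},
    {"f_obs": 60.0, "f_calc": 48.0, "test": True},
    {"f_obs": 40.0, "f_calc": 46.0, "test": True},
]

work = [r for r in reflections if not r["test"]]
free = [r for r in reflections if r["test"]]

r_work = r_factor([r["f_obs"] for r in work], [r["f_calc"] for r in work])
r_free = r_factor([r["f_obs"] for r in free], [r["f_calc"] for r in free])

print(f"Rwork = {r_work:.3f}, Rfree = {r_free:.3f}")
```

Rwork and Rfree use the same formula and differ only in which reflections are summed; a large gap between them is a common warning sign of over-fitting.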

MolProbity

Philosophy and Unique Capabilities

MolProbity stands apart through its distinctive all-atom contact analysis, which includes explicit hydrogen atoms, providing exceptionally sensitive detection of steric clashes and suboptimal packing [15]. This approach has made MolProbity's clashscore a gold standard for evaluating local model errors. The tool is methodologically versatile, tailored for crystallography while being equally suitable for cryo-EM, neutron, NMR, and computational models [15].

Key Methodological Features

MolProbity's validation relies on statistically derived expectations from high-quality reference datasets. The current Top8000 dataset for proteins (filtered at 70% homology) and RNA11 for RNA provide the empirical basis for Ramachandran, rotamer, and backbone conformation evaluations [15]. Its most significant features include:

- All-atom contact analysis: Identifies steric clashes, including those involving hydrogen atoms, and summarizes them as the "clashscore" metric (the number of non-bonded atomic overlaps of at least 0.4 Å per 1000 atoms) [15].

- Asn/Gln/His "flip" correction: Automatically identifies and corrects ambiguous amide or imidazole ring orientations [16].

- Updated geometry criteria: Uses improved heavy-atom-to-hydrogen distances and van der Waals radii, with separate parameters for electron-cloud-center positions (X-ray) and nuclear positions [15].

- CaBLAM analysis: A Cα-CO virtual-angle method for validating backbone and secondary structure at lower resolutions, particularly useful for cryo-EM and low-resolution X-ray structures [15].

- Multi-platform accessibility: Available through web servers (primary and Manchester mirror), fully integrated into the Phenix software suite, and as open-source tools via GitHub [15].

Protein Structure Validation Suite (PSVS)

Focus and Application Scope

The PSVS suite specializes in quality assessment for protein structures, with particular strength in validating models determined by NMR spectroscopy [17]. It serves as a key pre-deposition checking tool for researchers submitting to the PDB and is accessible through the BioMagResBank (BMRB) website.

Core Components and Capabilities

PSVS incorporates several specialized tools for comprehensive structure validation:

- AVS (Assignment Validation Suite): Checks the completeness and consistency of NMR chemical shift assignments.

- LACS (Linear Analysis of Chemical Shifts): Checks chemical shift referencing and flags likely referencing errors in the assigned shifts.

- SPARTA+: Used for predicting chemical shifts from protein coordinates and comparing them with experimental data.

- General quality scores: Provides various Z-scores that compare geometric parameters against high-resolution reference structures.

The suite generates consolidated validation reports for NMR-derived structures and is also applicable for evaluating X-ray structures [17].

Quantitative Comparison of Validation Metrics and Performance

A meaningful comparison of validation resources requires examination of both their metric definitions and their demonstrated impact on structural quality.

Comparative Metric Definitions and Scoring

Table 2: Quantitative Validation Metrics Across Resources

| Validation Category | wwPDB System | MolProbity | PSVS Suite |
|---|---|---|---|
| Steric Clash Validation | Clashscore (from MolProbity) [13] | All-atom Clashscore (original implementation) [15] | Not specifically highlighted |
| Backbone Geometry | % Ramachandran outliers [13] | Ramachandran distribution (Top8000 dataset), CaBLAM [15] | Dihedral angle analysis |
| Sidechain Geometry | % Rotamer outliers [13] | Rotamer distribution (Top8000 dataset) [15] | Rotamer analysis |
| Data-Model Fit | Rfree, RSRZ outliers [13] | Real-space correlation (when map provided) [18] | NMR data-model fit (SPARTA+) |
| Ligand Chemistry | Mogul bond/angle Z-scores [13] | Not specifically highlighted | Not specifically highlighted |
| NMR-Specific | BMRB validation protocols | General purpose for NMR [15] | AVS, LACS, SPARTA+ [17] |
| Overall Score | Multi-parameter sliders | MolProbity score | Overall quality Z-scores |
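The "MolProbity score" in the last row combines clashscore, rotamer outliers, and Ramachandran statistics into a single number on a resolution-like scale, where lower is better. The sketch below uses the log-weighted form published by the MolProbity authors as best recalled here; treat the coefficients as an assumption to verify against the MolProbity documentation before relying on them. The function name is hypothetical.

```python
import math

def molprobity_score(clashscore, rotamer_outliers_pct, rama_favored_pct):
    """Combined MolProbity-style score (lower is better).

    Assumed weighting (verify against MolProbity itself):
      0.426 * ln(1 + clashscore)
    + 0.33  * ln(1 + max(0, rotamer_outliers% - 1))
    + 0.25  * ln(1 + max(0, (100 - rama_favored%) - 2))
    + 0.5
    """
    rama_not_favored_pct = 100.0 - rama_favored_pct
    return (
        0.426 * math.log(1 + clashscore)
        + 0.33 * math.log(1 + max(0.0, rotamer_outliers_pct - 1))
        + 0.25 * math.log(1 + max(0.0, rama_not_favored_pct - 2))
        + 0.5
    )

# Hypothetical inputs: a clean model and a model with poorer geometry
print(round(molprobity_score(0.0, 0.0, 100.0), 2))
print(round(molprobity_score(12.0, 4.0, 93.0), 2))
```

The `max(0, ...)` terms mean that a model is not rewarded further once it has fewer than 1% rotamer outliers or more than 98% of residues in favored Ramachandran regions.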

Documented Impact on Structure Quality

The implementation of these validation resources has demonstrated measurable effects on the quality of structures deposited in the PDB. Following the deployment of the augmented wwPDB validation system and widespread adoption of MolProbity, significant improvements in key quality metrics have been observed [13]:

- Clashscore improvement: Since the advent of MolProbity in 2002, clashscores for new PDB depositions in the 1.8-2.2Å resolution range have improved by approximately a factor of 3, demonstrating the tool's impact on identifying and correcting steric violations [15].

- Multi-parameter enhancements: Comparisons of PDB depositions before and after introduction of the wwPDB validation reporting system show improvements in clashscores, sidechain rotamer outliers, and local agreement between atomic coordinates and electron density (RSRZ), largely independent of resolution and molecular weight [13].

- Ligand quality gap: Despite overall improvements, the validation data indicates no significant improvement in the quality of bound ligands, highlighting an area requiring continued focus [13].

Experimental Protocols and Workflow Integration

Standard Validation Workflow

A typical validation workflow integrates multiple resources at different stages of structure determination, from initial model building through final deposition. The following diagram illustrates this integrated process:

Figure: Validation workflow integration, from structure determination to deposition.

Detailed Methodological Protocols

MolProbity Protocol for All-Atom Contact Analysis

The standard MolProbity protocol involves sequential steps that can be performed via the web server or within the Phenix environment [16]:

- Structure Input: Upload a coordinate file (PDB format) or fetch directly from the PDB using its identifier [16].

- Hydrogen Addition and N/Q/H Flips: Run the Reduce utility to add hydrogen atoms at optimal positions and evaluate Asn/Gln/His sidechain flip states. The algorithm scores alternative orientations and suggests flips that improve the steric environment [16].

- Contact and Geometry Analysis: Execute all-atom contact analysis using Probe, which calculates interatomic contacts and identifies serious steric clashes (≥0.4 Å overlap). Simultaneously, run geometry validation including:

  - Ramachandran analysis using updated Top8000 distributions

  - Rotamer analysis against the Top8000 dataset

  - Cβ deviation calculations

  - CaBLAM backbone conformation analysis (particularly for cryo-EM and low-resolution X-ray)

  - Identification of cis-nonProline and twisted peptides [15]

- Output Interpretation: Review the summary statistics, particularly the all-atom clashscore, Ramachandran and rotamer Z-scores, and lists of specific outliers. Download kinemage visualization files or PDB files with corrected atoms for further analysis [16].

wwPDB Validation Protocol for Deposition

The wwPDB validation process is integrated into the OneDep deposition pipeline [13]:

- Pre-deposition Checking: Depositors are strongly encouraged to use the standalone wwPDB Validation Server (validate.wwpdb.org) before formal submission to identify and correct potential issues [13].

- File Submission: Through the OneDep system, depositors submit structure coordinates, structure factors (for crystallography), and sequence information [14].

- Automated Validation Pipeline: The system runs multiple validation tools in parallel:

  - MolProbity for clashscore, Ramachandran, and rotamer analysis

  - DCC for density fit analysis

  - EDS and RSRZ calculations for real-space correlation

  - Mogul for ligand geometry validation against CSD [13]

- Report Generation and Biocuration: The system generates a comprehensive validation report with graphical sliders showing percentile scores. During biocuration, wwPDB staff may request clarifications or corrections based on validation outliers [13].

- Final Report Dissemination: The final validation report is provided to the depositor and, upon publication, becomes publicly accessible alongside the PDB entry [13].
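The percentile sliders in the report can be understood as rank statistics against a reference population. The sketch below shows one simple way to compute such a rank. It is an assumption-laden illustration: the exact wwPDB percentile procedure is not reproduced here, `percentile_rank` is a hypothetical helper, and the archive clashscores are invented.

```python
def percentile_rank(value, reference_values, lower_is_better=True):
    """Percentage of reference entries that this entry matches or outperforms."""
    if lower_is_better:
        matched_or_beaten = sum(1 for v in reference_values if v >= value)
    else:
        matched_or_beaten = sum(1 for v in reference_values if v <= value)
    return 100.0 * matched_or_beaten / len(reference_values)

# Hypothetical clashscores for ten archive entries at similar resolution
archive_clashscores = [2, 5, 8, 12, 20, 35, 50, 3, 7, 15]

# An entry with clashscore 5 matches or beats 8 of the 10 reference entries
print(percentile_rank(5, archive_clashscores))
```

Computing the rank twice, once against the whole archive and once against entries of similar resolution, gives the two comparisons that each slider displays.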

Essential Research Reagent Solutions

Researchers performing structural validation require access to both computational tools and reference data resources. The following table catalogs key solutions in the validation toolkit.

Table 3: Essential Research Reagents for Structure Validation

| Resource Category | Specific Tool/Data | Function in Validation | Access Method |
|---|---|---|---|
| Reference Datasets | Top8000 (Protein) | High-quality reference for Ramachandran, rotamer, and CaBLAM distributions [15] | GitHub: MolProbity/reference_data [15] |
| Reference Datasets | RNA11 (RNA) | Reference for RNA backbone conformer analysis [15] | GitHub: MolProbity/reference_data [15] |
| Software Libraries | CCTBX | Computational Crystallography Toolbox; open-source library underlying Phenix and MolProbity [15] | GitHub: cctbx/cctbx_project [15] |
| Validation Services | wwPDB Validation Server | Pre-deposition validation service for checking models before submission [13] | http://validate.wwpdb.org [13] |
| Visualization Tools | KiNG | Java-based molecular viewer for analyzing MolProbity kinemage outputs [16] | GitHub: rlabduke/javadev [15] |
| Visualization Tools | ChimeraX | Modern molecular visualization with built-in validation display capabilities [18] | Download from UCSF [19] |

The wwPDB validation system, MolProbity, and the PSVS suite form a complementary ecosystem for protein structure validation, each with distinct strengths and applications. The wwPDB system provides the official, standardized validation essential for deposition and archival, with its five-slider report offering intuitive quality assessment. MolProbity delivers the most sensitive all-atom contact analysis and comprehensive geometry validation, particularly valuable during model building and refinement. The PSVS suite specializes in NMR structure validation, filling a critical methodological niche. The documented improvement in PDB structure quality metrics since these tools were introduced demonstrates their collective value to the structural biology community. Researchers should employ these resources in a complementary fashion: using MolProbity and PSVS during structure determination and refinement, and relying on the wwPDB validation report as the final arbiter of quality for deposition and publication.

A Practical Toolkit: Key Validation Metrics and How to Apply Them

Protein structure validation is a critical step in structural biology, ensuring that three-dimensional atomic models are geometrically realistic and energetically plausible before they are used in downstream applications such as drug design and functional analysis. These validation tools act as a final quality check, identifying potential errors in protein structures derived from experimental methods like X-ray crystallography and cryo-EM, or from computational predictions like homology modeling and AlphaFold. At the heart of modern validation are three principal metrics: the Ramachandran plot, which assesses the backbone torsion angles; the clashscore, which quantifies steric hindrance between atoms; and rotamer analysis, which evaluates the conformations of amino acid side chains. Together, these checks provide a comprehensive overview of a model's stereochemical quality, highlighting regions that may require refinement. Notably, even models with nearly perfect overall statistics can possess subtle geometric issues detectable through these analyses, underscoring their indispensable role in the structure determination pipeline [20].

The fundamental importance of these validation metrics has grown with the increasing number of structures solved at lower resolutions, where experimental data are insufficient to define atomic positions unambiguously. Furthermore, the rise of artificial intelligence-based structure prediction tools has generated an unprecedented number of protein models, making automated quality assessment essential. While these geometric checks are powerful, researchers are increasingly aware that they provide necessary but not always sufficient conditions for model accuracy. This has driven the development of additional validation criteria, such as hydrogen-bonding geometry, to provide independent assessment beyond traditional metrics [20]. This guide systematically compares the performance, implementation, and interpretation of the primary geometric validation tools, providing researchers with a framework for rigorous protein structure evaluation.

Core Validation Metrics and Their Structural Basis

Ramachandran Plot: Principles and Interpretation

The Ramachandran plot provides a fundamental check of protein backbone conformation by visualizing the allowed regions for the phi (φ) and psi (ψ) torsion angles of each amino acid residue. This analysis is based on the principle that steric clashes between atoms impose strict limitations on the possible combinations of these dihedral angles. The plot is divided into favored, allowed, and outlier regions, with the distribution of residues providing crucial information about backbone quality. By the classic PROCHECK criterion, a high-quality structure has over 90% of its residues in the most favored regions, with few or no outliers; MolProbity's more tightly drawn favored region sets a stricter target of roughly 98%. The Ramachandran plot Z-score (Rama-Z) offers a quantitative measure of how well the distribution of residues matches expectations from high-resolution structures, helping identify models with statistically unusual backbone conformations even when they lack formal outliers [20].

Recent studies have demonstrated that over-reliance on Ramachandran restraints during refinement can diminish the validating power of this tool. For example, some refined models achieve excellent overall statistics with 98-99% of residues in favored regions and no outliers, yet display highly unusual, symmetric clustering patterns around the most prominent peaks in the α-helical region. These patterns, detectable to a trained eye or through Rama-Z scores, indicate potential over-fitting during refinement that conventional favored/outlier counts alone might miss [20]. This highlights the importance of both quantitative metrics and visual inspection when interpreting Ramachandran plots for final model validation.
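For readers who want to see what a Ramachandran analysis computes before any region lookup, the sketch below calculates a signed torsion angle from four atomic positions. φ is the torsion over C(i-1), N(i), CA(i), C(i) and ψ is the torsion over N(i), CA(i), C(i), N(i+1). This is a minimal pure-Python illustration with hypothetical helper names and toy coordinates; it does not classify residues into favored or outlier regions, which requires reference distributions such as those used by MolProbity.

```python
import math

def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def _cross(a, b):
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )

def dihedral(p0, p1, p2, p3):
    """Signed torsion angle in degrees for four points, in the range (-180, 180]."""
    b0, b1, b2 = _sub(p1, p0), _sub(p2, p1), _sub(p3, p2)
    n1, n2 = _cross(b0, b1), _cross(b1, b2)
    x = _dot(n1, n2)
    y = math.sqrt(_dot(b1, b1)) * _dot(b0, n2)
    return math.degrees(math.atan2(y, x))

def phi_psi(c_prev, n, ca, c, n_next):
    """Backbone torsions for one residue from its flanking backbone atoms."""
    return dihedral(c_prev, n, ca, c), dihedral(n, ca, c, n_next)

# Toy geometry (not real protein coordinates): trans and cis arrangements
trans = dihedral((1, 0, 0), (0, 0, 0), (0, 0, 1), (-1, 0, 1))
cis = dihedral((1, 0, 0), (0, 0, 0), (0, 0, 1), (1, 0, 1))
print(abs(trans), cis)
```

In practice the five backbone atoms come from consecutive residues in a coordinate file, and the resulting (φ, ψ) pair is looked up against residue-type-specific contours.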

Clashscore: Quantifying Steric Hindrance

The clashscore is a measure of atomic packing quality, calculated as the number of serious steric overlaps per thousand atoms. It is derived from all-atom contact analysis, where atomic van der Waals radii are used to detect unnaturally close contacts between non-bonded atoms. A lower clashscore indicates better atomic packing and fewer steric violations. High-quality structures have clashscores that rank among the best for structures at comparable resolution, with values near zero representing optimal packing [20] [21].

The MolProbity server provides one of the most widely used implementations of clashscore calculation, utilizing all-atom contact analysis to identify steric clashes. This analysis is particularly valuable for detecting errors in side-chain placement and highlighting regions where the molecular density may be misinterpreted. In practice, the clashscore serves as a sensitive indicator of overall model quality, with high values often correlating with other geometric problems. As with other validation metrics, optimal target values depend on the structure's resolution, with tighter thresholds applied to high-resolution models [21].
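The arithmetic behind the clashscore is simple once the contact analysis has produced a list of overlaps. The sketch below assumes that list is already available (in MolProbity it comes from all-atom contact analysis with explicit hydrogens) and applies a 0.4 Å cutoff for a serious overlap. The function name and the overlap values are hypothetical.

```python
def clashscore(overlaps_angstrom, n_atoms, threshold=0.4):
    """Serious steric overlaps (>= threshold, in angstroms) per 1000 atoms."""
    serious = sum(1 for overlap in overlaps_angstrom if overlap >= threshold)
    return 1000.0 * serious / n_atoms

# Hypothetical van der Waals overlaps (angstroms) found in a 2500-atom model
overlaps = [0.10, 0.45, 0.52, 0.39, 0.80]

# Three of the five overlaps reach the 0.4 angstrom cutoff
print(clashscore(overlaps, n_atoms=2500))
```

Because the score is normalized per 1000 atoms, it can be compared across structures of different size, but the atom count must include hydrogens if the overlaps were computed with them.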

Rotamer Analysis: Evaluating Side-Chain Conformations

Rotamer analysis assesses the quality of amino acid side-chain conformations by comparing them to preferred rotameric states observed in high-resolution structures. Side-chain rotamers represent low-energy conformations determined by rotations around torsion angles, with certain orientations being strongly favored due to steric and energetic considerations. The analysis identifies outliers—side chains in unlikely, high-energy conformations—which may indicate errors in modeling or refinement. For high-quality structures, the percentage of rotamer outliers should be minimal (typically <1-2%) [20].

Specialized tools like NQ-Flipper specifically target unfavorable rotamers of asparagine (Asn) and glutamine (Gln) residues, which can often flip to alternative orientations with minimal energetic cost but significant implications for hydrogen-bonding networks. Rotamer analysis has proven particularly valuable for validating functionally important regions, such as enzyme active sites and binding pockets, where accurate side-chain placement is critical for understanding biological mechanisms. When combined with Ramachandran and clashscore analysis, it provides a comprehensive picture of both backbone and side-chain geometry [21].

Comparative Performance of Validation Tools

Various servers and software packages implement the core validation metrics with different algorithms and reference databases. The following table summarizes key tools used by the structural biology community:

Table 1: Key Protein Structure Validation Tools and Their Features

| Tool Name | Primary Function | Key Features | Access Method |
|---|---|---|---|
| MolProbity | Comprehensive validation | All-atom contact analysis, clashscore, Ramachandran plots, rotamer analysis | Web server [21] |
| PROCHECK | Stereochemical quality check | Detailed Ramachandran plot analysis, structure comparison | Standalone program [21] |
| WHAT_CHECK | Structure verification | Multiple geometric checks, derived from WHAT IF program | Standalone program [21] |
| Verify3D | Structure-sequence compatibility | 3D-1D profile compatibility assessment | Web server [21] |
| Phenix | Refinement and validation | Integrated validation tools, hydrogen-bonding parameter analysis | Software suite [20] |

MolProbity stands out for its integrated all-atom approach, combining clashscore, Ramachandran, and rotamer analyses into a single validation report. Its emphasis on identifying clear, actionable problems has made it particularly valuable for both experimental structure determination and computational model building. PROCHECK offers more detailed Ramachandran plot analysis, while WHAT_CHECK provides a broader range of geometric checks. The integration of these tools into refinement pipelines like Phenix has streamlined the validation process, allowing for real-time quality assessment during model building [20] [21].

Quantitative Comparison of Tool Performance

Different validation tools can produce varying results for the same structure due to differences in reference datasets, statistical methods, and parameterization. The following table summarizes a comparative analysis of protein structures validated using multiple approaches:

Table 2: Comparative Validation Metrics for Example Protein Structures

| PDB Code/Model Type | Resolution (Å) | Ramachandran Favored (%) | Ramachandran Outliers (%) | Clashscore | Rotamer Outliers (%) | Validation Tool |
|---|---|---|---|---|---|---|
| 5j1f [20] | 3.0 | 99.5 | 0 | 0 | 0 | MolProbity |
| 5xb1 [20] | 4.0 | 98.0 | 0 | 4 | 0 | MolProbity |
| 6akf [20] | 8.0 | 98.2 | 0 | 8 | 0 | MolProbity |
| 6mdo [20] | 3.9 | 99.7 | 0 | 7 | 0 | MolProbity |
| Gαi1 (AF) [22] | Prediction | N/R | N/R | N/R | N/R | pLDDT: High |
| Gαi1 (HM) [22] | Prediction | N/R | N/R | N/R | N/R | Z-score: 0.67 |
| APC (AF) [22] | Prediction | N/R | N/R | N/R | N/R | pLDDT: Moderate-High |
| APC (HM) [22] | Prediction | N/R | N/R | N/R | N/R | Z-score: -1.41 |

Abbreviations: AF: AlphaFold; HM: Homology Modeling; N/R: Not reported in the cited study

The table illustrates that conventional validation metrics can appear excellent even in structures with unusual geometric properties. For instance, all four example structures (5j1f, 5xb1, 6akf, 6mdo) show nearly perfect Ramachandran statistics and minimal clashscores, yet visual inspection reveals atypical clustering patterns in their Ramachandran plots [20]. For predicted models, quality measures include traditional geometric validation as well as prediction-specific metrics like pLDDT for AlphaFold and Z-scores for homology models, with optimal models typically showing Z-scores greater than zero [22].

Performance Across Structure Types and Resolutions

Validation tool performance varies significantly with structure resolution and determination method. High-resolution structures (<2.0 Å) typically present fewer validation challenges, with most tools agreeing on quality assessments. At medium resolutions (2.0-3.5 Å), validation becomes more critical as increased coordinate error can lead to more geometric violations. At low resolutions (>3.5 Å), particularly in cryo-EM structures, validation metrics must be interpreted with caution, as over-restraining during refinement can produce artificially good statistics that mask underlying problems [20].

For computationally predicted structures, traditional validation metrics remain essential but may be supplemented with prediction-specific measures. AlphaFold models are accompanied by pLDDT scores per residue, which estimate confidence but are not a substitute for geometric validation. Homology models show varying performance depending on template quality and sequence identity, with Z-scores providing an overall quality measure relative to high-resolution experimental structures [22]. Recent studies comparing homology modeling and AlphaFold have found that while both can produce high-quality models, their superiority depends on specific criteria, with homology models successfully incorporating experimental aspects from templates and AlphaFold excelling in the absence of suitable templates [22].
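Per-residue pLDDT values are stored in the B-factor column of AlphaFold coordinate files, so they can be read with a few lines of fixed-column parsing. The sketch below is an illustration under stated assumptions: the helper names are hypothetical, the four-residue model is synthetic (built in code so that the column positions are exact), and the cutoff of 70 follows the commonly used confidence bands and can be adjusted.

```python
def make_atom_line(serial, name, resname, chain, resseq, b_factor):
    """Build a fixed-column PDB ATOM record (dummy coordinates, occupancy 1.00)."""
    return (
        f"ATOM  {serial:5d} {name:<4s} {resname:>3s} {chain}{resseq:4d}    "
        f"{0.0:8.3f}{0.0:8.3f}{0.0:8.3f}{1.0:6.2f}{b_factor:6.2f}"
    )

def plddt_per_residue(pdb_text):
    """Map (chain, residue number) to the pLDDT stored on each CA atom."""
    scores = {}
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            key = (line[21], int(line[22:26]))
            scores[key] = float(line[60:66])  # B-factor column holds pLDDT
    return scores

def low_confidence(scores, cutoff=70.0):
    """Residues whose pLDDT falls below the chosen cutoff."""
    return sorted(key for key, value in scores.items() if value < cutoff)

# Synthetic four-residue model with hypothetical pLDDT values
example_pdb = "\n".join(
    make_atom_line(i, " CA ", res, "A", i, plddt)
    for i, (res, plddt) in enumerate(
        [("MET", 95.2), ("ALA", 88.1), ("GLY", 64.5), ("SER", 41.0)], start=1
    )
)

scores = plddt_per_residue(example_pdb)
print(low_confidence(scores))
```

Residues flagged this way are the ones where geometric validation and, ideally, experimental evidence matter most, since pLDDT reports confidence rather than stereochemical correctness.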

Experimental Protocols for Structure Validation

Standard Workflow for Comprehensive Validation

A robust validation protocol incorporates multiple tools and metrics to provide a comprehensive assessment of protein structure quality. The following workflow diagram illustrates the key steps in a standard validation pipeline:

Figure: Protein structure validation workflow.

This systematic approach ensures that all aspects of structural geometry are thoroughly evaluated. The process begins with Ramachandran plot analysis to assess backbone conformation, followed by clashscore calculation to evaluate steric clashes, and rotamer analysis to check side-chain placements. Additional validation, including hydrogen-bonding geometry and Cβ deviations, provides complementary metrics. Finally, all results are integrated for a comprehensive assessment. Structures passing all checks are deemed reliable, while those failing require iterative refinement and re-validation [20] [21].

Implementation with Specific Tools

MolProbity Protocol:

- Upload your structure file (PDB format) to the MolProbity server (http://molprobity.biochem.duke.edu/)

- Run the automated analysis, which includes:

  - All-atom contact analysis for clashscore calculation

  - Ramachandran plot evaluation using updated dihedral angle distributions

  - Rotamer analysis using updated rotamer libraries

  - Cβ deviation checks

- Review the integrated validation report, paying attention to percentiles relative to structures at similar resolutions

- Use the interactive visualization to identify specific problematic residues for correction

PROCHECK Protocol:

- Prepare your structure file in PDB format

- Run PROCHECK either as a standalone program or through web interfaces

- Generate the Ramachandran plot and associated statistics

- Analyze the residue-by-residue stereochemical quality reports

- Compare the G-factors for dihedral angles, bond lengths, and bond angles against expected values

For low-resolution structures, additional validation using hydrogen-bonding parameters is recommended. The phenix.hbond tool in Phenix analyzes hydrogen-bond geometry distributions and compares them to expected distributions from high-resolution structures, providing an independent validation metric not typically used as a refinement target [20].

Research Reagent Solutions for Protein Structure Validation

Table 3: Essential Tools and Resources for Protein Structure Validation

| Tool/Resource | Type | Primary Function | Access Method |
|---|---|---|---|
| MolProbity | Validation Server | Comprehensive all-atom validation | Web server [21] |
| PROCHECK | Software | Stereochemical quality analysis | Standalone program [21] |
| WHAT_CHECK | Software | Structure verification suite | Standalone program [21] |
| NQ-Flipper | Specialized Tool | Detection of unfavorable Asn/Gln rotamers | Web server [21] |
| Verify3D | Validation Server | 3D-1D profile compatibility | Web server [21] |
| PDB_REDO | Database | Re-refined structure database | Web database |
| Protein Data Bank | Database | Repository for validated structures | Web database [21] |

These tools represent the essential toolkit for researchers conducting protein structure validation. MolProbity serves as the central comprehensive validation system, integrating multiple analyses into a unified report. PROCHECK provides more specialized Ramachandran plot analysis, while WHAT_CHECK offers broader geometric checks. NQ-Flipper addresses the specific problem of ambiguous asparagine and glutamine orientations that can be difficult to resolve experimentally. Verify3D offers a complementary approach by assessing the compatibility of the 3D structure with its amino acid sequence. Together, these resources enable researchers to thoroughly evaluate their protein models before deposition in the Protein Data Bank or use in functional studies [21].

Advanced Applications and Emerging Trends

Validation in the Age of AI-Based Structure Prediction

The revolutionary advances in AI-based protein structure prediction, recognized by the 2024 Nobel Prize in Chemistry, have created new challenges and opportunities for structure validation. While AlphaFold2 and related tools can predict structures with unprecedented accuracy, their outputs still require rigorous validation, particularly for regions with low pLDDT confidence scores. Traditional geometric checks remain essential for assessing the physical plausibility of AI-predicted models. Additionally, the relationship between prediction confidence metrics (like pLDDT) and traditional validation metrics is an area of active research [23].

Comparative studies have shown that AlphaFold generally produces high-quality structures, though high-confidence regions sometimes disagree with experimental data. Homology modeling, while successful in incorporating experimental aspects from templates, may struggle with accuracy in the absence of suitable templates. For both approaches, geometric validation provides crucial information about model quality beyond internal confidence measures [22]. This is particularly important for functional sites, such as enzyme active sites and binding pockets, where accurate geometry is essential for interpreting biological activity.

Hydrogen-Bonding Parameters as Complementary Validators

Because conventional validation metrics lose discriminating power at low resolution, hydrogen-bonding parameters have emerged as valuable complementary validators. Systematic analysis of hydrogen-bond geometries in high-resolution structures reveals distinct, conserved distributions that can serve as reference data. The phenix.hbond tool implements this analysis, providing a validation metric that is difficult to use directly as a refinement target and therefore remains independent of the refinement process [20].

This approach is particularly valuable for identifying subtle geometric issues in models that pass traditional validation checks. For example, structures with nearly perfect Ramachandran statistics, minimal clashscores, and no rotamer outliers can still display unusual hydrogen-bonding patterns indicative of underlying problems. The development of this and other novel validation methods represents an important trend in protein structure validation, moving beyond the standard triad of Ramachandran plots, clashscores, and rotamer analysis toward more comprehensive assessment [20].

Geometric quality checks using Ramachandran plots, clashscores, and rotamer analysis remain foundational to protein structure validation. While numerous tools implement these analyses, MolProbity stands out for its integrated all-atom approach and user-friendly interface. However, as demonstrated by structures with excellent conventional metrics but unusual geometric properties, these standard checks should be complemented with additional validation methods, particularly hydrogen-bonding analysis. The continuing evolution of protein structure prediction and determination methods ensures that validation will remain an active and critical area of research, with ongoing development of new metrics and approaches to ensure the reliability of protein structural models.

In structural biology and computational drug design, quantifying the similarity between three-dimensional protein structures is a fundamental task. Accurate structure comparison is vital for understanding protein function, evolutionary relationships, and for validating computational models against experimental data. Distance-based metrics provide the quantitative foundation for these comparisons, enabling researchers to objectively assess structural similarity across various contexts and scales. These metrics have become indispensable tools in protein structure prediction, molecular docking, and drug development workflows.

The evolution of structural comparison metrics has progressed from global measures that provide overall similarity assessments to more sophisticated region-specific measures that capture local structural variations. Each metric offers distinct advantages and sensitivities to different aspects of structural similarity, making them suitable for specific applications in structural biology. This guide provides a comprehensive comparison of the primary distance-based metrics used in protein structure validation, examining their underlying methodologies, interpretative frameworks, and appropriate applications to equip researchers with the knowledge to select optimal metrics for their specific research questions.

Core Metrics for Global and Local Structure Assessment

Root Mean Square Deviation (RMSD)

Root Mean Square Deviation (RMSD) is one of the most established metrics for quantifying the average distance between atoms of two superimposed protein structures. The calculation involves optimally aligning two structures to minimize the distances between corresponding atoms, then computing the square root of the average of these squared distances. The mathematical formulation is expressed as $\mathrm{RMSD} = \sqrt{\frac{1}{N}\sum_{i=1}^{N} d_i^2}$, where $d_i$ represents the distance between the i-th pair of corresponding atoms and $N$ is the total number of atom pairs compared. An RMSD of 0 indicates perfect structural identity, while increasing values reflect greater structural divergence [24].

RMSD provides a global measure of structural similarity but has inherent limitations. Because every atom pair contributes equally and deviations are squared before averaging, a small number of badly placed atoms can dominate the value. This makes RMSD hard to interpret when comparing structures with flexible regions or terminal extensions, since it cannot distinguish a localized deviation from a global topological difference. Additionally, RMSD values are length-dependent, making comparisons across different-sized proteins challenging [25]. The interpretative framework for RMSD values is well-established: values below 2.0 Å typically indicate high structural similarity, values between 2.0-4.0 Å represent moderate similarity with potentially significant local variations, and values exceeding 4.0 Å suggest substantial structural differences [26].

Template Modeling Score (TM-score)

The Template Modeling Score (TM-score) was developed to address several limitations of RMSD, particularly its sensitivity to local variations and dependence on protein length. TM-score normalizes distances by a length-dependent scale, so that the magnitude of the score is independent of protein length, and it weights smaller distances more strongly than larger ones, making it more sensitive to global fold similarity than to local structural variations. The score lies in the interval (0,1], where 1 indicates a perfect match. Statistical analyses have established that scores below 0.17 correspond to randomly chosen unrelated proteins, while scores above 0.5 generally indicate structures sharing the same fold classification in SCOP/CATH [27] [25].

TM-score provides a more robust assessment of global topological similarity compared to RMSD. Its statistical foundation allows for quantitative interpretation of structural significance. For instance, a TM-score of 0.5 corresponds to a P-value of 5.5×10⁻⁷, indicating that one would need to consider approximately 1.8 million random protein pairs to find a match with TM-score ≥0.5 [25]. This statistical rigor makes TM-score particularly valuable for fold recognition and protein classification studies where global topology is more relevant than atomic-level precision.
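The scoring function itself is compact. The sketch below evaluates the standard TM-score formula, with length-dependent scale d0 = 1.24·(L − 15)^(1/3) − 1.8 Å, for one fixed superposition. Full implementations additionally search over superpositions to maximize the score, so this is a simplified illustration. The function names and the 0.5 Å floor on d0 for very short chains are assumptions made here.

```python
import numpy as np

def tm_d0(target_length):
    """Length-dependent distance scale d0 in angstroms."""
    d0 = 1.24 * np.cbrt(target_length - 15) - 1.8
    # Assumption: floor d0 at 0.5 A so very short chains stay well defined.
    return max(float(d0), 0.5)

def tm_score(distances, target_length):
    """TM-score for a fixed superposition.

    distances: distances (in angstroms) between aligned residue pairs.
    target_length: length of the reference protein used for normalization.
    Unaligned residues contribute nothing to the sum.
    """
    d = np.asarray(distances, dtype=float)
    d0 = tm_d0(target_length)
    return float(np.sum(1.0 / (1.0 + (d / d0) ** 2)) / target_length)
```

Each aligned pair contributes between 0 and 1, and a pair separated by exactly d0 contributes 0.5. Large deviations therefore saturate instead of dominating the score, unlike in RMSD.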

Global Distance Test (GDT)

The Global Distance Test (GDT) quantifies structural similarity by calculating the largest set of equivalent Cα atoms that fall within specified distance cutoffs after optimal superposition. Unlike RMSD, which provides a single average value, GDT typically generates multiple scores at different distance thresholds (commonly 1, 2, 4, and 8 Å), offering a more nuanced view of structural similarity at different spatial scales. The final GDT score is often reported as the average percentage of residues within these distance cutoffs [26].

GDT is particularly valuable for assessing protein structure prediction accuracy, where it provides insights into the fraction of correctly positioned residues. However, the choice of distance cutoffs introduces an element of subjectivity, as optimal thresholds may vary depending on the specific application and protein characteristics. GDT scores are expressed as percentages, with values exceeding 90% indicating high accuracy, 50-90% representing acceptable similarity depending on research context, and values below 50% suggesting poor structural agreement [26].
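The averaging step can be illustrated with a short sketch. It assumes the per-residue Cα distances have already been computed under a single superposition. Production implementations such as LGA search many superpositions to maximize the count at each cutoff, so the value here is a lower bound on the true GDT. The function name `gdt` is a hypothetical helper.

```python
import numpy as np

def gdt(distances, n_reference, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Average percentage of reference residues within each distance cutoff.

    distances: C-alpha distances (angstroms) for aligned residue pairs
               under one fixed superposition (an assumption of this sketch).
    n_reference: number of residues in the reference structure.
    cutoffs: (1, 2, 4, 8) gives GDT_TS; (0.5, 1, 2, 4) gives GDT_HA.
    """
    d = np.asarray(distances, dtype=float)
    fractions = [np.count_nonzero(d <= c) / n_reference for c in cutoffs]
    return 100.0 * float(np.mean(fractions))
```

Passing `cutoffs=(0.5, 1.0, 2.0, 4.0)` yields the stricter high-accuracy variant from the same distance list.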

Local Distance Difference Test (LDDT)

The Local Distance Difference Test (LDDT) is a local assessment metric that evaluates how well interatomic distances in a reference structure are preserved in a model, without requiring superposition. LDDT operates by comparing distances between atom pairs in reference and model structures within a specified radius, making it particularly valuable for assessing local structural quality and insensitive to domain movements. LDDT can be reported per residue as well as globally. The related pLDDT is the predicted LDDT that tools such as AlphaFold output as a per-residue confidence estimate when no reference structure is available, offering granular insights into expected local model quality [26].

LDDT scores, expressed here on a 0-100 scale, are interpreted as follows: scores above 80 indicate high local accuracy, scores between 50-80 represent moderate reliability, and scores below 50 suggest low confidence in local structural elements. This residue-level assessment is particularly valuable for identifying poorly modeled regions and understanding local variations in structural quality, especially in flexible loops or terminal regions where global metrics like RMSD may provide misleading assessments [26].
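A minimal sketch of the superposition-free calculation follows. It is simplified to Cα atoms only and omits the stereochemical checks of the full method. The 15 Å inclusion radius and the 0.5, 1, 2, and 4 Å tolerance thresholds follow the commonly used defaults, and the function name `lddt_ca` is illustrative.

```python
import numpy as np

def lddt_ca(reference, model, radius=15.0, thresholds=(0.5, 1.0, 2.0, 4.0)):
    """Simplified C-alpha lDDT on a 0-100 scale.

    reference, model: (N, 3) coordinate arrays for the same N residues.
    Returns (global_score, per_residue_scores).
    """
    ref = np.asarray(reference, dtype=float)
    mod = np.asarray(model, dtype=float)
    if ref.shape != mod.shape:
        raise ValueError("reference and model must list the same residues")
    # Pairwise distance matrices; no superposition is needed.
    d_ref = np.linalg.norm(ref[:, None, :] - ref[None, :, :], axis=-1)
    d_mod = np.linalg.norm(mod[:, None, :] - mod[None, :, :], axis=-1)
    # Only pairs that are close in the reference are scored.
    considered = (d_ref < radius) & ~np.eye(len(ref), dtype=bool)
    diff = np.abs(d_ref - d_mod)
    # Fraction of thresholds under which each distance is preserved.
    preserved = np.mean([diff < t for t in thresholds], axis=0)
    kept = preserved * considered
    counts = considered.sum(axis=1)
    per_residue = np.full(len(ref), np.nan)
    has_neighbours = counts > 0
    per_residue[has_neighbours] = (
        100.0 * kept.sum(axis=1)[has_neighbours] / counts[has_neighbours]
    )
    total = considered.sum()
    global_score = 100.0 * kept.sum() / total if total else float("nan")
    return float(global_score), per_residue
```

Because only internal distances are compared, rotating or translating the model leaves the score unchanged, and a displaced domain lowers the score only for residue pairs that span the domain boundary.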

Table 1: Comparative Overview of Key Protein Structure Metrics

| Metric | Scope | Scale | Interpretation Range | Key Applications |
|---|---|---|---|---|
| RMSD | Global | Atomic coordinates | <2.0 Å (high similarity); 2.0-4.0 Å (moderate); >4.0 Å (low similarity) | Molecular dynamics trajectories; local conformational changes |
| TM-score | Global | Topological similarity | <0.17 (random similarity); 0.17-0.5 (possible relatedness); >0.5 (same fold) | Fold recognition; template-based modeling |
| GDT | Global | Residue percentages | <50% (low accuracy); 50-90% (moderate); >90% (high accuracy) | Protein structure prediction; global topology assessment |
| LDDT/pLDDT | Local | Per-residue confidence | <50 (low confidence); 50-80 (moderate); >80 (high confidence) | Local quality assessment; model validation |

Experimental Protocols for Metric Validation

Standardized RMSD Calculation Workflow

The protocol for calculating RMSD between two protein structures requires careful preparation and execution to ensure meaningful results. First, structures must be preprocessed by removing non-protein atoms (waters, ions, cofactors) unless specifically relevant to the analysis. The protein structures must then be optimally superimposed using algorithms such as least-squares fitting to minimize the overall distance between corresponding atoms. This alignment step is crucial as it ensures that RMSD values reflect genuine structural differences rather than arbitrary rotational or translational orientations [24].

Following alignment, the RMSD calculation proceeds with computing the Euclidean distance between each pair of equivalent atoms. These distances are squared, summed, and divided by the total number of atom pairs to obtain the mean squared deviation. The final RMSD value is derived by taking the square root of this mean. Validation of RMSD calculations requires careful inspection of the alignment quality and confirmation that equivalent atoms are properly matched. Poor alignments or incorrect atom correspondences can produce artificially elevated RMSD values that do not reflect true structural differences [24].
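The superposition and calculation steps can be sketched with NumPy. The example uses the Kabsch algorithm as one standard least-squares fitting method. It assumes the two coordinate arrays are already matched atom for atom, and the function name `kabsch_rmsd` is illustrative.

```python
import numpy as np

def kabsch_rmsd(P, Q):
    """RMSD between two (N, 3) coordinate sets after optimal superposition.

    Assumes row i of P and row i of Q are equivalent atoms.
    """
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    if P.shape != Q.shape:
        raise ValueError("P and Q must contain the same number of atoms")
    # Remove translation by centering both sets on their centroids.
    Pc = P - P.mean(axis=0)
    Qc = Q - Q.mean(axis=0)
    # Covariance matrix and its singular value decomposition.
    H = Pc.T @ Qc
    U, _, Vt = np.linalg.svd(H)
    # Correct for a possible reflection so the result is a proper rotation.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    # Rotate P onto Q, then apply the RMSD formula.
    diff = Pc @ R.T - Qc
    return float(np.sqrt((diff ** 2).sum() / len(P)))
```

The reflection check matters for proteins: without it, the fit could map a structure onto its mirror image and report a misleadingly low RMSD.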

TM-score Statistical Validation Protocol

The statistical validation of TM-score involves large-scale comparisons across non-redundant protein datasets to establish significance thresholds. The standard protocol begins with curating a dataset of non-homologous single-domain proteins (typically with sequence identity <25%) with lengths between 80-200 amino acids. All-to-all gapless structural alignments are performed across this dataset, generating millions of structural comparisons. For each protein pair, the shorter protein is superposed on the larger protein using a sliding window approach to generate diverse structural alignments [25].
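The sliding-window step can be pictured as enumerating every gapless placement of the shorter chain along the longer one. The sketch below generates the residue pairings for each offset. It assumes that only fully overlapping windows are considered, which may differ from the exact procedure in [25], and the function name `gapless_windows` is hypothetical.

```python
def gapless_windows(len_short, len_long):
    """Yield (offset, pairs) for each gapless placement of the shorter chain.

    pairs is a list of (index_in_short, index_in_long) residue pairings.
    Assumption: the shorter chain must lie entirely within the longer one.
    """
    if len_short > len_long:
        raise ValueError("len_short must not exceed len_long")
    for offset in range(len_long - len_short + 1):
        yield offset, [(i, i + offset) for i in range(len_short)]
```

Each pairing would then be superposed and scored, producing the population of random-pair TM-scores from which the background distribution is estimated.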

The resulting TM-score distribution follows an extreme value distribution, enabling the calculation of P-values for observed TM-score values. This statistical framework allows researchers to determine the significance of structural similarity beyond random expectation. The second validation phase involves examining the relationship between TM-score values and known fold classifications from databases like SCOP and CATH. This analysis reveals that TM-score = 0.5 serves as an approximate threshold for fold assignment, with scores above this threshold predominantly representing the same fold and scores below typically indicating different folds [25].

Diagram 1: Structural similarity assessment workflow

Metric Performance in Structure Prediction Assessment

CASP Benchmark Results

The Critical Assessment of Protein Structure Prediction (CASP) experiments provide comprehensive evaluations of metric performance across diverse protein targets. These experiments reveal that different metrics offer complementary insights into various aspects of prediction quality. TM-score is widely used to assess global fold correctness, particularly for targets with distant evolutionary relationships. On CASP15 benchmark targets, methods such as DeepSCFold have reported TM-score improvements of 10-12% over baseline approaches, illustrating how the metric is used to quantify gains in topological accuracy [12].

GDT scores provide valuable granularity through their multiple distance thresholds, offering insights into the fraction of residues meeting specific accuracy standards. The GDT-TS (Total Score) and GDT-HA (High Accuracy) variants cater to different precision requirements: GDT-TS averages over cutoffs of 1, 2, 4, and 8 Å, while GDT-HA uses the tighter cutoffs of 0.5, 1, 2, and 4 Å and so provides a stricter assessment for high-accuracy models. RMSD remains valuable for local structure validation but shows limitations for full-length protein assessment, particularly for proteins with flexible termini or domain movements [26].

Application in Challenging Targets

Recent assessments on challenging protein classes, including snake venom toxins and antibody-antigen complexes, demonstrate the complementary strengths of different metrics. For these targets with complex disulfide connectivity or binding interfaces, LDDT provides crucial insights into local environment accuracy that global metrics may overlook. In antibody-antigen complexes, where global folds may be preserved but binding interfaces show critical variations, LDDT successfully identified interface inaccuracies that correlated with functional impairment [28] [12].