Systematic Reagent Testing: A Complete Guide to Identifying and Eliminating Contamination Sources

Abstract

This guide provides researchers, scientists, and drug development professionals with a comprehensive framework for systematic reagent contamination testing. It covers foundational concepts of contamination sources, practical methodologies for detection and control, advanced troubleshooting techniques, and validation protocols to ensure data integrity and regulatory compliance in biomedical research and diagnostics.

Understanding Contamination: Sources, Risks, and Impact on Data Integrity

Contamination represents a critical and pervasive challenge in scientific research and drug development, with implications ranging from compromised product quality to erroneous biological conclusions. This article defines two primary contamination categories: physical particulate matter and biological microbial DNA. Effective management of these contaminants is essential for research integrity, particularly in sensitive fields like microbiome studies and pharmaceutical manufacturing.

Physical particulate contamination involves foreign inorganic or organic particles introduced during manufacturing or handling. Meanwhile, low-biomass microbiome studies are exceptionally vulnerable to microbial DNA contamination from laboratory reagents and environments, which can drastically alter results and interpretations [1] [2]. This application note establishes systematic protocols for detecting, characterizing, and mitigating both contamination types within a comprehensive reagent quality assurance framework.

Quantitative Contamination Profiles

Data from recent studies quantifying microbial DNA contamination in common laboratory reagents are summarized in the tables below.

Table 1: Microbial DNA Contamination in Commercial PCR Enzymes [3]

| Analytical Method | Number of Enzymes Tested | Number Contaminated | Contamination Rate | Key Contaminants Identified |
|---|---|---|---|---|
| Endpoint PCR & Sanger Sequencing | 9 | 7 | 78% | Multiple bacterial species |
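
Because only nine enzymes were tested, the 78% figure carries wide uncertainty. The sketch below recomputes the rate from the counts in Table 1 and adds a Wilson score interval; the choice of interval method and the 95% level are assumptions made for illustration and are not taken from the cited study.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


# Counts from Table 1: 7 of 9 enzymes showed bacterial DNA contamination.
contaminated, tested = 7, 9
rate = contaminated / tested
low, high = wilson_interval(contaminated, tested)
print(f"rate = {rate:.0%}, interval = {low:.0%} to {high:.0%}")
```

The interval spans roughly 45% to 94%, which suggests treating the rate as a strong signal that contamination is common rather than as a precise estimate.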

Table 2: Background Microbiota in DNA Extraction Kits via mNGS [4]

| Reagent Brand | Input Material | Key Findings | Clinical Implications |
|---|---|---|---|
| Brand M | Molecular-grade water | Distinct contamination profiles across brands and lots. | Detection of common pathogenic species affecting diagnosis. |
| Brand Q | Molecular-grade water | Significant batch-to-batch variability. | Highlights need for lot-specific profiling and negative controls. |
| Brand R | Molecular-grade water | Site-specific environmental contaminants identified. | Confirms healthy blood may lack consistent microbiome. |
| Brand Z | ZymoBIOMICS Spike-in Control | | |

Experimental Protocols

Protocol 1: Particulate Matter Analysis and Characterization

This protocol outlines a systematic approach for isolating and identifying unknown particulate contaminants using microscopy and spectroscopy techniques [5].

Materials

- Light microscope (with polarized capability)

- Scanning Electron Microscope (SEM) with Energy-Dispersive X-Ray Spectroscopy (EDX)

- Attenuated total reflectance Fourier-transform infrared (ATR-FTIR) microscope

- Sterile forceps, sample vials, and appropriate packaging for transport

Procedure

- Sample Collection: Using sterile forceps, collect particulate matter under controlled conditions to avoid cross-contamination. For particles embedded in a product, carefully excise a portion containing the contaminant.

- Visual Examination: Examine particles under a light microscope to document morphology, size, shape, and color using photomicrography.

- Elemental Analysis (SEM/EDX): Transfer isolated particles to SEM for high-resolution imaging. Use EDX to determine elemental composition.

- Molecular Identification (ATR-FTIR): Analyze particles via ATR-FTIR spectroscopy to identify functional groups and organic compounds by matching spectral data to reference libraries.

- Data Integration and Reporting: Correlate data from all techniques to identify the contaminant and perform root cause analysis.

Protocol 2: Testing Reagents for Microbial DNA Contamination

This protocol describes a method to screen commercial PCR enzymes for bacterial DNA contamination using endpoint PCR and Sanger sequencing [3].

Materials

- Test commercial PCR enzymes and their respective buffers

- Primers targeting the V3-V4 hypervariable region of the 16S rRNA gene

- Molecular biology-grade water

- dNTP mix

- Positive control DNA (e.g., from E. coli)

- Agarose gel electrophoresis equipment

- Sanger sequencing services

Procedure

- Reaction Setup: For each PCR enzyme, set up two reactions in parallel under a laminar flow hood using aseptic technique.

  - Test Reaction: 25 µL total volume containing PCR mix, primers, and molecular biology-grade water (no template DNA).

  - Positive Control: 25 µL total volume containing PCR mix, primers, and E. coli DNA.

- PCR Amplification: Run reactions per manufacturer's recommended cycling conditions.

- Gel Electrophoresis: Separate 5 µL of PCR product on a 1% agarose gel. A band at ~500 bp in the test reaction indicates bacterial DNA contamination.

- Sequence and Identify Contaminants: Excise bands from the gel, purify, and submit for Sanger sequencing. Analyze sequences using BLAST against the NCBI database.

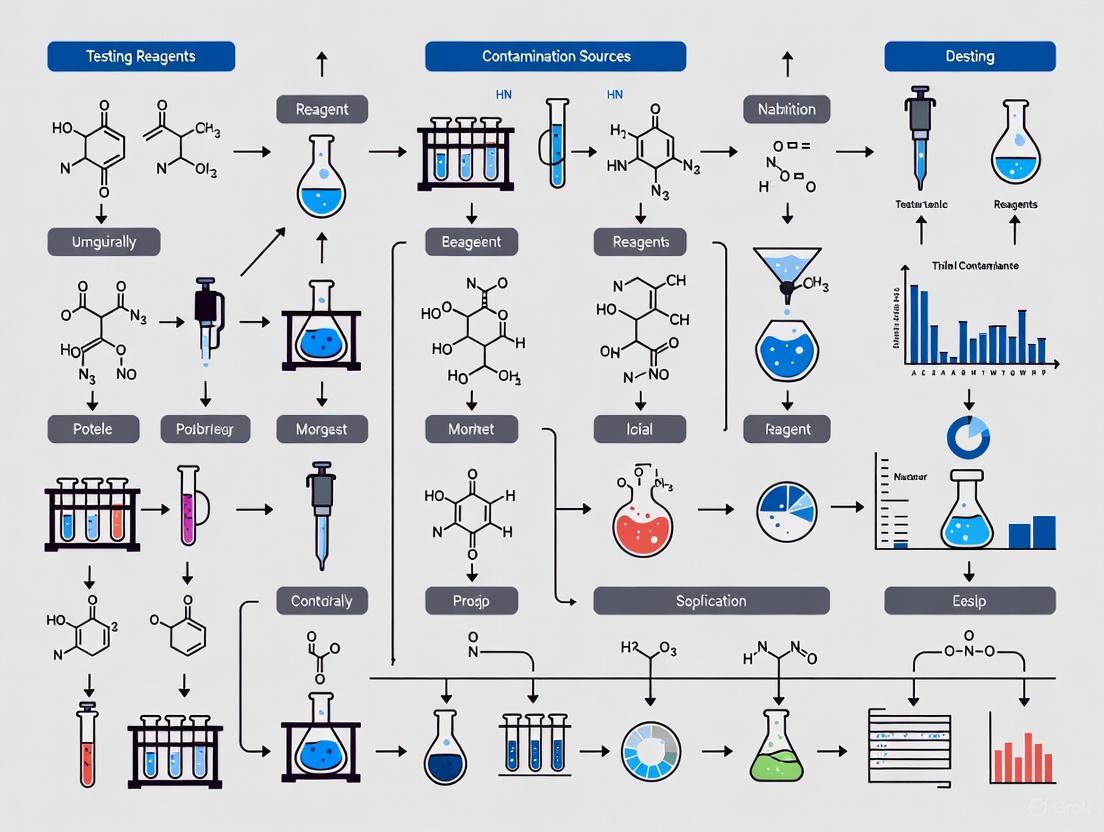

Workflow Visualization

The following diagram illustrates the logical workflow for a systematic contamination investigation, integrating both particulate and microbial analysis paths.

The Scientist's Toolkit

Essential materials and solutions for implementing the contamination analysis protocols are listed below.

Table 3: Research Reagent Solutions for Contamination Analysis

| Item | Function | Application Example |
|---|---|---|
| Molecular Biology-Grade Water | DNA-free water for preparing negative controls. | Used as input in DNA extraction kits to test for reagent-derived contamination [4]. |
| ZymoBIOMICS Spike-in Control | Defined microbial community as an internal positive control. | Spiked into samples to monitor extraction efficiency and identify contaminants in mNGS workflows [4]. |
| 16S rRNA Gene Primers | Primers for amplifying the bacterial 16S rRNA gene. | Used in endpoint PCR to screen for bacterial DNA in reagents [3]. |
| DNA Removal Reagents (e.g., Bleach) | Degrades contaminating DNA on surfaces and equipment. | Decontaminate sampling equipment, tools, and work surfaces between samples [1]. |
| Personal Protective Equipment (PPE) | Clean suits, gloves, masks to limit sample exposure. | Reduces contamination from human operators during sampling of low-biomass specimens [1]. |

Contamination is one of the most persistent and costly challenges in biological and biomedical research: it can compromise experimental integrity, lead to erroneous conclusions, and waste valuable resources. In systematic reagent testing, identifying and mitigating contaminants is therefore essential for generating reliable, reproducible data. This is particularly critical in studies of low microbial biomass samples, where the target DNA "signal" can easily be overwhelmed by contaminant "noise" [1] [2]. This application note details the primary sources of contamination stemming from reagents, equipment, and the laboratory environment, and provides structured protocols for their identification and control within a systematic quality assurance framework.

A systematic approach to contamination control begins with recognizing its principal sources. The following table summarizes key contaminants, their origins, and their potential impact on research outcomes.

Table 1: Common Contamination Sources and Their Impact on Research

| Source Category | Specific Source | Common Contaminants | Potential Impact on Experiments |
|---|---|---|---|
| Reagents & Kits | DNA Extraction Kits [2] [6] | Bacterial DNA (e.g., Cutibacterium) | False positives in microbiome studies; alters host molecular landscapes [6]. |
| Reagents & Kits | Water & Buffers [7] | Bacteria, Fungi, Endotoxins | Supports microbial growth; interferes with cell cultures and molecular assays. |
| Reagents & Kits | Commercial Media [7] | Microbial spores, Chemical impurities | Compromises cell culture purity; introduces unintended variables. |
| Laboratory Equipment | Improperly Cleaned Tools [8] | Residual analytes, cross-sample carryover | Skews sensitive assays (e.g., PCR, trace element analysis) [9] [8]. |
| Laboratory Equipment | Automated Instruments [10] | Aerosolized DNA, sample carryover | Causes well-to-well cross-contamination in PCR and sequencing [1] [10]. |
| Laboratory Equipment | Laminar Flow Hoods [7] | Settled airborne contaminants | Contaminates sterile supplies and open culture vessels. |
| Laboratory Environment | Personnel [11] [7] | Skin cells, hair, respiratory droplets | Introduces human microbiota, a prevalent contaminant in low-biomass studies [6]. |
| Laboratory Environment | Airborne Particles [11] | Dust, fungal spores, microbial cells | Settles on surfaces and into open samples during processing. |
| Laboratory Environment | Surfaces [12] | Chemical residues, dust, DNA | Transfers contaminants via direct contact with samples or equipment. |

The quantitative impact of contamination is especially severe in low-biomass research. Studies estimate that 1,000 to 100,000 contaminating microbial reads can be detected per million host reads sequenced by RNA-seq [6]. In low-biomass samples, contaminants can rapidly dominate the profile; experiments with serial dilutions of a bacterial culture showed contaminants representing the majority of the microbial biomass after just a few dilutions [2]. Furthermore, contamination is often confounded with experimental variables, such as being associated with different batches of the same extraction kit, which can lead to misleading biological conclusions [2].
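
The dilution effect described above follows from simple proportions: if a roughly constant amount of contaminant DNA enters every extraction, its share of the total grows as the sample input shrinks. The sketch below illustrates this with a hypothetical starting input of 10^8 sample copies and a fixed 10^3 contaminant copies; both numbers are illustrative assumptions, not values from the cited studies.

```python
def contaminant_fraction(sample_copies: float, contaminant_copies: float) -> float:
    """Share of total DNA copies that comes from contaminants."""
    total = sample_copies + contaminant_copies
    if total <= 0:
        raise ValueError("total copies must be positive")
    return contaminant_copies / total


def dilution_series(start_copies: float, contaminant_copies: float,
                    steps: int, factor: float = 10.0) -> list[tuple[int, float, float]]:
    """Return (step, sample copies, contaminant fraction) for each dilution step."""
    rows = []
    copies = start_copies
    for step in range(steps + 1):
        rows.append((step, copies, contaminant_fraction(copies, contaminant_copies)))
        copies /= factor  # the sample is diluted, the contaminant load is not
    return rows


# Hypothetical inputs: 1e8 sample copies, 1e3 contaminant copies per extraction.
series = dilution_series(start_copies=1e8, contaminant_copies=1e3, steps=6)
for step, copies, fraction in series:
    print(f"dilution {step}: sample copies = {copies:.0e}, contaminant share = {fraction:.1%}")
```

Under these assumptions the contaminant share is negligible for the undiluted sample, reaches one half at the fifth ten-fold dilution, and exceeds 90% at the sixth.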

Experimental Protocols for Contamination Detection

Protocol: Systematic Monitoring of Reagent and Kit Contamination

Principle: Even certified molecular-grade reagents and commercial kits can contain trace amounts of microbial DNA. This protocol uses sensitive negative controls to detect these contaminants [2] [13].

Procedure:

- Preparation of Negative Controls:

  - Process Control (Kit Blank): Use sterile, DNA-free water (e.g., PCR-grade) as a mock sample. Subject it to the entire analytical procedure alongside your experimental samples, starting from the DNA extraction step [2] [13].

  - Template Control (PCR Blank): Include a well containing only PCR-grade water in your amplification reaction to control for contamination in master mix components [2].

- Sample Processing:

- Downstream Analysis and Sequencing:

- Data Interpretation:

  - Identification: Taxa or sequences found in the negative controls are considered potential contaminants.

  - Frequency-Based Filtering: Contaminants present at higher levels in controls than in samples can be removed bioinformatically [6].

  - Threshold Setting: For qPCR assays, use the results from negative controls to establish a limit of detection/quantitation above which sample results are considered reliable [13].
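
The data interpretation steps above can be sketched in a few lines. This is a minimal illustration, not a validated pipeline: the taxon names and read counts are invented, the filter simply flags taxa whose mean relative abundance is at least as high in controls as in samples, and the threshold uses the common "mean of blanks plus three standard deviations" convention, which is an assumption rather than a rule stated by the cited sources.

```python
from statistics import mean, stdev


def relative_abundance(counts: dict[str, int]) -> dict[str, float]:
    """Convert raw read counts to fractions of the library total."""
    total = sum(counts.values())
    if total == 0:
        return {taxon: 0.0 for taxon in counts}
    return {taxon: n / total for taxon, n in counts.items()}


def flag_contaminants(samples: list[dict[str, int]],
                      controls: list[dict[str, int]]) -> set[str]:
    """Flag taxa that are at least as abundant in negative controls as in samples."""
    sample_ra = [relative_abundance(s) for s in samples]
    control_ra = [relative_abundance(c) for c in controls]
    taxa = {t for table in samples + controls for t in table}
    flagged = set()
    for taxon in taxa:
        in_samples = mean([ra.get(taxon, 0.0) for ra in sample_ra])
        in_controls = mean([ra.get(taxon, 0.0) for ra in control_ra])
        if in_controls > 0 and in_controls >= in_samples:
            flagged.add(taxon)
    return flagged


def detection_threshold(blank_values: list[float], k: float = 3.0) -> float:
    """Blank-based threshold: mean of negative controls plus k standard deviations."""
    return mean(blank_values) + k * stdev(blank_values)


# Hypothetical read counts per taxon.
samples = [{"Bacteroides": 900, "Ralstonia": 100}, {"Bacteroides": 950, "Ralstonia": 50}]
kit_blanks = [{"Ralstonia": 80, "Bacteroides": 2}, {"Ralstonia": 40}]
flagged = flag_contaminants(samples, kit_blanks)
print("potential contaminants:", sorted(flagged))

# Hypothetical 16S copies per reaction measured in three PCR blanks.
threshold = detection_threshold([2.0, 3.0, 4.0])
print("report sample results above", threshold, "copies per reaction")
```

In practice, flagged taxa deserve manual review before removal, since a genuine sample taxon can also appear in blanks through well-to-well leakage.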

Protocol: Environmental Monitoring via Surface and Air Sampling

Principle: Regular monitoring of the laboratory environment is essential for identifying persistent contamination reservoirs and verifying the efficacy of cleaning protocols [11].

Procedure:

- Define a Monitoring Plan:

  - Identify critical control points: biosafety cabinet/workbench surfaces, door handles, centrifuge keypads, shared equipment, and reagent storage areas.

  - Establish a regular sampling schedule (e.g., weekly, monthly).

- Surface Sampling:

  - Swab Sampling: Use sterile swabs moistened with a sterile buffer or saline. Swab a defined surface area (e.g., 10 cm²) using a consistent technique.

  - Contact Plates: Use RODAC plates or other contact plates filled with appropriate culture media. Press the agar surface directly onto the flat surface to be tested [11].

- Air Sampling:

  - Use an air monitoring device (air sampler) that draws a known volume of air over a nutrient agar plate or a liquid collection medium [11].

  - Place samplers in key locations, such as inside laminar flow hoods, near incubators, and in general lab areas.

- Sample Analysis:

  - Microbial Culture: Transfer swabs to liquid media or directly streak onto agar plates. Incubate contact plates and air sampler plates. Count colony-forming units (CFUs) and identify predominant morphologies.

  - Molecular Analysis: Extract DNA directly from swabs and analyze via qPCR or sequencing to profile the microbial community of the lab environment [6].

- Corrective Action:

  - Compare results against established internal alert and action limits.

  - If limits are exceeded, perform enhanced cleaning and disinfection of the affected area and investigate the source.
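
The corrective action step amounts to comparing each CFU count with site-specific limits. The following sketch shows one way to encode that comparison; the site names, limit values, and the `Limits` and `review` helpers are hypothetical and would need to be replaced by a laboratory's own validated limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    """Internal CFU limits for one sampling site (hypothetical values)."""
    alert: int
    action: int


def classify(cfu: int, limits: Limits) -> str:
    """Return 'action', 'alert', or 'ok' for a single CFU count."""
    if cfu > limits.action:
        return "action"
    if cfu > limits.alert:
        return "alert"
    return "ok"


def review(counts: dict[str, int], limits: dict[str, Limits]) -> dict[str, str]:
    """Classify every monitored site that has a defined limit."""
    return {site: classify(cfu, limits[site])
            for site, cfu in counts.items() if site in limits}


# Hypothetical limits and one week of contact-plate counts.
site_limits = {
    "biosafety_cabinet": Limits(alert=1, action=3),
    "centrifuge_keypad": Limits(alert=10, action=25),
}
weekly_counts = {"biosafety_cabinet": 4, "centrifuge_keypad": 12}
status = review(weekly_counts, site_limits)
print(status)
```

Sites classified as "action" would trigger the enhanced cleaning and source investigation described above, while "alert" results are typically tracked for trends.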

The Researcher's Toolkit for Contamination Control

A proactive approach to contamination involves utilizing specific reagents, equipment, and protocols designed for prevention and detection.

Table 2: Essential Research Reagent Solutions and Materials for Contamination Control

| Item Category | Specific Product/Technique | Primary Function in Contamination Control |
|---|---|---|
| Decontamination Reagents | Sodium Hypochlorite (Bleach) [1] | Degrades nucleic acids and inactivates microbes on surfaces and equipment. |
| Decontamination Reagents | 80% Ethanol [1] | Effective for surface disinfection and killing contaminating organisms. |
| Decontamination Reagents | DNA Away [10] [8] | Commercially available solution designed to remove contaminating DNA from lab surfaces and equipment. |
| Specialized Kits & Reagents | Certified DNA-Free Water [7] | Serves as a critical negative control and as a base for reagents to prevent introduction of aquatic contaminants. |
| Specialized Kits & Reagents | Microbiome-Tested Extraction Kits [2] | Kits validated for low microbial biomass content reduce introduction of contaminating DNA. |
| Specialized Kits & Reagents | Pre-sterilized, Single-Use Consumables [11] [10] | Eliminates variability and potential contamination from in-house cleaning and sterilization of reusable items. |
| Laboratory Equipment | Laminar Flow Hoods/Biosafety Cabinets [11] [10] | Provides a HEPA-filtered, sterile workspace for handling sensitive samples and cultures. |
| Laboratory Equipment | Automated Liquid Handling Systems [10] | Minimizes technician error and reduces the risk of sample-to-sample cross-contamination. |
| Laboratory Equipment | HEPA Filtration Systems [11] [10] | Removes airborne particulates and microbes from the laboratory environment. |

Within a systematic reagent testing framework, acknowledging and actively managing contamination from reagents, equipment, and the environment is not optional but fundamental. The protocols and tools outlined herein provide a foundation for establishing a rigorous contamination control plan. By integrating systematic negative controls, routine environmental monitoring, and robust decontamination practices, researchers can significantly mitigate contamination risks, thereby safeguarding the integrity of their scientific data and the validity of their conclusions.

Contamination represents a fundamental challenge in scientific research and diagnostic testing, with consequences that extend far beyond the laboratory. The inadvertent introduction of contaminants during analytical processes can compromise data integrity, generate false results, and ultimately erode confidence in scientific institutions and systems. In low-biomass microbiome studies, the inevitability of contamination from external sources becomes a critical concern when working near the limits of detection, as lower-biomass samples can be disproportionately impacted by practices suitable for handling higher-biomass samples [1]. Similarly, in clinical diagnostics, contamination and methodological variations can produce false positive results that carry profound real-world consequences, including the wrongful denial of parole for incarcerated individuals based on erroneous drug tests [14] [15]. This article examines the multifaceted costs of contamination through recent case studies and provides systematic protocols for reagent testing and contamination control applicable across research and diagnostic settings.

Quantifying the Impact: Case Studies in Contamination Consequences

The Quest Diagnostics False Positive Crisis

In 2024, a contamination-related incident involving Quest Diagnostics' urine drug screening revealed how methodological changes can generate widespread false positives. Between April and July 2024, the company utilized an "alternative reagent" while facing a backorder of its usual chemical, resulting in a dramatic spike in presumptive positive tests for opiates [15]. The quantitative impact was substantial, as shown in Table 1.

Table 1: Impact of Alternative Reagent on Opiate Test Positivity Rates in California Prisons

| Time Period | Reagent Type | Monthly Positive Opiate Rate | Statistical Change |
|---|---|---|---|
| Jan-Apr 2024 | Standard | 6.6%-6.8% | Baseline |
| May 2024 | Alternative | 17.1% | ~160% increase |
| June 2024 | Alternative | 20.5% | ~200% increase |
| July 2024 | Alternative | 17.1% | ~160% increase |
| August 2024 | Standard | 6.8% | Return to baseline |
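
The "Statistical Change" column in the table above is a relative increase over baseline. The short calculation below reproduces it, assuming the midpoint of the reported 6.6%-6.8% range (6.7%) as the baseline; that midpoint is an assumption, and the exact figures shift by a few percentage points depending on which end of the range is used.

```python
def relative_increase(baseline: float, observed: float) -> float:
    """Percentage increase of `observed` over `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (observed - baseline) / baseline * 100


# Assumed baseline: midpoint of the reported 6.6%-6.8% range.
baseline_rate = (6.6 + 6.8) / 2
monthly_rates = {"May 2024": 17.1, "June 2024": 20.5, "July 2024": 17.1}

changes = {month: relative_increase(baseline_rate, rate)
           for month, rate in monthly_rates.items()}
for month, change in changes.items():
    fold = monthly_rates[month] / baseline_rate
    print(f"{month}: +{change:.0f}% over baseline ({fold:.1f}x)")
```

With this baseline, May and July come out near a 155% increase and June near a 206% increase, consistent with the rounded values in the table and with a roughly threefold peak.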

This contamination of the testing process affected approximately 5,000-6,000 tests across California prisons, with profound human consequences [15] [16]. The false results were cited in parole denials, where the Board of Parole Hearings wrote that positive tests "reflect continued substance abuse" and "demonstrate continuing struggles with criminal thinking" [15]. One individual in his 60s, eligible for parole after decades of incarceration, had his case delayed until 2026 based largely on these erroneous results [15].

Contamination in Low-Biomass Microbiome Research

In research contexts, contamination presents equally significant challenges, particularly in low-biomass studies where the target DNA signal may be minimal compared to contaminant noise [1]. Contaminants can be introduced from various sources—including human operators, sampling equipment, reagents/kits, and laboratory environments—at multiple stages such as sampling, storage, DNA extraction, and sequencing [1]. The proportional nature of sequence-based datasets means even small amounts of contaminant DNA can strongly influence results and interpretation, potentially distorting ecological patterns and evolutionary signatures [1].

Systematic Reagent Testing Protocol for Contamination Control

Reagent Qualification Workflow

The following experimental protocol provides a systematic approach for reagent testing and validation to prevent contamination-related errors. This workflow is particularly crucial when reagents are substituted or sourced from alternative suppliers.

Diagram 1: Systematic reagent testing workflow for contamination control.

Experimental Protocol: Reagent Contamination Assessment

Objective: To systematically evaluate new reagent lots or alternative reagents for potential contamination and performance variance before implementation in diagnostic or research workflows.

Materials:

- Reference standard reagent (current validated lot)

- Test reagent (new lot or alternative)

- Positive controls

- Negative controls

- Relevant sample matrices

- Standard laboratory equipment for analysis

Procedure:

- Documentation Review

  - Examine Certificate of Analysis for manufacturing details

  - Verify purity specifications and potential interferents

  - Confirm storage and stability requirements

- Contaminant Screening

  - Perform endotoxin testing (LAL assay)

  - Conduct microbial bioburden assessment

  - Test for particulate contamination

  - Analyze for cross-reactive compounds in assay systems

- Bench Performance Testing

  - Run parallel analyses using reference and test reagents

  - Utilize standardized positive and negative controls

  - Include known negative samples (n≥20)

  - Include known positive samples across dynamic range (n≥20)

  - Perform inter-day precision testing (3 separate days)

- Statistical Analysis

  - Compare positivity rates between reference and test reagents

  - Calculate sensitivity, specificity, and accuracy

  - Perform regression analysis for quantitative assays

  - Assess variance using F-test or ANOVA

Acceptance Criteria:

- Positivity rate variance ≤15% from reference standard

- No statistically significant difference in sensitivity/specificity (p>0.05)

- Contaminant levels within manufacturer specifications

- Precision (CV) ≤20% for qualitative tests, ≤15% for quantitative
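
Part of the statistical analysis and acceptance check can be sketched as follows. The function names and example numbers are hypothetical. The sketch also interprets "positivity rate variance ≤15%" as a relative difference from the reference rate, which is an assumption because the criterion does not say whether the limit is relative or absolute. The significance test for sensitivity and specificity is not covered here.

```python
from statistics import mean, stdev


def diagnostic_metrics(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """Sensitivity, specificity, and accuracy from confusion-matrix counts."""
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
    }


def cv_percent(values: list[float]) -> float:
    """Coefficient of variation (sample standard deviation / mean) in percent."""
    return stdev(values) / mean(values) * 100


def positivity_shift_percent(reference_rate: float, test_rate: float) -> float:
    """Relative difference of the test reagent's positivity rate from the reference."""
    return abs(test_rate - reference_rate) / reference_rate * 100


def passes_acceptance(reference_rate: float, test_rate: float,
                      replicates: list[float], quantitative: bool) -> bool:
    """Check the positivity-rate and precision criteria listed above."""
    cv_limit = 15.0 if quantitative else 20.0
    return (positivity_shift_percent(reference_rate, test_rate) <= 15.0
            and cv_percent(replicates) <= cv_limit)


# Hypothetical bench results: 20 known positives, 21 known negatives.
metrics = diagnostic_metrics(tp=19, fp=1, tn=20, fn=1)
print({name: round(value, 3) for name, value in metrics.items()})

# Hypothetical inter-day replicate measurements of one control.
replicates = [10.0, 10.5, 9.5]
print(passes_acceptance(0.50, 0.52, replicates, quantitative=True))
print(passes_acceptance(0.067, 0.171, replicates, quantitative=True))
```

The second call uses positivity rates of the size reported in the Quest Diagnostics case and fails the positivity criterion by a wide margin, which is the kind of shift this check is meant to catch before a reagent goes into routine use.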

Comprehensive Contamination Control Framework

Contamination can occur at multiple points in analytical processes, requiring a systematic approach to identification and control. The following diagram illustrates major contamination sources and corresponding control measures across the workflow.

Diagram 2: Major contamination sources and control measures across analytical workflow.

Essential Research Reagent Solutions for Contamination Control

Table 2: Key Research Reagent Solutions for Contamination Prevention and Detection

| Reagent Category | Specific Examples | Function in Contamination Control | Application Notes |
|---|---|---|---|
| Nucleic Acid Degrading Agents | DNase, RNase, sodium hypochlorite | Removes contaminating nucleic acids from surfaces and reagents | Critical for low-biomass microbiome studies; use prior to sample processing [1] |
| Endotoxin Testing Kits | LAL assays, recombinant factor C tests | Detects bacterial endotoxin contamination in reagents | Essential for cell culture and diagnostic reagents; sensitivity to 0.001 EU/mL |
| PCR Inhibition Tests | Internal amplification controls, spike-in DNA | Identifies reaction inhibitors in sample preparation | Required for molecular diagnostic validation; confirms result reliability |
| Sterilization Reagents | Ethanol (80%), hydrogen peroxide, ethylene oxide gas | Eliminates viable microorganisms from equipment and surfaces | Ethanol kills organisms but may not remove DNA; combine with nucleic acid degradation [1] |
| Antimicrobial Agents | Antibiotic cocktails, azides | Prevents microbial growth in stored reagents | Particularly important for liquid reagents stored long-term |
| Analytical Grade Solvents | LC-MS grade water, high purity acids | Minimizes chemical interference in analytical systems | Reduces background noise in sensitive detection methods |
| Quality Control Materials | Certified reference materials, positive/negative controls | Verifies reagent performance and detects lot variations | Must be included in each analytical run; establishes baseline performance |

Advanced Contamination Mitigation Strategies

Systematic Approach to Reagent Validation

The Quest Diagnostics case highlights the critical importance of rigorous reagent validation protocols. When the company substituted an "alternative reagent" that had "passed all quality-control metrics," the resulting threefold increase in positive opiate tests demonstrates that standard QC measures may be insufficient [15]. A more robust validation protocol should include:

- Parallel Testing: Run prospective reagent comparisons alongside current standards for sufficient duration (minimum 30 days) to establish baseline performance metrics.

- Confirmatory Testing Protocols: As emphasized by forensic toxicologists, presumptive results must be confirmed with definitive testing using mass spectrometry-based platforms like GC/MS or LC/MS [14]. This is particularly crucial when results trigger significant consequences.

- Threshold Establishment: Determine acceptable variance limits for positivity rates between reagent lots and implement automatic review triggers when thresholds are exceeded.

- Personnel Training: Ensure all staff can recognize potential contamination sources and follow decontamination protocols. As emphasized in microbiome research, personnel should be trained to cover exposed body parts with personal protective equipment and maintain awareness of contamination sources [1].
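
An automatic review trigger of the kind described under threshold establishment can be as simple as a control limit on the positivity rate. The sketch below uses a standard binomial control limit (baseline plus three standard errors); this is one possible rule chosen for illustration, and the monthly test volume of 1,500 is a hypothetical figure, not one reported in the source.

```python
import math


def upper_control_limit(baseline_rate: float, n_tests: int, k: float = 3.0) -> float:
    """Binomial upper control limit: baseline + k standard errors for n_tests."""
    if not 0 < baseline_rate < 1 or n_tests <= 0:
        raise ValueError("baseline_rate must be in (0, 1) and n_tests positive")
    standard_error = math.sqrt(baseline_rate * (1 - baseline_rate) / n_tests)
    return baseline_rate + k * standard_error


def needs_review(observed_rate: float, baseline_rate: float,
                 n_tests: int, k: float = 3.0) -> bool:
    """True when the observed positivity rate exceeds the control limit."""
    return observed_rate > upper_control_limit(baseline_rate, n_tests, k)


# Assumed baseline of 6.7% positives and a hypothetical 1,500 tests per month.
baseline, monthly_tests = 0.067, 1500
limit = upper_control_limit(baseline, monthly_tests)
print(f"review if monthly positivity exceeds {limit:.1%}")
print(needs_review(0.171, baseline, monthly_tests))  # rate seen with the alternative reagent
print(needs_review(0.068, baseline, monthly_tests))  # rate after returning to the standard reagent
```

Under these assumptions the limit sits near 8.6%, so a jump to 17.1% would be flagged in the first month, while ordinary fluctuation around the baseline would not.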

Environmental Monitoring and Control

For low-biomass studies particularly, environmental monitoring represents a crucial component of contamination control. Recommendations include:

- Comprehensive Sampling Controls: Collect and process samples from potential contamination sources, including empty collection vessels, air exposure samples, swabs of PPE, and aliquots of preservation solutions [1].

- Process Blanks: Include extraction blanks, PCR blanks, and sequencing blanks to identify contaminants introduced during laboratory processing.

- Cross-Contamination Prevention: Implement physical separation of pre- and post-amplification activities, use dedicated equipment for different processing stages, and employ UV irradiation of workstations and reagents.

- Facility Design: Establish unidirectional workflow from clean to dirty areas, implement HEPA filtration where appropriate, and maintain positive air pressure in clean areas.

The high cost of contamination—in false results, wasted resources, and eroded confidence—demands systematic approaches to reagent testing and contamination control. The Quest Diagnostics case illustrates how seemingly minor methodological changes can create far-reaching consequences, while microbiome research demonstrates the fundamental challenges of working near detection limits. By implementing robust reagent validation protocols, comprehensive environmental monitoring, and systematic contamination control measures, researchers and diagnostic professionals can enhance result reliability and maintain confidence in scientific and diagnostic systems. Ultimately, reducing contamination requires consistent vigilance at every stage, from initial sample collection through final data interpretation, along with transparent reporting of contamination control methods and any potential limitations.

The investigation of low-biomass microbial environments represents one of the most methodologically challenging frontiers in microbiome science. These environments—which include human tissues like placenta, tumors, and blood; built environments like cleanrooms; and extreme natural environments like deep subsurface and hyper-arid soils—harbor microbial biomass at or near the limits of detection for standard DNA-based sequencing approaches [1]. In these contexts, the inevitability of contamination from external sources becomes a critical concern that can fundamentally compromise research outcomes [1].

The core challenge is proportional: in high-biomass samples like human stool or surface soil, the target DNA "signal" vastly exceeds contaminant "noise." In contrast, low-biomass samples can be disproportionately affected by even trace contamination, with contaminant DNA potentially exceeding the authentic signal from the sample itself [1]. This problem is not merely theoretical: it has fueled major scientific controversies, including debates about the existence of a placental microbiome and the retraction of high-profile tumor microbiome studies [17]. When contamination distorts results, it can lead to false ecological patterns, incorrect evolutionary signatures, and misguided clinical applications [1].

This Application Note establishes why rigorous contamination control is non-negotiable for credible low-biomass research and provides a structured framework for implementing it as part of systematic reagent testing. By adopting the practices outlined herein, researchers can significantly reduce the risk of generating misleading results and enhance the reproducibility of their findings.

Effective contamination control begins with recognizing its diverse origins and transmission pathways. Contamination can be introduced at virtually every stage of the research workflow, from sample collection through data analysis [1] [17]. The research community broadly recognizes several key categories of contamination, each requiring specific mitigation strategies.

Table 1: Primary Contamination Sources in Low-Biomass Studies

| Contamination Source | Description | Common Vectors |
|---|---|---|
| External Contamination | Introduction of DNA from sources other than the sample of interest [17]. | Laboratory reagents, kits, sampling equipment, laboratory environments, and personnel [1] [17]. |
| Cross-Contamination (Well-to-Well Leakage) | Transfer of DNA or sequence reads between samples processed concurrently [1] [17]. | Adjacent wells on sample plates during PCR or sequencing library preparation [1]. |
| Host DNA Misclassification | Misidentification of host DNA sequences as microbial in origin [17]. | Inadequate bioinformatic separation of host and microbial signals, especially problematic in metagenomic studies of host-associated samples [17]. |
| Batch Effects & Processing Bias | Technical variation introduced when samples are processed in different batches or with different reagent lots [17]. | Differences in protocols, personnel, reagent batches, or ambient conditions that correlate with experimental groups [17]. |

A critical insight is that contamination is not uniform. Different contamination sources have distinct "fingerprints," and their proportional impact varies based on experimental context [17]. For example, reagent contamination may dominate in ultra-clean DNA extractions, while personnel contamination may prevail during field sampling. Furthermore, the impact of contamination is not always limited to adding noise; when contamination is confounded with a phenotype or batch, it can generate entirely artifactual signals that lead to spurious conclusions [17].

Figure 1: Contamination Introduction Pathways. Contaminants (red ovals) can be introduced at every stage of the experimental workflow (blue rectangles), from sampling through data analysis. A systematic approach must address all potential vectors.

A Systematic Framework for Contamination Control

Adopting a systematic, proactive framework is essential for effective contamination control. This involves implementing specific procedures before, during, and after wet-lab experiments to minimize and monitor contaminant introduction.

Pre-Analytical Phase: Strategic Planning and Sample Collection

The pre-analytical phase offers the greatest opportunity to prevent contamination. Careful planning and rigorous sampling protocols can reduce contaminant introduction at the source.

Strategic Experimental Design

- Avoid Batch Confounding: A critical step is ensuring that phenotypes or covariates of interest are not confounded with batch structure (e.g., all cases processed in one batch and controls in another). Active approaches like BalanceIT should be used to generate unconfounded batches rather than relying on randomization alone [17].

- Incorporate Controls from the Outset: The types and numbers of control samples must be determined during experimental design, not as an afterthought. These should represent all potential contamination sources [17].
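
To make the batch confounding point concrete, the sketch below deals samples into batches so that each phenotype group is spread as evenly as possible. It is a plain stratified round-robin written for illustration only; it is not BalanceIT and does not reproduce that tool's method. The sample IDs and group labels are hypothetical.

```python
import random
from collections import Counter, defaultdict


def assign_batches(samples: dict[str, str], n_batches: int, seed: int = 0) -> dict[str, int]:
    """Spread each phenotype group evenly across batches (stratified round-robin)."""
    if n_batches <= 0:
        raise ValueError("n_batches must be positive")
    rng = random.Random(seed)  # fixed seed keeps the layout reproducible
    by_group: dict[str, list[str]] = defaultdict(list)
    for sample_id, group in sorted(samples.items()):
        by_group[group].append(sample_id)

    assignment: dict[str, int] = {}
    slot = 0  # running counter so batch sizes also stay balanced overall
    for group in sorted(by_group):
        ids = by_group[group]
        rng.shuffle(ids)  # randomize order within the group
        for sample_id in ids:
            assignment[sample_id] = slot % n_batches
            slot += 1
    return assignment


# Hypothetical study: 6 cases and 6 controls processed in 3 extraction batches.
samples = {f"case_{i}": "case" for i in range(6)}
samples.update({f"ctrl_{i}": "control" for i in range(6)})
batches = assign_batches(samples, n_batches=3)

composition = Counter((batch, samples[sample_id]) for sample_id, batch in batches.items())
for batch in range(3):
    print(batch, composition[(batch, "case")], composition[(batch, "control")])
```

Each batch ends up with two cases and two controls, so a contaminant specific to one extraction batch cannot masquerade as a case-control difference. Negative controls would be added to every batch in the same way.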

Rigorous Sample Collection Protocols

- Decontaminate Equipment and Surfaces: All sampling equipment, tools, vessels, and gloves should be decontaminated. Single-use DNA-free items are ideal. For reusable equipment, decontamination with 80% ethanol (to kill organisms) followed by a nucleic acid degrading treatment (e.g., sodium hypochlorite solution or UV-C exposure) is recommended to remove both viable cells and cell-free DNA [1].

- Use Appropriate Personal Protective Equipment (PPE): Personnel should cover exposed body parts with gloves, goggles, coveralls or cleansuits, and shoe covers. This protects samples from human aerosol droplets and cells shed from skin, hair, and clothing [1].

- Minimize Handling: Samples should not be handled more than necessary, and contact with potential contamination sources should be limited through physical barriers and aseptic technique [1].

Analytical Phase: Laboratory Processing and Contamination Monitoring

During laboratory processing, consistent adherence to contamination control protocols is essential to maintain sample integrity.

Laboratory Processing Controls

- Process Controls: Include control samples that pass through the entire experimental workflow alongside actual samples. These should include extraction blanks (no sample added), no-template PCR controls, and library preparation controls [17].

- Source-Specific Controls: To complement process controls, also profile specific contamination sources separately using controls like empty collection kits, swabs of sampling surfaces, or aliquots of preservation solutions [17].

- Minimize Cross-Contamination: Physical barriers, dedicated workspace, and careful plate setup can reduce well-to-well leakage. When planning plate layouts, distribute sample types across the plate rather than grouping them [1] [17].

Reagent and Environmental Management

- Validate Reagent Sterility: Systematically test reagent lots for microbial DNA contamination before implementing them in studies. Maintain a database of validated lots.

- Control Laboratory Environment: Implement regular cleaning schedules for workspaces and equipment using DNA-degrading solutions. Consider using dedicated rooms or clean benches for low-biomass work [1].

Post-Analytical Phase: Bioinformatic Decontamination

Even with optimal wet-lab practices, some contamination is inevitable. Computational methods provide a final layer of protection against contaminant-driven conclusions.

Bioinformatic Decontamination Strategies

- Control-Based Decontamination: Tools like Decontam (frequency or prevalence methods) use control samples to identify and remove contaminant sequences from feature tables [17].

- SourceTracker and Similar Methods: These approaches use control profiles to estimate the proportion of contamination in each sample [17].

- Limitations and Considerations: Bioinformatic decontamination struggles when contamination is extensive or when controls are not representative. These methods also cannot recover authentic signals mistakenly identified as contamination [1] [17].
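
To make the control-based idea concrete, the following is a deliberately simplified heuristic, not Decontam's statistical test: it flags a feature when it is detected in a larger share of negative controls than of real samples. The function name and the nested-dictionary input format are assumptions for illustration.

```python
def flag_contaminants_by_prevalence(counts, control_ids):
    """Flag features more prevalent in negative controls than in samples.

    counts: dict mapping feature -> {sample_id: read_count}.
    control_ids: set of sample ids that are negative controls.
    Returns a sorted list of flagged feature names.
    """
    flagged = []
    for feature, per_sample in counts.items():
        ctrl = [n for sid, n in per_sample.items() if sid in control_ids]
        real = [n for sid, n in per_sample.items() if sid not in control_ids]
        if not ctrl or not real:
            continue  # cannot compare without both groups
        ctrl_prev = sum(n > 0 for n in ctrl) / len(ctrl)
        real_prev = sum(n > 0 for n in real) / len(real)
        if ctrl_prev > real_prev:
            flagged.append(feature)
    return sorted(flagged)
```

A plain prevalence comparison like this ignores sampling noise and read depth. It also shows the limitation noted above: a genuine taxon that happens to appear in the controls through cross-contamination would be flagged and removed.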

Table 2: Essential Control Samples for Low-Biomass Studies

| Control Type | Purpose | Collection/Processing | Minimum Recommended |

|---|---|---|---|

| Field/Collection Blank | Identifies contamination from sampling equipment, containers, and ambient environment during collection. | Expose sterile collection container/swab to sampling environment without collecting actual sample. | Per sampling batch/environment |

| Extraction Blank | Identifies contamination introduced during DNA extraction. | Process without sample, using same reagents and protocol. | Per extraction batch (1-2 per plate) |

| No-Template Control (NTC) | Identifies contamination introduced during PCR/library amplification. | Perform amplification without adding DNA template. | Per amplification batch (1-2 per plate) |

| Positive Control | Verifies that experimental protocols work as intended. | Use well-characterized, low-biomass mock community. | Per experimental batch |
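
A sample manifest can be checked against the control types in the table before processing begins. The sketch below assumes a hypothetical manifest format (a list of dictionaries with `batch` and `type` keys) and a single batch identifier per row, which is a simplification: in practice sampling, extraction, and amplification batches may differ.

```python
REQUIRED_CONTROLS = frozenset({"extraction_blank", "ntc", "positive_control"})


def missing_controls(manifest, required=REQUIRED_CONTROLS):
    """Report batches that lack one or more required control types.

    manifest: list of dicts with keys "batch" and "type".
    Returns {batch: sorted list of missing control types}.
    """
    seen = {}
    for row in manifest:
        seen.setdefault(row["batch"], set()).add(row["type"])
    return {
        batch: sorted(required - types)
        for batch, types in seen.items()
        if required - types
    }
```

An empty result means every batch in the manifest carries all required controls.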

Experimental Protocols for Contamination Assessment

Protocol: Systematic Reagent Testing for Contaminating DNA

Purpose: To identify and quantify microbial DNA contamination in reagents prior to use in low-biomass studies.

Materials:

- Reagents to be tested (extraction kits, PCR master mix, water, etc.)

- DNA-free plasticware (tubes, tips)

- Equipment: PCR workstation, centrifuge, thermal cycler, sequencer

- Quantification reagents (Qubit, qPCR reagents)

Procedure:

- Prepare Reagent Aliquots: Under DNA-free conditions, aliquot reagents into sterile tubes.

- Extraction Kit Testing: For DNA extraction kits, process an extraction blank (no sample) using the standard protocol. Include an increased water volume if testing silica columns.

- PCR Reagent Testing: Set up no-template controls with the PCR master mix, primers, and water.

- Amplification and Sequencing: Amplify and sequence the resulting products using the same marker gene (16S rRNA) or shotgun approach planned for actual samples.

- Analysis: Process the sequence data through the standard bioinformatic pipeline. Compare contaminant profiles across reagent lots.

Interpretation: Identify reagent lots with unacceptably high DNA contamination or problematic contaminant profiles (e.g., containing taxa of interest to the study). Establish study-specific thresholds for acceptable contamination levels.
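
The interpretation step can be sketched as a simple screen over per-lot blank profiles. The threshold value, lot identifiers, and input format below are hypothetical; each study would set its own acceptance criteria.

```python
def evaluate_lots(lot_profiles, max_reads, taxa_of_interest):
    """Screen reagent lots using the contaminant profile of their blanks.

    lot_profiles: {lot_id: {taxon: read_count}} from blank runs.
    max_reads: study-specific ceiling on total reads in a blank.
    taxa_of_interest: set of taxa the study intends to measure.
    Returns {lot_id: list of reasons}; an empty list means acceptable.
    """
    results = {}
    for lot, profile in lot_profiles.items():
        reasons = []
        total = sum(profile.values())
        if total > max_reads:
            reasons.append(f"total contaminant reads {total} > {max_reads}")
        overlap = sorted(
            t for t, n in profile.items() if n > 0 and t in taxa_of_interest
        )
        if overlap:
            reasons.append("contains taxa of interest: " + ", ".join(overlap))
        results[lot] = reasons
    return results
```

Keeping the reasons as text makes the output easy to store alongside each lot in a validation record.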

Protocol: Collection and Processing of Negative Controls

Purpose: To monitor contamination introduced throughout the entire experimental workflow.

Materials:

- Sterile collection containers/swabs identical to those used for actual samples

- DNA preservation solution (if used)

- All standard laboratory processing reagents and equipment

Procedure:

- Field/Collection Blanks: During sampling, open and expose a sterile collection container to the sampling environment for a duration similar to actual sampling, then seal and preserve identically to actual samples.

- Extraction Blanks: Include at least one extraction blank per extraction batch (typically 1-2 per 96-well plate) consisting of all reagents but no sample.

- No-Template Controls (NTCs): Include NTCs in each PCR or library preparation batch.

- Processing: Process all controls alongside actual samples through every step—DNA extraction, quantification, amplification, and sequencing.

- Documentation: Meticulously track which controls correspond to which sample batches.

Interpretation: Controls should be sequenced to sufficient depth to detect low-abundance contaminants. The contaminant profiles from controls inform bioinformatic decontamination and help identify specific contamination sources when problems arise.

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing robust contamination control requires specific materials and reagents selected for their minimal contamination profile and appropriate application.

Table 3: Essential Research Reagent Solutions for Contamination Control

| Item Category | Specific Examples | Function & Importance | Contamination Control Considerations |

|---|---|---|---|

| Nucleic Acid Degrading Solutions | Sodium hypochlorite (bleach), DNA-ExitusPlus, DNA-Zap | Destroys contaminating DNA on surfaces and equipment. Critical because sterility (absence of viable cells) does not equal DNA-free [1]. | Use fresh dilutions; validate concentration and contact time for effectiveness; rinse thoroughly if used on equipment contacting samples directly. |

| DNA-Free Reagents and Kits | Certified DNA-free water, extraction kits, PCR components | Reduces introduction of contaminating DNA from reagents themselves, a major source of contamination [1] [17]. | Systematically test multiple lots; purchase from manufacturers offering DNA-free certification; aliquot to prevent cross-contamination. |

| Personal Protective Equipment (PPE) | Gloves, face masks, cleanroom suits, hair nets | Creates a barrier between personnel and samples, reducing contamination from skin cells, aerosols, and clothing [1]. | Change gloves frequently; decontaminate gloves with ethanol or DNA-degrading solution before sampling; use appropriate PPE for sampling environment. |

| Sterile Collection Materials | DNA-free swabs, sterile containers, DNA-free preservatives | Prevents introduction of contaminants during sample acquisition and stabilization. | Verify sterility certifications; test blank samples from each lot; avoid materials that may leach contaminants. |

| Surface Decontamination Supplies | 70-80% ethanol, isopropanol, hydrogen peroxide | Removes and/or inactivates microbial cells and other contaminants from work surfaces and equipment [1]. | Use before and after procedures; allow adequate contact time; follow with DNA-degrading solution for comprehensive decontamination. |

Figure 2: Systematic Contamination Control Workflow. Each research phase (green rectangles) requires specific contamination control actions (yellow notes). This systematic approach minimizes and monitors contamination throughout the entire research pipeline.

Contamination control in low-biomass microbiome studies is not merely a technical consideration but a fundamental requirement for scientific validity. The non-negotiable nature of these practices stems from the profound impact that even minimal contamination can have on data interpretation and conclusions. As the field continues to explore increasingly low-biomass environments, the implementation of systematic, multi-layered contamination control strategies—from experimental design through bioinformatic analysis—becomes essential.

Researchers must adopt a mindset of proactive contamination prevention rather than reactive correction. This involves committing to rigorous experimental design, comprehensive control strategies, standardized decontamination protocols, and appropriate computational cleaning. By integrating these practices into a cohesive framework and documenting them thoroughly in publications, the research community can enhance the reliability and reproducibility of low-biomass studies, ensuring that reported findings reflect biological truth rather than methodological artifact.

The integrity of microbiome research, particularly in low-biomass environments, is critically threatened by the pervasive presence of microbial DNA contaminants in laboratory reagents and kits. These ubiquitous contaminants can originate from various sources including DNA extraction kits, polymerase preparations, and other molecular biology reagents, potentially compromising experimental results and leading to erroneous biological interpretations [18] [19]. In low-biomass samples where target DNA is limited, contaminating DNA can constitute a significant proportion of the final sequencing data, effectively swamping the true biological signal and generating misleading conclusions [1] [18]. This application note provides a comprehensive overview of common contaminant genera, detailed protocols for their identification, and practical strategies to mitigate their impact, within the broader context of systematic reagent testing for contamination sources.

Contaminant Genera in Reagents and Kits

Contamination in molecular biology reagents is not random but involves predictable bacterial genera commonly associated with water, soil, and human skin. These contaminants can be introduced at multiple stages of reagent manufacturing and sample processing [18].

Table 1: Common Contaminant Genera in Laboratory Reagents

| Phylum | Common Contaminant Genera |

|---|---|

| Proteobacteria | Acinetobacter, Bradyrhizobium, Brevundimonas, Burkholderia, Caulobacter, Cupriavidus, Herbaspirillum, Methylobacterium, Novosphingobium, Pseudomonas, Ralstonia, Rhizobium, Sphingomonas, Stenotrophomonas [18] |

| Actinobacteria | Arthrobacter, Corynebacterium, Kocuria, Microbacterium, Propionibacterium, Rhodococcus [18] |

| Firmicutes | Bacillus, Paenibacillus, Streptococcus [18] |

| Bacteroidetes | Chryseobacterium, Flavobacterium, Pedobacter [18] |

The order Burkholderiales has been identified as particularly problematic, often dominating very low biomass samples and negative controls due to its ubiquity and potential for overamplification with common 16S rRNA gene protocols [20]. Beyond bacterial contaminants, plasmid DNA from expression vectors used in enzyme production represents another significant contamination source. These plasmids often contain antibiotic resistance genes (e.g., BlaTem-1, CmR) and even viral sequences, such as the Equine Infectious Anemia Virus (EIAV) pol gene, which have been detected in commercial reverse transcriptase kits [21].

Experimental Protocols for Contamination Assessment

Systematic Negative Control Strategy

Implementing a rigorous negative control strategy is fundamental for identifying reagent-derived contaminants.

- Control Types: Include DNA extraction blanks (using molecular grade water instead of sample), PCR blanks, and sampling controls (e.g., swabs of air, gloves, or empty collection vessels) [1] [20].

- Processing: Process negative controls alongside actual samples through all stages—DNA extraction, amplification, and sequencing—to account for contaminants introduced at any step [1].

- Replication: Include multiple negative controls to accurately quantify the nature and extent of contamination [1].

Biomass Serial Dilution Assay

This protocol evaluates the impact of contaminating DNA across a range of microbial biomass.

- Prepare Dilution Series: Culture a target bacterium not typically found as a contaminant (e.g., Salmonella bongori). Perform serial ten-fold dilutions to create a biomass series from approximately 10^8 cells down to 10^3 cells [18].

- DNA Extraction and Amplification: Extract DNA from each dilution using the test kits. Perform 16S rRNA gene amplification with both standard (e.g., 20) and high (e.g., 40) PCR cycles [18].

- Sequencing and Analysis: Sequence the amplicons and analyze the resulting profiles. In low-biomass dilutions, contaminating genera will dominate the sequence data, revealing the kit-specific contaminant profile [18].
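
The dilution series and the per-dilution readout can be sketched as follows. The function names and the genus-count input format are assumptions for illustration; the contaminant fraction is simply the share of reads not assigned to the spiked-in target genus.

```python
def tenfold_series(start_cells, end_cells):
    """Return cell counts from start_cells down to end_cells in ten-fold steps."""
    series = []
    cells = start_cells
    while cells >= end_cells:
        series.append(cells)
        cells //= 10
    return series


def contaminant_fraction(genus_counts, target_genus):
    """Fraction of reads that do not belong to the target genus."""
    total = sum(genus_counts.values())
    if total == 0:
        raise ValueError("no reads in profile")
    return (total - genus_counts.get(target_genus, 0)) / total
```

Plotting `contaminant_fraction` against the values from `tenfold_series(10**8, 10**3)` would be expected to show the fraction rising as input biomass falls, which is the pattern the assay is designed to reveal.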

Computational Contaminant Identification with Squeegee

For datasets lacking negative controls, the Squeegee tool provides a de novo computational method for contaminant detection [19].

- Input Preparation: Compile metagenomic sequencing data from multiple samples collected from distinct ecological niches or body sites [19].

- Taxonomic Classification: Squeegee performs taxonomic classification to identify candidate contaminant species shared across the different sample types [19].

- Similarity Estimation & Filtering: The tool estimates pairwise similarity between samples for each candidate species and calculates genome coverage breadth and depth to filter out taxonomic classification errors and accurately predict true contaminants [19].
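
The first stage of this approach, finding candidate species shared across distinct niches, can be illustrated with a toy sketch. This is not Squeegee's implementation: it omits the pairwise similarity and genome coverage filters entirely, and the function name and inputs are hypothetical.

```python
def shared_candidates(sample_taxa, sample_niche, min_niches=2):
    """List species detected in samples from several distinct niches.

    sample_taxa: {sample_id: set of species names}.
    sample_niche: {sample_id: niche label, e.g. "gut" or "soil"}.
    Returns species seen in at least min_niches different niches.
    """
    niches_by_species = {}
    for sample_id, taxa in sample_taxa.items():
        for species in taxa:
            niches_by_species.setdefault(species, set()).add(sample_niche[sample_id])
    return sorted(
        species
        for species, niches in niches_by_species.items()
        if len(niches) >= min_niches
    )
```

Candidates from this step are only suspects; without the later filtering stages, genuinely cosmopolitan species and classification errors would be listed alongside true contaminants.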

The Scientist's Toolkit

Table 2: Essential Reagents and Resources for Contamination Management

| Item | Function/Purpose | Considerations |

|---|---|---|

| DNA-Free Water | Solvent for molecular reactions; rehydration of reagents. | A common source of bacterial DNA; should be certified DNA-free [18]. |

| DNA Extraction Kits | Isolation of nucleic acids from samples. | Silica membranes and solutions are frequent contamination sources; test multiple kit batches [18] [22]. |

| Polymerase Enzymes | Amplification of DNA in PCR. | Often produced recombinantly; can contain residual bacterial DNA or plasmid fragments from expression vectors [21] [18]. |

| Personal Protective Equipment (PPE) | Barrier against contamination from handlers (e.g., skin, hair, aerosol droplets). | Gloves, masks, and lab coats are essential. Gloves should be decontaminated with ethanol/DNA removal solutions before use [1]. |

| Fluorescent Tracers (e.g., microspheres) | Quantifying drilling fluid contamination in subsurface samples (e.g., IODP expeditions). | Injected directly into drilling fluids; concentration measured on external and internal core samples [20]. |

| Custom Kraken 2 Database | Bioinformatics tool for identifying cross-domain contamination in genome assemblies. | Effective for screening and decontaminating fungal or other eukaryotic genomes [23]. |

| Squeegee Software | De novo computational detection of contaminants in metagenomic data without negative controls. | Identifies contaminants as species shared across samples from distinct ecological niches processed in the same lab/with same kits [19]. |

Vigilance against reagent contamination is not optional but mandatory for generating reliable data, especially in low-biomass studies. Adopting a systematic approach to reagent testing—incorporating rigorous negative controls, utilizing computational tools like Squeegee for contaminant identification, and maintaining a critical awareness of common contaminant genera—is essential for upholding research integrity. By implementing the protocols and guidelines outlined in this document, researchers can significantly mitigate the risk of contamination-driven artifacts and ensure the accuracy of their findings in drug development and broader microbiological research.

Building Your Defense: Proactive Strategies and Systematic Testing Workflows

In modern laboratories, particularly those involved in sensitive areas like drug development and low-biomass microbiome research, the systematic control of contamination is not merely a best practice but a fundamental component of scientific integrity. A proactive contamination-control mindset is essential for ensuring data reliability and product safety. This approach moves beyond reactive measures, embedding contamination consideration into every stage of the research workflow, from initial sample collection to final data analysis [1]. This document outlines practical protocols and strategies, framed within the context of systematic reagent testing, to establish and maintain this critical mindset within any research setting.

Foundational Concepts of Contamination Control

Contamination in the laboratory can arise from a multitude of sources, which can be broadly categorized as follows:

- Human Operators: Skin cells, hair, microbiota, and aerosols from breathing or talking can introduce microbial and chemical contaminants [1] [9].

- Reagents and Kits: Commercial reagents, enzymes, and water can contain trace amounts of microbial DNA or chemical analytes, which become significant in high-sensitivity analyses [1].

- Laboratory Environment: Airborne particles, dust, and surfaces are persistent sources of contamination [9].

- Sampling Equipment & Consumables: Vessels, pipettes, and tools can shed particles or leach chemicals if not properly decontaminated [1] [9].

- Cross-Contamination: The transfer of material between samples during processing, such as through well-to-well leakage in a plate, is a common and often overlooked issue [1].

A Contamination Control Strategy (CCS) provides a holistic framework to address these risks. A robust CCS is a proactive system grounded in Quality Risk Management (QRM), mandated in pharmaceutical manufacturing by EU and US regulations, and is equally applicable to research laboratories [24] [25]. It involves a thorough evaluation of the entire workflow to identify potential contamination sources and the implementation of targeted controls.

The reliability of any experiment is contingent on the purity of its reagents. Systematic testing forms the backbone of a contamination-control strategy, providing the data needed to validate materials and processes.

Experimental Protocol: Reagent Contamination Screening

Principle: This protocol uses sensitive molecular and culture-based methods to detect microbial and chemical contaminants in laboratory reagents, including water, buffers, enzymes, and chemical stocks.

Materials:

- Sterile, DNA-free consumables (tubes, pipette tips, filters)

- Culture media (e.g., Tryptic Soy Broth, Sabouraud Dextrose Broth for fungal detection)

- Reagents for DNA extraction and PCR (e.g., 16S rRNA gene primers for bacteria, ITS primers for fungi)

- Agarose gel electrophoresis system or qPCR instrument

- Sterile filtration apparatus (0.2 µm pore size)

Method:

- Sample Collection: Aseptically aliquot the reagent to be tested.

- Microbial Culturing:

  - Inoculate 1 mL of the reagent into 10 mL of culture media, in triplicate.

  - Incubate separate sets of tubes aerobically at 30-35°C, anaerobically, and at 20-25°C, each for up to 14 days.

  - Observe tubes daily for turbidity, indicating microbial growth.

- Molecular Analysis (for Microbial DNA):

  - Negative Control Processing: Process a sterile, DNA-free water sample alongside the test reagent through all subsequent steps.

  - DNA Extraction: Concentrate microbial cells from a large volume (e.g., 50-100 mL) of reagent by sterile filtration. Extract DNA directly from the filter. Simultaneously, perform a "kit-only" negative control by adding sterile water directly to the DNA extraction kit [1].

  - Amplification and Detection: Perform PCR amplification using broad-range 16S rRNA (for bacteria) and ITS (for fungi) gene primers.

  - Analyze PCR products by gel electrophoresis. Sanger sequencing of any amplified bands can identify contaminating species.

- Data Interpretation:

  - Compare results from the test reagent against the negative controls.

  - Growth in culture media or PCR amplification in the test sample that is absent in the negative controls indicates contamination of the reagent.

  - Persistent contamination across multiple reagent lots suggests a systemic issue in the supply or storage process.
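
The interpretation rules above can be written as a small decision function. Treating a positive negative control as "inconclusive" is an assumption added here, since signal in the control means a positive test result cannot be attributed to the reagent; the function names and result labels are illustrative.

```python
def interpret_screen(test_positive, control_positive):
    """Classify one reagent screen (culture or PCR) against its negative control."""
    if control_positive:
        # Assumption: signal in the control prevents attribution to the reagent.
        return "inconclusive"
    return "contaminated" if test_positive else "not detected"


def lots_contaminated(calls):
    """calls: {lot_id: result string}. Return sorted lots called contaminated."""
    return sorted(lot for lot, call in calls.items() if call == "contaminated")
```

If `lots_contaminated` returns several lots of the same reagent, that matches the pattern described above as a possible systemic supply or storage issue.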

Data Presentation: Reagent Contamination Survey

Table 1: Summary of potential contamination sources and their respective controls during reagent handling.

| Contamination Source | Potential Impact | Recommended Control Measures |

|---|---|---|

| Laboratory Water | Microbial growth, chemical impurities, nucleases | Use pharmaceutical-grade water (e.g., USP); regular microbial and endotoxin testing; proper loop maintenance [24]. |

| Process Gases | Introduction of airborne contaminants | Use sterile filters (0.2 µm) for gases in direct contact with products; regular integrity testing [24]. |

| Raw Materials & Consumables | Microbial bioburden, endotoxins, leachables | Supplier qualification and audits; risk-based incoming inspection; review of certificates of analysis [24] [25]. |

| Personnel | Introduction of skin flora, aerosols | Comprehensive training in aseptic techniques; proper gowning (e.g., protective suits, masks, gloves) [1] [25]. |

Table 2: Key research reagents and materials essential for contamination control, with their primary functions.

| Research Reagent / Material | Primary Function in Contamination Control |

|---|---|

| DNA Degrading Solution (e.g., Bleach) | Destroys contaminating trace DNA on surfaces and equipment; critical for molecular work [1]. |

| Sterile, DNA-Free Consumables | Prevents introduction of contaminants during sample and reagent handling; single-use items are preferred [1]. |

| HEPA-Filtered Laminar Flow Hood | Provides an ISO 5 clean air workspace for aseptic procedures, protecting samples from environmental contaminants [24]. |

| Environmental Monitoring Plates | Used for routine monitoring of microbial contamination on surfaces and in the air of critical zones [25]. |

Implementing a Holistic Contamination Control Strategy

A comprehensive CCS extends beyond reagent testing to encompass the entire operational environment and workflow. Key elements, as highlighted in pharmaceutical guidelines, include [24] [25]:

- Facility and Process Design: Utilizing cleanrooms with cascading pressurization, closed processing systems, and unidirectional material flows to minimize contamination risk.

- Validation Controls: Qualifying and re-qualifying equipment, processes (e.g., sterilization, cleaning), and analytical methods to ensure consistent performance.

- Monitoring Controls: Implementing robust programs for environmental, personnel, and in-process monitoring to promptly identify and correct deviations.

- Quality Culture: Fostering a mindset where all personnel are empowered, trained, and responsible for maintaining contamination standards.

Workflow Visualization: A Proactive Contamination Control Strategy

The following diagram synthesizes the core principles of this document into a logical workflow for establishing and maintaining a contamination-control mindset, emphasizing proactive risk assessment and systematic testing.

Adopting a contamination-control mindset is a strategic imperative for generating reliable and defensible data. By integrating systematic reagent testing within a broader, holistic Contamination Control Strategy, laboratories can proactively mitigate risks. This involves a commitment to rigorous practices, continuous training, and a culture of quality that empowers every researcher to be a guardian of data integrity. The protocols and frameworks outlined here provide a concrete path for researchers and drug development professionals to embed this essential mindset into their daily operations, thereby safeguarding their science and its applications.

The polymerase chain reaction (PCR) is a cornerstone of modern molecular biology, enabling the amplification of minute amounts of DNA into measurable quantities. However, this extreme sensitivity also represents its primary vulnerability, as the slightest trace of contaminating DNA can lead to false-positive results and compromised data integrity. Spatial separation of laboratory functions provides the most effective defense against such contamination, forming a critical component of systematic reagent testing and contamination source research.

Implementing a spatially separated workflow is fundamental to any robust contamination control strategy. The core principle involves physically separating the stages where reagents and samples are prepared from areas where amplified PCR products are handled and analyzed. This physical segregation prevents the transfer of amplicons—the amplified DNA fragments that are potent sources of contamination—back into new reactions. Without such separation, laboratories risk systematic contamination that can invalidate experimental results and necessitate extensive decontamination procedures. The design considerations outlined in this protocol are therefore essential for maintaining the integrity of molecular biology research, pharmaceutical development, and diagnostic applications.

Laboratory Design Principles and Area Specifications

Core Design Concepts for Contamination Control

An optimally designed PCR laboratory implements a unidirectional workflow that moves from clean pre-PCR areas to potentially contaminated post-PCR areas, with no reverse flow of materials or equipment. This workflow is foundational to preventing amplicon carryover into sensitive reagent preparation and sample setup areas. Two primary design models are recommended, depending on available space and resources.

The ideal configuration involves two separate rooms: one dedicated exclusively to pre-PCR activities (maintained with slightly positive air pressure to prevent aerosol inflow), and a second room for DNA amplification and product analysis (maintained with slightly negative air pressure to contain amplicon aerosols). When spatial or budget constraints prevent a two-room setup, a single-room configuration can be implemented where pre-PCR and post-PCR areas are positioned as far apart as possible, with clear physical demarcations and dedicated equipment for each zone. In both designs, personnel movement must follow the unidirectional flow; staff moving from post-PCR to pre-PCR areas must change personal protective equipment to prevent carrying over contaminants.
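
The unidirectional rule lends itself to a simple check on a movement log for materials or equipment. The area names and their ordering below are assumptions chosen to mirror the pre-PCR to post-PCR flow described here; the sketch only detects backward moves and does not model PPE changes.

```python
AREA_ORDER = ["reagent_prep", "sample_prep", "amplification", "product_analysis"]


def backward_moves(path, area_order=AREA_ORDER):
    """Return (from_area, to_area) pairs that move against the workflow.

    path: list of area names in the order they were visited.
    """
    rank = {area: i for i, area in enumerate(area_order)}
    return [
        (src, dst)
        for src, dst in zip(path, path[1:])
        if rank[dst] < rank[src]
    ]
```

An empty list means the logged route respects the clean-to-dirty direction; any returned pair marks a point where an item travelled back toward a cleaner area.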

Detailed Area Specifications

The table below summarizes the key specifications for each designated area within a spatially separated PCR laboratory:

Table 1: Functional Area Specifications in a PCR Laboratory

| Laboratory Area | Primary Function | Environmental Controls | Contamination Control Measures |

|---|---|---|---|

| Reagent Preparation | Preparation of PCR master mixes without DNA templates [26] | Positive air pressure; UV-equipped PCR hood or laminar flow cabinet [26] | Dedicated equipment; single-use aliquots; DNase-free consumables [26] |

| Sample Preparation | Addition of DNA template to master mixes [26] | Positive air pressure; physical separation from reagent area [26] | Dedicated pipettes with filter tips; surface decontamination with bleach [27] [26] |

| Amplification Area | Thermal cycling for DNA amplification [26] | Negative air pressure [26] | Restricted access; no return of materials to pre-PCR areas [26] |

| Product Analysis | Gel electrophoresis, sequencing, or other analysis [26] | Negative air pressure [26] | Designated equipment; thorough decontamination protocols [27] [26] |

Equipment and Reagent Management

Essential Laboratory Equipment

Proper equipment selection and assignment are crucial for maintaining spatial separation. Each designated area must have its own dedicated equipment, particularly pipettes, which should never be shared between pre- and post-PCR zones. Aerosol-resistant filter tips are mandatory for all liquid handling in pre-PCR areas to prevent pipette contamination. A laminar flow hood or biosafety cabinet within the pre-PCR space provides a controlled, clean environment for setting up PCR reactions and should be decontaminated with a bleach solution before and after each use.

Thermal cyclers for DNA amplification should be located within the post-PCR area. Other essential equipment includes centrifuges, vortex mixers, and refrigeration units for reagent storage. A key practice is the aliquoting of reagents into single-use vials upon receipt to increase shelf life, minimize freeze-thaw cycles, and preserve bulk stocks in case a working aliquot becomes contaminated.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Reagents for Contamination-Controlled PCR

| Item | Function | Contamination Control Feature |

|---|---|---|

| PCR-Grade Water | Solvent for master mixes; negative control [27] | Certified DNA-free; aliquoted for single use [27] |

| Aerosol-Resistant Filter Tips | Liquid handling for samples and reagents [26] | Prevents aerosols from contaminating pipette shafts and samples [26] |

| UDG/UNG Enzyme System | Enzymatic decontamination of PCR mixes [27] | Degrades uracil-containing carryover amplicons from previous reactions [27] |

| DNase-Free Tubes & Consumables | Reaction vessels and storage [26] | Manufactured to be free of DNase, RNase, and PCR inhibitors [26] |

| Sodium Hypochlorite (Bleach) | Surface and equipment decontaminant [27] [1] | Degrades DNA on non-labware surfaces; used as 10% solution [27] |

| UV-C Light Source | Nucleic acid cross-linking on surfaces and in hoods [27] [1] | Renders surface DNA non-amplifiable [27] |

Experimental Protocols for Contamination Monitoring

Systematic Reagent Testing Protocol

A critical component of contamination source research is the systematic testing of all reagents. This protocol must be performed whenever contamination is suspected or as a routine quality control measure.

- Prepare a series of PCR reactions using a standard protocol relevant to your workflow.

- For each reagent (water, buffer, dNTPs, primers, polymerase), set up a no-template test reaction in which that component alone is replaced with a fresh, never-opened aliquot, from a different lot if possible.

- Include a negative control containing all original reagents except the DNA template (No-Template Control or NTC) and a positive control with a known, clean template.

- Run the PCR amplification and analyze the results via gel electrophoresis.

- Interpretation: A reagent is identified as the contamination source when replacing it yields a clean no-template reaction (no amplification product), while the reactions that still contain the original aliquot of that reagent remain contaminated.
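
The replacement logic can be sketched as a lookup over the gel results. The input format is an assumption: one boolean per reagent recording whether the no-template reaction still showed product after that reagent alone was swapped for a fresh aliquot.

```python
def find_contaminated_reagents(baseline_ntc_positive, still_positive_after_swap):
    """Identify reagents whose replacement clears the no-template reaction.

    baseline_ntc_positive: True if the NTC with all original reagents amplified.
    still_positive_after_swap: {reagent: True if product is still seen after
        replacing only that reagent with a fresh aliquot}.
    """
    if not baseline_ntc_positive:
        return []  # nothing to trace: the original reagent set is clean
    return sorted(
        reagent
        for reagent, still_positive in still_positive_after_swap.items()
        if not still_positive
    )
```

If the result is empty even though the baseline NTC amplified, the source may lie outside the reagents tested (for example, consumables or the workspace), or more than one reagent may be contaminated at once.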

Contamination Troubleshooting Workflow

The following logic pathway provides a systematic method for identifying and eliminating contamination sources, aligning with the broader thesis on systematic reagent testing.

Workflow Visualization and Standard Operating Procedures

Physical Workflow and Material Flow Diagram

The successful implementation of spatial separation relies on a strict unidirectional workflow for both personnel and materials. The following diagram illustrates the physical layout and movement protocols that minimize cross-contamination risk.

Standard Decontamination Protocol

Rigorous and routine decontamination of all work surfaces and equipment is non-negotiable. This protocol should be performed before and after each work session.

- Prepare a fresh 10% sodium hypochlorite (bleach) solution in DNA-free water [27].

- Thoroughly wipe all work surfaces, including the interior of laminar flow hoods, with the bleach solution. Allow surfaces to remain wet for a 2-5 minute contact time to ensure nucleic acid degradation.

- Wipe down equipment such as pipette exteriors, centrifuge rotors, vortex mixers, and refrigerator handles with the bleach solution, followed by a rinse with DNA-free water or ethanol to prevent corrosion [27].

- For persistent contamination or as a periodic preventative measure, use UV-C irradiation in hoods and on surfaces overnight to cross-link any residual DNA [1].

- Sterilize plasticware and glassware by autoclaving. Note that autoclaving kills viable cells but does not remove DNA, so chemical decontamination with bleach or a commercial DNA removal solution is still required for thorough cleaning [1].

The implementation of rigorous spatial separation, as detailed in this application note, is a fundamental prerequisite for reliable PCR-based research and diagnostics. By physically segregating pre-PCR, post-PCR, and reagent preparation areas, enforcing a unidirectional workflow, and adhering to systematic decontamination and reagent testing protocols, laboratories can significantly reduce the risk of contamination. These practices form the bedrock of quality assurance in molecular biology and are indispensable for generating credible, reproducible data in the context of systematic reagent testing and contamination source research. The protocols and guidelines provided herein offer an actionable framework for establishing a contamination-aware laboratory culture, ultimately safeguarding the integrity of scientific outcomes.

In the rigorous field of systematic reagent testing, the implementation of no-template controls (NTCs) and negative controls forms the foundational strategy for identifying contamination sources. These controls are essential for distinguishing true experimental signals from background noise introduced through reagents, laboratory environments, or handling processes. The critical importance of these controls is magnified in low-biomass studies and highly sensitive molecular assays, where even minimal contamination can compromise data integrity and lead to erroneous conclusions [1] [28]. The research community has recognized significant deficiencies in current practices, with one systematic review revealing that approximately two-thirds of insect microbiota studies failed to include necessary blanks, and only 13.6% both sequenced these controls and appropriately controlled for contamination in their datasets [28]. This protocol establishes comprehensive guidelines for the consistent implementation and interpretation of NTCs and negative controls to uphold experimental validity in contamination source research.

Conceptual Foundations and Definitions

Fundamental Control Types

No-Template Controls (NTCs): These reactions contain all PCR components—including primers, polymerase, nucleotides, and buffer—but completely lack any DNA template. Instead, nuclease-free water or a similar inert substance is added in place of the sample. NTCs are specifically designed to detect reagent contamination or amplification carryover from previous reactions [28].

Negative Controls: This broader category encompasses samples that undergo the entire experimental workflow while representing a matrix known or expected to lack the target analyte. Examples include sterile swabs, empty collection vessels, clean sampling buffers, or specimens from sterile environments. Negative controls effectively identify contamination introduced during sample collection, handling, or processing stages [1] [29].

Theoretical Framework and Purpose

Both control types operate on the same fundamental principle: they provide a baseline measurement of contaminating nucleic acids or other interfering substances present in reagents, equipment, or the laboratory environment. When properly implemented, they enable researchers to determine the limit of detection (LoD) for their analytical system. The average abundance of signal in negative controls establishes this LoD, serving as a critical threshold below which biological samples cannot reliably be distinguished from background contamination [28]. The consistent application of these controls across experimental runs is paramount, as contamination levels can vary significantly between reagent lots and processing batches [1] [28].
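
As a rough illustration of this thresholding idea, the sketch below computes the LoD as the mean signal in the negative controls and marks which samples reach it. The function names, sample IDs, and abundance values are hypothetical, and treating a sample exactly at the LoD as retained is an assumption made here rather than something the cited work specifies.

```python
from statistics import mean
from typing import Dict, Sequence


def limit_of_detection(negative_control_abundances: Sequence[float]) -> float:
    """LoD taken as the mean signal observed across negative controls."""
    if not negative_control_abundances:
        raise ValueError("at least one negative control is required")
    return mean(negative_control_abundances)


def flag_samples(sample_abundances: Dict[str, float], lod: float) -> Dict[str, bool]:
    """Map each sample ID to True when its abundance is at or above the LoD."""
    return {sample_id: abundance >= lod
            for sample_id, abundance in sample_abundances.items()}


# Hypothetical qPCR-derived abundances (e.g. 16S copies per reaction).
negatives = [120.0, 80.0, 100.0]
lod = limit_of_detection(negatives)

samples = {"S1": 5000.0, "S2": 90.0, "S3": 100.0}
retained = flag_samples(samples, lod)
print(lod, retained)
```

Because contamination varies between reagent lots and batches, the LoD would normally be recomputed per batch from that batch's own controls rather than reused across runs.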

Table 1: Core Characteristics of Experimental Controls

| Control Type | Primary Function | Expected Result | Failure Implications |

|---|---|---|---|

| No-Template Control (NTC) | Detect contamination in PCR reagents and amplification process | No amplification signal | Reagent contamination present; false positives likely |

| Process Negative Control | Identify contamination introduced during sample collection/handling | No target signal detected | Sampling or handling procedures introduce contaminants |

| Positive Control | Verify assay sensitivity and functionality | Known positive result obtained | Assay failure or insufficient sensitivity; potential false negatives |

| Experimental Group | Answer research question | Variable result based on hypothesis | N/A |

Experimental Protocols and Implementation

Control Inclusion Strategy

For robust contamination monitoring, implement a stratified control approach throughout the experimental workflow:

Sample Collection: Include field blanks or environmental controls that mirror sampling conditions without collecting actual material. For surface sampling, include sterile swabs exposed only to the air in the sampling environment [1].

DNA Extraction: Process one NTC per extraction batch (typically 8-24 samples) using nuclease-free water instead of sample. This controls for kitome contamination—contaminating DNA present in extraction kits and reagents [28].

PCR Amplification: Include at least one NTC per PCR plate to detect amplification contaminants or cross-contamination between wells [28].

Sequencing: Incorporate NTCs in the sequencing library preparation to identify contaminants introduced during library construction [1].
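
To make the per-batch and per-plate rules concrete, the following sketch estimates how many controls a study needs. It is a planning aid under stated assumptions: the function name and defaults are hypothetical, one well per plate is reserved for the PCR NTC, and positive controls, replicates, and sequencing-stage NTCs are deliberately left out.

```python
import math
from typing import Dict


def plan_controls(n_samples: int,
                  extraction_batch_size: int = 24,
                  wells_per_plate: int = 96) -> Dict[str, int]:
    """Estimate the minimum number of extraction and PCR NTCs for a study."""
    if n_samples < 1:
        raise ValueError("n_samples must be at least 1")
    if extraction_batch_size < 1 or wells_per_plate < 2:
        raise ValueError("batch size must be >= 1 and plates need >= 2 wells")

    # One extraction NTC per extraction batch.
    extraction_ntcs = math.ceil(n_samples / extraction_batch_size)

    # Extraction NTCs are amplified alongside the samples, and each plate
    # gives up one well to its own PCR NTC.
    reactions = n_samples + extraction_ntcs
    pcr_plates = math.ceil(reactions / (wells_per_plate - 1))

    return {
        "extraction_ntcs": extraction_ntcs,
        "pcr_plates": pcr_plates,
        "pcr_ntcs": pcr_plates,
    }


plan = plan_controls(100)
print(plan)
```

For 100 samples in batches of 24, this gives five extraction NTCs and two plates with one PCR NTC each. Smaller batches raise the control count but narrow the set of samples affected when a single batch turns out to be contaminated.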

Comprehensive Workflow Integration

The following diagram illustrates the integrated placement of controls throughout a standard molecular biology workflow:

Experimental Workflow with Integrated Controls

Detailed Methodological Protocols

Preparation of No-Template Controls

- Materials Required: Nuclease-free water, sterile microcentrifuge tubes, dedicated pipettes for control setup.

- Procedure:

  - For extraction NTCs, add 100-200 μL of nuclease-free water to a clean tube instead of sample.

  - Process the NTC through the entire DNA extraction protocol alongside experimental samples.

  - For PCR NTCs, prepare a master mix containing all PCR components, then aliquot into a clean reaction tube.

  - Add nuclease-free water equivalent to the volume of DNA template used in experimental reactions.

  - Process NTCs through all subsequent steps (amplification, sequencing) identically to test samples.

- Quality Threshold: Establish a predetermined threshold for acceptable contamination levels (e.g., cycle threshold [Ct] value >35 in qPCR, or <0.1% of total sequences in NGS) [28].
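
The example thresholds above can be encoded as simple pass/fail checks. This is a sketch only: the function names are hypothetical, the cutoffs are the illustrative values quoted in the text and should be replaced with assay-specific ones, and an NTC with no amplification is represented here as `None`.

```python
from typing import Optional


def ntc_passes_qpcr(ntc_ct: Optional[float], ct_cutoff: float = 35.0) -> bool:
    """An NTC passes if it never amplified (None) or crossed after the cutoff."""
    return ntc_ct is None or ntc_ct > ct_cutoff


def ntc_passes_ngs(ntc_reads: int, total_reads: int,
                   max_fraction: float = 0.001) -> bool:
    """An NTC passes if it holds less than max_fraction of all run reads."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    return ntc_reads / total_reads < max_fraction


# Hypothetical run results.
print(ntc_passes_qpcr(None))            # no amplification detected
print(ntc_passes_qpcr(31.0))            # early amplification
print(ntc_passes_ngs(500, 1_000_000))   # 0.05% of reads
```

Fixing the cutoffs before the run, as the text recommends, keeps the decision from being adjusted after the results are known.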

Preparation of Process Negative Controls

- Materials Required: Sterile collection supplies (swabs, containers), DNA-free preservation solutions, personal protective equipment.

- Procedure:

  - Equipment Control: Open a sterile collection container in the sampling environment and close it without collecting a sample.

  - Environmental Control: Expose a sterile swab to the air in the sampling environment for the duration of typical sampling.

  - Reagent Control: Include an aliquot of preservation or transport solution that never contacts any sample.

  - Process all negative controls through the entire analytical workflow alongside experimental samples [1].

- Documentation: Record the specific type, location, and processing batch for each negative control to enable traceback if contamination is detected.
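
One possible way to keep these records machine-readable is a small data structure keyed by processing batch, so that a contaminated control can be traced back to the samples that shared its batch. The class, field names, and identifiers below are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class NegativeControlRecord:
    control_id: str
    control_type: str   # e.g. "equipment", "environmental", or "reagent"
    location: str       # where the control was collected or prepared
    batch_id: str       # processing batch shared with experimental samples


def samples_to_review(contaminated: NegativeControlRecord,
                      sample_batches: Dict[str, str]) -> List[str]:
    """Return the IDs of samples processed in the contaminated control's batch."""
    return sorted(sample_id for sample_id, batch in sample_batches.items()
                  if batch == contaminated.batch_id)


# Hypothetical records.
control = NegativeControlRecord("NC-07", "environmental", "Site B bench", "EXT-03")
sample_batches = {"S1": "EXT-02", "S2": "EXT-03", "S3": "EXT-03"}
affected = samples_to_review(control, sample_batches)
print(affected)
```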

Data Analysis and Interpretation Framework

Quantitative Assessment of Control Results

The following table summarizes the key analytical approaches for interpreting control data across different methodological platforms:

Table 2: Analytical Approaches for Control Data Interpretation

| Method Platform | Primary Metrics | Interpretation Guidelines | Corrective Actions |

|---|---|---|---|

| qPCR/dPCR | Cycle threshold (Ct), absolute quantification | Ct >35 often indicates acceptable background; establish run-specific LoD | Reject run if NTC amplifies before experimental samples; replace suspect reagent lots |

| 16S rRNA Sequencing | ASV prevalence, relative abundance in controls | Remove taxa with higher prevalence in controls than samples | Apply decontamination algorithms (e.g., Decontam); increase reagent decontamination |

| Metagenomic Sequencing | Read counts, taxonomic distribution | Compare proportional representation of contaminants | Bioinformatic subtraction of control-identified contaminants; process improvement |

| Microbial Culture | Colony formation, morphological identification | No growth expected in true negatives | Enhance sterilization protocols; review aseptic technique |

Statistical Decontamination Protocols

For sequencing-based approaches, implement a multi-step bioinformatic process to identify and remove contaminants identified through controls:

Sequence Processing: Process control and experimental samples through identical bioinformatic pipelines (quality filtering, denoising, ASV/OTU calling).

Contaminant Identification: Use prevalence-based methods to identify taxa that appear more frequently in controls than in experimental samples, or frequency-based methods to identify taxa with higher abundance in low-concentration samples [28].

Threshold Application: Apply the Limit of Detection (LoD) calculated from negative controls. Discard any sample where the absolute abundance of target sequences (measured via qPCR) falls below the average abundance in negative controls [28].

Data Filtering: Remove identified contaminant sequences from all samples in the dataset using reproducible scripts and document the proportion of sequences removed.
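
The prevalence-based identification and filtering steps can be sketched as follows. This is a deliberately simplified stand-in, not the Decontam algorithm: it flags any taxon detected in a larger fraction of controls than of samples, with no statistical test, and the function names and read counts are hypothetical.

```python
from typing import Dict, List, Set, Tuple

CountTable = Dict[str, int]  # taxon name -> read count for one library


def prevalence(taxon: str, tables: List[CountTable]) -> float:
    """Fraction of libraries in which the taxon has at least one read."""
    return sum(1 for t in tables if t.get(taxon, 0) > 0) / len(tables)


def find_contaminants(samples: List[CountTable],
                      controls: List[CountTable]) -> Set[str]:
    """Flag taxa that are more prevalent in controls than in samples."""
    if not samples or not controls:
        raise ValueError("both samples and controls are required")
    taxa = set().union(*samples, *controls)
    return {taxon for taxon in taxa
            if prevalence(taxon, controls) > prevalence(taxon, samples)}


def remove_contaminants(samples: List[CountTable],
                        contaminants: Set[str]) -> Tuple[List[CountTable], float]:
    """Drop flagged taxa and report the fraction of reads removed."""
    total = sum(sum(t.values()) for t in samples)
    cleaned = [{taxon: n for taxon, n in t.items() if taxon not in contaminants}
               for t in samples]
    kept = sum(sum(t.values()) for t in cleaned)
    removed_fraction = (total - kept) / total if total else 0.0
    return cleaned, removed_fraction


# Hypothetical read counts.
samples = [
    {"Lactobacillus": 900, "Ralstonia": 100},
    {"Lactobacillus": 800},
    {"Bacteroides": 500, "Lactobacillus": 500},
]
controls = [{"Ralstonia": 40}, {"Ralstonia": 25, "Lactobacillus": 1}]

contaminants = find_contaminants(samples, controls)
cleaned, removed_fraction = remove_contaminants(samples, contaminants)
print(contaminants, round(removed_fraction, 4))
```

Returning the removed fraction alongside the cleaned tables supports the documentation requirement in the filtering step. With few controls, a raw prevalence comparison is noisy, which is one reason a dedicated statistical tool is generally preferable for real datasets.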

The following diagram illustrates the decision pathway for contamination assessment and data remediation:

Contamination Assessment Decision Pathway

Essential Research Reagent Solutions

The following toolkit comprises critical materials and resources for implementing effective contamination control protocols:

Table 3: Research Reagent Solutions for Contamination Control

| Reagent/Resource | Function | Application Notes |

|---|---|---|

| DNA Degradation Solutions | Eliminate contaminating nucleic acids from surfaces | Sodium hypochlorite (bleach), DNA-ExitusPlus, DNA-Zap |

| Nuclease-Free Water | Inert substitute for sample in NTCs | Confirm nuclease-free status; aliquot to prevent contamination |

| Sterile Collection Supplies | Minimize introduction of contaminants during sampling | DNA-free swabs, containers; pre-treat with UV irradiation |

| DNA Extraction Kits | Isolate nucleic acids from samples | Monitor kitome contamination between lots; include extraction NTCs |

| PCR Master Mixes | Amplify target sequences | Quality control each new lot; include PCR NTCs |

| Decontamination Software | Bioinformatic identification of contaminants | Decontam (R package), SourceTracker |

| Positive Control Materials | Verify assay functionality | Synthetic oligonucleotides, characterized reference DNA |

The rigorous implementation of no-template controls and negative controls represents a non-negotiable standard in systematic reagent testing for contamination sources. These controls enable researchers to distinguish true biological signals from technical artifacts, thereby protecting research validity and reproducibility. The current evidence suggests that these practices remain underutilized, with one systematic review finding that only 13.6% of insect microbiota studies appropriately controlled for contamination [28]. By adopting the comprehensive protocols outlined in this document—including stratified control placement, quantitative assessment of control results, and appropriate bioinformatic remediation—researchers can significantly enhance the reliability of their findings. Future directions in contamination control will likely involve increased automation of control monitoring, development of improved statistical methods for contaminant identification, and establishment of field-specific standards for control implementation and reporting.